A Practical Step-by-Step Guide to the SES Framework: Methodological Implementation for Biomedical Researchers


Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with a detailed, actionable methodology for implementing the Symptom, Event, and System (SES) framework in biomedical research. It runs from foundational concepts to advanced application. It establishes a theoretical foundation, sets out a stepwise methodological protocol, offers solutions for common pitfalls, and presents validation strategies with comparisons to related approaches. The article synthesizes current best practices to enable robust, reproducible, and context-aware analysis of complex symptom and adverse event data in clinical and translational studies.

Understanding the SES Framework: Core Principles and Prerequisites for Implementation

The Symptom, Event, and System (SES) framework is a structured methodology for analyzing biological phenomena and therapeutic interventions from a multi-scale perspective. It provides a standardized lexicon and analytical approach, crucial for deconstructing complex disease biology and drug mechanisms.

- Symptom (S): The observable, often macroscopic, clinical or phenotypic manifestation of a pathological state. This is the endpoint of a cascade of underlying biological processes (e.g., hyperglycemia, tumor growth, pain sensation).

- Event (E): The discrete, measurable molecular or cellular occurrence that directly contributes to or causes a Symptom. Events are the actionable targets for therapeutic intervention (e.g., protein phosphorylation, cytokine release, ion channel opening).

- System (S): The interconnected network of components (genes, proteins, cells, organs) and their interactions that give rise to Events. This defines the context and boundaries of the research (e.g., insulin signaling pathway, tumor microenvironment, nociceptive neural circuit).

Table 1: SES Framework Application in Oncology Drug Development

| SES Component | Measurable Parameter (Example) | Experimental Readout | Typical Quantitative Range (Illustrative) | Associated Protocol Section |
|---|---|---|---|---|
| Symptom | Tumor Volume | Caliper Measurement / MRI | 50-1000 mm³ | 3.1 |
| Event | Phospho-ERK1/2 Level | Western Blot Densitometry | 2-10 fold increase vs. control | 3.2 |
| System | Pathway Node Activity | Multiplex Phospho-Kinase Assay | EC₅₀ values: 1-100 nM | 3.3 |

Table 2: SES Framework in Metabolic Disorder Research

| SES Component | Analyte/Process | Detection Method | Reference Values / Change | Associated Protocol Section |
|---|---|---|---|---|
| Symptom | Blood Glucose | Glucose Oxidase Assay | 70-99 mg/dL (fasting, normal) | 3.1 |
| Event | Insulin Receptor Autophosphorylation | ELISA | 50% inhibition at 50 nM drug | 3.2 |
| System | GLUT4 Translocation | Confocal Microscopy (Ratio Membrane/Cytoplasm) | 3-5 fold increase upon insulin stimulus | 3.3 |

Detailed Experimental Protocols

Protocol: Quantifying the 'Symptom' Tier (Tumor Growth)

Objective: To standardize the measurement of tumor volume as a primary symptomatic readout in xenograft models. Materials: Calipers, animal subject, data recording software. Procedure:

- Measurement: Using digital calipers, measure the tumor in two perpendicular dimensions: length (L, longest dimension) and width (W).

- Calculation: Calculate tumor volume (TV) using the formula: TV = (L x W²) / 2.

- Frequency: Measure twice weekly at a consistent time of day.

- Endpoint: The humane endpoint is defined by the approved IACUC protocol. A tumor volume of 1500 mm³ is a commonly used limit.

- Data Logging: Record all measurements with subject ID, date, and time.
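The calculation and endpoint check above can be automated at data-entry time. The following is a minimal sketch. The `CaliperReading` record and the 1500 mm³ default are illustrative assumptions; the binding limit is whatever the approved IACUC protocol specifies.

```python
from dataclasses import dataclass

# Illustrative default only; replace with the limit in the approved IACUC protocol.
HUMANE_ENDPOINT_MM3 = 1500.0


@dataclass
class CaliperReading:
    subject_id: str
    date: str          # ISO date of the measurement, e.g. "2024-03-12"
    length_mm: float   # longest dimension (L)
    width_mm: float    # perpendicular dimension (W)


def tumor_volume_mm3(length_mm: float, width_mm: float) -> float:
    """Modified ellipsoid formula: TV = (L x W^2) / 2."""
    if length_mm <= 0 or width_mm <= 0:
        raise ValueError("caliper dimensions must be positive")
    # By convention L is the longer axis; reorder so the formula squares the shorter one.
    length_mm, width_mm = max(length_mm, width_mm), min(length_mm, width_mm)
    return length_mm * width_mm ** 2 / 2.0


def flag_endpoints(readings, limit_mm3: float = HUMANE_ENDPOINT_MM3):
    """Return (subject_id, date, volume) for every reading at or above the limit."""
    flagged = []
    for r in readings:
        tv = tumor_volume_mm3(r.length_mm, r.width_mm)
        if tv >= limit_mm3:
            flagged.append((r.subject_id, r.date, round(tv, 1)))
    return flagged


readings = [
    CaliperReading("M01", "2024-03-12", 10.0, 8.0),   # 320 mm³
    CaliperReading("M02", "2024-03-12", 16.0, 14.0),  # 1568 mm³
]
print(flag_endpoints(readings))  # [('M02', '2024-03-12', 1568.0)]
```

Reordering the two axes means a transposed entry still gives the correct volume. Flagging at entry time surfaces an endpoint on the day it is reached rather than at later review.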

Protocol: Quantifying the 'Event' Tier (Kinase Phosphorylation via Immunoblot)

Objective: To detect and quantify changes in specific phosphorylation Events within a signaling System. Materials: RIPA lysis buffer, protease/phosphatase inhibitors, BCA assay kit, SDS-PAGE system, specific primary antibodies (total and phospho-target), HRP-conjugated secondary antibodies, chemiluminescent substrate, imaging system. Procedure:

- Sample Prep: Lyse cells/tissue in ice-cold RIPA buffer with inhibitors. Centrifuge (14,000 x g, 15 min, 4°C). Collect supernatant.

- Quantification: Determine protein concentration using BCA assay. Normalize all samples to a common concentration.

- Separation: Load 20-30 µg protein per lane on a 4-12% Bis-Tris gel. Run at 120-150 V until the dye front reaches the bottom of the gel.

- Transfer: Transfer to PVDF membrane using wet or semi-dry method.

- Blocking & Probing: Block with 5% BSA/TBST for 1h. Incubate with phospho-specific primary antibody (1:1000) overnight at 4°C. Wash. Incubate with HRP-secondary (1:5000) for 1h at RT.

- Detection: Apply chemiluminescent substrate, image.

- Reprobing: Strip membrane and re-probe for total target protein to confirm loading equality.

- Analysis: Perform densitometry. Express the phospho-signal as a ratio to the total target protein signal from the same lane.
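The normalization in the Analysis step can be scripted once band intensities have been exported. The following is a minimal sketch. It assumes background-subtracted intensities from the densitometry software, one (phospho, total) pair per replicate lane. The condition labels are hypothetical.

```python
from statistics import mean


def phospho_ratio(phospho: float, total: float) -> float:
    """Phospho-band intensity divided by the total-protein band from the same re-probed lane."""
    if total <= 0:
        raise ValueError("total-protein signal must be positive")
    return phospho / total


def fold_change_vs_control(lanes: dict, control: str) -> dict:
    """lanes maps a condition label to a list of (phospho, total) pairs, one per replicate lane.

    Returns each condition's mean phospho/total ratio divided by the control's mean ratio.
    """
    ratios = {label: mean(phospho_ratio(p, t) for p, t in pairs) for label, pairs in lanes.items()}
    reference = ratios[control]
    return {label: r / reference for label, r in ratios.items()}


# Hypothetical background-subtracted densitometry values (arbitrary units).
lanes = {"vehicle": [(1000, 2000), (1000, 2000)], "treated": [(3000, 2000)]}
print(fold_change_vs_control(lanes, "vehicle"))  # {'vehicle': 1.0, 'treated': 3.0}
```

Each lane's ratio is computed before replicates are averaged. This ensures every phospho value is corrected by the total-protein signal from its own lane.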

Protocol: Mapping the 'System' Tier (Multiplexed Phospho-Kinase Profiling)

Objective: To simultaneously profile the activity states of multiple kinase nodes within a signaling System. Materials: Commercial phospho-kinase array kit, cell lysates, detection reagents, imaging equipment. Procedure:

- Array Blocking: Block the pre-spotted membrane array with provided blocking buffer for 1h at RT.

- Sample Incubation: Dilute normalized lysates (300-500 µg) in array buffer and incubate with the membrane overnight at 4°C on a rocker.

- Washing: Wash membrane 3x with wash buffers (as per kit instructions).

- Detection Antibody: Incubate with a cocktail of biotinylated detection antibodies for 2h at RT.

- Streptavidin-HRP: Incubate with Streptavidin-HRP conjugate for 30 min at RT.

- Signal Development: Apply chemiluminescent mix and image using a CCD camera system.

- Data Analysis: Use spot intensity analysis software. Normalize signals to internal positive controls. Compare relative phosphorylation levels across samples.
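The normalization in the Data Analysis step can be sketched in a few lines. This sketch assumes each target is spotted in duplicate and that the negative-control spots define background. Each background-subtracted signal is expressed as a fraction of the mean positive-control (reference) signal. Spot names are hypothetical, and kit layouts and recommended calculations vary, so the kit manual takes precedence.

```python
from statistics import mean


def normalize_array(spots: dict, positive_keys: set, negative_keys: set) -> dict:
    """spots maps a spot name to its duplicate raw intensities (pixel density).

    Subtracts the mean negative-control intensity as background, then divides each
    target's background-subtracted mean by the background-subtracted positive-control mean.
    """
    background = mean(v for k in negative_keys for v in spots[k])
    positive = mean(v for k in positive_keys for v in spots[k]) - background
    if positive <= 0:
        raise ValueError("positive controls are not above background")
    normalized = {}
    for name, values in spots.items():
        if name in positive_keys or name in negative_keys:
            continue
        signal = max(mean(values) - background, 0.0)  # clip sub-background spots to zero
        normalized[name] = signal / positive
    return normalized


spots = {"REF": [1100, 1100], "NEG": [100, 100], "p-ERK1/2": [600, 600], "p-AKT": [50, 90]}
print(normalize_array(spots, {"REF"}, {"NEG"}))  # {'p-ERK1/2': 0.5, 'p-AKT': 0.0}
```

Because every membrane is scaled to its own reference spots, the normalized values can be compared across samples imaged at different exposures.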

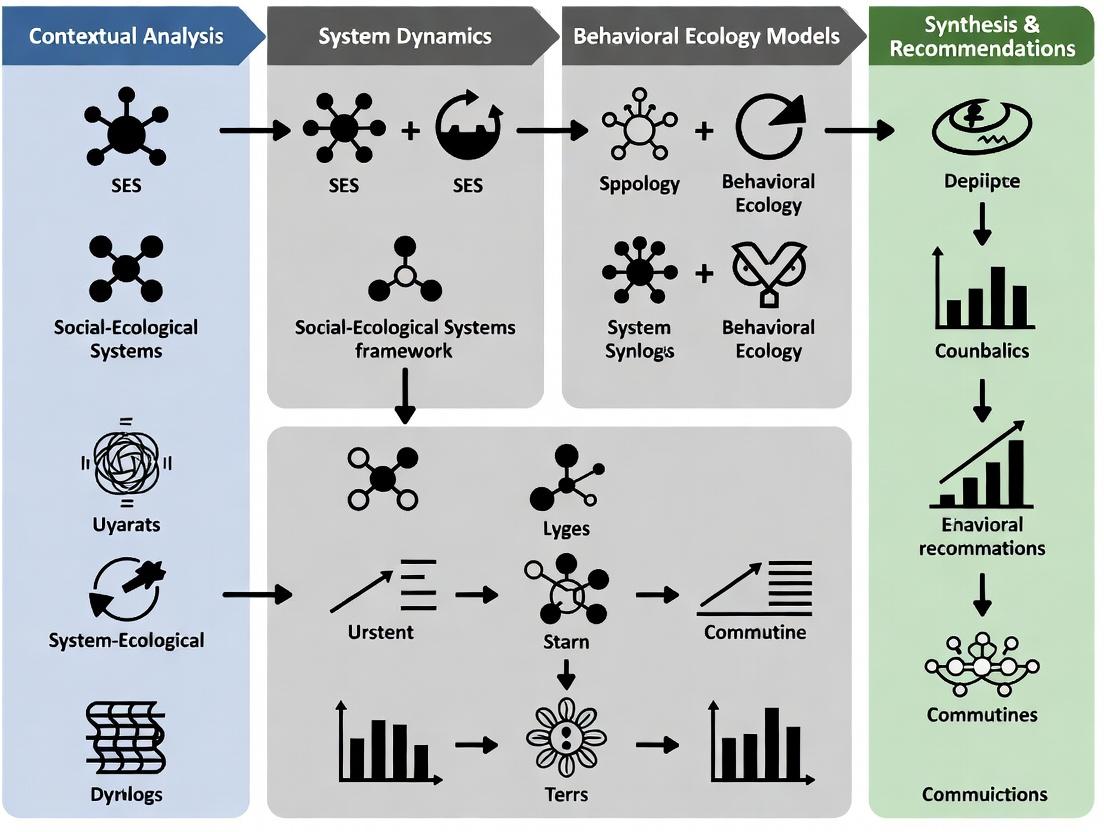

Visualizing SES Relationships and Workflows

SES Framework Conceptual Hierarchy

Integrated SES Experimental Workflow

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Reagents for SES Framework Research

| Item Category | Specific Example | Function in SES Context |
|---|---|---|
| Lysis Buffers | RIPA Buffer, NP-40 Buffer | Extraction of proteins from the System for Event detection. |
| Phosphatase Inhibitors | Sodium Orthovanadate, β-Glycerophosphate | Preserve phosphorylation Events during sample preparation for analysis. |
| Validated Antibodies | Phospho-specific Antibodies (e.g., anti-p-Akt) | Direct detection and quantification of target Events via immunoassays. |
| Multiplex Assay Kits | Phospho-Kinase Array, Luminex Panels | High-throughput profiling of multiple nodes within a signaling System. |
| Phenotypic Dyes/Assays | Glucose Assay Kit, Calcein-AM Viability Dye | Quantitative measurement of Symptom-level outputs (metabolites, viability). |
| Small Molecule Modulators | Kinase Inhibitors (e.g., PD0325901), Receptor Agonists | Tool compounds to perturb the System and probe Event-Symptom causality. |
| Live-Cell Imaging Reagents | FLIPR Calcium Dye, GFP-tagged Biosensors | Real-time monitoring of dynamic Events within cellular Systems. |

Historical Context and Evolution in Clinical Research and Pharmacovigilance

The systematic evaluation of therapeutic interventions has evolved from anecdotal observations to a highly regulated, data-driven science. This evolution is marked by pivotal events that have shaped the methodologies and ethical frameworks governing clinical research and pharmacovigilance (PV).

Key Historical Milestones:

- Pre-20th Century: Reliance on empirical, unstructured observations.

- 1947: The Nuremberg Code establishes the principle of informed consent.

- 1962: Kefauver-Harris Amendments (US) mandate proof of efficacy, in addition to safety, before drug approval. The amendments were prompted by the thalidomide tragedy.

- 1964: Declaration of Helsinki outlines ethical principles for human research.

- 1990: International Conference on Harmonisation (ICH) formed to harmonize technical requirements globally.

- 2012-2018: Increased adoption of Risk-Based Monitoring (RBM) and real-world evidence (RWE) in trial design.

- 2020-Present: Accelerated use of decentralized clinical trial (DCT) models and advanced AI/ML for signal detection in PV.

Application Note: Evolution of Safety Signal Detection Methodologies

Objective: To detail the transition from spontaneous reporting to quantitative, data-mining approaches within the pharmacovigilance lifecycle, as part of this SES framework methodological guide.

Background: Signal detection is the core function of PV. The evolution of methods has been driven by increasing data volume, regulatory requirements, and technological advancement.

Evolutionary Stages & Quantitative Data Summary:

Table 1: Evolution of Key Pharmacovigilance Signal Detection Methodologies

| Era | Primary Methodology | Key Strength | Key Limitation | Quantitative Measure (Typical) |
|---|---|---|---|---|
| 1960s-1980s | Spontaneous Case Series Analysis | Clinical detail, hypothesis generation | Under-reporting, lack of denominator | Case count, proportional reporting |
| 1990s-2000s | Disproportionality Analysis (e.g., PRR, ROR) | Efficient screening of large databases | Confounding by indication, no incidence | Reporting Odds Ratio (ROR) ≥ 2.0 with lower 95% CI > 1.0 |
| 2000s-2010s | Bayesian Data Mining (e.g., MGPS, BCPNN) | Handles variability in small counts | Requires specialized software | Empirical Bayes Geometric Mean (EBGM) > 2.0 signal threshold |
| 2010s-Present | Longitudinal Cohort Analysis (RWE) | Provides incidence, handles confounders | Data quality and linkage challenges | Hazard Ratio (HR): 1.5-2.0 with confidence intervals |
| Present-Future | AI/ML & Hybrid Surveillance | Pattern recognition, predictive capability | Model transparency, validation needs | AUC-ROC: 0.75-0.90 for predictive models |

Experimental Protocol: Disproportionality Analysis for Signal Screening

Protocol Title: Protocol for Routine Quarterly Signal Screening Using Reporting Odds Ratio (ROR) in a Spontaneous Reporting System Database.

1. Objective: To systematically identify potential safety signals by calculating disproportionality scores for all drug-event pairs in the FDA Adverse Event Reporting System (FAERS) quarterly dataset.

2. Materials (Research Reagent Solutions):

Table 2: Key Research Reagent Solutions for Disproportionality Analysis

| Item | Function |
|---|---|
| Standardized Medical Dictionary (e.g., MedDRA) | Provides hierarchical terminology for coding adverse events, enabling consistent grouping and analysis. |
| Drug Dictionary (e.g., WHODrug) | Standardizes medicinal product names and allows for grouping by active substance or ATC class. |
| Statistical Software (e.g., R, SAS, Python) | Platform for data manipulation, calculation of disproportionality metrics, and generation of summary tables. |
| Relational Database (e.g., PostgreSQL) | Storage and efficient querying of large, structured spontaneous reporting system data. |
| Visualization Library (e.g., ggplot2, matplotlib) | Generates forest plots and trend charts for communicating potential signals to safety review teams. |

3. Methodology:

- Data Acquisition & Preparation: Download the latest quarterly FAERS data files (DEMO, DRUG, REAC, OUTC). Deduplicate reports, retaining only the most recent version of each case. Map drugs to active ingredient and adverse events to Preferred Term (PT) level in MedDRA.

- Creation of Contingency Table: For each drug-ingredient (D) and event-PT (E) pair, construct a 2x2 table:

  - a: Reports with D and E.

  - b: Reports with D, without E.

  - c: Reports with E, without D.

  - d: Reports without D and without E.

- Calculation: Compute the Reporting Odds Ratio (ROR) and 95% Confidence Interval (CI).

  - ROR = (a / c) / (b / d)

  - 95% CI = e^(ln(ROR) ± 1.96 * sqrt(1/a + 1/b + 1/c + 1/d))

- Signal Threshold: Flag a pair for clinical review if:

  - Case count (a) ≥ 3

  - ROR point estimate ≥ 2.0

  - Lower bound of 95% CI > 1.0

- Prioritization & Review: Sort flagged pairs by descending ROR. Output a list for expert clinical review, considering strength of association, clinical plausibility, and previous knowledge.
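The Calculation, Signal Threshold, and Prioritization steps can be expressed directly in code. The following is a minimal sketch that assumes the 2x2 cell counts have already been tallied per drug-event pair. The `screen` helper and its input layout are illustrative, not part of any FAERS tooling. Pairs with an empty cell are skipped here. A Haldane-Anscombe correction (adding 0.5 to every cell) is a common alternative.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class RorResult:
    ror: float
    ci_low: float
    ci_high: float
    signal: bool


def reporting_odds_ratio(a: int, b: int, c: int, d: int, min_cases: int = 3,
                         ror_threshold: float = 2.0, z: float = 1.96) -> Optional[RorResult]:
    """ROR with a Wald 95% CI for one drug-event 2x2 table, plus the protocol's flagging rule.

    a: D and E    b: D without E    c: E without D    d: neither D nor E
    """
    if min(a, b, c, d) == 0:
        return None  # ln(ROR) and its standard error are undefined with an empty cell
    ror = (a / c) / (b / d)  # algebraically equal to (a * d) / (b * c)
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    ci_low = math.exp(math.log(ror) - z * se)
    ci_high = math.exp(math.log(ror) + z * se)
    signal = a >= min_cases and ror >= ror_threshold and ci_low > 1.0
    return RorResult(ror, ci_low, ci_high, signal)


def screen(tables: dict) -> list:
    """tables maps (drug, event) to (a, b, c, d); returns flagged pairs sorted by descending ROR."""
    flagged = []
    for pair, cells in tables.items():
        result = reporting_odds_ratio(*cells)
        if result is not None and result.signal:
            flagged.append((pair, result))
    return sorted(flagged, key=lambda item: item[1].ror, reverse=True)


# Hypothetical counts for two pairs; only the first clears all three criteria.
tables = {("drugA", "rash"): (20, 980, 200, 98800), ("drugB", "rash"): (2, 998, 20, 98980)}
for (drug, event), r in screen(tables):
    print(drug, event, round(r.ror, 2), round(r.ci_low, 2), round(r.ci_high, 2))
```

The sorted output corresponds to the review list described in the Prioritization step. It is a screening aid only; each flagged pair still needs clinical assessment.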

4. Diagram: Disproportionality Analysis Workflow

Title: Signal Screening Workflow Using Disproportionality Analysis

Application Note: Evolution of Trial Design from Traditional to Decentralized

Objective: To outline the methodological shift towards patient-centric trial designs within the SES framework, focusing on protocol adaptations for Decentralized Clinical Trials (DCTs).

Background: Technological innovation and the demand for more representative, accessible research have driven the evolution from solely site-based trials to hybrid and fully decentralized models.

Experimental Protocol: Implementing a Hybrid Decentralized Clinical Trial

Protocol Title: Protocol for a Phase III, Randomized, Hybrid Decentralized Trial to Evaluate Drug X in Chronic Condition Y.

1. Objective: To compare the efficacy and safety of Drug X versus standard of care, utilizing a hybrid DCT model to enhance participant recruitment, retention, and data diversity.

2. Key Design Evolution Table:

Table 3: Evolution from Traditional to Decentralized Clinical Trial Elements

| Trial Component | Traditional Model (1990-2010) | Hybrid/Decentralized Model (2020-Present) |
|---|---|---|
| Participant Recruitment | Site-based, local advertising, physician referral. | Centralized digital outreach, patient registries, social media, EHR screening. |
| Informed Consent | Paper-based, in-person at site. | Electronic Consent (eConsent) with multimedia, remote completion. |
| Drug Administration | Dispensed at site, direct observation. | Direct-to-Patient (DTP) shipping, local healthcare provider administration, self-administration with telemedicine support. |
| Data Collection (Visits) | All scheduled visits at clinical site (Source Data). | "Virtual Visits" via telemedicine, wearable sensors, ePRO/eCOA apps, local labs for biosamples. |
| Safety Monitoring (PV) | Site-reported SAEs, periodic site monitoring visits. | Integrated telehealth check-ins, direct patient reporting via app, AI-driven analysis of real-time wearable data for anomalies. |
| Monitoring Oversight | 100% Source Data Verification (SDV) | Risk-Based Monitoring (RBM), centralized statistical monitoring, remote source data review. |

3. Methodology for DCT Implementation:

- Feasibility & Technology Selection: Assess target population's digital literacy and access. Select validated digital health technologies (DHTs) for endpoints (e.g., Bluetooth-enabled spirometer, ECG patch).

- Regulatory & Ethics Strategy: Engage with health authorities on novel DCT aspects. Prepare for multi-jurisdictional ethics reviews if participants are recruited across states, regions, or national borders.

- Participant Journey Mapping: Design all touchpoints (screening, consent, onboarding, treatment, follow-up). Establish a central trial helpline and technology support desk.

- Investigator and Site Role: Define site responsibilities (may include initial diagnosis verification, overseeing local care, managing SAEs) versus central coordinating center functions.

- Data Integration Plan: Establish a secure, interoperable platform to integrate data from multiple sources (ePRO, wearables, eCRF, central lab) into a single trial database. Define validation rules and reconciliation procedures.

- Quality Risk Management: Perform a risk assessment focusing on data security, participant privacy, technology failure, and medication adherence. Develop mitigation strategies (e.g., backup data entry methods, device replacement protocols).
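The validation rules and reconciliation procedures in the Data Integration Plan can be prototyped before the production platform is configured. The following is a hypothetical sketch. The `Record` structure, the variable names, the plausible ranges in `RANGE_RULES`, and the `EXPECTED_SOURCES` set are illustrative assumptions, not values from any validated DHT or EDC system. The code merges records by participant and visit. It raises a data query for each out-of-range value, each cross-source conflict, and each missing expected source.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    participant_id: str
    visit: str
    source: str                                # e.g. "ePRO", "wearable", "eCRF", "central_lab"
    values: dict = field(default_factory=dict)


# Hypothetical validation rules: variable -> (min, max) plausible range.
RANGE_RULES = {"heart_rate_bpm": (30, 220), "fev1_l": (0.3, 7.0)}
# Hypothetical expectation: every visit should have data from each of these sources.
EXPECTED_SOURCES = {"ePRO", "eCRF"}


def reconcile(records):
    """Merge records by (participant, visit); return (merged, queries).

    merged keeps the first value seen for each variable together with its source.
    """
    merged, seen_sources, queries = {}, {}, []
    for r in records:
        key = (r.participant_id, r.visit)
        merged.setdefault(key, {})
        seen_sources.setdefault(key, set()).add(r.source)
        for var, val in r.values.items():
            lo, hi = RANGE_RULES.get(var, (float("-inf"), float("inf")))
            if not lo <= val <= hi:
                queries.append(f"{key}: {var}={val} from {r.source} outside [{lo}, {hi}]")
            prior = merged[key].get(var)
            if prior is not None and prior[0] != val:
                queries.append(f"{key}: {var} conflict {prior[1]}={prior[0]} vs {r.source}={val}")
            merged[key].setdefault(var, (val, r.source))
    for key, sources in seen_sources.items():
        missing = EXPECTED_SOURCES - sources
        if missing:
            queries.append(f"{key}: missing source(s) {sorted(missing)}")
    return merged, queries
```

In this sketch, conflicting values are queried rather than silently overwritten. This mirrors the intent that reconciliation decisions are made and documented by the study team.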

4. Diagram: Hybrid DCT Participant Data Flow

Title: Data Flow in a Hybrid Decentralized Clinical Trial

Application Notes

Applied to cell signaling, the SES framework provides a systematic methodology for probing and quantifying signaling pathway responses to therapeutic candidates. Its core application lies in moving beyond static biomarker measurement to a dynamic, systems-level understanding of drug mechanism of action (MoA), pharmacodynamics (PD), and early toxicity.

Primary Use Cases:

- MoA Deconvolution: Differentiating direct target engagement from downstream network effects and off-target signaling.

- Lead Compound Optimization: Ranking analogs by the desired signaling profile (e.g., maximal pathway A activation with minimal pathway B engagement).

- Biomarker Identification: Discovering phospho-proteins or pathway nodes whose modulation correlates with efficacy in vitro, informing companion diagnostic development.

- Predictive Toxicology: Identifying aberrant signaling signatures (e.g., sustained ERK vs. transient ERK) linked to adaptive resistance or cytotoxicity early in development.

- Combination Therapy Rationale: Mapping signaling crosstalk to identify synergistic or compensatory pathways for rational drug pairing.

Quantitative Data Summary: Comparative Output of SES vs. Traditional Assays

| Metric | Traditional ELISA/Western Blot | SES Framework (Multiplex Phospho-Flow) | Implication for Drug Development |
|---|---|---|---|
| Pathway Nodes Measured | 1-3 per experiment | 10-15+ simultaneously | Holistic network view, detects signaling crosstalk. |
| Time to Result | 24-48 hours | 4-6 hours | Faster iteration for high-throughput compound screening. |
| Cell Number Required | High (1-5 x 10^6) | Low (5 x 10^5 per condition) | Enables screening with primary patient-derived cells. |
| Data Granularity | Population average | Single-cell resolution | Identifies heterogeneous subpopulations in response. |
| Key Output | Absolute protein amount | Signaling Potential (SP): dynamic range of node phosphorylation. | Functional readout of cellular capacity; potentially more predictive of in vivo response. |

Protocol 1: SES for Lead Compound Profiling via Multiplex Phospho-Flow Cytometry

Objective: To quantify and compare the dynamic signaling network perturbation induced by three lead candidate compounds (Cand A, B, C) targeting Receptor Tyrosine Kinase X (RTK-X) in a primary cancer cell line.

Materials & Reagents:

- Cells: Patient-derived glioblastoma stem-like cells (GSCs), starved of growth factors for 4 hours.

- Stimuli/Inhibitors:

  - Positive Control: Recombinant RTK-X Ligand (100 ng/mL).

  - Lead Compounds: Cand A, B, C (10 µM, 1 µM, 0.1 µM doses).

  - Negative Control: DMSO vehicle.

- Fixation & Permeabilization: BD Cytofix Fixation Buffer; ice-cold 100% methanol.

- Antibody Panel: Conjugated antibodies against p-ERK1/2 (T202/Y204), p-AKT (S473), p-STAT3 (Y705), p-S6 (S235/236), p-p38 (T180/Y182), and a viability dye.

- Equipment: 96-well U-bottom plate, 37°C CO2 incubator, tube rotator, flow cytometer capable of detecting 6+ fluorochromes.

Methodology:

- Cell Preparation: Aliquot 5x10^5 GSCs per well into a 96-well plate. Centrifuge, aspirate, and resuspend in 90 µL starvation medium.

- Stimulation: Prepare 10X stocks of all stimuli/inhibitors. Add 10 µL to appropriate wells to achieve final concentration. Incubate at 37°C for precisely 0 (unstimulated), 5, 15, and 60 minutes.

- Fixation: Immediately add 100 µL of pre-warmed 2X BD Cytofix buffer directly to each well. Mix gently and incubate for 10 minutes at 37°C.

- Permeabilization & Staining: Centrifuge, aspirate. Permeabilize cells with 100 µL ice-cold 100% methanol for 30 minutes on ice. Wash twice with staining buffer. Add titrated antibody cocktail (50 µL/well). Incubate for 1 hour at RT in the dark.

- Acquisition: Wash cells twice, resuspend in staining buffer. Acquire data on flow cytometer, collecting ≥10,000 viable single-cell events per condition.

- Analysis: Use FlowJo or equivalent. Gate on single, viable cells. Calculate Median Fluorescence Intensity (MFI) for each phospho-epitope. Compute Signaling Potential (SP) as:

SP = (MFI_stimulated - MFI_unstimulated) / MFI_unstimulated.
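Once gated per-cell intensities have been exported (for example, one list per channel after single/viable gating), the SP calculation reduces to a ratio of medians. The following is a minimal sketch. It assumes linear-scale intensities and uses the 0-minute condition as the unstimulated reference. The data layout and condition labels are hypothetical, and real exports from FlowJo or similar tools need to be parsed into this shape first.

```python
from statistics import median


def mfi(intensities) -> float:
    """Median fluorescence intensity of the gated (single, viable) events for one channel."""
    if not intensities:
        raise ValueError("no gated events")
    return median(intensities)


def signaling_potential(stim_events, unstim_events) -> float:
    """SP = (MFI_stimulated - MFI_unstimulated) / MFI_unstimulated."""
    base = mfi(unstim_events)
    if base <= 0:
        raise ValueError("unstimulated MFI must be positive")
    return (mfi(stim_events) - base) / base


def sp_table(data: dict, markers, unstim_key: str = "0 min") -> dict:
    """data: condition -> marker -> per-cell intensities. Returns condition -> marker -> SP."""
    return {
        cond: {m: signaling_potential(events[m], data[unstim_key][m]) for m in markers}
        for cond, events in data.items()
        if cond != unstim_key
    }


data = {"0 min": {"p-ERK1/2": [100, 200, 300]}, "15 min": {"p-ERK1/2": [500, 600, 700]}}
print(sp_table(data, ["p-ERK1/2"]))  # {'15 min': {'p-ERK1/2': 2.0}}
```

The median is used rather than the mean because it is the MFI statistic named in the Analysis step and is less sensitive to bright outlier events.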

Protocol 2: SES for Predictive Toxicity Signaling Signature

Objective: To identify a sustained proliferative signaling signature associated with compound-induced adaptive resistance.

Materials & Reagents: As in Protocol 1, with addition of:

- Compounds: Tool compound (known cytostatic agent) and New Chemical Entity (NCE).

- Antibody Additions: Antibodies for cell cycle markers (Ki-67) and apoptosis (Cleaved Caspase-3).

Methodology:

- Chronic Exposure: Treat GSCs with DMSO, tool compound, or NCE at IC50 for 72 hours.

- Acute Re-stimulation: Wash cells thoroughly and re-stimulate with RTK-X Ligand (100 ng/mL) for 15 minutes. Then fix, permeabilize, stain, and acquire as in Protocol 1 (Stimulation through Acquisition steps).

- Analysis: Quantify phospho-signaling in the Ki-67+ (cycling) cell subpopulation. A signature of sustained high p-ERK/p-AKT in cycling cells after chronic exposure indicates a risk of adaptive resistance and poor long-term efficacy.
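The subpopulation comparison in the Analysis step can be sketched as follows. Several parameters are hypothetical: the Ki-67 positivity threshold (in practice set from an FMO or isotype control), the comparison against vehicle-treated, re-stimulated cells, and the 1.5-fold `ratio_cutoff`. The protocol itself does not define a numeric cutoff.

```python
from statistics import median


def cycling_phospho_medians(cells, ki67_threshold, markers=("p-ERK1/2", "p-AKT")):
    """Median phospho signal among Ki-67+ cells; each cell is a dict of per-channel intensities."""
    cycling = [c for c in cells if c["Ki-67"] > ki67_threshold]
    if not cycling:
        return None
    return {m: median(c[m] for c in cycling) for m in markers}


def sustained_signaling_flag(treated_cells, vehicle_cells, ki67_threshold, ratio_cutoff=1.5):
    """Ratio of treated to vehicle medians in Ki-67+ cells, and whether every marker exceeds the cutoff."""
    treated = cycling_phospho_medians(treated_cells, ki67_threshold)
    vehicle = cycling_phospho_medians(vehicle_cells, ki67_threshold)
    if treated is None or vehicle is None:
        raise ValueError("no Ki-67+ cells in one of the conditions")
    ratios = {m: treated[m] / vehicle[m] for m in treated}
    return ratios, all(r >= ratio_cutoff for r in ratios.values())


vehicle = [{"Ki-67": 900, "p-ERK1/2": 100, "p-AKT": 200}, {"Ki-67": 50, "p-ERK1/2": 999, "p-AKT": 999}]
treated = [{"Ki-67": 800, "p-ERK1/2": 200, "p-AKT": 400}]
print(sustained_signaling_flag(treated, vehicle, ki67_threshold=500))
```

The non-cycling vehicle cell above is excluded by the gate, so its high phospho values do not dilute the comparison. This is the reason the protocol restricts the readout to the Ki-67+ subpopulation.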

Visualizations

SES Experimental Protocol Workflow

SES Reveals On & Off Target Signaling

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent/Material | Function in SES Framework | Example Product/Catalog |
|---|---|---|
| Phospho-Specific Antibody Panels | Simultaneous detection of multiple phosphorylated signaling nodes at single-cell resolution. | BioLegend TotalSeq antibodies for CITE-seq; Cell Signaling Technology XP monoclonal antibodies. |
| LIVE/DEAD Fixable Viability Dyes | Exclusion of dead cells which exhibit non-specific antibody binding, critical for data quality. | Thermo Fisher eFluor 506 or Invitrogen Fixable Viability Dye eFluor 780. |
| BD Phosflow Lyse/Fix & Permeabilization Buffers | Standardized, optimized buffers for preservation of labile phospho-epitopes post-stimulation. | BD Biosciences Cat. No. 558049 (Lyse/Fix Buffer) and 558050 (Perm Buffer III). |
| Mass Cytometry (CyTOF) Metal-labeled Antibodies | For ultra-high-parameter SES (40+ nodes), avoiding spectral overlap of traditional flow cytometry. | Standard BioTools Maxpar Direct Immune Profiling Assay. |
| Recombinant Growth Factors/Cytokines | High-purity stimuli for positive control pathways and pathway challenge assays. | PeproTech or R&D Systems GMP-grade recombinant human proteins. |
| Data Analysis Software (e.g., Citrus, FlowSOM) | Automated, high-dimensional analysis to identify signaling clusters without prior bias. | Cytobank platform for Citrus algorithm; R/Bioconductor FlowSOM package. |

Within the step-by-step methodology of the SES framework, integrating heterogeneous data and then analyzing it in context is a critical phase for generating actionable insights in biomedical research. This application note details protocols for unifying multi-omics, clinical, and literature-derived data under SES, and the advantages this confers, enabling robust systems-level understanding in drug development.

Table 1: Impact of SES-Driven Data Integration on Analytical Output in a Representative Multi-Omics Study

| Metric | Pre-SES (Manual Pipeline) | Post-SES (Standardized Pipeline) | Improvement |
|---|---|---|---|
| Data Processing Time | 14.5 ± 2.1 days | 3.2 ± 0.7 days | ~78% reduction |
| Cross-Platform Data Sources Integrated | 3 (max) | 8 (routine) | ~167% increase |
| Assay-Specific Batch Effect Correction Rate | 65% | 94% | 29 percentage points |
| Reproducibility Score (Cohen's κ) | 0.45 ± 0.15 | 0.88 ± 0.05 | ~96% increase |
| Contextually Annotated Findings | ~30% of hits | ~85% of hits | ~183% increase |

Detailed Experimental Protocols

Protocol 3.1: SES-Guided Multi-Omics Data Unification

Objective: To integrate transcriptomic, proteomic, and metabolomic datasets from a compound-treated cell line study using SES-defined ontologies and quality controls.

Materials: See "The Scientist's Toolkit" (Table 2) below. Procedure:

- Raw Data Ingestion (SES Step 4.1): For each dataset, initiate an SES Experimental Instance. Upload raw data files (.fastq, .raw, .d) to the SES Data Lake, triggering automated MD5 checksum validation and metadata tagging using the SES Sample Ontology (SES-SO).

- Primary Processing & QC (SES Step 4.2): Execute SES-Certified, version-controlled pipelines (e.g., Nextflow/Snakemake) within dedicated containers.

  - RNA-seq: Alignment or pseudo-alignment (STAR or Salmon), gene-level quantification. QC: RSeQC report.

  - Proteomics (LFQ): Processing and label-free quantification via FragPipe or MaxQuant. QC: Missing data heatmap.

  - Metabolomics: Peak picking (XCMS), annotation (CAMERA). QC: Pooled QC sample CV < 15%.

  - All QC reports are automatically parsed by the SES-QC module; only "PASS" instances proceed.

- Normalization & Batch Correction (SES Step 4.3): Apply SES-prescribed ComBat-seq (transcriptomics) and ComBat (proteomics/metabolomics) using the SES_batch_correct function, with batch defined in sample metadata.

- Ontological Unification (SES Step 5.1): Map all features (genes, proteins, metabolites) to SES-Central Identifier (SES-CID) using the map_to_SESCID API. Features without a valid SES-CID are flagged for manual curation.

- Integrated Matrix Creation (SES Step 5.2): Generate a unified data matrix (samples x SES-CIDs) using the SES_unify_matrix tool. Log-transform and Z-score normalize within assay type.
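The behavior of the SES_unify_matrix tool is not documented here. As a stand-in, the following sketch shows one plausible reading of "log-transform and Z-score normalize within assay type". It assumes each assay's features share the same sample order. It applies log2 with a pseudocount of 1, z-scores each feature across samples (population SD), and stacks all features under (assay, feature ID) keys that play the role of SES-CIDs. The result is features x samples; transpose it for a samples x SES-CID view. The pseudocount, the SD convention, and the orientation are all assumptions.

```python
import math
from statistics import mean, pstdev


def zscore_rows(matrix: dict) -> dict:
    """matrix: feature -> per-sample values; z-score each feature across samples."""
    out = {}
    for feature, values in matrix.items():
        mu, sd = mean(values), pstdev(values)
        # A constant feature carries no between-sample information; map it to zeros.
        out[feature] = [0.0 if sd == 0 else (v - mu) / sd for v in values]
    return out


def unify(assays: dict, pseudocount: float = 1.0) -> dict:
    """assays: assay -> {feature id -> per-sample values, shared sample order}.

    Log2-transforms with a pseudocount and z-scores within each assay, then stacks all
    features into one table keyed by (assay, feature id).
    """
    unified = {}
    for assay, matrix in assays.items():
        logged = {f: [math.log2(v + pseudocount) for v in vals] for f, vals in matrix.items()}
        for feature, z in zscore_rows(logged).items():
            unified[(assay, feature)] = z
    return unified


assays = {"rna": {"CID1": [0, 1, 3]}, "prot": {"CID2": [7, 7, 7]}}
print(unify(assays))
```

Scaling within each assay before stacking keeps one platform's dynamic range from dominating downstream multi-omic analyses.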

Protocol 3.2: Contextual Enrichment Analysis via the SES Knowledge Graph

Objective: To interpret differentially expressed entities from Protocol 3.1 within biological, pathological, and compound contexts.

Procedure:

- Differential Analysis (SES Step 6.1): For each assay matrix, perform moderated t-tests (limma R package) via SES_diff_analysis. Extract entities with FDR < 0.05 and |logFC| > 1 as the "Signature."

- Knowledge Graph Query (SES Step 6.2): Submit the Signature's SES-CID list to the SES Knowledge Graph (KG) Query Endpoint using the SES_KG_enrich function.

  - Query 1: Biological Context. Retrieve associated pathways (GO, Reactome), protein complexes (CORUM), and regulatory miRNAs.

  - Query 2: Pathological Context. Retrieve associations with diseases (MONDO, DO), clinical phenotypes (HPO), and genetic constraints (gnomAD).

  - Query 3: Chemical Context. Retrieve known interactions with small molecules (ChEMBL), approved drugs (DrugBank), and chemical probes.

- Enrichment Synthesis (SES Step 6.3): Consolidate query results. Calculate hypergeometric p-values for all retrieved associations. Generate a ranked list of contextual themes (e.g., "Inflammatory Response," "Mitochondrial Dysfunction," "Kinase Inhibition").
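The hypergeometric calculation in Step 6.3 is a one-sided over-representation test. The following is a minimal stdlib sketch. The `annotations` dict stands in for whatever the SES Knowledge Graph returns (term -> set of entity IDs), and `universe` should be every entity measured in the assay. The Benjamini-Hochberg adjustment is an added assumption, since the protocol does not name a multiple-testing correction.

```python
from math import comb


def hypergeom_sf(k: int, M: int, n: int, N: int) -> float:
    """P(X >= k) for X ~ Hypergeometric(population M, n annotated, N drawn)."""
    upper = min(n, N)
    return sum(comb(n, i) * comb(M - n, N - i) for i in range(k, upper + 1)) / comb(M, N)


def benjamini_hochberg(pvals: list) -> list:
    """BH-adjusted q-values, returned in the input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running = 1.0
    for rank in range(m, 0, -1):          # walk from the largest p-value down
        i = order[rank - 1]
        running = min(running, pvals[i] * m / rank)
        adjusted[i] = running
    return adjusted


def enrich(signature, universe, annotations: dict) -> list:
    """Return (term, overlap, p, q) tuples sorted by p, for terms overlapping the signature."""
    universe = set(universe)
    sig = set(signature) & universe
    tested = []
    for term, members in annotations.items():
        members = set(members) & universe
        k = len(sig & members)
        if k == 0:
            continue
        tested.append((term, k, hypergeom_sf(k, len(universe), len(members), len(sig))))
    qvals = benjamini_hochberg([p for _, _, p in tested])
    return sorted(((t, k, p, q) for (t, k, p), q in zip(tested, qvals)), key=lambda r: r[2])


universe = [f"E{i}" for i in range(10)]
print(enrich(["E0", "E1", "E2"], universe, {"T1": {"E0", "E1", "E2"}, "T2": {"E5"}}))
```

Restricting both the signature and each term to the measured universe keeps the background correct. Otherwise, entities the assay could never detect would deflate the p-values.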

Visualizations

SES Data Integration & Analysis Workflow

SES Knowledge Graph Contextual Query Map

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for SES-Enhanced Integrated Analysis

| Item | Supplier/Resource | Function in SES Protocol |
|---|---|---|
| SES Sample Ontology (SES-SO) | Internal SES Registry | Standardizes all sample metadata (cell type, treatment, dose, time) for unambiguous integration. |
| SES-Central Identifier (SES-CID) Database | Internal SES Repository | Provides a unique, stable identifier for each biological entity (gene, protein, metabolite) across all platforms. |
| SES-Certified Pipeline Containers | e.g., DockerHub, Sylabs | Version-controlled, portable software environments ensuring reproducible data processing (Step 4.2). |
| SES Knowledge Graph | Internal SES Resource | Integrates public databases (GO, ChEMBL, MONDO, HPO) into a single queryable graph for contextual enrichment. |
| SES Batch Correction Module (SES_batch_correct) | Internal SES R/Python Package | Implements standardized algorithms for removing technical variation across assay batches and platforms. |
| Multi-Omics QC Reference Materials | e.g., HeLa Cell Line, NIST SRM 1950 | Provides a biologically consistent sample for cross-assay performance validation and normalization bridging. |

Application Notes

Successful deployment of the SES framework in drug discovery research hinges on three foundational pillars. These prerequisites ensure methodological rigor, reproducibility, and translational validity.

1. Data Types: The Multi-Omic and Clinical Bedrock. Research must integrate structured, high-dimensional data from diverse sources. Quantitative omics data provides mechanistic insight, while phenotypic and clinical data anchor findings in biological reality. The challenge lies in harmonizing disparate data types with varying scales, missingness, and noise profiles. A FAIR (Findable, Accessible, Interoperable, Reusable) data management plan is non-negotiable from inception.

2. Team Skills: Interdisciplinary Convergence. The complexity of modern drug development dissolves traditional disciplinary silos. Effective teams require a dynamic combination of deep domain expertise (e.g., molecular biology, clinical medicine) and advanced quantitative skills (e.g., computational biology, bioinformatics, machine learning). Critically, team members must possess collaborative literacy: the ability to communicate across technical languages and integrate diverse perspectives into a unified research strategy.

3. Infrastructure Needs: Computational and Experimental Scaffolding. This encompasses both physical and digital research environments. It requires robust, scalable computational resources (high-performance computing, cloud platforms) for data processing and modeling, coupled with standardized, quality-controlled experimental wet-labs for hypothesis validation. Secure, version-controlled data repositories and collaborative digital workspaces (e.g., electronic lab notebooks, project management software) form the connective tissue.

Protocols

Protocol 1: Multi-Omic Data Integration and Quality Control

Objective: To acquire, preprocess, and perform initial quality control (QC) on transcriptomic, proteomic, and metabolomic data for integration within an SES-based analysis. Materials: Raw sequencing data (FASTQ), mass spectrometry raw files (e.g., .raw, .d), associated sample metadata, high-performance computing cluster or cloud instance. Methodology:

- Data Acquisition: Retrieve raw data from repositories (e.g., GEO, PRIDE) or core facility outputs. Log all metadata (sample ID, condition, batch, operator) in a centralized database.

- Parallel Preprocessing:

  - Transcriptomics: Align RNA-seq reads to reference genome (e.g., using STAR). Generate gene-level counts (featureCounts). Perform QC with FastQC and MultiQC.

  - Proteomics: Process raw files via pipeline (e.g., MaxQuant, DIA-NN). Generate peptide/protein intensity matrices. Filter for contaminants and decoys.

  - Metabolomics: Process raw spectral data (e.g., using XCMS, MS-DIAL). Perform peak picking, alignment, and annotation.

- Normalization & Batch Correction: Apply appropriate normalization (e.g., DESeq2 median-of-ratios for RNA-seq, median centering for proteomics). Use ComBat or SVA to correct for technical batch effects.

- Initial Integration QC: Use Principal Component Analysis (PCA) on each normalized dataset to visualize clustering by expected biological groups and identify outlier samples. Document all parameters and software versions.
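The median-of-ratios normalization named in the Normalization & Batch Correction step can be illustrated in plain Python. The sketch builds a pseudo-reference sample from each gene's geometric mean across samples, skipping genes with any zero count. Each sample's size factor is the median of its count-to-reference ratios. This illustrates the method only and is not a substitute for DESeq2 itself, which provides additional options. The gene and sample data below are hypothetical.

```python
import math
from statistics import median


def size_factors(counts: dict) -> list:
    """counts: gene -> raw counts per sample (same sample order for every gene)."""
    n_samples = len(next(iter(counts.values())))
    # Per-gene log geometric mean; genes with a zero count are excluded because
    # their geometric mean is zero (its log is undefined).
    log_geo_means = {
        gene: sum(math.log(c) for c in row) / n_samples
        for gene, row in counts.items()
        if all(c > 0 for c in row)
    }
    if not log_geo_means:
        raise ValueError("every gene has at least one zero count; size factors are undefined")
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(counts[g][j]) - lgm for g, lgm in log_geo_means.items()]
        factors.append(math.exp(median(log_ratios)))
    return factors


def normalize_counts(counts: dict, factors: list) -> dict:
    """Divide each sample's counts by its size factor."""
    return {gene: [c / s for c, s in zip(row, factors)] for gene, row in counts.items()}


# Sample 2 is sequenced exactly twice as deeply as sample 1.
counts = {"g1": [10, 20], "g2": [100, 200], "g3": [5, 10], "g4": [0, 5]}
factors = size_factors(counts)
print([round(f, 4) for f in factors])  # [0.7071, 1.4142]
```

Taking the median across genes keeps the factors robust to a minority of genes that are genuinely differentially expressed. This is why the method is preferred over simple total-count scaling.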

Protocol 2: Cross-Functional Team Research Sprint

Objective: To structure a collaborative session between wet-lab biologists, data scientists, and clinical researchers to define a testable systems hypothesis. Materials: Pre-curated data summaries, visualization tools, structured meeting agenda, designated facilitator. Methodology:

- Pre-Sprint Briefing: Distribute pre-reads containing key data summaries (e.g., differential expression tables, pathway enrichment results) to all participants 48 hours in advance.

- Hypothesis Framing (Hour 1): The biologist presents a mechanistic question. The clinical researcher adds human disease context and phenotypic constraints.

- Data Interrogation (Hour 2): The data scientist presents relevant computational analyses (e.g., network models, enriched pathways). The group collaboratively reviews visualizations.

- Integrated Experimental Design (Hour 3): The team co-develops a validation plan. This includes:

  - Defining key in vitro or in vivo perturbation experiments.

  - Specifying the exact omics assays to be performed post-perturbation.

  - Outlining the analytical pipeline for validation data.

- Actionable Output: Document the agreed-upon hypothesis, experimental plan, roles, and timelines in a shared electronic lab notebook.

Protocol 3: Infrastructure Validation for Reproducible Analysis

Objective: To verify that the computational environment can accurately reproduce a benchmark analysis pipeline.

Materials: A published dataset with known results (e.g., from a reproducibility study), containerization software (Docker/Singularity), and a workflow management tool (Nextflow/Snakemake).

Methodology:

- Containerization: Package the benchmark analysis pipeline (including all dependencies, R/Python libraries, and version-specific tools) into a Docker container.

- Workflow Codification: Script the pipeline steps (data download, preprocessing, analysis, reporting) within a Nextflow or Snakemake workflow.

- Execution & Comparison: Run the containerized workflow on the benchmark dataset in the target infrastructure (HPC or cloud).

- Reproducibility Metric: Compare the final output (e.g., differentially expressed gene list, p-values, pathway rankings) to the published benchmark results using concordance metrics (e.g., Spearman correlation >0.95, Jaccard similarity for gene sets). Successful validation certifies the infrastructure for production research.
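The reproducibility check can be sketched as two concordance measures: Spearman correlation on a per-gene statistic shared by both runs, and Jaccard similarity between the significant gene sets. This is an illustrative sketch, not a prescribed certification script; the function names and data layout are assumptions, the 0.95 Spearman cut-off comes from the protocol, and the `jaccard_min=0.9` default is a hypothetical threshold because the protocol does not fix one.

```python
from scipy.stats import spearmanr


def jaccard(a, b):
    """Jaccard similarity |A ∩ B| / |A ∪ B| of two collections (1.0 if both are empty)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def concordance(reproduced, benchmark, sig_reproduced, sig_benchmark,
                rho_min=0.95, jaccard_min=0.9):
    """Compare a reproduced analysis with a published benchmark.

    reproduced / benchmark: dict gene -> statistic (e.g. log2 fold change).
    sig_reproduced / sig_benchmark: genes called significant in each run.
    jaccard_min is a hypothetical cut-off chosen for illustration.
    """
    shared = sorted(set(reproduced) & set(benchmark))   # compare only genes present in both
    rho, _ = spearmanr([reproduced[g] for g in shared],
                       [benchmark[g] for g in shared])
    rho = float(rho)
    jac = jaccard(sig_reproduced, sig_benchmark)
    return {
        "n_shared": len(shared),
        "spearman_rho": rho,
        "jaccard": jac,
        "passed": bool(rho > rho_min and jac >= jaccard_min),
    }
```

Reporting `n_shared` alongside the metrics helps reveal cases where a high correlation hides a large loss of genes between runs.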

Data Tables

Table 1: Essential Multi-Omic Data Types for SES Drug Discovery

| Data Type | Typical Volume per Sample | Key QC Metrics | Common File Formats | Primary Use in SES |
|---|---|---|---|---|
| Genomics (WES/WGS) | 80-100 GB (FASTQ) | Mean coverage (>100x), % aligned reads, insert size | FASTQ, BAM, VCF | Identifying genetic drivers & patient stratification markers. |
| Transcriptomics (RNA-seq) | 20-50 GB (FASTQ) | RIN score, % rRNA, library complexity | FASTQ, BAM, count matrix | Elucidating disease-associated pathways & mechanism of action. |
| Proteomics (LC-MS/MS) | 2-5 GB (.raw) | # MS/MS spectra, # proteins ID'd, CV of technical replicates | .raw, .d, .mgf, .mzML | Quantifying functional effector proteins & pharmacodynamic markers. |
| Metabolomics | 0.5-2 GB (.d) | Total ion chromatogram QC, peak shape, blank subtraction | .d, .mzML, .mzXML | Profiling biochemical phenotypes & therapeutic response signatures. |
| Clinical/Phenotypic | Structured tables | Data completeness, value ranges, outlier checks | CSV, SQL, REDCap | Anchoring models in patient-relevant outcomes & covariates. |

Table 2: Core Team Skills Matrix for an SES-Driven Project

| Role | Essential Technical Skills | Essential Collaborative Skills | Key Deliverables |
|---|---|---|---|
| Project Lead | Deep disease biology, drug development process | Strategic planning, interdisciplinary negotiation | Integrated project plan, go/no-go decisions |
| Wet-Lab Scientist | Cell/animal models, molecular assays, omics sample prep | Translating computational predictions into testable experiments | High-quality experimental validation data |
| Computational Biologist | Statistical analysis, bioinformatics pipelines, R/Python | Communicating complex results to non-experts, defining the biological question | Processed datasets, differential analysis, initial insights |
| Data Scientist/ML Engineer | Machine learning, network modeling, cloud computing | Co-designing analysis with biologists, managing computational infrastructure | Predictive models, integrated networks, deployable code |
| Clinical Research Scientist | Clinical trial design, biomarker discovery, regulatory knowledge | Interpreting biological findings in patient context, safety assessment | Patient stratification strategy, clinical endpoint correlation |

Table 3: Minimum Infrastructure Specifications

| Component | Minimum Specification | Recommended for Scale | Key Management Software |
|---|---|---|---|
| Compute (CPU/GPU) | 64-core server, 512 GB RAM, 2x consumer GPU | HPC cluster or cloud (AWS/GCP) with 1000+ cores, multi-node GPU | Slurm/Kubernetes for job scheduling |
| Storage (Active) | 500 TB high-speed network storage (NVMe/SSD cache) | 5+ PB tiered storage (hot/cold) with automated backup | Lustre/WEKA for parallel file systems |
| Data Management | Institutional server with versioning (e.g., GitLab) | Cloud-based platform (DNAnexus, Terra) with FAIR tools | Electronic Lab Notebook (ELN), data catalogs |
| Network & Security | 10 GbE internal, encrypted data transfer, access controls | 100 GbE, zero-trust architecture, audit logging | Institutional firewall, VPN, data encryption at rest/in transit |
| Lab Infrastructure | Standardized, QC'd equipment for core assays (PCR, LC-MS) | Automated liquid handlers, high-content imagers, robotic storage | Laboratory Information Management System (LIMS) |

Diagrams

Diagram Title: Prerequisites Converging on SES Framework Deployment

Diagram Title: Multi-Omic Data Integration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Key Reagents & Materials for Systems Validation Experiments

| Item | Function in SES Context | Example Product/Assay |
|---|---|---|
| CRISPR Knockout/Knockdown Kits | Perturbation of computationally-predicted key nodes (genes/proteins) in a network to validate causality. | Synthego CRISPR kits, Dharmacon siRNA libraries. |
| Multiplex Immunoassay Panels | Simultaneous quantification of multiple protein targets (e.g., phospho-proteins, cytokines) to measure system-wide signaling response. | Luminex xMAP, Olink PEA, MSD U-PLEX. |
| LC-MS/MS Grade Solvents & Columns | Essential for generating high-quality, reproducible proteomic and metabolomic data for model building and validation. | Thermo Fisher Pierce solvents, Waters ACQUITY UPLC columns. |
| Viability/Proliferation Assay Reagents | Quantitative phenotypic readout of cellular response to perturbation, linking molecular models to function. | CellTiter-Glo, RealTime-Glo MT. |
| Single-Cell RNA-seq Library Prep Kits | Deconvolution of bulk transcriptomic signatures into cell-type-specific responses, refining network models. | 10x Genomics Chromium, Parse Biosciences Evercode. |
| Pathway Reporter Assays | Functional validation of activity in specific signaling pathways predicted to be dysregulated. | Cignal Lenti reporters (Qiagen), Pathway-Specific SEAP assays. |
| High-Content Imaging Reagents | Multiparametric, single-cell phenotypic data for training morphological response models. | Cell Painting dyes (MitoTracker, Phalloidin, etc.), fluorescent antibody conjugates. |

Step-by-Step SES Framework Protocol: From Study Design to Analysis

The SES framework provides a systematic, hypothesis-driven approach for planning biomedical research with maximum scientific rigor and regulatory relevance. Within this guide, Phase 1 (Pre-Implementation) establishes foundational alignment: it ensures that the specific aims of a preclinical or clinical study are explicitly designed to address the core objectives of the overarching SES, which typically evaluate a drug's mechanism of action, efficacy, and safety profile in the context of existing evidence. Misalignment at this stage can lead to redundant, inconclusive, or non-generalizable data, wasting resources and delaying development timelines.

Core Principles for Alignment

The alignment process is governed by three core principles:

- Specificity: Study aims must translate high-level SES objectives into testable, measurable hypotheses.

- Contribution: Each proposed experiment must directly fill a predefined evidence gap identified in the SES evidence map.

- Generalizability: Study design must consider the broader context (e.g., disease models, patient populations, endpoints) to ensure findings contribute to the SES's external validity.

Quantitative Landscape of Research Alignment Gaps

A review of recent literature and project audits reveals common pitfalls in the pre-implementation phase. The following table summarizes key quantitative data on alignment gaps in early-stage drug development research.

Table 1: Prevalence and Impact of Aims-Objectives Misalignment in Preclinical Research

| Misalignment Category | Prevalence in Audited Studies | Mean Delay in Project Timeline (Weeks) | Primary Contributing Factor |
|---|---|---|---|
| Endpoint Mismatch | 42% | 14.2 | Use of surrogate endpoints not validated against SES primary outcome. |
| Model Irrelevance | 31% | 18.5 | Disease model does not recapitulate key pathophysiology targeted by SES. |
| Underpowered Design | 38% | 22.0 | Sample size calculated for effect size not relevant to SES objective. |
| Biomarker Disconnect | 27% | 16.8 | Exploratory biomarker not linked to SES mechanism-of-action hypothesis. |

Data synthesized from internal portfolio reviews (2022-2024) and published meta-research (e.g., *Nature Reviews Drug Discovery*). Categories are not mutually exclusive, so the prevalences sum to more than 100%.

Protocol: The Alignment Workshop & Gap Analysis

This structured protocol guides research teams through the essential pre-implementation alignment exercise.

4.1 Objective: To formally map and validate proposed study aims against the parent SES objectives, identifying and resolving gaps prior to protocol finalization.

4.2 Materials & Stakeholders:

- Inputs: SES Protocol Document, Evidence Gap Map, Draft Study Protocol.

- Stakeholders: SES Lead, Study Principal Investigator, Biostatistician, Translational Science Lead.

- Tools: Alignment Matrix Worksheet (Table 2), Gap Log.

4.3 Procedure:

- SES Objective Deconstruction (Duration: 1 hour): The SES lead presents each high-level SES objective, breaking it down into its core components: Target Population, Intervention Concept, Comparative Framework, and Critical Outcome (TICO).

- Study Aim Mapping (Duration: 1.5 hours): For each deconstructed SES objective, the study PI articulates how each specific aim addresses one or more TICO components. This is recorded in the Alignment Matrix.

- Gap Identification & Scoring (Duration: 1 hour): The team reviews the matrix to identify:

  - Coverage Gaps: SES components with no corresponding study aim.

  - Fidelity Gaps: Study aims that address a component but with insufficient methodological rigor (e.g., wrong model or endpoint).

  - Redundancy: Multiple aims addressing the same component without added value.

  Each gap is scored for severity (High/Medium/Low) based on its potential impact on SES conclusions.

- Mitigation Planning (Duration: 1 hour): For each High-severity gap, the team decides on one of three actions: (a) modify the study aim or design, (b) justify the gap with a rationale for a subsequent study, or (c) refine the SES objective scope. Actions are assigned and dated.
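Once the Alignment Matrix is filled in, the mechanical part of gap identification (uncovered TICO components, low-scoring aims, components touched by several aims) can be screened automatically before the team discusses severity. The Python sketch below assumes the matrix has been exported as two dictionaries; the function name, the data layout, and the `fidelity_threshold=2` cut-off are illustrative assumptions. Its output is a prompt for discussion, not a substitute for the team's severity scoring.

```python
TICO = ("Target Population", "Intervention Concept",
        "Comparative Framework", "Critical Outcome")


def find_gaps(alignment, scores, components=TICO, fidelity_threshold=2):
    """Screen an Alignment Matrix for candidate gaps.

    alignment: dict study-aim ID -> set of TICO components the aim addresses.
    scores: dict study-aim ID -> alignment score (1-5) agreed by the team.
    fidelity_threshold is hypothetical: aims scoring at or below it are flagged.
    """
    coverage = {c: [aim for aim, comps in alignment.items() if c in comps]
                for c in components}
    coverage_gaps = [c for c, aims in coverage.items() if not aims]
    # Several aims on one component are only *candidates* for redundancy;
    # the team still judges whether each aim adds value.
    redundancy_candidates = {c: aims for c, aims in coverage.items() if len(aims) > 1}
    fidelity_gaps = [aim for aim, s in scores.items() if s <= fidelity_threshold]
    return coverage_gaps, fidelity_gaps, redundancy_candidates


if __name__ == "__main__":
    alignment = {"Aim 1.1": {"Intervention Concept"},
                 "Aim 1.2": {"Intervention Concept", "Critical Outcome"}}
    print(find_gaps(alignment, {"Aim 1.1": 2, "Aim 1.2": 5}))
```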

4.4 Deliverable: A completed and signed Study-SES Alignment Matrix (see Table 2) and a resolved Gap Log appended to the study protocol.

Table 2: Study-SES Alignment Matrix Worksheet

| SES Objective (TICO) | Corresponding Study Aim | Experimental Model & Endpoint | Data Output for SES | Alignment Score (1-5) | Gap ID |
|---|---|---|---|---|---|
| Obj. 1: Evaluate efficacy of [Drug X] vs. standard-of-care in [Population Y] on [Outcome Z]. | Aim 1.1: Determine the dose-response of Drug X on [Biomarker A] in [Cell Line/Model Y]. | In vitro model; IC50/EC50. | Dose-response curve; potency estimate. | 2 | GAP-01 |
| Obj. 1: (as above) | Aim 1.2: Assess efficacy of lead dose on [Outcome Z] in [In Vivo Model Y]. | In vivo disease model; Primary clinical endpoint measure. | Efficacy effect size (e.g., tumor volume, survival). | 5 | - |
| Obj. 2: Characterize mechanism of action via [Pathway B] inhibition. | Aim 2.1: Measure pathway activation (p-Protein/total) in treated vs. control tissues. | Ex vivo tissue analysis via Western Blot/IHC. | Quantitative phospho-protein data. | 4 | - |

Diagram 1: SES Pre-Implementation Alignment Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Mechanistic Alignment Experiments

| Reagent / Solution | Function in Alignment Context | Example & Rationale |
|---|---|---|
| Validated Disease-Relevant Cell Lines | Provides a biologically relevant system to test the primary mechanism hypothesized in the SES. | Patient-derived organoids with confirmed target expression ensure translational fidelity to the SES-defined population. |
| Target-Selective Inhibitors/Activators (Tool Compounds) | Serves as positive/negative controls to verify assay specificity and link results directly to the SES target. | Use of a well-characterized, clinically approved drug targeting the same pathway confirms expected phenotypic readouts. |
| Phospho-Specific Antibodies & Multiplex Assay Panels | Enables quantitative measurement of pathway modulation, a common SES objective for MoA confirmation. | Luminex or MSD panels for key pathway phospho-proteins provide direct evidence of target engagement. |
| In Vivo Pharmacodynamic (PD) Biomarker Assay Kits | Bridges in vitro findings to in vivo models, aligning early study aims with later-stage SES efficacy objectives. | ELISA kits for measuring cleaved caspase-3 in tumor lysates post-treatment to confirm apoptosis induction. |
| CRISPR Knockout/Knockdown Libraries | Empowers causal validation that an observed phenotype is specifically due to the target in the SES hypothesis. | A focused library targeting genes in the pathway of interest to identify synthetic lethality or resistance mechanisms. |

Experimental Protocol: In Vitro Target Engagement & Pathway Modulation Assay

This protocol is a primary method for addressing SES objectives related to confirming a drug's mechanism of action (e.g., Aim 2.1 in Table 2).

6.1 Title: Protocol for Assessing Target Engagement and Downstream Pathway Modulation in a 2D Cell Culture Model.

6.2 Objective: To quantitatively demonstrate that Drug X inhibits its intended target (Target T), leading to decreased phosphorylation of downstream effector protein E, within a disease-relevant cell line.

6.3 Materials:

- Disease-relevant cell line (e.g., HCC827 for EGFR-driven NSCLC).

- Complete cell culture medium.

- Drug X stock solution, Vehicle control, Reference inhibitor control.

- Cell lysis buffer (RIPA with fresh protease/phosphatase inhibitors).

- BCA Protein Assay Kit.

- Pre-cast SDS-PAGE gels, Western blot transfer apparatus.

- Primary antibodies: Anti-Target T (total), Anti-p-Target T, Anti-Protein E (total), Anti-p-Protein E, Anti-β-Actin.

- HRP-conjugated secondary antibodies.

- Chemiluminescent substrate and imaging system.

6.4 Detailed Procedure:

- Cell Seeding & Treatment: Seed cells in 6-well plates at a density that yields approximately 70% confluence at the time of treatment. After 24 h, treat triplicate wells with: (a) vehicle, (b) Drug X at three concentrations (0.1x, 1x, and 10x the predicted IC50), and (c) the reference inhibitor control. Incubate for 1 h (acute signaling) and 24 h (sustained effect), using a separate plate for each time point.

- Cell Lysis & Protein Quantification: Aspirate medium and wash with cold PBS. Add 150 µL lysis buffer per well. Scrape, transfer the lysate, vortex, and centrifuge (14,000 × g, 15 min, 4°C). Collect the supernatant. Determine protein concentration using the BCA assay.

- Western Blot Analysis: Denature equal protein amounts (e.g., 20 µg) in Laemmli buffer. Load onto an SDS-PAGE gel and run at constant voltage. Transfer to a PVDF membrane. Block with 5% BSA in TBST for 1 h.

- Immunoblotting: Incubate membrane with primary antibodies (diluted in blocking buffer) overnight at 4°C. Wash (3x TBST, 10min). Incubate with appropriate HRP-secondary antibody for 1h at RT. Wash thoroughly.

- Detection & Analysis: Develop with chemiluminescent substrate. Image on a digital imager. Quantify band intensity using image analysis software (e.g., ImageJ). Normalize p-protein signals to total protein and loading control (β-Actin).

- Data Normalization & Statistics: Express data as mean ± SEM of normalized p-protein/total protein ratio from triplicate wells. Compare treatment groups to vehicle using one-way ANOVA with Dunnett's post-hoc test. Plot dose-response curves.
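The densitometry bookkeeping in the last two steps can be sketched as follows. This is a minimal sketch under stated assumptions: band intensities are exported as one `(phospho, total, beta_actin)` tuple per well, the function name and group labels are hypothetical, and β-actin is used here as a loading check on total protein (when phospho and total bands come from the same lane, the actin term cancels out of the phospho/total ratio).

```python
import numpy as np


def summarise_blots(groups, reference="Vehicle"):
    """Summarise densitometry for one phospho/total protein pair.

    groups: dict group name -> list of (phospho, total, beta_actin) band
    intensities, one tuple per well. Returns, per group, the mean and SEM of
    the phospho/total ratio, that mean as % of the reference group, and the
    mean total/beta-actin ratio as a loading check.
    """
    ratios = {g: np.array([p / t for p, t, _ in wells]) for g, wells in groups.items()}
    ref_mean = ratios[reference].mean()
    summary = {}
    for g, wells in groups.items():
        r = ratios[g]
        summary[g] = {
            "mean": float(r.mean()),
            "sem": float(r.std(ddof=1) / np.sqrt(len(r))),   # sample SD / sqrt(n)
            "pct_of_reference": float(100 * r.mean() / ref_mean),
            "total_over_actin": float(np.mean([t / a for _, t, a in wells])),
        }
    return summary


if __name__ == "__main__":
    groups = {"Vehicle": [(100, 100, 50), (110, 100, 50), (90, 100, 50)],
              "Drug X 1x": [(50, 100, 50), (40, 100, 50), (60, 100, 50)]}
    for name, stats in summarise_blots(groups).items():
        print(name, stats)
```

For the statistical comparison, the per-group ratio arrays can be passed to `scipy.stats.f_oneway` for the one-way ANOVA and to `scipy.stats.dunnett` (available from SciPy 1.11) with the vehicle ratios supplied as `control`.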

6.5 Data Alignment: The resulting dose-dependent decrease in p-Target T and p-Protein E provides direct, quantifiable evidence for the SES MoA objective. This data fills a specific cell in the SES evidence matrix for "Target Modulation In Vitro."

Diagram 2: Molecular Pathway for Target Engagement Assay

Data source mapping and standardization is the critical second step in the SES framework. It transforms disparate adverse event (AE) data from clinical trials, post-marketing surveillance, and the literature into a unified, analyzable format using controlled medical terminologies (CMTs). This ensures consistency, enables accurate signal detection, and supports regulatory reporting. The primary CMTs are the Medical Dictionary for Regulatory Activities (MedDRA) and the World Health Organization Adverse Reaction Terminology (WHO-ART). Without rigorous standardization, noise enters the data, pooled analyses become biased, and patient safety conclusions are jeopardized.

Table 1: Comparison of Primary Medical Terminologies for AE Standardization

| Feature | MedDRA | WHO-ART | ICD-10-CM |
|---|---|---|---|
| Primary Scope & Use | Regulatory activities (pre- & post-marketing) | Drug safety, particularly in legacy data & some regions | Mortality, morbidity, billing, broad healthcare |
| Maintenance & Updates | Twice-yearly releases (March and September) by the MSSO (Maintenance and Support Services Organization) | Historically static; largely superseded by MedDRA | Annual updates by the US National Center for Health Statistics (NCHS) and Centers for Medicare & Medicaid Services (CMS) |
| Hierarchy Structure | 5-Level: System Organ Class (SOC), High-Level Group Term (HLGT), High-Level Term (HLT), Preferred Term (PT), Lowest Level Term (LLT) | 3-Level: System Organ Class, Preferred Term, Included Term | Chapter, Block, Category, Subcategory (alphanumeric codes) |
| Number of Terms (Approx.) | ~110,000 LLTs (as of v27.0, March 2024) | ~6,000 Included Terms | ~72,000 codes |
| Multiaxiality | Yes. A PT can be linked to multiple SOCs based on etiology, manifestation, etc. | Limited. Generally single SOC assignment. | Generally single path assignment. |
| Global Adoption | ICH standard; mandatory in EU, US, Japan, and many other regions for reporting. | Widely used historically; still referenced but being phased out. | US clinical modification of WHO's ICD-10 (the base ICD-10 is used globally for health statistics); required for US diagnosis coding, including electronic health records. |
| Advantages | Highly granular, regularly updated, supports sophisticated querying (SMQs), multiaxial. | Simpler, smaller, easier for legacy database conversion. | Extensive, covers all diseases, useful for comorbidities. |
| Limitations | Complexity, cost, frequent updates require version control. | Limited granularity, not updated, less suitable for novel events. | Not designed specifically for drug AEs; coding can lack specificity. |

Table 2: Quantitative Data on MedDRA Usage and Impact (Based on Recent Sources)

| Metric | Value | Source / Context |
|---|---|---|
| Current MedDRA Version | 27.0 (Released March 2024) | MSSO Release Notice |
| Number of PTs in v27.0 | 25,190 | MSSO Release Notice |
| Annual % PT Increase | ~2-3% (Average over last 5 versions) | Derived from MSSO data |
| Regulatory Submission Compliance | 100% of new drug applications in FDA/CDER (FY2023) required MedDRA-coded AEs. | FDA PDUFA Performance Report |
| Signal Detection Efficiency | Use of SMQs can improve initial AE review efficiency by an estimated 40-60%. | Analysis of pharmacovigilance case studies |

Experimental Protocols

Protocol 3.1: Mapping Legacy WHO-ART Data to MedDRA

Objective: To accurately convert a legacy safety database coded in WHO-ART to the current MedDRA version for integrated analysis.

Materials: Legacy AE dataset (WHO-ART codes), the official WHO-ART-to-MedDRA mapping file (from MSSO), and relational database or data management software (e.g., SAS, R).

Procedure:

- Data Extraction: Export the legacy dataset, ensuring each AE record includes the WHO-ART Preferred Term (PT) code.

- Mapping File Acquisition: Download the current `WHOARTtoMedDRA_mapping.zip` file from the MSSO website. This file contains mappings from WHO-ART PTs to MedDRA Lowest Level Terms (LLTs).

- Primary Mapping Join: Perform a database join or merge operation linking the legacy dataset's WHO-ART PT code to the corresponding code in the mapping file.

- LLT to PT Resolution: The mapping file provides a MedDRA LLT. Using the current MedDRA dictionary, trace each LLT to its parent Preferred Term (PT). Record both the LLT and PT.

- Validation & Review:

  - Automated Flagging: Flag records where the mapping is one-to-many (one WHO-ART PT maps to multiple MedDRA LLTs). These require clinical review.

  - Clinical Review: A qualified physician or pharmacovigilance expert reviews the flagged records and selects the final MedDRA PT based on the original verbatim term and clinical context.

  - Unmapped Term Handling: Code any unmapped terms manually against the current MedDRA dictionary.

- Versioning Documentation: Document the input WHO-ART version and output MedDRA version in the final dataset metadata.
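The join-and-triage logic of this protocol can be sketched in plain Python. This is an illustrative sketch, not the MSSO file format: it assumes the mapping file has already been parsed into `(whoart_pt_code, meddra_llt_code)` pairs and the current MedDRA release into an LLT-to-PT dictionary, and the function name, record keys, and example codes are hypothetical placeholders. The real column layout of the mapping file must be checked against the MSSO documentation.

```python
from collections import defaultdict


def map_whoart_to_meddra(legacy_records, mapping_rows, llt_to_pt):
    """Split legacy WHO-ART-coded records into mapped, review and unmapped sets.

    legacy_records: list of dicts with keys 'case_id', 'verbatim', 'whoart_pt'.
    mapping_rows: iterable of (whoart_pt_code, meddra_llt_code) pairs.
    llt_to_pt: dict MedDRA LLT code -> parent PT code from the current release.
    """
    lookup = defaultdict(list)
    for whoart_code, llt in mapping_rows:
        if llt not in lookup[whoart_code]:        # ignore duplicate mapping rows
            lookup[whoart_code].append(llt)

    mapped, needs_review, unmapped = [], [], []
    for rec in legacy_records:
        llts = lookup.get(rec["whoart_pt"], [])
        if not llts:                               # no mapping: route to manual coding
            unmapped.append(rec)
        elif len(llts) > 1:                        # one-to-many: clinical review
            needs_review.append({**rec, "candidate_llts": llts,
                                 "candidate_pts": [llt_to_pt.get(x) for x in llts]})
        else:                                      # one-to-one: resolve LLT to its PT
            llt = llts[0]
            mapped.append({**rec, "meddra_llt": llt, "meddra_pt": llt_to_pt.get(llt)})
    return mapped, needs_review, unmapped
```

Keeping the verbatim term on every output record makes the clinical review step possible without going back to the legacy extract.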

Protocol 3.2: Implementing a Standardized MedDRA Query (SMQ) for Signal Evaluation

Objective: To systematically identify potential cases of a specific safety concern (e.g., drug-induced liver injury) within a large pharmacovigilance database using a Standardized MedDRA Query (SMQ).

Materials: AE database coded in MedDRA, SMQ definitions (from the MedDRA Browser or MSSO website), and statistical analysis software.

Procedure:

- SMQ Selection: Identify the relevant SMQ (e.g., "Hepatic disorder" [SMQ 20000017]) and determine the desired scope: "Narrow" (high specificity) or "Broad" (high sensitivity).

- Term Extraction: From the SMQ definition, extract the list of constituent MedDRA Preferred Terms (PTs) for the chosen scope.

- Database Querying: Query the AE database for all cases containing any of the PTs in the target list. The query should retrieve the unique case identifiers.

- Case Retrieval & De-duplication: Pull full case reports for the identified IDs. De-duplicate if a single case contains multiple PTs from the SMQ.

- Data Analysis: Calculate case frequencies for the SMQ-defined case set and perform disproportionality analysis against a reference background, e.g., the Proportional Reporting Ratio (PRR) or a Bayesian shrinkage measure such as the Information Component (IC).

- Clinical Review: The output list of cases is a prioritized signal evaluation candidate set. Each case requires individual medical assessment to confirm the suspected adverse reaction.
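The query, de-duplication, and disproportionality steps can be sketched as below, using the PRR as the example measure. This is a simplified sketch under stated assumptions: cases are held in memory as dictionaries of PT names and suspect drugs, the SMQ definition has been reduced to a PT-to-scope dictionary, and the function names are hypothetical. A broad search uses both narrow and broad terms; a narrow search uses narrow terms only. The PRR confidence interval uses the usual log-normal approximation and requires non-zero `a` and `c` cells.

```python
import math


def smq_cases(case_pts, smq_terms, scope="narrow"):
    """IDs of cases matching an SMQ; each case is counted once, however many terms it has.

    case_pts: dict case_id -> set of MedDRA PT names on the case.
    smq_terms: dict PT name -> "narrow" or "broad" (from the SMQ definition).
    """
    if scope == "narrow":
        target = {pt for pt, s in smq_terms.items() if s == "narrow"}
    else:                                   # broad search = narrow + broad terms
        target = set(smq_terms)
    return {cid for cid, pts in case_pts.items() if pts & target}


def two_by_two(case_drugs, event_cases, drug):
    """Counts (a, b, c, d): drug of interest vs all other drugs, SMQ event vs not."""
    a = sum(1 for cid, d in case_drugs.items() if drug in d and cid in event_cases)
    b = sum(1 for cid, d in case_drugs.items() if drug in d and cid not in event_cases)
    c = sum(1 for cid, d in case_drugs.items() if drug not in d and cid in event_cases)
    return a, b, c, len(case_drugs) - a - b - c


def prr(a, b, c, d):
    """Proportional Reporting Ratio with an approximate 95% confidence interval."""
    value = (a / (a + b)) / (c / (c + d))
    se = math.sqrt(1 / a - 1 / (a + b) + 1 / c - 1 / (c + d))   # SE of log(PRR)
    return value, value * math.exp(-1.96 * se), value * math.exp(1.96 * se)
```

The PT names in any example data should come from the actual SMQ definition for the chosen MedDRA version; the sketch does not embed any SMQ content.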

Visualization

Diagram 1: MedDRA Standardization & Analysis Workflow

Diagram 2: Simplified MedDRA Hierarchy Structure

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Data Mapping & Standardization

| Item / Resource | Function & Application | Key Provider / Source |
|---|---|---|
| MedDRA Desktop Browser | Interactive tool to browse, search, and understand MedDRA terms, hierarchies, and SMQs. Essential for manual coding and validation. | MSSO (Subscription required) |
| MedDRA Versioned Data Files | The core ASCII or XML files (LLT, PT, Hierarchy, SMQ) for integration into local databases and automated coding systems. | MSSO (Subscription required) |
| WHO-ART to MedDRA Mapping File | Critical cross-reference table for converting legacy databases. Updated with each MedDRA release. | MSSO (Available to subscribers) |
| Auto-Encoder Software | Machine-learning or rules-based software (e.g., Oracle Thesaurus Management System, ClinDrax AVOCA) to automate matching of verbatim terms to MedDRA LLTs. | Various Commercial Vendors |
| Medical Coding Governance Platform | Centralized system (e.g., Veeva Vault Safety, ArisGlobal LifeSphere) to manage coding workflows, disputes, and version control across studies. | Various Commercial Vendors |
| Regulatory Guidelines (ICH E2B(R3)) | Definitive specification for the format and content of safety reports, dictating required MedDRA fields and terminologies. | ICH, FDA, EMA websites |
| Statistical Software (R, SAS) | Specialized pharmacovigilance packages (e.g., PhViD in R, SAS Pharmacovigilance) for performing analyses on standardized data. | Open Source (R), SAS Institute |

Application Notes

Within the SES (Symptom-Event-System) Framework, Step 3 involves the systematic definition and categorization of raw data into coherent clusters. This transforms individual observations into analyzable patterns, forming the basis for hypothesis generation in drug development. Clusters are defined by temporal patterns, severity, co-occurrence, and potential physiological linkage. This step is critical for identifying potential Adverse Event (AE) signals, understanding disease progression, and defining patient subpopulations.

Core Principles of Clustering

- Data-Driven & Hypothesis-Generating: Clusters should emerge from the data using predefined algorithms, minimizing prior bias while allowing for clinically informed validation.

- Multi-Dimensional: Utilize multiple data axes: temporal (onset, duration), severity (mild, moderate, severe), body system (MedDRA SOC), and patient-reported impact.

- Iterative Refinement: Initial clusters are prototypical and must be validated and refined through statistical and clinical review.

The following metrics are used to evaluate and define cluster robustness.

Table 1: Key Metrics for Symptom/Event Cluster Evaluation

| Metric | Formula/Description | Interpretation Threshold |
|---|---|---|
| Jaccard Similarity Index | `J(A,B) = \|A ∩ B\| / \|A ∪ B\|` | ≥0.5 suggests strong cluster overlap. |
| Silhouette Score | Measures how similar an object is to its own cluster vs. other clusters. Ranges from -1 to 1. | >0.5 indicates well-clustered data. |
| Intra-Cluster Density | Mean strength of connections (e.g., correlation) between items within a cluster. | Higher value indicates tighter cohesion. |
| Inter-Cluster Separation | Mean distance (e.g., 1 - correlation) between cluster centroids. | Higher value indicates better distinction. |
| Temporal Coherence | Standard deviation of time-to-onset for events within a cluster. | Lower SD indicates tighter temporal grouping. |
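Three of the metrics in Table 1 have no single standard implementation, so the NumPy sketch below shows one reasonable reading of each. The choices are assumptions: density is the mean off-diagonal similarity among cluster members (so it needs at least two members), centroids are mean feature profiles and separation is the mean of (1 - Pearson r) over all centroid pairs, and temporal coherence is the sample standard deviation of onset times. The function names are hypothetical.

```python
import itertools

import numpy as np


def intra_cluster_density(sim, members):
    """Mean pairwise similarity among cluster members (off-diagonal entries only)."""
    sub = sim[np.ix_(members, members)]
    n = len(members)
    return float((sub.sum() - np.trace(sub)) / (n * (n - 1)))


def inter_cluster_separation(profiles, labels):
    """Mean (1 - Pearson r) between the centroid profiles of every pair of clusters."""
    centroids = [profiles[labels == c].mean(axis=0) for c in np.unique(labels)]
    dists = [1 - np.corrcoef(a, b)[0, 1] for a, b in itertools.combinations(centroids, 2)]
    return float(np.mean(dists))


def temporal_coherence(onset_days):
    """Sample SD of time-to-onset for the events in one cluster (lower = tighter)."""
    return float(np.std(onset_days, ddof=1))
```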

Experimental Protocols

Protocol: Hierarchical Agglomerative Clustering for Event Categorization

Purpose: To group adverse events from clinical trial safety data into clusters based on co-reporting patterns.

Materials: Adverse event incidence matrix (Patients x AEs), statistical software (R, Python).

Procedure:

- Data Preparation: Create a binary matrix where rows are patients and columns are preferred term (PT) AEs; `1` indicates the event was reported for that patient.

- Similarity Calculation: Compute the Jaccard similarity matrix between all AE pairs across the patient population.

- Distance Conversion: Convert similarity to distance: `Distance = 1 - Jaccard Similarity`.

- Clustering: Apply hierarchical agglomerative clustering to the distance matrix. Average linkage (UPGMA) is preferable to Ward's linkage here, because Ward's criterion assumes Euclidean distances and is not strictly appropriate for Jaccard distances.

- Dendrogram Cutting: Visually inspect the dendrogram and use the average silhouette width method to determine the optimal number of clusters (`k`). Cut the dendrogram at the height corresponding to `k`.

- Validation: For each resulting cluster, calculate intra-cluster density and inter-cluster separation (see Table 1). Clinically review cluster composition for face validity.
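The clustering protocol can be sketched with SciPy's hierarchy module. This is a minimal sketch, not a validated pipeline: it uses average linkage rather than Ward's method (Ward's criterion assumes Euclidean distances), selects the number of clusters by mean silhouette width over a hypothetical `k_range`, and assumes every PT column was reported at least once (all-zero columns should be dropped first). The function names are illustrative.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import pdist, squareform


def mean_silhouette(dist, labels):
    """Mean silhouette width from a square distance matrix (singletons score 0)."""
    scores = np.zeros(len(labels))
    clusters = np.unique(labels)
    for i, lab in enumerate(labels):
        same = labels == lab
        same[i] = False
        if not same.any():
            continue
        a = dist[i, same].mean()                                   # own-cluster distance
        b = min(dist[i, labels == c].mean() for c in clusters if c != lab)
        denom = max(a, b)
        scores[i] = 0.0 if denom == 0 else (b - a) / denom
    return float(scores.mean())


def cluster_adverse_events(incidence, k_range=range(2, 6)):
    """Cluster PT columns of a patients x PTs boolean matrix by co-reporting.

    Returns (n_clusters, mean_silhouette, labels); labels[j] is the cluster of PT column j.
    """
    incidence = np.asarray(incidence, dtype=bool)
    condensed = pdist(incidence.T, metric="jaccard")   # 1 - Jaccard similarity per AE pair
    dist = squareform(condensed)
    tree = linkage(condensed, method="average")          # UPGMA on Jaccard distances
    best = (None, -np.inf, None)
    for k in k_range:
        if k >= incidence.shape[1]:                      # silhouette needs k < number of AEs
            break
        labels = fcluster(tree, t=k, criterion="maxclust")
        n_clusters = len(np.unique(labels))              # maxclust may return fewer than k
        if n_clusters < 2:
            continue
        score = mean_silhouette(dist, labels)
        if score > best[1]:
            best = (n_clusters, score, labels)
    return best
```

If scikit-learn is available, `sklearn.metrics.silhouette_score(dist, labels, metric="precomputed")` computes the same mean silhouette width and can replace the hand-written helper.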

Protocol: Temporal Sequence Alignment for Symptom Progression

Purpose: To identify and categorize common temporal patterns in patient-reported symptom diaries.

Materials: Time-stamped symptom severity scores (e.g., daily PRO data), Dynamic Time Warping (DTW) algorithm library.

Procedure:

- Data Extraction: For a target symptom (e.g., fatigue), extract all patient-specific time-series vectors over a defined study period.

- Alignment: Use DTW to calculate the optimal alignment path between every pair of patient time-series, accounting for variable onset and duration.

- Distance Matrix: From the DTW alignments, populate a matrix of pairwise distances between all patients' symptom trajectories.

- Partitioning Clustering: Apply Partitioning Around Medoids (PAM) clustering to the DTW distance matrix to group patients with similar temporal patterns.

- Pattern Definition: For each cluster, take the medoid trajectory identified by PAM as the representative pattern. Categorize clusters based on medoid features (e.g., "Early Transient," "Late Onset & Progressive," "Chronic Stable").
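The alignment and partitioning steps can be illustrated end to end with a small NumPy sketch: a classic dynamic-programming DTW distance (absolute-difference local cost, no warping window) and a simplified PAM (greedy BUILD, then first-improvement SWAP until the total cost stops falling). Both functions are hypothetical teaching implementations with O(n·m) Python loops; for real PRO datasets the dtwclust (R) or tslearn (Python) libraries listed in Table 2 are the practical choice.

```python
import itertools

import numpy as np


def dtw_distance(x, y):
    """Classic DTW distance between two sequences (no window constraint)."""
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    n, m = len(x), len(y)
    acc = np.full((n + 1, m + 1), np.inf)     # accumulated-cost matrix
    acc[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = min(acc[i - 1, j], acc[i, j - 1], acc[i - 1, j - 1])
            acc[i, j] = abs(x[i - 1] - y[j - 1]) + step
    return float(acc[n, m])


def pam(dist, k):
    """Simplified PAM on a square distance matrix; returns (medoid indices, labels)."""
    n = dist.shape[0]

    def cost(meds):
        return dist[:, meds].min(axis=1).sum()  # total distance to nearest medoid

    medoids = [int(np.argmin(dist.sum(axis=1)))]  # BUILD: most central object first
    while len(medoids) < k:
        candidates = [c for c in range(n) if c not in medoids]
        medoids.append(min(candidates, key=lambda c: cost(medoids + [c])))

    current = cost(medoids)
    improved = True
    while improved:                               # SWAP: accept any cost-reducing swap
        improved = False
        for idx, o in itertools.product(range(k), range(n)):
            if o in medoids:
                continue
            trial = medoids.copy()
            trial[idx] = o
            trial_cost = cost(trial)
            if trial_cost < current - 1e-12:
                medoids, current, improved = trial, trial_cost, True
    labels = np.argmin(dist[:, medoids], axis=1)  # index of nearest medoid
    return medoids, labels


if __name__ == "__main__":
    series = [[0, 3, 3, 0, 0, 0, 0, 0], [0, 0, 3, 3, 0, 0, 0, 0],   # early, shifted by a day
              [0, 0, 0, 0, 0, 2, 3, 3], [0, 0, 0, 0, 2, 3, 3, 3]]   # late onset, progressive
    dist = np.array([[dtw_distance(a, b) for b in series] for a in series])
    print(pam(dist, k=2))
```

In the toy data, the two early-transient diaries differ only by a one-day shift, so their DTW distance is zero, which is exactly the variable-onset tolerance the protocol relies on.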

Visualization: Pathway and Workflow Diagrams

Title: SES Step 3: Symptom-Event Clustering Workflow

Title: Multi-Dimensional Data Integration for Clustering

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Symptom-Event Cluster Analysis

| Item | Function/Application in SES Step 3 |
|---|---|
| MedDRA (Medical Dictionary for Regulatory Activities) | Standardized terminology for categorizing reported events by System Organ Class (SOC) and Preferred Term (PT), enabling consistent grouping. |
| PRO-CTCAE (Patient-Reported Outcomes - CTCAE) Library | Validated items for capturing patient-reported symptom frequency, severity, and interference, providing granular data for clustering. |
| R: cluster & dtwclust Packages | Provides comprehensive functions for partitional (PAM), hierarchical, and time-series (DTW-based) clustering. Essential for protocol execution. |
| Python: scikit-learn & tslearn | Machine learning libraries with robust clustering modules (sklearn.cluster) and time-series-specific algorithms (tslearn). |
| Safety Database (e.g., Oracle Argus Safety) | Source system for raw adverse event data, enabling extraction of incidence matrices and timelines for analysis. |
| Dynamic Time Warping (DTW) Algorithm | Computes an optimal match between two temporal sequences, allowing for clustering of symptoms with variable onset and duration. |
| Silhouette Analysis | A method for interpreting and validating the consistency within clusters of data, used to determine the optimal number of clusters. |

Integrating the patient journey and comorbidities into the SES framework transforms a mechanistic understanding of disease into a clinically actionable model. This step ensures that therapeutic hypotheses and experimental designs are grounded in real-world patient pathophysiology, enhancing translational relevance and identifying critical confounding variables.

Foundational Data: Quantifying Comorbidity Prevalence in Target Indications

Current epidemiological data underscores the necessity of this integration. The following table summarizes comorbidity prevalence for common chronic conditions targeted in drug development.

Table 1: Prevalence of Key Comorbidities in Select Chronic Diseases (Recent Meta-Analysis Data)

| Primary Indication | Sample Size (Approx.) | Key Comorbidity 1 (Prevalence) | Key Comorbidity 2 (Prevalence) | Key Comorbidity 3 (Prevalence) | Data Source (Year) |
|---|---|---|---|---|---|
| Type 2 Diabetes | 1.2M patients | Hypertension (78%) | Obesity (BMI ≥30) (65%) | Cardiovascular Disease (32%) | Global Burden of Disease (2023) |
| Heart Failure (HFrEF) | 850k patients | Chronic Kidney Disease (45%) | Atrial Fibrillation (35%) | Iron Deficiency (50%) | ESC Heart Failure Registry (2024) |
| Rheumatoid Arthritis | 400k patients | Depression/Anxiety (38%) | Cardiovascular Disease (28%) | Interstitial Lung Disease (15%) | ACR Collaborative Cohort (2023) |
| Alzheimer's Disease | 600k patients | Vascular Dementia (Mixed) (30%) | Type 2 Diabetes (25%) | Major Depression (22%) | NIA-AD Sequencing Project (2024) |

Methodological Protocols

Protocol: Mapping the Patient Journey with Comorbidities

Objective: To construct a longitudinal, multi-layer map of care pathways, decision points, and health outcomes for patients with a primary index disease, incorporating the impact of major comorbidities.

Materials & Workflow:

- Data Source Identification: Secure access to linked electronic health records (EHR) and claims databases with longitudinal follow-up (minimum 5 years). Ensure data includes diagnoses (ICD-10/11), procedures, pharmacy dispensing, and lab values.

- Cohort Definition:

  - Index Cohort: Patients with a first recorded diagnosis of the primary disease (e.g., HFrEF).

  - Comorbidity Stratification: Identify pre-existing or incident comorbidities from Table 1 within a defined look-back/look-forward period.

- Journey Phase Delineation: Algorithmically define phases: Symptomatology & Pre-Diagnosis, Diagnosis & Care Initiation, Treatment Optimization, Chronic Management, Advanced Disease/End-of-Life.

- Event Log Creation: For each patient, create a timestamped sequence of all clinical events (specialist referrals, hospitalizations, medication changes, severe lab value deviations).

- Process Mining: Apply process mining algorithms (e.g., HeuristicsMiner, Inductive Miner) to the event log to generate a patient journey model, visualizing the most frequent pathways.

- Comorbidity Layer Overlay: Generate sub-models for cohorts stratified by each major comorbidity. Compare pathway convergence/divergence, event frequency, and time-between-events.

Deliverable: A state-transition diagram (see Diagram 1) highlighting critical decision nodes where comorbidity presence alters the standard journey.
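The event-log and process-mining steps above can be sketched in plain Python. This is a minimal illustration, not HeuristicsMiner itself: it counts directly-follows transitions within each patient's time-ordered trace (the frequency statistic HeuristicsMiner builds its dependency graph from), records the mean days between the two events, and computes the HeuristicsMiner dependency measure for a pair of activities. The toy event log, activity names, and CKD flag are hypothetical; production analyses would typically rely on a dedicated process-mining library such as pm4py.

```python
from collections import Counter, defaultdict
from datetime import date

# Hypothetical toy event log: (patient_id, event_date, activity, has_ckd).
EVENT_LOG = [
    ("p1", date(2020, 1, 5), "Diagnosis", False),
    ("p1", date(2020, 2, 1), "GDMT start", False),
    ("p1", date(2020, 6, 3), "Titration", False),
    ("p2", date(2021, 3, 2), "Diagnosis", True),
    ("p2", date(2021, 3, 20), "Nephrology referral", True),
    ("p2", date(2021, 5, 1), "GDMT start", True),
    ("p2", date(2021, 9, 9), "Hospitalization", True),
]


def directly_follows(log, stratum=None):
    """Count a -> b transitions per patient trace.

    stratum: None keeps all patients; True/False keeps only patients
    with/without the comorbidity flag (the "comorbidity layer").
    Returns {(a, b): (count, mean_days_between)}.
    """
    traces = defaultdict(list)
    for pid, when, activity, flag in log:
        if stratum is None or flag == stratum:
            traces[pid].append((when, activity))
    counts, gaps = Counter(), defaultdict(list)
    for events in traces.values():
        events.sort()  # chronological order (ties broken by activity name)
        for (t0, a), (t1, b) in zip(events, events[1:]):
            counts[(a, b)] += 1
            gaps[(a, b)].append((t1 - t0).days)
    return {k: (n, sum(gaps[k]) / n) for k, n in counts.items()}


def dependency(dfg, a, b):
    """HeuristicsMiner dependency: (|a>b| - |b>a|) / (|a>b| + |b>a| + 1)."""
    ab = dfg.get((a, b), (0, 0.0))[0]
    ba = dfg.get((b, a), (0, 0.0))[0]
    return (ab - ba) / (ab + ba + 1)


ckd_paths = directly_follows(EVENT_LOG, stratum=True)
no_ckd_paths = directly_follows(EVENT_LOG, stratum=False)
print(ckd_paths)
print(no_ckd_paths)
```

Comparing the two stratified dictionaries shows where the comorbidity introduces extra nodes (here, a nephrology referral before treatment) and how it changes time between events, which is the divergence the comorbidity overlay is meant to expose.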

Protocol: In Vitro Modeling of Comorbid Disease Crosstalk

Objective: To experimentally replicate the crosstalk between primary disease and comorbidity pathways using a multi-condition coculture system.

Materials & Workflow:

- Cell System Establishment:

  - Differentiate iPSCs into disease-relevant cell types for the primary indication (e.g., cardiomyocytes for HFrEF).

  - Differentiate a second iPSC line into comorbidity-relevant cell types (e.g., renal proximal tubule cells for CKD).

- Coculture Assembly: Use a transwell system or a microfluidic organ-on-a-chip platform permitting shared media but not direct cell contact.

- Conditioning & Stimulation:

  - Control: Coculture in standard media.

  - Primary Disease Model: Expose the primary disease cells to a pathological stimulus (e.g., cardiomyocytes to endothelin-1 to induce hypertrophy).

  - Comorbidity Model: Expose the comorbidity cells to a pathological stimulus (e.g., tubule cells to high glucose/albumin).

  - Integrated Model: Apply both stimuli simultaneously to their respective cell types.

- Endpoint Analysis (72-96 h):

  - Secretome: Analyze conditioned media with multiplex cytokine/proteomic arrays.

  - Cell-Specific Readouts: Fix and stain for cell-type-specific markers of dysfunction (e.g., cardiomyocyte cell area as a hypertrophy readout; the tubular stress marker NGAL).

  - Pathway Activation: Perform phospho-protein Western blotting on separately lysed cell types to identify trans-cellular signaling.

Deliverable: Quantification of whether, and by how much, comorbidity conditioning exacerbates dysfunction in primary disease cells, together with candidate secretory mediators of the crosstalk.
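One way to quantify "exacerbation" in the four-arm design above is an interaction contrast: the effect of the comorbidity stimulus in the presence of the primary stimulus minus its effect in its absence, (Integrated - Comorbidity) - (Primary - Control). A positive value indicates more-than-additive dysfunction. The sketch below assumes hypothetical cardiomyocyte cell-area readouts (four wells per arm) and uses an unpooled standard error that treats the arms as independent; a full analysis would fit a two-way ANOVA with an interaction term.

```python
from math import sqrt
from statistics import mean, variance

# Hypothetical cardiomyocyte cell-area readouts (arbitrary units), n = 4 wells per arm.
groups = {
    "control": [100, 104, 98, 102],
    "primary": [130, 128, 134, 132],
    "comorbidity": [101, 99, 103, 97],
    "integrated": [150, 146, 154, 150],
}


def interaction_contrast(g):
    """Return (estimate, standard error) of the additive-interaction contrast."""
    est = (mean(g["integrated"]) - mean(g["comorbidity"])) - (
        mean(g["primary"]) - mean(g["control"])
    )
    # Unpooled SE: each arm contributes its sample variance divided by its n.
    se = sqrt(sum(variance(v) / len(v) for v in g.values()))
    return est, se


est, se = interaction_contrast(groups)
print(est, round(se, 2))  # est = 20, se ≈ 2.77
```

In this toy example the comorbidity stimulus alone barely changes cell area, yet it adds about 20 units on top of the endothelin-1 effect, the pattern that would point toward a secreted mediator worth following up in the secretome data.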

Visualization: Integrated Patient Journey Model

Diagram 1: Heart Failure Patient Journey with Comorbidity Impact

The Scientist's Toolkit: Key Reagents for System-Level Modeling

Table 2: Essential Research Reagents for Comorbidity Crosstalk Experiments

| Reagent / Solution | Provider Examples | Function in Protocol | Critical Specification |
|---|---|---|---|
| Disease-Specific iPSC Line | Cellular Dynamics, Axol Bioscience | Source for deriving primary disease-relevant cell types. | Genetically engineered with a disease-relevant mutation or sourced from a patient donor. |
| Comorbidity iPSC Line | REPROCELL, ATCC | Source for deriving comorbidity-relevant cell types (e.g., renal, hepatic). | Ideally from a different genetic background, so that cell-specific responses can be traced. |
| Defined Differentiation Kits | STEMCELL Tech., Thermo Fisher | Robust, reproducible generation of functional cardiomyocytes, neurons, hepatocytes, etc. | Lot-to-lot consistency; high efficiency (>80% marker-positive cells). |
| Microfluidic Coculture Chip | Emulate, MIMETAS | Provides physiological fluid flow and a tissue-tissue interface. | Chip material (e.g., PDMS) must be validated for low analyte binding. |
| Multiplex Cytokine Array | Luminex, Meso Scale Discovery | Measures secretome changes in conditioned media from the coculture. | Panel must include factors implicated in both primary and comorbid disease (e.g., IL-6, TNF-α, FGF23). |
| Cell-Tracking Dye | Thermo Fisher (CellTrace) | Labels one cell population prior to coculture for post-assay sorting and analysis. | Must not transfer to adjacent cells and must be compatible with fixation. |
| Phospho-Specific Antibody Panels | Cell Signaling Tech., Abcam | Enables measurement of pathway activation (e.g., p-STAT3, p-NF-κB) in specific cell lysates. | Validation for use in the relevant differentiated cell type is essential. |

This protocol details Step 5 of the Symptom, Event, and System (SES) framework methodological guide. It provides a standardized approach for analyzing complex biological and pharmacological data, from raw data to interpretable results. The workflow combines statistical rigor with computational efficiency, as required for biomarker discovery, dose-response modeling, and preclinical validation in drug development.

Core Statistical Methodologies

Hypothesis Testing & Multiple Comparisons Correction

Protocol 2.1.1: Application of False Discovery Rate (FDR) Control

- Objective: To identify statistically significant changes (e.g., gene expression, protein abundance) while controlling for Type I errors in high-dimensional data.

- Procedure:

  a. For each of the \( m \) hypotheses (e.g., genes), calculate a p-value using an appropriate test (e.g., t-test, ANOVA).

  b. Order the p-values from smallest to largest: \( p_{(1)} \leq p_{(2)} \leq \dots \leq p_{(m)} \).

  c. Apply the Benjamini-Hochberg step-up procedure:

    i. Choose a target FDR level \( q \), typically \( q = 0.05 \).

    ii. Find the largest rank \( k \) such that \( p_{(k)} \leq \frac{k}{m} \cdot q \).

    iii. Reject the null hypothesis for all tests with ranks 1 through \( k \) (if no such \( k \) exists, reject none).

- Software: Implement in R with `p.adjust(p, method = "fdr")` (`"fdr"` is an alias for `"BH"`) or in Python with `statsmodels.stats.multitest.fdrcorrection`.
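The Benjamini-Hochberg steps above translate directly into code. The sketch below is a plain-Python illustration with function names of our own choosing: `benjamini_hochberg` follows the step-up rule literally, and `bh_adjusted` computes adjusted p-values by a running minimum from the largest p-value downward, which should match what `p.adjust(method = "BH")` reports. Rejecting wherever the adjusted value is at most \( q \) gives the same decisions as the step-up rule. The p-values are hypothetical.

```python
def benjamini_hochberg(pvals, q=0.05):
    """Return a list of booleans: True where H0 is rejected at FDR level q."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])  # indices by ascending p
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if pvals[idx] <= rank / m * q:
            k_max = rank  # keep the LARGEST qualifying rank (step-up)
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= k_max:
            rejected[idx] = True
    return rejected


def bh_adjusted(pvals):
    """BH-adjusted p-values, capped at 1."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        idx = order[rank - 1]
        running_min = min(running_min, pvals[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


p = [0.01, 0.045, 0.025, 0.005, 0.2]  # hypothetical per-gene p-values
print(benjamini_hochberg(p, q=0.05))  # [True, False, True, True, False]
print([round(a, 4) for a in bh_adjusted(p)])
```

Note that 0.025 is rejected even though it exceeds its own rank-3 threshold only narrowly below 0.03; the step-up rule rejects every hypothesis ranked at or below the largest qualifying rank.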

Table 2.1: Comparison of Multiple Testing Correction Methods

| Method | Controls For | Procedure | Best Use Case |
|---|---|---|---|
| Bonferroni | Family-Wise Error Rate (FWER) | \( p_{adj} = \min(1, m \cdot p) \) | Small number of planned comparisons, confirmatory studies. |
| Benjamini-Hochberg (FDR) | False Discovery Rate (FDR) | Step-up procedure on ranked p-values. | Exploratory omics studies (transcriptomics, proteomics). |
| Storey q-value | Positive FDR (pFDR) | Estimates the proportion of true null hypotheses (\( \pi_0 \)) from the p-value distribution, then reports for each test the minimum FDR at which it would be called significant. | Large-scale discovery studies with many tests. |

Dose-Response & Pharmacodynamic Modeling

Protocol 2.2.1: Four-Parameter Logistic (4PL) Regression Fitting

- Objective: To model the relationship between drug concentration (dose) and biological response (efficacy/toxicity).

- Model Equation: \( Y = \text{Bottom} + \frac{\text{Top} - \text{Bottom}}{1 + 10^{(\log EC_{50} - X) \cdot \text{HillSlope}}} \), where \( X = \log_{10}(\text{concentration}) \); \( Y \) = response; Top and Bottom = upper and lower asymptotic plateaus; \( \log EC_{50} \) = \( \log_{10} \) of the half-maximal effective concentration; HillSlope = slope factor.

- Procedure:

  a. Input cleaned dose-response data (minimum n = 3 replicates per concentration).

  b. Estimate the four parameters by non-linear least-squares regression.

  c. Assess goodness of fit (R², residual plots).

  d. Report derived metrics: EC50 (or IC50 for inhibitory responses) as \( 10^{\log EC_{50}} \), efficacy (Top - Bottom), and the Hill coefficient.

- Software: Use the `drc` package in R or `scipy.optimize.curve_fit` in Python.
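A minimal sketch of the 4PL fit with `scipy.optimize.curve_fit`, following the model equation above with \( X \) on the \( \log_{10} \) scale. The data are synthetic (generated from known parameters plus Gaussian noise, with a fixed seed), and the data-driven starting values in `p0` are a common heuristic rather than a prescribed part of the protocol.

```python
import numpy as np
from scipy.optimize import curve_fit


def four_pl(x, bottom, top, log_ec50, hill):
    """4PL model; x is log10(concentration)."""
    return bottom + (top - bottom) / (1.0 + 10.0 ** ((log_ec50 - x) * hill))


# Synthetic data: 8 concentrations from 1 nM to 10 µM (log10 M), triplicates.
log_conc = np.repeat(np.linspace(-9, -5, 8), 3)
true_params = (5.0, 95.0, -6.5, 1.2)
rng = np.random.default_rng(0)
response = four_pl(log_conc, *true_params) + rng.normal(0, 2.0, log_conc.size)

# Starting values: observed min/max for the plateaus, mid-range for log EC50.
p0 = [response.min(), response.max(), np.median(log_conc), 1.0]
params, cov = curve_fit(four_pl, log_conc, response, p0=p0)
std_err = np.sqrt(np.diag(cov))  # asymptotic standard errors of the parameters
bottom, top, log_ec50, hill = params

# Goodness of fit and derived metrics (procedure steps c and d).
residuals = response - four_pl(log_conc, *params)
r2 = 1 - np.sum(residuals**2) / np.sum((response - response.mean()) ** 2)
ec50 = 10**log_ec50  # molar, because X was log10(molar concentration)
efficacy = top - bottom
print(f"EC50 = {ec50:.2e} M, Hill = {hill:.2f}, efficacy = {efficacy:.1f}, R2 = {r2:.3f}")
```

For an inhibitory curve the same function applies; the fitted \( 10^{\log EC_{50}} \) is then reported as the IC50, and the Hill slope typically comes out negative if Top is constrained to be the uninhibited plateau.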

Table 2.2: Summary of Common Pharmacodynamic Models

| Model Name | Equation | Key Parameters | Typical Application |
|---|---|---|---|
| Linear | \( E = E_0 + m \cdot C \) | \( E_0, m \) | Preliminary analysis over a limited concentration range. |
| Emax | \( E = E_0 + \frac{E_{max} \cdot C}{EC_{50} + C} \) | \( E_0, E_{max}, EC_{50} \) | Single-target binding, cell viability. |
| Sigmoid Emax (Hill) | \( E = E_0 + \frac{E_{max} \cdot C^h}{EC_{50}^h + C^h} \) | \( E_0, E_{max}, EC_{50}, h \) | Cooperative binding, multi-target effects. |
| Indirect Response (inhibition of production) | \( \frac{dR}{dt} = k_{in}\left(1 - \frac{I_{max} \cdot C}{IC_{50} + C}\right) - k_{out} \cdot R \) | \( k_{in}, k_{out}, I_{max}, IC_{50} \) | Time-delayed responses (e.g., suppression of biomarker production). |
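The indirect response model in the last row is the one entry that is a differential equation, so its behavior is easiest to see by simulation. The sketch below integrates it with forward Euler under a hypothetical mono-exponential concentration profile \( C(t) = 10\,e^{-0.3t} \); all parameter values are illustrative assumptions, and a production analysis would use a proper ODE solver (e.g., `scipy.integrate.solve_ivp`) inside a population PK/PD tool.

```python
import math


def simulate_idr_inhibition(k_in, k_out, i_max, ic50, conc, t_end=48.0, dt=0.01):
    """Forward-Euler integration of the indirect response model
    (inhibition of production). conc(t) returns drug concentration.
    Starts at the drug-free steady state R0 = k_in / k_out."""
    r = k_in / k_out
    times, values = [0.0], [r]
    t = 0.0
    for _ in range(int(round(t_end / dt))):
        c = conc(t)
        inhibition = i_max * c / (ic50 + c)
        r += dt * (k_in * (1.0 - inhibition) - k_out * r)
        t += dt
        times.append(t)
        values.append(r)
    return times, values


# Hypothetical PK: concentration peaks at t = 0 and decays (time in hours).
times, resp = simulate_idr_inhibition(
    k_in=10.0, k_out=0.5, i_max=0.9, ic50=1.0,
    conc=lambda t: 10.0 * math.exp(-0.3 * t),
)
nadir_idx = min(range(len(resp)), key=resp.__getitem__)
print(f"baseline {resp[0]:.1f}, nadir {resp[nadir_idx]:.1f} at t = {times[nadir_idx]:.1f} h")
```

Although the concentration is highest at \( t = 0 \), the response reaches its minimum hours later and then returns to baseline at a rate governed by \( k_{out} \); this lag is the "time-delayed response" that distinguishes indirect response models from the direct Emax models above.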

Computational & Bioinformatics Protocols

Bulk RNA-Sequencing Data Analysis

Protocol 3.1.1: Differential Expression Analysis Workflow

- Input: Raw FASTQ files from sequencing.

- Quality Control & Alignment:

  a. Assess read quality with FastQC; trim adapters and low-quality bases with Trimmomatic.

  b. Align reads to a reference genome (e.g., GRCh38) with the STAR aligner, supplying a gene annotation.

  c. Generate gene-level count matrices with featureCounts.

- Statistical Analysis:

  a. Import the raw counts into R/Bioconductor and estimate size factors with DESeq2's median-of-ratios method.

  b. Test for differential expression with negative binomial generalized linear models, using either DESeq2 (Wald or likelihood ratio test) or edgeR (quasi-likelihood F-test or likelihood ratio test).

  c. Apply a variance-stabilizing transformation for downstream clustering and PCA.

- Output: List of differentially expressed genes (DEGs) with log2 fold-change, p-value, and adjusted p-value (FDR).
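The median-of-ratios normalization in step (a) can be sketched in a few lines of plain Python. This illustrates the idea behind DESeq2's `estimateSizeFactors`, not its implementation: each gene's geometric mean across samples acts as a pseudo-reference, genes with a zero count in any sample are excluded (their geometric mean is zero), and each sample's size factor is the median ratio of its counts to that reference. The count matrix is hypothetical.

```python
import math
from statistics import median


def median_of_ratios_size_factors(counts):
    """counts: list of gene rows, each a list of raw counts per sample."""
    n_samples = len(counts[0])
    usable, log_geo_means = [], []
    for row in counts:
        if all(c > 0 for c in row):  # skip genes with any zero count
            usable.append(row)
            log_geo_means.append(sum(math.log(c) for c in row) / n_samples)
    factors = []
    for j in range(n_samples):
        # log(count / geometric mean) for every usable gene in sample j
        log_ratios = [math.log(row[j]) - lg for row, lg in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors


# Hypothetical counts: sample 2 was sequenced roughly twice as deeply.
counts = [
    [10, 20],
    [100, 200],
    [5, 10],
    [0, 7],  # excluded: zero count in sample 1
]
factors = median_of_ratios_size_factors(counts)
normalized = [[c / f for c, f in zip(row, factors)] for row in counts]
print(factors)  # about [0.707, 1.414]
```

Using the median rather than the total count makes the size factors robust to a handful of very highly expressed or strongly differentially expressed genes, which would otherwise dominate library-size scaling.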

Machine Learning for Predictive Biomarker Identification

Protocol 3.2.1: LASSO (L1) Regularized Regression

- Objective: To select a parsimonious set of predictive features (e.g., genes, proteins) from a high-dimensional dataset.

- Procedure:

  a. Standardize all features (mean = 0, variance = 1).

  b. Fit a linear (regression) or logistic (classification) model penalized by the L1 norm of the coefficients. For a continuous outcome the objective is \( \min_{\beta_0, \beta} \frac{1}{2N} \sum_{i=1}^{N} \left(y_i - \beta_0 - \beta^\top x_i\right)^2 + \lambda \sum_{j=1}^{p} |\beta_j| \); for classification, the squared-error term is replaced by the average negative log-likelihood of the logistic model.

  c. Use 10-fold cross-validation to select the penalty parameter \( \lambda \) that minimizes prediction error.

  d. Features with non-zero coefficients at the selected \( \lambda \) form the biomarker panel.

- Validation: Assess model performance on a held-out test set using AUC-ROC (classification) or RMSE (regression).
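To show how the L1 penalty in step (b) produces exact zeros, here is a minimal cyclic coordinate-descent solver for the continuous-outcome objective, written with NumPy. It is a sketch under stated assumptions: features are already standardized, \( \lambda \) is fixed rather than tuned, and the data are simulated so that only the first two of ten features matter. In practice the cross-validation in step (c) would be handled by, for example, scikit-learn's `LassoCV` or glmnet's `cv.glmnet`.

```python
import numpy as np


def soft_threshold(z, lam):
    """Proximal operator of the L1 norm: shrink z toward 0 by lam."""
    return np.sign(z) * max(abs(z) - lam, 0.0)


def lasso_cd(X, y, lam, n_iter=200):
    """Coordinate descent for (1/2N)||y - b0 - X b||^2 + lam * ||b||_1.
    Assumes the columns of X are standardized (mean 0, variance 1)."""
    n, p = X.shape
    b0 = y.mean()  # the intercept equals mean(y) when X is centred
    beta = np.zeros(p)
    resid = y - b0
    for _ in range(n_iter):
        for j in range(p):
            resid += X[:, j] * beta[j]  # partial residual without feature j
            z = X[:, j] @ resid / n
            beta[j] = soft_threshold(z, lam) / (X[:, j] @ X[:, j] / n)
            resid -= X[:, j] * beta[j]
    return b0, beta


# Simulated data: 100 samples, 10 candidate biomarkers, 2 truly predictive.
rng = np.random.default_rng(1)
X = rng.normal(size=(100, 10))
X = (X - X.mean(axis=0)) / X.std(axis=0)  # step (a): standardize
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.5, size=100)

b0, beta = lasso_cd(X, y, lam=0.3)
selected = np.flatnonzero(beta)  # step (d): non-zero coefficients
print("selected features:", selected, "coefficients:", np.round(beta[selected], 2))
```

The soft-thresholding step sets any coefficient whose partial correlation with the residual is below \( \lambda \) to exactly zero, which is why LASSO performs feature selection, while the surviving coefficients are shrunk toward zero by roughly \( \lambda \).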

Table 3.1: Comparison of Feature Selection Methods

| Method | Type | Mechanism | Key Advantage | Disadvantage |
|---|---|---|---|---|
| LASSO | Embedded | L1 regularization shrinks some coefficients to exactly zero. | Intrinsic feature selection, interpretable. | Tends to select one feature from a group of correlated features. |
| Random Forest | Embedded | Mean decrease in Gini impurity/accuracy. | Handles non-linearity, robust to outliers. | Less interpretable, can be computationally heavy. |
| Recursive Feature Elimination (RFE) | Wrapper | Repeatedly refits the model and removes the least important features. | Works with any estimator that exposes coefficients or importances; often high performance. | Computationally expensive; risk of overfitting without nested cross-validation. |

Visualization & Reporting

Data Visualization Standards

All plots must be publication-ready. Use colorblind-friendly palettes (e.g., viridis). For boxplots, show individual data points. For dose-response curves, display raw data points with the fitted model and 95% confidence interval band.

Reproducibility Protocol

- Code: All analyses must be scripted in R/Python. Use version control (Git).

- Environment: Document package versions using `renv` (R) or `conda env export` (Python).

- Containerization: Consider Docker/Singularity for complex pipelines to ensure computational reproducibility.
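For pip-based Python environments where `conda env export` is not available, package versions can still be captured from within the analysis itself. The sketch below uses only the standard library (`importlib.metadata`, `platform`, `sys`); the function name and manifest layout are our own assumptions, and the output would typically be saved as JSON next to the analysis results.

```python
import platform
import sys
from importlib.metadata import distributions


def environment_manifest():
    """Collect interpreter, OS, and installed-package versions into a dict."""
    packages = {}
    for dist in distributions():
        name = dist.metadata.get("Name")
        if name:  # skip distributions with incomplete metadata
            packages[name] = dist.version
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": dict(sorted(packages.items(), key=lambda kv: kv[0].lower())),
    }


manifest = environment_manifest()
print(manifest["python"], len(manifest["packages"]), "packages recorded")
```

Writing the manifest with `json.dump(manifest, fh, indent=2)` at the end of each run ties every result file to the exact software versions that produced it.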

The Scientist's Toolkit: Research Reagent Solutions

Table 5.1: Essential Computational Research Tools

| Tool / Reagent | Function / Purpose | Example Product / Package |
|---|---|---|
| Statistical Software | Primary platform for data analysis, visualization, and statistical testing. | R (with tidyverse, ggplot2), Python (with SciPy, pandas). |
| Integrated Development Environment (IDE) | Provides a code editor, debugging tools, and project management for analysis scripts. | RStudio, PyCharm, Visual Studio Code. |
| Bioinformatics Suites | Curated collections of tools for genomic, transcriptomic, and proteomic analysis. | Bioconductor (R), Galaxy Platform. |
| High-Performance Computing (HPC) Access | Enables analysis of large datasets (e.g., NGS, molecular dynamics) via clusters. | Slurm workload manager, cloud computing (AWS, GCP). |
| Data Visualization Library | Creates publication-quality graphs and figures from analysis results. | ggplot2 (R), Matplotlib/Seaborn (Python). |