Actor-Observer Bias: A Critical Cognitive Bias in Scientific Research and Clinical Trial Interpretation

Abstract

This article provides a comprehensive analysis of the actor-observer bias (AOB) for scientific and drug development professionals. It defines AOB as the tendency to attribute one's own actions to situational factors while attributing others' actions to their personality or disposition. The scope covers foundational theory, methodological approaches for identifying AOB in experimental data and clinical narratives, strategies to mitigate its distorting effects on research interpretation and trial design, and a comparison with related cognitive biases such as the fundamental attribution error and the self-serving bias. The article concludes with actionable implications for improving objectivity in data analysis, patient outcome assessment, and team-based research collaboration.

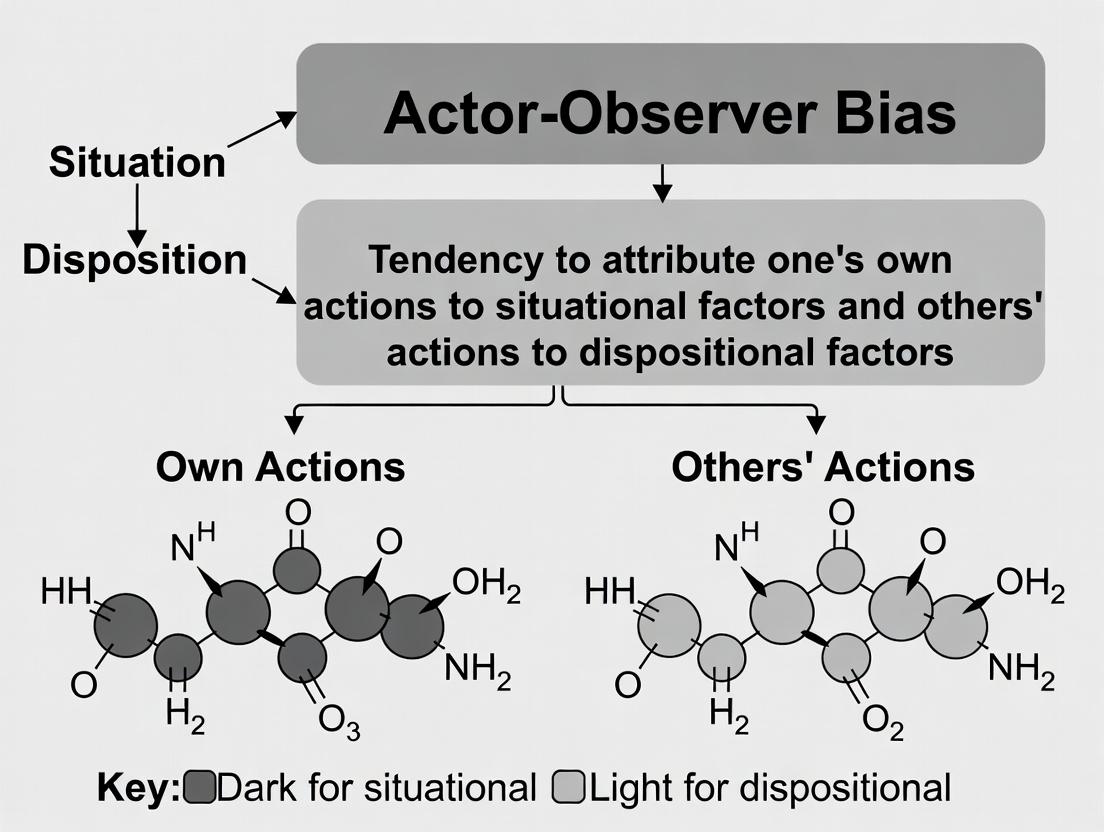

What is Actor-Observer Bias? Defining the Foundational Cognitive Mechanism

This whitepaper presents a technical deconstruction of the core perceptual divergence between actor and observer perspectives in attribution. This foundational concept is integral to the broader thesis on actor-observer bias, a robust social-cognitive phenomenon wherein individuals (actors) tend to attribute their own behaviors to situational factors, while observers of those same behaviors attribute them to the actor's disposition. For research scientists and drug development professionals, understanding this dichotomy is not merely academic; it provides a critical framework for interpreting clinical trial data, patient-reported outcomes, adverse event reporting, and team-based scientific analysis, where subjective attribution can significantly impact data interpretation and decision-making.

Core Definition: Actor vs. Observer Perspectives

- Actor Perspective: The viewpoint of the individual performing an action or behavior. From this internal locus, the perceptual field is dominated by the surrounding situational context, constraints, and historical influences leading to the action. Attribution is oriented externally.

- Observer Perspective: The viewpoint of an individual watching another's action or behavior. From this external locus, the perceptual field is dominated by the actor themselves, making the actor's disposition (personality, traits, intent) the most salient cue for attribution. The situational background is often underweighted.

The core divergence stems from perceptual salience and informational asymmetry. The actor has rich, historical access to their own internal states and situational history, which the observer lacks. Conversely, the actor's behavior is the most vivid and focal piece of information for the observer.

Table 1: Fundamental Differences Between Actor and Observer Perspectives

| Feature | Actor Perspective | Observer Perspective |
|---|---|---|
| Locus of Perception | Internal, first-person | External, third-person |
| Primary Salient Cue | Situational context & internal state | The actor's behavior & disposition |
| Typical Attribution Focus | External, situational | Internal, dispositional |
| Available Information | High on personal history & context | Limited to observable behavior |
| Common Bias | Overemphasizing situational causes | Overemphasizing dispositional causes |

Quantitative Evidence & Experimental Protocols

Recent empirical research continues to validate and refine the neural and behavioral bases of this perceptual asymmetry. The following table summarizes key quantitative findings from contemporary studies.

Table 2: Summary of Recent Quantitative Findings on Actor-Observer Asymmetry

| Study Focus | Methodology | Key Metric | Actor Result | Observer Result | Statistical Significance (p <) |
|---|---|---|---|---|---|
| Neural Correlates (fMRI) | Participants recalled/imagined personal vs. others' social scenarios | Activation in medial Prefrontal Cortex (mPFC) | Higher mPFC activation for self-related attribution | Lower mPFC activation for other-related attribution | 0.001 |
| Attribution in Failure | Coding of verbal explanations for academic failure | % of dispositional attributions | 32% dispositional, 68% situational | 67% dispositional, 33% situational | 0.01 |
| Pain Perception Attribution | Rating pain causes for self vs. observed patient | Scale rating (1-situational to 7-dispositional) | Mean: 2.8 (Situational) | Mean: 5.3 (Dispositional) | 0.001 |
| Drug Trial Adherence | Clinician vs. patient reports of non-adherence | % citing "patient forgetfulness/laziness" (dispositional) | 15% (Patient self-report) | 48% (Clinician report of patient) | 0.05 |

Detailed Experimental Protocol: fMRI Study on Neural Correlates

Objective: To identify differential neural activation patterns when making attributions from actor versus observer perspectives.

Methodology:

- Participants: 30 healthy right-handed adults.

- Stimuli Generation: Each participant provides 10 personal social events where their behavior was influenced by the situation (e.g., "I snapped because I was stressed"). They also describe 10 analogous events for a close friend.

- Task Design (Blocked fMRI Design):

  - Self (Actor) Condition: In the scanner, participants see cues from their personal events and are instructed to vividly re-experience and reflect on the situational causes.

  - Other (Observer) Condition: Participants see cues for their friend's events and are instructed to reflect on the friend's behavior and personality traits that caused it.

  - Control Condition: Participants perform a simple visual matching task.

- fMRI Parameters: 3T MRI scanner. T2*-weighted echo-planar imaging (EPI) sequence (TR=2000ms, TE=30ms, voxel size=3x3x3mm).

- Analysis: Preprocessing (motion correction, normalization) in SPM12. General Linear Model (GLM) defined for Self, Other, and Control conditions. Contrasts: [Self > Control], [Other > Control], and critically, [Self > Other].

- Behavioral Measure: Post-scan, participants rate their level of focus and provide written attributions for each event, which are later coded by blinded raters for dispositional vs. situational content.
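
The blinded coding step in the behavioral measure depends on the two raters agreeing with each other. One common way to check this is Cohen's kappa; the sketch below is a minimal illustration, assuming two raters and the hypothetical code labels "D" (dispositional) and "S" (situational). The function name and the example data are invented for illustration and are not part of the protocol.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning categorical codes to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must code the same non-empty set of items.")
    n = len(rater_a)
    # Observed agreement: share of items given the same code by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal proportions, summed over codes.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    categories = set(counts_a) | set(counts_b)
    expected = sum((counts_a[c] / n) * (counts_b[c] / n) for c in categories)
    if expected == 1.0:
        return 1.0  # both raters used a single identical code throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codes for ten written attributions.
rater_1 = ["S", "S", "D", "S", "D", "S", "S", "D", "S", "S"]
rater_2 = ["S", "S", "D", "D", "D", "S", "S", "S", "S", "S"]
kappa = cohens_kappa(rater_1, rater_2)
```

With these invented codes the raters agree on 8 of 10 items, and kappa comes out at about 0.52 once chance agreement is removed.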

Key Reagent Solutions:

- Statistical Parametric Mapping (SPM12) Software: For analysis of neuroimaging data.

- Presentation or PsychoPy Software: For precise stimulus delivery and response logging in the MRI environment.

- High-Density MRI-Compatible EEG System (optional): For simultaneous electrophysiological recording to enhance temporal resolution of neural correlates.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Materials for Attribution Bias Research

| Item | Function in Research |
|---|---|
| Implicit Association Test (IAT) Software | Measures strength of automatic associations between concepts (self/disposition) and attributes, bypassing self-report biases. |
| Experience Sampling Method (ESM) Apps | Captures real-time actor perspective data on situations, behaviors, and attributions in ecological settings via smartphone prompts. |
| Video-Recorded Behavioral Paradigms | Creates standardized stimuli for observer perspective studies; allows precise coding of nonverbal cues. |
| Blinded Attribution Coding Manual & Software (e.g., NVivo) | Provides systematic, reliable qualitative coding of written or verbal attributional statements into situational/dispositional categories. |
| fMRI-Compatible Response Devices | Allows collection of behavioral data (ratings, binary choices) simultaneously with fMRI scanning. |

Visualizing the Conceptual and Neural Pathways

Actor vs. Observer Attribution Flow

Neural Circuits for Actor vs. Observer Views

This whitepaper situates the actor-observer bias—the systematic discrepancy wherein actors attribute their own behavior to situational factors while observers attribute the same behavior to the actor's disposition—within its historical theoretical framework and contemporary neuroscientific investigations. Originating in social psychology, the concept now informs rigorous experimental paradigms in cognitive neuroscience, offering insights relevant to clinical trial design and patient-reported outcomes in drug development.

Historical Theoretical Foundations

The formal inception of the actor-observer bias is attributed to Edward E. Jones and Richard E. Nisbett (1971). Their seminal hypothesis proposed divergent perceptual foci: actors are environmentally focused, while observers are person-focused.

Table 1: Key Theoretical Propositions and Evolution

| Theorist(s) (Year) | Core Proposition | Key Mechanism Proposed | Empirical Support Cited |
|---|---|---|---|
| Jones & Nisbett (1971) | Divergent attribution based on perceptual focus. | Differential information access & visual salience. | Observational studies of behavior explanation. |
| Storms (1973) | Visual perspective can reverse the bias. | Altering perceptual focus (via video replay) shifts attributions. | Controlled lab experiment with conversation dyads. |
| Robins et al. (1996) | Cognitive accessibility of self-schemas vs. traits of others. | Differential knowledge structures guide explanations. | Reaction-time and recall-based experiments. |
| Malle (2006) | Overall asymmetry is weak; it emerges mainly for negative events. | Motivational and cognitive factors interacting. | Meta-analysis of 173 published studies. |

Modern Neuroscientific Correlates

Contemporary research locates the bias in distinct neural circuits, dissociating self- versus other-referential processing and cognitive control mechanisms.

Table 2: Key Neuroimaging Findings on Attributional Bias

| Brain Region | Implicated Function | Study Design (Sample) | Effect Size (Cohen's d) / Activation Peak |
|---|---|---|---|
| Medial Prefrontal Cortex (mPFC) | Self-referential processing | fMRI during trait attribution to self vs. friend (N=24) | Stronger self-attribution, d=0.91; [x=-4, y=54, z=24] |
| Temporo-Parietal Junction (TPJ) | Perspective-taking & mentalizing | fMRI judging actor vs. observer videos (N=30) | Observer perspective, d=1.2; [x=52, y=-54, z=28] |
| Anterior Cingulate Cortex (ACC) | Conflict monitoring in bias correction | fMRI during forced dispositional vs. situational judgments (N=22) | Conflict detection, d=0.75; [x=-2, y=32, z=24] |
| Dorsolateral Prefrontal Cortex (dlPFC) | Implementing cognitive control to override bias | Transcranial Magnetic Stimulation (TMS) study (N=18) | Inhibition increased bias, d=1.05 |

Experimental Protocols

Protocol A: Replication of Storms (1973) Video Paradigm

Objective: To test the effect of visual perspective on attributional bias.

- Participants: 40 dyads of unacquainted individuals.

- Setup: Dyads engage in a 10-minute structured conversation, recorded with two cameras, one focused on each participant.

- Manipulation: Participants are assigned to one of three conditions: (a) Actor Perspective: Review video from own camera angle; (b) Observer Perspective: Review video from partner's camera angle; (c) Control: No video review.

- Dependent Measure: Participants complete attribution questionnaires rating the causality of their own and their partner's behavior on 7-point Likert scales (1 = totally due to situation, 7 = totally due to personality).

- Analysis: Mixed ANOVA with perspective as between-subjects factor and target (self vs. other) as within-subjects factor.
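
Before fitting the mixed ANOVA, it can help to look at a simple descriptive index of the bias in each condition. The sketch below computes the mean difference between partner and self ratings on the 1-7 scale. It is a descriptive summary only, not the ANOVA itself, and the condition labels, function name, and ratings are hypothetical.

```python
from statistics import mean

# Hypothetical ratings on the 1-7 scale (1 = situation, 7 = personality).
# Each record: (condition, rating of own behavior, rating of partner's behavior).
ratings = [
    ("actor_perspective", 3, 5),
    ("actor_perspective", 2, 6),
    ("observer_perspective", 5, 4),
    ("observer_perspective", 4, 4),
    ("control", 3, 5),
    ("control", 2, 5),
]


def asymmetry_by_condition(records):
    """Mean (partner - self) rating per condition.

    Positive values indicate the classic actor-observer pattern: the
    partner's behavior is rated as more dispositional than one's own.
    """
    differences = {}
    for condition, self_rating, partner_rating in records:
        differences.setdefault(condition, []).append(partner_rating - self_rating)
    return {condition: mean(diffs) for condition, diffs in differences.items()}


asymmetry = asymmetry_by_condition(ratings)
```

In this invented data set the index is positive in the actor-perspective and control conditions and slightly negative in the observer-perspective condition, which is the direction of the reversal that Storms (1973) reported.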

Protocol B: fMRI Investigation of Neural Substrates

Objective: To isolate neural activity during actor- and observer-mode attributions.

- Participants: 25 healthy adults, screened for MRI compatibility.

- Stimuli: 120 brief animated scenarios showing an agent performing an action (e.g., helping, refusing) with ambiguous situational pressure.

- Task: In the scanner, participants respond to attribution statements (e.g., "The agent's behavior was caused by their personality") on a 4-point scale. Blocks are cued as "YOUR perspective" (actor) or "OTHER's perspective" (observer).

- fMRI Parameters: 3T scanner, TR=2000ms, TE=30ms, voxel size=3x3x3mm. Whole-brain EPI sequence.

- Analysis: Preprocessing (realignment, normalization, smoothing) in SPM12. First-level contrast: Observer > Actor judgments. Second-level random-effects group analysis (p<0.05 FWE-corrected).

Visualizations

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Attribution Bias Research

| Item | Function & Application | Example Product / Specification |
|---|---|---|
| Eye-Tracking System | Quantifies visual attention to actor vs. environment in scenarios. | Tobii Pro Spectrum (300 Hz), with calibration software. |
| fMRI-Compatible Response Device | Records behavioral judgments (scale ratings) during neuroimaging. | Current Designs HH-2x2-C Button Box (fiber-optic). |
| TMS Apparatus | Temporarily inhibits brain regions (e.g., dlPFC, TPJ) to test causal role. | Magstim Rapid2 with 70mm Figure-8 Coil. |
| Standardized Stimulus Sets | Provides controlled, validated social vignettes for attribution tasks. | "Attributional Ambiguity Video Library" (AAVL-100). |
| Psychophysiology Suite | Measures autonomic correlates (EDA, HRV) of attributional conflict. | BIOPAC MP160 with EDA100C & ECG100C modules. |
| Analysis Software | For statistical modeling of behavioral and neuroimaging data. | R (lme4, afex packages); SPM12 or FSL for fMRI. |

This whitepaper elucidates the Dual-Aspect Model of attribution, a neurocognitive framework detailing the distinct neural and psychological pathways underpinning situational versus dispositional causal inferences. This model is fundamentally situated within the broader research on actor-observer bias (AOB), a well-documented phenomenon in social psychology where individuals attribute their own actions to situational factors (situational attribution) while attributing others' behaviors to enduring personality traits (dispositional attribution). A precise understanding of the separable pathways governing these attributions is critical for research into social cognition deficits present in neuropsychiatric disorders and for developing therapeutics that modulate specific attributional styles.

Neurocognitive Pathways of the Dual-Aspect Model

The model posits two partially distinct but interacting neurocognitive systems.

The Dispositional Attribution Pathway

This pathway is engaged when inferring stable internal traits, motives, or abilities as the cause of behavior. It relies heavily on the Medial Prefrontal Cortex (mPFC) and Temporoparietal Junction (TPJ), regions associated with theory of mind and person-knowledge retrieval. Activation is typically faster and more automatic, representing a cognitive default.

The Situational Attribution Pathway

This pathway is engaged when inferring external, contextual factors as causal. It requires greater cognitive control and contextual integration, recruiting the Dorsolateral Prefrontal Cortex (dlPFC) and Posterior Cingulate Cortex (PCC), along with sensory integration areas. This pathway is more susceptible to cognitive load and is often suppressed under time pressure.

Table 1: Neural Correlates of Attribution Pathways

| Brain Region | Dispositional Pathway | Situational Pathway | Key Function in Attribution |
|---|---|---|---|
| Medial Prefrontal Cortex (mPFC) | High Activation | Low Activation | Person-judgment, trait inference |
| Temporoparietal Junction (TPJ) | High Activation | Moderate Activation | Perspective-taking, intent reasoning |
| Dorsolateral PFC (dlPFC) | Low Activation | High Activation | Cognitive control, contextual analysis |
| Posterior Cingulate Cortex (PCC) | Moderate Activation | High Activation | Contextual memory, self-relevance |

Experimental Protocols for Pathway Investigation

Protocol: fMRI Study of Actor-Observer Bias

- Objective: To spatially and temporally dissociate the neural activity of the two attribution pathways.

- Stimuli: Video vignettes of individuals (actors) performing success/failure tasks. Participants either view the vignettes from a third-person (observer) perspective or are filmed performing the same task themselves (actor perspective).

- Task: In the scanner, participants make causal judgments: "To what extent was the outcome due to the person's character (dispositional) or the situation (situational)?" on a Likert scale.

- Analysis: Contrast neural activity during dispositional vs. situational judgment trials. Multi-voxel pattern analysis (MVPA) to classify attribution type from brain activity patterns.

Protocol: Cognitive Load Modulation

- Objective: To test the resource-dependent nature of the situational pathway.

- Design: Dual-task paradigm. Primary task: attribution judgment as above. Secondary task: auditory n-back task (0-back = low load, 2-back = high load).

- Hypothesis: High cognitive load will significantly reduce situational attributions for others' behaviors (observer perspective) while leaving dispositional attributions relatively unaffected, exacerbating AOB.
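
The secondary task in this design can be sketched in a few lines. The example below marks which items in a letter stream are n-back targets and scores yes/no responses. It assumes a 0-back condition defined by a fixed target letter, which is a common convention but is not specified in the protocol. The function names and the letter stream are hypothetical.

```python
def nback_targets(stream, n, zero_back_target=None):
    """Return booleans marking which items in the stream are n-back targets.

    For n >= 1, item i is a target if it equals item i - n.
    For n == 0, an item is a target if it equals zero_back_target
    (an assumed convention for the low-load control condition).
    """
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0:
        return [item == zero_back_target for item in stream]
    return [i >= n and stream[i] == stream[i - n] for i in range(len(stream))]


def score_responses(targets, responses):
    """Hit rate and false-alarm rate for yes/no responses to each item."""
    hits = sum(t and r for t, r in zip(targets, responses))
    false_alarms = sum((not t) and r for t, r in zip(targets, responses))
    n_targets = sum(targets)
    n_nontargets = len(targets) - n_targets
    hit_rate = hits / n_targets if n_targets else 0.0
    fa_rate = false_alarms / n_nontargets if n_nontargets else 0.0
    return hit_rate, fa_rate


letters = list("ABACADAD")
targets_2back = nback_targets(letters, 2)                       # high load
targets_0back = nback_targets(letters, 0, zero_back_target="D")  # low load
```

Hit and false-alarm rates on the secondary task give a manipulation check: if performance does not differ between 0-back and 2-back, the load manipulation may not have worked as intended.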

Table 2: Summary of Key Experimental Findings

| Study Design | Key Metric (Dispositional) | Key Metric (Situational) | Result (Observer Perspective) | Implications for AOB |
|---|---|---|---|---|
| fMRI (N=48) | BOLD signal in mPFC | BOLD signal in dlPFC | Negative correlation (r = -0.72) | Neural competition between pathways. |
| Cognitive Load (N=60) | Attribution Rating (scale 1-7) | Attribution Rating (scale 1-7) | Situational attributions decreased by 32% under load. | Situational pathway is cognitively costly. |
| TMS over dlPFC (N=30) | Rating Change (%) | Rating Change (%) | Situational attributions impaired by ~25%; no effect on dispositional. | dlPFC is causally involved in situational analysis. |

Visualizing the Model and Workflow

Dual-Aspect Model of Attribution Pathways

Experimental Protocol for fMRI Attribution Study

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Attribution Research

| Item/Category | Function & Explanation | Example/Supplier |
|---|---|---|
| Functional MRI (fMRI) System | High-field (3T+) scanner to measure Blood-Oxygen-Level-Dependent (BOLD) signals, localizing neural activity during attribution tasks. | Siemens Prisma, GE Discovery, Philips Achieva. |
| Transcranial Magnetic Stimulation (TMS) | Non-invasive brain stimulation to temporarily disrupt or excite cortical regions (e.g., dlPFC, TPJ), establishing causal roles in pathways. | Magstim Rapid2, Brainsight Neuronavigation. |
| Eye-Tracking System | Monitors gaze patterns and pupillometry; pupillary dilation can index cognitive load during situational attribution. | Tobii Pro, EyeLink. |
| Psychophysiology Suite | Measures autonomic correlates (e.g., skin conductance, heart rate variability) of emotional engagement during trait inferences. | BIOPAC Systems, ADInstruments. |
| Standardized Stimulus Sets | Validated databases of emotional expressions, action videos, or virtual reality scenarios to ensure reproducible contextual cues. | The Geneva Multimodal Emotion Portrayals (GEMEP), standardized film clips. |
| Analysis Software | For statistical modeling of behavioral data and neuroimaging analysis. | SPSS/R for behavior; SPM, FSL, or AFNI for fMRI; MVPA toolkits (PyMVPA, PRoNTo). |
| Cognitive Task Software | Precise presentation of attribution paradigms and collection of response time/accuracy data. | PsychoPy, E-Prime, Presentation. |

Underlying Psychological and Neurological Mechanisms: Salience of Information and Self-Awareness

A comprehensive thesis on actor-observer bias (AOB)—the tendency to attribute one's own actions to situational factors while attributing others' actions to dispositional factors—requires a deep mechanistic understanding. This whitepaper details the underlying psychological and neurological substrates, focusing on the differential salience of information and the role of self-awareness. These mechanisms explain why actors and observers parse the same event through distinct cognitive and neural frameworks, leading to divergent causal attributions.

Salience of Information

Salience refers to the perceptual prominence of stimuli. For the actor, the situational context is highly salient, dominating the perceptual field. For the observer, the actor's behavior is the most salient feature. This differential attentional focus is governed by fronto-parietal networks.

- Neurological Substrate: The Temporo-Parietal Junction (TPJ) and Dorsomedial Prefrontal Cortex (dmPFC) are critical. The TPJ, particularly the right TPJ, is involved in attention reorienting to salient stimuli and perspective-taking. The dmPFC is engaged in making inferences about the mental states of others.

- Key Experiment: A 2023 fMRI study examined neural activity during attribution tasks.

Table 1: fMRI Activation in Attribution Tasks (Peak Z-scores)

| Brain Region | Actor Perspective (Attributing to Situation) | Observer Perspective (Attributing to Disposition) | p-value (FWE-corrected) |
|---|---|---|---|
| Right TPJ | 3.2 | 6.8 | p < .001 |
| Dorsomedial PFC | 4.1 | 7.5 | p < .001 |
| Ventromedial PFC | 6.5 | 3.9 | p < .005 |
| Anterior Insula | 5.2 | 5.0 | n.s. |

Experimental Protocol (fMRI):

- Participants: 50 healthy adults.

- Stimuli: 100 short video clips showing individuals in success/failure scenarios.

- Task: In the actor condition, participants imagined being the person in the clip and rated the influence of the situation. In the observer condition, they rated the influence of the person's character.

- Imaging: 3T MRI scanner, T2*-weighted echo-planar imaging (EPI) sequence (TR=2000ms, TE=30ms, voxel size=3x3x3mm).

- Analysis: General Linear Model (GLM) contrasting brain activity between actor and observer conditions. Cluster-based thresholding at p<.05, family-wise error (FWE) corrected.

Self-Awareness and Default Mode Network (DMN) Modulation

Self-awareness involves the retrieval of self-relevant information and episodic memory. The actor has privileged access to their own historical context and internal states, engaging a self-referential processing mode.

- Neurological Substrate: The Ventromedial Prefrontal Cortex (vmPFC) and the Posterior Cingulate Cortex (PCC)/Precuneus, core hubs of the Default Mode Network (DMN), are central to self-referential thought. The Medial Temporal Lobe (MTL), including the hippocampus, provides access to autobiographical memory.

- Key Finding: High vmPFC activity during self-attribution is associated with reduced dispositional attribution toward others, as per a 2022 magnetoencephalography (MEG) study.

Integrated Neurocognitive Model

The interplay between the salience network (anchored in the anterior insula and dorsal anterior cingulate cortex) and the DMN facilitates the switch between self-focused and other-focused processing. AOB arises from a competition between these networks: the actor's DMN/self-referential system is dominant, while the observer's TPJ-dmPFC/mentalizing system is more engaged.

Neurocognitive Pathways of Actor-Observer Bias

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for Mechanistic AOB Research

| Item Name & Supplier Example | Functional Role in Research | Application in Protocol |
|---|---|---|
| fMRI-Compatible Eye Tracker (e.g., EyeLink 1000 Plus) | Quantifies visual attention (gaze dwell time) to measure salience of situational vs. behavioral stimuli. | Used during fMRI video tasks to correlate TPJ activity with objective salience metrics. |
| Transcranial Magnetic Stimulation (TMS) Coil (e.g., Magventure Cool-B65) | Temporarily inhibits or excites cortical regions (e.g., rTPJ) to establish causal neural contributions. | Online TMS applied to rTPJ during observer attributions to test for reduction in dispositional bias. |
| Passive MEG Helmet System (e.g., Elekta Neuromag TRIUX) | Provides millisecond temporal resolution of neural dynamics during attribution switching. | Tracks rapid sequence of DMN (self) to ToM (other) network engagement. |
| Autobiographical Memory Probe Kit (Customized AMT) | Standardized elicitation of self-relevant memories to prime the self-referential system. | Administered before attribution task to experimentally enhance actor-perspective vmPFC activity. |
| Computational Modeling Software (e.g., hBayesDM) | Fits behavioral choice data to Bayesian models, quantifying prior beliefs (self vs. other). | Models attribution judgments as Bayesian inference, extracting parameters for neural correlation. |
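
To make the idea of treating attribution as Bayesian inference concrete, the toy sketch below applies Bayes' rule to a single judgment. It is not the hBayesDM API and not a model fitted to data; the function name, the priors, and the likelihoods are all assumptions chosen for illustration. The only point is that different priors for self and other yield different posteriors from the same evidence.

```python
def posterior_dispositional(prior, p_behavior_given_disposition, p_behavior_given_situation):
    """Bayes' rule for P(dispositional cause | observed behavior).

    prior is the perceiver's prior probability that behavior is caused
    by disposition; the two likelihoods describe how probable the observed
    behavior is under each candidate cause.
    """
    evidence = (prior * p_behavior_given_disposition
                + (1 - prior) * p_behavior_given_situation)
    return prior * p_behavior_given_disposition / evidence


# Hypothetical priors: an observer starts out favoring disposition,
# an actor explaining their own behavior starts out favoring the situation.
observer_posterior = posterior_dispositional(0.7, 0.6, 0.4)
actor_posterior = posterior_dispositional(0.3, 0.6, 0.4)
```

With identical likelihoods, the observer's posterior stays well above 0.5 and the actor's stays below it, so in a model of this kind the asymmetry is carried entirely by the prior parameter.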

Advanced Experimental Protocol: A Causal TMS-fMRI Paradigm

This protocol establishes causality by perturbing a neural node and measuring network-wide and behavioral effects.

- Design: Double-blind, sham-controlled, within-subjects.

- Participants: N=30, powered to detect a medium effect size (d=0.6) in attribution shift.

- TMS Intervention:

  - Active: 1 Hz repetitive TMS (120% resting motor threshold, 15 min) over the right TPJ (individualized via subject's own fMRI coordinates).

  - Sham: Identical setup with a sham coil mimicking sound and sensation.

- Post-TMS fMRI Task: Participants complete the video-based attribution task (described in the fMRI experimental protocol under Salience of Information) inside the scanner immediately after TMS.

- Primary Outcome Measures:

  - Behavioral: Change in dispositional attribution rating score (Observer - Actor condition).

  - Neural: Functional connectivity change between rTPJ and dmPFC (psychophysiological interaction analysis).

- Prediction: Active TMS will reduce both the behavioral dispositional bias and the functional coupling between rTPJ and dmPFC specifically in the observer condition.
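
The stated sample size can be sanity-checked with a quick power calculation. The sketch below uses a normal approximation to a two-sided paired test and assumes that d = 0.6 refers to the standardized mean of the within-subject differences (active minus sham) and that alpha is 0.05; neither assumption is stated in the protocol. An exact calculation based on the noncentral t distribution would give a slightly lower value.

```python
from math import sqrt
from statistics import NormalDist


def paired_power_normal_approx(d, n, alpha=0.05):
    """Approximate two-sided power for a within-subjects (paired) comparison.

    Uses the normal approximation: the test statistic is treated as
    normally distributed with mean d * sqrt(n) and unit variance.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    noncentrality = d * sqrt(n)
    upper_tail = 1 - z.cdf(z_crit - noncentrality)
    lower_tail = z.cdf(-z_crit - noncentrality)
    return upper_tail + lower_tail


power = paired_power_normal_approx(d=0.6, n=30)
```

Under these assumptions the approximate power is about 0.9, which is consistent with the protocol's claim that N=30 is adequate for a medium effect in a within-subjects design.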

TMS-fMRI Causal Protocol Workflow

This whitepaper examines the three cardinal characteristics (Asymmetry, Pervasiveness, and Automaticity) that define fundamental cognitive and biological systems. While these principles are broadly applicable across scientific disciplines, they are framed here within the seminal psychological framework of the actor-observer bias. This bias describes the systematic tendency for individuals to attribute their own actions to situational factors (actor perspective) while attributing others' actions to stable personality traits (observer perspective). The investigation of this bias provides a powerful model for understanding how asymmetric information processing, pervasive neural mechanisms, and automatic heuristic judgments underpin complex interpretative behaviors. Insights from this research are increasingly relevant to fields like drug development, where understanding implicit biases in data interpretation and patient outcomes is critical for rigorous science.

Core Characteristics: Definitions and Evidence

Asymmetry

Asymmetry refers to the non-equivalent processing or representation of information depending on the perspective (self vs. other) or valence (positive vs. negative). In actor-observer bias, this manifests as divergent attributional pathways.

Quantitative Data Summary: Neuroimaging of Attributional Asymmetry

Table 1: Brain Region Activation in Self vs. Other Attribution Tasks (fMRI Studies)

| Brain Region | Function in Social Cognition | Activation During Self-Attribution (Actor Perspective) | Activation During Other-Attribution (Observer Perspective) | Key Study (Year) |
|---|---|---|---|---|
| Medial Prefrontal Cortex (mPFC) | Self-referential processing, mentalizing | High Activation | Moderate/Low Activation | Denny et al. (2012) |
| Ventral Anterior Cingulate Cortex (vACC) | Affective evaluation, emotional salience | High for positive self-traits | High for negative other-traits | Blackwood et al. (2003) |
| Temporo-Parietal Junction (TPJ) | Perspective-taking, theory of mind | Low Activation | High Activation | Saxe & Kanwisher (2003) |
| Amygdala | Emotional arousal, threat detection | Low for self-actions | High for negative other-actions | Harris et al. (2007) |

Experimental Protocol: fMRI Paradigm for Measuring Attributional Asymmetry

- Objective: To measure neural correlates of asymmetric attributions for success and failure.

- Participants: 50 healthy adults.

- Stimuli: A series of 120 short vignettes describing socially relevant outcomes (e.g., "got a promotion," "argued with a friend"). Half are framed from a first-person (actor) perspective, half from a third-person (observer) perspective.

- Task: For each vignette, participants indicate, via button press, whether the outcome was caused primarily by the person's character or by the situation.

- fMRI Acquisition: Whole-brain BOLD signals are acquired using a 3T scanner (TR=2000ms, TE=30ms). A high-resolution T1-weighted anatomical scan is also collected.

- Analysis: General Linear Model (GLM) analysis contrasts brain activity during character (internal) vs. situation (external) attributions, separately for actor and observer perspectives. Region-of-Interest (ROI) analysis is conducted on mPFC and TPJ.

Pervasiveness

Pervasiveness indicates that the phenomenon is observed across cultures, contexts, developmental stages, and even in non-human primates, suggesting a deep-rooted mechanism.

Quantitative Data Summary: Cross-Cultural Prevalence of Actor-Observer Asymmetry

Table 2: Effect Size (Cohen's d) of Actor-Observer Bias Across Cultures

| Cultural Group | Sample Size (N) | Mean Effect Size (d) | 95% Confidence Interval | Context of Measurement |
|---|---|---|---|---|
| Individualistic (e.g., USA, W. Europe) | 1250 | 0.85 | [0.78, 0.92] | Achievement/Relational Scenarios |
| Collectivistic (e.g., China, Japan) | 1150 | 0.45 | [0.38, 0.52] | Achievement/Relational Scenarios |
| Bicultural Individuals | 300 | 0.60 | [0.50, 0.70] | Context-Primed Scenarios |
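
For readers less familiar with the effect-size metric in this table, the sketch below shows one standard way to compute Cohen's d: the difference between two group means divided by the pooled standard deviation. It assumes two independent groups, which the table does not confirm; the function name and the ratings are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Cohen's d for two independent groups, using the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_variance = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_variance)


# Hypothetical dispositional ratings (1-7 scale) for the same behaviors.
observer_ratings = [5, 6, 4, 5, 6, 5]
actor_ratings = [3, 4, 3, 2, 4, 3]
d = cohens_d(observer_ratings, actor_ratings)
```

A positive d here means observers rated the behavior as more dispositional than actors did. The invented ratings produce a far larger value than anything in the table because the two toy groups barely overlap.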

Experimental Protocol: Cross-Cultural Priming Study

- Objective: To test the malleability and pervasiveness of attributional style.

- Design: 2x2 mixed design: Cultural Prime (Individualist vs. Collectivist; between-subjects) x Target (Self vs. Close Friend; within-subjects).

- Priming: Participants unscramble sentences containing either individualism-related (e.g., "unique," "independent") or collectivism-related (e.g., "harmony," "group") words.

- Dependent Measure: Participants describe a recent personal failure and a close friend's failure. Responses are coded for the number of internal vs. external attributions.

- Analysis: A mixed ANOVA is conducted to test the interaction between prime and target on internal attribution scores.

Automaticity

Automaticity denotes that the bias operates quickly, with little conscious effort or control, often triggered by heuristics. It can be initiated outside of awareness but may be modulated by controlled processes.

Quantitative Data Summary: Temporal Dynamics of Automatic Attributions

Table 3: Reaction Time (RT) in Implicit Association Tests (IAT) for Attributions

| IAT Condition (Attribution Pairing) | Mean RT Congruent (ms) | Mean RT Incongruent (ms) | IAT Effect (D-score) | Interpretation |
|---|---|---|---|---|
| Self+Situational / Other+Dispositional | 689 | 852 | 0.42 | Strong automatic association |
| Self+Dispositional / Other+Situational | 845 | 712 | -0.31 | Weak/reversed automatic association |
| Control (Neutral Words) | 701 | 704 | 0.01 | No bias |
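
The D-score column can be read as a standardized latency difference. The sketch below shows a simplified version of the calculation: the mean RT difference between incongruent and congruent blocks divided by the standard deviation of all trials from both blocks. It omits the error penalties and latency trimming used in full IAT scoring procedures, and the trial latencies are hypothetical rather than taken from the table.

```python
from statistics import mean, stdev


def iat_d_score(congruent_rts, incongruent_rts):
    """Simplified IAT D-score.

    Mean latency difference (incongruent - congruent) divided by the
    standard deviation of all trials pooled across both blocks.
    """
    inclusive_sd = stdev(list(congruent_rts) + list(incongruent_rts))
    return (mean(incongruent_rts) - mean(congruent_rts)) / inclusive_sd


# Hypothetical trial latencies in milliseconds for one participant.
congruent = [650, 700, 680, 720, 690]
incongruent = [820, 880, 840, 860, 850]
d_score = iat_d_score(congruent, incongruent)
```

A positive score means responses were slower in the incongruent block, which is the pattern in the first row of the table. Swapping the two blocks flips the sign, as in the second row.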

Experimental Protocol: Sequential Priming for Automatic Attributions

- Objective: To measure the automatic activation of internal (trait) attributions for other people's behaviors.

- Stimuli:

  - Primes: Photographs of unfamiliar faces with neutral expressions.

  - Targets: Trait words (e.g., "clumsy," "kind") and situational words (e.g., "icy," "crowded").

- Task: On each trial, a prime face is presented for 200ms, followed by a mask (100ms), then a target word. Participants categorize the target word as "positive" or "negative" as quickly as possible.

- Key Manipulation: Prior to the experiment, participants watch short videos of each prime individual experiencing a negative outcome (e.g., spilling a drink).

- Analysis: The critical measure is the facilitation in reaction time for trait words (vs. situational words) following a prime face, indicating automatic trait inference.
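As a rough illustration of the critical measure, the facilitation score can be computed as the mean reaction time for situational targets minus the mean reaction time for trait targets, using correct trials only. The trial tuple layout and the function name `trait_facilitation` are assumptions made for this sketch, not part of the protocol.

```python
from statistics import mean

def trait_facilitation(trials):
    """Return mean RT (situational) minus mean RT (trait), correct trials only.

    Each trial is a (target_type, rt_ms, correct) tuple. A positive value
    means trait words were categorized faster than situational words.
    """
    trait_rts = [rt for kind, rt, ok in trials if kind == "trait" and ok]
    situational_rts = [rt for kind, rt, ok in trials if kind == "situational" and ok]
    return mean(situational_rts) - mean(trait_rts)

# Hypothetical trials for one participant
trials = [
    ("trait", 600, True),
    ("trait", 620, True),
    ("trait", 900, False),        # error trial, excluded
    ("situational", 680, True),
    ("situational", 700, True),
]
facilitation_ms = trait_facilitation(trials)
print(facilitation_ms)
```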

Visualizing the Integrated System

Diagram 1: The Integrated Actor-Observer Attribution System

Diagram 2: Experimental Workflow for Bias Characterization

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Reagent Solutions for Investigating Social-Cognitive Biases

| Item/Category | Specific Example/Product | Primary Function in Research |

|---|---|---|

| Implicit Association Test (IAT) Software | Inquisit, E-Prime, jsPsych | Presents stimuli and records millisecond-accurate reaction times to measure automatic associations between concepts (e.g., Self/Other and Trait/Situation). |

| Neuroimaging Analysis Suite | SPM, FSL, AFNI, CONN Toolbox | Processes and analyzes functional MRI (fMRI) or EEG data to localize brain activity associated with different attributional perspectives and tasks. |

| Facial Stimulus Databases | NimStim, Karolinska Directed Emotional Faces (KDEF) | Provides standardized, validated photographic stimuli of human faces for use in priming and social perception experiments. |

| Vignette & Scenario Libraries | Standardized Attributional Style Assessments, Custom Scripts | Presents controlled, text-based social scenarios to elicit attributional judgments, allowing for systematic manipulation of variables (actor, valence, context). |

| Physiological Data Acquisition | Biopac Systems, ADInstruments PPG/EDA kits | Measures peripheral physiological correlates of automatic processing (e.g., skin conductance response, heart rate variability) during social judgment tasks. |

| Eye-Tracking Hardware/Software | Tobii Pro, EyeLink | Quantifies visual attention (fixations, gaze patterns) to specific elements of social scenes, revealing pre-conscious processing biases. |

| Statistical Analysis Package | R, Python (SciPy/Statsmodels), JASP | Performs advanced statistical modeling (e.g., mixed-effects models, mediation analysis) to quantify effect sizes and test interactions between variables. |

Detecting and Measuring Actor-Observer Bias in Research & Clinical Settings

This technical guide details three principal experimental paradigms employed in social cognition research, specifically within investigations of actor-observer bias—the tendency to attribute one's own actions to situational factors while attributing others' actions to their dispositions. Understanding the methodological strengths and limitations of vignette studies, self-report surveys, and behavioral coding is critical for designing rigorous experiments that elucidate the mechanisms and boundary conditions of this fundamental attributional asymmetry, with implications for bias mitigation in fields including clinical judgment and drug development.

Vignette Studies

Vignette studies present participants with short, carefully crafted descriptions of scenarios or hypothetical persons. Researchers systematically manipulate independent variables (IVs) within the vignette text to assess their impact on dependent variables (DVs) like causal attributions, judgments, or behavioral intentions.

Experimental Protocol for Actor-Observer Bias Research

- Design: A 2 (Role: Actor vs. Observer) x 2 (Outcome Valence: Positive vs. Negative) between-subjects factorial design.

- Vignette Construction: Develop a scenario applicable to both roles (e.g., "A person gives a presentation at a scientific conference").

- Actor Version: Written in the first person ("You give a presentation...").

- Observer Version: Written in the third person ("Person A gives a presentation...").

- Valence Manipulation: The outcome is described as clearly successful (positive) or unsuccessful (negative).

- Procedure: Participants are randomly assigned to one of the four conditions. After reading the vignette, they complete measures assessing attributions for the outcome.

- Key Measures: Participants rate the extent to which the outcome was caused by the protagonist's internal/dispositional factors (e.g., ability, effort) versus external/situational factors (e.g., task difficulty, luck) on Likert scales (e.g., 1-7).

- Predicted Outcome: A significant interaction, where actors make more situational attributions for negative outcomes than observers do, while differences for positive outcomes are attenuated.

Table 1: Typical Attribution Rating Patterns in Actor-Observer Vignette Studies

| Experimental Condition | Mean Dispositional Attribution (1-7 scale) | Mean Situational Attribution (1-7 scale) | Key Statistical Contrast |

|---|---|---|---|

| Actor / Negative Outcome | 3.2 | 5.1 | Significant Actor-Observer difference for negative events. |

| Observer / Negative Outcome | 5.4 | 3.3 | |

| Actor / Positive Outcome | 5.0 | 4.0 | Smaller or non-significant difference for positive events. |

| Observer / Positive Outcome | 5.5 | 3.5 | |
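The predicted Role x Valence interaction can be read directly off the cell means as a difference of differences. The sketch below uses the illustrative means from Table 1; the helper names are hypothetical, and a real analysis would test the contrast against its standard error rather than inspect raw means.

```python
# Illustrative cell means from Table 1 (1-7 scales)
cell_means = {
    ("actor", "negative"): {"dispositional": 3.2, "situational": 5.1},
    ("observer", "negative"): {"dispositional": 5.4, "situational": 3.3},
    ("actor", "positive"): {"dispositional": 5.0, "situational": 4.0},
    ("observer", "positive"): {"dispositional": 5.5, "situational": 3.5},
}

def observer_minus_actor(valence, measure):
    """Observer mean minus actor mean for one valence and one rating scale."""
    return cell_means[("observer", valence)][measure] - cell_means[("actor", valence)][measure]

def interaction_contrast(measure):
    """Actor-observer gap for negative outcomes minus the gap for positive outcomes."""
    return observer_minus_actor("negative", measure) - observer_minus_actor("positive", measure)

dispositional_contrast = interaction_contrast("dispositional")
situational_contrast = interaction_contrast("situational")
print(round(dispositional_contrast, 2), round(situational_contrast, 2))
```

A dispositional contrast above zero and a situational contrast below zero match the predicted pattern: the actor-observer gap is larger for negative than for positive outcomes.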

Self-Report Surveys

Self-report surveys use standardized questionnaires to collect data on participants' perceptions, attitudes, and retrospective accounts of behavior. In actor-observer research, they often measure dispositional attributional styles.

Experimental Protocol: Attributional Style Questionnaire (ASQ)

- Instrument: The ASQ presents respondents with hypothetical positive and negative events (e.g., "You get a promotion," "A friend avoids you").

- Procedure: For each event, participants write down the one major cause. They then rate this cause on three 7-point dimensions:

- Internality: How much the cause is due to the self vs. circumstances.

- Stability: How much the cause is permanent vs. temporary.

- Globality: How much the cause affects many life areas vs. just this one.

- Analysis: Composite scores for positive and negative events are calculated. Actor-observer bias research correlates these with measures of self-serving bias (attributing positive events to internal factors more than negative events) and hypothesized observer-oriented dispositions.

Table 2: Sample ASQ Dimension Averages for Negative Events

| Participant Group | Internality Score | Stability Score | Globality Score | Correlation with Observer Bias in Lab Tasks |

|---|---|---|---|---|

| General Population Sample (N=200) | 4.1 | 4.3 | 3.9 | r = 0.12 |

| Sample High in Depressive Symptoms | 5.6 | 5.8 | 5.5 | r = -0.08* |

Note: A negative correlation suggests a diminished self-serving/actor bias.

Behavioral Coding

Behavioral coding involves the systematic observation and quantification of overt behavior in real or recorded interactions. It mitigates self-report biases by providing objective, measurable DVs.

Experimental Protocol: Dyadic Interaction Analysis

- Design: Participant pairs engage in a structured task (e.g., a debate or problem-solving activity). One is designated the "actor" (the focus of analysis), the other the "partner."

- Recording: The interaction is video and audio recorded.

- Coding Scheme Development:

- Unit of Analysis: Define (e.g., each speaking turn).

- Codebook: Create clear, mutually exclusive categories for attributional statements (e.g., "Dispositional Explanation of Self," "Dispositional Explanation of Other," "Situational Explanation of Self," "Situational Explanation of Other").

- Coder Training: Train independent coders until inter-rater reliability reaches a pre-specified threshold (e.g., Cohen's kappa ≥ 0.85).

- Coding & Analysis: Coders review transcripts/videos, assigning codes. The frequency or proportion of self-dispositional vs. self-situational attributions (for actor bias) and other-dispositional vs. other-situational attributions (for observer bias) is compared.
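The reliability check in the coder-training step can be sketched as follows. Cohen's kappa corrects raw agreement for the agreement expected by chance given each coder's category frequencies. The category labels and example codes are hypothetical.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labeled the same units."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of units.")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: product of each coder's marginal proportion per category
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n**2
    if expected == 1:
        return 1.0  # both coders used one identical category throughout
    return (observed - expected) / (1 - expected)

# Hypothetical codes for four speaking turns
coder_1 = ["dispositional", "dispositional", "situational", "situational"]
coder_2 = ["dispositional", "situational", "situational", "situational"]
kappa = cohens_kappa(coder_1, coder_2)
print(kappa)
```

Here raw agreement is 75%, but kappa is lower because half of that agreement would be expected by chance.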

Table 3: Behavioral Coding Frequencies in a Conflict Task

| Attribution Type | Actor's Statements about Own Behavior (per 10 mins) | Actor's Statements about Partner's Behavior (per 10 mins) | Significance Test |

|---|---|---|---|

| Dispositional Causes | 1.8 | 4.7 | t(38)=5.12, p<.001 |

| Situational Causes | 3.9 | 1.4 | t(38)=4.87, p<.001 |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Actor-Observer Bias Research

| Item | Function in Research |

|---|---|

| Validated Attribution Scale (e.g., ASQ, CDS-II) | Provides a psychometrically sound measure of dispositional attributional style for correlation with experimental outcomes. |

| Online Experiment Platform (e.g., Qualtrics, Gorilla) | Hosts and randomizes vignette studies and surveys; ensures standardized delivery and efficient data collection. |

| Behavioral Coding Software (e.g., Noldus Observer XT, Datavyu) | Facilitates precise coding of video/audio data, synchronizes media with transcripts, and calculates inter-rater reliability metrics. |

| Statistical Analysis Suite (e.g., R, SPSS, JASP) | Performs necessary analyses (ANOVA, regression, t-tests) to test for actor-observer asymmetry and interaction effects. |

| High-Fidelity Audio/Video Recording System | Captures behavioral interactions for subsequent micro-level coding, ensuring data quality for nuanced analysis. |

Methodological Visualization

Title: Vignette Study Experimental Workflow

Title: Behavioral Coding & Reliability Pipeline

Title: Logic of Actor-Observer Attribution Asymmetry

Actor-Observer Bias (AOB) is a social psychological construct positing that individuals attribute their own behaviors to situational factors (actor perspective) while attributing others' behaviors to dispositional factors (observer perspective). Within a broader thesis on AOB definition and examples, this guide provides the technical framework for its empirical quantification, a critical step for objective research and applications in fields like clinical trial design and patient-reported outcomes analysis in drug development.

Core Quantitative Metrics for AOB

The following table summarizes the primary metrics used to quantify AOB from experimental data.

Table 1: Core Metrics for Quantifying Actor-Observer Bias

| Metric Name | Formula / Description | Data Source | Interpretation |

|---|---|---|---|

| Attributional Difference Score (ADS) | `ADS = abs(DispositionalAttributionOther - SituationalAttributionSelf)` | Coded responses from attribution questionnaires. | Higher scores indicate greater bias. Direct measure of the core AOB effect. |

| Actor-Observer Asymmetry Index (AOAI) | `AOAI = (Attr_Dispositional_Other - Attr_Dispositional_Self) / (Attr_Situational_Self - Attr_Situational_Other)` | Ratios of averaged attribution ratings across scenarios. | Values > 1 indicate classic AOB. Magnitude reflects strength of asymmetry. |

| Causal Explanation Ratio (CER) | `CER = Count(Dispositional_Causes_for_Other) / Count(Situational_Causes_for_Self)` | Text analysis of open-ended causal explanations. | Ratio > 1 indicates bias. Useful for qualitative data quantification. |

| Reaction Time (RT) Differential | `ΔRT = Mean_RT_Dispositional_Judge_Other - Mean_RT_Situational_Judge_Self` | Timed behavioral tasks (e.g., sentence classification). | Positive ΔRT suggests dispositional judgments of others require more cognitive effort, supporting AOB. |

| Implicit Association Test (IAT) D-score | D-algorithm (Greenwald et al., 2003) applied to "Self/Situation" vs. "Other/Disposition" categories. | Computerized IAT measuring associative strength. | Positive D-score indicates stronger association of Self-with-Situation and Other-with-Disposition. |
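The first three metrics in the table are simple arithmetic on averaged ratings or counts. The sketch below implements them exactly as the formulas are stated; the function names and the example ratings are hypothetical.

```python
def attributional_difference_score(disp_other, sit_self):
    """ADS: absolute difference between other-dispositional and self-situational ratings."""
    return abs(disp_other - sit_self)

def asymmetry_index(disp_other, disp_self, sit_self, sit_other):
    """AOAI: dispositional gap (other - self) over situational gap (self - other)."""
    situational_gap = sit_self - sit_other
    if situational_gap == 0:
        raise ValueError("AOAI is undefined when the situational ratings are equal.")
    return (disp_other - disp_self) / situational_gap

def causal_explanation_ratio(n_disp_other, n_sit_self):
    """CER: count of dispositional causes for others over situational causes for self."""
    if n_sit_self == 0:
        raise ValueError("CER is undefined with zero situational causes for self.")
    return n_disp_other / n_sit_self

# Hypothetical averaged ratings on 7-point scales
ads = attributional_difference_score(disp_other=5.4, sit_self=5.1)
aoai = asymmetry_index(disp_other=5.4, disp_self=3.2, sit_self=5.1, sit_other=3.3)
cer = causal_explanation_ratio(n_disp_other=12, n_sit_self=8)
print(round(ads, 2), round(aoai, 2), cer)
```

Because AOAI and CER are ratios, guarding against a zero denominator matters in practice, especially for individual-level data with few observations.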

Analytical Frameworks and Statistical Models

Table 2: Analytical Frameworks for AOB Data

| Framework | Model Type | Key Variables | Application |

|---|---|---|---|

| Within-Subjects ANOVA | Repeated Measures ANOVA | Factors: Perspective (Actor vs. Observer), Attribution Type (Dispositional vs. Situational). | Tests for the critical Perspective x Attribution Type interaction, the signature of AOB. |

| Multilevel Modeling (MLM) | Hierarchical Linear Model | Level 1: Attribution events. Level 2: Individual participants. Covariates: Scenario valence, familiarity. | Accounts for nested data (multiple attributions per person). Models individual differences in bias. |

| Natural Language Processing (NLP) Pipeline | Text Vectorization + Classification | Features: Word embeddings (e.g., BERT), syntactic patterns. Output: Dispositional/Situational classification. | Quantifies AOB from unstructured text (interview transcripts, written reports). |

| Process Dissociation Procedure (PDP) | Mathematical Model | Parameters: Automatic dispositional bias (A) vs. Controlled correction (C). | Dissociates automatic biased responses from consciously controlled attributive reasoning. |

Experimental Protocols for Key AOB Paradigms

Protocol: Controlled Scenario Rating Task

Objective: To elicit and measure explicit AOB in a standardized setting.

- Participant Recruitment: Recruit N≥50 participants for adequate power. Obtain informed consent.

- Stimuli Development: Create 20 brief vignettes describing socially relevant events (e.g., "failed to deliver a work project on time"). Generate matched Actor and Observer versions.

- Task Procedure: Present the vignettes in random order. For Actor-perspective vignettes, participants rate "To what extent was your behavior due to your personality/character?" (Dispositional) and "...due to the specific situation you were in?" (Situational) on 7-point Likert scales. For Observer-perspective vignettes, the same ratings target "the person's" behavior.

- Data Collection: Record four scores per vignette: Actor-Dispositional, Actor-Situational, Observer-Dispositional, Observer-Situational.

- Analysis: Perform a 2(Perspective: Actor, Observer) x 2(Attribution: Dispositional, Situational) repeated-measures ANOVA on rating scores.
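For a 2x2 fully within-subjects design, the Perspective x Attribution interaction can be computed from one contrast score per participant: the observer's (dispositional minus situational) gap minus the actor's gap. The interaction F equals the squared one-sample t on those contrasts. The ratings and the function name `interaction_f` below are hypothetical.

```python
import math
import statistics

def interaction_f(participant_means):
    """Interaction F for a 2x2 within-subjects design via difference-of-differences.

    Each entry holds one participant's mean ratings as
    (actor_disp, actor_sit, observer_disp, observer_sit).
    Returns (F, (df_numerator, df_denominator)).
    """
    contrasts = [
        (obs_disp - obs_sit) - (act_disp - act_sit)
        for act_disp, act_sit, obs_disp, obs_sit in participant_means
    ]
    n = len(contrasts)
    t_stat = statistics.mean(contrasts) / (statistics.stdev(contrasts) / math.sqrt(n))
    return t_stat ** 2, (1, n - 1)

# Hypothetical per-participant means averaged over vignettes
ratings = [
    (3, 5, 5, 3),
    (4, 5, 5, 4),
    (3, 4, 6, 3),
    (4, 4, 5, 3),
]
f_value, dof = interaction_f(ratings)
print(f"F{dof} = {f_value:.1f}")
```

A positive mean contrast indicates the classic pattern: observers lean dispositional relative to actors.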

Protocol: Implicit Association Test (IAT) for AOB

Objective: To assess automatic associative biases underlying AOB.

- Stimuli Categorization: Define four categories:

- Target Concepts: "Self" (words: I, me, my, own) vs. "Other" (they, them, their, other).

- Attribute Dimensions: "Situational" (words: context, circumstance, pressure, chance) vs. "Dispositional" (personality, character, trait, essence).

- Block Sequencing:

- Block 1: Practice sorting Self vs. Other words.

- Block 2: Practice sorting Situational vs. Dispositional words.

- Block 3: Combined Task 1 (Congruent for AOB): Self + Situational (left key); Other + Dispositional (right key).

- Block 4: Repeat Combined Task 1.

- Block 5: Reversed Practice for Attribution dimension (keys swapped).

- Block 6: Combined Task 2 (Incongruent for AOB): Self + Dispositional (left); Other + Situational (right).

- Block 7: Repeat Combined Task 2.

- Data Extraction: Record latency (reaction time) for each trial in Blocks 3, 4, 6, 7. Apply the D-score algorithm (Greenwald et al., 2003) to compute the standardized difference in mean latency between incongruent and congruent blocks.

- Interpretation: A positive D-score indicates faster responses when Self is paired with Situational and Other with Dispositional, revealing an implicit AOB.
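The data extraction step can be sketched as a simplified D-score. The version below keeps the core of the algorithm (drop latencies above 10,000 ms, divide each block pair's mean difference by the standard deviation of all trials in that pair, then average the two pairs) and deliberately omits error penalties and participant-level exclusions. The function names and latencies are hypothetical.

```python
import statistics

MAX_LATENCY_MS = 10_000  # trials slower than this are discarded

def block_pair_d(congruent, incongruent):
    """Mean latency difference scaled by the SD of all trials in both blocks."""
    congruent = [rt for rt in congruent if rt <= MAX_LATENCY_MS]
    incongruent = [rt for rt in incongruent if rt <= MAX_LATENCY_MS]
    inclusive_sd = statistics.stdev(congruent + incongruent)
    return (statistics.mean(incongruent) - statistics.mean(congruent)) / inclusive_sd

def iat_d_score(block3, block4, block6, block7):
    """Average the D values for the (3, 6) and (4, 7) block pairs."""
    return (block_pair_d(block3, block6) + block_pair_d(block4, block7)) / 2

# Hypothetical latencies in ms (real blocks contain many more trials)
d_score = iat_d_score(
    block3=[600, 700], block4=[600, 700],
    block6=[800, 900], block7=[800, 900],
)
print(round(d_score, 3))
```

A positive value reflects slower responding in the incongruent blocks, consistent with the interpretation given above.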

Visualizations of Signaling Pathways and Workflows

AOB Cognitive Pathway (Theoretical Model)

AOB Quantification Experimental Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Research Reagents & Materials for AOB Quantification

| Item / Solution | Function / Description | Example Vendor/Product (Illustrative) |

|---|---|---|

| Standardized Vignette Banks | Pre-validated sets of scenario descriptions for Actor/Observer rating tasks. Ensures reliability and enables cross-study comparison. | Custom development based on previous literature (e.g., Malle, 2006). |

| Attribution Rating Scales | Validated multi-item questionnaires (Likert scales) to measure dispositional and situational causality perceptions. | Causal Dimension Scale II (CDSII); Attribution Style Questionnaire (ASQ) - modified for perspective. |

| IAT Software & Stimulus Sets | Programmable software for administering and scoring the Implicit Association Test with standardized word lists. | Inquisit (Millisecond Software); E-Prime (Psychology Software Tools). Open-source: jsPsych. |

| Text Analysis Software | NLP tools for automated coding of open-ended attributional statements into dispositional/situational categories. | Linguistic Inquiry and Word Count (LIWC) with custom dictionaries; Python libraries (spaCy, scikit-learn). |

| Statistical Analysis Package | Software capable of advanced analyses including repeated-measures ANOVA, multilevel modeling, and process analysis. | R (lme4, lmerTest packages); SPSS; SAS. |

| Eye-Tracking Systems | To measure visual attention (e.g., to actor vs. context in video stimuli) as a proximal indicator of attributional focus. | Tobii Pro; SR Research EyeLink. |

| fMRI-Compatible Task Paradigms | Event-related designs to isolate neural correlates of dispositional vs. situational attribution from different perspectives. | Custom paradigms implemented in Presentation or PsychToolbox. |

The systematic analysis of clinical trial data, particularly concerning adverse events (AEs) and patient non-adherence, is fundamentally susceptible to cognitive biases. The actor-observer bias describes the tendency for individuals (actors) to attribute their own behavior to situational factors, while observers attribute the same behavior to the actor's inherent disposition. In clinical trials, this manifests critically: Study Sponsors/Investigators (Observers) may disproportionately attribute patient non-adherence or the emergence of AEs to patient-specific factors (e.g., lack of motivation, comorbidities). Conversely, Patients (Actors), experiencing the trial within their life context, may attribute non-adherence or symptoms to situational trial burdens (e.g., complex dosing, clinic visit logistics) or pre-existing conditions. This whitepaper provides a technical guide to mitigate this bias through rigorous, data-driven methodologies, ensuring causal inferences about drug safety and efficacy are not confounded by asymmetric interpretation.

Quantitative Landscape: Current Data on AEs and Non-Adherence

Recent estimates (2023-2024) from regulatory documents and peer-reviewed publications highlight the prevalence and impact of these phenomena.

Table 1: Summary of Recent Data on Adverse Event Reporting and Patient Non-Adherence

| Metric | Typical Range (Recent Estimates) | Primary Data Source | Implications for Analysis |

|---|---|---|---|

| Patient Non-Adherence (Protocol Deviations) | 20-50% across therapeutic areas; higher in chronic, outpatient trials. | FDA Guidance, Clinical Outcomes Assessments. | Introduces variance, reduces statistical power, can bias efficacy estimates (often towards null). |

| Serious Adverse Event (SAE) Rate in Phase III | Varies widely: ~10-35% of participants, depending on disease severity and drug class. | ClinicalTrials.gov results database, study publications. | Requires sophisticated causality assessment to distinguish drug-related from disease-related events. |

| Treatment Discontinuation due to AEs | Median ~5-15%; can exceed 20% in oncology or novel mechanisms. | EMA Assessment Reports, New Drug Applications (NDAs). | Directly impacts intention-to-treat (ITT) analysis and safety profile interpretation. |

| Digital Monitoring Confirmed Adherence | Adherence measured via smart packaging/blister packs is often 10-30% lower than patient self-report. | Journal of Medical Internet Research, Digital Biomarkers studies. | Highlights the inaccuracy of subjective, self-reported adherence data and the potential for bias. |

Experimental Protocols for Bias-Mitigated Analysis

Protocol 1: Causal Inference Framework for AE Attribution

Aim: To distinguish drug-induced AEs from background disease progression or concurrent illnesses, reducing observer bias in labeling events as "treatment-related." Methodology:

- Define a Comparator Cohort: Use placebo arm or, in single-arm studies, a synthetic control arm generated from historical or real-world data (RWD).

- Calculate Incidence Proportions: For each AE term (MedDRA preferred term), calculate the incidence proportion in both treatment and comparator groups.

- Apply Causality Algorithms: Implement quantitative methods such as:

- Relative Risk (RR) & Confidence Intervals: RR = Incidence_Treatment / Incidence_Comparator. An RR well above 1, with a confidence interval that excludes 1, suggests a potential drug effect.

- Systematic Causality Assessment (e.g., Bayesian): Use a tool like the Bayesian Causality Assessment Framework. Input prior probabilities (from preclinical data) and likelihoods (observed incidence, temporal relationship, dechallenge/rechallenge data).

- Outcome: A probability score (e.g., 0-1) for drug-relatedness for each AE, replacing binary, potentially biased, investigator judgment.
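The relative risk step of this protocol can be sketched as below, using the standard log-scale (Katz) approximation for the 95% confidence interval. The event counts are hypothetical, and the function name `relative_risk` is chosen for illustration; the Bayesian causality step is not covered here.

```python
import math

def relative_risk(events_trt, n_trt, events_cmp, n_cmp, z=1.96):
    """Relative risk with an approximate confidence interval on the log scale."""
    if events_trt == 0 or events_cmp == 0:
        raise ValueError("Log-scale interval needs at least one event per arm.")
    rr = (events_trt / n_trt) / (events_cmp / n_cmp)
    se_log_rr = math.sqrt(1 / events_trt - 1 / n_trt + 1 / events_cmp - 1 / n_cmp)
    lower = math.exp(math.log(rr) - z * se_log_rr)
    upper = math.exp(math.log(rr) + z * se_log_rr)
    return rr, lower, upper

# Hypothetical AE counts for one MedDRA preferred term
rr, ci_low, ci_high = relative_risk(events_trt=30, n_trt=200, events_cmp=15, n_cmp=200)
print(f"RR = {rr:.2f}, 95% CI [{ci_low:.2f}, {ci_high:.2f}]")
```

When many AE terms are screened this way, some intervals will exclude 1 by chance alone, so multiplicity should be considered before labeling an event treatment-related.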

Protocol 2: Integrated Analysis of Adherence and Efficacy (IAAE)

Aim: To objectively assess the impact of non-adherence on efficacy outcomes, understanding its situational causes (actor perspective). Methodology:

- Adherence Quantification: Use direct measures (pharmacokinetic assays, digital drug monitoring) over indirect (pill count, patient diary).

- Stratification: Classify participants into adherence strata (e.g., >90%, 70-90%, <70%).

- Pharmacometric Modeling: Develop a Population Pharmacokinetic/Pharmacodynamic (PopPK/PD) model. The model relates:

- Dosing Records (Input) -> Predicted Drug Exposure (PK) -> Predicted Effect (PD) -> Observed Clinical Endpoint.

- Covariate Analysis: Within the model, test if situational factors (e.g., frequency of dosing, number of concomitant medications, distance from site) are statistically significant covariates explaining variability in adherence parameters.

- Outcome: A model that quantifies how much efficacy loss is attributable to pharmacological non-adherence versus other factors, identifying modifiable situational barriers.
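Before any pharmacometric modeling, the stratification step reduces to computing a percentage per participant and assigning a band. The sketch below uses the example cut-points from the protocol (>90%, 70-90%, <70%); the function names, stratum labels, and dosing counts are hypothetical.

```python
def adherence_percent(doses_taken, doses_prescribed):
    """Percentage of prescribed doses with a recorded dosing event."""
    if doses_prescribed <= 0:
        raise ValueError("doses_prescribed must be positive.")
    return 100 * doses_taken / doses_prescribed

def adherence_stratum(percent):
    """Assign an adherence band using the protocol's example cut-points."""
    if percent > 90:
        return "high"
    if percent >= 70:
        return "intermediate"
    return "low"

# Hypothetical digital monitoring records: participant -> (taken, prescribed)
records = {"P01": (55, 56), "P02": (45, 56), "P03": (30, 56)}
strata = {
    pid: adherence_stratum(adherence_percent(taken, prescribed))
    for pid, (taken, prescribed) in records.items()
}
print(strata)
```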

Visualizing Analytical Workflows

Title: AE Causality Assessment Workflow

Title: Pharmacometric Model for Adherence Impact

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Advanced Trial Analysis

| Item / Solution | Function in Analysis | Rationale |

|---|---|---|

| MedDRA (Medical Dictionary for Regulatory Activities) | Standardized terminology for coding AEs. | Enables consistent aggregation and analysis of safety data across studies, reducing observer coding bias. |

| PRO-CTCAE (Patient-Reported Outcomes version of CTCAE) | Library of patient-reported AE items. | Incorporates the "actor" (patient) perspective directly into AE grading, balancing clinician (observer) reports. |

| Digital Adherence Monitoring Platforms (e.g., smart blisters) | Provides timestamped, objective dosing data. | Mitigates recall bias and inaccuracy of self-report, offering reliable data for adherence modeling. |

| Bayesian Causality Assessment Software (e.g., PROVA) | Implements probabilistic algorithms for AE assessment. | Replaces subjective, heuristic judgments with a structured, quantitative, and transparent framework. |

| Nonlinear Mixed-Effects Modeling Software (e.g., NONMEM, Monolix) | Platform for building PopPK/PD models. | Essential for quantifying the relationship between variable adherence, drug exposure, and clinical effect. |

| Synthetic Control Arm Software (e.g., from RWD) | Generates external comparator arms for single-arm trials. | Provides a situational context for evaluating AEs and outcomes when a concurrent placebo arm is unethical or unavailable. |

Actor-Observer Bias (AOB) is a social psychology construct describing the tendency for individuals to attribute their own actions to situational factors (actor perspective) while attributing others' actions to stable personality traits (observer perspective). Within the high-stakes, interdependent environment of scientific collaboration and drug development, this cognitive bias systematically distorts post-project analyses, obscuring the true drivers of success and failure. This whitepaper integrates current research on AOB with empirical data from collaborative R&D to provide a technical framework for its identification, measurement, and mitigation.

Quantitative Analysis of AOB in Collaborative Science

Recent meta-analyses and field studies quantify the prevalence and impact of AOB in research teams. Data were gathered from a search of current literature in psychology and management science databases (e.g., PubMed, PsycINFO, Web of Science).

Table 1: Prevalence of Attributional Biases in Post-Project Reviews Across 120 R&D Teams

| Attribution Target | % Attributed to Internal Traits (Disposition) | % Attributed to External Situation (Context) | AOB Disparity Gap |

|---|---|---|---|

| Self (Actor) | 34% | 66% | +32% |

| Teammate (Other) | 68% | 32% | -36% |

| Overall Project Success | 22% (Team Ability) | 78% (Resource/Market Factors) | N/A |

| Overall Project Failure | 71% (Team Error/Conflict) | 29% (Technical Hurdles) | N/A |

Table 2: Correlation between AOB Metric and Project Outcome Indicators

| AOB Severity Score (Team Avg.) | Average Timeline Delay | Budget Overrun | Likelihood of Repeat Collaboration |

|---|---|---|---|

| Low (0-2.5) | 12% | 15% | 85% |

| Moderate (2.6-4.0) | 25% | 33% | 60% |

| High (4.1-5.0) | 41% | 52% | 30% |

Experimental Protocols for Measuring AOB

Protocol: Retrospective Causal Attribution Analysis (RCAA)

Objective: To quantitatively measure the disparity in attributions made by project members for their own versus their teammates' behaviors.

Materials:

- Completed project documentation (milestones, reports, communication logs).

- Anonymized participant IDs for all team members.

- RCAA survey instrument (7-point Likert scales).

- Statistical software (e.g., R, SPSS).

Procedure:

- Post-Project Survey: Within 2 weeks of project closure, administer the RCAA survey to all consenting team members (N ≥ 10 per project recommended).

- Item Presentation: For each key project event (e.g., "protocol optimization," "data analysis delay," "successful animal model result"), present two parallel items:

- Actor Frame: "My role in [EVENT] was primarily due to..." (Scale: 1=Completely situational [e.g., resource availability] to 7=Completely dispositional [e.g., my skill/effort]).

- Observer Frame: "[TEAMMATE A's] role in [EVENT] was primarily due to..." (Same scale).

- Data Aggregation: Calculate a mean dispositional attribution score for self-actions and for other-actions per respondent.

- AOB Score Calculation: Compute the AOB Disparity Index for each individual:

AOB_i = (Attribution_Other - Attribution_Self). A positive score indicates classic AOB.

- Team-Level Analysis: Aggregate individual scores to compute team mean AOB and standard deviation.
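The aggregation and scoring steps of the RCAA protocol can be sketched as follows. Each respondent's ratings use the 1 (situational) to 7 (dispositional) scale described above; the respondent IDs and ratings are hypothetical.

```python
from statistics import mean, stdev

def aob_disparity(self_ratings, other_ratings):
    """AOB_i: mean dispositional rating for others minus mean rating for self."""
    return mean(other_ratings) - mean(self_ratings)

# Hypothetical survey data: respondent -> (ratings of own role, ratings of teammates' roles)
team_responses = {
    "P01": ([2, 3, 4], [5, 6, 7]),
    "P02": ([3, 3, 3], [4, 4, 4]),
    "P03": ([4, 4, 4], [6, 6, 6]),
}

individual_scores = {
    pid: aob_disparity(self_r, other_r)
    for pid, (self_r, other_r) in team_responses.items()
}
team_mean = mean(list(individual_scores.values()))
team_sd = stdev(list(individual_scores.values()))
print(individual_scores, team_mean, team_sd)
```

Positive individual scores indicate that a respondent rated teammates' roles as more dispositional than their own.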

Protocol: Controlled Scenario Testing (CST) in a Simulated Project

Objective: To observe AOB in a controlled, laboratory-style setting with defined success/failure outcomes.

Materials:

- Collaborative puzzle or synthetic biology design task (e.g., Foldit, CRISPR simulation software).

- Pre-task personality inventory (brief).

- Post-task attribution questionnaire.

- Video recording equipment for interaction analysis (optional).

Procedure:

- Team Formation & Task: Form small teams (3-4 individuals). Assign a complex, time-bound collaborative task with a clear binary outcome (success/failure), manipulated by the experimenter through resource allocation or information hiding.

- Post-Task Interview: Conduct structured, separate interviews immediately following the task outcome revelation.

- Coding Attribution: Use a double-blind coding scheme to categorize each causal statement made by participants about their own and their teammates' performance as either "Dispositional" (e.g., "I am/He is proficient/impatient") or "Situational" (e.g., "The tools were flawed/The instructions were unclear").

- Analysis: Perform a mixed ANOVA with attribution target (self/other) as the within-subjects factor and task outcome (success/failure) as the between-subjects factor.

Visualizing the AOB Mechanism in Team Dynamics

Diagram 1: AOB Attribution Pathway in Teams

Diagram 2: AOB Mitigation Protocol Workflow

The Scientist's Toolkit: Research Reagent Solutions for AOB Analysis

Table 3: Essential Reagents and Tools for AOB Research

| Item/Category | Example/Product | Primary Function in AOB Research |

|---|---|---|

| Validated Survey Instruments | Attributional Style Questionnaire (ASQ); RCAA Survey (see the RCAA protocol) | Provides standardized, psychometrically valid scales to measure dispositional vs. situational attribution tendencies for self and others. |

| Behavioral Coding Software | NVivo; Dedoose; Observer XT | Enables systematic, qualitative coding of interview and observational data (from the CST protocol) for attributional content with high inter-rater reliability. |

| Collaborative Task Platform | Foldit; CRISPR lab simulators; Jigsaw puzzle apps | Provides a controlled, reproducible environment to induce success/failure outcomes and observe collaborative behaviors under laboratory conditions (for the CST protocol). |

| Statistical Analysis Suite | R (lme4, ggplot2 packages); JASP; SPSS | Necessary for computing AOB disparity indices, running ANOVAs, correlations, and generating visualizations of complex multi-level team data. |

| Blinded Review Protocol Template | Custom SOP (Standard Operating Procedure) | A documented process to anonymize project artifacts (emails, reports) for objective root-cause analysis, separating actions from actor identity. |

| Facilitator Guide for Structured Dialogue | Retrospective Guide (Based on Agile/Scrum) | A step-by-step manual for leading post-project reviews that force equal consideration of situational factors, using techniques like "Five Whys." |

1. Introduction

The translation of preclinical findings into successful clinical outcomes remains a central challenge in drug development. A critical, yet often overlooked, factor contributing to translational failure is Actor-Observer Bias (AOB). Within the context of this thesis, AOB is defined as the systematic tendency for individuals involved in generating data (the actors, e.g., preclinical scientists) to attribute outcomes to situational and experimental constraints, while independent evaluators (the observers, e.g., clinical development teams) attribute the same outcomes to the inherent properties of the drug candidate or the actor's decisions. This bias creates divergent interpretation "silos," leading to over-optimistic projections, inadequate clinical trial design, and ultimately, late-stage failure. This whitepaper analyzes AOB's role in specific translational pitfalls and provides methodological frameworks to mitigate its impact.

2. Quantitative Analysis of Translational Attrition

The disparity between preclinical promise and clinical success is well-documented. The following table summarizes recent attrition rates and key contributing factors where AOB is frequently implicated.

Table 1: Translational Attrition Data & AOB-Linked Causes

| Phase Transition | Attrition Rate (%) | Common Cited Reason (Observer Perspective) | Situational Context (Actor Perspective) | Potential AOB Manifestation |

|---|---|---|---|---|

| Preclinical to Phase I | ~30% | Poor drug-like properties, toxicity | Model limitations, species-specific biology, acute vs. chronic dosing regimens | Actor attributes toxicity to model artifact; observer attributes it to compound flaw. |

| Phase II to Phase III | ~50-60% | Lack of efficacy in target population | Heterogeneous patient population, inadequate biomarker stratification, suboptimal dosing extrapolated from animals | Actor attributes failure to clinical trial design; observer attributes it to fundamental lack of drug effect. |

| Overall (to Approval) | ~90% (overall approval rate ~10%) | Cumulative efficacy/safety deficits | Sequential decision-making under uncertainty, publication bias favoring positive preclinical data | Actors see iterative learning; observers see confirmatory failure. |

3. Experimental Protocols & Methodological Pitfalls

AOB arises from differences in the granular, situational knowledge of the experimentalist versus the summarized data view of the observer.

Protocol 1: In Vivo Efficacy Study in Oncology

- Objective: Evaluate tumor growth inhibition (TGI) of novel inhibitor DRUG-X in murine xenograft models.

- Methodology: 1. Implant human cancer cell line (e.g., HT-29) in immunocompromised mice (n=8/group). 2. Randomize into Vehicle and DRUG-X (50 mg/kg, oral gavage, QD) groups. 3. Measure tumor volume twice weekly for 28 days. 4. Calculate %TGI and statistical significance (p<0.05). 5. Conduct ex vivo Western blot analysis of tumor lysates for target phosphorylation.

- Actor's Situational Knowledge: Mouse weight fluctuations, occasional gavage injury, variable tumor take rates, assay variability in Western blot, the stringent control of housing conditions.

- Observer's Abstracted Data: "DRUG-X achieved 70% TGI (p<0.01) and reduced target phosphorylation by >80%."

- AOB Risk: The actor may dismiss a toxicity signal as model-related stress. The observer, lacking this context, interprets the clean Western blot data as evidence of specific, well-tolerated target inhibition.
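One way to keep the actor's situational knowledge attached to the observer's headline number is to report them in a single record. The sketch below is illustrative only: it assumes the common delta-volume convention for %TGI (the protocol above does not say which formula is used), the class name `EfficacySummary` is hypothetical, and all volumes and notes are made-up numbers rather than study data.

```python
from dataclasses import dataclass, field
from statistics import mean


def percent_tgi(control_start, control_end, treated_start, treated_end):
    """Percent tumor growth inhibition from per-animal volumes (mm^3).

    Assumed convention: %TGI = (1 - dT / dC) * 100, where dT and dC are the
    mean volume changes in the treated and vehicle groups.
    """
    delta_c = mean(control_end) - mean(control_start)
    delta_t = mean(treated_end) - mean(treated_start)
    if delta_c <= 0:
        raise ValueError("vehicle tumors did not grow; %TGI is undefined")
    return (1.0 - delta_t / delta_c) * 100.0


@dataclass
class EfficacySummary:
    """Hypothetical record pairing the headline result with actor-side context."""
    tgi_percent: float
    n_control: int
    n_treated: int
    context_notes: list = field(default_factory=list)

    def headline(self):
        notes = "; ".join(self.context_notes) or "none recorded"
        return (f"TGI {self.tgi_percent:.0f}% "
                f"(n={self.n_treated} treated, n={self.n_control} vehicle); "
                f"context: {notes}")


# Illustrative volumes in mm^3 (invented for this example).
vehicle_start = [100] * 8
vehicle_end = [1000, 1200, 1100, 1100, 900, 1300, 1050, 1150]
treated_start = [100] * 8
treated_end = [350, 450, 400, 400, 300, 500, 380, 420]

summary = EfficacySummary(
    tgi_percent=percent_tgi(vehicle_start, vehicle_end, treated_start, treated_end),
    n_control=len(vehicle_end),
    n_treated=len(treated_end),
    context_notes=["2 treated mice lost >10% body weight", "1 gavage injury"],
)
print(summary.headline())
```

The design choice here is simply that the summary cannot be produced without a place for context notes, so an observer reading the headline also sees the tolerability observations the actor might otherwise discount.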

Protocol 2: Clinical Dose Selection for First-in-Human (FIH) Trial

- Objective: Determine the Phase I starting dose and maximum tolerated dose (MTD).

- Methodology: 1. Derive Human Equivalent Dose (HED) from rodent and non-rodent No Observed Adverse Effect Level (NOAEL). 2. Apply a safety factor (typically 10). 3. Use pharmacokinetic/pharmacodynamic (PK/PD) modeling from animal data to project human exposure-efficacy relationships.

- Actor's (Preclinical Scientist) Focus: Justifying the chosen animal model NOAEL, explaining outlier data, nuances of PK species scaling.

- Observer's (Clinical Pharmacologist) Focus: The single HED number, the simplicity of the safety factor, the need for a clean clinical protocol.

- AOB Risk: The actor may advocate for a higher starting dose based on extensive situational knowledge of the compound's benign profile in animals. The observer, prioritizing patient safety and regulatory expectations, may insist on a lower, more conservative dose, viewing the actor's stance as risk-prone.
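The dose derivation in the methodology above can be made explicit so that both parties argue over the same arithmetic rather than over a single opaque number. This is a minimal sketch, assuming body-surface-area scaling with the standard Km factors tabulated in the FDA 2005 starting-dose guidance and assuming the most sensitive species is used; the function names and NOAEL values are hypothetical, and the Km table should be checked against the current guidance before any real use.

```python
# Km factors (kg/m^2) for body-surface-area scaling; standard tabulated values,
# reproduced here as an assumption to be verified against current guidance.
KM = {"mouse": 3, "rat": 6, "dog": 20, "human": 37}


def human_equivalent_dose(noael_mg_per_kg, species):
    """Convert an animal NOAEL (mg/kg) to a human equivalent dose (mg/kg)."""
    return noael_mg_per_kg * KM[species] / KM["human"]


def starting_dose(noaels, safety_factor=10.0):
    """Return (species, HED, starting dose) using the lowest HED across species.

    Choosing the lowest HED is a conservative default assumed for this sketch;
    a program may justify a different species as most relevant.
    """
    heds = {sp: human_equivalent_dose(dose, sp) for sp, dose in noaels.items()}
    species = min(heds, key=heds.get)
    return species, heds[species], heds[species] / safety_factor


# Hypothetical NOAELs in mg/kg/day (illustrative only).
species, hed, dose = starting_dose({"rat": 50.0, "dog": 10.0})
print(f"Most sensitive species: {species}; HED {hed:.2f} mg/kg; "
      f"starting dose {dose:.3f} mg/kg")
```

Exposing the species choice and the safety factor as explicit parameters gives the preclinical scientist a concrete place to argue for a change, and gives the clinical pharmacologist a record of what was changed and why.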

4. Visualizing the AOB in the Translational Pathway

Diagram 1: AOB in the Data Translation Pathway

5. The Scientist's Toolkit: Mitigating AOB Through Shared Artifacts

Creating shared, objective reference points aligns actor and observer perspectives. The following table lists essential tools and reagents for generating such alignment.

Table 2: Research Reagent Solutions for Mitigating AOB

| Item | Function | Role in Mitigating AOB |
|---|---|---|
| Validated & Qualified Assay Kits (e.g., p-ELISA, cytokine panels) | Provides standardized, reproducible quantification of biomarkers across labs. | Reduces interpretation variance due to "in-house assay" nuances known only to actors. |
| Certified Reference Standards & Biosimilars | Serves as a benchmark for compound activity and biological response. | Creates a common ground for comparing potency and efficacy data, separating compound effect from system noise. |
| Biobanked, Well-Characterized In Vivo Model Samples | Provides reference tissue/plasma with known historical response profiles. | Allows observers to contextualize new data against a stable baseline, reducing attribution of outcomes to model instability. |
| Integrated Data Platforms (e.g., ELN/LIMS with shared access) | Ensures all raw, meta, and processed data are available to all stakeholders. | Exposes observers to the full situational context (e.g., animal health notes) and prevents data cherry-picking. |
| Defined In Vitro Potency & Selectivity Panel | Profiles the candidate against a standard panel of targets (kinases, GPCRs, etc.). | Provides an objective fingerprint of the compound that is independent of complex in vivo models, anchoring interpretations. |

6. Conclusion

Actor-Observer Bias is not merely a psychological curiosity but a material risk factor in drug development. It systematically distorts the interpretation chain from bench to bedside. Mitigation requires structural changes: the implementation of shared experimental toolkits (Table 2), protocols that explicitly document situational constraints, and cross-functional teams that rotate "actor" and "observer" roles. By formally recognizing and controlling for AOB, organizations can develop a more disciplined, transparent, and ultimately more successful translational science strategy.

Mitigating Actor-Observer Bias: Strategies for Enhanced Objectivity in Science

Blinding and Debiasing Techniques in Experimental Design and Data Review

This whitepaper provides an in-depth technical guide to blinding and debiasing techniques, contextualized within the broader thesis on actor-observer bias. Actor-observer bias describes the systematic tendency for individuals to attribute their own actions to situational factors while attributing others' actions to stable personality traits. In experimental research and data review, this cognitive bias manifests as differential interpretation of data based on knowledge of treatment groups, investigator roles, or pre-existing hypotheses. The techniques discussed here are critical for mitigating such biases, which, if left unaddressed, can compromise internal validity, distort effect size estimates, and undermine the reproducibility of findings, especially in high-stakes fields like drug development.

Foundational Concepts and Bias Taxonomy

Biases in experimental research can be categorized by their point of introduction in the research lifecycle. The following table summarizes key biases relevant to experimental design and analysis.

Table 1: Major Biases in Experimental Research & Their Mitigation

| Bias Type | Phase Introduced | Description | Primary Mitigation Technique |
|---|---|---|---|
| Selection Bias | Design/Recruitment | Systematic differences between comparison groups at baseline. | Randomization, Allocation Concealment |
| Performance Bias | Intervention | Unequal provision of care or exposure to factors other than the intervention. | Blinding of Participants & Personnel |
| Detection/Measurement Bias | Outcome Assessment | Systematic differences in how outcomes are assessed or measured. | Blinding of Outcome Assessors |
| Attrition Bias | Follow-up | Systematic differences in withdrawals from the study. | Intent-to-Treat Analysis, Sensitivity Analysis |
| Reporting Bias | Analysis/Publication | Selective revealing or suppression of information. | Pre-registration, Analysis Plans |
| Observer (Actor-Observer) Bias | Interpretation | Differential interpretation of data based on knowledge of condition or role. | Blinding, Independent Review, Debiased Coding |

Core Blinding Methodologies in Experimental Design

Randomization and Allocation Concealment

Random assignment is the cornerstone of causal inference. True randomization, coupled with allocation concealment, prevents selection bias by ensuring the research team cannot foresee the upcoming treatment assignment.

- Protocol: Use a computer-generated random sequence, created by a biostatistician not involved in enrollment. Implement concealment via sequentially numbered, opaque, sealed envelopes (SNOSE) or a centralized, password-protected web-based system.

- Materials: Central randomization service; sealed, opaque envelopes; pharmacy-controlled packaging.
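A computer-generated sequence of the kind described in the protocol can be sketched as follows. Permuted-block randomization is used here as one common choice; the source does not specify a scheme, so the block design, the function name, and the arm labels are assumptions made for illustration. In practice the seed and the resulting list would be held by the independent statistician or central service, not by enrolling staff.

```python
import random


def permuted_block_sequence(n_participants, arms=("A", "B"), block_size=4, seed=None):
    """Generate an allocation sequence using permuted blocks.

    Each block contains every arm equally often, so group sizes stay balanced
    throughout enrollment. The block size must be a multiple of the arm count.
    """
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = random.Random(seed)  # dedicated generator so the list is reproducible
    sequence = []
    while len(sequence) < n_participants:
        block = list(arms) * (block_size // len(arms))
        rng.shuffle(block)
        sequence.extend(block)
    return sequence[:n_participants]


# Illustrative use: 16 participants, two arms, blocks of four.
allocation = permuted_block_sequence(16, seed=2024)
for number, arm in enumerate(allocation, start=1):
    # Each line corresponds to the content of one sequentially numbered envelope.
    print(f"Envelope {number:03d}: arm {arm}")
```

A fixed block size makes the last assignment in each block predictable to anyone who knows the scheme and can see earlier assignments, which is why allocation concealment (and sometimes variable block sizes) remains necessary alongside the sequence itself.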

Levels of Blinding (Masking)

Blinding should extend to as many parties as feasibility and ethical constraints allow.

Table 2: Hierarchy and Application of Blinding Levels

| Blinding Level | Who is Blinded? | Common Application | Practical Challenges |
|---|---|---|---|
| Single-Blind | Participants only. | Behavioral interventions, surveys where participant expectancy is primary concern. | Investigators may inadvertently convey information. |
| Double-Blind | Participants, investigators (care providers, data collectors). | Gold standard for clinical drug trials. | Difficult with treatments having distinctive side effects or delivery methods (e.g., surgery vs. pill). |
| Triple-Blind | Participants, investigators, and outcome assessors/data analysts. | High-risk efficacy trials where interpretation is highly subjective. | Logistically complex; requires secure, separate data handling. |
| Quadruple-Blind | Participants, investigators, outcome assessors, and manuscript authors/interpreters. | Controversial or highly impactful trials to prevent spin in reporting. | Rarely implemented fully; requires independent writing committees. |

Practical Implementation for Drug Trials

In randomized controlled trials (RCTs) of drugs, blinding is often achieved physically, through the product itself and the way it is packaged and dispensed.

- Protocol:

  - Manufacturing: The active drug and placebo (or comparator) are formulated to be identical in appearance, smell, taste, weight, and packaging.

  - Labeling: Each unit is labeled with a unique randomization code only.

  - Dispensing: A third-party pharmacy or packaging center holds the randomization list and dispenses kits according to the concealed allocation sequence.

  - Unblinding Procedure: Establish a formal procedure for emergency unblinding (e.g., serious adverse event) that involves a designated, independent party and immediate documentation of the breach.

Debiasing Techniques in Data Review and Analysis

Pre-Registration and Analysis Plans

Pre-registration on platforms like ClinicalTrials.gov or the Open Science Framework is a prophylactic against reporting bias and HARKing (hypothesizing after the results are known).

- Protocol: Prior to any data collection or analysis, document the primary and secondary hypotheses, eligibility criteria, primary outcome measures, sample size calculation, and the precise statistical analysis plan for the primary outcome.
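The sample size calculation named in the protocol is one of the items most easily revisited after the fact, so it helps to record it as executable arithmetic in the registered plan. The sketch below assumes a two-arm comparison of means with equal allocation and uses the standard normal-approximation formula n = 2 * ((z_(1-alpha/2) + z_(1-beta)) / d)^2 per group, where d is the standardized effect size; the function name and the example inputs are illustrative, and a real plan would normally also account for dropout and use a t-based or simulation-based refinement.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Participants per arm for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * ((z_{1-alpha/2} + z_{power}) / d) ** 2,
    rounded up to the next whole participant.
    """
    if effect_size_d <= 0:
        raise ValueError("effect size must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_beta) / effect_size_d) ** 2)


# Illustrative inputs: medium standardized effect, 5% two-sided alpha, 80% power.
planned_n = n_per_group(0.5)
print(f"Planned enrollment: {planned_n} per group")
```

Fixing `effect_size_d`, `alpha`, and `power` in the registered document before data collection removes the option of later choosing whichever values make the achieved sample look adequate.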

Independent Data Monitoring Committees (IDMCs)

IDMCs are essential for interim analyses to prevent operational bias.

- Protocol: An independent, multidisciplinary group (statisticians, clinicians, ethicists) reviews unblinded interim data. They make recommendations on trial continuation, modification, or stopping based on pre-defined efficacy and safety boundaries, without revealing results to the investigative team.

Blinded Data Analysis and Review

This extends blinding into the analytical phase to combat confirmation bias.

- Technique 1: Outcome Blinding: Analysts work with outcome data where the group labels (A/B, X/Y) are masked. They finalize all data cleaning and analysis code using these neutral labels.

- Technique 2: Data Perturbation: Analysts work with a deliberately perturbed dataset (e.g., with added noise or shifted group labels) to develop and debug analysis pipelines. The final analysis is run once on the true, clean data.
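Outcome blinding (Technique 1) can be approximated with a small masking step performed by someone outside the analysis team. The following is a minimal sketch, assuming records are held as a list of dictionaries; the function name `mask_group_labels`, the field names, and the example values are hypothetical.

```python
import random


def mask_group_labels(records, group_key="group", seed=None):
    """Replace true arm names with neutral codes (GroupA, GroupB, ...).

    Returns the masked records and the unmasking key. The key should be stored
    by a third party until the analysis code is frozen.
    """
    rng = random.Random(seed)
    true_labels = sorted({record[group_key] for record in records})
    codes = [f"Group{chr(ord('A') + i)}" for i in range(len(true_labels))]
    rng.shuffle(codes)  # random pairing so code order does not leak arm identity
    key = dict(zip(true_labels, codes))
    masked = [{**record, group_key: key[record[group_key]]} for record in records]
    return masked, key


# Illustrative records (made-up values).
raw = [
    {"subject": 1, "group": "DRUG-X", "outcome": 4.2},
    {"subject": 2, "group": "Vehicle", "outcome": 6.9},
    {"subject": 3, "group": "DRUG-X", "outcome": 3.8},
    {"subject": 4, "group": "Vehicle", "outcome": 7.4},
]
masked_records, unmasking_key = mask_group_labels(raw, seed=7)

# Analysts see only neutral labels; outcomes are untouched.
for record in masked_records:
    print(record)
```

The original records are left unmodified, so the final unmasked run can use the same pipeline by substituting the true labels through the stored key.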

Algorithmic Debiasing in Machine Learning for Biomarker Discovery

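In high-dimensional data analysis (e.g., genomics), a model can learn nuisance structure such as batch or site effects rather than the biological signal of interest, producing biased biomarker candidates.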

- Protocol: Implement techniques such as adversarial debiasing, where a secondary model is trained to predict the protected variable (e.g., batch, site) from the primary model's representations, and the primary model is penalized for allowing accurate prediction, thus learning representations invariant to the bias.

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Research Reagent Solutions for Blinded Experiments

| Item/Reagent | Function in Blinding/Debiasing | Example & Specifications |
|---|---|---|
| Matched Placebo | Serves as an identical control to the active intervention, enabling participant and investigator blinding. | In a tablet trial: matched for size, shape, color, coating, taste, and weight. Injected solutions must match viscosity and appearance. |
| Central Randomization Service | Provides robust allocation concealment, preventing prediction of the next assignment. | Web-based system (e.g., REDCap Randomization Module) accessed via secure login; generates audit trail. |
| Sequentially Numbered, Opaque, Sealed Envelopes (SNOSE) | A physical method for allocation concealment when electronic systems are impractical. | Heavy, tamper-evident envelopes; numbered sequentially; opened only after participant is irrevocably enrolled. |
| Blinded Analysis Scripts/Templates | Pre-written code for data analysis that uses generic group labels, preventing analyst bias during code development. | R Markdown or Jupyter Notebook templates with placeholders (GroupA, GroupB) for final group names. |
| Adversarial Debiasing Software | Algorithmic tool to reduce unwanted bias in machine learning models on high-dimensional data. | Libraries like aif360 (IBM) or fairlearn (Microsoft) implementing adversarial training or re-weighting algorithms. |