Advanced GPS Telemetry in Movement Ecology: A Comprehensive Guide to Data Analysis for Precision Research


Abstract

This article provides a detailed, current guide to GPS telemetry data analysis methods for researchers, scientists, and drug development professionals. Covering foundational concepts, core analytical methodologies, practical troubleshooting, and validation techniques, it synthesizes the latest approaches from movement ecology. The content is tailored to enable precise quantification of animal movement patterns, which serves as a critical behavioral biomarker with direct applications in neuroscience, toxicology, and translational biomedical research. The guide emphasizes robust, reproducible workflows to transform raw location data into interpretable biological insights.

GPS Telemetry Fundamentals: From Raw Fixes to Ecological Insight

Within a broader thesis on advancing GPS telemetry data analysis methods in movement ecology, this document details the foundational pipeline. Robust data collection, meticulous management, and rigorous preprocessing are critical for generating reliable inputs for subsequent analytical models (e.g., step selection functions, state-space models). This pipeline directly impacts the validity of inferences regarding animal movement, habitat use, and the effects of anthropogenic change, with methodological parallels applicable to sensor data in clinical and drug development trials.

Data Collection Protocols

GPS Telemetry Device Deployment

Objective: To collect high-resolution spatiotemporal location data from free-ranging animals. Protocol:

- Animal Capture & Handling: Follow institutionally approved Animal Care and Use Committee (IACUC) protocols. Minimize handling time and stress.

- Device Selection: Choose a device weighing less than 5% of the animal's body mass. Match it to the target fix rate, battery life, and environmental durability requirements (see Table 1).

- Attachment: Employ species-appropriate attachment (e.g., collar, harness, glue-on for birds/marine species). Ensure fit allows for normal behavior and growth.

- Programming: Program duty cycle (e.g., fix interval: 5 min - 4 hours) and data transmission schedule (store-on-board vs. satellite upload) using manufacturer software.

- Release & Monitoring: Release animal at capture site. Monitor for initial acclimation via remote data checks.

Field Calibration & Validation Data Collection

Objective: To collect ground-truth data for assessing and correcting GPS error. Protocol:

- Static Test: Deploy 10+ collars at known, geodetically surveyed locations across habitat types (open, closed canopy, rugged terrain) for ≥24 hours.

- Data Logging: Program collars at the study's standard fix rate. Record timestamps and true coordinates for each fix attempt.

- Habitat Covariate Measurement: At each test site, record canopy closure (using spherical densiometer), slope, and aspect for error modeling.

Data Management Framework

Ingestion & Storage Protocol

Objective: To create a secure, versioned, and queryable central repository for raw and derived data. Protocol:

- Raw Data Ingestion: Automate downloads from vendor portals (e.g., Movebank API, Argos) to a designated `./data/raw/` directory. Raw files are treated as immutable.

- Database Schema: Implement a relational database (e.g., PostgreSQL/PostGIS) with the tables `animals`, `deployments`, `gps_fixes_raw`, and `sensor_data`.

- Metadata Log: Maintain a `metadata.csv` file tracking deployment dates, animal biometrics, device specifications, and processing flags for each deployment.

Quality Assurance (QA) Tracking

Objective: To systematically log data issues for reproducible filtering. Protocol:

- Automated Flagging: Scripts flag potential outliers using initial filters (e.g., speed >150 km/h, improbable altitude).

- QA Table: Create a `qa_flags` table linked to `gps_fixes_raw`. Flags include `speed_outlier`, `missing_coords`, and `dop_high` (Dilution of Precision > 10).

- Review: Visually inspect flagged points in GIS software (e.g., QGIS) before final filtering decisions are logged.
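The automated flagging and QA-table steps can be sketched in Python as below. This is a minimal illustration, not the pipeline's actual code. The dict-based record layout is an assumption. The 150 km/h and DOP > 10 thresholds are the ones named above. Flags are only recorded, never acted on, so the review step still decides what is removed.

```python
import math
from datetime import datetime, timedelta

MAX_SPEED_KMH = 150.0  # speed_outlier threshold from the protocol text
MAX_DOP = 10.0         # dop_high threshold from the protocol text


def qa_flags(fixes):
    """Return a dict of boolean QA flags for each fix.

    fixes: list of dicts with keys "time" (datetime), "x" and "y"
    (projected metres, None when missing) and "dop" (None if absent).
    Assumes one animal, sorted by time. Speeds are measured from the
    last fix that had coordinates and was not itself a speed outlier.
    """
    flags, prev = [], None
    for fix in fixes:
        missing = fix["x"] is None or fix["y"] is None
        dop_high = fix["dop"] is not None and fix["dop"] > MAX_DOP
        speed_outlier = False
        if not missing and prev is not None:
            hours = (fix["time"] - prev["time"]).total_seconds() / 3600.0
            km = math.hypot(fix["x"] - prev["x"], fix["y"] - prev["y"]) / 1000.0
            speed_outlier = hours > 0 and km / hours > MAX_SPEED_KMH
        flags.append({"speed_outlier": speed_outlier,
                      "missing_coords": missing,
                      "dop_high": dop_high})
        if not missing and not speed_outlier:
            prev = fix
    return flags


# Example: an hourly track with a spike, a poor-DOP fix and a missing fix.
t0 = datetime(2024, 1, 1)
track = [
    {"time": t0, "x": 0.0, "y": 0.0, "dop": 2.0},
    {"time": t0 + timedelta(hours=1), "x": 1000.0, "y": 0.0, "dop": 3.0},
    {"time": t0 + timedelta(hours=2), "x": 500000.0, "y": 0.0, "dop": 2.5},
    {"time": t0 + timedelta(hours=3), "x": 2000.0, "y": 0.0, "dop": 12.0},
    {"time": t0 + timedelta(hours=4), "x": None, "y": None, "dop": None},
]
flags = qa_flags(track)
```

Once a spike is flagged, later speeds are measured from the last unflagged fix. If the very first fix of a deployment is itself erroneous, every subsequent fix may be flagged. The visual review is there to catch this case.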

Preprocessing Protocols

GPS Error Assessment & Correction

Objective: To quantify and mitigate location error using empirical calibration data. Protocol:

- Calculate Error Metrics: For static test data, compute location error as the Euclidean distance between each observed fix and the known true location.

- Model Error: Fit a Generalized Linear Mixed Model (GLMM) with a Gaussian distribution, `Error ~ Habitat + DOP + (1|Device_ID)`, where Habitat is a categorical factor.

- Apply Correction: For field data, use the model coefficients to generate habitat-specific error distributions. Incorporate these into movement models as observation error, rather than altering the raw coordinates.
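The error calculation can be sketched as follows. It assumes static-test fixes and surveyed benchmarks are already in the same projected coordinate system (metres). The function names are hypothetical. The GLMM itself is left to a mixed-model package (e.g., lme4 in R). This sketch only produces the response variable and simple per-habitat summaries (median and a nearest-rank 95th percentile), which are useful for sanity-checking the fitted model.

```python
import math
import statistics
from collections import defaultdict


def location_errors(fixes, truth):
    """Horizontal error (m) of each static-test fix.

    fixes: list of (device_id, x, y) in projected metres.
    truth: dict mapping device_id -> (x_true, y_true) of its benchmark.
    """
    return [math.hypot(x - truth[d][0], y - truth[d][1]) for d, x, y in fixes]


def error_by_habitat(errors, habitats):
    """Sample size, median and nearest-rank 95th percentile per habitat."""
    groups = defaultdict(list)
    for err, hab in zip(errors, habitats):
        groups[hab].append(err)
    summary = {}
    for hab, vals in groups.items():
        vals.sort()
        k = max(0, math.ceil(0.95 * len(vals)) - 1)  # nearest-rank index
        summary[hab] = {"n": len(vals),
                        "median": statistics.median(vals),
                        "p95": vals[k]}
    return summary


truth = {"A": (0.0, 0.0), "B": (100.0, 100.0)}
fixes = [("A", 3.0, 4.0), ("A", 6.0, 8.0), ("B", 100.0, 110.0), ("B", 100.0, 130.0)]
errors = location_errors(fixes, truth)
summary = error_by_habitat(errors, ["open", "open", "closed", "closed"])
```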

Data Cleaning & Filtering

Objective: To remove biologically implausible locations while preserving natural movement variance. Protocol:

- Speed-Distance-Angle Filter: Implement a recursive algorithm (e.g., `sdafilter` in the argosfilter R package). Remove points implying unrealistic velocities or turning angles, based on the species' maximum speed.

- DOP Filter: Exclude fixes with HDOP (Horizontal DOP) > 10, which indicates poor satellite geometry.

- Manual Anomaly Review: Plot tracks and remove clear anomalies (e.g., a single offshore point for a terrestrial mammal) not caught by the automated filters.
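A simplified version of the recursive idea behind speed-based filters is sketched below. It is not the argosfilter `sdafilter` algorithm. It applies only the speed criterion, with no distance or angle test. The rule of removing the worst spike first and then recomputing speeds is our assumption. `vmax_ms` stands for the species' maximum plausible speed in metres per second.

```python
import math


def speed_filter(fixes, vmax_ms):
    """Iteratively remove spikes implying speeds above vmax_ms (m/s).

    fixes: list of (t_seconds, x, y), sorted by time, projected metres.
    A fix is a candidate when the speeds into AND out of it both exceed
    vmax_ms. The worst candidate is removed, speeds are recomputed, and
    the loop repeats until none remain. End points are never removed.
    """
    pts = list(fixes)

    def speed(a, b):
        dt = b[0] - a[0]
        return math.inf if dt <= 0 else math.hypot(b[1] - a[1], b[2] - a[2]) / dt

    while True:
        worst, worst_score = None, 0.0
        for i in range(1, len(pts) - 1):
            v_in, v_out = speed(pts[i - 1], pts[i]), speed(pts[i], pts[i + 1])
            if v_in > vmax_ms and v_out > vmax_ms and min(v_in, v_out) > worst_score:
                worst, worst_score = i, min(v_in, v_out)
        if worst is None:
            return pts
        del pts[worst]


fixes = [(0, 0.0, 0.0), (3600, 1000.0, 0.0), (7200, 900000.0, 0.0),
         (10800, 3000.0, 0.0), (14400, 4000.0, 0.0)]
kept = speed_filter(fixes, vmax_ms=5.0)
```

Speeds are recomputed after each removal, so a single bad fix does not cause its valid neighbours to be discarded. This is the main reason filters of this kind are applied recursively.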

Habitat Covariate Extraction

Objective: To annotate each GPS fix with environmental predictors for movement analysis. Protocol:

- Raster Stack Preparation: Compile geospatial rasters (resolution ≤ 30 m) in a consistent projected coordinate system (e.g., the appropriate WGS 84 / UTM zone). See Table 2 for common layers.

- Batch Extraction: Using `extract()` in R (raster/terra packages) or `point_query()` in Python (rasterstats), sample raster values at each cleaned fix coordinate.

- Temporal Covariates: Derive `julian_day`, `time_of_day`, and `season` from timestamps.
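Both annotation steps can be sketched without GIS libraries. The sketch assumes a north-up raster with square cells, described by its top-left corner and cell size. `sample_raster` returns the value of the cell that contains each point, as simple point extraction does. The month-to-season mapping (Northern Hemisphere meteorological seasons) is a hypothetical choice; replace it with a study-specific definition. GPS timestamps are usually recorded in UTC, so convert them to local time before deriving `time_of_day`.

```python
from datetime import datetime

# Hypothetical season definition: Northern Hemisphere meteorological seasons.
SEASON_BY_MONTH = {12: "winter", 1: "winter", 2: "winter",
                   3: "spring", 4: "spring", 5: "spring",
                   6: "summer", 7: "summer", 8: "summer",
                   9: "autumn", 10: "autumn", 11: "autumn"}


def temporal_covariates(ts):
    """julian_day (day of year), time_of_day (decimal hours) and season."""
    return {"julian_day": ts.timetuple().tm_yday,
            "time_of_day": ts.hour + ts.minute / 60 + ts.second / 3600,
            "season": SEASON_BY_MONTH[ts.month]}


def sample_raster(grid, origin_x, origin_y, cell_size, x, y):
    """Value of the raster cell containing point (x, y), or None if outside.

    grid: list of rows, with row 0 along the top (northern) edge.
    origin_x, origin_y: projected coordinates of the top-left corner.
    """
    col = int((x - origin_x) // cell_size)
    row = int((origin_y - y) // cell_size)
    if 0 <= row < len(grid) and 0 <= col < len(grid[0]):
        return grid[row][col]
    return None


fix_time = datetime(2024, 3, 1, 6, 30)  # assumed already converted to local time
covariates = temporal_covariates(fix_time)
```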

Table 1: Performance Specifications of Common GPS Telemetry Systems

| Device Type | Typical Mass (g) | Fix Rate Options | Estimated Accuracy (m) | Primary Use Case |
|---|---|---|---|---|
| Satellite GPS (Iridium) | 200-1500 | 5 min - 12 hr | 10-30 (Clear Sky) | Large mammals, remote areas |
| UHF Download GPS | 20-300 | 1 min - 4 hr | 5-20 (Clear Sky) | Medium-sized mammals, accessible terrain |
| GPS-GSM (Cellular) | 50-500 | 5 min - 24 hr | 10-40 (Varies) | Areas with cellular coverage |
| Archival GPS (Data Loggers) | 5-50 | 1 sec - 1 hr | 5-15 (Post-processed) | Birds, marine species, recovery-based studies |

Table 2: Essential Environmental Covariates for Movement Ecology Studies

| Covariate Class | Example Data Sources | Spatial Resolution | Relevance to Movement Analysis |
|---|---|---|---|
| Land Cover/Land Use | Copernicus Global Land Cover, NLCD (US) | 10m - 100m | Habitat selection, resource use |
| Topography | SRTM Digital Elevation Model (DEM) | 30m | Energetic costs, movement corridors |
| Human Footprint | Global Human Footprint Index | 1km | Anthropogenic avoidance/attraction |
| Vegetation Index (NDVI) | MODIS, Landsat | 250m - 30m | Foraging habitat quality, phenology |
| Distance to Features | Derived from OpenStreetMap or government layers | Vector | Proximity to roads, water, settlements |

Visualizations

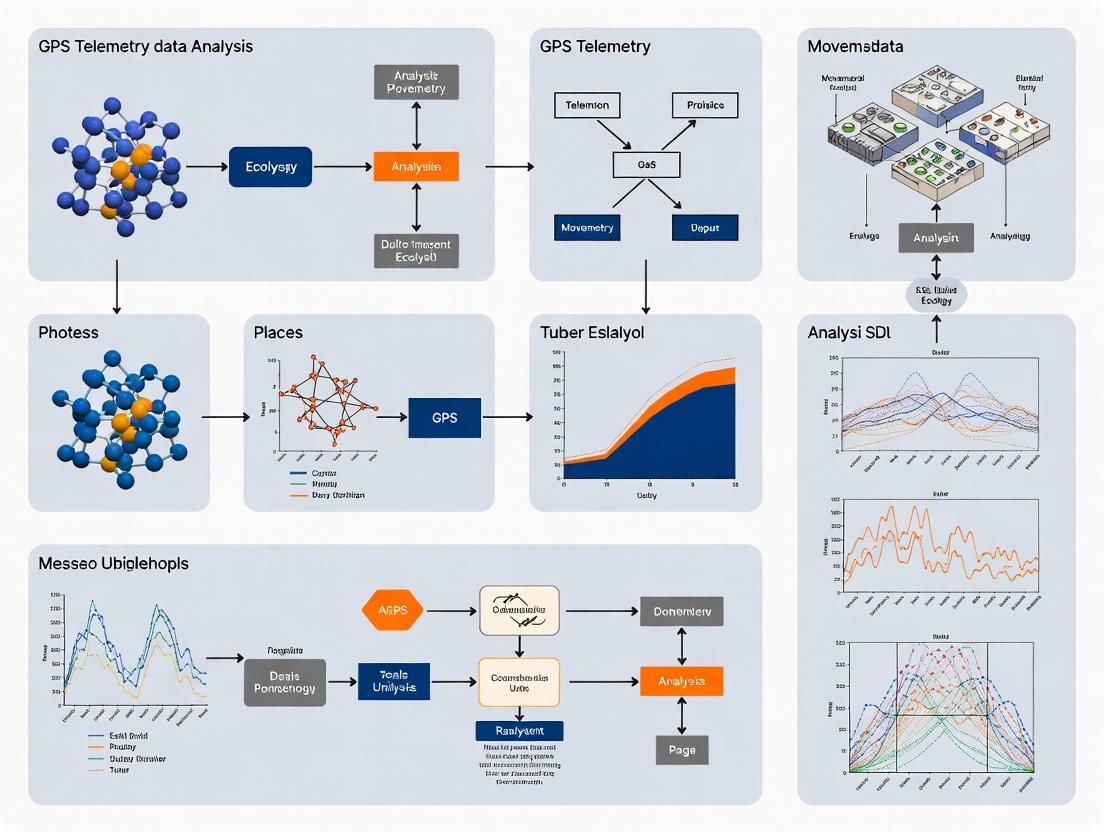

Diagram 1: GPS Telemetry Data Pipeline Overview

Diagram 2: GPS Error Assessment and Modeling Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Digital Tools for the Core Pipeline

| Item/Tool | Category | Function in Pipeline |
|---|---|---|
| GPS Telemetry Collar (e.g., Telonics, Vectronic) | Hardware | Primary data collection device; acquires timestamped location and optional sensor data. |
| Movebank (movebank.org) | Data Repository | Online platform for managing, sharing, and archiving animal tracking data with integrated tools. |
| R Programming Language with tidyverse, amt, ctmm, sf | Software | Primary environment for scripting all data management, preprocessing, and analysis steps. |
| PostgreSQL with PostGIS Extension | Software | Relational database for structured, spatial querying and storage of large tracking datasets. |
| QGIS (qgis.org) | Software | Open-source GIS for visual data inspection, manual track editing, and map creation. |
| Copernicus Global Land Cover | Data | Provides standardized, global raster layers for land cover covariate extraction. |
| Digital Elevation Model (DEM) (e.g., SRTM, ASTER) | Data | Provides topographic covariates (elevation, slope, terrain ruggedness). |
| Spherical Densiometer | Field Tool | Measures canopy closure at calibration sites for habitat-specific error modeling. |

Within the framework of a thesis on GPS telemetry data analysis in movement ecology, the precise quantification of animal movement is foundational. This Application Note details the operational definitions, calculation protocols, and ecological interpretations of three core movement metrics: Step Length, Turning Angle, and Residence Time. These metrics serve as the primary data for analyzing movement paths, identifying behavioral states, and linking movement to ecological processes, with applications extending to disease transmission modeling and environmental risk assessment in drug development.

Movement paths derived from GPS telemetry are discretized into a sequence of relocations at time interval Δt. The triad of Step Length, Turning Angle, and Residence Time transforms raw spatio-temporal coordinates into behavioral descriptors.

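- Step Length (L): The straight-line distance between two consecutive relocations i and i+1. It is a measure of displacement rate and perceptual range. L/Δt is a lower bound on travel speed, because the true path between fixes is at least as long as the straight line.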

- Turning Angle (Φ): The change in direction between two consecutive steps (vectors). Calculated at relocation i, it uses steps (i-1, i) and (i, i+1). It quantifies directionality and tortuosity.

- Residence Time (Rₜ): The cumulative duration an individual spends within a defined area or around a specific location (e.g., a radius around a point). It indicates site fidelity, foraging intensity, or resting behavior.

Table 1: Core Movement Metrics: Definitions, Units, and Ecological Interpretations

| Metric | Mathematical Definition | Units | Typical Range | Primary Ecological Interpretation |
|---|---|---|---|---|
| Step Length (L) | L = √[(xᵢ₊₁ - xᵢ)² + (yᵢ₊₁ - yᵢ)²] | Meters (m) | 0 to ∞ | Movement speed, dispersal, search intensity. Near-zero values indicate resting. |
| Turning Angle (Φ) | Φ = atan2(vᵢ × vᵢ₊₁, vᵢ · vᵢ₊₁) | Radians / Degrees | -π to π (-180° to 180°) | Tortuosity. Φ ≈ 0 indicates directed movement; Φ ≈ ±π indicates reversal; Φ ≈ ±π/2 indicates lateral movement. |
| Residence Time (Rₜ) | Rₜ = Σ Δt for all points within defined area | Seconds (s) / Hours (hr) | 0 to Total Track Duration | Site fidelity, resource use, foraging/resting duration. High Rₜ suggests a biologically significant site. |

Table 2: Common Derived Statistics from Movement Metrics for Path Analysis

| Statistic | Description | Calculated From | Informs Behavioral Mode |
|---|---|---|---|
| Net Squared Displacement | Square of distance from start point over time. | Step Lengths & Turning Angles | Migration vs. sedentariness. |
| Mean Squared Displacement | Average of squared displacements over time lags. | Step Lengths & Turning Angles | Diffusion type (e.g., Brownian vs. Lévy). |
| Path Sinuosity | Tortuosity index combining mean step length with the mean cosine of turning angles; more turning per unit distance yields higher sinuosity. | Joint distribution of L & Φ | Searching strategy (e.g., area-restricted search). |
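Net and mean squared displacement can be computed directly from projected coordinates, as in this sketch. It assumes regularly spaced fixes, so the lag τ is counted in fixes rather than in time units. For Brownian-like diffusion, MSD grows roughly linearly with τ. For straight-line (ballistic) movement it grows with τ². The example below is a straight line.

```python
def net_squared_displacement(xy):
    """NSD of every fix relative to the first fix (units: m^2)."""
    x0, y0 = xy[0]
    return [(x - x0) ** 2 + (y - y0) ** 2 for x, y in xy]


def mean_squared_displacement(xy, max_lag):
    """MSD(tau) for tau = 1..max_lag fixes, averaged over all start points.

    Assumes regularly spaced fixes, so a lag is a number of fixes.
    """
    if max_lag >= len(xy):
        raise ValueError("max_lag must be smaller than the number of fixes")
    msd = {}
    for lag in range(1, max_lag + 1):
        sq = [(xy[i + lag][0] - xy[i][0]) ** 2 + (xy[i + lag][1] - xy[i][1]) ** 2
              for i in range(len(xy) - lag)]
        msd[lag] = sum(sq) / len(sq)
    return msd


line = [(0, 0), (1, 0), (2, 0), (3, 0)]  # ballistic example track
nsd = net_squared_displacement(line)
msd = mean_squared_displacement(line, 3)
```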

Experimental Protocols

Protocol 1: Calculation of Step Length and Turning Angle from GPS Data

Objective: To derive primary movement metrics from cleaned GPS relocation data.

Input: Time-stamped GPS coordinates (x, y, t) in a projected coordinate system (e.g., UTM).

Software: R (with adehabitatLT, move packages) or Python (with pandas, numpy).

Data Cleaning & Preparation:

- Import data. Remove 2D/3D fixes with high dilution of precision (HDOP/PDOP > 5).

- Ensure data is sorted chronologically for each individual.

- Project coordinates to a Cartesian system (e.g., UTM) for accurate Euclidean distance calculation.

Step Length Calculation:

- For each individual, calculate the difference in x and y coordinates between consecutive fixes (i and i+1).

- Apply the Euclidean distance formula: $L_i = \sqrt{(x_{i+1} - x_i)^2 + (y_{i+1} - y_i)^2}$.

- Assign $L_i$ to the timestamp of the starting fix i.

Turning Angle Calculation:

- Create movement vectors: $v_i = (x_i - x_{i-1},\ y_i - y_{i-1})$ and $v_{i+1} = (x_{i+1} - x_i,\ y_{i+1} - y_i)$.

- Calculate the angle using the arctangent of the cross product and dot product: $\Phi_i = \operatorname{atan2}(v_{i,x} v_{i+1,y} - v_{i,y} v_{i+1,x},\ v_{i,x} v_{i+1,x} + v_{i,y} v_{i+1,y})$.

- The result is in radians, in (-π, π]. Convert to degrees if required: $\Phi_{deg} = \Phi_{rad} \times 180/\pi$.

- The first and last fixes of a trajectory have undefined (NA) turning angles.
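The calculations in this protocol can be sketched with the Python standard library (the protocol itself names R or pandas/numpy). The sketch assumes one individual's fixes, already sorted and projected, and follows the indexing above. `steps[i]` belongs to fix i. `turns[i]` uses the steps arriving at and leaving fix i. Positive angles are counter-clockwise (left) turns when x points east and y points north. Turning angles next to a zero-length step are left as None, because a heading is undefined when the animal did not move.

```python
import math


def steps_and_turns(xy):
    """Step lengths L_i and turning angles Phi_i (radians) for one track.

    xy: list of (x, y) projected coordinates sorted by time.
    steps[i] is the step starting at fix i (None for the last fix);
    turns[i] is the angle at fix i (None for the first and last fixes).
    """
    n = len(xy)
    steps = [None] * n
    turns = [None] * n
    for i in range(n - 1):
        steps[i] = math.hypot(xy[i + 1][0] - xy[i][0], xy[i + 1][1] - xy[i][1])
    for i in range(1, n - 1):
        ax, ay = xy[i][0] - xy[i - 1][0], xy[i][1] - xy[i - 1][1]  # v_i
        bx, by = xy[i + 1][0] - xy[i][0], xy[i + 1][1] - xy[i][1]  # v_{i+1}
        if (ax == 0 and ay == 0) or (bx == 0 and by == 0):
            continue  # heading undefined for a zero-length step: keep None
        # atan2(cross product, dot product) gives the signed angle in (-pi, pi]
        turns[i] = math.atan2(ax * by - ay * bx, ax * bx + ay * by)
    return steps, turns


# Three unit steps, each followed by a 90-degree left turn
steps, turns = steps_and_turns([(0, 0), (1, 0), (1, 1), (0, 1)])
```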

Protocol 2: Estimation of Residence Time Using Fixed-Radius Revisitation Analysis

Objective: To quantify the duration an animal spends in a localized area, accounting for recursive movements.

Input: GPS trajectory with calculated Step Lengths and Turning Angles.

Software: R (with the adehabitatHR and recurse packages).

Define Revisitation Radius (r):

- Select a biologically relevant radius (r). This can be based on the animal's body length, perceptual range, or the spatial grain of the resource (e.g., 50m for a large herbivore at a water point).

Calculate Revisitations:

- For each GPS fix i, compute the distance to all other fixes j in the trajectory.

- Identify all fixes j that lie within radius r of fix i.

- A "revisit" to the circle centered on i is counted each time the animal leaves the circle (a fix falls more than r away) and later re-enters it.

Calculate Residence Time:

- For each unique visit to a circle (a bout of consecutive fixes within r of a central point), sum the time intervals (Δt) between those fixes.

- The total Residence Time for a specific location (cluster of circles) is the sum of all visit durations to that location.

- Visualize using recursion maps or plot residence time against revisit frequency.
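The visit and residence-time rules above can be sketched for a single circle as follows. The function name is hypothetical. The sketch follows the protocol literally: a visit's duration is the sum of the intervals between consecutive fixes inside the circle, so a visit consisting of a single fix contributes zero time. Tools such as the recurse package may instead interpolate entry and exit times at the circle boundary, which gives somewhat longer durations.

```python
import math


def visits_and_residence(track, center, r):
    """Number of visits to, and residence time within, a circle of radius r.

    track: list of (t_seconds, x, y), sorted by time, projected metres.
    center: (x, y) of the circle centre (e.g. one of the fixes).
    A visit is a bout of consecutive fixes inside the circle; its duration
    is the sum of intervals between those fixes (no boundary interpolation).
    """
    visits, total = 0, 0.0
    prev_inside, prev_t = False, None
    for t, x, y in track:
        inside = math.hypot(x - center[0], y - center[1]) <= r
        if inside and not prev_inside:
            visits += 1              # entering the circle starts a new visit
        elif inside and prev_inside:
            total += t - prev_t      # still inside: add this interval
        prev_inside, prev_t = inside, t
    return visits, total


track = [(0, 0, 0), (60, 10, 0), (120, 500, 0), (180, 5, 0), (240, 0, 5), (300, 1000, 0)]
result = visits_and_residence(track, center=(0, 0), r=50)
```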

Visualizations

Title: Workflow for Analyzing Key Movement Metrics from GPS Data

Title: Geometric Definition of Step, Angle, and Residence

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Movement Metric Analysis

| Item / Solution | Function in Analysis | Example / Note |
|---|---|---|
| GPS Telemetry Collar | Primary data collection device. Logs time-stamped locations. | Manufacturers: Vectronic, Lotek, Followit. Key specs: Fix rate, battery life, GPS/accelerometer sensors. |
| Movement Analysis Software (R packages) | Data cleaning, calculation, visualization, and statistical modeling of movement metrics. | adehabitatLT: Core trajectory analysis. move: Comprehensive movement analysis. amt: Modern integrated toolkit. recurse: Specifically for residence/revisitation analysis. |
| Projected Coordinate Reference System | Provides a Cartesian plane for accurate calculation of Euclidean distances and angles. | Universal Transverse Mercator (UTM) zone appropriate for the study area. Essential for Step Length. |
| Behavioral State Model | Statistical framework to segment continuous movement metrics into discrete behavioral states (e.g., foraging, traveling). | Hidden Markov Models (HMMs) as implemented in moveHMM or momentuHMM R packages. |
| Spatial Clustering Algorithm | Identifies core areas from GPS point clusters to define regions for Residence Time calculation. | DBSCAN or mixture models. Implemented in dbscan R package or scikit-learn in Python. |

Exploratory Data Analysis (EDA) for Movement Trajectories

This document provides application notes and protocols for conducting Exploratory Data Analysis (EDA) on movement trajectories, a foundational step within a broader thesis on GPS telemetry data analysis in movement ecology. EDA enables researchers and drug development professionals to understand patterns, identify anomalies, and generate hypotheses before formal modeling, ensuring robust downstream analyses.

EDA for movement trajectories involves the visual and statistical examination of raw GPS telemetry data to uncover intrinsic properties. Within movement ecology, this process is critical for assessing data quality, understanding basic movement statistics (e.g., speed, turning angles), and informing subsequent hypothesis-driven analyses like path segmentation or habitat selection models.

Key Quantitative Metrics for Trajectory EDA

The following metrics form the core quantitative summary of any movement trajectory dataset.

Table 1: Core Movement Trajectory Metrics for EDA

| Metric | Formula/Description | Ecological Interpretation |
|---|---|---|
| Step Length | Euclidean distance between consecutive fixes: ∆d = √((x_{i+1} - x_i)² + (y_{i+1} - y_i)²) | Movement speed/scale; related to energy expenditure. |
| Turning Angle | Relative angle between consecutive steps (range: -π to π). | Tortuosity and directionality of movement. |
| Time Interval | ∆t = t_{i+1} - t_i | Temporal grain of observation; critical for rate calculations. |
| Net Displacement | Euclidean distance from start to end point over n steps. | Overall linearity and dispersal from origin. |
| Mean Squared Displacement (MSD) | MSD(τ) = ⟨(r(t+τ) - r(t))²⟩, averaged over all start times t. | Diffusive or exploratory behavior over time lag τ. |
| Residence Time | Time spent within a defined area or patch. | Indicates areas of potential resource use or resting. |

Table 2: Common Data Quality Issues in GPS Telemetry

| Issue | Cause | EDA Diagnostic Method |
|---|---|---|
| Fix Rate Dropout | Satellite obstruction, battery saving. | Histogram of time intervals (∆t). |
| Location Error | GPS dilution of precision (DOP), habitat. | Scatterplot of fixes with error ellipses (if DOP recorded). |
| Spatial Outliers | False fix, extreme error. | Visual inspection on a map; calculating improbable step lengths/speeds. |
| Temporal Gaps | Logger failure, animal out of range. | Timeline plot of fix acquisitions. |
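The interval-histogram and timeline diagnostics can be summarised in text form, as in this sketch. It assumes fix times in seconds for one individual and a known nominal schedule. Intervals are tallied as multiples of that schedule. The rule that an interval longer than twice the schedule counts as a gap (`gap_factor=2.0`) is a hypothetical default. The apparent fix success assumes that the first and last fixes fall on scheduled attempts.

```python
from collections import Counter


def interval_summary(times_s, nominal_s, gap_factor=2.0):
    """Tabulate fix intervals, list temporal gaps and estimate fix success.

    times_s: sorted fix times (seconds) for one individual, at least two.
    Returns (counts of intervals in multiples of nominal_s,
             list of (gap start time, gap length),
             apparent proportion of scheduled fixes obtained).
    """
    dts = [b - a for a, b in zip(times_s, times_s[1:])]
    counts = Counter(round(dt / nominal_s) for dt in dts)  # text "histogram"
    gaps = [(times_s[i], dt) for i, dt in enumerate(dts) if dt > gap_factor * nominal_s]
    n_expected = round((times_s[-1] - times_s[0]) / nominal_s) + 1
    return counts, gaps, len(times_s) / n_expected


counts, gaps, success = interval_summary([0, 3600, 7200, 18000, 21600], nominal_s=3600)
```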

Experimental Protocols for Trajectory EDA

Protocol 3.1: Basic Trajectory Visualization and Cleaning

Objective: To visualize raw movement tracks and identify obvious errors or patterns.

Materials: GPS telemetry data (CSV format with columns: ID, DateTime, X, Y, DOP).

Software: R (ggplot2, sf), Python (matplotlib, pandas, tracktable), or GIS software (QGIS).

Procedure:

- Data Import: Load the trajectory data, ensuring `DateTime` is parsed correctly.

- Map Plot: Create a simple line plot of all tracks, color-coded by individual ID.

- Time-Series Plot: Plot the `X` and `Y` coordinates over time to detect temporal gaps or drift.

- Error Visualization: If Dilution of Precision (DOP) data exist, plot fixes with point size or color proportional to the DOP value to highlight high-error regions.

- Flag Outliers: Calculate step lengths and speeds. Flag steps where speed exceeds a biologically plausible threshold (e.g., > 120 km/h for a terrestrial mammal).

- Document: Record the number and index of flagged points for removal or correction in subsequent analyses.

Protocol 3.2: Movement Metric Distribution Analysis

Objective: To characterize the statistical distribution of fundamental movement parameters. Materials: Cleaned trajectory data from Protocol 3.1.

Procedure:

- Calculate Metrics: For each individual, compute step lengths and turning angles for all sequential fixes.

- Summary Statistics: Generate a table (mean, median, sd, min, max) of step lengths per individual.

- Distribution Plots: Create combined histograms and kernel density estimates for:

- Log-transformed step lengths (raw step lengths are typically right-skewed, e.g., approximately log-normal or gamma-distributed).

- Turning angles (often von Mises or uniformly distributed).

- Temporal Autocorrelation: Plot the autocorrelation function (ACF) for step lengths and turning angles at various time lags to assess dependency structure.

- Interpretation: Note modality in distributions. A bimodal step length distribution may indicate a mixed movement process (e.g., encamped vs. exploratory).
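The summary-statistics and distribution steps can be started with the standard library, as sketched below for one individual. The circular summary (mean cosine and mean resultant length of turning angles) is our addition for characterising the angle distribution. A mean cosine near 1 indicates directional persistence, values near 0 indicate little net persistence, and negative values indicate frequent reversals. A mean resultant length near 0 means the angles are widely spread.

```python
import math
import statistics


def step_summary(steps):
    """Mean, median, sample sd, min and max of one individual's step lengths."""
    return {"mean": statistics.mean(steps), "median": statistics.median(steps),
            "sd": statistics.stdev(steps), "min": min(steps), "max": max(steps)}


def log_steps(steps):
    """Natural log of step lengths for plotting; zero-length steps are dropped."""
    return [math.log(s) for s in steps if s > 0]


def circular_summary(turns):
    """Mean cosine and mean resultant length of turning angles (radians)."""
    c = sum(math.cos(a) for a in turns) / len(turns)
    s = sum(math.sin(a) for a in turns) / len(turns)
    return {"mean_cos": c, "resultant_length": math.hypot(c, s)}


summary = step_summary([10.0, 20.0, 30.0, 40.0])
angles = circular_summary([0.0, math.pi])  # one straight step, one reversal
```

Zero-length steps are dropped before the log transform because log(0) is undefined. Record how many were dropped, since a large share of zero steps is itself informative (e.g., resting).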

Protocol 3.3: Behavioral Phase Identification via SSM

Objective: To use a State-Space Model (SSM) as an EDA tool to infer latent behavioral states. Materials: Cleaned, regularized trajectory data.

Procedure:

- Data Regularization: Interpolate the trajectory to constant time intervals using a continuous-time movement model (e.g., `crawl` in R).

- Model Specification: Fit a simple hierarchical or correlated random walk (CRW) with discrete states (e.g., 2-state: "Restricted" vs. "Directed").

- State-Dependent Parameters: Assume step length and turning angle distributions differ by state.

- Model Fitting: Estimate parameters by maximum likelihood (e.g., numerical maximization of the HMM likelihood in `momentuHMM` in R; the Expectation-Maximization (EM) algorithm is an alternative) or by Bayesian Markov Chain Monte Carlo (MCMC) methods (e.g., `pymc` in Python).

- State Decoding: Use the Viterbi algorithm to assign the most probable behavioral state to each observation.

- Visual Validation: Map the trajectory with segments colored by the inferred state. Overlay on environmental covariates (e.g., habitat type) to assess face validity.
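The decoding step can be illustrated with a self-contained Viterbi implementation for a 2-state model. To keep the sketch short, it assumes the state-dependent parameters are already known (in practice they come from the fitting step). It also models step length only, with one gamma distribution per state. Turning angles, which a full model would include (e.g., with a von Mises distribution), are omitted. All parameter values are hypothetical, and step lengths must be strictly positive.

```python
import math


def gamma_logpdf(x, shape, scale):
    """Log density of a gamma(shape, scale) distribution at x > 0."""
    return ((shape - 1) * math.log(x) - x / scale
            - math.lgamma(shape) - shape * math.log(scale))


def viterbi(steps, params, trans, init):
    """Most probable state sequence for a gamma-emission hidden Markov model.

    steps: positive step lengths, one per regularized observation.
    params: (shape, scale) of the step-length gamma for each state.
    trans: trans[i][j] = P(state j at t+1 | state i at t).
    init: initial state probabilities.
    """
    states = range(len(params))
    log_t = [[math.log(p) for p in row] for row in trans]
    # delta[j]: log-probability of the best path ending in state j
    delta = [math.log(init[j]) + gamma_logpdf(steps[0], *params[j]) for j in states]
    backpointers = []
    for x in steps[1:]:
        ptr, new_delta = [], []
        for j in states:
            best = max(states, key=lambda i: delta[i] + log_t[i][j])
            ptr.append(best)
            new_delta.append(delta[best] + log_t[best][j] + gamma_logpdf(x, *params[j]))
        backpointers.append(ptr)
        delta = new_delta
    # Trace back from the most probable final state
    state = max(states, key=lambda j: delta[j])
    path = [state]
    for ptr in reversed(backpointers):
        state = ptr[state]
        path.append(state)
    return path[::-1]


# Hypothetical: state 0 "Restricted" (mean step 20 m), state 1 "Directed" (mean 400 m)
params = [(2.0, 10.0), (2.0, 200.0)]
trans = [[0.9, 0.1], [0.1, 0.9]]
path = viterbi([15, 25, 10, 500, 600, 450, 20], params, trans, [0.5, 0.5])
```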

Protocol 3.4: Interactive Spatial-Temporal EDA

Objective: To dynamically explore the relationship between movement, space, and time. Materials: Cleaned trajectory data with inferred states (from Protocol 3.3).

Procedure:

- Build Interactive Map: Use a library such as `leaflet` (R; `folium` provides Leaflet maps in Python) or Kepler.gl to create a web-based map.

- Add Layers: Include:

- Animated path of movement (points connected by lines, progressing through time).

- Base layers (satellite imagery, habitat maps).

- Interactive points showing metadata (time, state, speed) on click.

- Linked Visualizations: Implement linked brushing between the map and time-series plots of speed or state probability.

- Exploration: Interactively select a segment on the time-series plot to highlight it on the map, and vice-versa, to investigate unusual events.

Visualization of EDA Workflows and Relationships

EDA for Movement Trajectories Workflow

State-Space Model for Behavioral Phase ID

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Movement Trajectory EDA

| Tool / "Reagent" | Function in EDA | Example/Note |
|---|---|---|
| GPS Telemetry Collars | Primary data collection device. | Models from vendors like Vectronic-Aerospace or Lotek, providing time-stamped location, DOP, and activity data. |
| Movement Data Toolkit (R) | Core software libraries for calculation and visualization. | amt (animal movement tools), trajr, adehabitatLT, move for trajectory management and metric computation. |
| State-Space Modeling Package | For inferring latent behavioral states. | momentuHMM or bayesmove in R; provides frameworks for fitting hierarchical multi-state models. |
| Spatial Analysis Library | For GIS operations and spatial statistics. | sf (R) or geopandas (Python) for handling spatial data; raster for environmental data extraction. |
| Interactive Visualization Platform | For dynamic, exploratory data visualization. | leaflet (R; folium in Python), shiny (R), or kepler.gl for creating linked, web-based visualizations. |
| Biologically Informed Thresholds | "Reagent" for data cleaning. | Pre-defined maximum realistic speed (e.g., species-specific velocity limits) to filter spatial outliers. |
| Regularization Algorithm | To interpolate data to constant time intervals. | Continuous-time correlated random walk models (e.g., crawl package) account for measurement error and irregular timing. |

Within the broader thesis on advancing GPS telemetry data analysis in movement ecology, three interconnected data properties fundamentally constrain inference and model validity: the rate of successful location fixes (Fix Rate), the spatial error of those fixes (Accuracy), and the statistical non-independence of sequential locations (Temporal Autocorrelation). This application note details protocols for quantifying these parameters and mitigating their confounding effects in ecological analysis, with relevance to environmental exposure assessments in pharmaceutical development.

Table 1: Typical Performance of Common GPS Telemetry Technologies

| Technology / Deployment | Typical Fix Rate (%) | Horizontal Accuracy (m, typical range) | Recommended Minimum Fix Interval | Primary Source of Error |
|---|---|---|---|---|
| VHF Triangulation | 95-98* | 100 - 500 | 30 min | Bearing error, topography |
| Conventional GPS Collar (2D) | 70-90 | 10 - 30 | 1 hour | Satellite geometry, canopy |
| High-Sensitivity GPS (3D) | 85-99 | 5 - 20 | 15 min | Multipath, atmospheric delay |
| GPS/GLONASS Dual Constellation | 90-99.5 | 3 - 10 | 5 min | Multipath, receiver noise |
| Assisted-GPS (A-GPS) | >95 | 3 - 15 | 1 min | Urban canyon effects |
| Differential GPS (DGPS) | 90-98 | 0.5 - 5 | 1 sec | Baseline distance |

*Fix rate for VHF refers to successful triangulation, not a GPS fix.

Table 2: Impact of Environmental Covariates on Fix Rate & Accuracy

| Covariate | Effect on Fix Rate (Δ%) | Effect on Accuracy (Δm) | Mitigation Strategy |
|---|---|---|---|
| Dense Canopy (canopy closure > 70%) | -15 to -40 | +5 to +25 | Elevated antenna, dual-frequency |
| Rugged Terrain | -5 to -20 | +10 to +50 | 3D fixes, mask angle adjustment |
| Urban Canyon | -10 to -30 | +20 to +100 | A-GPS, outlier filtering |
| Antenna Proximity to Animal's Body | -5 to -15 | +1 to +10 | Careful collar positioning |
| Low Battery Voltage | -20 to -60 | +10 to +100 | Voltage-regulated circuits |

Experimental Protocols

Protocol 1: Empirical Assessment of Fix Rate and Accuracy

Objective: To quantify true field-based fix rate and location accuracy for a GPS telemetry system under study-specific conditions. Materials: See "Scientist's Toolkit" below. Procedure:

- Stationary Test Deployment: Deploy 5-10 identical GPS tags at fixed, geodetically surveyed benchmark locations representative of the study habitat (e.g., under canopy, open sky).

- Scheduled Fix Attempts: Program tags to attempt fixes at the intended study fix interval (e.g., every 2 hours) for a minimum of 7 days.

- Data Collection: Retrieve tags and download data. Record all successful fixes, failed fix attempts, and Dilution of Precision (DOP) values.

- Accuracy Calculation: For each successful fix, calculate the horizontal error as the Euclidean distance between the fix coordinates and the surveyed benchmark coordinates.

- Fix Rate Calculation: Fix Rate (%) = (Number of Successful Fixes / Total Fix Attempts) × 100.

- Covariate Logging: Concurrently log environmental covariates for each benchmark, such as canopy cover (via spherical densiometer) and a terrain index derived from a digital elevation model.

- Analysis: Fit generalized linear mixed models (GLMMs) with tag ID as a random effect to predict fix success (binomial) and accuracy error (Gamma) as functions of DOP and environmental covariates.
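The fix-rate formula can be applied per tag as in this sketch. It assumes that every scheduled attempt is logged, or can be inferred from the schedule, as a success or a failure. The Wilson score interval is our addition, not part of the protocol. It is one reasonable way to express uncertainty when a tag made relatively few attempts.

```python
import math
from collections import defaultdict


def fix_rate_by_tag(attempts, z=1.96):
    """Fix rate (%) per tag with an approximate 95% Wilson score interval.

    attempts: iterable of (tag_id, success) pairs, one per scheduled fix
    attempt, where success is True when a fix was recorded.
    """
    tally = defaultdict(lambda: [0, 0])  # tag -> [successes, attempts]
    for tag, ok in attempts:
        tally[tag][0] += int(ok)
        tally[tag][1] += 1
    out = {}
    for tag, (s, n) in tally.items():
        p = s / n
        denom = 1 + z * z / n
        centre = (p + z * z / (2 * n)) / denom
        half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
        out[tag] = {"fix_rate_pct": 100.0 * p,
                    "ci_pct": (100.0 * (centre - half), 100.0 * (centre + half))}
    return out


attempts = [("T1", True), ("T1", False), ("T1", True), ("T1", True),
            ("T2", False), ("T2", True)]
rates = fix_rate_by_tag(attempts)
```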

Protocol 2: Quantifying and Accounting for Temporal Autocorrelation

Objective: To measure the scale of autocorrelation in movement data and apply appropriate statistical corrections. Materials: Movement track data, statistical software (R, Python). Procedure:

- Data Preparation: Use a cleaned movement track (after applying the accuracy filters from Protocol 1). Calculate step lengths (distances) and turning angles between consecutive fixes at the sampling interval t.

- Autocorrelation Function (ACF) Calculation:

- For each animal, compute the serial autocorrelation function for step lengths and turning angles at increasing time lags (e.g., t, 2t, 3t, ...).

- Use robust correlation metrics (e.g., Spearman's ρ for step lengths, circular correlation for angles).

- Determine Autocorrelation Range: Identify the time lag at which the autocorrelation falls below a critical threshold (e.g., not significantly different from zero, or ρ < 0.1). This defines the "time to independence."

- Apply Statistical Corrections:

- Sub-sampling: Re-sample the track at intervals equal to or greater than the time to independence.

- Model Integration: Use autoregressive integrated moving average (ARIMA) structures in linear models.

- Use of Random Walks: In state-space or Bayesian hierarchical models, explicitly model the movement process as a correlated random walk.

- Validation: Compare the parameter estimates (e.g., habitat selection coefficients) from naive and autocorrelation-corrected models. Report changes in effect sizes and standard errors.
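The ACF, time-to-independence, and sub-sampling steps can be sketched as follows. `acf` is the usual sample autocorrelation estimator for a regularly sampled series. Applying it to `ranks(...)` of step lengths gives a Spearman-type version. Turning angles need circular methods and are not covered here. The 0.1 threshold mirrors the example above. Lags are returned in sampling intervals, so multiply by t to obtain a time.

```python
def ranks(values):
    """Average ranks (1-based); acf(ranks(x)) is a Spearman-type ACF."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    r = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1  # extend the run of tied values
        for k in range(i, j + 1):
            r[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return r


def acf(series, max_lag):
    """Sample autocorrelation at lags 1..max_lag (series must not be constant)."""
    n = len(series)
    mean = sum(series) / n
    dev = [v - mean for v in series]
    var = sum(d * d for d in dev)
    return [sum(dev[i] * dev[i + k] for i in range(n - k)) / var
            for k in range(1, max_lag + 1)]


def time_to_independence(rho, threshold=0.1):
    """First lag (in sampling intervals) with |rho| below threshold, else None."""
    for k, r in enumerate(rho, start=1):
        if abs(r) < threshold:
            return k
    return None


def subsample(track, every):
    """Keep every `every`-th fix, starting with the first."""
    return track[::every]
```

For example, if `time_to_independence(acf(ranks(step_lengths), 24))` returns 3 for hourly fixes, `subsample(track, 3)` keeps one fix every 3 hours.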

Mandatory Visualizations

Diagram Title: GPS Fix Acquisition and Validation Workflow

Diagram Title: Autocorrelation Consequences and Solutions

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for GPS Telemetry Data Quality Assessment

| Item | Function | Example/Notes |
|---|---|---|
| Geodetic Survey-Grade GPS | Provides high-accuracy ground truth coordinates for accuracy validation. | Trimble R12, Spectra SP85. Accuracy: 8 mm horizontal. |
| Spherical Densiometer | Quantifies percent canopy cover at test locations, a key covariate for fix success. | Model-C convex. Take readings at tag height in four cardinal directions. |
| Programmable Test Tags | Identical to field-deployed tags, used for controlled stationary and mobile tests. | Lotek, Vectronic, or Telonics models matching study tags. |
| Voltage Regulator & Battery Simulator | Tests tag performance across a range of input voltages to establish battery life thresholds. | Keysight N6705B DC Power Analyzer. |
| Reference Clock (GNSS Disciplined Oscillator) | Synchronizes all data loggers and tags to absolute time, crucial for temporal analysis. | Microchip 8045C. Accuracy: ±20 ns. |
| RF Shielded Enclosure | Tests for self-interference or effects of animal body proximity on antenna performance. | Faraday cage or bag. |
| Movement Analysis Software Suite | Processes tracks, calculates autocorrelation, fits movement models. | amt & ctmm packages in R; Movebank web platform. |
| Differential Correction Service | Post-processes GPS data to improve accuracy, especially for stationary tests. | Canadian Spatial Reference System (CSRS-PPP), NOAA OPUS. |

Application Notes

The Movement Ecology Paradigm (MEP) provides a unifying theoretical framework for studying organismal movement. It integrates four core components: the Internal State (Why), the Motion Capacity (How), the Navigation Capacity (When and Where), and the External Factors affecting movement. Within the context of a thesis on GPS telemetry data analysis, the MEP transforms raw location data into ecological insight by framing questions around these components.

Why Adopt the Paradigm? The MEP moves beyond descriptive tracking to mechanistic and functional understanding. It enables researchers to link discrete movement steps (from GPS data) to underlying drivers (e.g., hunger, reproduction), biomechanical constraints, and cognitive navigation strategies. This is critical for predictive modeling in conservation, disease ecology, and resource management.

Key Quantitative Metrics: The analysis of GPS telemetry data under the MEP focuses on deriving metrics that speak to each component.

Table 1: Core Movement Metrics Derived from GPS Telemetry Data

| MEP Component | Example Metrics | Ecological Interpretation |
|---|---|---|
| Internal State (Why) | Residence Time, Recursion Frequency, Diel Activity Pattern | Indicates site fidelity, foraging motivation, or predation risk avoidance. |
| Motion Capacity (How) | Step Length Distribution, Net Squared Displacement, Turning Angle Correlation | Reveals movement mode (e.g., Brownian vs. Lévy walk), energy expenditure, and mobility constraints. |
| Navigation Capacity (When & Where) | First-Passage Time, Path Efficiency (Net/Total Distance), Habitat Selection Indices (RSF) | Measures search efficiency, directional persistence, and cognitive mapping ability. |
| External Factors | Resource-Landscape Covariance, Distance to Human Infrastructure | Quantifies the effect of landscape heterogeneity and anthropogenic disturbance on movement decisions. |

Experimental Protocols

Protocol 1: Integrated Step Selection Analysis (iSSA) Objective: To simultaneously estimate the effects of internal state, motion capacity, navigation capacity, and external landscape factors on movement choices. Methodology:

- Data Preparation: From cleaned GPS tracks (e.g., 1 fix/hour), define movement steps (consecutive relocations) and turns (changes in direction).

- Generate Control Steps: For each observed step, generate 10-20 random "control" steps originating from the same start point. Control step lengths are drawn from a distribution fitted to the observed step lengths (e.g., gamma, respecting Motion Capacity), and turning angles from a fitted von Mises distribution or, in the simplest case, a uniform distribution.

- Attribute Extraction: For the end point of each observed and control step, extract relevant covariates:

  - Internal State Proxy: Time since last kill, reproductive status from field observations.

  - Navigation Cues: Solar azimuth, lunar phase.

  - External Factors: Habitat type (from GIS), slope, NDVI, distance to road.

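- Statistical Modeling: Fit a conditional logistic regression model where the outcome is the selection (1 for observed step, 0 for control steps) within each step stratum. Including step length, log(step length), and cos(turning angle) as additional covariates lets the same model re-estimate the movement parameters, which is what makes the analysis "integrated". The remaining coefficients reveal how covariates guide Where and When to move, conditional on the intrinsic Motion Capacity.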
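A minimal sketch of the control-step generation, written in Python for illustration (in R, the amt package's random_steps() serves this purpose). It assumes a projected track in metres, fits a gamma step-length distribution by the method of moments (an assumption; maximum likelihood is also common), and draws turning angles either uniformly or from a von Mises distribution with a user-supplied kappa. `control_steps` and `fit_gamma_moments` are hypothetical helper names.

```python
import math
import random


def fit_gamma_moments(lengths):
    """Method-of-moments gamma fit: shape = mean^2 / var, scale = var / mean."""
    n = len(lengths)
    mean = sum(lengths) / n
    var = sum((l - mean) ** 2 for l in lengths) / (n - 1)
    return mean ** 2 / var, var / mean


def control_steps(track, n_controls=10, kappa=None, seed=1):
    """Return (stratum, case, end_point) rows: the observed step (case = 1) plus
    n_controls control steps (case = 0) per stratum, all sharing the observed
    step's start point. Strata start at the second step because the first
    step has no previous heading to turn from."""
    rng = random.Random(seed)
    lengths = [math.dist(track[i], track[i + 1]) for i in range(len(track) - 1)]
    shape, scale = fit_gamma_moments(lengths)
    rows = []
    for i in range(1, len(track) - 1):
        start = track[i]
        prev_heading = math.atan2(start[1] - track[i - 1][1], start[0] - track[i - 1][0])
        rows.append((i, 1, track[i + 1]))
        for _ in range(n_controls):
            length = rng.gammavariate(shape, scale)
            if kappa is None:
                turn = rng.uniform(-math.pi, math.pi)   # uniform turning angles
            else:
                turn = rng.vonmisesvariate(0.0, kappa)  # von Mises centred on 0
            h = prev_heading + turn
            rows.append((i, 0, (start[0] + length * math.cos(h),
                                start[1] + length * math.sin(h))))
    return rows


# Usage with a short, made-up projected track (metres).
track = [(0, 0), (100, 0), (150, 80), (300, 120), (320, 400)]
for row in control_steps(track, n_controls=2)[:3]:
    print(row)
```

Each stratum then receives covariate values at its end points before the conditional logistic regression is fitted.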

Protocol 2: Behavioral State Segmentation via Hidden Markov Models (HMM) Objective: To infer the latent Internal State ("Why") driving movement phases from GPS track metrics. Methodology:

- Calculate Step Metrics: For each step in the track, compute step length and turning angle (relative direction).

- Define HMM Structure: Specify a model with 2-4 discrete behavioral states (e.g., "Resting," "Foraging," "Transit"). Assume the observed step metrics are generated by state-dependent probability distributions (e.g., Gamma for step length, von Mises for turning angle).

- Model Fitting: Estimate, by maximum likelihood, a) the transition probability matrix (probabilities of switching states) and b) the parameters of the state-dependent distributions. The likelihood is evaluated with the forward algorithm and maximized numerically, as in the R package momentuHMM.

- State Decoding: Apply the Viterbi algorithm to the fitted model to assign the most probable behavioral state to each observation in the track. This creates a time series of "Why" for the animal.
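To make the decoding step concrete, here is a small log-space Viterbi sketch in Python. For brevity it uses step lengths only, with gamma state-dependent distributions given as (shape, scale); packages such as momentuHMM also include turning angles and are what you would use in practice. The function names and example parameters are illustrative assumptions.

```python
import math


def gamma_logpdf(x, shape, scale):
    """Log density of a gamma distribution (shape/scale parameterization)."""
    return ((shape - 1) * math.log(x) - x / scale
            - math.lgamma(shape) - shape * math.log(scale))


def viterbi(steps, params, trans, init):
    """Most probable state sequence for a series of step lengths.

    params[s] = (shape, scale) of state s; trans[i][j] = P(j at t+1 | i at t);
    init = initial state probabilities. Everything is kept on the log scale.
    """
    k = len(params)
    log_t = [[math.log(p) for p in row] for row in trans]
    score = [math.log(init[s]) + gamma_logpdf(steps[0], *params[s]) for s in range(k)]
    back = []  # back[t][j]: best predecessor of state j at time t + 1
    for x in steps[1:]:
        ptr, new = [], []
        for j in range(k):
            best = max(range(k), key=lambda i: score[i] + log_t[i][j])
            ptr.append(best)
            new.append(score[best] + log_t[best][j] + gamma_logpdf(x, *params[j]))
        back.append(ptr)
        score = new
    state = max(range(k), key=lambda s: score[s])
    path = [state]
    for ptr in reversed(back):  # trace the best path backwards
        state = ptr[state]
        path.append(state)
    return path[::-1]


# Usage: state 0 ~ short "resting" steps, state 1 ~ long "transit" steps (km).
steps = [0.05, 0.04, 0.06, 2.0, 2.5, 1.8, 0.05]
params = [(1.2, 0.05), (5.0, 0.5)]
trans = [[0.9, 0.1], [0.1, 0.9]]
print(viterbi(steps, params, trans, [0.5, 0.5]))  # [0, 0, 0, 1, 1, 1, 0]
```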

Diagram Title: HMM Workflow for Behavioral State Segmentation

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for GPS Telemetry Analysis

| Item / Solution | Function / Purpose |
|---|---|
| GPS Telemetry Collars | Primary data collection device. Provides timestamped geolocation, often with auxiliary sensors (activity, temperature). |
| Movement Analysis Software (R amt package) | Comprehensive toolkit for track creation, step derivation, randomization (iSSA), and home range estimation. |
| State-Space Modeling Platform (R momentuHMM) | Specialized for fitting HMMs and correlated random walk models to movement data, accounting for measurement error. |
| Resource Selection Function (RSF) Raster Stack | A multi-layer GIS dataset (e.g., habitat, elevation, human footprint) used as spatial covariates in step selection analyses. |
| High-Performance Computing (HPC) Cluster | Enables computationally intensive steps like generating millions of control steps for iSSA or Bayesian MCMC fitting of complex models. |

Diagram Title: Movement Ecology Paradigm Links Data to Analysis

Core Analytical Toolbox: From Home Ranges to Path Segmentation

Application Notes

Home range estimation is a cornerstone of movement ecology, critical for understanding animal space use, habitat selection, and population dynamics. Within the broader thesis on GPS telemetry data analysis, this section provides a comparative application of four fundamental estimators: Minimum Convex Polygon (MCP), Kernel Density Estimation (KDE), Brownian Bridge Movement Model (BBMM), and adaptive Local Convex Hull (a-LoCoH). Each method operates on different statistical and biological assumptions, influencing their suitability for specific research questions.

MCP is a simple geometric method that draws the smallest convex polygon around all location points. It is highly sensitive to outliers but provides a useful baseline and is still widely reported, which makes it useful for comparison with earlier studies and management reports.

KDE places a smoothing function (kernel) over each point and sums the kernels into a utilization distribution (UD). The critical choice is the smoothing parameter (h), which can be selected automatically by cross-validation (least-squares or likelihood) or set to the reference bandwidth (href); either choice may over- or under-smooth biologically relevant space use.

BBMM models the probability of occurrence between successive GPS fixes from the animal's motion variance and the location measurement error. Because it is explicitly temporal and estimates use along the path between fixes, it is better suited than point-based estimators to linear or corridor-like movement.

a-LoCoH constructs hulls around nearby points, adaptively scaling the hull size based on point density. It excels at identifying hard boundaries and interior holes (e.g., unused areas) within a home range without smoothing artifacts.

The selection of an estimator directly impacts ecological inference, such as estimates of habitat overlap, core area size, or response to environmental disturbance.

Table 1: Comparative Overview of Home Range Estimation Methods

| Method | Key Parameter(s) | Incorporates Temporality? | Handles Hard Edges? | Sensitivity to Outliers | Primary Output |
|---|---|---|---|---|---|
| MCP | Percentage of points (e.g., 95%) | No | No | Very High | Single polygon |
| KDE | Smoothing factor (h) / Kernel type | No (typically) | No | High | Utilization Distribution (Raster) |
| Brownian Bridge | Motion variance (σₘ²), GPS error (σₑ²) | Yes | No | Moderate | Time-weighted UD (Raster) |
| LoCoH (k-, r-, a-) | Number of neighbors (k), radius (r), or cumulative distance (a) | Can be integrated | Yes | Low | Set of convex hulls |

Table 2: Typical Results from a Simulated Dataset (Home Range Areas in km²). Data simulated for an animal with a central place and foraging excursions.

| Method | 50% Core Area (km²) | 95% Home Range (km²) | 99% Total Range (km²) |
|---|---|---|---|
| MCP (100%) | N/A | 12.5 | 12.5 |
| MCP (95%) | N/A | 9.1 | N/A |
| KDE (href) | 1.8 | 8.7 | 11.2 |
| KDE (LSCV) | 2.3 | 7.1 | 9.5 |
| Brownian Bridge | 2.1 | 6.9 | 8.8 |
| a-LoCoH (k=15) | 2.0 | 6.5 | 8.3 |

Experimental Protocols

Protocol 1: Data Pre-processing for Home Range Analysis

This universal protocol is prerequisite for all subsequent methods.

- Data Import: Load timestamped GPS location data (in CSV or shapefile format) into analysis software (e.g., R with the sp, sf, and amt packages; ArcGIS).

- Cleaning:

- Remove inaccurate fixes: 2D fixes and fixes with high dilution of precision (DOP), if these values are recorded (e.g., HDOP > 5).

- Remove physiologically impossible movements based on a speed threshold (e.g., >80 km/h for medium-sized mammals).

- Regularization (if needed): For BBMM, ensure roughly regular fix intervals. Use interpolation or subsampling to achieve a consistent rate (e.g., 2-hour intervals).

- Projection: Transform geographic coordinates (latitude/longitude) to a projected coordinate system (e.g., UTM) with units in meters for accurate area calculation.
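The cleaning rules above can be sketched as a single sequential filter. This Python sketch assumes time-sorted fixes stored as dictionaries with hypothetical keys time, lat, lon, and hdop; it uses a haversine great-circle distance on a spherical Earth (an approximation) and compares each fix with the last retained fix, so an isolated spike is dropped without also discarding the fix after it. Thresholds default to the example values in the text.

```python
import math
from datetime import datetime

EARTH_RADIUS_M = 6371008.8  # mean Earth radius (spherical approximation)


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points (degrees)."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def clean_fixes(fixes, max_hdop=5.0, max_speed_kmh=80.0):
    """Drop fixes with HDOP above max_hdop, then fixes implying a speed above
    max_speed_kmh relative to the previously retained fix."""
    kept = []
    for f in fixes:
        if f["hdop"] > max_hdop:
            continue
        if kept:
            prev = kept[-1]
            hours = (f["time"] - prev["time"]).total_seconds() / 3600
            km = haversine_m(prev["lat"], prev["lon"], f["lat"], f["lon"]) / 1000
            if hours <= 0 or km / hours > max_speed_kmh:
                continue
        kept.append(f)
    return kept


fixes = [
    {"time": datetime(2024, 1, 1, 0), "lat": 45.00, "lon": 7.00, "hdop": 1.2},
    {"time": datetime(2024, 1, 1, 1), "lat": 45.01, "lon": 7.00, "hdop": 1.0},
    {"time": datetime(2024, 1, 1, 2), "lat": 46.50, "lon": 7.00, "hdop": 1.1},  # spike
    {"time": datetime(2024, 1, 1, 3), "lat": 45.02, "lon": 7.00, "hdop": 8.0},  # poor HDOP
    {"time": datetime(2024, 1, 1, 4), "lat": 45.02, "lon": 7.01, "hdop": 1.5},
]
print([f["time"].hour for f in clean_fixes(fixes)])  # [0, 1, 4]
```

Because each fix is judged against the last kept fix, an erroneous first fix would cause later valid fixes to be rejected; inspecting the start of each track guards against this. Projection to UTM would follow, using a GIS library.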

Protocol 2: Minimum Convex Polygon (MCP) Estimation

Software: R (adehabitatHR package), ArcGIS (Home Range Tools).

- Execute Protocol 1.

- Create MCP: Use the mcp() function in R (adehabitatHR), setting the percent parameter (typically 95 or 100), e.g., `mcp_95 <- mcp(spatial_points_df, percent = 95)`.

- Calculate Area: Extract area from the resulting polygon object.

- Visualization: Plot the polygon over a base map.
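For readers who want to see what the MCP computation involves, here is a language-neutral sketch in Python. It assumes projected coordinates in metres and trims points by distance from the arithmetic centroid, which is our understanding of adehabitatHR's default rule; the helper names are hypothetical. The hull uses Andrew's monotone chain and the area uses the shoelace formula.

```python
import math


def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def polygon_area(poly):
    """Shoelace formula; squared coordinate units (m² if projected in metres)."""
    s = 0.0
    for (x1, y1), (x2, y2) in zip(poly, poly[1:] + poly[:1]):
        s += x1 * y2 - x2 * y1
    return abs(s) / 2


def mcp(points, percent=95):
    """Drop the (100 - percent)% of points farthest from the centroid, then hull the rest."""
    cx = sum(p[0] for p in points) / len(points)
    cy = sum(p[1] for p in points) / len(points)
    ranked = sorted(points, key=lambda p: math.hypot(p[0] - cx, p[1] - cy))
    n_keep = max(3, round(len(points) * percent / 100))
    hull = convex_hull(ranked[:n_keep])
    return hull, polygon_area(hull)


# Usage: a 1 km square of fixes plus one distant outlier (projected metres).
pts = [(0, 0), (1000, 0), (1000, 1000), (0, 1000)] + [(500, 500)] * 15 + [(10000, 10000)]
print(mcp(pts, 100)[1] / 1e6)  # 10.0 (km², outlier included)
print(mcp(pts, 95)[1] / 1e6)   # 1.0  (km², outlier trimmed)
```

The tenfold difference from a single outlier illustrates the "Very High" outlier sensitivity listed for MCP in Table 1.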

Protocol 3: Kernel Density Estimation (KDE)

Software: R (adehabitatHR package, kernelUD() function), ArcGIS (Kernel Density).

- Execute Protocol 1.

- Select Smoothing Parameter: Determine the bandwidth (h).

  - Reference bandwidth (href): Often the default; can oversmooth.

  - Least Squares Cross-Validation (LSCV): Automated and data-driven. Use href to set the search range for the LSCV grid search.

- Calculate Utilization Distribution: Use the kernelUD() function, e.g., `kde_ud <- kernelUD(spatial_points_df, h = "LSCV", grid = 200)`.

- Derive Contours: Extract specific percentile volume contours (e.g., 50%, 95%) using the getverticeshr() function.

- Calculate/Visualize: Extract areas and plot contours.
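The KDE steps can be sketched directly in Python to show what a bandwidth, a UD, and a volume contour are. Assumptions: a bivariate Gaussian kernel; the reference bandwidth taken as h = σ·n^(-1/6) with σ the mean of the x and y standard deviations (implementations differ in how they pool the axes); LSCV is not implemented. The isopleth area is the area of the smallest set of grid cells holding the requested share of the gridded UD volume. Function names are hypothetical.

```python
import math


def href(points):
    """Reference bandwidth h = sigma * n^(-1/6), sigma = mean of the x and y SDs."""
    n = len(points)

    def sd(values):
        m = sum(values) / n
        return math.sqrt(sum((v - m) ** 2 for v in values) / (n - 1))

    sigma = 0.5 * (sd([p[0] for p in points]) + sd([p[1] for p in points]))
    return sigma * n ** (-1 / 6)


def kde_grid(points, h, cell, pad=4.0):
    """Gaussian KDE evaluated at cell centres of a grid padded by pad * h."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    x0, y0 = min(xs) - pad * h, min(ys) - pad * h
    nx = math.ceil((max(xs) + pad * h - x0) / cell)
    ny = math.ceil((max(ys) + pad * h - y0) / cell)
    norm = 1.0 / (2 * math.pi * h * h * len(points))
    dens = []
    for i in range(nx):
        gx = x0 + (i + 0.5) * cell
        for j in range(ny):
            gy = y0 + (j + 0.5) * cell
            s = sum(math.exp(-((gx - px) ** 2 + (gy - py) ** 2) / (2 * h * h))
                    for px, py in points)
            dens.append(s * norm)
    return dens


def isopleth_area(dens, cell, level=0.95):
    """Area of the smallest set of cells holding `level` of the gridded UD volume."""
    vals = sorted(dens, reverse=True)
    total = sum(vals)
    acc = 0.0
    for count, v in enumerate(vals, start=1):
        acc += v
        if acc >= level * total:
            return count * cell * cell
    return len(vals) * cell * cell


pts = [(0, 0), (200, 50), (80, 300), (400, 380), (350, 120)]  # made-up fixes (m)
h = href(pts)
ud = kde_grid(pts, h, cell=20)
print(round(h, 1), isopleth_area(ud, 20, 0.5), isopleth_area(ud, 20, 0.95))
```

Sorting cells by density before accumulating volume is what makes the 50% contour a "core area": it is the densest region holding half the UD.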

Protocol 4: Brownian Bridge Movement Model (BBMM)

Software: R (BBMM or move package), ArcGIS (BBMM Tool).

- Execute Protocol 1, emphasizing step 3 (regularization).

- Set Parameters: Two variance parameters are needed:

  - Location Error Variance (σₑ²): Supplied rather than estimated, from GPS manufacturer specifications or derived from stationary tests.

  - Brownian Motion Variance (σₘ²): Estimated from the data by maximum likelihood (variance per unit time), typically with a leave-one-out procedure on alternate fixes.

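- Construct BBMM: Use the brownian.bridge() function (BBMM package), which takes the x and y coordinates of the ordered fixes, the time lags between successive fixes, the location error, and the output cell size, e.g., `bbmm <- brownian.bridge(x = locs$x, y = locs$y, time.lag = locs$time.lag[-1], location.error = 15, cell.size = 50)`, where locs is a data frame of projected, time-ordered locations.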

- Derive Contours & Calculate: Extract UD raster and derive contour polygons as in KDE.
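The maximum likelihood estimate of σₘ² can be sketched as follows (Python, illustrative). Assumptions: a single location-error SD shared by all fixes; each odd-indexed fix is predicted from the Brownian bridge between its neighbours using the common bridge variance T·α(1−α)·σₘ² + ((1−α)² + α²)·σₑ², which adds endpoint location error only; a golden-section search on log σₘ² assumes a unimodal likelihood. `simulate_bm` is a hypothetical helper that produces a test track.

```python
import math
import random


def bb_loglik(sig2m, track, loc_err):
    """Leave-one-out log-likelihood of the Brownian motion variance sig2m.
    track: time-ordered (t, x, y) tuples in projected units."""
    e2 = loc_err ** 2
    ll = 0.0
    for i in range(1, len(track) - 1, 2):
        (t0, x0, y0), (t1, x1, y1), (t2, x2, y2) = track[i - 1], track[i], track[i + 1]
        big_t = t2 - t0
        a = (t1 - t0) / big_t
        mx, my = x0 + a * (x2 - x0), y0 + a * (y2 - y0)         # bridge mean
        var = big_t * a * (1 - a) * sig2m + ((1 - a) ** 2 + a ** 2) * e2
        d2 = (x1 - mx) ** 2 + (y1 - my) ** 2
        ll += -math.log(2 * math.pi * var) - d2 / (2 * var)      # isotropic 2-D normal
    return ll


def estimate_sig2m(track, loc_err, lo=1e-3, hi=1e4, iters=100):
    """Golden-section search for the maximizing sig2m on a log scale."""
    f = lambda u: bb_loglik(math.exp(u), track, loc_err)
    a, b = math.log(lo), math.log(hi)
    g = (math.sqrt(5) - 1) / 2
    c, d = b - g * (b - a), a + g * (b - a)
    for _ in range(iters):
        if f(c) > f(d):
            b, d = d, c
            c = b - g * (b - a)
        else:
            a, c = c, d
            d = a + g * (b - a)
    return math.exp((a + b) / 2)


def simulate_bm(n, sig2m, dt=1.0, seed=0):
    """Hypothetical test track: 2-D Brownian motion sampled every dt."""
    rng = random.Random(seed)
    x = y = 0.0
    track = [(0.0, x, y)]
    for k in range(1, n):
        x += rng.gauss(0.0, math.sqrt(sig2m * dt))
        y += rng.gauss(0.0, math.sqrt(sig2m * dt))
        track.append((k * dt, x, y))
    return track


track = simulate_bm(2001, sig2m=4.0, seed=42)
print(estimate_sig2m(track, loc_err=0.01))  # close to the simulated value of 4
```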

Protocol 5: Adaptive Local Convex Hull (a-LoCoH)

Software: R (adehabitatHR; tlocoh package).

- Execute Protocol 1.

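- Construct Hulls: Use the LoCoH.a() function (adehabitatHR). The 'a' method builds a hull around each root point from its nearest neighbors, adding neighbors while the sum of their distances to the root does not exceed the distance threshold a.

  - Determine the 'a' value by exploring the distribution of nearest-neighbor distances.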

- Isopleth Creation: Hulls are unioned and sorted by density to create volume contours (isopleths).

- Extract & Visualize: Derive polygons for specific isopleths (e.g., 95%) and calculate their areas.
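The a-rule can be sketched as follows (Python, illustrative). The sketch builds one hull per root point and sorts hulls from smallest to largest, which is the ordering used when hulls are accumulated into isopleths; the final union of hulls into isopleth polygons needs a polygon-geometry library and is omitted. Function names are hypothetical and points are assumed to be projected coordinates.

```python
import math


def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def polygon_area(poly):
    """Shoelace formula."""
    s = 0.0
    for (x1, y1), (x2, y2) in zip(poly, poly[1:] + poly[:1]):
        s += x1 * y2 - x2 * y1
    return abs(s) / 2


def a_locoh_hulls(points, a):
    """For each root point, add neighbours (nearest first) while the cumulative
    distance to the root stays <= a; return (area, hull) pairs, smallest first."""
    hulls = []
    for root in points:
        members, total = [], 0.0
        for d, p in sorted((math.dist(root, p), p) for p in points):
            if total + d > a:
                break
            total += d
            members.append(p)
        hull = convex_hull(members)
        if len(hull) >= 3:  # points and segments have no area
            hulls.append((polygon_area(hull), hull))
    hulls.sort(key=lambda item: item[0])
    return hulls


square = [(0, 0), (10, 0), (0, 10), (10, 10)]
print([area for area, _ in a_locoh_hulls(square, 20)])  # [50.0, 50.0, 50.0, 50.0]
```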

Visualizations

Title: Workflow for Comparing Home Range Estimation Methods

Title: Conceptual Basis of the Four Home Range Estimators

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Home Range Analysis

| Item / Solution | Function in Analysis | Example / Note |
|---|---|---|
| GPS Telemetry Collar | Primary data collection device. Logs timestamped locations. | Specify fix schedule, expected error (e.g., <10 m), and battery life. |
| Movement Data Repository | Platform for storing/archiving raw & processed telemetry data. | Movebank (free, widely used). Supports reproducibility and meta-analysis. |
| R Statistical Software | Open-source platform for comprehensive analysis. | Essential packages: adehabitatHR, amt, move, sf, raster. |
| GIS Software | For visualization, spatial data management, and some analyses. | QGIS (open-source) or ArcGIS Pro. Critical for creating publication-quality maps. |
| Bandwidth Optimization Script | Algorithm to determine the KDE smoothing parameter (h). | LSCV and reference (href) selectors within adehabitatHR; plug-in selectors are available in other packages (e.g., ks). |
| Brownian Bridge Parameter Estimator | Tool to calculate motion variance (σₘ²) from trajectory data. | Function within the BBMM or move R packages. |
| Projected Coordinate System | A spatial reference system with constant linear units (meters). | Required for area calculation. UTM zone specific to study area is standard. |
| High-Performance Computing (HPC) Access | For large datasets or intensive simulations (e.g., BBMM on many animals). | Speeds up bootstrapping, autocorrelation analysis, and population-level models. |

Step Selection Functions (SSFs) and Resource Selection Analyses

This document provides application notes and protocols for Step Selection Functions (SSFs) and Resource Selection Analyses (RSAs), critical methods in the analysis of GPS telemetry data within movement ecology. These techniques bridge the gap between raw movement trajectories and ecological inference, allowing researchers to quantify how animals select resources and navigate their environment at multiple spatiotemporal scales. Their application extends to understanding habitat fragmentation, disease vector pathways, and the ecological impacts of pharmaceutical compounds.

Core Concepts and Comparative Framework

Table 1: Comparison of SSFs and Resource Selection Functions (RSFs)

| Feature | Step Selection Function (SSF) | Resource Selection Function (RSF) |
|---|---|---|
| Sampling Unit | Movement step (consecutive relocations) | Telemetry location (point) |
| Available Points | Generated along the step’s conditional distribution | Generated within a broader availability domain (e.g., home range) |
| Temporal Link | Explicitly conditions on the animal's previous location | Typically assumes serial independence of locations |
| Primary Inference | Movement mechanisms & immediate habitat selection | Long-term or general habitat preference |
| Model Form | Conditional logistic regression (stratified by step) | GLM (Logistic/Poisson regression) or mixed-effects models |
| Controls For | Intrinsic movement constraints (speed, turning angles) | Sampling bias via random availability samples |

Table 2: Common Covariate Classes for SSF/RSF Analysis

| Covariate Class | Example Variables | Purpose in Model |
|---|---|---|
| Environmental | Elevation, slope, land cover type, NDVI | Quantify selection for static landscape features |
| Dynamic Environmental | Daily precipitation, snow depth, green-up phenology | Quantify selection for temporally variable resources |
| Anthropogenic | Distance to road, building density, light pollution | Quantify response to human disturbance |
| Movement | Step length, turning angle, speed | Characterize intrinsic movement behavior (SSF) |
| Interaction | Step length × vegetation density | Test how movement modulates selection |

Experimental Protocols

Protocol 1: Standardized SSF Analysis Workflow

Objective: To model fine-scale habitat selection conditional on movement.

- Data Preparation: Clean high-frequency GPS data. Define a consistent time interval (∆t) for steps.

- Step Generation: For each observed step (from i to i+1), calculate step length and turning angle.

- Generate Available Steps: For each observed step, generate k (e.g., 10-20) available steps. These start at location i but have step lengths and turning angles drawn from a species-specific or data-derived distribution (e.g., gamma for length, von Mises for angle).

- Extract Covariates: For the end point of the observed and all available steps, extract relevant environmental covariates (e.g., from GIS raster layers).

- Model Fitting: Fit a conditional logistic regression model (clogit) with strata defined by each step ID. The model form is w(x) = exp(β₁x₁ + β₂x₂ + ... + βₙxₙ), where w(x) is the exponential selection function; the ratio w(x₁)/w(x₂) between two locations is their relative selection strength (RSS).

- Model Validation: Use k-fold cross-validation based on individual animals or trajectories. Assess predictive performance with Spearman-rank correlations between used and predicted selection frequencies.
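In R this model is usually fitted with survival's clogit() (or amt's wrappers around it). To show what the conditional likelihood does, here is a single-covariate Newton-Raphson sketch in Python on simulated data. The simulation design (10 available steps per stratum, a standard normal covariate, true β = 1) and all function names are illustrative assumptions.

```python
import math
import random


def score_info(beta, strata):
    """Score and observed information of the conditional logit log-likelihood
    sum over strata of [beta * x_used - log(sum_j exp(beta * x_j))]."""
    grad = info = 0.0
    for used, avail in strata:
        xs = [used] + avail
        m = max(beta * x for x in xs)             # guard against overflow
        w = [math.exp(beta * x - m) for x in xs]
        total = sum(w)
        mean = sum(wi * x for wi, x in zip(w, xs)) / total
        var = sum(wi * (x - mean) ** 2 for wi, x in zip(w, xs)) / total
        grad += used - mean
        info += var
    return grad, info


def fit_clogit_1d(strata, iters=50, tol=1e-10):
    """Newton-Raphson for a single covariate; returns (beta, standard error)."""
    beta = 0.0
    for _ in range(iters):
        grad, info = score_info(beta, strata)
        step = grad / info
        beta += step
        if abs(step) < tol:
            break
    _, info = score_info(beta, strata)
    return beta, 1.0 / math.sqrt(info)


def simulate_strata(n_strata, beta, k=10, seed=0):
    """Each stratum: k + 1 candidate end points with an N(0, 1) covariate; the
    'used' one is chosen with probability proportional to exp(beta * x)."""
    rng = random.Random(seed)
    strata = []
    for _ in range(n_strata):
        xs = [rng.gauss(0.0, 1.0) for _ in range(k + 1)]
        idx = rng.choices(range(k + 1), weights=[math.exp(beta * x) for x in xs])[0]
        strata.append((xs[idx], xs[:idx] + xs[idx + 1:]))
    return strata


beta_hat, se = fit_clogit_1d(simulate_strata(2000, beta=1.0, seed=1))
print(f"beta = {beta_hat:.3f} (SE {se:.3f}), RSS per unit = {math.exp(beta_hat):.2f}")
```

Only differences within a stratum enter the likelihood, which is why covariates that are constant within a step cannot be estimated as main effects.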

Protocol 2: Integrated Step-Selection Analysis (iSSA)

Objective: To jointly estimate movement parameters and selection coefficients.

- Steps 1-4: Follow Protocol 1.

- Parametrize Movement Distributions: Fit parametric distributions (e.g., Gamma, Weibull) to observed step lengths and (wrapped) Cauchy or von Mises to turning angles. Include covariates (e.g., habitat type) on these distributions if needed.

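- Integrated Model: The iSSA likelihood incorporates both the movement and selection components. Given a step starting at i, the probability that it ends at location j is proportional to f(lᵢⱼ, αᵢⱼ | θ) · exp(βᵀxⱼ), where f is the selection-free movement kernel for step length l and turning angle α with parameters θ; on the log scale this is log f(lᵢⱼ, αᵢⱼ | θ) + βᵀxⱼ.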

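- Fitting: In practice, estimate by maximum likelihood as a conditional logistic regression that includes step length, log(step length), and cos(turning angle) alongside the habitat covariates, then use these movement coefficients to update the tentative movement distributions (e.g., with the amt package in R).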
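The update from tentative to fitted movement distributions follows from multiplying the densities, and can be sketched in a few lines of Python. Assumptions: a gamma step-length distribution in (shape, scale) form and a von Mises turning-angle distribution centred on 0, with the coefficient names below being hypothetical labels for the step-length, log-step-length, and cos(turning angle) terms. As we understand it, amt provides comparable update functions.

```python
def update_gamma(shape, scale, beta_sl, beta_log_sl):
    """Gamma density ~ l^(shape - 1) * exp(-l / scale). Multiplying by
    exp(beta_sl * l + beta_log_sl * log l) gives another gamma with
    shape' = shape + beta_log_sl and rate' = 1 / scale - beta_sl."""
    new_shape = shape + beta_log_sl
    new_rate = 1.0 / scale - beta_sl
    if new_shape <= 0 or new_rate <= 0:
        raise ValueError("coefficients imply an invalid gamma distribution")
    return new_shape, 1.0 / new_rate


def update_vonmises_kappa(kappa, beta_cos_ta):
    """Von Mises centred on 0: exp(kappa * cos a) * exp(beta * cos a) gives
    kappa + beta. A negative result means a distribution centred on pi."""
    return kappa + beta_cos_ta


# Tentative gamma(shape 2, scale 100 m), with coefficients from a fitted iSSA.
print(update_gamma(2.0, 100.0, beta_sl=-0.005, beta_log_sl=0.5))
print(update_vonmises_kappa(0.5, beta_cos_ta=0.3))
```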

Diagram 1: SSF Analysis Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item | Function & Application Notes |
|---|---|
| High-resolution GPS Collars | Data collection. Key specs: Fix success rate, sampling frequency, battery life, and onboard sensors (e.g., accelerometers). |
| GIS Software (e.g., QGIS, ArcGIS) | Spatial data management, covariate raster creation, and buffer/zone analysis for defining availability. |
| R Statistical Environment | Primary platform for analysis. Essential packages: amt (SSF/RSF), survival (clogit), lme4 (mixed models), sf (spatial data). |
| Covariate Raster Stack | Multilayer spatial data (e.g., terrain, vegetation, human footprint). Must be aligned, projected, and at appropriate resolution. |
| High-performance Computing (HPC) Access | For large datasets (many steps/individuals) or intensive cross-validation/bootstrap procedures. |
| Movement Distribution Fitting Tools | R packages circular and fitdistrplus for characterizing step length and turning angle distributions. |

Advanced Application: Pharmaco-Ecological Modeling

Context: In drug development, understanding how a pharmaceutical agent affects animal movement and space use can reveal off-target ecological impacts or efficacy in altering disease host behavior.

Protocol 3: Pre- vs. Post-Treatment SSF Analysis

- Experimental Design: GPS-track subjects pre- and post-administration of a compound (or placebo).

- Stratified SSF: Fit an SSF with interaction terms between treatment phase (pre/post) and key environmental covariates (e.g., covariate * phase). Because phase is constant within each step stratum, its main effect drops out of the conditional likelihood; only the interaction terms are estimable.

- Interpretation: A significant interaction indicates the compound altered habitat selection behavior. For example, a changed selection coefficient for "distance to water" post-treatment could indicate a drug-induced shift in thirst or thermoregulation.

- Dose-Response: Incorporate dosage levels as a covariate interacting with habitat variables to model selection gradient as a function of exposure.

Diagram 2: Drug Effects on Movement & Selection

Data Presentation & Outputs

Table 4: Example SSF Model Output Table

| Covariate | β (Coefficient) | SE | z-value | p-value | exp(β) [Relative Selection Strength (RSS)] |
|---|---|---|---|---|---|
| Forest Cover (%) | 0.85 | 0.12 | 7.08 | <0.001 | 2.34 |
| Distance to Road (km) | -1.20 | 0.18 | -6.67 | <0.001 | 0.30 |
| Slope (degrees) | -0.04 | 0.02 | -2.00 | 0.046 | 0.96 |
| Interaction: Step Length × Forest | 0.01 | 0.003 | 3.33 | <0.001 | 1.01 |

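Interpretation: Animals strongly select for forest cover (RSS = 2.34 per one-unit increase) and select locations closer to roads: because the coefficient on distance to road is negative, each additional km from a road multiplies relative selection strength by 0.30. Selection for forest is stronger during longer (and, at a fixed fix interval, faster) movement steps (positive interaction).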

Within a doctoral thesis focused on advancing GPS telemetry data analysis in movement ecology, the segmentation of continuous movement tracks into discrete behavioral states is a fundamental challenge. This chapter addresses two principal methodological frameworks for identifying latent states (e.g., resting, foraging, transit) and pinpointing abrupt transitions (changepoints) in movement dynamics. Hidden Markov Models (HMMs) and Bayesian Changepoint Detection provide complementary, probabilistic approaches to move beyond simple thresholding, enabling robust inference of animal behavior from noisy, autocorrelated tracking data. These methods are directly applicable to broader ecological questions about resource selection, energy expenditure, and responses to environmental stimuli.

Core Methodologies and Application Notes

Hidden Markov Models (HMMs) for Behavioral State Inference

Concept: HMMs assume an animal's observed movement metrics (e.g., step length, turning angle) are generated by one of k hidden (latent) behavioral states. The model probabilistically infers the state sequence based on the observations and learned state-dependent probability distributions and transition rules.

Key Parameters & Data Requirements:

- Observed Data: Time-series of movement metrics derived from GPS fixes (e.g., step length, turning angle, velocity).

- Hidden States: The number of behavioral states (k) must be specified a priori or inferred using model selection.

- Emission Distributions: Probability distributions (e.g., gamma for step length, von Mises for turning angle) that model the data emitted from each state.

- Transition Probability Matrix: A k x k matrix governing the probability of switching from one state to another.

Protocol: Implementing an HMM for GPS Tracking Data

Data Preprocessing:

- Import GPS location data (timestamp, latitude, longitude).

- Calculate step lengths (distance between successive fixes) and turning angles (relative angle between successive steps).

- Handle missing fixes via interpolation or appropriate modeling.

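- Check step lengths before fitting: the gamma distribution requires strictly positive values, so zero step lengths need a zero-mass parameter (supported in moveHMM and momentuHMM) or an explicit handling decision. Rescaling units (e.g., m to km) can aid numerical optimization; do not log-transform step lengths that are to be modeled with a gamma distribution.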

Model Specification:

- Define the number of states (k). Start with an ecologically plausible range (e.g., 2-4 states: resting, foraging, traveling).

- Specify emission distributions: Typically, a gamma distribution for step length and a von Mises distribution for turning angle for each state. A state representing "resting" would have a gamma distribution concentrated near zero.

Parameter Estimation:

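- Estimate the parameters of the emission distributions and the state transition probability matrix by maximum likelihood, either with the Expectation-Maximization (Baum-Welch) algorithm or, as in moveHMM and momentuHMM, by numerically maximizing the likelihood computed with the forward algorithm. Bayesian inference is an alternative.

- Implement using statistical software packages (e.g., momentuHMM or moveHMM in R).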
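The forward algorithm that underlies this likelihood can be sketched as follows. This Python version illustrates the computation under stated assumptions and is not the packages' code: gamma step lengths and von Mises turning angles, conditionally independent given the state; no missing values or zero step lengths; I0 computed by a truncated power series, adequate for moderate concentrations. Numerically maximizing this function over the parameters gives the maximum likelihood fit.

```python
import math


def gamma_logpdf(x, shape, scale):
    return ((shape - 1) * math.log(x) - x / scale
            - math.lgamma(shape) - shape * math.log(scale))


def log_i0(kappa, terms=60):
    """log of the modified Bessel function I0 via its power series."""
    term, total = 1.0, 1.0
    q = (kappa / 2.0) ** 2
    for m in range(1, terms):
        term *= q / (m * m)
        total += term
    return math.log(total)


def vonmises_logpdf(angle, mu, kappa):
    return kappa * math.cos(angle - mu) - math.log(2 * math.pi) - log_i0(kappa)


def logsumexp(values):
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def forward_loglik(steps, angles, step_par, angle_par, trans, init):
    """HMM log-likelihood via the forward algorithm in log space.
    step_par[s] = (shape, scale); angle_par[s] = (mean, kappa);
    trans[i][j] = P(state j at t+1 | state i at t); init = initial distribution."""
    k = len(init)

    def emission(t, s):
        return (gamma_logpdf(steps[t], *step_par[s])
                + vonmises_logpdf(angles[t], *angle_par[s]))

    alpha = [math.log(init[s]) + emission(0, s) for s in range(k)]
    for t in range(1, len(steps)):
        alpha = [logsumexp([alpha[i] + math.log(trans[i][j]) for i in range(k)])
                 + emission(t, j) for j in range(k)]
    return logsumexp(alpha)


sp = [(1.2, 0.05), (5.0, 0.5)]   # gamma (shape, scale) per state, km
ap = [(0.0, 0.8), (0.0, 2.5)]    # von Mises (mean, kappa) per state
tr = [[0.9, 0.1], [0.2, 0.8]]
print(forward_loglik([0.05, 0.06, 2.1], [1.0, -2.0, 0.1], sp, ap, tr, [0.5, 0.5]))
```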

State Decoding:

- Apply the Viterbi algorithm to the fitted model to compute the most likely sequence of hidden behavioral states for each observation in the track.

Validation & Interpretation:

- Validate state classifications against independent observational data if available.

- Interpret states by examining the estimated parameters of the emission distributions (e.g., high mean step length and high turning-angle concentration around 0 = "transit" state).

- Use information criteria (AIC, BIC) for model selection among different values of k.

Bayesian Changepoint Detection

Concept: This method identifies specific time points (changepoints) where the underlying statistical properties of the movement time-series change abruptly, segmenting the track into homogeneous behavioral phases. A Bayesian approach provides full posterior distributions for changepoint locations, quantifying uncertainty.

Key Parameters & Data Requirements:

- Observed Data: A univariate or multivariate time-series of a movement metric (e.g., speed).

- Changepoint Prior: A prior distribution on the number and/or location of changepoints (e.g., Poisson distribution for the number of changepoints).

- Segment Models: Probability models for the data within each segment (e.g., a Gaussian distribution with a mean that changes at each changepoint).

Protocol: Implementing Bayesian Changepoint Detection

Data Preparation:

- Select a primary metric indicative of behavioral shifts (e.g., speed, acceleration, net squared displacement).

- Ensure the time-series is evenly spaced; resample if necessary.

Model Specification:

- Define the likelihood model for data within segments. For normally distributed speed: y_t ~ N(μ_i, σ²), where i denotes the segment.

- Place priors on segment parameters (e.g., μ_i ~ N(μ_0, σ_0²), σ² ~ Inv-Gamma(α, β)).

- Specify a prior for changepoint locations. A common choice is a discrete uniform distribution over all possible times, combined with a prior on the number of changepoints.

Posterior Inference:

- Use computational methods to sample from (or compute exactly) the joint posterior distribution of the number of changepoints, their locations, and the segment parameters: e.g., Reversible Jump Markov Chain Monte Carlo (RJMCMC), Gibbs sampling for product partition models, or exact recursions for conjugate segment models. The Pruned Exact Linear Time (PELT) method is a fast penalized-likelihood (non-Bayesian) alternative that is useful as a cross-check.

- Implement using libraries like bcp in R or custom scripts in Stan/PyMC.

Interpretation of Output:

- Analyze the posterior probability of a changepoint at each time point. Peaks above a threshold (e.g., 0.5) indicate high-probability changepoints.

- Examine the posterior distribution of the number of changepoints.

- Use the median or maximum a posteriori (MAP) changepoint configuration to segment the track. Interpret each segment by its estimated parameters (e.g., high mean speed segment = "travel").
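A deliberately simplified sketch makes the posterior over changepoint locations concrete. All assumptions are ours: a single changepoint in the mean of an evenly spaced speed series, known variance σ², a conjugate normal prior on each segment mean (which is integrated out analytically), and a uniform prior on the location. Under these assumptions the posterior can be computed exactly by enumeration instead of RJMCMC; this is not the product partition model used by bcp.

```python
import math


def log_marginal(seg, mu0, s0sq, sigma2):
    """log p(seg) for y_t ~ N(mu, sigma2) i.i.d. with mu ~ N(mu0, s0sq) integrated out."""
    n = len(seg)
    d = [y - mu0 for y in seg]
    s1, s2 = sum(d), sum(v * v for v in d)
    quad = (s2 - s0sq * s1 * s1 / (sigma2 + n * s0sq)) / sigma2
    return (-0.5 * n * math.log(2 * math.pi * sigma2)
            - 0.5 * math.log(1 + n * s0sq / sigma2) - 0.5 * quad)


def changepoint_posterior(y, mu0=0.0, s0sq=100.0, sigma2=1.0, min_seg=2):
    """Posterior over the location tau of one mean shift (segments y[:tau], y[tau:])
    under a uniform prior on tau. Returns {tau: posterior probability}."""
    taus = range(min_seg, len(y) - min_seg + 1)
    logp = [log_marginal(y[:t], mu0, s0sq, sigma2) + log_marginal(y[t:], mu0, s0sq, sigma2)
            for t in taus]
    m = max(logp)
    w = [math.exp(v - m) for v in logp]
    z = sum(w)
    return {t: wi / z for t, wi in zip(taus, w)}


speeds = [0.2, 0.4, 0.1, 0.3, 0.2, 0.5, 2.9, 3.2, 3.0, 2.7, 3.1, 3.3]  # made-up km/h
post = changepoint_posterior(speeds, mu0=1.5, s0sq=4.0, sigma2=0.05)
print(max(post, key=post.get))  # index of the first fix after the shift
```

Extending this to an unknown number of changepoints replaces the single enumeration with dynamic-programming recursions or MCMC, as described above.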

Table 1: Comparison of HMM and Bayesian Changepoint Detection for Behavioral Segmentation

| Feature | Hidden Markov Model (HMM) | Bayesian Changepoint Detection |
|---|---|---|
| Core Objective | Infer a latent state for every observation. | Identify specific times where the data-generating process changes. |
| Output | A sequence of discrete behavioral labels (state 1, 2, 3...). | A set of changepoint times, segmenting the track into homogeneous periods. |
| Temporal Scale | Fine-scale, tied to the observation rate. | Can operate at the observation rate or detect changes at coarser, irregular intervals. |
| Key Assumption | Process is Markovian; the next state depends only on the current state. | Data within each segment is independent and identically distributed (i.i.d.) from a segment-specific model. |
| Handles Autocorrelation | Explicitly models it via the hidden state sequence. | Often assumes independence within segments; can be extended to autoregressive models. |
| Primary Uncertainty | State uncertainty for each time point (local decoding). | Uncertainty in the number and location of changepoints. |
| Best Suited For | Labeling behavior at each fix (e.g., classifying resident vs. exploratory movements). | Identifying major phases or events in a track (e.g., onset of migration, settlement in a new home range). |

Table 2: Typical Parameter Estimates from a Three-State HMM Fit to Animal GPS Data

| Behavioral State | Step Length (Gamma Dist. Params) | Turning Angle (Von Mises Params) | Interpreted Meaning |
|---|---|---|---|
| State 1 | Shape: 1.2, Scale: 0.05 → Mean: ~0.06 km | Concentration (κ): 0.8 → Highly Variable | Resting/Localized Activity |
| State 2 | Shape: 2.5, Scale: 0.15 → Mean: ~0.38 km | Concentration (κ): 1.5 → Moderately Directed | Foraging/Searching |
| State 3 | Shape: 5.0, Scale: 0.50 → Mean: ~2.5 km | Concentration (κ): 2.5 → Highly Directed | Directed Travel/Transit |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Behavioral State Segmentation Analysis

| Item/Software | Function & Explanation |
|---|---|
| moveHMM / momentuHMM (R) | Specialized R packages for fitting HMMs to movement data. Handle data preprocessing, parameter estimation, and state decoding. |
| bcp / Rbeast (R) | R packages for Bayesian changepoint analysis. Provide posterior sampling and visualization of changepoint probabilities. |
| Stan / PyMC | Probabilistic programming languages for building custom Bayesian models, including complex HMMs and changepoint models. |
| High-Resolution GPS Telemetry Collar | Data source. Provides regular (e.g., 5-min interval) location fixes. Accuracy and fix rate are critical for parameter estimation. |
| GIS Software (QGIS, ArcGIS) | Used for calculating movement metrics (step length, turning angle) from raw coordinates and linking states to environmental layers. |
| Computational Resources (HPC/Cloud) | Bayesian inference and fitting multiple HMMs are computationally intensive, often requiring parallel processing. |

Methodological Workflow Diagrams

Title: HMM Workflow for Behavioral State Segmentation

Title: Bayesian Changepoint Detection Workflow

Title: Hidden Markov Model State & Observation Structure

Within the broader thesis on GPS telemetry data analysis methods for movement ecology research, understanding animal movement patterns is paramount. This document provides detailed Application Notes and Protocols for analyzing trajectories using Net Squared Displacement (NSD) and Correlated Random Walk (CRW) models. These methods are critical for identifying phases of movement (e.g., dispersal, migration, sedentariness) and distinguishing directed movement from random exploration, with applications extending to quantifying drug effects on animal movement in preclinical studies.

Core Theoretical Framework

Net Squared Displacement (NSD): A measure of the squared straight-line distance from a starting point to each subsequent location in a trajectory. It is used to classify movement patterns over time. Correlated Random Walk (CRW): A movement model where the direction of a step is correlated with the direction of the previous step(s). It serves as a null model to test for the presence of directional persistence or external influences.

Key Data & Model Parameters

The following table summarizes the key quantitative parameters involved in NSD and CRW analysis.

Table 1: Core Parameters for Trajectory Analysis

| Parameter | Symbol/Formula | Description | Ecological Interpretation |
|---|---|---|---|
| Net Squared Displacement | $NSD(t) = (x_t - x_0)^2 + (y_t - y_0)^2$ | Squared Euclidean distance from start. | Reveals phases of movement: a sustained increase indicates displacement away from the start (e.g., dispersal); an asymptotic curve indicates bounded movement (e.g., home ranging). |
| Step Length ($l$) | $l_i = \sqrt{(x_i - x_{i-1})^2 + (y_i - y_{i-1})^2}$ | Distance between consecutive relocations. | Related to energy expenditure and speed. Mean and distribution are model inputs. |
| Turning Angle ($\theta$) | $\theta_i = \operatorname{atan2}(\Delta y_i, \Delta x_i) - \operatorname{atan2}(\Delta y_{i-1}, \Delta x_{i-1})$, with $\Delta x_i = x_i - x_{i-1}$, wrapped to $(-\pi, \pi]$ | Change in direction between steps. | Measures directional persistence. Concentrated near 0° indicates high correlation (straight-line movement). |
| Mean Cosine of Turning Angles | $c = \frac{1}{n-1} \sum_{i=2}^{n} \cos\theta_i$ | Measure of directional correlation. | $c \rightarrow 1$: strong persistence (CRW). $c \rightarrow 0$: uncorrelated (simple random walk). |
| Mean Vector Length ($r$) | $r = \frac{1}{n-1}\sqrt{\left(\sum_{i=2}^{n} \cos\theta_i\right)^2 + \left(\sum_{i=2}^{n} \sin\theta_i\right)^2}$ | Concentration of turning angles. | Test statistic for directional correlation (Rayleigh test). |
| First-Passage Time (FPT) | Time to first cross a circle of given radius centered on a location. | Measures residency time at different spatial scales. | Identifies area-restricted search behavior and scale of perception. |
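The step-level quantities in Table 1 can be computed as in the following Python sketch. Assumptions: projected coordinates, no zero-length steps (their heading is undefined), and the large-sample Rayleigh p-value p ≈ exp(−z), which follows from 2z being approximately χ² with 2 degrees of freedom. Function names are hypothetical.

```python
import math


def step_metrics(xy):
    """Step lengths and turning angles (wrapped to [-pi, pi]) from a list of (x, y)."""
    steps = [math.dist(p, q) for p, q in zip(xy, xy[1:])]
    headings = [math.atan2(q[1] - p[1], q[0] - p[0]) for p, q in zip(xy, xy[1:])]
    turns = [math.atan2(math.sin(h2 - h1), math.cos(h2 - h1))
             for h1, h2 in zip(headings, headings[1:])]
    return steps, turns


def directional_stats(turns):
    """Mean cosine c, mean vector length r, Rayleigh z = N r^2, and approximate p."""
    n = len(turns)
    big_c = sum(math.cos(t) for t in turns)
    big_s = sum(math.sin(t) for t in turns)
    c = big_c / n
    r = math.hypot(big_c, big_s) / n
    z = n * r * r
    return c, r, z, math.exp(-z)  # large-sample Rayleigh approximation


track = [(0, 0), (120, 10), (230, 40), (330, 20), (450, 60), (540, 140)]
steps, turns = step_metrics(track)
print(directional_stats(turns))
```

Note that a square path (four right-angle turns) gives r = 1 but c ≈ 0: the angles are concentrated, just not around 0°, which is why the mean turning angle must be checked alongside the Rayleigh test.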

Experimental Protocols

Protocol 1: Calculating Net Squared Displacement from GPS Telemetry Data

Objective: To compute NSD and classify individual movement patterns. Input: Pre-processed GPS location data (timestamp, animal ID, x-coordinate, y-coordinate).

- Define Origin: For each animal ID, set the first recorded GPS fix as the starting point $(x_0, y_0)$.

- Iterative Calculation: For each subsequent fix $t$ at coordinates $(x_t, y_t)$, calculate $NSD(t) = (x_t - x_0)^2 + (y_t - y_0)^2$.

- Visualization: Plot NSD(t) against time (or step number). Use a log-log plot to distinguish power-law relationships.

- Pattern Classification:

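- Sustained Increase: Consistent displacement away from the origin (potential dispersal/migration). NSD grows with the square of time for straight-line travel at constant speed and linearly, in expectation, for an uncorrelated random walk, which is why the slope on a log-log plot helps separate the two.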

- Asymptotic Curve: Movement bounded in an area (home range establishment).

- Multiple Asymptotes: Sequential range shifts or seasonal migration.
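A short Python sketch of Protocol 1, adding the log-log slope suggested in the visualization step. Assumptions: fixes are projected and evenly spaced, so the fix index stands in for time. A slope near 2 suggests straight-line (ballistic) travel, near 1 random-walk-like spread, and near 0 bounded movement; these are rules of thumb, not formal tests, and the function names are hypothetical.

```python
import math


def nsd(xy):
    """Net squared displacement of every fix from the first fix."""
    x0, y0 = xy[0]
    return [(x - x0) ** 2 + (y - y0) ** 2 for x, y in xy]


def loglog_slope(values):
    """Least-squares slope of log(NSD) on log(fix index), skipping t = 0 and zeros."""
    pts = [(math.log(t), math.log(v)) for t, v in enumerate(values) if t > 0 and v > 0]
    n = len(pts)
    mx = sum(a for a, _ in pts) / n
    my = sum(b for _, b in pts) / n
    sxy = sum((a - mx) * (b - my) for a, b in pts)
    sxx = sum((a - mx) ** 2 for a, _ in pts)
    return sxy / sxx


straight = [(10.0 * t, 0.0) for t in range(20)]  # constant-speed straight line
print(loglog_slope(nsd(straight)))  # 2.0, up to rounding
```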

Protocol 2: Fitting and Testing a Correlated Random Walk Model

Objective: To model movement and test for significant directional persistence. Input: A trajectory of step lengths $l_i$ and turning angles $\theta_i$.

- Parameter Estimation:

- Calculate mean step length $\bar{l}$ and its variance.

- Calculate the mean cosine of turning angles $c$ and the mean vector length $r$.

- Goodness-of-Fit Test (Rayleigh Test):

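- Null Hypothesis ($H_0$): Turning angles are uniformly distributed (no correlation).

- Test Statistic: $z = N r^2$, where $N$ is the number of turning angles used to compute $r$.

- Compare $2z$ to a $\chi^2$ distribution with 2 degrees of freedom (for large $N$, $p \approx e^{-z}$). Reject $H_0$ if $p < 0.05$. Because the Rayleigh test detects concentration around any direction, also check that the mean turning angle is near 0° before interpreting a significant result as a CRW.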

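- Simulate CRW: Generate a null trajectory using $\bar{l}$ and the observed step-length distribution, with turning angles drawn from a wrapped distribution centered on 0 whose concentration matches $c$ (e.g., a wrapped Cauchy with $\rho = c$, since its mean cosine equals $\rho$).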

- Compare to Observed Data: Calculate NSD for 1000 simulated CRWs. Plot the mean and the 95% simulation envelope (2.5th to 97.5th percentiles at each step) of simulated NSD against the observed NSD. Observed NSD above the envelope indicates more directed movement than expected under a CRW (e.g., migration).
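Steps 3 and 4 can be sketched in Python as below. Assumptions: step lengths are resampled from the observed steps (a bootstrap stand-in for "its distribution"); turning angles come from a wrapped Cauchy centred on 0 with mean cosine ρ, drawn by wrapping a Cauchy variate with scale −ln ρ; ρ ≤ 0 falls back to uniform angles and ρ ≥ 1 gives straight lines; the envelope uses simple order-statistic percentiles. Function names and example steps are hypothetical.

```python
import math
import random


def simulate_crw(steps, rho, n_steps, rng):
    """One CRW path from the origin with wrapped Cauchy turning angles (mean cosine rho)."""
    x = y = 0.0
    heading = rng.uniform(-math.pi, math.pi)
    path = [(x, y)]
    scale = -math.log(rho) if 0 < rho < 1 else None  # wrapped Cauchy: rho = exp(-scale)
    for i in range(n_steps):
        if i > 0:
            if rho >= 1:
                turn = 0.0                                    # perfectly straight
            elif rho <= 0:
                turn = rng.uniform(-math.pi, math.pi)         # uncorrelated walk
            else:
                turn = scale * math.tan(math.pi * (rng.random() - 0.5))  # Cauchy draw
            heading += turn  # wrapping onto the circle is implicit in cos/sin
        length = rng.choice(steps)
        x += length * math.cos(heading)
        y += length * math.sin(heading)
        path.append((x, y))
    return path


def nsd_envelope(steps, rho, n_steps, n_sims=1000, seed=0):
    """Pointwise 2.5th percentile, mean, and 97.5th percentile of NSD over n_sims CRWs."""
    rng = random.Random(seed)
    sims = []
    for _ in range(n_sims):
        path = simulate_crw(steps, rho, n_steps, rng)
        sims.append([px * px + py * py for px, py in path])
    lo, mean, hi = [], [], []
    for t in range(n_steps + 1):
        vals = sorted(s[t] for s in sims)
        lo.append(vals[int(0.025 * (n_sims - 1))])
        mean.append(sum(vals) / n_sims)
        hi.append(vals[int(0.975 * (n_sims - 1))])
    return lo, mean, hi


observed_steps = [120.0, 80.0, 200.0, 150.0, 95.0]  # hypothetical step lengths (m)
lo, mean, hi = nsd_envelope(observed_steps, rho=0.6, n_steps=30)
print(hi[30])  # observed NSD above this value at step 30 would exceed the envelope
```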

Protocol 3: Integrated NSD-CRW Analysis Workflow

Objective: A complete pipeline from raw GPS data to movement classification.

- Data Pre-processing:

- Clean GPS data: Remove 2D fixes and fixes with high HDOP values.

- Regularize trajectory: Interpolate to a constant time interval using a movement model.

- Derive steps and turning angles.

- CRW Null Model Construction: Follow Protocol 2, steps 1 to 3 (parameter estimation, Rayleigh test, CRW simulation).

- NSD Calculation & Comparison: Follow Protocol 1, overlaying the CRW simulation envelope as in Protocol 2, step 4 (Compare to Observed Data).

- Statistical Inference: If observed NSD significantly exceeds the CRW envelope, the movement is more directed than a correlated random walk, suggesting external factors (e.g., navigational goal, attractant) or an internal behavioral state change.
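The simulation and comparison steps of this workflow can be sketched as below. This is a minimal sketch under explicit assumptions: turning angles follow a wrapped Cauchy distribution with concentration rho set equal to the mean cosine c from Protocol 2 (only valid for 0 < c < 1), step lengths are resampled from a pool of observed steps, and envelope percentiles use a simple nearest-rank rule. The function names, the step pool, and rho = 0.6 are hypothetical.

```python
import math
import random

def simulate_crw(step_pool, rho, n_steps, rng):
    """One CRW path from (0, 0). Step lengths are resampled from step_pool; turning angles are
    wrapped Cauchy centered on 0 with concentration rho (0 < rho < 1)."""
    if not 0 < rho < 1:
        raise ValueError("rho must lie in (0, 1); c <= 0 implies no forward persistence")
    scale = -math.log(rho)                    # Cauchy scale whose wrapped version has concentration rho
    heading = rng.uniform(-math.pi, math.pi)  # random initial direction
    x = y = 0.0
    xs, ys = [x], [y]
    for _ in range(n_steps):
        turn = scale * math.tan(math.pi * (rng.random() - 0.5))   # Cauchy(0, scale) draw
        heading = (heading + turn) % (2 * math.pi)                # wrap onto the circle
        length = rng.choice(step_pool)
        x += length * math.cos(heading)
        y += length * math.sin(heading)
        xs.append(x)
        ys.append(y)
    return xs, ys

def nsd_envelope(step_pool, rho, n_steps, n_sims=1000, seed=1):
    """Mean and approximate 2.5th/97.5th percentile NSD at each step over n_sims simulated CRWs."""
    rng = random.Random(seed)
    sims = []
    for _ in range(n_sims):
        xs, ys = simulate_crw(step_pool, rho, n_steps, rng)
        sims.append([x ** 2 + y ** 2 for x, y in zip(xs, ys)])   # origin is (0, 0)
    mean, lower, upper = [], [], []
    for k in range(n_steps + 1):
        vals = sorted(sim[k] for sim in sims)
        mean.append(sum(vals) / n_sims)
        lower.append(vals[int(0.025 * (n_sims - 1))])   # nearest-rank percentiles
        upper.append(vals[int(0.975 * (n_sims - 1))])
    return mean, lower, upper

# Hypothetical observed track: 20 perfectly aligned 100 m steps
observed_nsd = [(100.0 * k) ** 2 for k in range(21)]
mean, lower, upper = nsd_envelope(step_pool=[100.0], rho=0.6, n_steps=20)
above = [obs > hi for obs, hi in zip(observed_nsd, upper)]
print(above[-1])   # True: a straight path is more directed than the CRW null
```

Resampling observed steps keeps the empirical step-length distribution; drawing from a fitted gamma or Weibull distribution is an equally reasonable choice.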

Visualizations

Title: Integrated NSD and CRW Analysis Workflow

Title: Interpretation of NSD Time Series Patterns

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Movement Analysis

| Item/Category | Function in Analysis | Example/Note |
|---|---|---|
| High-Resolution GPS Loggers | Primary data collection. Provides time-stamped location fixes. | Must have sufficient fix rate and accuracy for the study species (e.g., 5 min vs. 1 hr intervals). Examples include GPS-Argos and GPS-GSM collars. |
| Movement Ecology R Packages | Statistical computing and modeling. | adehabitatLT (trajectory handling), circular (turning angle statistics), moveHMM (hidden Markov models), amt (animal movement tools). |
| Spatial Analysis Software | Geographic data visualization and GIS operations. | QGIS, ArcGIS for mapping trajectories and environmental covariate extraction. |
| CRW Simulation Code | Generating null models for hypothesis testing. | Custom scripts in R/Python using estimated step length and turning angle distributions. |
| Regularization Algorithm | Interpolates locations to constant time intervals for analysis. | Brownian bridge interpolation, or continuous-time correlated random walk (CTCRW) models fitted with the crawl R package. |
| Statistical Test Suite | Formal testing of directional persistence and model fit. | Rayleigh test (directional data), likelihood ratio tests, Bayesian Information Criterion (BIC) for model selection. |
| Computational Environment | Handling large telemetry datasets and simulations. | High-performance computing clusters may be needed for population-level simulations and Bayesian MCMC methods. |

Spatio-Temporal Point Process Models for Complex Movement Patterns

Within the broader thesis on advancing GPS telemetry data analysis methods in movement ecology, this document establishes rigorous protocols for applying Spatio-Temporal Point Process (STPP) models. These models provide a foundational mathematical framework for deciphering the latent drivers behind observed animal movement sequences, moving beyond descriptive statistics to inferential, mechanism-based understanding. For researchers and drug development professionals, the same methods can support pre-clinical behavioral phenotyping, assessment of drug effects on locomotor patterns, and modeling of disease spread through host movement.

Core Theoretical Framework

A Spatio-Temporal Point Process is characterized by its conditional intensity function, λ(s,t | Hₜ), which gives the expected rate of events (e.g., a GPS fix indicating a turn, acceleration, or residence) at location s and time t, given the history Hₜ of the process up to time t. Informally, λ(s,t | Hₜ) ds dt approximates the expected number of events in a small region ds around s during the interval [t, t + dt). For movement data, events are typically the observed spatio-temporal coordinates (xᵢ, yᵢ, tᵢ) from telemetry.
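For reference, the standard log-likelihood of a point process with conditional intensity λ, observed on a study window W over the period [0, T] with events (sᵢ, tᵢ), i = 1, …, n, is shown below; φ denotes the model parameters (e.g., the β coefficients, or the Hawkes parameters μ, α, δ, σ). For Poisson models the dependence on the history drops out.

```latex
\log L(\phi) \;=\; \sum_{i=1}^{n} \log \lambda\!\left(s_i, t_i \mid H_{t_i}\right)
\;-\; \int_{0}^{T} \int_{W} \lambda\!\left(s, t \mid H_t\right) \mathrm{d}s \, \mathrm{d}t
```

The first term rewards high intensity where events occurred; the integral penalizes intensity placed where no events were observed and is usually approximated numerically (e.g., by quadrature).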

Key model classes include:

- Homogeneous Poisson Process: Constant intensity; events occur independently of one another.

- Hawkes Processes: Model self-excitatory behavior (e.g., clustering of foraging steps).

- Inhomogeneous Poisson Processes: Intensity is a function of spatial and temporal covariates (e.g., habitat, time of day).

- Cox Processes: Intensity is itself a stochastic process, accommodating latent environmental drivers.

STPP models translate complex movement tracks into interpretable parameters quantifying response to environmental gradients and internal state.

Table 1: Common STPP Models and Their Ecological/Drug Research Interpretations

| Model Type | Intensity Function Form | Key Parameters | Movement Ecology Interpretation | Pre-Clinical Research Application |
|---|---|---|---|---|
| Inhomogeneous Poisson | λ(s,t) = exp(β₀ + ΣβᵢXᵢ(s,t)) | Covariate coefficients (βᵢ) | Habitat selection strength, circadian influence. | Drug effect on place preference (e.g., aversion to open areas). |
| Spatio-Temporal Hawkes | λ(s,t) = μ(s,t) + ∫∫ g(s-s', t-t') dN(s',t') | Baseline rate (μ), triggering kernel (g) | Foraging hotspot persistence, social attraction. | Modeling repetitive, stereotypic behaviors induced by a compound. |
| Log-Gaussian Cox (LGCP) | λ(s,t) = exp(βX(s,t) + ξ(s,t)) | Gaussian Process parameters | Response to unmeasured latent spatial resources. | Quantifying unstructured inter-individual variability in locomotor response. |

Table 2: Example Parameter Estimates from Simulated Caribou Movement Data

| Covariate (Xᵢ) | Coefficient (βᵢ) | Std. Error | p-value | Interpretation |
|---|---|---|---|---|
| Intercept (Baseline log-rate) | -3.21 | 0.15 | <0.001 | Baseline movement intensity. |
| Forest Cover (%) | 1.85 | 0.22 | <0.001 | Strong attraction to forest. |
| Distance to Road (km) | 0.92 | 0.18 | <0.001 | Avoidance of roads. |
| Time since Sunrise (hr) | -0.15 | 0.05 | 0.002 | Decreasing activity as day progresses. |

Experimental Protocols

Protocol 4.1: Data Preprocessing for STPP Modeling

Objective: Transform raw GPS telemetry data into a marked spatio-temporal point pattern suitable for STPP analysis.

- Data Cleaning: Import GPS fixes (ID, DateTime, Lat, Lon). Remove 2D fixes with a dilution of precision (DOP) above 5. Remove erroneous fixes with a speed filter (e.g., discard fixes that would require a speed above a species-specific maximum, such as 10 m/s).

- Projection: Project geographic coordinates (Lat/Lon) to an appropriate planar coordinate system (e.g., the local UTM zone) with units in meters.

- Event Definition: Define the "event" of interest. This is often the GPS fix itself for presence models. For activity models, derive new events from steps:

- Turn-angle events: Flag fixes where the absolute relative turning angle exceeds 45°.

- Residence events: Apply a spatial cluster algorithm (DBSCAN) to identify localized fix clusters.

- Covariate Raster Alignment: For each event (x, y, t), extract covariate values (e.g., vegetation index, elevation, human footprint) from spatio-temporally aligned raster stacks using the terra or raster R package.

- Create Point Pattern Object: Assemble the data into a ppp object (R package spatstat, where time stamps are stored as marks) or an stpp object (R package stpp), including coordinates, time stamps, marks (individual ID, derived activity state), and a window (study-area polygon).
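For the residence-event step, a minimal dependency-free DBSCAN sketch is shown below, assuming projected (x, y) coordinates in meters. The eps (neighborhood radius) and min_pts (minimum neighbors, counting the point itself, for a core point) values are illustrative and need tuning for the species and fix rate. Time is ignored, as in the protocol, and this naive O(n²) version only suits small datasets; an established implementation (e.g., scikit-learn's DBSCAN or the R dbscan package) would normally be used.

```python
import math

def dbscan(points, eps, min_pts):
    """Label each planar (x, y) point with a cluster index, or -1 for noise."""
    n = len(points)
    labels = [None] * n                          # None = not yet visited

    def neighbours(i):
        xi, yi = points[i]
        return [j for j in range(n)
                if math.hypot(points[j][0] - xi, points[j][1] - yi) <= eps]

    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        nb = neighbours(i)
        if len(nb) < min_pts:
            labels[i] = -1                       # noise for now; may later become a border point
            continue
        cluster += 1                             # i is a core point: start a new cluster
        labels[i] = cluster
        queue = [j for j in nb if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster              # former noise reachable from a core point: border point
            if labels[j] is not None:
                continue                         # already assigned; border points are not expanded
            labels[j] = cluster
            nb_j = neighbours(j)
            if len(nb_j) >= min_pts:             # j is also a core point: grow through its neighbours
                queue.extend(nb_j)
    return labels

# Hypothetical fixes (meters): two tight clusters and one isolated fix
fixes = [(0, 0), (5, 0), (0, 5), (5, 5), (1000, 1000), (1005, 1000), (1000, 1005), (5000, 0)]
print(dbscan(fixes, eps=10, min_pts=3))   # [0, 0, 0, 0, 1, 1, 1, -1]
```

Each resulting cluster could then be summarized as one residence event, for example by its centroid and its first and last timestamps.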

Protocol 4.2: Fitting an Inhomogeneous Poisson STPP Model

Objective: Model movement intensity as a function of static and dynamic spatial covariates.

- Specify Model Formula: Define the log-linear model for intensity. Example: ~ forest_cover + dist_to_water + cos(2*pi*time_of_day/24) + sin(2*pi*time_of_day/24), where the paired cosine and sine terms (a cosinor term) model diurnal periodicity with a 24-hour period.

- Model Fitting: Use the ppm() function in spatstat for spatial models, or adapt the model to space-time using stpp or inlabru.

- Model Checking: Perform residual analysis (e.g., quadrature residuals) using diagnose.ppm. Test for remaining spatial interaction via the K-function (Kest, or Kinhom for an inhomogeneous intensity); for space-time interaction, use a space-time K-function such as STIKhat in stpp.
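The sketch below reduces Protocol 4.2 to the time dimension for brevity: a log-linear intensity with a cosinor (cosine plus sine) diurnal term, events simulated by Lewis-Shedler thinning, and the Poisson log-likelihood (sum of log-intensities minus the integrated intensity) evaluated with a midpoint rule. Parameter values and function names are hypothetical; this does not reproduce ppm(), which maximizes a quadrature approximation of the same kind of likelihood over space.

```python
import math
import random

def cosinor_intensity(t, beta0, a, b, period=24.0):
    """lambda(t) = exp(beta0 + a*cos(2*pi*t/period) + b*sin(2*pi*t/period)); t in hours."""
    w = 2 * math.pi * t / period
    return math.exp(beta0 + a * math.cos(w) + b * math.sin(w))

def simulate_thinning(intensity, t_max, lam_max, rng):
    """Lewis-Shedler thinning: propose events at constant rate lam_max (>= intensity everywhere)
    and keep each proposal at time t with probability intensity(t) / lam_max."""
    t, events = 0.0, []
    while True:
        t += rng.expovariate(lam_max)
        if t > t_max:
            return events
        if rng.random() < intensity(t) / lam_max:
            events.append(t)

def log_likelihood(events, intensity, t_max, n_grid=10_000):
    """Poisson log-likelihood: sum of log-intensities at events minus the integral of the
    intensity over [0, t_max], approximated with the midpoint rule."""
    dt = t_max / n_grid
    integral = sum(intensity((k + 0.5) * dt) for k in range(n_grid)) * dt
    return sum(math.log(intensity(t)) for t in events) - integral

# Hypothetical parameters: exp(beta0) = 2 events/hr, diurnal peak at t = 0 (mod 24 h), 10 days
beta0, a, b, t_max = math.log(2.0), 1.0, 0.0, 240.0

def lam(t):
    return cosinor_intensity(t, beta0, a, b)

lam_max = math.exp(beta0 + math.hypot(a, b))      # exact upper bound of the cosinor intensity
events = simulate_thinning(lam, t_max, lam_max, random.Random(42))

# Compare with a homogeneous Poisson model at its maximum-likelihood rate n / T
rate_hat = len(events) / t_max
ll_cosinor = log_likelihood(events, lam, t_max)
ll_constant = log_likelihood(events, lambda t: rate_hat, t_max)
print(len(events), ll_cosinor > ll_constant)
```

Replacing the fixed a and b with values chosen to maximize log_likelihood would turn this into a (crude) fitting routine; dedicated tools such as ppm() handle that optimization and the spatial quadrature.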

Protocol 4.3: Fitting a Self-Exciting Hawkes Process Model

Objective: Model movement events where one event increases the probability of subsequent nearby events in time and space (e.g., foraging bursts).

- Kernel Specification: Define a parametric triggering kernel, e.g., an exponential decay in time and Gaussian in space: g(Δt, Δs) = α * exp(-δ * Δt) * (1/(2πσ²)) * exp(-Δs²/(2σ²)).

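- Parameter Estimation: Use maximum likelihood estimation via the hawkes or PtProcess R packages. Both focus mainly on temporal (optionally marked) processes, so the spatial component of the kernel may require a custom likelihood.

- Interpretation: The parameter α indicates the strength of self-excitation, δ the temporal decay rate, and σ the spatial scale of clustering. Because the kernel integrates to α/δ over space and time, α/δ is the expected number of events directly triggered by each event (the branching ratio); values below 1 are required for a stationary process.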
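A minimal sketch that evaluates the conditional intensity of the self-exciting model in Protocol 4.3, using the exponential-in-time, Gaussian-in-space kernel given above and, as a simplifying assumption, a constant background rate μ. Units are hypothetical (σ in meters, time in hours, so λ is in events per m² per hour), as are the function names and example events.

```python
import math

def hawkes_kernel(dt, d2, alpha, delta, sigma):
    """g(dt, ds) = alpha * exp(-delta*dt) * exp(-|ds|^2 / (2 sigma^2)) / (2 pi sigma^2); d2 = |ds|^2."""
    return (alpha * math.exp(-delta * dt)
            * math.exp(-d2 / (2 * sigma ** 2)) / (2 * math.pi * sigma ** 2))

def hawkes_intensity(x, y, t, history, mu, alpha, delta, sigma):
    """lambda(s, t | H_t) = mu + sum of g over events (x_j, y_j, t_j) that occurred strictly before t."""
    total = mu
    for xj, yj, tj in history:
        if tj < t:
            d2 = (x - xj) ** 2 + (y - yj) ** 2
            total += hawkes_kernel(t - tj, d2, alpha, delta, sigma)
    return total

def branching_ratio(alpha, delta):
    """Expected number of events directly triggered by one event (kernel integral alpha/delta)."""
    return alpha / delta

# Hypothetical foraging burst: three events at the same patch, then query the intensity there
history = [(0.0, 0.0, 0.0), (2.0, 1.0, 0.5), (1.0, -1.0, 1.0)]
params = dict(mu=1e-6, alpha=0.4, delta=1.0, sigma=20.0)
print(hawkes_intensity(0.0, 0.0, 1.5, history, **params) > params["mu"])   # True
print(branching_ratio(0.4, 1.0))   # 0.4 (< 1, so the process is stationary)
```

Summing log(hawkes_intensity) over the observed events and subtracting the integrated intensity gives the log-likelihood that the estimation step maximizes.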

Visualizations

Title: STPP Modeling Workflow for Movement Data

Title: Self-Exciting Hawkes Process Mechanism

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for STPP Analysis in Movement Ecology

| Item/Category | Specific Solution/Software Package | Primary Function in STPP Analysis |
|---|---|---|
| Programming Environment | R Statistical Software (spatstat, stpp, inlabru, animove) | Core platform for statistical fitting, simulation, and visualization of point processes. |
| Spatio-Temporal Data Handling | Python (PyTorch, TensorFlow Probability with STPP extensions) | Building custom, deep learning-based STPP models for very large datasets. |
| Bayesian Inference Engine | Stan (brms, spatiotemporal models) | Fitting complex hierarchical STPP models with random effects and sophisticated GP priors. |
| Covariate Data Source | Remote Sensing Rasters (Landsat, MODIS, Copernicus) via Google Earth Engine (rgee) | Provides high-resolution spatial (and temporal) environmental layers for the intensity function λ(s,t). |
| High-Performance Computing | Cloud Compute (Google Cloud VMs, AWS EC2) / Slurm Cluster | Enables fitting computationally intensive LGCP or large Hawkes models via parallelization. |
| Movement Data Repository | Movebank (movebank.org) | Hosts curated animal tracking data with associated environmental layers, useful for model validation. |

Solving Common GPS Data Challenges: Error, Gaps, and Model Fit

Within the broader thesis on GPS telemetry data analysis methods in movement ecology research, managing positional error is paramount for deriving accurate movement paths, home ranges, and behavioral inferences. Two critical components of error management are Dilution of Precision (DOP) metrics, which quantify the geometric quality of the satellite constellation, and speed filters, which identify and remove physiologically implausible locations based on movement rates. The Application Notes below describe how to implement these filters in animal movement research; the same procedures are relevant to other fields that require precise spatial data, such as environmental monitoring and drug development logistics.

Understanding Dilution of Precision (DOP)

DOP Metrics and Interpretation

DOP values are dimensionless multipliers for expected positional error. Lower DOP values indicate superior satellite geometry.
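As a worked illustration under the usual simplified error model, the 1σ horizontal position error is the HDOP multiplied by the user equivalent range error (UERE), the standard deviation of the pseudorange error. The UERE of 5 m used here is an assumption for illustration only; actual values depend on the receiver and the environment (e.g., canopy cover).

```latex
\sigma_{H} \approx \mathrm{HDOP} \times \sigma_{\mathrm{UERE}}
\qquad \text{e.g., } \sigma_{\mathrm{UERE}} = 5\,\mathrm{m}: \quad
\mathrm{HDOP} = 2 \Rightarrow \sigma_{H} \approx 10\,\mathrm{m}, \qquad
\mathrm{HDOP} = 5 \Rightarrow \sigma_{H} \approx 25\,\mathrm{m}
```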

Table 1: Common DOP Metrics and Their Significance

| DOP Metric | Description | Ideal Value | Acceptable Threshold* |
|---|---|---|---|
| GDOP | Geometric DOP (3D position + time) | ≤1 | ≤4 |
| PDOP | Positional DOP (3D position) | ≤1 | ≤5 |
| HDOP | Horizontal DOP (latitude, longitude) | ≤1 | ≤3 |
| VDOP | Vertical DOP (altitude) | ≤1 | ≤4 |
| TDOP | Time DOP (clock bias) | ≤1 | ≤3 |

*Thresholds are generalized; specific research needs may require stricter values.

Protocol: Filtering GPS Data by DOP Values

Objective: To remove GPS fixes with poor satellite geometry to improve overall dataset accuracy.

Materials & Software:

- Raw GPS telemetry data (e.g., CSV, Shapefile).

- Statistical software (R, Python) or GIS software (QGIS, ArcGIS Pro).

- Data from your GPS collars/transmitters must include DOP fields (typically HDOP or PDOP).

Procedure:

- Data Import: Load your GPS dataset, ensuring DOP fields are included.

- Threshold Determination: Consult your GPS device manufacturer's recommendations and review literature for your study species and environment. Establish maximum acceptable HDOP/PDOP thresholds (e.g., HDOP ≤ 5).

- Filter Application: Subset the data to retain only records where the DOP value is less than or equal to your threshold.

- R example: `filtered_data <- raw_data[which(raw_data$HDOP <= 5), ]` (which() drops rows with missing HDOP, which plain logical indexing would return as all-NA rows).

- Python (pandas) example: `filtered_df = raw_df[raw_df['HDOP'] <= 5]`

- Validation: Calculate and report the percentage of fixes removed. Visually inspect remaining fixes on a map for obvious outliers.
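For the validation step, a dependency-free Python sketch that applies the HDOP threshold and reports the percentage of fixes removed. It assumes each fix is a dict with an "HDOP" key (a hypothetical record layout); fixes with a missing or NaN HDOP are treated as failing the filter, which is a conservative assumption.

```python
def filter_by_hdop(fixes, max_hdop=5.0):
    """Keep fixes with HDOP <= max_hdop; fixes with a missing (None) or NaN HDOP are dropped.
    Returns the retained fixes and the percentage removed."""
    kept = [f for f in fixes if f.get("HDOP") is not None and f["HDOP"] <= max_hdop]
    removed_pct = 100.0 * (len(fixes) - len(kept)) / len(fixes) if fixes else 0.0
    return kept, removed_pct

# Hypothetical records
fixes = [{"id": 1, "HDOP": 1.2}, {"id": 2, "HDOP": 7.8}, {"id": 3, "HDOP": None}, {"id": 4, "HDOP": 4.9}]
kept, removed_pct = filter_by_hdop(fixes)
print([f["id"] for f in kept], f"{removed_pct:.1f}% removed")   # [1, 4] 50.0% removed
```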

Speed Filtering Protocols

Theoretical Basis and Threshold Calculation

Speed filters identify and flag fixes that would require an implausible speed to have been traveled from the previous known location. The maximum plausible speed (Vmax) is species- and context-specific.
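A minimal sketch of a sequential speed filter, assuming fixes are (time in seconds, x in meters, y in meters) tuples sorted by time and a Vmax chosen as in the protocol that follows. Each fix is compared with the last retained fix, so a single outlier does not also cause the next valid fix to be flagged. It assumes the first fix is valid; some published filters (e.g., those in the argosfilter R package) also use subsequent fixes or turning angles. The function name and example data are hypothetical.

```python
import math

def speed_filter(fixes, v_max):
    """Sequential speed filter. fixes: list of (t_seconds, x_m, y_m) sorted by time; v_max in m/s.
    A fix is flagged if reaching it from the last retained fix requires a speed above v_max."""
    kept, flagged = [fixes[0]], []              # the first fix is assumed to be valid
    for t, x, y in fixes[1:]:
        t0, x0, y0 = kept[-1]
        dt = t - t0
        if dt <= 0:                              # duplicate or out-of-order timestamp
            flagged.append((t, x, y))
            continue
        speed = math.hypot(x - x0, y - y0) / dt
        if speed > v_max:
            flagged.append((t, x, y))
        else:
            kept.append((t, x, y))
    return kept, flagged

# Hypothetical 10-min fixes with one spurious location (a 59.5 km jump in 10 minutes)
fixes = [(0, 0, 0), (600, 500, 0), (1200, 60000, 0), (1800, 1000, 0)]
kept, flagged = speed_filter(fixes, v_max=10.0)
print([f[0] for f in kept], flagged)   # [0, 600, 1800] [(1200, 60000, 0)]
```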

Protocol: Establishing a Species-Specific Maximum Speed (Vmax)