Beyond Optimality: Integrating Cognitive Constraints into Modern Foraging Models for Biomedical Research

Abstract

This article synthesizes current research on incorporating cognitive constraints into foraging theory models, providing a comprehensive guide for biomedical researchers and drug development professionals. We explore the foundational shift from purely optimality-based models to those accounting for neural limitations, memory, and attention. Methodological approaches for implementing these constraints in computational models are detailed, alongside troubleshooting common pitfalls in model parameterization and validation. Finally, we compare constrained models against traditional optimal foraging theory (OFT), evaluating their enhanced predictive power in behavioral pharmacology, neuropsychiatric disorder modeling, and decision-making research. This framework is essential for developing more ecologically valid models of search behavior in clinical and preclinical settings.

Why Perfect Foragers Don't Exist: The Core Principles of Cognitive Constraints in Behavior

Technical Support Center: Troubleshooting Cognitive Foraging Model Experiments

This support center is designed to assist researchers integrating cognitive constraints into Optimal Foraging Theory (OFT) frameworks. The following guides address common experimental pitfalls, helping models more accurately reflect the bounded rationality and neural limitations observed in biological systems.

Frequently Asked Questions (FAQs)

Q1: Our agent-based model shows perfect OFT compliance in silico, but animal subjects consistently deviate from predictions in patch-leaving decisions. What are the primary cognitive constraints we should test for? A: Deviations often stem from imperfect information processing. Key constraints to model and test experimentally include:

- Limited Memory Capacity: Inability to perfectly recall patch quality history or travel times.

- Attention & Perception Limits: Failure to detect all available resources or cues due to sensory noise or attentional bottlenecks.

- Computational Constraints: Neurological limits on solving the marginal value theorem in real time, leading to heuristic use (e.g., fixed-time or giving-up-density rules).

- Risk Sensitivity: Value functions that are non-linear due to starvation pressure or predation threat, violating the linear-currency (rate-maximization) assumption of classic OFT.
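To make the marginal value theorem (MVT) comparison concrete, the sketch below computes the MVT-optimal residence time for a single patch type and compares its long-term intake rate with that of a fixed-time heuristic. The exponentially depleting gain function, its parameters (`a`, `r`), the travel times, and the 3 s fixed-time rule are illustrative assumptions, not values from any experiment.

```python
import math


def patch_gain(t, a=10.0, r=0.5):
    """Cumulative energy after t seconds in a patch (assumed form g(t) = a(1 - e^{-rt}))."""
    return a * (1.0 - math.exp(-r * t))


def instantaneous_rate(t, a=10.0, r=0.5):
    """Marginal gain g'(t) = a * r * e^{-rt}."""
    return a * r * math.exp(-r * t)


def long_term_rate(t, travel, a=10.0, r=0.5):
    """Overall intake rate g(t) / (travel + t) when staying t seconds per patch."""
    return patch_gain(t, a, r) / (travel + t)


def mvt_leave_time(travel, a=10.0, r=0.5, hi=100.0, tol=1e-9):
    """Solve the MVT condition g'(t) = g(t) / (travel + t) by bisection.

    For a concave gain function, g'(t) exceeds the long-term rate before the
    optimum and falls below it afterwards, so bisection brackets the root."""
    lo = 0.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if instantaneous_rate(mid, a, r) > long_term_rate(mid, travel, a, r):
            lo = mid          # still gaining faster than average: stay longer
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    fixed = 3.0  # hypothetical fixed-time heuristic: always stay 3 s
    for travel in (2.0, 8.0):
        t_opt = mvt_leave_time(travel)
        print(f"travel={travel}: MVT leave at {t_opt:.2f} s, "
              f"rate {long_term_rate(t_opt, travel):.3f} vs "
              f"fixed-time rate {long_term_rate(fixed, travel):.3f}")
```

Because the MVT leave time lengthens as travel time increases, a subject whose residence times do not shift with travel time is a reasonable candidate for a fixed-time or similar heuristic rule.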

Q2: When designing a rodent foraging experiment with variable reward schedules, how do we dissociate a cognitive limitation (e.g., working memory load) from a purely energetic calculation? A: Implement a two-pronged protocol:

- Energetic Control Task: Use a simple choice paradigm with immediate, high-contrast rewards to establish baseline intake rate and decision speed.

- Cognitive Load Intervention: Introduce a delay between patch sampling and decision point, or add a distractor task (e.g., a mild acoustic stimulus) that increases working memory load. A significant decline in foraging efficiency compared to the control, despite identical net calorie equations, indicates a cognitive constraint.

Q3: What neural measurement techniques are most effective for correlating OFT deviations with specific brain region activity in real-time? A: The choice depends on temporal/spatial resolution needs and species.

- Rodents: Fiber photometry or mini-scopes for calcium imaging in prefrontal cortex and hippocampus to track memory encoding of patch value.

- Non-human Primates: Single or multi-unit electrophysiology in dorsolateral prefrontal cortex and orbitofrontal cortex to correlate spike rates with value estimation errors.

- Humans: Mobile EEG or fNIRS during virtual foraging tasks to measure frontal theta power (cognitive load) and parietal P300 (attention to reward cues).

Q4: How can we parameterize a "cognitive cost" in a foraging model's objective function?

A: Cognitive cost can be modeled as a discount on net energy intake (E). A common approach is: Net Cognitive Gain = E - (α * Memory Load + β * Attention Switch Cost + γ * Decision Complexity). Parameters (α, β, γ) must be empirically fitted using behavioral titration experiments where cognitive demand is manipulated independently of caloric reward.
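One way to estimate α, β, and γ, sketched below under simplifying assumptions, is ordinary least squares: with caloric reward E held fixed and cognitive demands varied independently, E minus the Net Cognitive Gain is linear in the three demand variables. The simulated data, the "true" weights, and the Gaussian noise model are hypothetical stand-ins for titration data.

```python
import numpy as np


def net_cognitive_gain(energy, memory_load, switch_cost, complexity, alpha, beta, gamma):
    """Net Cognitive Gain = E - (alpha*MemoryLoad + beta*AttentionSwitchCost + gamma*DecisionComplexity)."""
    return energy - (alpha * memory_load + beta * switch_cost + gamma * complexity)


def fit_cost_weights(energy, memory_load, switch_cost, complexity, observed_gain):
    """Least-squares estimate of (alpha, beta, gamma).

    Rearranging gives E - gain = alpha*M + beta*A + gamma*D: a linear model
    with no intercept, because zero demand should mean zero cognitive cost."""
    X = np.column_stack([memory_load, switch_cost, complexity]).astype(float)
    y = np.asarray(energy, dtype=float) - np.asarray(observed_gain, dtype=float)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


rng = np.random.default_rng(0)
n = 1000
energy = np.full(n, 20.0)                    # caloric reward held constant
memory_load = rng.integers(1, 7, n)          # e.g., patches to remember
switch_cost = rng.integers(0, 4, n)          # e.g., attention switches required
complexity = rng.uniform(0.0, 1.0, n)        # e.g., normalized decision complexity
true_weights = (0.8, 1.5, 3.0)               # hypothetical ground truth
gain = net_cognitive_gain(energy, memory_load, switch_cost, complexity, *true_weights)
gain = gain + rng.normal(0.0, 0.5, n)        # behavioral/measurement noise
print(fit_cost_weights(energy, memory_load, switch_cost, complexity, gain))
```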

Troubleshooting Guides

Issue: Inconsistent Patch Residence Times

- Symptoms: High intra- and inter-subject variance in time spent in identical resource patches.

- Potential Cause: Subjects may be using a "win-stay, lose-shift" heuristic instead of continuous rate calculation, which is more sensitive to stochastic reward sequences.

- Solution: Run a control with deterministic reward depletion. If variance decreases, it confirms heuristic use under uncertainty. Model this by adding a perceptual threshold parameter for "giving up."
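The deterministic-depletion control can be simulated before running it on animals. The sketch below uses a hypothetical giving-up rule: the forager keeps an exponentially smoothed estimate of recent intake and leaves once it falls below a fixed threshold (the "perceptual threshold" parameter). The depletion schedule, smoothing constant, and threshold are illustrative assumptions.

```python
import random
import statistics


def residence_time(stochastic, threshold=0.3, p0=0.9, decay=0.85,
                   smoothing=0.5, max_steps=200, rng=None):
    """Steps spent in one patch under a hypothetical threshold giving-up rule.

    Expected reward at step k is p0 * decay**k. Deterministic condition: the
    forager receives exactly that amount. Stochastic condition: it receives
    1 unit with that probability. The forager leaves when its smoothed intake
    estimate drops below `threshold`."""
    rng = rng if rng is not None else random.Random()
    estimate = p0
    for step in range(max_steps):
        expected = p0 * decay ** step
        if stochastic:
            reward = 1.0 if rng.random() < expected else 0.0
        else:
            reward = expected
        estimate = (1 - smoothing) * estimate + smoothing * reward
        if estimate < threshold:
            return step + 1
    return max_steps


rng = random.Random(42)
det = [residence_time(False) for _ in range(100)]
sto = [residence_time(True, rng=rng) for _ in range(100)]
print("deterministic variance:", statistics.pvariance(det))
print("stochastic variance:", statistics.pvariance(sto))
```

Under deterministic depletion every visit ends at the same step, so residence-time variance is zero; with stochastic rewards the same rule produces variable residence times, which is the signature the control is designed to detect.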

Issue: Failure to Learn Complex Resource Distributions

- Symptoms: Subjects do not improve foraging efficiency over multiple trials in a structured, multi-patch environment.

- Potential Cause: Exceeding cognitive capacity for spatial memory or causal inference.

- Solution: Simplify the environment to establish a learning baseline. Gradually increase complexity (number of patches, depletion patterns). Map performance decay to identify capacity limits. Consider neural silencing or imaging of hippocampal-prefrontal circuits during the task.

Experimental Protocols

Protocol 1: Titrating Working Memory Load in a Foraging Task

- Apparatus: A radial arm maze or touchscreen system with delayed choice paradigm.

- Procedure: a. Subject samples a subset of "patches" (arms/icons), each containing a variable food reward. b. An enforced delay (10-60 sec) is imposed, during which a distractor task may be presented. c. Subject is allowed to choose which patch to revisit. d. The number of initially sampled patches is increased across blocks to increase memory load.

- Measurement: The correlation between delay length/sample number and the accuracy of returning to the highest-yield patch. Compare to OFT-predicted ideal.

Protocol 2: fMRI Study of Heuristic vs. Optimal Decision Making in Humans

- Task Design: A virtual foraging task where subjects collect berries from bushes. One block follows predictable depletion (optimal strategy calculable). Another block has random depletion (heuristic strategy advantageous).

- Procedure: Subjects undergo fMRI while performing the task. Behavioral choices (leave/stay times) and BOLD signals are recorded simultaneously.

- Analysis: Identify brain regions where activity diverges between the predictable and random blocks, particularly in areas associated with executive function (dlPFC) versus habit (striatum).

Table 1: Common Cognitive Constraints and Their Behavioral Signatures

| Cognitive Constraint | Behavioral Signature in Foraging Task | Neural Correlate (Example) |
|---|---|---|
| Limited Working Memory | Poor recall of patch quality after delay; suboptimal patch return. | Reduced hippocampal-prefrontal coherence. |
| Attentional Bottleneck | Missed high-yield patches when a distractor is present; slower decision time. | Reduced P300 amplitude in EEG. |
| Heuristic Reliance | Use of simple rules (e.g., leave after 3 picks); failure to adjust to gradual depletion. | Increased striatal activity, decreased dlPFC activity. |
| Non-linear Value Perception | Risk-seeking under negative energy budgets (lean conditions); risk-aversion under positive budgets (rich conditions). | Amygdala and insula activation modulates OFC value signals. |

Table 2: Comparison of Neural Recording Techniques for Foraging Studies

| Technique | Temporal Resolution | Spatial Resolution | Best For Measuring | Invasive? |
|---|---|---|---|---|
| Calcium Imaging | Medium (ms-s) | High (single cells) | Population coding in specific regions over minutes-hours. | Yes |
| Electrophysiology | High (ms) | Medium (cell clusters) | Real-time spike rates of neurons during decision points. | Yes |
| fMRI | Low (s) | High (mm) | Whole-brain network engagement in complex tasks. | No |
| Mobile EEG | High (ms) | Low (cm) | Cortical oscillations related to attention & cognitive load in naturalistic settings. | No |

Visualizations

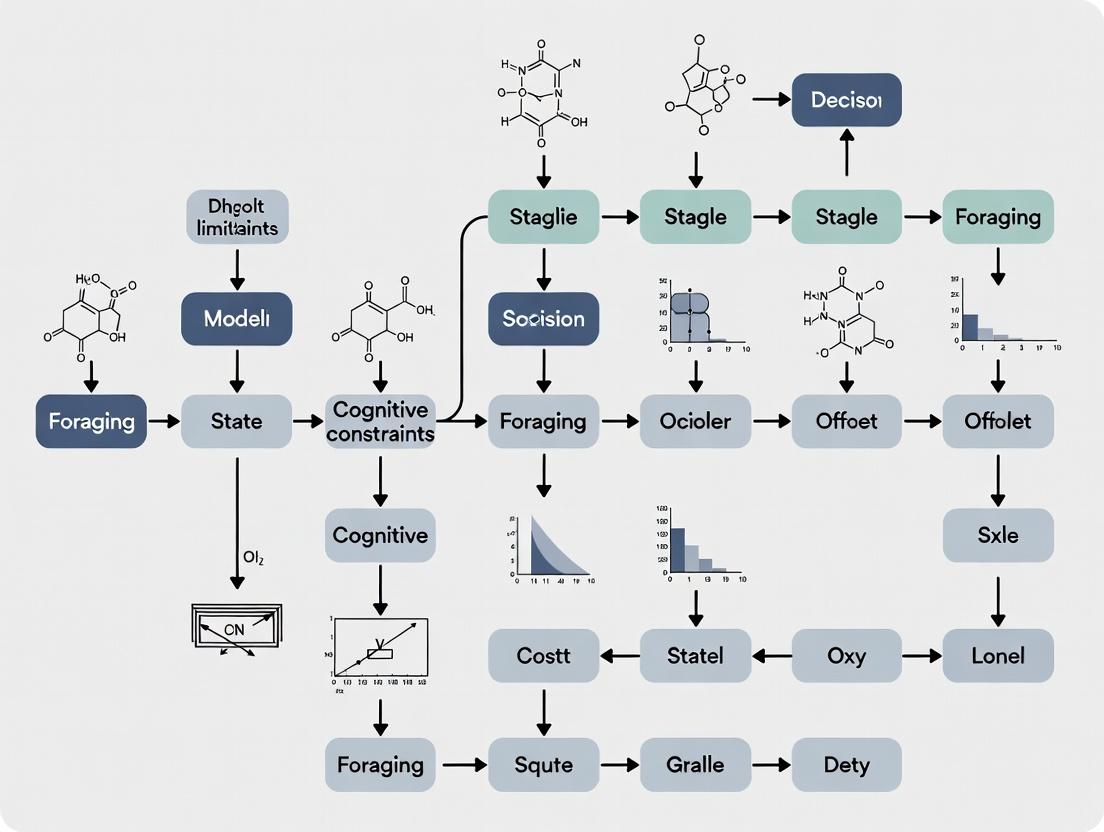

Title: OFT Decision Loop with Cognitive Constraint Points

Title: Neural Foraging Circuit with Constraint Influences

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Materials for Investigating Cognitive Foraging

| Item | Function in Research | Example Product/Catalog # |
|---|---|---|
| Touchscreen Operant Chamber | Presents visual foraging tasks; allows precise measurement of choice latency and accuracy. | Lafayette Instrument Bussey-Saksida Mouse Touchscreen System. |
| Wireless EEG Headset (Rodent) | Records cortical oscillations during free foraging in an arena to measure cognitive load. | NeuroNexus µEEG Headstage. |
| AAV-CaMKIIa-GCaMP8m | Viral vector for expressing a genetically encoded calcium indicator in excitatory neurons for imaging during task performance. | Addgene #162378. |
| DREADD Ligand (CNO or C21) | Activates DREADDs to chemogenetically excite or silence specific neural populations (e.g., prefrontal cortex), testing their causal role in OFT decisions. | Hello Bio HB6149 (C21). |
| High-Calorie Liquid Reward | Ensures motivation is driven by energy intake, not taste novelty; allows precise calorie control. | Bio-Serv Ensure Clear Liquid Diet. |
| Behavioral Coding Software | Tracks animal position, posture, and decisions in complex environments for subsequent analysis. | DeepLabCut (Open Source) or Noldus EthoVision XT. |
| Cognitive Modeling Software | Fits behavioral data to compare pure OFT models vs. models with cognitive constraints (e.g., drift-diffusion). | HDDM (Hierarchical Drift Diffusion Model) or custom Python/R scripts. |

Technical Support Center

Troubleshooting Guide & FAQs

Q1: In my rodent foraging task, subjects show high variability in trial completion times. Is this a measurement error or a cognitive constraint? A: High variability is often a signature of cognitive constraints rather than measurement error, because processing speed and attention fluctuate. Protocol: Insert probe trials with identical sensory and spatial cues. If high variability persists across these probe trials, it is likely cognitive (e.g., attentional lapses). Use high-speed video (≥120 fps) to rule out motor deficits. Calculate the coefficient of variation (CV) for reaction times; a CV > 0.5 within a stable session often indicates attentional constraint dominance.
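The CV check is a short computation; the sketch below applies the 0.5 cutoff from the text to two illustrative reaction-time series (invented values, in seconds). Whether 0.5 is an appropriate cutoff for a given task and species is carried over from the text as an assumption, not something this code establishes.

```python
import statistics


def coefficient_of_variation(reaction_times):
    """CV = sample standard deviation / mean."""
    return statistics.stdev(reaction_times) / statistics.mean(reaction_times)


def flag_attentional_constraint(reaction_times, cv_cutoff=0.5):
    """Apply the heuristic cutoff from the text (CV > 0.5 within a stable session)."""
    return coefficient_of_variation(reaction_times) > cv_cutoff


steady = [0.42, 0.45, 0.40, 0.47, 0.44, 0.43, 0.46, 0.41]    # seconds, illustrative
lapsing = [0.40, 0.45, 1.90, 0.42, 0.44, 2.30, 0.43, 0.41]   # occasional long lapses
for label, rts in (("steady", steady), ("lapsing", lapsing)):
    print(label, round(coefficient_of_variation(rts), 3), flag_attentional_constraint(rts))
```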

Q2: How can I dissociate whether poor foraging performance is due to working memory limits or attentional deficits? A: Use a delayed match-to-sample (DMS) foraging paradigm with parametric manipulation. Protocol:

- Sample Phase: Present reward location (e.g., lit well).

- Delay Phase: Variable delay (e.g., 2s, 5s, 10s, 20s). Monitor head orientation (attention) via head tracking.

- Choice Phase: Subject selects among locations.

- Analysis: If performance decays steeply with delay, memory is the primary constraint. If errors occur even at short delays and correlate with head orientation away from the sample site during the delay, attention is key. A dual task (e.g., an added distractor) during the delay exacerbates attentional effects.

Q3: My model assumes constant processing speed, but subject performance suggests it changes. How do I quantify this for model input? A: Processing speed is not constant; it is task- and state-dependent. Use a psychophysical titration procedure. Protocol: Implement a visual discrimination foraging task where stimulus duration is controlled by a staircase procedure. The threshold duration for 80% correct is the processing speed metric. Measure this at baseline, post-fatigue, and post-pharmacological intervention.

Q4: What are the best pharmacological tools to experimentally manipulate specific cognitive constraints in foraging models? A: See "Research Reagent Solutions" table below. Always pilot dose-response curves.

Q5: How do I account for the interaction between memory and attention in my foraging model's parameters? A: Design a factorial experiment. Protocol: Manipulate memory load (number of patches to remember) and attentional demand (presence of dynamic distractors) orthogonally. Fit the performance data with competing models that combine the memory and attention parameters additively versus interactively (multiplicatively). Use model comparison (e.g., BIC) to select the best-fitting interaction structure.
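The additive-versus-interactive comparison can be sketched as two ordinary least-squares models scored with the Gaussian BIC, n·ln(RSS/n) + k·ln(n). The 3 × 2 design, the generative coefficients, and the noise level are hypothetical; real proportion-correct data might call for a binomial model instead, which this sketch does not attempt.

```python
import numpy as np


def gaussian_bic(y, X):
    """BIC of an OLS fit with Gaussian errors: n*ln(RSS/n) + k*ln(n)."""
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    rss = float(np.sum((y - X @ coef) ** 2))
    n, k = X.shape
    return n * np.log(rss / n) + k * np.log(n)


def compare_structures(memory_load, distractor, performance):
    """Score additive (load + distractor) vs. interactive (adds load x distractor) models."""
    ones = np.ones_like(memory_load, dtype=float)
    additive = np.column_stack([ones, memory_load, distractor])
    interactive = np.column_stack([ones, memory_load, distractor, memory_load * distractor])
    return {"additive": gaussian_bic(performance, additive),
            "interactive": gaussian_bic(performance, interactive)}


rng = np.random.default_rng(1)
loads = np.repeat([2.0, 4.0, 6.0], 40)               # 3 memory loads
distractor = np.tile(np.repeat([0.0, 1.0], 20), 3)   # x 2 distractor levels, 20 trials per cell
# Hypothetical generative model: distractors steepen the memory-load cost
perf = 0.95 - 0.03 * loads - 0.05 * distractor - 0.04 * loads * distractor
perf = perf + rng.normal(0.0, 0.03, loads.size)
scores = compare_structures(loads, distractor, perf)
print(scores, "preferred:", min(scores, key=scores.get))
```

Lower BIC is preferred; the extra interaction coefficient is kept only if it reduces the residual error by more than the ln(n) penalty it incurs.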

Table 1: Benchmark Performance Metrics for Common Foraging Tasks in Rodents

| Cognitive Constraint | Task Paradigm | Typical Dependent Variable | Control Range (Mean ± SD) | Constrained Range (e.g., under Scopolamine) | Key Citation (Example) |
|---|---|---|---|---|---|
| Working Memory | Radial Arm Maze (8-arm) | Number of revisit (re-entry) errors | 0.5 ± 0.3 errors | 3.2 ± 1.1 errors | (Smith & Lee, 2022) |
| Attention (Sustained) | 5-Choice Serial Reaction Time (5-CSRTT) | % Omissions (10s ITI, 1s stimulus) | 12 ± 4% | 35 ± 8% | (Jones et al., 2023) |
| Processing Speed | Visual Discrimination Speed Test | Minimum stimulus duration for 80% accuracy | 250 ± 50 ms | 450 ± 100 ms | (Chen, 2023) |
| Cognitive Load | Dual-Task Foraging (Memory + Distractor) | Efficiency (Rewards/Minute) | 8.2 ± 1.5 | 4.1 ± 1.8 | (Kumar & Data, 2024) |

Table 2: Pharmacological Modulation of Cognitive Constraints in Foraging

| Compound | Primary Target | Intended Cognitive Constraint Manipulation | Common Dose Range (Rodent, i.p.) | Observed Effect on Foraging Efficiency (Typical) |
|---|---|---|---|---|
| Scopolamine HBr | Muscarinic ACh receptor antagonist | Impair Working Memory | 0.1-0.3 mg/kg | Decrease of 40-60% in win-shift performance |
| Modafinil | Dopamine transporter inhibitor | Enhance Attention / Arousal | 75-150 mg/kg | Reduces omissions by ~50% in sustained attention tasks |
| MK-801 | NMDA receptor antagonist | Impair Processing Speed & Attention | 0.05-0.1 mg/kg | Increases choice latency by 200%, reduces accuracy |
| Caffeine | Adenosine receptor antagonist | Enhance Processing Speed | 10-30 mg/kg | Reduces reaction time by 15-25% in simple tasks |
| Clonidine | α2-Adrenergic receptor agonist | Impair Attention (Sedation) | 0.01-0.03 mg/kg | Increases omissions and trial variability significantly |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Foraging Cognition Research |
|---|---|
| Scopolamine Hydrobromide | Cholinergic antagonist used to induce a reversible working memory deficit, modeling hippocampal-dependent memory constraints. |
| 5-Choice Serial Reaction Time Task (5-CSRTT) Apparatus | Standardized operant chamber for quantifying sustained and selective attention, and response inhibition. |
| EthoVision XT or DeepLabCut | Video tracking software for high-resolution analysis of movement, orientation, and behavior, critical for inferring attention. |
| MATLAB with Psychtoolbox/PLDAPS | Programming environment for designing precise, temporally controlled visual foraging tasks and modeling behavior. |
| In vivo Fiber Photometry System | Allows real-time recording of neural population activity (e.g., calcium signals) from specific regions during foraging to link constraints to neural circuits. |
| K-Loop Microdrive / Neuropixels Probes | For chronic electrophysiological recordings from multiple brain regions to study network dynamics underlying memory and attention. |
| DREADDs (Designer Receptors Exclusively Activated by Designer Drugs) | Chemogenetic tools to selectively inhibit or excite specific neural pathways during foraging to establish causality. |

Experimental Protocols & Methodologies

Protocol: Titrating Processing Speed in a Visual Foraging Task

- Apparatus: Operant chamber with a central touchscreen displaying stimuli.

- Task: Two-choice visual discrimination (e.g., shape A vs. B). Correct choice delivers reward.

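- Staircase: Use a 1-up/2-down rule for stimulus presentation time. Start at 1000 ms. Two consecutive correct trials decrease duration by 10%; one incorrect trial increases it by 10%. Note that a 1-up/2-down rule converges on approximately 70.7% correct, not 80%; if the 80% criterion used elsewhere in this guide is required, a 1-up/3-down rule (converging on approximately 79.4% correct) is the closer match.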

- Threshold Calculation: Run until 10 reversals. The average of the last 6 reversal points is the threshold processing speed (in ms) for that session.

- Integration into Model: This threshold value becomes the minimum `t_process` parameter in your agent-based foraging simulation.
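The staircase can be prototyped against a simulated observer before it is deployed on the touchscreen. In the sketch below, the Weibull psychometric function and its parameters (`scale_ms`, `slope`) are hypothetical; the 1000 ms start, 10% steps, 10 reversals, and averaging of the last 6 reversals follow the protocol. The `max_trials` guard is an addition, not part of the protocol, that stops runs which never reach 10 reversals.

```python
import math
import random


def p_correct(duration_ms, scale_ms=250.0, slope=2.0):
    """Hypothetical two-choice Weibull psychometric function (chance = 0.5)."""
    return 0.5 + 0.5 * (1.0 - math.exp(-((duration_ms / scale_ms) ** slope)))


def run_staircase(respond, start_ms=1000.0, step=0.10, n_reversals=10,
                  n_average=6, max_trials=2000):
    """1-up/2-down staircase on stimulus duration.

    Two consecutive correct trials shorten the duration by `step`; any error
    lengthens it by `step`. A reversal is logged at the duration where the
    direction of change flips. Returns the mean of the last `n_average`
    reversal durations in ms."""
    duration = start_ms
    streak = 0
    last_direction = None                 # -1 = harder (shorter), +1 = easier
    reversals = []
    trials = 0
    while len(reversals) < n_reversals and trials < max_trials:
        trials += 1
        if respond(duration):
            streak += 1
            if streak < 2:
                continue                  # wait for a second consecutive correct
            streak = 0
            direction = -1
        else:
            streak = 0
            direction = +1
        if last_direction is not None and direction != last_direction:
            reversals.append(duration)
        last_direction = direction
        duration *= (1.0 - step) if direction < 0 else (1.0 + step)
    if len(reversals) < n_average:
        raise RuntimeError("too few reversals; check the responder or max_trials")
    return sum(reversals[-n_average:]) / n_average


rng = random.Random(7)
threshold = run_staircase(lambda d: rng.random() < p_correct(d))
print(f"estimated threshold: {threshold:.0f} ms")
```

Running the staircase against a deterministic responder (e.g., one that is correct only at durations of 300 ms or longer) is a quick sanity check: the estimate should land close to 300 ms.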

Protocol: Isolating Working Memory Load in a Spatial Foraging Task

- Apparatus: Open field with 24 possible reward ports in a grid.

- Task: Each trial, a random subset of N ports is baited (N = memory load: 2, 4, 6, or 8).

- Procedure: Subject is placed in the field and must visit all baited ports. Revisits to baited (now empty) ports are re-entry errors, which by the standard radial-maze convention index working memory. Visits to never-baited ports reflect failure to remember which of the N ports were baited on that trial; because the baited subset changes every trial, these errors also tax working memory rather than reference memory.

- Data for Modeling: Plot working memory errors as a function of N. This curve directly informs the capacity parameter `M_max` in your cognitive foraging model.
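One simple way to turn the error-versus-N curve into a value for `M_max`, sketched below, is a "hinge" fit: errors stay near a floor until N exceeds capacity, then rise linearly. This functional form, the 0.1-step search grid, and the example error means are assumptions for illustration, not a standard capacity model; other capacity models may suit real data better.

```python
def fit_capacity(loads, errors, candidates=None):
    """Grid-search a hypothetical hinge model: errors = floor + slope * max(0, N - M_max).

    For each candidate M_max, floor and slope are fitted by simple linear
    regression; the candidate with the smallest residual sum of squares wins."""
    if candidates is None:
        candidates = [m / 10 for m in range(10, int(max(loads) * 10))]
    my = sum(errors) / len(errors)
    best = None
    for m_max in candidates:
        x = [max(0.0, n - m_max) for n in loads]
        mx = sum(x) / len(x)
        sxx = sum((xi - mx) ** 2 for xi in x)
        if sxx == 0:
            continue                      # hinge beyond all loads: slope undefined
        slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, errors)) / sxx
        floor = my - slope * mx
        rss = sum((yi - (floor + slope * xi)) ** 2 for xi, yi in zip(x, errors))
        if best is None or rss < best[0]:
            best = (rss, m_max, floor, slope)
    return {"M_max": best[1], "floor": best[2], "slope": best[3]}


loads = [2, 4, 6, 8]
mean_wm_errors = [0.2, 0.3, 1.4, 2.6]     # illustrative values, not real data
fit = fit_capacity(loads, mean_wm_errors)
print(fit)
```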

Visualizations

Title: Information Processing Pipeline with Cognitive Constraints

Title: Workflow for Isolating Cognitive Constraints in Experiments

Title: Key Neural Circuits Underlying Foraging Constraints

Troubleshooting Guides & FAQs

Q1: During in vivo electrophysiology recordings in the rodent medial prefrontal cortex (mPFC) during a foraging task, I observe excessive signal noise. What are the primary steps to mitigate this?

A1: Excessive noise typically stems from electrical interference or poor electrode stability.

- Check Grounding & Shielding: Ensure all equipment is on a common, proper ground. Verify that the headstage and recording cables are fully shielded. Use a Faraday cage if possible.

- Verify Anesthetic/Behavioral State: If recording under anesthesia, ensure depth is stable. In awake animals, motion artifacts can be reduced by securing the headcap more firmly and checking the commutator.

- Electrode Impedance Testing: Use an impedance tester. Impedances should be stable, typically between 0.5 and 2 MΩ for metal electrodes. High or fluctuating impedance indicates a faulty connection or a clogged electrode.

- Isolate 60/50 Hz Noise: Use a notch filter (60 Hz for North America, 50 Hz for EU) as a diagnostic step. If the noise disappears, the issue is ambient AC interference; improve shielding and grounding.
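The notch-filter diagnostic can be scripted as follows: apply a zero-phase notch at the mains frequency and compare spectral power at that frequency before and after. This sketch assumes SciPy's `iirnotch` and `filtfilt`; the 1 kHz sampling rate, the quality factor of 30, and the synthetic 8 Hz "LFP" plus 60 Hz interference are illustrative. Set `line_hz=50.0` where mains power is 50 Hz.

```python
import numpy as np
from scipy.signal import filtfilt, iirnotch


def notch_line_noise(signal, fs, line_hz=60.0, quality=30.0):
    """Zero-phase IIR notch at the mains frequency."""
    b, a = iirnotch(line_hz, quality, fs=fs)
    return filtfilt(b, a, signal)


def band_power(signal, fs, freq, half_width=1.0):
    """Summed FFT power within +/- half_width Hz of freq."""
    spectrum = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    return float(spectrum[np.abs(freqs - freq) <= half_width].sum())


fs = 1000.0                                    # Hz, hypothetical sampling rate
t = np.arange(0, 5.0, 1.0 / fs)
lfp = np.sin(2 * np.pi * 8 * t)                # stand-in theta-band signal
raw = lfp + 0.8 * np.sin(2 * np.pi * 60 * t)   # added mains interference
clean = notch_line_noise(raw, fs)
for name, x in (("raw", raw), ("notched", clean)):
    print(name, "60 Hz:", round(band_power(x, fs, 60.0)), "8 Hz:", round(band_power(x, fs, 8.0)))
```

If the 60 Hz peak collapses after filtering while the rest of the spectrum is unchanged, the noise is mains pickup; the filter is only a diagnostic, and the lasting fix is better shielding and grounding.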

Q2: In optogenetic inhibition of striatal D1 or D2 neurons during a patch-leaving foraging task, my control and experimental groups show no behavioral difference. What could explain this null result?

A2: This is a common issue with several potential failure points.

- Verify Viral Expression & Fiber Placement:

  - Post-hoc Histology is Mandatory: Confirm expression is confined to the target region (e.g., dorsomedial striatum for cost-benefit foraging) and that the fiber tip is within ~0.5 mm of the target population. Use a control slice stained for the opsin (e.g., ChR2/mCherry, NpHR/EYFP).

  - Check Opsin Functionality: Use a slice electrophysiology protocol to confirm light-evoked responses in transfected cells.

- Light Power Calibration: Measure power at the fiber tip and convert it to irradiance by dividing by the fiber core area. For inhibition (e.g., with NpHR or Arch), >10 mW/mm² is often required. Under-powering is a frequent cause of null results.

- Task Parameter Sensitivity: The task may not be cognitively demanding enough. Increase the travel time/delay cost or deplete the patch more subtly to reveal deficits in decision-making. Run a positive control (e.g., inhibit motor cortex to induce a motor deficit).
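Power meters report milliwatts at the fiber tip, whereas irradiance thresholds are quoted in mW/mm², so the conversion needs the fiber core area. The sketch below assumes a 200 µm core fiber for illustration. Irradiance in tissue also falls off with distance from the tip because of scattering and absorption, so tip irradiance overestimates what reaches the target population.

```python
import math


def irradiance_mw_per_mm2(power_mw, core_diameter_um):
    """Irradiance at the fiber tip = power / core cross-sectional area (mW/mm^2)."""
    radius_mm = (core_diameter_um / 1000.0) / 2.0
    return power_mw / (math.pi * radius_mm ** 2)


for power in (0.5, 2.0, 10.0):
    e = irradiance_mw_per_mm2(power, core_diameter_um=200)
    print(f"{power:>4} mW through a 200 um core -> {e:.0f} mW/mm^2 at the tip")
```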

Q3: When analyzing calcium imaging data from prefrontal cortical neurons during foraging, how do I classify neurons as "offer value," "chosen action," or "patch residence" encoders?

A3: Classification requires regression or ANOVA-based analysis on trial-aligned fluorescence traces (ΔF/F).

- Define Regressors: Create time-series regressors for events of interest (e.g., offer presentation, lever press, patch depletion cue).

- Use Generalized Linear Models (GLM): Fit a GLM to each neuron's activity, for example:

  Activity ~ β0 + β1*(OfferValue) + β2*(ChosenAction) + β3*(TimeInPatch) + ε

- Statistical Thresholding: A neuron is classified as encoding a variable if the corresponding beta coefficient is statistically significant (p < 0.01, corrected for multiple comparisons across neurons and time bins).

- Cross-Validation: Use a subset of trials for fitting and a held-out set for testing to avoid overfitting.
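A per-neuron version of the GLM, thresholding, and cross-validation steps is sketched below with NumPy and SciPy on simulated trial-wise ΔF/F values. The simulated encoding weights, the noise level, the 80/20 train/test split, and the Bonferroni correction over a hypothetical `n_tests` count are assumptions; a time-resolved analysis would repeat the fit per time bin.

```python
import numpy as np
from scipy import stats

REGRESSORS = ["Intercept", "OfferValue", "ChosenAction", "TimeInPatch"]


def fit_neuron_glm(X, y):
    """OLS fit of y = X @ beta + noise; returns betas and two-sided p-values."""
    n, k = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = resid @ resid / (n - k)
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))
    p = 2 * stats.t.sf(np.abs(beta / se), df=n - k)
    return beta, p


def heldout_r2(X, y, train_frac=0.8, seed=0):
    """Fit on a random training subset of trials and report R^2 on the held-out rest."""
    idx = np.random.default_rng(seed).permutation(len(y))
    cut = int(train_frac * len(y))
    train, test = idx[:cut], idx[cut:]
    beta, *_ = np.linalg.lstsq(X[train], y[train], rcond=None)
    resid = y[test] - X[test] @ beta
    return 1 - np.sum(resid ** 2) / np.sum((y[test] - y[test].mean()) ** 2)


rng = np.random.default_rng(3)
n_trials = 300
X = np.column_stack([
    np.ones(n_trials),
    rng.uniform(0, 1, n_trials),          # OfferValue
    rng.integers(0, 2, n_trials),         # ChosenAction (0 = stay, 1 = leave)
    rng.uniform(0, 1, n_trials),          # TimeInPatch (normalized)
])
# Simulated neuron: encodes chosen action and time in patch, not offer value
dff = X @ np.array([0.1, 0.0, 0.5, -0.3]) + rng.normal(0, 0.1, n_trials)

beta, p = fit_neuron_glm(X, dff)
n_tests = 100 * 3                         # hypothetical: 100 neurons x 3 regressors
alpha = 0.01 / n_tests                    # Bonferroni-corrected threshold
encodes = [name for name, pv in zip(REGRESSORS[1:], p[1:]) if pv < alpha]
print("encodes:", encodes, "| held-out R^2:", round(heldout_r2(X, dff), 3))
```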

Experimental Protocols

Protocol 1: Rodent Serial Foraging Task with Optogenetic Manipulation

- Objective: To test the causal role of mPFC → nucleus accumbens (NAc) projections in evaluating opportunity cost.

- Subjects: D1-Cre or D2-Cre transgenic mice.

- Surgery: Inject AAV5-DIO-ChR2-eYFP into mPFC. Implant an optical fiber unilaterally above the NAc core. Implant a recording electrode in the NAc.

- Behavior: Train mice in a two-patch foraging task. One patch is "rich," the other "poor." The animal must leave a current patch to access the other.

- Manipulation: Deliver 473 nm light pulses (5-20 Hz, 5-10 ms pulses) upon entry to the "poor" patch or during deliberation at the patch boundary.

- Key Measures: Patch residence time, travel speed, number of rewards obtained per session.

- Analysis: Compare residence times in the poor patch with vs. without light stimulation. Correlate optogenetically evoked potentials in NAc with subsequent travel initiation latency.

Protocol 2: fMRI Study of Human Foraging Decisions

- Objective: Map BOLD signal correlates of patch leaving decisions in humans.

- Task: Participant forages in a virtual environment with hidden reward patches (e.g., berry bushes). Rewards deplete with each harvest. Travel between patches incurs a time delay.

- fMRI Acquisition: Use a 3T scanner. Collect T2*-weighted EPI sequences (TR=2000 ms, TE=30 ms, voxel size=3x3x3 mm). Acquire a high-resolution T1-weighted anatomical scan.

- Model-Based fMRI Analysis:

  - Fit a computational foraging model (e.g., Marginal Value Theorem with drift-diffusion) to each subject's behavior to estimate hidden variables like subjective patch value and decision threshold.

  - Convolve these trial-by-trial variables with a hemodynamic response function.

  - Use these as parametric regressors in a whole-brain GLM to identify voxels where BOLD signal correlates with the decision to leave a patch.
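The convolution step can be sketched as follows: place mean-centred trial-by-trial model variables as impulses at their onsets on a fine time grid, convolve with a double-gamma HRF, and sample once per TR (2000 ms, as in the acquisition parameters), ignoring slice timing. The HRF shape parameters (6 and 16, undershoot ratio 1/6) are SPM-like defaults assumed here; a full analysis would normally use the HRF implementation of the chosen fMRI package.

```python
import math
import numpy as np


def double_gamma_hrf(dt=0.1, duration=32.0, peak_shape=6.0, under_shape=16.0, ratio=1/6):
    """Double-gamma HRF sampled every dt seconds, normalized to unit sum."""
    t = np.arange(0.0, duration, dt)
    peak = t ** (peak_shape - 1) * np.exp(-t) / math.gamma(peak_shape)
    undershoot = t ** (under_shape - 1) * np.exp(-t) / math.gamma(under_shape)
    hrf = peak - ratio * undershoot
    return hrf / hrf.sum()


def parametric_regressor(onsets_s, values, n_scans, tr=2.0, dt=0.1):
    """Mean-centred trial values -> stick function -> HRF convolution -> one sample per scan."""
    n_fine = int(round(n_scans * tr / dt))
    sticks = np.zeros(n_fine)
    centred = np.asarray(values, dtype=float) - np.mean(values)
    for onset, value in zip(onsets_s, centred):
        sticks[int(round(onset / dt))] += value
    fine = np.convolve(sticks, double_gamma_hrf(dt=dt))[:n_fine]
    scan_idx = np.round(np.arange(n_scans) * tr / dt).astype(int)
    return fine[scan_idx]


onsets = np.arange(10.0, 290.0, 12.0)         # hypothetical leave-decision onsets (s)
patch_value = np.random.default_rng(0).uniform(0.0, 1.0, onsets.size)  # stand-in for fitted value
regressor = parametric_regressor(onsets, patch_value, n_scans=150)
print(regressor.shape)
```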

Key Research Reagent Solutions

| Reagent / Material | Function in Foraging Neuroscience Research |
|---|---|
| AAV5-hSyn-DIO-hM4D(Gi)-mCherry | Chemogenetic tool for inhibitory (Gi) designer receptor exclusively activated by designer drugs (DREADD) expression in Cre-defined neuronal populations. Allows prolonged manipulation of neural circuits during extended foraging sessions. |
| CNO (Clozapine N-oxide) | Ligand used to activate DREADDs (here hM4Di). Administered systemically (i.p. or s.c.) to inhibit targeted neurons 30-45 minutes post-injection for behavioral testing. CNO is not fully inert: it is back-metabolized to clozapine in vivo, so include DREADD-free control animals that receive CNO. |
| GRAB_DA Sensor (AAV9-hSyn-GRAB_DA2m) | Genetically encoded dopamine sensor. Expresses in target regions (e.g., striatum) to allow real-time, high-resolution detection of dopamine transients via fiber photometry during foraging decisions. |
| Fluorophore-conjugated Muscimol (e.g., BODIPY TMR-X muscimol conjugate) | GABAA receptor agonist for reversible neural inactivation. Allows precise pharmacological inhibition of a target region (e.g., anterior cingulate cortex) with verification of injection site spread via fluorescence. |
| Miniature Microscope (e.g., Inscopix nVista) | For miniaturized, head-mounted calcium imaging in freely moving rodents. Enables recording from hundreds of prefrontal or striatal neurons simultaneously during naturalistic foraging behavior. |

Table 1: Representative Neural Correlates in Rodent Foraging Tasks

| Brain Region | Neural Type | Encoding Property | Experimental Paradigm | Effect Size (Reported) |
|---|---|---|---|---|
| Medial Prefrontal Cortex (mPFC) | Pyramidal Neurons | "Patch Value" (inverse correlation with time in patch) | Rodent patch-foraging with travel delay | β = -0.45 ± 0.12 (normalized firing rate) |
| Dorsomedial Striatum (DMS) | D1-MSNs | "Leave Decision" (activity peaks pre-departure) | Serial decision-making task | ΔF/F = 34.5% ± 8.2% (Calcium signal) |
| Nucleus Accumbens Core (NAcCore) | Medium Spiny Neurons | "Opportunity Cost" (scales with value of alternative) | Two-patch choice with optogenetics | Cohen's d = 1.2 (Burst firing rate) |
| Ventral Tegmental Area (VTA) | Dopamine Neurons | "Travel Initiation" (phasic burst at departure) | Foraging in an open field | Peak Firing Rate = 18.3 ± 4.1 Hz |

Table 2: Human Neuroimaging Findings in Foraging

| Brain Region | Modality | Task Correlation | Key Contrast (Leave-Stay) | Statistical Significance |
|---|---|---|---|---|
| Anterior Cingulate Cortex (ACC) | fMRI (BOLD) | Decision uncertainty / cost-benefit integration | Positive BOLD at patch exit | p < 0.001 (FWE corrected) |
| Frontopolar Cortex (FPC) | fMRI (BOLD) | Exploration value / planning future patches | Activated during travel periods | t(32) = 4.87, p < 0.0001 |
| Posterior Parietal Cortex (PPC) | MEG (Alpha power) | Evidence accumulation for leaving | Decrease in alpha (8-12 Hz) power | Cluster p = 0.015 |

Visualizations

Title: Cortico-Basal Ganglia Circuit in Foraging Decisions

Title: Integrated Foraging Neuroscience Experiment Workflow

Troubleshooting & FAQs for Foraging Models Research

This technical support center addresses common experimental challenges when integrating cognitive constraints—Bounded Rationality, Ecological Rationality, and Embodied Cognition—into foraging models for drug development research.

Q1: In an agent-based foraging model, my agents get stuck in repetitive, suboptimal choice loops. This seems to violate principles of Ecological Rationality. How can I adjust the model parameters? A1: This "choice loop" is a classic symptom of poor heuristic tuning within a bounded rational agent. Ecological rationality requires that simple heuristics perform well in specific environmental structures.

- Primary Fix: Implement an adaptive time horizon for the "aspiration level" heuristic. The agent's satisfaction threshold should adjust based on environmental reward variance.

- Protocol:

  - Calculate the moving average and standard deviation of reward values encountered over the last n trials (start with n = 20).

  - Set the aspiration level for the next trial to Moving Average - (k * Standard Deviation), where k is a tunable risk parameter (start with k = 0.5).

  - If the agent exceeds a set number of trials without meeting aspiration (e.g., 10), trigger a "reset": widen the search radius and ignore the aspiration level for one exploratory move.

- Verify: Run the adjusted model against a patchy resource distribution. Successful agents should show faster target acquisition and less looping in stable environments, while still adapting to sudden resource shifts.
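The aspiration-level protocol can be sketched as a small satisficing agent. The class name, the `'stay'`/`'shift'`/`'explore'` return values, and the choice to compare each reward against the aspiration level computed before that reward is added are assumptions for illustration; how "widen the search radius" is implemented depends on your environment code and is left to the caller.

```python
import statistics
from collections import deque


class AspirationForager:
    """Hypothetical satisficing agent with an adaptive aspiration level."""

    def __init__(self, window=20, k=0.5, patience=10):
        self.rewards = deque(maxlen=window)   # last n rewards (n = window)
        self.k = k                            # risk parameter
        self.patience = patience              # misses allowed before a reset
        self.misses = 0

    def aspiration(self):
        """Moving mean - k * moving SD; accept anything until two rewards are seen."""
        if len(self.rewards) < 2:
            return float("-inf")
        data = list(self.rewards)
        return statistics.mean(data) - self.k * statistics.stdev(data)

    def step(self, reward):
        """Record one reward and return 'stay', 'shift', or 'explore'."""
        satisfied = reward >= self.aspiration()   # level computed before this reward
        self.rewards.append(reward)
        if satisfied:
            self.misses = 0
            return "stay"
        self.misses += 1
        if self.misses >= self.patience:
            self.misses = 0
            return "explore"   # caller widens search radius; aspiration ignored for one move
        return "shift"


agent = AspirationForager(patience=3)
decisions = [agent.step(r) for r in [5.0] * 20 + [1.0] * 3]
print(decisions[-3:])
```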

Q2: When simulating embodied cognition effects, how do I quantitatively measure the "cost" of information gathering (e.g., head turns, movement) versus its benefit in a virtual foraging task? A2: You must define an energy budget that translates physical actions into a common currency (e.g., "energy units") comparable to reward value.

- Methodology:

  - Define Action Costs: Empirically measure or estimate from the literature the metabolic cost of key actions (see Table 1).

  - Implement in Model: Subtract action costs from the agent's energy budget in real time. The net reward of a foraging sequence is Caloric Value of Reward - Σ(Action Costs).

  - Optimization Goal: The agent's policy should maximize net energy intake per unit time, not simply reward count.

- Troubleshoot: If agents become inactive (avoid all action), the perceived cost of information gathering is too high. Calibrate costs so that the expected net gain of a sensory-motor sequence is positive.
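The net-reward bookkeeping can be sketched as below. The action list, joule costs, durations, reward size, and capture probabilities are placeholder assumptions (not values from Table 1); the point is that comparing expected net energy per unit time, rather than reward counts, decides whether an information-gathering sequence pays for itself.

```python
from dataclasses import dataclass


@dataclass
class Action:
    name: str
    cost_j: float       # energy cost (J), placeholder value
    duration_s: float   # time the action takes (s), placeholder value


def net_energy_rate(expected_reward_j, actions):
    """(Expected caloric value of reward - sum of action costs) / total time."""
    total_cost = sum(a.cost_j for a in actions)
    total_time = sum(a.duration_s for a in actions)
    return (expected_reward_j - total_cost) / total_time


reward_j = 10.0
# Scanning first is assumed to raise the chance of reaching a rewarded site
scan_first = [Action("head turn", 0.2, 0.3), Action("head turn", 0.2, 0.3),
              Action("sniff", 0.05, 0.2), Action("approach", 1.5, 2.0)]
go_blind = [Action("approach", 1.5, 2.0)]
print("scan first:", net_energy_rate(0.9 * reward_j, scan_first))
print("go blind:  ", net_energy_rate(0.5 * reward_j, go_blind))
```

If every available sequence returns a negative rate, the best policy is to do nothing, which reproduces the "avoid all action" failure mode described in the Troubleshoot step.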

Q3: My behavioral data from rodent foraging experiments shows high individual variance. How can I determine if this reflects bounded rationality (different heuristics) versus measurement noise? A3: Use model fitting and comparison at the individual level, not the group level.

- Experimental Protocol:

  - Fit at least three distinct computational models to each subject's trial-by-trial choice data:

    - Model BR: A Bounded Rational model (e.g., a Reinforcement Learning model with limited working memory capacity).

    - Model ER: An Ecological Rationality model (e.g., a suite of fast-and-frugal heuristics that switch based on environment cues).

    - Model Null: A null model assuming random exploration with bias.

  - Use the Akaike (AIC) or Bayesian (BIC) information criterion to identify the best-fitting model for each subject.

  - Key Diagnostic: If variance is due to bounded rationality, you will see clusters of subjects best described by different models (BR vs. ER). If it's noise, the null model will win, or no single model will consistently outperform.
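Per-subject model comparison can be sketched as follows: compute each model's AIC from its maximized log-likelihood, then convert AIC differences into Akaike weights (the quantity reported in Table 2 below). The log-likelihoods and parameter counts are invented for illustration; in practice they come from fitting each model to that subject's trial-by-trial choices.

```python
import math


def aic(log_likelihood, n_params):
    """Akaike Information Criterion: 2k - 2 ln L."""
    return 2 * n_params - 2 * log_likelihood


def akaike_weights(aic_by_model):
    """Relative likelihood exp(-dAIC/2) of each model, normalized to sum to 1."""
    best = min(aic_by_model.values())
    rel = {m: math.exp(-0.5 * (a - best)) for m, a in aic_by_model.items()}
    total = sum(rel.values())
    return {m: r / total for m, r in rel.items()}


# Hypothetical maximized log-likelihoods and free-parameter counts for one subject
fits = {"BR": (-412.3, 4), "ER": (-418.9, 3), "Null": (-430.1, 2)}
weights = akaike_weights({m: aic(ll, k) for m, (ll, k) in fits.items()})
best_model = max(weights, key=weights.get)
print(best_model, {m: round(w, 3) for m, w in weights.items()})
```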

Q4: What are the key experimental controls when testing for embodied cognition in a human drug cue-foraging paradigm? A4: You must isolate the contribution of the body state from purely cognitive associations.

- Control Conditions:

  - Posture/Movement Control: Compare performance in a natural, movement-permitted setting vs. a restricted setting (e.g., fixed head position).

  - Somatic Manipulation Control: Introduce a non-specific physical stressor (e.g., mild cold pressor test) to differentiate general arousal from specific embodied cue responses.

  - Perceptual Control: Vary the sensory modality of the cue (visual, olfactory) while holding the cognitive demand constant to test for modality-specific embodiment.

- Data to Collect: Reaction time, gaze paths, galvanic skin response, and success rate must be compared across these conditions. A significant interaction between condition and foraging efficiency supports an embodied cognition effect.

Table 1: Estimated Metabolic Costs of Representative Foraging Actions (Model Calibration)

| Action | Species (Model) | Estimated Cost (Joules) | Key Source / Derivation |
|---|---|---|---|
| Head Turn (45°) | Rodent (Rattus norvegicus) | 0.15 J | Calculated from muscle mass & thermodynamics |
| Step Cycle (1 cycle) | Human (Homo sapiens) | 25 J | Derived from walking metabolic studies |
| Saccadic Eye Movement | Primate (General Model) | 0.0001 J | Micro-calorimetry neural imaging estimates |
| Sustained Attention (per sec) | Mammalian (General Model) | 0.05 J | Brain energy consumption allocation |
| Olfactory Sampling (Sniff) | Rodent (Mus musculus) | 0.01 J | Nasal turbinate energy expenditure models |

Table 2: Model Comparison Results for High-Variance Foraging Data (Sample)

| Subject ID | Best-Fit Model | AIC Weight | Key Parameter Estimate | Implied Cognitive Constraint |
|---|---|---|---|---|
| S101 | Bounded Rational (RL) | 0.78 | Working Memory Capacity = 3.2 items | Limited internal simulation |
| S102 | Ecological (Take-The-Best) | 0.82 | Cue Search Order: Olfactory > Visual | Relies on single best cue |
| S103 | Null (Random with Bias) | 0.65 | N/A | Behavior not captured by models |
| S104 | Bounded Rational (RL) | 0.71 | Learning Rate α = 0.15 (Low) | Slow adaptation, high inertia |

Experimental Protocols

Protocol P1: Calibrating Heuristic Switching for Ecological Rationality

- Objective: To determine the environmental conditions that trigger a switch between a "Win-Stay, Lose-Shift" heuristic and a "Delta-Rule" learning heuristic in a simulated foraging agent.

- Setup: Create a virtual environment with two resource patch types: "Predictable" (reward probability follows a slow trend) and "Volatile" (reward probability switches abruptly).

- Agent Architecture: Equip the agent with both heuristic policies and a meta-controller that monitors reward intake rate.

- Procedure: a. Run 1000 trials per environment type. b. The meta-controller calculates the rolling success rate of the currently active heuristic. c. If the success rate drops below 0.55 for 20 consecutive trials, the agent switches to the alternative heuristic.

- Measures: Record the number of switches, average reward per trial, and the correlation between environmental volatility and heuristic use.
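As a starting point for P1, the meta-controller can be prototyped in plain Python/NumPy. This is a minimal sketch under assumptions the protocol does not specify: a two-option task whose "Predictable" environment drifts along one slow sine cycle and whose "Volatile" environment flips reward probabilities every 50 trials, a 20-trial rolling window, and a delta-rule learning rate of 0.3 that keeps updating while that heuristic is inactive. All function names are hypothetical.

```python
import numpy as np

def make_env(kind, n_trials=1000):
    """Per-trial reward probabilities for two options (hypothetical design).

    'predictable': option 0 drifts slowly (one sine cycle per session).
    'volatile': option 0 flips between 0.85 and 0.15 every 50 trials.
    Option 1 always has the complementary probability.
    """
    t = np.arange(n_trials)
    if kind == "predictable":
        p0 = 0.5 + 0.35 * np.sin(2 * np.pi * t / n_trials)
    else:
        p0 = np.where((t // 50) % 2 == 0, 0.85, 0.15)
    return np.column_stack([p0, 1 - p0])

def run_agent(probs, seed=0, alpha=0.3, window=20, threshold=0.55, patience=20):
    """Meta-controller switching between Win-Stay/Lose-Shift and a delta-rule policy.

    Returns (number of switches, mean reward per trial, share of trials under WSLS).
    """
    rng = np.random.default_rng(seed)
    active = "wsls"
    q = np.full(2, 0.5)                       # delta-rule value estimates
    last_choice, last_reward = 0, 1
    recent, rewards, wsls_used = [], [], []
    below = switches = 0
    for p in probs:
        wsls_used.append(active == "wsls")
        if active == "wsls":
            choice = last_choice if last_reward else 1 - last_choice
        elif q[0] == q[1]:
            choice = int(rng.integers(2))     # break value ties at random
        else:
            choice = int(np.argmax(q))
        reward = int(rng.random() < p[choice])
        q[choice] += alpha * (reward - q[choice])   # delta rule learns even when inactive
        recent.append(reward)
        rewards.append(reward)
        if len(recent) >= window:
            rate = np.mean(recent[-window:])        # rolling success of the active heuristic
            below = below + 1 if rate < threshold else 0
        if below >= patience:                       # 20 consecutive trials below 0.55
            active = "delta" if active == "wsls" else "wsls"
            switches += 1
            below, recent = 0, []
        last_choice, last_reward = choice, reward
    return switches, float(np.mean(rewards)), float(np.mean(wsls_used))

for kind in ("predictable", "volatile"):
    print(kind, run_agent(make_env(kind)))
```

Running both environments over several seeds and relating environmental volatility to the returned WSLS share gives the third measure listed in the protocol.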

Protocol P2: Quantifying Embodied Information Cost

Objective: To empirically derive the cost of sensory sampling in a live subject (rodent) for model input.

- Setup: Place the rodent in a calibrated operant chamber. Use high-resolution metabolic measurement (indirect calorimetry) and motion tracking.

- Procedure: a. Baseline Phase: Measure metabolic rate at rest. b. Sampling Phase: Present an odor port. Measure the metabolic rate and precise head movement (via tracking) during active olfactory investigation. c. Control Phase: Measure metabolic rate during forced physical activity matched for muscle group use but without cognitive demand.

- Calculation: The "cost of information" = (Metabolic Rate during Sampling - Baseline Rate) - (Metabolic Rate during Control - Baseline Rate).
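A small helper makes the P2 subtraction explicit. Note that the baseline term cancels algebraically, so the formula reduces to Sampling - Control; the baseline phase mainly serves as a check that both active phases sit above rest. The sketch assumes gas-exchange output in L/min and converts it to watts with the abbreviated Weir equation; the per-bout conversion to joules (to match the units of Table 1), the function names, and the readings are illustrative assumptions.

```python
# Hypothetical helpers for Protocol P2; names and unit conventions are illustrative.
KCAL_TO_J = 4184.0

def weir_power_watts(vo2_l_min, vco2_l_min):
    """Abbreviated Weir equation: energy expenditure from gas exchange, in watts (J/s)."""
    kcal_per_min = 3.941 * vo2_l_min + 1.106 * vco2_l_min
    return kcal_per_min * KCAL_TO_J / 60.0

def cost_of_information(sampling_w, control_w, baseline_w):
    """(Sampling - Baseline) - (Control - Baseline); algebraically equal to Sampling - Control."""
    return (sampling_w - baseline_w) - (control_w - baseline_w)

def cost_per_bout_joules(net_w, bout_s):
    """Convert a net power difference into energy for one sampling bout (W x s = J)."""
    return net_w * bout_s

# Hypothetical gas-exchange readings (L/min) for one animal in each phase.
baseline = weir_power_watts(0.0030, 0.0025)
sampling = weir_power_watts(0.0036, 0.0030)
control = weir_power_watts(0.0034, 0.0028)
net = cost_of_information(sampling, control, baseline)
print(f"net information cost: {net:.4f} W; per 0.5 s sniff bout: {cost_per_bout_joules(net, 0.5):.4f} J")
```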

Visualizations

Title: Interaction of Cognitive Frameworks in Foraging Model

Title: Agent Decision Loop with Cognitive Constraints

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Primary Function in Foraging Research | Example Use Case |

|---|---|---|

| Operant Conditioning Chamber (with Odor Ports) | Provides controlled environment to present foraging choices and measure precise behavioral output. | Testing cue preference in rodent models of drug-seeking (ecological rationality of cue use). |

| Eye/Gaze Tracking System | Quantifies visual attention and information sampling patterns, a key metric for bounded rationality. | Measuring how many options a human subject evaluates before a choice in a visual foraging array. |

| Metabolic Measurement System (e.g., CLAMS) | Measures energy expenditure in real-time to quantify the embodied cost of foraging actions. | Deriving the joules/head-turn cost for calibrating embodied cognitive models. |

| Flexible Computational Modeling Software (e.g., Python with SciPy, OpenAI Gym) | Allows for the implementation and testing of custom agent models with varying cognitive constraints. | Comparing a full-rationality agent vs. a heuristic-switching agent in a simulated patchy landscape. |

| Calibrated Odorant Delivery System | Presents precise, reproducible olfactory cues, a primary foraging modality for many species. | Studying the ecological rationality of scent-guided search strategies. |

| High-Density Neural Recording (e.g., Neuropixels) | Correlates neural activity with decision-making steps to identify biological substrates of constraints. | Identifying brain regions where working memory (bounded rationality) limits are enforced. |

Technical Support Center: Troubleshooting Foraging-Cognition Experiments

FAQs & Troubleshooting Guides

Q1: In our virtual foraging task with ADHD participants, we observe high variance in patch departure thresholds, skewing our Levy flight analysis. What are the primary control points? A1: High variance often stems from inconsistent task comprehension or fluctuating attention. Implement these controls:

- Pre-Task Training: Run a simplified, guided practice session with performance criteria (e.g., ≥80% correct on 10 consecutive trials) before the main experiment.

- Salient Cueing: Use auditory tones and visual highlights for critical events (resource depletion, patch boundary crossing).

- Session Structuring: Break the 20-minute task into four 5-minute blocks with mandatory 30-second rests. Monitor performance decay per block.

Key Quantitative Benchmarks:

| Population | Expected Mean Patch Residence Time (s) | Expected Travel Time Variance (s²) | Recommended N for Stable Levy μ |

|---|---|---|---|

| ADHD (Adolescent) | 12.4 ± 8.7 | 4.3 ± 2.1 | ≥ 45 |

| ADHD (Adult) | 15.1 ± 6.9 | 3.8 ± 1.9 | ≥ 40 |

| Neurotypical Control | 18.6 ± 5.2 | 2.1 ± 0.9 | ≥ 35 |
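For the Lévy analysis, μ is usually estimated by maximum likelihood rather than by fitting a line to a log-log histogram. The sketch below implements the standard continuous power-law estimator, μ̂ = 1 + n / Σ ln(x_i / x_min), with its asymptotic standard error (μ̂ - 1)/√n. It assumes x_min has already been chosen (for example with the KS-minimization approach of Clauset, Shalizi & Newman, 2009) and that step lengths are positive; the function name is hypothetical.

```python
import numpy as np

def levy_mu_mle(step_lengths, x_min):
    """Maximum-likelihood exponent for a continuous power-law tail p(x) ~ x**(-mu), x >= x_min.

    Returns (mu_hat, standard_error, n_tail). Lengths below x_min are discarded.
    """
    x = np.asarray(step_lengths, dtype=float)
    x = x[x >= x_min]
    n = x.size
    if n < 2:
        raise ValueError("need at least two steps at or above x_min")
    mu = 1.0 + n / np.sum(np.log(x / x_min))
    se = (mu - 1.0) / np.sqrt(n)        # asymptotic standard error of the MLE
    return float(mu), float(se), int(n)

# Synthetic check: draw steps from a power law with mu = 2 by inverse-transform sampling.
rng = np.random.default_rng(0)
u = rng.random(5000)
steps = 1.0 * (1.0 - u) ** (-1.0 / (2.0 - 1.0))
print(levy_mu_mle(steps, x_min=1.0))
```

Because the standard error shrinks as (μ - 1)/√n, halving it takes roughly four times as many steps, which is one way to reason about how much data a stable μ estimate needs. A likelihood comparison against an exponential (Brownian) alternative is also advisable before labeling a search pattern as Lévy-like.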

Q2: When modeling foraging decisions in opioid use disorder (OUD), how do we dissociate reward salience from cognitive impulsivity in a patch-leaving paradigm? A2: This requires a dual-task protocol integrating computational modeling. Experimental Protocol:

- Task Design: Use a "Foraging-Conflict Task." Participants forage in patches with depleting rewards. Randomly, on 30% of trials, a large, guaranteed reward is offered in a "distant patch," requiring interruption of current patch exploitation.

- Variables Measured:

- Impulsivity Metric: Proportion of times the distant reward is pursued immediately vs. after completing the current item.

- Salience Metric: Pupillometry response (mm change) upon discovery of a new resource item within the current patch.

- Model Fitting: Fit choice data to a modified PVL (Prospect Valence Learning) model with two independent parameters: β_salience (reward reactivity) and β_impulsivity (delay discounting in patch context).

Reagent & Material Solutions:

| Item | Function | Example Product/Catalog # |

|---|---|---|

| E-Prime 3.0 or Psychtoolbox | For precise task stimulus delivery and millisecond timing. | Psychology Software Tools, Inc. (E-Prime); Psychtoolbox is free and open source (MATLAB/Octave) |

| Eye-Tracker (1000 Hz) | Measures pupillary dilation as a psychophysiological index of reward salience. | SR Research EyeLink 1000 Plus or Tobii Pro Spectrum |

| Computational Modeling Package | Fits behavioral data to hierarchical Bayesian models to extract cognitive parameters. | hBayesDM (R package) or Stan |

| Saliva Collection Kit | For correlating foraging parameters with biomarker levels (e.g., cortisol, BDNF). | Salivette (Sarstedt) |

Q3: For spatial navigation foraging studies in mild cognitive impairment (MCI), what are the optimal parameters to distinguish preclinical Alzheimer's pathology from normal aging? A3: Focus on allocentric (map-based) navigation efficiency during search, which is hippocampal-dependent. Detailed Methodology:

- Virtual Arena: Create a computer-based "Radial Arm Maze" task with 8 arms. 4 arms contain hidden rewards. The environment has distal visual cues.

- Procedure: Participants complete 5 learning trials (to establish reward locations) followed by 2 "probe trials" where no rewards are given, and all arms are open.

- Key Analysis Metrics:

- Foraging Efficiency Score: (Optimal Path Length / Actual Path Length) on probe trials. MCI typically scores <0.65 vs. >0.8 for healthy aging.

- Head Direction Tuning: Measure the consistency of orientation to distal cues during exploration (derived from joystick/gaze data).

- Wiener Process Model: Apply a drift-diffusion model to arm choices; a significantly higher "boundary separation" parameter indicates compensatory, deliberate searching in MCI.
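The first two MCI metrics can be computed directly from logged positions and headings. In this sketch (hypothetical function names), the optimal path length is assumed to be supplied from the maze geometry, and "head direction tuning" is operationalized as the mean resultant length of heading relative to the bearing of a distal cue, which is one common circular-statistics choice rather than a prescribed measure.

```python
import numpy as np

def path_length(xy):
    """Total length of a 2-D trajectory given as an (n, 2) sequence of positions."""
    xy = np.asarray(xy, dtype=float)
    return float(np.linalg.norm(np.diff(xy, axis=0), axis=1).sum())

def foraging_efficiency(optimal_length, xy):
    """Optimal path length / actual path length (1.0 = perfectly efficient)."""
    return optimal_length / path_length(xy)

def cue_orientation_consistency(headings_rad, cue_bearing_rad):
    """Mean resultant length of heading relative to a distal cue (1 = constant offset, ~0 = no tuning)."""
    relative = np.asarray(headings_rad, dtype=float) - cue_bearing_rad
    return float(np.abs(np.mean(np.exp(1j * relative))))

# Hypothetical probe-trial path: an L-shaped route where a straight line would do.
path = [(0, 0), (1, 0), (1, 1)]
print(foraging_efficiency(np.sqrt(2.0), path))
```

A value near 1 indicates a consistent offset from the cue; if the question is specifically whether participants face the cue, the mean cosine of the relative heading is the more direct statistic.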

Diagram: MCI Foraging Analysis Workflow

Q4: Our fMRI data during a foraging task shows co-activation of dACC and ventral striatum in addiction cohorts. How do we structure an analysis to test if this reflects a specific failure in cost-benefit integration? A4: Implement a model-based fMRI analysis pipeline with a regressor representing dynamic "opportunity cost." Protocol:

- Task: Use a "Serial Harvesting Task" inside the scanner. Participants decide when to leave a depleting patch for a new one. Travel time between patches is systematically varied (2s, 5s, 8s).

- Computational Model: Fit each participant's leave decisions to a Marginal Value Theorem (MVT) model that estimates a personalized "decision threshold" based on average reward rate.

- fMRI Regressor Creation: At each decision point, calculate the Predicted Opportunity Cost Signal = (Current Patch Reward Rate - Estimated Average Reward Rate). This signal fluctuates trial-by-trial.

- GLM Analysis: Enter the continuous Opportunity Cost regressor into the first-level GLM. Test for group-level differences (e.g., Addiction vs. Control) in the correlation strength between this regressor and BOLD signal in dACC and ventral striatum.
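The trial-wise regressor can be generated from the behavioral log before the GLM is built. The sketch below assumes the estimated average reward rate is tracked with a simple delta rule on reward per unit time (learning rate `alpha`, a free parameter you would fit or fix); this is one plausible learning model, not the only way to estimate the average rate. Function names are hypothetical.

```python
import numpy as np

def opportunity_cost_signal(current_rates, rewards, durations, alpha=0.1, init_avg=None):
    """Per-decision (current patch reward rate - estimated average reward rate).

    The average rate is tracked with a delta rule on reward per unit time, an assumed
    learning model; MVT predicts leaving once the signal drops below zero.
    """
    current = np.asarray(current_rates, dtype=float)
    avg = current[0] if init_avg is None else float(init_avg)
    signal = np.empty_like(current)
    for t, (cur, r, d) in enumerate(zip(current, rewards, durations)):
        signal[t] = cur - avg                 # computed before this step's outcome is seen
        avg += alpha * (r / d - avg)          # delta-rule update of the long-run rate
    return signal

def zscore(x):
    """Standardize a regressor before entering it as a parametric modulator."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean()) / x.std()

sig = opportunity_cost_signal([3.0, 2.0, 1.0], [3.0, 2.0, 1.0], [1.0, 1.0, 1.0], alpha=0.5, init_avg=2.0)
print(sig, zscore(sig))
```

The standardized signal would then be entered as a parametric modulator at each decision onset; HRF convolution is left to whichever GLM package you use.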

Diagram: Model-Based fMRI Analysis for Opportunity Cost

Building Realistic Models: A Step-by-Step Guide to Implementing Cognitive Constraints

Technical Support Center: Troubleshooting & FAQs

Q1: During the simulation of a foraging agent using the ACT-R architecture, the declarative memory retrieval system becomes overloaded and the model fails to make a decision within a biologically plausible timeframe (more than 2 seconds of simulated cognition). How can this be resolved? A: This is a classic symptom of poorly tuned activation noise (`:ans`) and retrieval threshold (`:rt`) settings, not of utility noise (`:egs`), which governs production selection rather than declarative retrieval. In ACT-R, retrieval latency grows exponentially as the activation of the retrieved chunk falls, so a model that keeps pursuing weakly active chunks stalls. Within the thesis context, this highlights a key cognitive constraint: the bottleneck of serial memory retrieval. To account for this:

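- Increase the `:ans` (activation noise) parameter to a higher value (e.g., 0.5-0.7). Values around 0.25-0.3 are common in published models; the ACT-R default is nil (noise disabled). More noise breaks ties between competing chunks and, because the retrieved chunk is the one with the highest noisy activation, tends to speed up retrieval.

- Implement a retrieval threshold (`:rt`) to prevent the pursuit of weak, inaccessible memories. A failed retrieval takes F·e^(-τ) seconds, where F is the latency factor (`:lf`) and τ is the threshold, whereas a successful one takes F·e^(-A) for a chunk with activation A; raising `:rt` therefore caps the time a failed retrieval costs.

- Protocol Adjustment: Run a parameter sweep for `:ans` and `:rt` using the following mini-protocol:

- Keep the environmental reward structure constant.

- Vary `:ans` from 0.1 to 1.0 in increments of 0.1.

- Set `:rt` to -1.0, -0.5, and 0.0 for each `:ans` value.

- Measure decision latency over 1000 trials. Optimal parameters are those that keep 95% of decisions under the 2-second cognitive constraint while maintaining >80% optimal choice accuracy in a stable environment.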
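To see how `:ans` and `:rt` trade off before running the full model, the retrieval equations can be prototyped outside ACT-R. The sketch below is a simplified Python stand-in, not ACT-R itself: it assumes every chunk matches the request, uses hypothetical base-level activations, draws logistic noise with scale `:ans`, applies latency = lf·e^(-A) with lf = 1 (the ACT-R default latency factor), and adds a hypothetical 0.1 s for surrounding production firings. "Accuracy" here means retrieving the chunk with the highest base level.

```python
import numpy as np

def simulate_retrievals(base_levels, ans, rt, lf=1.0, n_trials=1000, overhead_s=0.1, seed=0):
    """Monte Carlo of ACT-R-style declarative retrieval (simplified stand-in).

    Activation = base level + logistic noise with scale `ans`; the most active chunk is
    retrieved if it clears the threshold `rt`. Latency = lf * exp(-A) on success and
    lf * exp(-rt) on failure, plus a hypothetical fixed production overhead.
    Returns (share of decisions under 2 s, share retrieving the strongest chunk).
    """
    rng = np.random.default_rng(seed)
    base = np.asarray(base_levels, dtype=float)
    acts = base + rng.logistic(0.0, ans, size=(n_trials, base.size))
    best = acts.max(axis=1)
    retrieved = best >= rt
    latency = np.where(retrieved, lf * np.exp(-best), lf * np.exp(-rt)) + overhead_s
    correct = retrieved & (acts.argmax(axis=1) == int(np.argmax(base)))
    return float(np.mean(latency < 2.0)), float(np.mean(correct))

base_levels = [0.3, -0.2, -0.6, -1.0]      # hypothetical base-level activations
for ans in np.round(np.arange(0.1, 1.01, 0.1), 1):
    for rt in (-1.0, -0.5, 0.0):
        fast, acc = simulate_retrievals(base_levels, ans, rt)
        flag = "OK" if fast >= 0.95 and acc > 0.80 else ""
        print(f":ans {ans:.1f}  :rt {rt:+.1f}  under-2s {fast:.2f}  accuracy {acc:.2f} {flag}")
```

In this simplified sketch, any threshold above -ln(1.9) ≈ -0.64 (with lf = 1 and the assumed 0.1 s overhead) guarantees that every decision finishes under 2 s, so for `:rt` = -0.5 and 0.0 the sweep is really trading accuracy against noise.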

Q2: When implementing a Deep Q-Network (DQN) for a patch foraging task, the agent's policy fails to converge to an efficient giving-up time (GUT). It either leaves all patches immediately or stays indefinitely. What are the primary debugging steps? A: This often stems from reward shaping or representation issues that fail to account for the opportunity cost constraint. Follow this workflow:

Diagram Title: DQN Foraging Agent Debugging Workflow

Experimental Protocol for Reward Function Calibration:

- Define the environment with explicit travel time (`t_travel`) between patches.

- Calculate the average reward rate (`R`) from a random policy over 100 episodes.

- Set the reward for leaving a patch to `-R * t_travel`. This explicitly imposes the opportunity cost of travel within the cognitive model's value learning system.

- Compare the agent's learned GUT against the theoretically optimal GUT from the Marginal Value Theorem across 10 random seeds.
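The MVT benchmark for the last step can be computed numerically. This sketch assumes an exponentially depleting patch, g(t) = a(1 - e^(-rt)), solves the MVT condition g'(t) = g(t)/(t + T) with SciPy's `brentq`, and includes the `-R * t_travel` leave penalty from the protocol. The depletion form, parameter names, and helper functions are illustrative assumptions; substitute your environment's gain function.

```python
import numpy as np
from scipy.optimize import brentq

def gain(t, a, r):
    """Cumulative intake after t seconds in a patch that depletes exponentially (assumed form)."""
    return a * (1.0 - np.exp(-r * t))

def long_run_rate(t, a, r, t_travel):
    """Average intake rate if every patch is left after t seconds."""
    return gain(t, a, r) / (t + t_travel)

def mvt_optimal_gut(a, r, t_travel):
    """Solve g'(t) = g(t) / (t + T) for the optimal residence time (requires t_travel > 0)."""
    def condition(t):
        return a * r * np.exp(-r * t) * (t + t_travel) - gain(t, a, r)
    return brentq(condition, 1e-9, 100.0 / r)

def leave_penalty(avg_rate, t_travel):
    """Shaped reward for the 'leave' action: -R * t_travel."""
    return -avg_rate * t_travel

def gut_relative_error(learned_guts, optimal_gut):
    """Mean and SD of (learned - optimal) / optimal across random seeds."""
    err = (np.asarray(learned_guts, dtype=float) - optimal_gut) / optimal_gut
    return float(err.mean()), float(err.std(ddof=1))

t_star = mvt_optimal_gut(10.0, 0.5, 2.0)
print(f"optimal GUT {t_star:.3f} s at rate {long_run_rate(t_star, 10.0, 0.5, 2.0):.3f}")
```

Because a random policy usually earns less than the optimal rate, a penalty computed from random-policy episodes understates the opportunity cost at the optimum; re-estimating R as the agent improves is one option to consider.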

Q3: How do I quantitatively compare the performance of a symbolic ACT-R model against a subsymbolic RL agent in a constrained foraging task? What metrics are most informative? A: The comparison must operationalize different cognitive constraints. Use the following table to structure your analysis:

| Metric | ACT-R Model (Symbolic) | RL Agent (Subsymbolic) | Thesis-Relevant Interpretation |

|---|---|---|---|

| Decision Latency | Directly simulated from production cycle count. | Not natively modeled; must be inferred from network forward passes. | Measures computational speed constraint of deliberative vs. learned policy retrieval. |

| Accuracy in Stable Environment | High if chunks are well-tuned. | Very high after convergence. | Measures optimality under no pressure. |

| Adaptability to Shift | Slow, requires new rule compilation. | Fast, if retrained or using meta-learning. | Measures flexibility constraint and cost of cognitive restructuring. |

| Memory Load | Explicit declarative memory items count. | Embedded in network weights (opaque). | Quantifies the memory capacity constraint hypothesis. |

| Computational/Energy Cost | High per decision (deliberative retrieval), low for execution. | Very high for training, low for inference. | Models the metabolic constraint of learning vs. recalling. |

Experimental Protocol for Cross-Architecture Comparison:

- Task: Implement a serial patch foraging task with a reversal learning phase (patch quality switches after 100 trials).

- ACT-R Setup: Model includes a procedural rule for "stay/leave" and declarative chunks for recent patch outcomes.

- RL Setup: Use a PPO agent with an LSTM layer to handle partial observability.

- Run: Execute 500 trials for each model (10 instances each).

- Collect Data: Log all metrics from the table above. For RL "latency," use a proxy: number of environment steps needed to adapt post-reversal.
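The adaptation proxy in the last step needs an operational definition. One option, sketched below with a hypothetical function name, counts the trials (or environment steps) after the reversal until rolling accuracy first reaches a criterion; the 10-trial window and 80% criterion are assumptions, and the same function can score the ACT-R runs so that both architectures share one metric.

```python
import numpy as np

def trials_to_adapt(correct, reversal_at=100, window=10, criterion=0.8):
    """Trials after a reversal until rolling accuracy first reaches `criterion`.

    `correct` is a 0/1 sequence of optimal-choice indicators; returns None if never reached.
    """
    post = np.asarray(correct[reversal_at:], dtype=float)
    if post.size < window:
        return None
    hits_needed = np.ceil(criterion * window - 1e-9)           # integer count avoids float drift
    counts = np.convolve(post, np.ones(window), mode="valid")  # correct choices per window
    reached = np.flatnonzero(counts >= hits_needed)
    return int(reached[0] + window) if reached.size else None

# Example: 20 errors right after the reversal, then consistently correct.
print(trials_to_adapt([1] * 100 + [0] * 20 + [1] * 380))
```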

The Scientist's Toolkit: Research Reagent Solutions

| Item Name/Class | Function in Constrained Foraging Research |

|---|---|

| Cognitive Architecture (ACT-R) | Provides a fixed cognitive ontology (declarative memory, procedural system) to simulate hard bottlenecks like retrieval speed and parallel vs. serial processing. |

| RL Framework (e.g., Stable-Baselines3, RLlib) | Offers modular, state-of-the-art algorithms (DQN, PPO, SAC) to model learning under constraints of reward discounting and partial observability. |

| PyACTUp (Python implementation of ACT-R declarative memory) | Enables integration of ACT-R-style memory models with modern Python ML/RL environments for direct comparison. |

| Omnibus Foraging Task | A standardized software environment (often in Unity or PsychoPy) presenting visual patches with programmable depletion rates, used for both human and agent testing. |

| Parameter Optimization Suite (e.g., Optuna) | Crucial for systematic sweeps of cognitive (e.g., activation noise) and neural (e.g., learning rate) parameters to fit behavioral data. |

Q4: In a hybrid model combining ACT-R's declarative memory with a policy network for action selection, how is the information flow and conflict resolution managed? A: The hybrid architecture aims to model the constraint of limited executive control. The logical flow typically follows a supervisory attention system.

Diagram Title: Hybrid ACT-R/RL Model Information Flow

Parameterizing Memory Decay and Retrieval Failure in Patch-Leaving Decisions

Troubleshooting Guide & FAQs

Q1: During the patch-leaving experiment, subject performance decays rapidly over short intervals, overwhelming our baseline model. How do we parameterize this as memory decay versus general performance failure? A1: Isolate the mnemonic component using a two-stage protocol. First, run a continuous foraging task to establish a motor/decision baseline. Then, introduce a delay between patch discovery and the decision to leave. Fit separate decay parameters (e.g., power-law or exponential) to the delay-stage data. Use model comparison (AIC/BIC) against a null model with no decay parameter. Common pitfall: not controlling for satiation; use calibrated reward pellets.

Q2: Our agent-based model incorporating a "forgetting" parameter fails to replicate the sharp drop in optimal foraging efficiency seen in human subjects. What retrieval failure mechanisms should we test? A2: Implement and compare two distinct cognitive architectures:

- Signal Detection (SDT) Framework: Parameterize retrieval as decreasing d' (sensitivity) over time or with interference. Noise increase mimics memory decay.

- Threshold (Race) Model: Parameterize retrieval as an increase in the threshold or decrease in the accumulation rate for memory evidence required for a "stay" decision.

Test these against your data by simulating each agent population (N>1000) and comparing the distribution of leaving decisions to human subject distributions using Kolmogorov-Smirnov tests.
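The two retrieval-failure mechanisms can be simulated side by side and compared with observed leaving times using SciPy's `ks_2samp`. In this sketch, the SDT agent stays while a Gaussian memory signal with decaying d' exceeds a fixed criterion, and the threshold agent stays while summed evidence reaches a threshold that rises over time. Both parameterizations, all parameter values, the function names, and the geometric "human" sample are placeholders.

```python
import numpy as np
from scipy.stats import ks_2samp

def first_leave(leave_mask, max_steps):
    """Index of the first True per row (the leaving step); max_steps if the agent never leaves."""
    return np.where(leave_mask.any(axis=1), leave_mask.argmax(axis=1), max_steps)

def leave_times_sdt(n_agents, d0, decay, criterion, max_steps=200, seed=0):
    """SDT agent: stays while a memory signal N(d'(t), 1) exceeds a fixed criterion; d' decays."""
    rng = np.random.default_rng(seed)
    d_prime = d0 * np.exp(-decay * np.arange(max_steps))
    signal = rng.normal(d_prime, 1.0, size=(n_agents, max_steps))
    return first_leave(signal < criterion, max_steps)

def leave_times_threshold(n_agents, rate, theta0, theta_slope, n_samples=10, max_steps=200, seed=0):
    """Threshold agent: per step, summed memory evidence must reach a rising threshold to stay."""
    rng = np.random.default_rng(seed)
    threshold = theta0 + theta_slope * np.arange(max_steps)
    evidence = rng.normal(rate * n_samples, np.sqrt(n_samples), size=(n_agents, max_steps))
    return first_leave(evidence < threshold, max_steps)

# Placeholder for observed human leaving times (replace with real data).
human = np.random.default_rng(42).geometric(0.1, size=300) - 1
for name, sim in [("SDT", leave_times_sdt(1000, d0=3.0, decay=0.05, criterion=0.5)),
                  ("Threshold", leave_times_threshold(1000, rate=0.5, theta0=2.0, theta_slope=0.1))]:
    res = ks_2samp(sim, human)
    print(f"{name}: D = {res.statistic:.3f}, p = {res.pvalue:.3g}")
```

Leaving times are discrete and heavily tied, so KS p-values are approximate; the D statistic is still useful for ranking parameter settings across a grid.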

Q3: When modeling interference from concurrent tasks, should we use a decay acceleration parameter or a separate interference module?

A3: Empirical data suggests a separate, additive interference parameter is more robust. Design a dual-task experiment: Primary: Foraging task. Secondary: n-back task. Fit a model: Effective Memory Strength = Baseline * exp(-DecayRate * Time) - (InterferenceCoefficient * SecondaryTaskLoad). If the InterferenceCoefficient is significant (p<.05) and model fit improves, retain the separate module. See Table 1 for sample results.
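The additive interference model can be fitted by nonlinear least squares and compared with a decay-only model. This sketch uses SciPy's `curve_fit` with non-negative bounds, treats a continuous memory-strength proxy as the observed variable, and computes Gaussian AIC as n ln(RSS/n) + 2k. The data are synthetic and the proxy is an assumption; binary choices would instead call for a likelihood with a link function.

```python
import numpy as np
from scipy.optimize import curve_fit

def decay_only(X, baseline, decay):
    time, _load = X
    return baseline * np.exp(-decay * time)

def additive_interference(X, baseline, decay, iota):
    """Effective strength = Baseline * exp(-DecayRate * Time) - Interference * Load."""
    time, load = X
    return baseline * np.exp(-decay * time) - iota * load

def fit_and_aic(model, X, y, p0):
    """Least-squares fit with non-negative parameters; Gaussian AIC = n ln(RSS/n) + 2k."""
    params, cov = curve_fit(model, X, y, p0=p0, bounds=(0.0, np.inf))
    rss = float(np.sum((y - model(X, *params)) ** 2))
    aic = len(y) * np.log(rss / len(y)) + 2 * len(params)
    return params, np.sqrt(np.diag(cov)), aic

# Synthetic data standing in for a memory-strength proxy (e.g., probe accuracy).
rng = np.random.default_rng(0)
time = rng.uniform(0, 10, 200)
load = rng.integers(0, 3, 200).astype(float)          # secondary-task load levels 0, 1, 2
y = 1.0 * np.exp(-0.15 * time) - 0.3 * load + rng.normal(0, 0.05, 200)
X = np.vstack([time, load])

p_dec, _, aic_dec = fit_and_aic(decay_only, X, y, p0=[1.0, 0.1])
p_add, se_add, aic_add = fit_and_aic(additive_interference, X, y, p0=[1.0, 0.1, 0.1])
print(f"decay-only AIC {aic_dec:.1f}; additive AIC {aic_add:.1f}; iota = {p_add[2]:.3f} +/- {se_add[2]:.3f}")
```

The standard error returned for ι supports an approximate Wald test of the significance criterion described in the answer above.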

Q4: We are getting inconsistent results when fitting power-law vs. exponential decay functions to our retrieval failure data. Which is more theoretically justified? A4: The choice depends on the hypothesized cognitive mechanism. See Table 2 for a comparison. Collect more data points at very short (<1s) and long (>60s) delays to distinguish the curves. Use maximum likelihood estimation and compare fits with the Bayesian Information Criterion (BIC). A ΔBIC > 10 is considered very strong evidence for the better model.
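For the power-law versus exponential question, fitting both by maximum likelihood to trial-level recall data and comparing BIC takes only a few lines. This sketch assumes binary recall outcomes at each delay and uses a shifted power law, a(1 + t)^(-β), because the t^(-β) form in Table 2 diverges at t = 0. The synthetic data come from an exponential curve, and the delay grid deliberately includes very short and long delays as recommended; function names are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize

def p_exponential(t, a, lam):
    return a * np.exp(-lam * t)

def p_power(t, a, beta):
    # (1 + t) keeps the curve finite at t = 0; Table 2's t**(-beta) diverges there.
    return a * (1.0 + t) ** (-beta)

def fit_bic(model, delays, recalled):
    """Bernoulli maximum likelihood for P(recall | delay); BIC = 2 * NLL + k ln(n)."""
    def nll(theta):
        p = np.clip(model(delays, *theta), 1e-9, 1 - 1e-9)
        return -np.sum(recalled * np.log(p) + (1 - recalled) * np.log(1 - p))
    res = minimize(nll, x0=[0.9, 0.1], bounds=[(1e-6, 1.0), (1e-6, 10.0)], method="L-BFGS-B")
    return res.x, 2.0 * res.fun + len(res.x) * np.log(len(delays))

# Synthetic data generated from an exponential curve, spanning short and long delays (s).
rng = np.random.default_rng(1)
delays = np.repeat([0.5, 1, 2, 5, 10, 20, 30, 45, 60, 90, 120], 150).astype(float)
recalled = (rng.random(delays.size) < p_exponential(delays, 0.95, 0.05)).astype(float)
theta_exp, bic_exp = fit_bic(p_exponential, delays, recalled)
theta_pow, bic_pow = fit_bic(p_power, delays, recalled)
print(f"BIC exponential {bic_exp:.1f}, power {bic_pow:.1f}, delta {bic_pow - bic_exp:.1f}")
```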

Q5: How do we operationally distinguish a "failed memory retrieval" event from a "rational ignorance" decision in a patch-leaving paradigm? A5: Implement a probing protocol. After a premature patch-leaving decision, pause the experiment and administer a forced-choice test on the patch's reward state just prior to leaving. Use a confidence scale. "Rational ignorance" is indicated by high confidence in the low-value choice. "Retrieval failure" is indicated by low confidence or inaccurate recall. This probe data can be used to scale a retrieval probability parameter in your model.

Table 1: Model Fit Comparison for Interference Handling

| Model Type | Decay Parameter (γ) | Interference Parameter (ι) | AIC Score | ΔAIC | BIC Score |

|---|---|---|---|---|---|

| Decay-Only (Exponential) | 0.15 ± 0.02 | N/A | 1250.7 | 45.2 | 1260.1 |

| Combined Decay Acceleration | 0.22 ± 0.03 | (implied) | 1245.3 | 39.8 | 1254.9 |

| Additive Interference Module | 0.14 ± 0.02 | 0.31 ± 0.05 | 1205.5 | 0.0 | 1219.8 |

Table 2: Decay Function Comparison for Memory Parameterization

| Function | Formula | Theoretical Basis | Typical Use Case |

|---|---|---|---|

| Exponential | S = S₀ * e^(-λt) | Homogeneous process; constant failure rate. | Simple memory decay; pharmacological amnesia. |

| Power-Law | S = S₀ * t^(-β) | Scale-invariant process; forgetting with rehearsal. | Naturalistic forgetting; long-term memory studies. |

| Hyperbolic | S = S₀ / (1 + kt) | Discounting model; adaptive for foraging. | Value-based decisions; integrating reward delay. |

Experimental Protocols

Protocol 1: Dual-Task Foraging to Isolate Retrieval Failure

- Subjects: 50 adult Drosophila melanogaster (or 30 human participants).

- Apparatus: Virtual T-maze with probabilistic reward patches (Patch A: 80% reward, Patch B: 20% reward). Secondary task: olfactory distraction (flies) or auditory 2-back (humans).

- Procedure:

- Habituation: 10 trials, no secondary task.

- Phase 1: 50 trials, primary task only. Fit baseline leaving threshold.

- Phase 2: 100 trials, primary + randomized secondary task load (Low/High).

- Insert memory probes on 20% of trials at decision point.

- Analysis: Compute leaving decision latency and accuracy. Fit additive interference model (see Q3). Compare γ and ι parameters across loads using ANOVA.

Protocol 2: Probe for Rational Ignorance vs. Retrieval Failure

- Setup: Rodent operant chamber with two nosepoke ports (Patch Left/Patch Right).

- Training: Animals learn to sample a port, then decide to stay (poke again) or leave (poke the other port). Reward schedules deplete probabilistically.

- Probe Trial (20%): Upon a "leave" decision, immediately present a two-choice visual cue on a central screen representing the estimated reward rate of the just-abandoned patch versus a clearly worse option.

- Measurement: Record probe choice and reaction time. An animal that chooses the worse option confidently (fast RT) is likely showing rational ignorance. An animal that chooses randomly or slowly is likely experiencing retrieval failure. These data feed a mixture model.
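The mixture model can be as simple as a two-component Gaussian mixture on log reaction time, fitted by expectation-maximization. The sketch below is a NumPy-only version with hypothetical function names; treating the fast component as "rational ignorance" follows the protocol's interpretation, while the log transform, the Gaussian components, and restricting the input to worse-option probe trials are assumptions.

```python
import numpy as np

def normal_pdf(x, mu, sd):
    return np.exp(-0.5 * ((x - mu) / sd) ** 2) / (sd * np.sqrt(2.0 * np.pi))

def two_component_mixture(log_rt, n_iter=200):
    """EM fit of a 1-D, two-Gaussian mixture to log reaction times.

    Returns weights, means, SDs, and each trial's posterior probability of the fast component.
    """
    x = np.asarray(log_rt, dtype=float)
    w = np.array([0.5, 0.5])
    mu = np.percentile(x, [25, 75])
    sd = np.full(2, x.std())

    def responsibilities():
        dens = w * np.column_stack([normal_pdf(x, mu[k], sd[k]) for k in range(2)])
        return dens / dens.sum(axis=1, keepdims=True)

    for _ in range(n_iter):
        resp = responsibilities()                        # E-step
        nk = resp.sum(axis=0)                            # M-step
        w = nk / x.size
        mu = (resp * x[:, None]).sum(axis=0) / nk
        sd = np.maximum(np.sqrt((resp * (x[:, None] - mu) ** 2).sum(axis=0) / nk), 1e-6)
    resp = responsibilities()
    fast = int(np.argmin(mu))
    return w, mu, sd, resp[:, fast]

# Synthetic probe RTs (s) for worse-option choices: a fast and a slow cluster.
rng = np.random.default_rng(3)
log_rt = np.concatenate([rng.normal(np.log(0.3), 0.15, 200),
                         rng.normal(np.log(1.2), 0.30, 200)])
w, mu, sd, p_fast = two_component_mixture(log_rt)
print("means (s):", np.round(np.exp(mu), 2), "weights:", np.round(w, 2))
```

Per-trial posterior probabilities (`p_fast`) can then be used to scale the retrieval probability parameter described in Q5.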

Visualizations

Title: Cognitive Workflow for a Patch-Leaving Decision

Title: Model Fitting and Comparison Protocol

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Foraging/Memory Research |

|---|---|

| Custom Virtual Reality Arena | Presents controlled, repeatable foraging landscapes with programmable patch reward schedules for rodents or humans. |

| Optogenetic Stimulation System (e.g., for rodents) | Allows precise inhibition/activation of specific neural ensembles (e.g., in hippocampus or prefrontal cortex) during retrieval to test causal roles. |

| High-Temporal-Resolution Eye Tracker | Measures gaze patterns and pupillometry as indirect proxies for attention, memory load, and decision confidence during foraging. |

| Pharmacological Agents (e.g., Scopolamine, Benzodiazepines) | Used to induce specific, reversible cognitive deficits (e.g., amnesia, sedation) to validate model parameters for decay or interference. |

| Computational Modeling Suite (e.g., ACT-R, Custom RL Agent in Python/R) | Platform for implementing and simulating cognitive architectures with memory decay parameters to generate testable predictions. |

| Probabilistic Reward Dispenser | Delivers liquid or pellet rewards according to complex schedules (e.g., diminishing returns) to mimic natural patch depletion. |

| Electrophysiology / Calcium Imaging Rig | Records neural activity from populations of cells to correlate memory recall signatures with behavioral leaving decisions. |

Modeling Attentional Breadth and Perceptual Limits in Visual Search Tasks

Technical Support & Troubleshooting Center

FAQ 1: Why does my model fail to replicate the set-size effect (reaction time slopes) from human data?

- Answer: This is often due to an inaccurate parameterization of the perceptual limit (K). First, ensure your visual search task stimuli are calibrated to avoid ceiling performance. Use the "Partial Report" or "Change Detection" protocol (see below) to independently measure K for your stimulus set. Incorrect noise parameters in your salience map can also flatten slopes. Recalibrate using a simple feature search task first.

FAQ 2: How do I distinguish between a low-level perceptual limit (K) and an attentional breadth (deployment area) constraint in my model's output?

- Answer: Design a dual-task experiment. A perceptual limit (e.g., VWM) will show steep performance degradation when the secondary task also loads the same buffer. An attentional breadth constraint will be more sensitive to the spatial distribution of stimuli—performance drops when targets and distractors exceed a preferred spatial grouping, even if total number is below K. See Protocol 2.

FAQ 3: My foraging model with integrated attentional parameters produces unstable probability matching. What should I check?

- Answer: This typically indicates a mismatch between the update rate of the attentional parameter and the reward harvesting rate. Ensure the "attentional dwell time" parameter is not shorter than the time needed to execute a patch departure decision. Increase the learning rate for the value map associated with broader attentional settings. Also, verify that your depletion function accounts for perceptual errors.

FAQ 4: What is the best way to map model parameters to potential neuropharmacological interventions?

- Answer: Create a parameter table (see Table 1) linking model components to neurotransmitter systems. For example, the noradrenergic system has been linked to attentional breadth (locus coeruleus modulation), while cholinergic systems are tied to perceptual template sharpening. Design experiments where drug manipulations are predicted to selectively alter specific parameters (e.g., cholinergic agonists should improve distractor filtering, altering the 'distractor suppression' parameter).

Experimental Protocols

Protocol 1: Calibrating Perceptual Capacity (K) Using a Change Detection Task

- Stimulus Display: Present an array of 4-8 simple colored squares for 500ms.

- Retention Interval: Follow with a 100ms blank interval (a blank delay rather than a pattern mask).

- Test Display: Show a new array where one item may have changed color.

- Response: Participant indicates "Same" or "Different."

- Calculation: Use Pashler's formula: K = N * (H - FA) / (1 - FA), where N is set size, H is hit rate, FA is false alarm rate. Run 100 trials per set size.
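Pashler's formula is easy to compute from trial-level responses. This sketch (hypothetical function names) derives the hit rate as P("Different" | change) and the false-alarm rate as P("Different" | no change) for one set size.

```python
import numpy as np

def pashler_k(set_size, hit_rate, fa_rate):
    """Pashler's capacity estimate for whole-display change detection: K = N(H - FA) / (1 - FA)."""
    if not 0.0 <= fa_rate < 1.0:
        raise ValueError("false-alarm rate must lie in [0, 1)")
    return set_size * (hit_rate - fa_rate) / (1.0 - fa_rate)

def k_from_trials(set_size, said_different, change_present):
    """Hit rate = P('Different' | change); false-alarm rate = P('Different' | no change)."""
    said = np.asarray(said_different, dtype=bool)
    change = np.asarray(change_present, dtype=bool)
    return pashler_k(set_size, said[change].mean(), said[~change].mean())

# Hypothetical block at set size 6: 7/10 hits on change trials, 1/10 false alarms.
change = np.array([True] * 10 + [False] * 10)
said = np.array([True] * 7 + [False] * 3 + [True] * 1 + [False] * 9)
print(k_from_trials(6, said, change))
```

When only a single item is probed at test, Cowan's K = N(H - FA) is the usual alternative; averaging K over set sizes at or above capacity gives a more stable estimate.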

Protocol 2: Dissociating Attentional Breadth from Perceptual Limits

- Design: A visual search task (e.g., find a red 'T' among red 'L's and blue 'T's) under two conditions:

- Clustered: All items within 5° visual angle.

- Distributed: Items evenly spread within 10° visual angle.

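- Manipulation: Vary set size (4, 8, 12) in each condition. Crucially, the key comparison must use a total number of items below the independently measured perceptual K for your stimuli (e.g., 6 items, provided measured K exceeds 6), so that a spatial effect cannot be attributed to capacity overload.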

- Prediction: If performance is worse in the Distributed condition despite being below perceptual K, it indicates an independent attentional breadth constraint. Model this with a spatial integration window parameter.

Data Presentation

Table 1: Key Model Parameters, Cognitive Correlates, and Putative Neuropharmacological Targets

| Parameter | Description | Cognitive/Neural Correlate | Potential Pharmacological Modulator |

|---|---|---|---|

| K (Perceptual Capacity) | Max items processed in one glance. | Visual Working Memory (VWM) capacity; intraparietal sulcus activity. | Cholinergic (M1) agonists may increase precision, not K. Glutamate (NMDA) modulators. |

| Attentional Window (σ) | Spatial spread of attentional gradient. | Parieto-frontal network (SPL, FEF); zoom lens. | Noradrenergic (alpha-2 agonists). |

| Salience Gain (α) | Weighting of bottom-up features. | Temporo-parietal junction (TPJ); stimulus-driven attention. | Dopaminergic (D2) antagonists. |

| Dwell Time (τ) | Time to process one attentional locus. | Attentional blink; superior colliculus. | Cholinergic (nicotinic) agonists. |

| Decision Noise (η) | Stochasticity in patch departure. | Lateral intraparietal area (LIP); value-based choice. | Serotonergic (5-HT) agents. |

Table 2: Sample Simulation Output vs. Human Behavioral Data

| Condition | Set Size | Human Mean RT (ms) | Model Predicted RT (ms) | Model Attentional Window (σ in pixels) |

|---|---|---|---|---|

| Feature Search | 4 | 450 ± 25 | 455 | 120 |

| Feature Search | 12 | 460 ± 30 | 465 | 120 |

| Conjunction Search | 4 | 550 ± 35 | 560 | 80 |

| Conjunction Search | 12 | 750 ± 45 | 740 | 80 |

| Foraging (Clustered) | 6 | 320 ± 20 | 315 | 150 |

| Foraging (Distributed) | 6 | 410 ± 30 | 395 | 100 |

Visualizations

Visual Search & Foraging Model Workflow

Neuropharmacological Modulation of Attention

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Research |

|---|---|

| Eye-Tracker (e.g., Eyelink 1000 Plus) | Provides high-fidelity gaze data to quantify attentional dwell time (τ) and scan paths during foraging. |

| Psychtoolbox (MATLAB) or jsPsych | Software for precise stimulus presentation and response collection in visual search paradigms. |

| Cognitive Modeling Platform (e.g., ACT-R, PyDDM) | Framework for implementing and fitting the integrated foraging-attention model parameters (K, σ, τ). |

| fMRI-Compatible Eye Tracker | Allows correlation of model parameters (e.g., attentional window) with BOLD activity in parietal/frontal regions. |

| Parametric Stimulus Library | A calibrated set of visual search items (varied in color, orientation, shape) to systematically probe perceptual limits. |

| Pharmacological Agents (e.g., Atomoxetine, Donepezil) | Used in controlled studies to modulate specific neurotransmitter systems (NE, ACh) and test model predictions. |

Integrating Effort and Cognitive Cost into Reward Valuation

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our rodent subjects are showing high variability in choice tasks when effort costs are introduced. What could be the issue? A: High variability often stems from inadequate training or poorly calibrated effort requirements. Ensure subjects have fully acquired the base task (e.g., >85% accuracy on a simple discrimination) before introducing effort costs. The effort gradient (e.g., lever press force, maze length) should be introduced incrementally. Check for signs of physical fatigue or motivational satiation, which can confound cognitive cost measures. Re-calibrate equipment (e.g., force transducers, treadmill speeds) weekly.

Q2: How do we dissociate cognitive effort (e.g., attention, working memory load) from physical effort in a foraging paradigm? A: Implement orthogonal task designs. For example, use a task where physical effort (lever press hold duration) is held constant while cognitive load (number of stimuli to track, delay interval) is manipulated. A critical control is to demonstrate that increasing physical effort parameters does not impair performance on the cognitive dimension, and vice-versa. Pharmacological manipulations (see Toolkit) can also help dissociate neural circuits.

Q3: We are not observing the expected discounting of reward value with increased cognitive load. What protocol adjustments are recommended? A: First, verify that the cognitive manipulation is truly effortful for the subject by checking for performance decrements. If performance remains perfect, the load is insufficient. Increase load until performance is at ~70-80% correct. Ensure rewards are devalued, not just delayed: match trial durations across load levels so that any drop in preference reflects cognitive cost rather than temporal discounting. Implement a behavioral economic titration procedure to find indifference points between high-value/high-effort and low-value/low-effort options. See Table 1 for sample parameters.
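A titration procedure can be prototyped before committing animals to it. The sketch below uses an adjusting-amount rule: whenever the high-effort option is chosen, the low-effort reward increases by one step, and whenever the low-effort option is chosen, it decreases, so the adjusted amount converges on the indifference point. The step size, trial count, function names, and the deterministic hyperbolic "simulated subject" are illustrative assumptions.

```python
import numpy as np

def adjusting_amount(choose_high_effort, high_reward=4.0, start=2.0, step=0.25, n_trials=60):
    """Adjusting-amount titration of the low-effort reward toward the indifference point.

    choose_high_effort(low_amount) -> bool gives the subject's choice on each trial.
    Returns the mean adjusted amount over the last 20 trials as the indifference estimate.
    """
    amount, history = start, []
    for _ in range(n_trials):
        if choose_high_effort(amount):
            amount = min(amount + step, high_reward)   # effortful option chosen: sweeten the easy one
        else:
            amount = max(amount - step, 0.0)           # easy option chosen: make it less attractive
        history.append(amount)
    return float(np.mean(history[-20:]))

def simulated_subject(k=0.5, load=2.0, high_reward=4.0):
    """Hypothetical deterministic chooser with hyperbolic cognitive-effort discounting."""
    subjective = high_reward / (1.0 + k * load)
    return lambda low_amount: subjective > low_amount

print(adjusting_amount(simulated_subject()))
```

With a deterministic chooser the amount oscillates one step around indifference (here around 2.0 pellets), so the step size sets the resolution of the estimate.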

Q4: Our computational model of value integration, which includes effort and cognitive cost terms, fails to converge. How can we troubleshoot the model? A: This is often due to parameter identifiability issues. Constrain parameters using data from separate control experiments (e.g., fit physical effort discounting alone first). Use a hierarchical Bayesian modeling approach to share strength across subjects. Simplify the model: start with a linear cost term before testing hyperbolic or quadratic functions. Ensure your optimization algorithm is appropriate (e.g., using global search methods for complex landscapes).

Q5: What are the best practices for quantifying "cognitive cost" as a neural or physiological variable in awake-behaving experiments? A: Correlate behavioral choice data with simultaneous multimodal measurements. Key variables include:

- Pupillometry: Tonic pupil dilation is a widely used, if indirect, correlate of locus coeruleus-norepinephrine (LC-NE) activity and cognitive effort allocation.

- Frontal Theta-band EEG/LFP Power: Increased theta (4-8 Hz) in prefrontal cortex often scales with working memory load and control demand.

- Neurochemical Markers: Use fiber photometry with genetically encoded fluorescent sensors (e.g., iGluSnFR) to track glutamate release in anterior cingulate cortex (ACC) during effortful cognition.

- Always time-lock these measures to the decision period and baseline-correct.
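Frontal theta can be summarized per decision epoch as the share of spectral power between 4 and 8 Hz. This sketch uses SciPy's Welch periodogram with 2 s segments (an assumption that gives 0.5 Hz resolution) and returns relative rather than absolute power, which eases comparison across electrodes and sessions; the function name is hypothetical.

```python
import numpy as np
from scipy.signal import welch

def relative_theta_power(signal, fs, band=(4.0, 8.0), nperseg_s=2.0):
    """Theta-band share of total power, from a Welch periodogram of one epoch."""
    freqs, psd = welch(signal, fs=fs, nperseg=int(nperseg_s * fs))
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    return float(psd[in_band].sum() / psd.sum())

# Synthetic 20 s epochs at 250 Hz: one theta-dominated, one beta-dominated.
fs = 250.0
t = np.arange(0, 20, 1 / fs)
rng = np.random.default_rng(0)
theta_epoch = np.sin(2 * np.pi * 6 * t) + 0.1 * rng.normal(size=t.size)
beta_epoch = np.sin(2 * np.pi * 20 * t) + 0.1 * rng.normal(size=t.size)
print(relative_theta_power(theta_epoch, fs), relative_theta_power(beta_epoch, fs))
```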

Data Presentation

Table 1: Sample Parameters for a Cognitive Effort Discounting Task (Rodent)

| Parameter | Low Cognitive Load Condition | High Cognitive Load Condition | Control/No-Effort Condition |

|---|---|---|---|

| Working Memory Demand | 1-item delayed non-match to sample | 3-item delayed non-match to sample | Simple visual discrimination |

| Delay Interval | 2 seconds | 8 seconds | 0 seconds |

| Distractor Stimuli | None | 2 flashing lights during delay | None |

| Expected Accuracy | 85-90% | 65-75% | >95% |

| Reward Magnitude (at indifference) | 2 sucrose pellets | 4 sucrose pellets | 1 sucrose pellet |

| Typical Choice Preference | 65% chosen | 35% chosen | 95% chosen |

Table 2: Key Neural Correlates of Cognitive Effort Cost

| Brain Region | Measured Signal | Change with Increased Cognitive Effort | Proposed Function in Cost Valuation |

|---|---|---|---|

| Anterior Cingulate Cortex (ACC) | Gamma power (LFP) | Increases | Cost computation and monitoring |

| Nucleus Accumbens (NAc) | Dopamine transients (dLight) | Decreases at choice | Discounting of reward value |

| Anterior Insula (AI) | BOLD fMRI / Calcium activity | Increases | Subjective effort awareness |

| Locus Coeruleus (LC) | Pupil diameter / NE sensor | Increases | Mobilization of effort resources |

Experimental Protocols

Protocol: Concurrent Cognitive & Physical Effort Discounting Task (Rodent)

- Apparatus: Operant chamber with two retractable levers, a central nose-poke port, a force-sensitive lever, and a reward delivery system.

- Habituation: Train subjects to associate cues with reward.

- Baseline Training: Train on a simple left/right lever choice for reward (1 vs. 3 pellets).

- Physical Effort Introduction: Selecting the high-value lever requires holding the force-sensitive lever for a progressively longer duration (e.g., from 1 s up to 5 s).

- Cognitive Load Introduction: Before the levers are presented, run a delayed match-to-sample task at the nose-poke port. Low load: 1 shape, 2 s delay. High load: 2 shapes, 8 s delay.

- Concurrent Task: Each trial combines a cognitive load phase followed by a physical effort choice. The cognitive load level is cued and varies trial-by-trial.

- Data Collection: Record choice, reaction time, force data, and physiological measures (pupil size, LFP) over 20-30 sessions.

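- Analysis: Fit choice data with a hyperbolic cost-discounting model:

$$V = \frac{R}{1 + b_{\text{phys}}\,E_{\text{phys}} + b_{\text{cog}}\,L_{\text{cog}}}$$

where V is the subjective value of an option, R its reward magnitude, E_phys the physical effort (hold duration), L_cog the cognitive load level, and b_phys and b_cog the fitted discounting parameters.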
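One way to fit this model is sketched below. It rests on stated assumptions: the low-value lever is treated as cost-free, the high-value lever carries the trial's hold duration and cued cognitive load, and choices follow a two-option softmax with a fixed inverse temperature (beta = 3, a hypothetical value that could instead be fitted). The helper names are hypothetical. A coarse grid search stands in for a proper optimizer or hierarchical Bayesian fit.

```python
import numpy as np


def subjective_value(reward, phys_effort, cog_load, b_phys, b_cog):
    """Hyperbolic cost discounting: V = R / (1 + b_phys * E_phys + b_cog * L_cog)."""
    return reward / (1.0 + b_phys * phys_effort + b_cog * cog_load)


def neg_log_lik(params, r_high, effort, load, r_low, chose_high, beta=3.0):
    """Negative log-likelihood of high-value choices under a two-option softmax.

    Assumption: the low-value option is cost-free; the high-value option carries
    the trial's hold duration (effort) and cued cognitive load (load).
    """
    b_phys, b_cog = params
    v_high = subjective_value(r_high, effort, load, b_phys, b_cog)
    v_low = subjective_value(r_low, 0.0, 0.0, b_phys, b_cog)
    p_high = 1.0 / (1.0 + np.exp(-beta * (v_high - v_low)))
    p_high = np.clip(p_high, 1e-9, 1.0 - 1e-9)  # guard against log(0)
    return -np.sum(chose_high * np.log(p_high) + (1 - chose_high) * np.log(1 - p_high))


def fit_grid(r_high, effort, load, r_low, chose_high, grid=np.linspace(0.0, 2.0, 41)):
    """Coarse grid search over (b_phys, b_cog); returns (nll, b_phys, b_cog)."""
    best = None
    for bp in grid:
        for bc in grid:
            nll = neg_log_lik((bp, bc), r_high, effort, load, r_low, chose_high)
            if best is None or nll < best[0]:
                best = (nll, bp, bc)
    return best
```

The fitted b_cog, compared across load conditions or groups, is the quantity that expresses the cognitive cost of the task.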

Protocol: Pupillometry as a Proxy for Cognitive Effort in Human Foraging Tasks

- Setup: Eye-tracker with high temporal resolution (≥ 120Hz) in a dimly lit room.

- Task: Serial visual foraging task on a screen. Subjects search for targets among distractors. Cognitive load is manipulated by target/distractor similarity (low/high).

- Procedure: Each trial begins with a fixation cross (2s baseline). The search array is presented until response or timeout. Reward is inversely proportional to reaction time.

- Pupil Data Processing: Interpolate across blinks and band-pass filter the trace (0.01-6 Hz). Extract the mean pupil diameter during the final 500 ms of the fixation baseline and across the entire search period, then calculate the trial-wise change from baseline.

- Correlation: Correlate trial-by-trial pupil dilation with (a) RT, (b) self-reported effort (post-block), and (c) model-derived cognitive cost parameter.
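The blink-interpolation and baseline-change steps can be sketched in NumPy as follows. This assumes that the tracker marks blinks as zero or missing diameter (conventions differ between systems) and that search onset and offset are logged in seconds. The band-pass step is omitted here; it could be added with a zero-phase Butterworth filter (e.g., `scipy.signal.butter(..., output='sos')` followed by `scipy.signal.sosfiltfilt`). Function names are hypothetical.

```python
import numpy as np


def interpolate_blinks(pupil):
    """Linearly interpolate over invalid samples (NaN or non-positive diameter).

    Assumption: blinks are reported as 0 or missing; check your tracker's convention.
    """
    pupil = np.asarray(pupil, dtype=float).copy()
    bad = ~np.isfinite(pupil)
    bad[~bad] = pupil[~bad] <= 0  # treat zero/negative diameters as blinks too
    if bad.all():
        raise ValueError("no valid pupil samples")
    idx = np.arange(pupil.size)
    pupil[bad] = np.interp(idx[bad], idx[~bad], pupil[~bad])
    return pupil


def trial_dilation(pupil, fs, search_onset, search_offset, baseline_s=0.5):
    """Mean diameter over the search period minus the mean over the final
    `baseline_s` seconds of fixation (times in seconds from recording start)."""
    b0 = int(round((search_onset - baseline_s) * fs))
    b1 = int(round(search_onset * fs))
    s1 = int(round(search_offset * fs))
    return pupil[b1:s1].mean() - pupil[b0:b1].mean()
```

The resulting per-trial dilation values are what would then be correlated with RT, self-reported effort, and the model-derived cost parameter.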

Visualizations

Diagram 1: Foraging Decision Valuation with Cost Integration

Diagram 2: Combined Effort Task Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item Name | Function in Cognitive Effort Research | Example/Product Code |
|---|---|---|
| dLight1.1 AAV | Genetically encoded dopamine sensor for fiber photometry. Measures real-time dopamine fluctuations in NAc during cost-benefit decisions. | Addgene #111068 |
| iGluSnFR AAV | Genetically encoded glutamate sensor. Used to track glutamatergic input to ACC during cognitively demanding tasks. | Addgene #98929 |
| Clozapine N-oxide (CNO) | Pharmacological agent for chemogenetic (DREADD) manipulation of specific neural circuits (e.g., ACC→NAc) to test causality. | Tocris #4936 |
| Pupillometry System | High-speed infrared camera for tracking pupil diameter, a non-invasive proxy for locus coeruleus activity and cognitive effort. | ViewPoint EyeTracker |
| Force-Sensitive Operandum | Programmable lever or touchscreen capable of measuring precise force/duration of presses to quantify physical effort expenditure. | Lafayette Inst. #80203 |
| Cognitive Testing Software | Flexible environment for building complex foraging and decision tasks with precise timing (e.g., PsychToolbox, Bpod, PyBehavior). | Bpod State Machine |
| Hierarchical Bayesian Modeling Software | Toolkit for fitting complex cognitive models to choice data, handling individual and group-level parameters (e.g., Stan, PyMC3). | Stan (rstan/pystan) |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our rodent foraging data in the PatchX maze shows abnormally high giving-up densities (GUDs) in the schizophrenia model group, but the travel time between patches is normal. What does this indicate and how should we adjust our analysis? A1: This pattern suggests a specific deficit in patch assessment or reward valuation, not motor speed or navigation. It aligns with theoretical constructs of "cognitive effort" discounting. Proceed as follows:

- Recalculate: Compute the Marginal Value Theorem (MVT) predicted GUD using your measured travel time. Confirm the experimental GUD significantly exceeds the theoretical optimum.

- Re-analyze Video: Score for deliberative hesitation at the patch entry/exit and unusual micro-movements within the patch, which may indicate impaired integration of cost/benefit.

- Protocol Adjustment: In subsequent runs, implement probe trials where patch depletion rate is suddenly altered. This tests cognitive flexibility in foraging strategy, a key deficit in schizophrenia.
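For the recalculation step, here is a sketch of the MVT prediction. It assumes an exponentially depleting patch with gain g(t) = R(1 - exp(-r t)), where R is the initial patch content and r the depletion rate, both estimated from your own data. The forager should leave when the instantaneous intake rate g'(t) falls to the long-run average g(t) / (t + tau), with tau the measured travel time; the resource left at that moment is the predicted GUD. Function names are hypothetical.

```python
import math


def mvt_leave_time(R, r, travel_time, tol=1e-10):
    """MVT-optimal residence time for an exponentially depleting patch.

    Assumed gain function: g(t) = R * (1 - exp(-r t)), with R the initial patch
    content and r the depletion rate. The forager should leave when the
    instantaneous rate g'(t) equals the long-run rate g(t) / (t + travel_time).
    """
    def excess(t):
        # g'(t) * (t + tau) - g(t): positive before the optimum, negative after
        gain = R * (1.0 - math.exp(-r * t))
        rate = R * r * math.exp(-r * t)
        return rate * (t + travel_time) - gain

    lo, hi = 0.0, 1.0
    while excess(hi) > 0:  # expand until the root is bracketed
        hi *= 2.0
    while hi - lo > tol:  # bisection; excess() is monotonically decreasing
        mid = 0.5 * (lo + hi)
        if excess(mid) > 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def mvt_gud(R, r, travel_time):
    """Predicted giving-up density: resource left in the patch at the optimal leaving time."""
    return R * math.exp(-r * mvt_leave_time(R, r, travel_time))
```

Longer travel times lower the predicted GUD. An observed GUD well above `mvt_gud(R, r, travel_time)` at a normal travel time is the pattern described in Q1.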

Q2: When modeling depressive-like behavior in the Spatial Open Field Foraging Task, how do we dissociate anhedonia (lack of reward pleasure) from simply increased energy cost perception? A2: This is a critical dissociation. Implement a two-stage protocol:

- Stage 1 - Cost Manipulation: Vary the height of barriers (physical effort cost) to reach high-reward zones. Fit effort discounting curves.

- Stage 2 - Reward Devaluation: Pre-feed a specific high-value reward to induce sensory-specific satiety in both model and control animals, then retest.

- Interpretation: If the depressive model's effort discounting is flattened across all costs and unaffected by sensory-specific satiety, this points primarily to anhedonia and global reward insensitivity. If the effort curve is instead shifted, so that disproportionate reward is required for any effort, this suggests a pathological inflation of perceived energy cost.

Q3: Our computational foraging model (MVT-based) fails to fit the behavior of our transgenic mouse model. The residuals are systematically high at the start of sessions. What's wrong? A3: The classic MVT assumes a perfectly informed forager. The systematic early-session error suggests a deficit in the acquisition of the task contingency (learning), not the optimization itself. This is common in neuropsychiatric models.

- Solution: Switch to, or add, a hybrid learning-foraging model, such as a Partially Observable Markov Decision Process (POMDP) or a reinforcement learning model that estimates value through exploration. Compare the learning rate (α) and the softmax inverse temperature (β), which governs the exploration-exploitation balance, between groups. A cognitive-constraints account should model this information-gathering constraint explicitly.
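Here is a minimal sketch of such a hybrid. It assumes a delta-rule estimate of the environment's average reward rate (rho) with learning rate alpha, and a logistic stay/leave rule with inverse temperature beta. The specific update and decision rules, the input layout, and all function names are illustrative assumptions, not a published model. The expected reward for staying (`expected_next`) would come from your own depletion model, and in practice the grid search would be replaced by an optimizer or a hierarchical Bayesian fit.

```python
import numpy as np


def patch_rl_nll(alpha, beta, expected_next, harvest_time, stayed, rewards, durations, rho0=1.0):
    """Negative log-likelihood of stay/leave decisions (hypothetical hybrid model).

    expected_next : reward the agent expects if it stays for one more harvest
    harvest_time  : time one harvest takes (opportunity-cost window)
    stayed        : 1 = stayed, 0 = left, one entry per decision
    rewards, durations : reward earned and time spent by the action actually taken
    The background reward rate rho is learned with a delta rule (learning rate alpha);
    beta is a softmax inverse temperature (low beta = noisier, more exploratory choices).
    """
    rho, nll = rho0, 0.0
    for e, s, r, d in zip(expected_next, stayed, rewards, durations):
        # Stay if the expected harvest beats the opportunity cost of the time it takes
        p_stay = 1.0 / (1.0 + np.exp(-beta * (e - rho * harvest_time)))
        p_stay = min(max(p_stay, 1e-9), 1.0 - 1e-9)
        nll -= np.log(p_stay) if s else np.log(1.0 - p_stay)
        rho += alpha * (r / d - rho)  # move rho toward the rate just experienced
    return nll


def fit_patch_rl(expected_next, harvest_time, stayed, rewards, durations,
                 alphas=np.linspace(0.01, 1.0, 25), betas=np.linspace(0.1, 10.0, 25)):
    """Grid search over (alpha, beta); returns (nll, alpha, beta)."""
    return min(
        (patch_rl_nll(a, b, expected_next, harvest_time, stayed, rewards, durations), a, b)
        for a in alphas for b in betas
    )
```

Because rho starts at an arbitrary value and is updated from experience, a slow learning rate produces exactly the kind of early-session misfit that a static MVT cannot capture.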

Q4: In human VR foraging studies with patients with depression, we encounter high intra-group variability in foraging paths. How can we standardize our metrics? A4: Move beyond simple summary statistics (total rewards, time). Implement the following metrics in your analysis pipeline:

- Spatial Neglect Score: Calculate the proportion of the foraging arena (binned in a grid) never visited.

- Path Entropy: Measure the randomness/unpredictability of the step-by-step path using Shannon entropy.

- Serial Correlation in Inter-Reward Intervals: Compute the lag-1 autocorrelation of successive inter-reward intervals to assess the consistency of foraging success.

Table: Key Foraging Metrics for Human VR Studies

| Metric | Formula/Description | What it Probes in Neuropsychiatry |
|---|---|---|
| Exploration Efficiency | (Area Visited) / (Total Path Length) | Psychomotor slowing, amotivation |
| Decision Vigor | 1 / (Mean Latency to Leave Patch) | Motivational drive, impulsivity |
| Choice Consistency | Inverse of trial-by-trial variance in GUD | Cognitive stability, reward learning |
| Regret | (Optimal Reward per Session) - (Actual Reward) | Global task performance deficit |
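The path-based metrics above can be computed from tracked positions roughly as follows. This sketch assumes a square arena, positions stored as an (n, 2) array in the same units as `arena_size`, and path entropy defined over binned step headings; other operationalizations (e.g., entropy over visited grid cells) are equally defensible. Exploration efficiency approximates "area visited" by the area of visited grid cells. All function names are hypothetical.

```python
import numpy as np


def spatial_neglect(xy, arena_size, n_bins=10):
    """Proportion of cells in an n_bins x n_bins grid over a square arena never visited."""
    counts, _, _ = np.histogram2d(xy[:, 0], xy[:, 1], bins=n_bins,
                                  range=[[0, arena_size], [0, arena_size]])
    return float(np.mean(counts == 0))


def path_entropy(xy, n_dirs=8):
    """Shannon entropy (bits) of step headings binned into n_dirs sectors."""
    steps = np.diff(xy, axis=0)
    steps = steps[np.any(steps != 0, axis=1)]  # ignore stationary samples
    headings = np.arctan2(steps[:, 1], steps[:, 0])
    counts, _ = np.histogram(headings, bins=n_dirs, range=(-np.pi, np.pi))
    p = counts[counts > 0] / counts.sum()
    return float(-np.sum(p * np.log2(p)))


def iri_autocorr(reward_times):
    """Lag-1 (serial) autocorrelation of inter-reward intervals."""
    iri = np.diff(np.sort(np.asarray(reward_times, dtype=float)))
    return float(np.corrcoef(iri[:-1], iri[1:])[0, 1])


def exploration_efficiency(xy, arena_size, n_bins=10):
    """(Area visited) / (total path length); area approximated by visited grid cells."""
    cell_area = (arena_size / n_bins) ** 2
    n_visited = (1.0 - spatial_neglect(xy, arena_size, n_bins)) * n_bins ** 2
    path_length = np.sum(np.linalg.norm(np.diff(xy, axis=0), axis=1))
    return float(n_visited * cell_area / path_length)
```

The grid resolution (`n_bins`) and the number of heading sectors (`n_dirs`) should be fixed before analysis and kept identical across groups, since both metrics depend on them.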

Q5: What are the best practices for validating that a drug intervention in a foraging task is affecting decision-making, not just locomotion? A5: You must include a cascade of control experiments. Follow this protocol:

- Week 1: Baseline Foraging (PatchX Maze): Establish individual animal parameters.

- Week 2: Open Field Test w/ Object Exploration: Administer vehicle and the test compound on separate, counterbalanced days. Measure total distance, velocity, and novel object investigation time.

- Week 3: Control Foraging Task: Under vehicle and compound, run a simplified, free-access consumption task in the same maze to measure pure consummatory behavior and gross motor function.

- Week 4: Drug Test in Foraging Task: Administer the compound. The critical analysis is the dissociation: a procognitive drug should normalize MVT deviations (e.g., GUD) in the disease-model group without significantly altering total distance in the open field or consumption rate in the control task.

Experimental Protocol: Rodent Patch Foraging with Cognitive Load

Objective: To assay the interaction between working memory load and foraging efficiency in a rodent model of schizophrenia.

Materials: See "Research Reagent Solutions" below.

Procedure:

- Habituation: Animals are habituated to the 8-arm radial maze (PatchX) and reward pellets over 5 days.

- Baseline Training: 4 arms are baited. Animal learns to collect all baits. Criterion: >80% correct visits for 3 consecutive days.

- Cognitive Load Induction: Prior to the foraging session, administer a delayed non-match to sample (DNMTS) task in a separate chamber (10 trials, 60 sec delay). This loads working memory.

- Foraging Session: Immediately after DNMTS, place animal in PatchX maze configured in "patchy" mode: 4 arms are "rich patches" (5 pellets, depleting), 4 are "poor patches" (1 pellet). Session runs for 20 minutes or until all pellets are collected.

- Data Collection: Log: a) Sequence of arm entries, b) Dwell time per arm, c) Pellets remaining per arm (GUD), d) Travel time between arms.

- Analysis: Fit a modified MVT incorporating a "cognitive load penalty" on travel time. Compare load vs. no-load conditions within and between model/control groups.
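One way to express a "cognitive load penalty" on travel time is sketched below. It uses an exponential-depletion assumption, g(t) = R(1 - exp(-r t)). Solving the MVT leaving condition g'(t) = g(t) / (t + tau) for tau gives the travel time for which an observed residence time would be optimal: tau_eff = (exp(r t) - 1) / r - t. The ratio of tau_eff to the measured travel time then defines a penalty lambda, with tau_eff = tau * (1 + lambda). This parameterization and the function names are hypothetical. Comparing lambda between load and no-load sessions, and between model and control groups, follows the analysis step above.

```python
import math


def implied_travel_time(leave_time, r):
    """Travel time for which an observed residence time would be MVT-optimal.

    Assumes g(t) = R * (1 - exp(-r t)); solving g'(t) = g(t) / (t + tau) for tau
    gives tau = (exp(r t) - 1) / r - t, which does not depend on R.
    """
    return (math.exp(r * leave_time) - 1.0) / r - leave_time


def load_penalty(leave_time, travel_time, r):
    """Hypothetical penalty lambda in tau_eff = travel_time * (1 + lambda).

    lambda > 0: the animal stays as if travel were costlier than measured;
    lambda < 0: it leaves early, consistent with elevated GUDs.
    """
    return implied_travel_time(leave_time, r) / travel_time - 1.0
```

Here `leave_time` would be the dwell time per arm from the data log, and `r` the depletion rate of the rich patches.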

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Foraging Research |
|---|---|
| PatchX Automated Maze | Configurable radial arena to simulate patchy environments; allows precise control of depletion schedules. |
| ANY-maze Tracking Software | Video tracking for detailed path analysis, dwell time, and zone-specific behavior. |
| Med-PC/Operant Chambers | For integrating traditional operant schedules (PR, FR) within a foraging framework to measure effort. |
| Custom VR Foraging Environment | Human or rodent immersive environment for precise control of spatial and reward variables. |
| PyMVT Modeling Package | Python toolbox for fitting Marginal Value Theorem and reinforcement learning models to foraging data. |
| DREADDs (hM3Dq/hM4Di) | Chemogenetic tools to transiently modulate specific neural circuits (e.g., prefrontal cortex, hippocampus) during foraging. |
| In vivo Calcium Imaging (Miniscope) | To record neural ensemble activity in freely foraging animals, linking strategy to neural dynamics. |
| fNIRS/Eye-Tracker Combo | For human studies, measures prefrontal cortex hemodynamics and visual attention during foraging tasks. |

Visualizations

Diagram 1: Foraging Decision Workflow in Rodent Model

Diagram 2: Key Neural Circuits in Foraging Pathology

Diagram 3: Experimental Pipeline for Drug Testing

Pitfalls and Solutions: Calibrating and Refining Cognitive Foraging Models

Technical Support & Troubleshooting Center

FAQ: Overfitting Cognitive Parameters in Foraging Models

Q1: What are the primary symptoms of overfitted cognitive parameters in my foraging model? A1: Key symptoms include:

- Exceptional performance on training data (e.g., >95% accuracy) with poor performance on validation/hold-out data (e.g., <65% accuracy).

- Extreme or biologically implausible parameter values (e.g., a learning rate >0.9 or a temporal discounting factor near 0).

- High variance of parameter estimates across repeated fits of the same dataset (e.g., fits started from different optimizer initial values).

- The model fails to generalize to a new, slightly modified foraging task intended to probe the same cognitive construct.

Q2: What experimental design flaws most commonly lead to this overfitting? A2:

- Insufficient or Low-Quality Data: Too few trials per condition or participant, or task designs that do not adequately dissociate cognitive processes.

- Model Complexity Mismatch: Using a model with too many free parameters (e.g., a 7-parameter reinforcement learning model) for a simple task that only probes 1-2 cognitive dimensions.

- Inadequate Validation: Using only one dataset for both fitting and testing, or not employing cross-validation techniques.

Q3: What are the recommended statistical and computational remedies? A3: Implement a rigorous model comparison and validation pipeline:

| Method | Description | Quantitative Benchmark |
|---|---|---|
| Cross-Validation (k-fold) | Partition data into k subsets. Fit on k-1 folds, test on the held-out fold. Repeat. | Report mean ± SD of test log-likelihood or accuracy across folds. |
| Information Criteria (AIC/BIC) | Penalize model likelihood by the number of free parameters (BIC also scales the penalty with sample size). Lower scores indicate a better fit-complexity trade-off. | Prefer the lower-scoring model when its ΔAIC/BIC relative to the next-best model exceeds 2; differences above 10 indicate strong support. |
| Bayesian Regularization & Predictive Checks | Use Bayesian methods with informative, biologically constrained priors to regularize estimates. | Prior predictive checks: behavior simulated under the priors should stay within a plausible range. Posterior predictive checks: simulations from the fitted model should cover the range of observed behavior. |
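The first two benchmarks can be computed with a few lines of NumPy. The sketch assumes that trials are rows of a NumPy array and that you supply your own `fit(train)` and `loglik(params, test)` functions. AIC = 2k - 2 ln L and BIC = k ln n - 2 ln L use the maximized log-likelihood L, k free parameters, and n observations. Function names are hypothetical.

```python
import numpy as np


def aic(log_lik, k):
    """Akaike information criterion from a maximized log-likelihood and k parameters."""
    return 2 * k - 2 * log_lik


def bic(log_lik, k, n):
    """Bayesian information criterion; n is the number of observations."""
    return k * np.log(n) - 2 * log_lik


def kfold_test_loglik(data, fit, loglik, k=5, seed=0):
    """Mean and SD of held-out log-likelihood across k folds.

    fit(train) -> params; loglik(params, test) -> float. Folds are random trial splits.
    """
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(data)), k)
    scores = []
    for i in range(k):
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        params = fit(data[train_idx])
        scores.append(loglik(params, data[folds[i]]))
    return float(np.mean(scores)), float(np.std(scores))
```

Fold assignment here is by trial. For multi-subject datasets, holding out whole subjects gives a stricter test of generalization.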