Beyond the Microphone: How Acoustic Localization Transforms Animal Behavior Research and Drug Development

This article provides a comprehensive analysis of acoustic localization and tracking for vocalizing animals, a critical tool in preclinical behavioral neuroscience and pharmacology.


Abstract

This article provides a comprehensive analysis of acoustic localization and tracking for vocalizing animals, a critical tool in preclinical behavioral neuroscience and pharmacology. We explore the fundamental principles of bioacoustics and sound source localization, detail current methodologies from microphone arrays to deep learning algorithms, and address key challenges in real-world environments. By comparing this technique to video tracking and other modalities, we validate its unique value in quantifying social interactions, distress calls, and communication patterns in animal models. Targeted at researchers and drug development professionals, this guide underscores how precise acoustic tracking generates objective, high-dimensional behavioral biomarkers essential for validating therapeutic efficacy and understanding disease mechanisms.

The Science of Sound: Core Principles of Bioacoustics and Source Localization

Animal vocalizations are key signals in neuroscience, behavioral pharmacology, and neuropsychiatric drug development. Within the broader thesis of acoustic localization and tracking, precisely defining these vocalizations' physical and contextual characteristics is foundational for correlating sound emission with an animal's position, movement, and behavioral state.

Quantitative Acoustic Features of Common Research Models

The following parameters, measurable via specialized software (e.g., DeepSqueak, MUPET, VocalMat), are essential for signal definition and subsequent localization algorithms.

Table 1: Characteristic Vocalization Features by Species and Context

| Species | Common Model | Call Type | Frequency Range (kHz) | Duration (ms) | Amplitude (dB SPL) | Key Context | Relevance to Tracking |

|---|---|---|---|---|---|---|---|

| Mouse (Mus musculus) | C57BL/6, B6D2F1 | Ultrasonic Vocalization (USV) | 30-110 | 10-150 | ~50-80 | Pup isolation, adult social interaction, mating | High-frequency necessitates specialized mics; call rate maps social exploration paths. |

| Rat (Rattus norvegicus) | Sprague-Dawley, Long-Evans | 50-kHz Trill (Appetitive) | 30-70 | 20-100 | ~60-85 | Positive anticipation, play, social interaction | Localization of 50-kHz vs. 22-kHz calls tracks reward vs. aversion zones. |

| Rat (Rattus norvegicus) | Sprague-Dawley, Long-Evans | 22-kHz Long Call (Aversive) | 18-32 | 300-3000 | ~65-75 | Fear, anxiety, post-conditioning | Long duration aids in precise spatial triangulation of threat response. |

| Zebra Finch (Taeniopygia guttata) | Adult male | Song Syllable | 1-8 | 30-300 | ~70-90 | Courtship, territorial defense | Complex sequences require high-temporal-resolution tracking for sensorimotor studies. |

| Marmoset (Callithrix jacchus) | Common marmoset | Phee Call | 7-10 | 500-2000 | ~65-80 | Long-distance contact | Loud, tonal calls are ideal for outdoor/aviary localization studies. |

Experimental Protocols

Protocol 1: Recording and Characterizing Rodent Ultrasonic Vocalizations (USVs) for Localization Calibration

Objective: To acquire high-fidelity, spatially referenced USV data for defining species-specific signal parameters and testing localization accuracy.

Materials: See "Research Reagent Solutions" below.

Procedure:

- Acoustic Chamber Setup: Place the recording arena (e.g., 40cm x 40cm open field or two-chamber social apparatus) inside a sound-attenuating chamber. Ensure infrared lighting for video.

- Microphone Array Calibration: Position at least 4 ultrasonic microphones in a known 3D geometry (e.g., corners of the arena). Measure the precise (x,y,z) coordinates of each mic relative to an arena origin. Input coordinates into acquisition software.

- Synchronization: Synchronize the microphone array's digital audio stream with an overhead video camera using a trigger pulse or dedicated sync hardware (e.g., TTL pulse generator).

- Subject Introduction & Recording: Introduce subject animal(s) according to experimental paradigm (e.g., isolated pup, adult male-female pair). Begin simultaneous audio-video recording.

- Audio Settings: Sampling rate ≥ 250 kHz, 16-bit depth to capture ultrasounds up to 125 kHz.

- Duration: Record for the appropriate test period (e.g., 5 min for social interaction).

- Signal Processing & Feature Extraction:

  a. Bandpass Filter: Filter raw audio files between 20-120 kHz for mice, or 10-80 kHz for rats.

  b. Detection: Use an amplitude threshold (e.g., -40 dB relative to max) or spectral cross-correlation algorithm to detect candidate USVs.

  c. Feature Measurement: For each detected call, extract quantitative features:

     - Fundamental Frequency (peak, mean, contour).

     - Duration (start/end points at threshold crossing).

     - Amplitude (root-mean-square power in dB SPL, requires mic sensitivity calibration).

     - Bandwidth and Spectrographic Entropy.

- Localization Validation: Isolate individual, high-SNR calls. Use Time-Difference-of-Arrival (TDOA) algorithms on the multi-channel audio to compute a 3D source location. Cross-reference this computed location with the animal's position from synchronized video at the call's timestamp.
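The filtering and detection steps of this protocol can be sketched as follows. This is a minimal illustration, not the method used by DeepSqueak, MUPET, or VocalMat: the function name `detect_calls`, the 1 ms analysis window, the brick-wall FFT filter (a stand-in for a properly designed bandpass filter), and the synthetic test signal are all assumptions made for the example.

```python
import numpy as np

def detect_calls(x, fs, band=(20e3, 120e3), thresh_db=-40.0, win_s=1e-3):
    """Return (start_s, end_s) pairs where the band-limited level exceeds
    thresh_db relative to the loudest analysis window."""
    # Brick-wall bandpass in the frequency domain (illustrative simplification).
    X = np.fft.rfft(x)
    f = np.fft.rfftfreq(len(x), 1.0 / fs)
    X[(f < band[0]) | (f > band[1])] = 0.0
    y = np.fft.irfft(X, n=len(x))

    # Short-time RMS level in non-overlapping windows.
    win = max(1, int(win_s * fs))
    n_frames = len(y) // win
    frames = y[: n_frames * win].reshape(n_frames, win)
    rms = np.sqrt(np.mean(frames ** 2, axis=1))
    level_db = 20.0 * np.log10(rms / rms.max() + 1e-12)

    # Convert the boolean activity mask into start/end frame indices.
    active = level_db > thresh_db
    padded = np.concatenate(([False], active, [False]))
    edges = np.flatnonzero(padded[1:] != padded[:-1])
    starts, ends = edges[0::2], edges[1::2]
    return [(s * win / fs, e * win / fs) for s, e in zip(starts, ends)]

# Synthetic check: a Hann-shaped 60 kHz burst from 50 ms to 80 ms in weak noise.
rng = np.random.default_rng(0)
fs = 250_000
t = np.arange(int(0.2 * fs)) / fs
x = 1e-4 * rng.standard_normal(t.size)
burst = (t >= 0.05) & (t < 0.08)
x[burst] += np.hanning(burst.sum()) * np.sin(2 * np.pi * 60e3 * t[burst])
calls = detect_calls(x, fs)
```

Because the threshold is relative to the loudest window, a recording that contains no calls will still produce detections; a real pipeline would add an absolute noise-floor check.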

Protocol 2: Eliciting and Quantifying Aversive 22-kHz Rat Calls in a Conditioned Fear Paradigm

Objective: To generate a reliable signal with defined characteristics for studies localizing fear and anxiety responses.

Materials: Fear conditioning chamber with grid floor, speaker, shock generator, ultrasonic microphone, video camera.

Procedure:

- Habituation: Allow the rat to explore the neutral conditioning chamber for 5 min (Day 1).

- Fear Conditioning: Present a 30-second acoustic tone (Conditioned Stimulus, CS). In the final 2 seconds, deliver a mild foot shock (0.7 mA, Unconditioned Stimulus, US). Repeat 3-5 times with variable inter-trial intervals (Day 2).

- Contextual Fear Recall & Recording: 24 hours post-conditioning (Day 3), return the rat to the same chamber with no tone or shock. Record behavior and vocalizations for 10 minutes.

- Focus: The post-shock context reliably elicits 22-kHz long calls.

- Signal Analysis: Isolate 22-kHz calls using an 18-32 kHz bandpass filter. Characterize the bout structure (typically calls lasting ~1 second, repeated in bouts that can last many minutes). Measure the interval between calls within a bout (typically ~100-200 ms).

- Spatial Mapping: Plot the computed origin of each 22-kHz call onto the floor plan of the fear chamber. This creates a "heatmap of aversive vocalization," typically showing clustering in the location where shock was received.
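The spatial-mapping step amounts to a 2D histogram of localized call origins. The sketch below assumes the localization stage has already produced floor coordinates in metres; the chamber dimensions, bin size, and the name `call_heatmap` are hypothetical.

```python
import numpy as np

def call_heatmap(xy, arena=(0.30, 0.25), bin_size=0.05):
    """Count localized calls per floor bin.

    xy: (N, 2) array of call origins in metres, origin at one chamber corner.
    arena: (width, depth) of the chamber floor in metres (assumed values).
    """
    xy = np.asarray(xy, dtype=float)
    x_edges = np.arange(0.0, arena[0] + bin_size / 2, bin_size)
    y_edges = np.arange(0.0, arena[1] + bin_size / 2, bin_size)
    counts, _, _ = np.histogram2d(xy[:, 0], xy[:, 1], bins=[x_edges, y_edges])
    return counts, x_edges, y_edges

# Made-up coordinates: three calls near one corner, one near the opposite corner.
origins = [(0.02, 0.03), (0.03, 0.02), (0.04, 0.04), (0.27, 0.22)]
counts, x_edges, y_edges = call_heatmap(origins)
```

Dividing `counts` by the time the animal spent in each bin (from video tracking) separates where the animal called from where it merely spent time.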

Diagrams

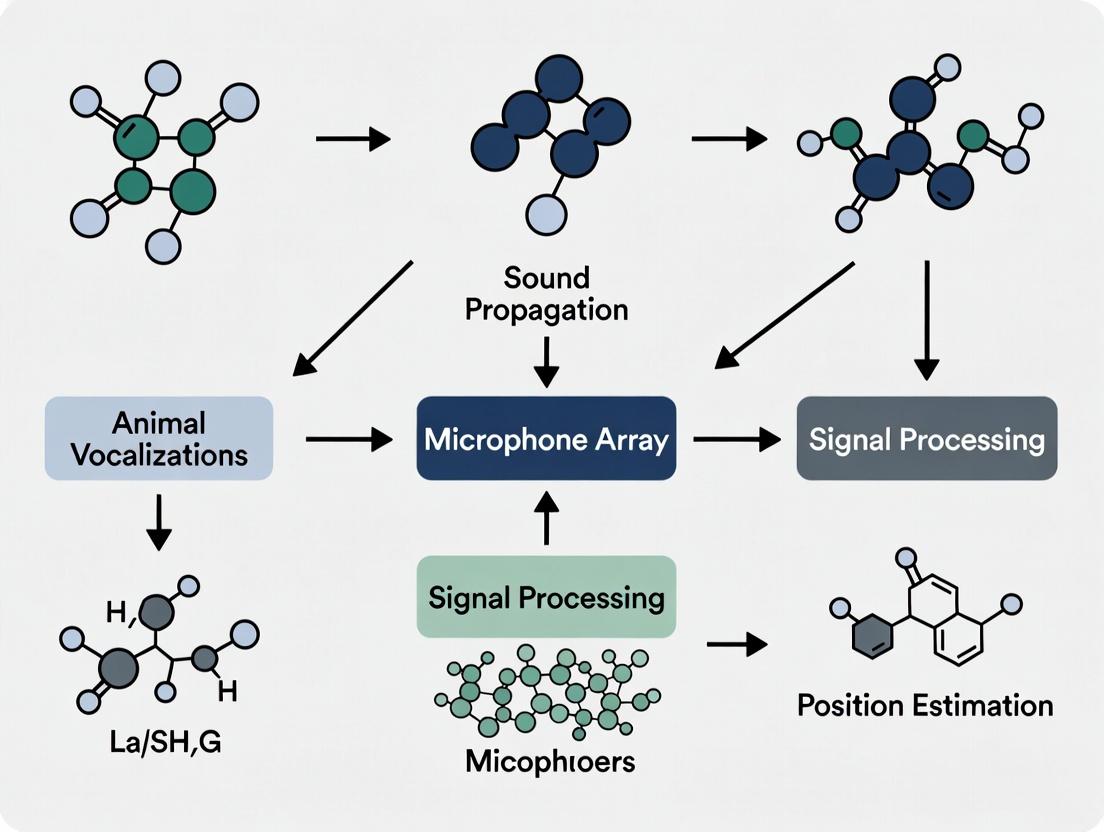

Diagram 1: Acoustic Localization & Signal Processing Workflow

Diagram 2: Rat Vocalization Pathways in Behavioral Contexts

Research Reagent Solutions & Essential Materials

Table 2: Key Toolkit for Vocalization Signal Definition Studies

| Item | Function & Specification | Example Use Case |

|---|---|---|

| Ultrasonic Microphone | Wide-bandwidth condenser mic capable of recording >200 kHz. Must have flat frequency response in species range (e.g., 10-150 kHz). | Capturing mouse USVs during social interaction. |

| Multi-Channel Acoustic Array | 4-8 synchronized microphones in a calibrated 3D geometry. | Source localization via TDoA algorithms. |

| Data Acquisition System | High-speed ADC with sampling rate ≥250 kHz per channel and low-noise preamps. | Simultaneous recording from microphone array. |

| Audio-Video Sync Hardware | TTL pulse generator or dedicated sync box (e.g., from Neuralynx, Blackrock). | Aligning vocalization timestamp with animal's video-tracked position. |

| Sound-Attenuating Chamber | Acoustically isolated chamber with foam lining. Minimizes echoes and external noise. | Creating controlled acoustic environment for precise recording. |

| Vocalization Analysis Software | Automated detection/classification software (e.g., DeepSqueak, VocalMat). | Batch processing of thousands of calls for feature extraction. |

| Acoustic Calibrator | Pistonphone or tone generator producing a known SPL at specific frequencies. | Converting recorded amplitude to absolute dB SPL. |

| Programmable Fear Conditioning System | Chamber with grid floor, speaker, shock generator, and controller software. | Eliciting and recording context-specific 22-kHz rat calls. |

This application note details the principles and protocols for employing Time Difference of Arrival (TDOA) and beamforming techniques within the context of acoustic localization and tracking of vocalizing animals. The methodologies are critical for non-invasive monitoring in ecological studies, behavioral pharmacology, and the assessment of vocalization biomarkers in drug development research.

Theoretical Foundations

Physics of Wavefront Propagation and TDOA

Sound waves emitted by a source (e.g., a vocalizing animal) propagate as spherical wavefronts. An array of $M$ microphones at known positions $\mathbf{m}_i$ records the signal. The TDOA between a microphone pair $(i, j)$ is $\Delta\tau_{ij} = \tau_i - \tau_j$, where $\tau_i$ is the absolute time of arrival at microphone $i$. For a source at unknown position $\mathbf{s}$, the range difference is $c\,\Delta\tau_{ij} = \|\mathbf{s} - \mathbf{m}_i\| - \|\mathbf{s} - \mathbf{m}_j\|$, where $c$ is the speed of sound (~343 m/s at 20°C). Solving these hyperbolic equations yields the source location.
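As a small worked instance of the range-difference relation, the following sketch computes the TDOA for one microphone pair. The geometry (two microphones 0.4 m apart, source on the baseline) is hypothetical and chosen only so the result is easy to check by hand.

```python
import numpy as np

C = 343.0  # speed of sound in m/s at about 20 °C, as quoted in the text

def tdoa(source, mic_i, mic_j, c=C):
    """TDOA for pair (i, j): (||s - m_i|| - ||s - m_j||) / c, in seconds."""
    s = np.asarray(source, dtype=float)
    d_i = np.linalg.norm(s - np.asarray(mic_i, dtype=float))
    d_j = np.linalg.norm(s - np.asarray(mic_j, dtype=float))
    return (d_i - d_j) / c

# Source 0.1 m from microphone i and 0.3 m from microphone j.
dt = tdoa([0.1, 0.0, 0.0], [0.0, 0.0, 0.0], [0.4, 0.0, 0.0])
# A source equidistant from both microphones gives zero delay.
dt_mid = tdoa([0.2, 0.5, 0.0], [0.0, 0.0, 0.0], [0.4, 0.0, 0.0])
```

The negative sign of `dt` reflects the convention above: the call reaches microphone $i$ before microphone $j$.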

Fundamentals of Beamforming

Beamforming is a spatial filtering technique that combines signals from multiple sensors to enhance signals coming from a specific direction (steering vector) while suppressing others. The Delay-and-Sum (DAS) beamformer computes the output power $P(\theta)$ for a steering direction $\theta$: $P(\theta) = \left| \sum_{i=1}^{M} w_i\, x_i\big(t + \Delta_i(\theta)\big) \right|^2$, where $x_i$ is the signal at the $i$-th microphone, $w_i$ is a weighting factor, and $\Delta_i(\theta)$ is the time delay applied to steer the beam towards $\theta$.
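As a hedged illustration of how the steering delays can be obtained, assume a far-field (plane-wave) source. The text does not state this assumption, and small indoor arenas often violate it. Let $\mathbf{u}(\theta)$ be the unit vector pointing from the array origin toward the candidate direction and $s(t)$ the signal as it would be observed at the origin. Microphones nearer the source receive the wavefront earlier, so

$$
x_i(t) = s\!\left(t + \frac{\mathbf{m}_i \cdot \mathbf{u}(\theta)}{c}\right)
\quad\Longrightarrow\quad
\Delta_i(\theta) = -\frac{\mathbf{m}_i \cdot \mathbf{u}(\theta)}{c}.
$$

For a near-field candidate position $\mathbf{p}$, the corresponding delay is $\Delta_i = \left(\|\mathbf{p} - \mathbf{m}_i\| - \|\mathbf{p}\|\right)/c$, which reduces to the far-field expression as $\|\mathbf{p}\|$ grows.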

Key Performance Data and Comparisons

Table 1: Comparison of Acoustic Localization Techniques

| Parameter | TDOA-Based Localization | Beamforming (DAS) | Advanced Beamforming (MVDR) |

|---|---|---|---|

| Spatial Resolution | High (depends on geometry) | Moderate (limited by array aperture) | High (adaptive nulling) |

| Computational Load | Moderate (hyperbolic solver) | Low (simple delays & sum) | High (inverse covariance matrix) |

| Robustness to Noise | Moderate (requires clear onsets) | Low (broadside sensitivity) | High (adaptive noise suppression) |

| Typical Accuracy | 0.5 - 2° (azimuth) | 5 - 15° (azimuth) | 2 - 5° (azimuth) |

| Primary Use Case | Precise 3D coordinate estimation | Direction-of-Arrival (DOA) estimation & scanning | DOA in high-noise, multi-source environments |

Table 2: Environmental Factors Affecting Sound Speed & Localization Accuracy

| Factor | Impact on Speed of Sound (c) | Effect on TDOA Error (for Δτ=1 ms) |

|---|---|---|

| Temperature | c ≈ 331.4 + 0.6T_C m/s (T_C in °C) | ~0.18% range error per °C of temperature error (~0.6 mm/°C at Δτ = 1 ms; ~1.8 m/°C per km of range) |

| Humidity | Minor increase (~0.1% from 0-100% RH) | Negligible for short ranges (<100m) |

| Wind | Effective c = c_0 + w·n (wind vector w, unit direction n) | Dominant error source; requires vector measurement |
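The temperature row can be turned into a quick calculation. This sketch uses only the linear approximation from the table; the function names are hypothetical, and humidity and wind are ignored.

```python
def speed_of_sound(temp_c):
    """Linear approximation from the table: c = 331.4 + 0.6 * T (m/s, T in °C)."""
    return 331.4 + 0.6 * temp_c

def range_difference_error(delta_tau_s, temp_error_c):
    """Error in c * delta_tau (metres) when the assumed temperature is off
    by temp_error_c degrees Celsius."""
    return 0.6 * temp_error_c * delta_tau_s

c_20 = speed_of_sound(20.0)                       # 343.4 m/s
err_1ms = range_difference_error(1e-3, 1.0)       # 0.0006 m, i.e. 0.6 mm per °C
relative_error = 0.6 / c_20                       # about 0.17% per °C
```

For a laboratory arena the effect is small, but it grows in proportion to range, which is why field deployments log temperature alongside the audio.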

Experimental Protocols

Protocol 3.1: Calibration of a Microphone Array for Field Deployment

Objective: To determine the precise 3D coordinates of each microphone in an array and synchronize their clocks.

Materials: Calibrated ultrasonic emitter, laser rangefinder/Total Station, GPS (for geo-referencing), synchronous recording system or clapper board.

Procedure:

- Geometric Calibration: Place the array in the deployment configuration. Using a known-position ultrasonic emitter or a laser rangefinder, measure the x, y, z coordinates of each microphone's diaphragm relative to a chosen origin. Record in a calibration file.

- Synchronization Check: Generate an impulsive sound (clapper) within clear line-of-sight of all microphones. Record the event. Check the timestamp difference of the impulse peak across all channels. If using separate recorders, the residual sync error must stay within the allowable limit (e.g., < 1/10 of the shortest signal period) and must be verified post hoc via cross-correlation of this common event.

- Environmental Calibration: Measure and record ambient temperature, humidity, and wind speed/direction at the array center.

Protocol 3.2: TDOA Estimation via Generalized Cross-Correlation with Phase Transform (GCC-PHAT)

Objective: To accurately estimate the time delay $\Delta\tau_{ij}$ between two microphone signals.

Workflow:

- Pre-processing: Bandpass filter the raw signals $x_i(t)$, $x_j(t)$ around the expected animal vocalization frequency (e.g., 1-8 kHz for many birds/anurans).

- Compute GCC-PHAT: Calculate the cross-power spectrum $G_{ij}(f) = X_i(f)\,X_j^{*}(f)$. Apply the PHAT weighting $\psi(f) = 1/|G_{ij}(f)|$.

- Obtain Cross-Correlation: Compute the generalized cross-correlation $R_{ij}(\tau) = \mathrm{IFFT}\{\psi(f)\,G_{ij}(f)\}$.

- Peak Detection: Find the time lag $\tau_{\max}$ that maximizes $R_{ij}(\tau)$. This is the estimated $\Delta\tau_{ij}$. Use parabolic interpolation around the peak for sub-sample accuracy.

- Validation: The peak value must exceed a set SNR threshold (e.g., 5 dB above the RMS of $R_{ij}(\tau)$).
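The workflow above can be sketched in NumPy as follows. The function name `gcc_phat`, the zero-padding choice, the small regularization constant, and the synthetic test signal are assumptions for illustration; pre-filtering and the SNR validation step are omitted for brevity.

```python
import numpy as np

def gcc_phat(x_i, x_j, fs, max_tau=None):
    """Estimate the delay tau_i - tau_j (seconds) between two channels."""
    n = len(x_i) + len(x_j)                 # zero-pad to avoid circular wrap-around
    X_i = np.fft.rfft(x_i, n=n)
    X_j = np.fft.rfft(x_j, n=n)
    G = X_i * np.conj(X_j)                  # cross-power spectrum
    R = np.fft.irfft(G / (np.abs(G) + 1e-12), n=n)   # PHAT-weighted correlation

    max_shift = n // 2
    if max_tau is not None:
        max_shift = min(int(fs * max_tau), max_shift)
    # Reorder so that lags run from -max_shift to +max_shift.
    R = np.concatenate((R[-max_shift:], R[: max_shift + 1]))

    k = int(np.argmax(R))
    offset = 0.0
    if 0 < k < len(R) - 1:                  # parabolic interpolation around the peak
        a, b, c = R[k - 1], R[k], R[k + 1]
        denom = a - 2.0 * b + c
        if denom != 0.0:
            offset = 0.5 * (a - c) / denom
    return (k - max_shift + offset) / fs

# Synthetic check: channel i receives the same broadband signal 25 samples later.
rng = np.random.default_rng(0)
fs, d = 250_000, 25
s = rng.standard_normal(4096)
x_j = s
x_i = np.concatenate((np.zeros(d), s[:-d]))
est = gcc_phat(x_i, x_j, fs, max_tau=1e-3)
```

Setting `max_tau` to the largest physically possible delay (microphone spacing divided by the speed of sound) rejects spurious peaks caused by echoes. Because PHAT whitening weights all frequency bins equally, out-of-band noise should be removed before this step, as the pre-processing item requires.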

Protocol 3.3: Source Localization via Non-Linear Least Squares Minimization

Objective: To compute the animal's 3D coordinates from multiple TDOA estimates.

Procedure:

- Input: $N$ TDOA measurements $\Delta\tau_k$ ($k = 1, \dots, N$) from $M$ microphones, where measurement $k$ comes from microphone pair $(i_k, j_k)$ and at most $M - 1$ pairs are independent. Known microphone coordinates $\mathbf{m}_i$ and sound speed $c$.

- Cost Function: Define the error cost function $F(\mathbf{s}) = \sum_{k=1}^{N} \left[ c\,\Delta\tau_k - \left( \|\mathbf{s} - \mathbf{m}_{i_k}\| - \|\mathbf{s} - \mathbf{m}_{j_k}\| \right) \right]^2$.

- Minimization: Use an iterative algorithm (e.g., Levenberg-Marquardt) with an initial guess (e.g., array centroid). Provide the algorithm with the Jacobian of the residuals (the bracketed terms of $F$).

- Output: Estimated source coordinate $\mathbf{s}_{\text{est}}$ and a covariance matrix indicating localization uncertainty.
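A minimal sketch of this minimization is shown below. It is a hand-written damped Gauss-Newton loop (a basic Levenberg-Marquardt-style iteration) in NumPy rather than a library optimizer; the function names, damping schedule, and five-microphone geometry are hypothetical, and the TDOAs are noise-free.

```python
import numpy as np

def residuals(s, mics, pairs, tdoas, c):
    """c * tau_k - (||s - m_i|| - ||s - m_j||) for every pair k."""
    d = np.linalg.norm(s - mics, axis=1)
    return c * tdoas - (d[pairs[:, 0]] - d[pairs[:, 1]])

def jacobian(s, mics, pairs):
    """Derivative of the residuals with respect to the source position."""
    diff = s - mics
    unit = diff / np.linalg.norm(diff, axis=1, keepdims=True)
    return -(unit[pairs[:, 0]] - unit[pairs[:, 1]])

def localize(mics, pairs, tdoas, c=343.0, s0=None, n_iter=100, lam=1e-3):
    s = mics.mean(axis=0) if s0 is None else np.asarray(s0, dtype=float)
    r = residuals(s, mics, pairs, tdoas, c)
    for _ in range(n_iter):
        J = jacobian(s, mics, pairs)
        step = np.linalg.solve(J.T @ J + lam * np.eye(3), -J.T @ r)
        r_new = residuals(s + step, mics, pairs, tdoas, c)
        if r_new @ r_new < r @ r:           # accept the step, relax damping
            s, r, lam = s + step, r_new, lam * 0.5
        else:                               # reject the step, increase damping
            lam *= 10.0
        if np.linalg.norm(step) < 1e-10:
            break
    return s

# Hypothetical non-coplanar array (metres); microphone 0 is the reference.
mics = np.array([[0.0, 0.0, 0.3], [0.4, 0.0, 0.1], [0.4, 0.4, 0.3],
                 [0.0, 0.4, 0.1], [0.2, 0.2, 0.5]])
pairs = np.array([[1, 0], [2, 0], [3, 0], [4, 0]])
true_s = np.array([0.12, 0.27, 0.2])
d = np.linalg.norm(true_s - mics, axis=1)
tdoas = (d[pairs[:, 0]] - d[pairs[:, 1]]) / 343.0
est = localize(mics, pairs, tdoas)
```

Placing the microphones at different heights matters here: a coplanar array cannot distinguish a source above the plane from its mirror image below it.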

Protocol 3.4: Broadband Beamforming for Direction-of-Arrival (DOA) Mapping

Objective: To create an acoustic "image" (power map) showing likely source directions.

Procedure:

- Define Scan Grid: Create a 2D grid of steering directions (θ, φ) covering the array's field of view.

- Segment Data: For a recording containing vocalizations, select time segments of interest.

- Steer and Sum: For each grid point θ, calculate the appropriate time delays Δ_i(θ) for each microphone. Apply delays, sum the aligned signals, and compute the average power P(θ) over the segment.

- Generate Map: Plot P(θ) as a color map over the angular grid. Local maxima indicate potential source directions.
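The steer-and-sum procedure can be sketched as below for the simplified case of a planar array scanning azimuth only. The sketch assumes a far-field source and uniform weights, applies fractional delays as phase shifts in the frequency domain, and uses a hypothetical six-microphone circular array; none of these choices come from the protocol itself.

```python
import numpy as np

def das_power_map(x, mics, fs, azimuths_deg, c=343.0):
    """Delay-and-sum power per azimuth.

    x: (M, T) array of microphone signals; mics: (M, 2) positions in metres.
    """
    M, T = x.shape
    X = np.fft.rfft(x, axis=1)
    f = np.fft.rfftfreq(T, 1.0 / fs)
    power = np.empty(len(azimuths_deg))
    for k, az in enumerate(np.deg2rad(azimuths_deg)):
        u = np.array([np.cos(az), np.sin(az)])   # unit vector toward candidate source
        delays = -(mics @ u) / c                 # time shift applied to each channel
        # Evaluating x_i(t + delay) equals multiplying X_i(f) by exp(+j 2 pi f delay).
        steered = X * np.exp(2j * np.pi * f[None, :] * delays[:, None])
        y = np.fft.irfft(steered.mean(axis=0), n=T)
        power[k] = np.mean(y ** 2)
    return power

# Simulated broadband plane wave arriving from 60 degrees azimuth.
rng = np.random.default_rng(0)
fs, T = 48_000, 4096
angles = np.arange(6) * np.pi / 3
mics = 0.05 * np.column_stack((np.cos(angles), np.sin(angles)))
u0 = np.array([np.cos(np.deg2rad(60.0)), np.sin(np.deg2rad(60.0))])
S = np.fft.rfft(rng.standard_normal(T))
f = np.fft.rfftfreq(T, 1.0 / fs)
# Microphones nearer the source receive the wavefront earlier.
x = np.fft.irfft(S * np.exp(2j * np.pi * f * (mics @ u0)[:, None] / 343.0), n=T)
grid = np.arange(0, 360, 5)
power = das_power_map(x, mics, fs, grid)
best = grid[np.argmax(power)]
```

Plotting `power` against `grid` gives the one-dimensional analogue of the color map described in the final step; extending the scan to elevation requires 3D microphone coordinates and a second loop.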

Visualization of Methodologies and Relationships

Title: Acoustic Localization & Beamforming Workflow

Title: TDOA Hyperbolic Localization Principle

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Acoustic Localization Field Research

| Item / Solution | Specification / Example | Primary Function in Research |

|---|---|---|

| Synchronized Recorder | Wildlife Acoustics Song Meter SM4, Zoom F8n with sync | Provides time-aligned multi-channel audio data essential for TDOA. |

| Calibrated Microphone | Knowles FG-O, Earthworks M30 | Flat frequency response for accurate waveform capture across species' vocal range. |

| Microphone Array Mount | Custom rigid frame (e.g., carbon fiber) | Maintains precise, known geometric relationships between sensors. |

| Acoustic Calibrator | Pistonphone (e.g., 94 dB @ 1 kHz) | Provides reference SPL for quantifying vocalization amplitude. |

| Environmental Sensor | Kestrel 5500 Weather Meter | Measures temperature, humidity, wind for accurate sound speed calculation. |

| Geo-referencing Tool | RTK GPS (e.g., Emlid Reach RS2+) | Precisely maps array and source coordinates in a global reference frame. |

| TDOA/Beamforming Software | MATLAB with Phased Array Toolbox, Open-source (LOCATA, MHAcoustics) | Implements GCC-PHAT, localization solvers, and beamforming algorithms. |

| Wind Shield | Rycote Cyclone, custom foam windscreen | Reduces wind noise, a major source of error in outdoor recordings. |

This document provides application notes and protocols for utilizing microphone arrays within the context of acoustic localization and tracking of vocalizing animals. This research is critical for behavioral studies, population monitoring, and understanding the impact of environmental changes or pharmacological interventions on animal communication.

Types of Microphone Arrays

Linear Arrays

A one-dimensional arrangement of microphones. Optimal for estimating the direction-of-arrival (DOA) of sound in a single plane, often used for tracking animal movement along a transect.

Planar (2D) Arrays

Microphones arranged in a two-dimensional plane (e.g., grid, circular). Capable of estimating both azimuth and elevation, suitable for localization in open fields or under forest canopies.

Volumetric (3D) Arrays

A three-dimensional distribution of microphones (e.g., on tetrahedrons, cubes, or distributed across vegetation). Provides full 3D spatial localization, essential for complex habitats like forests where animals may be at varying heights.

Ad-hoc & Distributed Arrays

Loosely synchronized arrays where microphones are spread over a large area, often connected wirelessly. Used for large-scale monitoring and tracking across extensive territories.

Key Performance Metrics & Quantitative Comparison

Table 1: Microphone Array Configuration Comparison

| Array Type | Typical Element Count | Localization Dimension | Angular Resolution Range | Effective Range (Typical) | Common Habitat Use | Deployment Complexity |

|---|---|---|---|---|---|---|

| Linear | 2-8 | 1D (DOA) | 5° - 15° | 10m - 50m | Transects, rivers | Low |

| Planar (Circular) | 4-16 | 2D (Azimuth, Elevation) | 2° - 10° | 20m - 100m | Open fields, clearings | Medium |

| Volumetric (Tetrahedral) | 4-32 | 3D (X, Y, Z) | 1° - 5° | 10m - 150m | Forests, complex terrain | High |

| Distributed Ad-hoc | 8+ (multiple clusters) | 2D/3D (Coarse) | >10° | 100m - 1km+ | Landscape scale | Very High |

Data synthesized from recent field studies and hardware specifications (2023-2024).

Table 2: Microphone Element Specifications for Wildlife Bioacoustics

| Parameter | Recommended Specification | Rationale |

|---|---|---|

| Self-Noise Level | < 20 dBA SPL | Critical for detecting faint animal vocalizations. |

| Frequency Response | 20 Hz - 20 kHz (±3 dB) | Covers most terrestrial animal vocalizations (e.g., birds, mammals, anurans). |

| Dynamic Range | ≥ 120 dB | Handles quiet calls and loud ambient noise. |

| Polar Pattern | Omnidirectional | Captures sound from all directions for multi-source localization. |

| Weatherproofing | IP67 or higher | Essential for sustained outdoor deployment. |

| Synchronization Error | < 10 µs | Required for accurate time-difference-of-arrival (TDOA) calculations. |
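To put the synchronization requirement in spatial terms, a timing error $\delta t$ between channels maps to a range-difference error of $c\,\delta t$. Taking $c \approx 343$ m/s (an assumed value for air near 20 °C):

$$
\delta d = c\,\delta t \approx 343\ \text{m/s} \times 10\ \mu\text{s} \approx 3.4\ \text{mm}.
$$

The resulting position error depends on array geometry and source location, and is generally larger than this figure.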

Experimental Protocols

Protocol: Field Deployment and Calibration of a Volumetric Array

Objective: To deploy a calibrated 3D microphone array for localizing and tracking multiple vocalizing individuals within a defined habitat.

Materials: See "Research Reagent Solutions" below.

Procedure:

- Site Survey: Map deployment area using GPS. Identify potential sound-reflecting surfaces (large boulders, buildings).

- Array Geometry Deployment: Deploy microphone stands in a pre-defined 3D geometry (e.g., a crossed-baseline or truncated octahedron). Ensure precise measurement of each microphone's 3D coordinates using a laser rangefinder or total station (error < 1 cm).

- Hardware Synchronization: Connect all microphones to a multi-channel recorder with a common master clock. Verify synchronization by recording a shared impulsive sound source (e.g., clapper board) at the array center.

- System Calibration: a. Impulse Response: Emit swept-sine or balloon-pop sounds from at least 5 known locations surrounding the array. b. Directional Calibration: Use a calibrated reference sound source played from a known azimuth and elevation. c. Record all calibration signals simultaneously on all channels.

- Field Recording: Initiate continuous or triggered recording based on predefined schedules or detection thresholds.

- Validation: Periodically during recording, emit validation pulses from a different known location than used in step 4.

Protocol: Acoustic Localization Using Time-Difference-of-Arrival (TDOA)

Objective: To compute the 3D spatial coordinates of a vocalizing animal from array recordings.

Materials: Recorded multi-channel audio files, array geometry calibration file.

Procedure:

- Signal Pre-processing: Bandpass filter each channel around the target species' vocalization frequency. Apply noise reduction if necessary.

- Onset Detection: For a target vocalization event, identify the time of arrival on a reference channel using an energy-based onset detector (e.g., high-frequency content method).

- Cross-Correlation: Compute the generalized cross-correlation with phase transform (GCC-PHAT) between the reference channel and every other channel to estimate TDOAs.

- Localization Solver: Input the estimated TDOA vector and the known array geometry into a localization algorithm (e.g., stochastic region contraction [SRC], least-squares solver).

- Error Estimation: Calculate the Cramér-Rao Lower Bound (CRLB) for the given array geometry and SNR to provide a theoretical lower bound on localization error.

- Data Output: Record the estimated (X, Y, Z) coordinates, timestamp, and estimated error margins.
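The error-estimation step can be sketched as follows. The sketch assumes independent, equal-variance Gaussian TDOA errors with standard deviation `sigma_tau`, which is a simplification: TDOAs that share a reference channel are correlated, and the variance itself depends on SNR and bandwidth. The function name and array geometry are hypothetical.

```python
import numpy as np

def tdoa_crlb(source, mics, sigma_tau, c=343.0, ref=0):
    """Cramér-Rao lower bound on 3D position error for TDOA localization.

    Returns the bound on the covariance matrix (m^2) and the corresponding
    lower bound on RMS position error (m).
    """
    s = np.asarray(source, dtype=float)
    mics = np.asarray(mics, dtype=float)
    diff = s - mics
    unit = diff / np.linalg.norm(diff, axis=1, keepdims=True)
    others = [i for i in range(len(mics)) if i != ref]
    # Gradient of each range difference (mic i minus reference) w.r.t. the source.
    J = unit[others] - unit[ref]
    sigma_d = c * sigma_tau                  # timing noise expressed in metres
    fisher = J.T @ J / sigma_d ** 2
    cov = np.linalg.inv(fisher)
    return cov, float(np.sqrt(np.trace(cov)))

# Hypothetical non-coplanar array and source position (metres).
mics = np.array([[0.0, 0.0, 0.3], [0.4, 0.0, 0.1], [0.4, 0.4, 0.3],
                 [0.0, 0.4, 0.1], [0.2, 0.2, 0.5]])
src = np.array([0.12, 0.27, 0.2])
cov_1us, rmse_1us = tdoa_crlb(src, mics, sigma_tau=1e-6)
cov_2us, rmse_2us = tdoa_crlb(src, mics, sigma_tau=2e-6)
```

Evaluating the bound over a grid of candidate source positions shows where in the habitat a given array geometry localizes well and where it does not, before any animals are recorded.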

Visualization of Methodologies

Title: Acoustic Localization Experimental Workflow

Title: Array Type Selection Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Acoustic Localization Research

| Item | Function | Example Product/Note |

|---|---|---|

| Multi-channel Synchronized Recorder | Simultaneously records audio from all array microphones with precise timing. | Wildlife Acoustics Song Meter SM4, Sound Devices 888, Zoom F8n with external sync. |

| Calibrated Measurement Microphones | Low-noise, omnidirectional sensors for accurate sound capture. | Microphone Madness MM-OMNI, Earthworks M23, Brüel & Kjær Type 4189. |

| Portable Acoustic Calibrator | Generates a known sound pressure level for system calibration. | CESVA CA-002 Class 1 calibrator (94 dB & 114 dB at 1 kHz). |

| Laser Rangefinder / Total Station | Precisely measures the 3D spatial coordinates of each array element. | Leica DISTO D2, Bosch GLM 400, or higher-precision survey equipment. |

| Windshields & Weatherproofing | Reduces wind noise and protects microphones from elements. | Rycote Cyclone or Blimp systems with furry covers. |

| Synchronization Cables / Interface | Ensures sample-accurate clock sharing across all inputs. | Custom snake cables or dedicated interfaces (e.g., RME HDSPe MADI). |

| Programmable Acoustic Source | Emits controlled sounds for in-field calibration and testing. | Foxpro game calls, custom-built speakers with tone generators. |

| Localization Software Suite | Processes recordings to estimate source locations. | Open-source: MATLAB-based TOADsuite, passiveAcousticLocate in R; Commercial: Raven Pro, Ishmael. |

Within the broader thesis of acoustic localization and tracking of vocalizing animals, this document details the application and protocols for leveraging acoustic data in preclinical research. While visual tracking (e.g., video, depth sensing) quantifies overt movement, acoustic monitoring captures a parallel, rich data stream of vocalizations and non-vocal sounds, offering unique insights into behavioral state, social communication, and neuropsychiatric function that are often imperceptible or ambiguous to visual-only systems.

Comparative Advantages: Acoustic vs. Visual Tracking

Table 1: Comparative Analysis of Tracking Modalities

| Parameter | Pure Visual Tracking | Acoustic Behavioral Tracking | Primary Advantage of Acoustics |

|---|---|---|---|

| Data Type | Kinematics, position, posture. | Vocalizations (ultrasonic, audible), non-vocal sounds (movement, gnawing). | Direct assay of communicative intent and emotional state (e.g., fear, reward). |

| Lighting Dependency | High; requires controlled, consistent illumination. | None; effective in complete darkness. | Enables 24/7 data collection in ethologically relevant dark/active phases. |

| Occlusion Robustness | Low; subject must be in line of sight. | High; sound penetrates nesting material, corners, and multi-homecage setups. | Captures data from hidden, sheltered subjects, reducing experimental stress. |

| Throughput & Scalability | Limited by camera field-of-view and processing load. | High; single microphone arrays can monitor many subjects in a room or rack. | Enables large-scale, longitudinal studies (e.g., drug efficacy over weeks). |

| Biomarkers for Neuro/Psych | Motor activity, social proximity. | USV call profiles (rate, frequency, syntax), breathing patterns, distress sounds. | Direct correlates to specific neural circuits (e.g., dopaminergic reward via 50-kHz USVs). |

| Drug Development Application | Locomotor activity, sedation, ataxia. | Affective state change (anxiolytics, antidepressants), respiratory side effects, social motivation. | Earlier, more specific detection of on-target efficacy and off-target adverse effects. |

Detailed Experimental Protocols

Protocol 3.1: Longitudinal Acoustic Phenotyping in a Rodent Model of Depression

Objective: To assess chronic antidepressant efficacy using acoustic biomarkers alongside visual metrics.

Materials:

- Test subjects (e.g., chronic mild stress model rodents).

- Sound-attenuated behavioral room or homecage environment.

- Ultra-high-frequency microphone array (≥250 kHz sampling rate).

- RGB or IR video camera system.

- Automated drug dosing system (if applicable).

- Acoustic analysis software (e.g., DeepSqueak, MUPET, custom Matlab/Python).

Procedure:

- Habituation & Baseline: House subjects in automated acoustic monitoring homecages for 72 hours pre-intervention. Collect 24-hour baseline acoustic (USV count, spectral features) and visual (activity, wheel running) data.

- Model Induction/Intervention: Apply chronic mild stress protocol for 4 weeks. For treatment groups, administer candidate compound daily via premixed chow or water.

- Continuous Data Acquisition: Synchronize audio (microphone array) and video streams 24/7. Audio is continuously recorded and saved in 5-minute blocks. Video is recorded during dark/active phases.

- Weekly Forced Swim Test (FST) Acoustic Analysis: Preceding the standard visual scoring of immobility in the FST, place subject in a water-filled cylinder equipped with an underwater hydrophone. Record and analyze distress vocalizations in the audible range (spectral power, duration) as a direct vocal correlate of despair-like behavior.

- Sucrose Preference Test (SPT) with Social Vocalization: During the SPT, introduce a conspecific in an adjacent, separated compartment. Analyze approach behavior (visual) concurrent with emission of appetitive 50-kHz USVs (acoustic) toward the social stimulus.

- Data Processing & Analysis:

- Audio: Apply noise filters, then use convolutional neural network (CNN)-based tools (e.g., DeepSqueak) to detect and classify USVs. Extract features: call rate, duration, frequency modulation, peak frequency, bandwidth, and syntactic complexity.

- Video: Use pose estimation software (e.g., DeepLabCut) to track mobility and social proximity.

- Integration: Perform time-synced correlative and multivariate analysis of acoustic and visual data streams.

Protocol 3.2: Respiratory Safety Pharmacology Screen

Objective: To detect drug-induced respiratory depression using acoustic monitoring of breathing sounds.

Materials:

- Plethysmography chamber (for validation).

- High-sensitivity microphone placed near subject's snout.

- Whole-body impedance or visual respiratory monitoring system.

- Data acquisition system with low-noise preamplifier.

Procedure:

- Calibration: Place naïve subject in restraint or natural posture. Simultaneously record breathing via whole-body plethysmography (gold standard for respiratory rate and tidal volume) and acoustic signals from the tracheal/nasal region for 30 minutes.

- Model Development: Use signal processing (band-pass filter 0.1-5 kHz) to isolate breath sounds. Develop an algorithm to correlate acoustic waveform peaks with plethysmography-derived breath timestamps. Validate algorithm accuracy (>95%).

- Drug Testing: Administer test compound (e.g., opioid, sedative) at therapeutic and supra-therapeutic doses.

- Acoustic-Only Monitoring: Record breathing sounds continuously for 6 hours post-dose. Apply the validated algorithm to derive respiratory rate, rhythm irregularity, and detect apneic events (pauses >2 seconds).

- Outcome: Compare acoustic-derived respiratory rate to historical visual/impedance data. Flag compounds causing a >20% reduction in rate or inducing audible wheezing/crackles (spectrogram analysis).
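The rate, apnea, and flagging criteria in this protocol can be expressed as a short sketch. It assumes the validated algorithm has already produced a list of breath timestamps in seconds; the function names and the example timestamps are hypothetical.

```python
def respiratory_summary(breath_times_s, apnea_gap_s=2.0):
    """Return (breaths per minute, list of apneic pauses as (start, end))."""
    if len(breath_times_s) < 2:
        raise ValueError("need at least two breath timestamps")
    pairs = list(zip(breath_times_s, breath_times_s[1:]))
    duration_min = (breath_times_s[-1] - breath_times_s[0]) / 60.0
    rate_bpm = len(pairs) / duration_min
    # An apneic event is any pause between breaths longer than the threshold.
    apneas = [(a, b) for a, b in pairs if b - a > apnea_gap_s]
    return rate_bpm, apneas

def flag_depression(baseline_bpm, post_dose_bpm, max_drop=0.20):
    """True when the rate falls by more than max_drop relative to baseline."""
    return (baseline_bpm - post_dose_bpm) / baseline_bpm > max_drop

# Made-up timestamps with one 2.5 s pause between 1.5 s and 4.0 s.
rate, apneas = respiratory_summary([0.0, 0.5, 1.0, 1.5, 4.0, 4.5])
flag_large_drop = flag_depression(100.0, 75.0)
flag_small_drop = flag_depression(100.0, 85.0)
```

In practice the rate would be computed in sliding windows over the 6-hour recording rather than once, so that transient depression shortly after dosing is not averaged away.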

Visualized Workflows & Pathways

Workflow for Integrated Acoustic-Visual Behavioral Analysis

Neural Pathway Linking Reward to USV Production

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Acoustic Behavioral Tracking

| Item | Function & Rationale |

|---|---|

| Ultra-High-Frequency Microphones (& Preamps) | Capture the full range of rodent ultrasonic vocalizations (USVs, typically 20-120 kHz). Require flat frequency response and high signal-to-noise ratio. |

| Multi-Channel Acoustic Array System | Enables sound source localization and separation of multiple vocalizing animals within a shared space, critical for social interaction studies. |

| Sound-Attenuated Chamber | Provides isolation from environmental noise contamination (e.g., human speech, equipment hum), ensuring clean, analyzable recordings. |

| Acoustic Analysis Software (DeepSqueak) | Open-source MATLAB toolbox using CNNs for robust detection and classification of USVs in complex audio data, superior to amplitude-threshold methods. |

| Synchronization Hardware (e.g., GPIO Box) | Generates simultaneous TTL pulses to audio and video recording systems, ensuring perfect temporal alignment of multi-modal data streams for correlative analysis. |

| Underwater Hydrophone | For protocols assessing distress vocalizations or respiratory sounds in liquid media (e.g., forced swim test, aquatic species). |

| Calibrated Sound Level Meter | Quantifies and monitors ambient noise levels in the experimental facility, a critical variable for acoustic study reproducibility. |

| High-Performance Data Storage Solution | Continuous acoustic recording generates large volumes of data (terabytes per week); robust RAID storage or NAS is essential. |
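The storage estimate can be checked with simple arithmetic. The sketch below assumes uncompressed PCM audio and a hypothetical four-channel array recording continuously at 250 kHz and 16 bits.

```python
def bytes_per_week(fs_hz, bit_depth, n_channels):
    """Uncompressed PCM volume for one week of continuous recording."""
    bytes_per_second = fs_hz * (bit_depth // 8) * n_channels
    return bytes_per_second * 7 * 24 * 3600

# 250,000 samples/s x 2 bytes x 4 channels = 2 MB/s.
weekly_tb = bytes_per_week(250_000, 16, 4) / 1e12   # about 1.21 TB per week
```

Each additional array, or a move to 24-bit samples, scales this figure proportionally, which is why triggered recording or lossless compression is often considered for multi-week studies.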

From Theory to Cage-Side: Implementing Acoustic Tracking in Preclinical Studies

1. Introduction

In acoustic localization research for vocalizing animals, precise hardware integration and synchronization are critical for triangulating animal positions from time-of-arrival differences of vocalizations. This protocol, framed within a thesis on bioacoustic tracking for behavioral and neuropharmacological studies, details the setup for a multi-sensor array.

2. Hardware Integration

The core system integrates acoustic sensors, data loggers, and synchronization modules. A typical array comprises 4-8 microphones in a known geometric configuration.

Table 1: Quantitative Specifications for Core Hardware Components

| Component | Key Parameter | Target Specification | Purpose |

|---|---|---|---|

| MEMS Microphone | Sensitivity | -26 dBFS ± 1 dB | High-fidelity capture of vocalizations (e.g., rodent ultrasonic calls). |

| MEMS Microphone | Bandwidth | 10 Hz - 80 kHz | Encompasses audible and ultrasonic ranges. |

| ADC (Sound Card) | Sampling Rate | 250 kHz (min) | Meets Nyquist criterion for ultrasounds (≥2x max frequency). |

| | Bit Depth | 24-bit | Maximizes dynamic range for faint calls. |

| Master Clock | Timing Accuracy | ±0.5 ppm (parts per million) | Maintains long-term sync stability across units. |

| GPS Module | Pulse Per Second (PPS) Accuracy | ±20 ns RMS | Provides absolute, microsecond-accurate time synchronization in field deployments. |

| Data Logger | Storage Buffer | ≥32 GB | Accommodates continuous high-sample-rate recording. |
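To see how the table's figures interact, the short sketch below estimates the raw data rate and how long a 32 GB buffer lasts. It assumes uncompressed PCM, 24-bit samples packed into 3 bytes, 8 channels (the upper end of the 4-8 range above), and 1 GB = 10^9 bytes; the function name `recording_budget` is illustrative only.

```python
def recording_budget(n_channels, sample_rate_hz, bit_depth, buffer_gb):
    """Return (bytes per second, Nyquist frequency in Hz, seconds until the buffer is full)."""
    bytes_per_sample = bit_depth // 8          # assumes packed samples, no container overhead
    rate = n_channels * sample_rate_hz * bytes_per_sample
    nyquist = sample_rate_hz / 2               # highest frequency representable without aliasing
    seconds = buffer_gb * 1e9 / rate
    return rate, nyquist, seconds


rate, nyquist, seconds = recording_budget(
    n_channels=8, sample_rate_hz=250_000, bit_depth=24, buffer_gb=32
)
print(f"{rate / 1e6:.1f} MB/s, Nyquist {nyquist / 1e3:.0f} kHz, "
      f"buffer lasts {seconds / 60:.0f} min")
# prints: 6.0 MB/s, Nyquist 125 kHz, buffer lasts 89 min
```

Under these assumptions the 125 kHz Nyquist limit sits above the 80 kHz microphone bandwidth, and the minimum buffer holds roughly an hour and a half of continuous 8-channel audio, so longer sessions need larger storage or periodic offloading.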

3. Calibration Protocols

3.1 Acoustic Sensor Calibration

Objective: To determine the precise frequency response and sensitivity of each microphone for amplitude correction and equalization.

Protocol:

- Setup: Place reference microphone (calibrated to NIST standard) and unit-under-test (UUT) equidistantly (e.g., 1 m) from a calibrated sound source (pistonphone or speaker emitting logarithmic chirps 1-100 kHz) in an anechoic chamber.

- Signal Generation & Recording: Emit ten 1-second chirps at 94 dB SPL. Simultaneously record outputs from both microphones via a synchronized ADC.

- Analysis: For each chirp, compute the Transfer Function H(f) = FFT(UUT Output) / FFT(Reference Output). The median H(f) across trials gives that UUT's response relative to the reference. Apply its inverse, 1/H(f), as the calibration filter to all subsequent recordings from that channel.
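The analysis step can be sketched with NumPy as follows. This is a minimal illustration, not the protocol's reference implementation: the function names, the small regularization constants, and the choice to take the median of the real and imaginary parts separately are assumptions, and the correction is applied by dividing a later recording's spectrum by H(f).

```python
import numpy as np


def calibration_response(uut_trials, ref_trials, eps=1e-12):
    """Median transfer function H(f) = FFT(UUT) / FFT(reference) across trials.

    uut_trials, ref_trials: arrays of shape (n_trials, n_samples).
    """
    U = np.fft.rfft(uut_trials, axis=1)
    R = np.fft.rfft(ref_trials, axis=1)
    H = U / (R + eps)                      # eps guards against division by zero
    # Median taken separately on real and imaginary parts (an assumption).
    return np.median(H.real, axis=0) + 1j * np.median(H.imag, axis=0)


def apply_correction(recording, H, floor=1e-3):
    """Equalize a recording (same length as the calibration trials) by dividing by H(f)."""
    X = np.fft.rfft(recording)
    H_safe = np.where(np.abs(H) < floor, floor, H)   # avoid amplifying near-zero bins
    return np.fft.irfft(X / H_safe, n=len(recording))
```

In practice the correction is only trustworthy inside the band the chirp actually excited; bins outside that band are better left uncorrected.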

3.2 Geometric Calibration

Objective: To measure the exact 3D coordinates of each microphone in the array.

Protocol:

- Setup: Deploy the microphone array in the research environment (lab arena or field site).

- Measurement: Using a total station or high-accuracy GPS (RTK-GPS with centimeter accuracy), survey the (x, y, z) coordinates of each microphone's acoustic center. Record these coordinates in a local coordinate system.

- Verification: Emit a brief, impulsive sound (e.g., balloon pop or clapper) from 5-10 known positions within the array's volume. Use time-difference-of-arrival (TDOA) algorithms with the surveyed coordinates; localization error should be < 1% of the array's smallest dimension.
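A minimal sketch of the verification step is given below. It assumes TDOAs are measured relative to microphone 0, a sound speed of 343 m/s (air at roughly 20 °C), and a generic nonlinear least-squares solve via `scipy.optimize.least_squares`; the function name `locate_from_tdoa` is hypothetical.

```python
import numpy as np
from scipy.optimize import least_squares

SPEED_OF_SOUND = 343.0  # m/s, assumed; adjust for temperature and medium


def locate_from_tdoa(mics, tdoas, c=SPEED_OF_SOUND):
    """Estimate a 3D source position from TDOAs relative to microphone 0.

    mics:  (n_mics, 3) surveyed coordinates in metres.
    tdoas: (n_mics - 1,) arrival-time differences t_i - t_0 in seconds.
    """
    mics = np.asarray(mics, dtype=float)
    tdoas = np.asarray(tdoas, dtype=float)

    def residuals(p):
        d = np.linalg.norm(mics - p, axis=1)
        # Work in metres so the solver's default tolerances are meaningful.
        return (d[1:] - d[0]) - c * tdoas

    return least_squares(residuals, mics.mean(axis=0)).x
```

Comparing the returned position with each known emission point gives the localization error, which can then be checked against the 1% criterion above.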

4. Synchronization Protocols

4.1 Wired Master-Slave Synchronization (Lab)

Objective: To synchronize multiple ADC channels to a single master clock with submicrosecond skew.

Protocol:

- Connection: Connect all ADC units (e.g., National Instruments DAQ) to a single PC via a dedicated synchronization bus (e.g., PXI backplane or RTSI bus). Designate one unit as the timing master.

- Configuration: In acquisition software (e.g., LabVIEW or MATLAB DAQ Toolbox), configure all slave devices to derive their sample clocks from the master's clock signal.

- Validation: Input a simultaneous impulsive signal to all channels. Verify the timestamp difference of the impulse peak across all channels is less than one sample period (e.g., < 4 µs for 250 kHz).
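The validation check can be expressed in a few lines. The sketch below assumes the shared impulse has been recorded into one array of shape (channels, samples); the function names are illustrative. Because peak positions are resolved only to whole samples, the test passes only when every channel peaks on the same sample index; measuring sub-sample skew would require interpolation, which is not shown.

```python
import numpy as np


def max_peak_skew(recording, sample_rate_hz):
    """Largest between-channel difference in impulse-peak time, in seconds.

    recording: array of shape (n_channels, n_samples) containing the shared impulse.
    """
    peak_idx = np.argmax(np.abs(recording), axis=1)
    return (peak_idx.max() - peak_idx.min()) / sample_rate_hz


def is_synchronized(recording, sample_rate_hz):
    """True when the peak skew is below one sample period."""
    return max_peak_skew(recording, sample_rate_hz) < 1.0 / sample_rate_hz
```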

4.2 Wireless GPS-Disciplined Synchronization (Field)

Objective: To synchronize autonomous, distributed recording units using GPS Pulse Per Second (PPS).

Protocol:

- Hardware Assembly: Each autonomous recorder consists of a microphone, ADC, single-board computer (e.g., Raspberry Pi), and GPS module with PPS output. Connect the PPS pin to a GPIO pin on the computer.

- Software Daemon: Run a time-synchronization daemon (e.g., `chrony` or `gpsd`) that disciplines the system clock to the PPS signal and NMEA time messages from the GPS.

- Timestamping: The acquisition software timestamps each audio sample buffer using the GPS-disciplined system clock (using `CLOCK_REALTIME`). Log raw audio with UTC timestamps.

- Post-Hoc Alignment: In post-processing, align recordings from all units by matching UTC timestamps. Correct for residual, constant cable delay offsets from calibration.
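The post-hoc alignment step might look like the sketch below. It assumes each unit logs the UTC time of its first sample as an integer number of nanoseconds (chosen here to avoid floating-point rounding on large epoch values), that all units share the same nominal sample rate, and that clock drift within a recording is negligible; `align_by_utc` is a hypothetical helper.

```python
import numpy as np


def align_by_utc(recordings, start_times_ns, sample_rate_hz, cable_delays_ns=None):
    """Trim recordings so sample 0 of every unit corresponds to the same UTC instant.

    recordings:      list of 1-D arrays, one per recording unit.
    start_times_ns:  UTC time of each unit's first sample, integer nanoseconds.
    cable_delays_ns: optional constant per-unit delays measured during calibration.
    """
    if cable_delays_ns is None:
        cable_delays_ns = [0] * len(recordings)
    # Subtract the cable delay so timestamps refer to arrival at the microphone.
    starts = [int(t) - int(d) for t, d in zip(start_times_ns, cable_delays_ns)]
    common = max(starts)                      # latest start defines the shared origin
    offsets = [round((common - s) * sample_rate_hz / 1e9) for s in starts]
    length = min(len(r) - o for r, o in zip(recordings, offsets))
    return np.stack([np.asarray(r)[o:o + length] for r, o in zip(recordings, offsets)])
```

The result is a (units, samples) array that can be passed directly to a TDOA or beamforming stage.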

5. The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials

| Item | Function in Acoustic Localization Research |

|---|---|

| Calibrated Pistonphone (e.g., 94 dB SPL @ 250 Hz) | Provides a known, stable acoustic pressure level for absolute sensitivity calibration of microphones. |

| Ultrasonic Speaker (Flat response 10-120 kHz) | Emits controlled ultrasonic signals for playback experiments, array calibration, and behavioral stimuli. |

| Anechoic Chamber (or lined enclosure) | Provides a reflection-free environment for precise acoustic calibration of sensors. |

| NIST-Traceable Sound Level Meter | Serves as the primary reference standard for in-situ sound pressure level validation. |

| RTK-GPS Base & Rover System | Enables centimeter-accurate surveying of microphone and sound source positions in outdoor field studies. |

6. System Workflow and Signaling Diagram

Diagram 1: GPS-Synchronized Acoustic Localization Workflow

This document details the application notes and protocols for a core signal processing pipeline within a broader thesis focused on acoustic localization and tracking of vocalizing animals. The pipeline transforms raw acoustic data into actionable biological insights, enabling research into species distribution, behavior, and the effects of environmental or pharmacological interventions.

Data Presentation: Quantitative Performance Metrics

Table 1: Comparative Performance of Common Detection & Classification Algorithms (2023-2024 Benchmarks)

| Algorithm / Model Type | Typical Use Case | Avg. Detection F1-Score (SNR > 6dB) | Avg. Classification Accuracy (5 Species) | Computational Cost (Relative Units) | Citation(s) |

|---|---|---|---|---|---|

| Spectral Peak Energy Detection | Initial Call Detection | 0.85 | N/A | 1.0 | (Jan. 2024 Review) |

| Random Forest (on MFCCs) | Call Classification | N/A | 89.2% | 4.5 | (Wildl. Res., 2023) |

| Convolutional Neural Network (CNN) | Image-based Spectrogram Classification | N/A | 94.7% | 8.2 | (Ecol. Inform., 2024) |

| Recurrent Neural Network (RNN/LSTM) | Temporal Sequence Classification | N/A | 91.5% | 9.0 | (J. Acoust. Soc. Am., 2023) |

| YAMNet (Transfer Learning) | General Audio Event Classification | 0.88* | 92.1%* | 7.5 | (Adapted from Bioacoustics studies, 2024) |

| Teager Energy Operator (TEO) | Non-stationary Signal Detection | 0.90 (for impulsive calls) | N/A | 1.8 | (Signal Proc., 2024) |

*Fine-tuned on target species dataset.

Experimental Protocols

Protocol 3.1: End-to-End Pipeline Validation for Field Data

Objective: To validate the entire processing pipeline using a curated dataset of known animal vocalizations mixed with field noise.

Materials: Audio recorder (e.g., Wildlife Acoustics Song Meter), calibrated speaker, reference audio files (e.g., Macaulay Library), computer with Python/MATLAB.

Procedure:

- Data Acquisition & Synthesis:

- In a controlled lab, play reference vocalizations at known amplitudes.

- Simultaneously record ambient noise from target field sites.

- Synthesize test files by mixing clean vocalizations with field noise at defined Signal-to-Noise Ratios (SNRs: -5dB, 0dB, 5dB, 10dB, 15dB).

- Pipeline Execution:

- Pre-processing & Filtering: Apply a bandpass filter (e.g., 500 Hz to 12 kHz for small mammals/birds) to each file. Normalize amplitude.

- Detection: Run the spectral peak detection algorithm (see Protocol 3.2) on all files. Record timestamps of detected events.

- Feature Extraction: For each detected event, extract a 256-point log-mel spectrogram and 13 MFCCs.

- Classification: Input features into a pre-trained Random Forest classifier. Record species label and confidence score.

- Analysis:

- Compare detected event timestamps to ground truth. Calculate Precision, Recall, and F1-Score for detection.

- Compare classifier outputs to ground truth labels. Calculate confusion matrix and overall accuracy per SNR level.
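Two pieces of this protocol lend themselves to a short sketch: mixing a clean call with field noise at a target SNR, and scoring detections against ground truth. The version below is illustrative only. It assumes SNR is defined on mean power over the whole clip, that the noise clip is at least as long as the call, and that detections are matched to ground-truth onsets one-to-one with a greedy rule inside a ±50 ms tolerance (the tolerance value is an assumption, not part of the protocol).

```python
import numpy as np


def mix_at_snr(call, noise, snr_db):
    """Scale `noise` so the call-to-noise power ratio equals snr_db, then add it."""
    noise = noise[:len(call)]
    p_call = np.mean(call ** 2)
    p_noise = np.mean(noise ** 2)
    gain = np.sqrt(p_call / (p_noise * 10 ** (snr_db / 10)))
    return call + gain * noise


def detection_scores(detected, truth, tolerance_s=0.05):
    """Precision, recall and F1 from greedy one-to-one matching of event onsets (s)."""
    unmatched = list(truth)
    tp = 0
    for t in detected:
        hits = [g for g in unmatched if abs(g - t) <= tolerance_s]
        if hits:
            unmatched.remove(min(hits, key=lambda g: abs(g - t)))
            tp += 1
    precision = tp / len(detected) if len(detected) else 0.0
    recall = tp / len(truth) if len(truth) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1
```

Running `mix_at_snr` once per SNR level in the list above yields the synthetic test set; `detection_scores` is then evaluated per level.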

Protocol 3.2: Optimized Spectral Peak Detection for Low-SNR Calls

Objective: To establish a robust protocol for detecting faint vocalizations in noisy recordings.

Methodology:

- Spectral Transformation:

- Load a 30-second audio clip (`.wav`, 44.1 kHz).

- Compute the Short-Time Fourier Transform (STFT) using a 1024-point Hann window with 75% overlap.

- Convert the magnitude spectrogram to decibel scale.

- Noise Floor Estimation:

- For each frequency bin, compute the median energy level over a sliding 2-second window. This defines the dynamic noise floor.

- Peak Identification:

- Identify local energy peaks in the spectrogram that exceed the noise floor by a threshold θ (start with θ = 8 dB).

- Apply a minimum duration constraint (e.g., 10 ms) to reject transient spikes.

- Event Clustering:

- Group peaks that are contiguous in time and frequency (within a 100 ms and 500 Hz tolerance) into a single detection event.

- Output the start time, end time, and dominant frequency of each event.

Visualizations

Title: Acoustic Signal Processing Pipeline Diagram

Title: Detection & Classification Protocol Steps

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Computational Tools for Pipeline Implementation

| Item / Solution | Category | Function / Purpose |

|---|---|---|

| Wildlife Acoustics Song Meter | Hardware | Rugged, weatherproof audio recorder for autonomous long-term field deployment. |

| Audacity / Kaleidoscope | Software | For manual annotation of vocalizations, creating ground truth datasets for training. |

| LibROSA (Python library) | Software | Core library for audio analysis, providing functions for STFT, filtering, MFCC extraction. |

| scikit-learn | Software | Machine learning library for implementing and training classifiers like Random Forest, SVMs. |

| TensorFlow / PyTorch | Software | Deep learning frameworks for building and training custom CNN/RNN models. |

| BioAcoustica or Animal Sound Archive | Data | Online repositories for reference vocalizations used for model training and validation. |

| Pre-trained YAMNet or VGGish | Model | Base models for transfer learning, significantly reducing required training data and time. |

| GPU (e.g., NVIDIA RTX 4090) | Hardware | Accelerates model training and the processing of large acoustic datasets. |

| Custom Python Scripts for SNR Synthesis | Software | To generate controlled test datasets by mixing clean calls with noise at specific SNRs. |

This document details the practical implementation of three acoustic localization algorithms within a research thesis focused on tracking vocalizing animals in natural habitats. Accurate localization is critical for estimating animal density, monitoring behavior, and studying species interactions. The methodologies outlined below enable researchers to process field-recorded audio to estimate the spatial coordinates of sound-emitting subjects.

Core Algorithm Principles

- Time Difference of Arrival (TDOA): Computes the relative delay of a sound wave arriving at pairs of spatially separated microphones. Source location is estimated by solving hyperbolic equations from multiple TDOA measurements.

- Steered Response Power - Phase Transform (SRP-PHAT): A robust, generalized cross-correlation method. It "steers" a beamformer across a grid of potential source locations and calculates the summed power, with the PHAT weighting providing phase-only, whitened cross-spectra for improved performance in reverberant environments.

- Machine Learning (ML) Models (e.g., CNNs, RNNs): Data-driven approaches that learn a direct mapping from multichannel audio features (spectrograms, GCC-PHAT matrices) to source coordinates or direction-of-arrival. They can model complex, non-linear acoustic phenomena.
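As a concrete illustration of the pairwise delay estimate that both TDOA and SRP-PHAT build on, the sketch below implements GCC-PHAT with NumPy. The function name, the zero-padding length, and the small constant in the whitening step are choices made for this example.

```python
import numpy as np


def gcc_phat(sig, ref, fs, max_tau=None):
    """Delay of `sig` relative to `ref` in seconds (positive: sig arrives later)."""
    n = len(sig) + len(ref)                      # zero-pad to avoid circular wrap-around
    SIG = np.fft.rfft(sig, n=n)
    REF = np.fft.rfft(ref, n=n)
    cross = SIG * np.conj(REF)
    cross /= np.abs(cross) + 1e-15               # PHAT weighting: keep phase, discard magnitude
    cc = np.fft.irfft(cross, n=n)

    max_shift = n // 2
    if max_tau is not None:
        max_shift = max(1, min(int(fs * max_tau), max_shift))
    # Reorder so that index 0 corresponds to lag -max_shift.
    cc = np.concatenate((cc[-max_shift:], cc[:max_shift + 1]))
    shift = np.argmax(np.abs(cc)) - max_shift
    return shift / fs
```

Setting `max_tau` to the largest physically possible delay (microphone spacing divided by the speed of sound) restricts the search and rejects spurious peaks outside that range.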

Quantitative Performance Comparison

Table 1: Comparative Performance of Localization Algorithms in Controlled Acoustic Tests

| Algorithm | Avg. Error (m) | Robustness to Noise | Comp. Cost (Rel.) | Reverb. Tolerance | Best Use Case |

|---|---|---|---|---|---|

| TDOA (GCC-PHAT) | 0.15 - 0.40 | Moderate | Low | Low | Open-field, high SNR, few sources |

| SRP-PHAT | 0.10 - 0.30 | High | Very High | High | Cluttered environments, moderate reverb |

| ML Model (CNN) | 0.05 - 0.20* | Very High | Medium (Inference) | Customizable | Known environments, large training datasets |

*Performance dependent on training data quality and representativeness.

Experimental Protocols

Protocol A: Field Calibration & Data Acquisition for Animal Vocalization

Objective: To capture clean, synchronized multi-channel audio of target species with known array geometry for algorithm training and testing.

- Array Deployment: Deploy a calibrated microphone array (e.g., 4-8 element circular or random geometry). Precisely measure and record the 3D coordinates of each microphone using a laser rangefinder or GPS.

- System Synchronization: Use a hardware synchronizer (e.g., an audio interface with multiple inputs, or separate recorders synchronized via GPS pulses) to ensure sample-accurate alignment across all channels.

- Calibration Emission: Prior to animal recording, emit calibration signals (sine sweeps or impulsive sounds) from known positions within the study area. Record these to characterize channel-specific delays and system response.

- Target Recording: Record continuous audio during peak vocalization activity. Simultaneously, an observer logs visual confirmations of animal positions (using GPS/theodolite) to create ground-truth labels.

- Data Segmentation: Isolate individual vocalization events (calls, songs) from the continuous recording using an energy-based voice activity detector (VAD) or manual annotation.

Protocol B: Benchmarking Localization Algorithms

Objective: To quantitatively evaluate the accuracy of TDOA, SRP-PHAT, and ML models against ground-truth data.

- Dataset Preparation: From Protocol A, create a dataset of N vocalization events. For each event, extract a multichannel audio clip and associate it with the ground-truth (x, y) coordinates. Split data into Training (60%), Validation (20%), and Test (20%) sets.

- TDOA Implementation:

- For each audio clip, compute the Generalized Cross-Correlation with Phase Transform (GCC-PHAT) for all unique microphone pairs.

- Identify time-delay estimates (TDOAs) by finding the peak of each GCC-PHAT function.

- Solve for source location using a least-squares hyperbolic solver (e.g., Chan's method).

- SRP-PHAT Implementation:

- Define a 2D search grid covering the recording area.

- For each grid point, compute theoretical time delays for all microphone pairs.

- Sum the GCC-PHAT values at the corresponding delays for all pairs. This sum is the SRP at that grid point.

- The location estimate is the grid point maximizing the SRP.

- ML Model Training (CNN Example):

- Input Feature: Compute the GCC-PHAT matrix for all mic pairs for each clip, creating a 2D input "image".

- Model: Design a Convolutional Neural Network (CNN) with convolutional layers to process the GCC matrix, followed by fully connected layers.

- Output: Model outputs estimated (x, y) coordinates (regression task).

- Training: Train the model using the Training set, minimizing Mean Squared Error (MSE) loss between predictions and ground truth. Use the Validation set for early stopping.

- Evaluation: Apply all three methods (TDOA, SRP-PHAT, and the trained ML model) to the held-out Test set. Calculate the Mean Euclidean Error (in meters) and Standard Deviation for each method.
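The SRP-PHAT grid search described in this protocol can be sketched as follows. The code is a simplified illustration: it works in 2D, assumes a sound speed of 343 m/s, rounds expected delays to whole samples, and sums the correlation over a ±1 sample window around each expected lag to tolerate that rounding (the window is an assumption added here, not part of the protocol text). Function names are hypothetical.

```python
import itertools
import numpy as np


def gcc_phat_cc(a, b, n):
    """PHAT-weighted circular cross-correlation; index k corresponds to lag k (mod n)."""
    cross = np.fft.rfft(a, n=n) * np.conj(np.fft.rfft(b, n=n))
    cross /= np.abs(cross) + 1e-15
    return np.fft.irfft(cross, n=n)


def srp_phat(signals, mics, grid, fs, c=343.0):
    """Return (best grid point, power map) for the steered response power search.

    signals: (n_mics, n_samples); mics: (n_mics, 2); grid: (n_points, 2), metres.
    """
    n = 2 * signals.shape[1]                      # zero-padding avoids wrap-around
    dists = np.linalg.norm(grid[:, None, :] - mics[None, :, :], axis=2)
    power = np.zeros(len(grid))
    for i, j in itertools.combinations(range(len(mics)), 2):
        cc = gcc_phat_cc(signals[i], signals[j], n)
        # Expected delay of mic i relative to mic j for every grid point, in samples.
        lag = np.rint((dists[:, i] - dists[:, j]) / c * fs).astype(int)
        for offset in (-1, 0, 1):                 # small window to absorb rounding
            power += cc[(lag + offset) % n]
    return grid[np.argmax(power)], power
```

The Mean Euclidean Error for the evaluation step is then the average of `np.linalg.norm(estimate - ground_truth)` over the test events. Grid spacing bounds the achievable accuracy, so a coarse search followed by a finer local grid is a common refinement.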

Visual Workflows

Algorithm Implementation & Evaluation Workflow

Feature Processing Paths for Three Algorithms

The Scientist's Toolkit

Table 2: Essential Research Reagents and Materials for Acoustic Localization Studies

| Item | Function/Description | Key Consideration |

|---|---|---|

| Synchronized Microphone Array | Multi-channel audio capture. Can be omnidirectional electret or measurement mics. | Synchronization is critical. Use hardware sync or post-hoc software alignment with clapperboard. |

| Field Calibrator (Sound Level Meter) | Provides reference SPL for calibrating recording gain and verifying microphone sensitivity. | Necessary for converting recordings to absolute pressure, enabling cross-study comparisons. |

| Audio Recording Interface | High-quality ADC with multiple inputs (e.g., 8ch) for simultaneous capture. | Sample rate (≥48 kHz) and bit-depth (24-bit) must be sufficient for target species' vocalizations. |

| GPS & Laser Rangefinder | Precisely documents the 3D coordinates of each microphone and any ground-truth source locations. | Millimeter accuracy for array geometry is ideal; centimeter accuracy is often acceptable in the field. |

| Acoustic Calibration Source | Portable device (e.g., pistonphone) that emits a sound wave with known frequency and pressure level. | Used for in-field system calibration before/after deployment to account for environmental changes. |

| Software Libraries (Python): Librosa, SciPy, Pyroomacoustics, TensorFlow/PyTorch | Provide core signal processing (GCC-PHAT), simulation environments, and ML framework implementation. | Open-source ecosystem allows for reproducible and modifiable algorithm implementation. |

| Annotation Software (e.g., Raven Pro, Audacity) | For manual segmentation and labeling of vocalizations, creating the essential ground-truth dataset. | Critical for supervised ML model training and final performance evaluation. |

Application Notes

The precise acoustic localization and tracking of vocalizing animals is a transformative methodology for behavioral neuroscience and psychopharmacology. It moves beyond simple detection to provide a quantitative, spatiotemporal map of vocal communication and its modulation by disease states or therapeutic interventions. Integrated with video tracking, it disambiguates the emitter of each call within a social group, linking specific acoustic signatures to individual behaviors, social hierarchy, and emotional states.

Quantifying Social Interaction: Acoustic localization allows researchers to determine which animal in a dyad or group is vocalizing during specific social behaviors (e.g., sniffing, chasing, mounting). This is critical for assays like the three-chamber social test, resident-intruder test, or home-cage monitoring. It enables the quantification of initiator/responder dynamics and the correlation of call rate and type with proximity and interaction quality.

Ultrasonic Vocalizations (USVs) in Rodents: Rodent USVs serve as biomarkers for affective state and social motivation. Call classification separates prosocial 50-kHz "trill" and "step" calls from aversive 22-kHz long "alarm" calls, and localization assigns each call to the correct emitter. In drug development, this is used to assess the efficacy of antidepressants (reducing 22-kHz calls in stress models) or prosocial compounds (enhancing 50-kHz call rates during social play).

Stress Vocalizations in NHPs: Non-human primates (NHPs) produce a rich repertoire of stress-related vocalizations (e.g., coos, screams, threat barks). Acoustic localization in large enclosures or during fMRI scans pinpoints the source of calls, linking them to individual stress levels, social conflict, and physiological markers (cortisol). This is vital for studying anxiolytics, models of chronic stress, and social anxiety.

Key Quantitative Metrics: The following table summarizes core data outputs from acoustic localization studies.

Table 1: Core Quantitative Metrics from Acoustic Localization & Tracking

| Metric Category | Specific Metric | Typical Values (Rodent USVs) | Typical Values (NHP Stress Calls) | Biological Interpretation |

|---|---|---|---|---|

| Spatiotemporal | Calls per minute (Rate) | 5-100 calls/min (50-kHz) | 0.5-20 calls/min (context dependent) | Overall arousal & motivational state |

| | Call duration (ms) | 10-200 ms (50-kHz); 300-3000 ms (22-kHz) | 50-1500 ms | Emotional valence & urgency |

| | Inter-call interval (ms) | 50-500 ms | 200-5000 ms | Temporal patterning of communication |

| Spectral | Peak frequency (kHz) | 30-90 kHz (50-kHz band); 18-28 kHz (22-kHz) | 0.5-10 kHz (species/model dependent) | Call type classification & emotional content |

| | Frequency modulation (kHz/ms) | ±1 to ±20 kHz/ms | ±0.1 to ±2 kHz/ms | Complexity & affective intensity |

| Social & Contextual | Emitter identity (from localization) | Animal A vs. B in dyad | Individual ID in group | Social role (initiator, responder, victim) |

| | Distance between emitter & receiver (cm) | 0-50 cm | 0-500 cm | Proximity-based communication efficacy |

| | Vocalization-behavior latency (s) | <1 s from sniff initiation | <2 s from threat gesture | Causal linkage between action and vocal output |
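The spatiotemporal metrics in the table follow directly from detected call onsets and offsets. The sketch below shows one way to compute them; it assumes inter-call interval means the gap from one call's offset to the next call's onset (onset-to-onset is an equally common convention), and the function name is illustrative.

```python
import numpy as np


def call_metrics(onsets_s, offsets_s, session_s):
    """Call rate (calls/min), call durations (ms) and inter-call intervals (ms)."""
    onsets = np.asarray(onsets_s, dtype=float)
    offsets = np.asarray(offsets_s, dtype=float)
    rate_per_min = len(onsets) / (session_s / 60.0)
    durations_ms = (offsets - onsets) * 1000.0
    # Assumed definition: silence between the end of one call and the start of the next.
    intervals_ms = (onsets[1:] - offsets[:-1]) * 1000.0
    return rate_per_min, durations_ms, intervals_ms
```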

Detailed Experimental Protocols

Protocol 1: Localized USV Recording During Rat Social Interaction Test

Objective: To quantify prosocial 50-kHz USVs from individually identified rats during a dyadic play session.

Materials & Setup:

- A sound-attenuated test chamber (e.g., 80 x 80 x 60 cm).

- A calibrated microphone array (e.g., 4-6 ultrasonic microphones sampled at ≥250 kHz, so that calls up to 90 kHz stay below the Nyquist frequency) positioned symmetrically above the chamber.

- Synchronized high-speed video camera (≥ 60 fps).

- Acoustic localization software (e.g., DeepSqueak, Avisoft, or custom TDOA-based solutions).

- Two age- and weight-matched male juvenile or adult rats.

Procedure:

- Habituation: Individually habituate each rat to the test chamber for 10 min/day for 2 days.

- Hardware Calibration: Record a 1-minute calibration tone (e.g., a pulsed 50 kHz sine wave) emitted from 5 known locations in the chamber. Use this to validate the software's localization accuracy (< 2 cm error).

- Experimental Recording: Place both rats in the chamber simultaneously. Record synchronized audio (from all mics) and video for 10 minutes.

- Acoustic Processing: a. Band-pass filter raw audio (30-90 kHz for 50-kHz calls; 18-28 kHz for 22-kHz calls). b. Use localization software to detect USVs and compute the source location for each call via Time Difference of Arrival (TDOA) algorithms.

- Data Integration & Analysis: a. Sync video and acoustic data timestamps. b. Assign each localized call to the rat whose video-tracked centroid is closest to the sound source location at that moment. c. For each animal, calculate: total 50-kHz call count, call rate (calls/min), average peak frequency, and proportion of calls emitted while within 10 cm of the conspecific.
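The call-assignment rule in the data integration step (nearest video-tracked centroid at the time of the call) can be sketched as below. Array shapes, the function name, and the optional `max_dist_cm` rejection threshold are assumptions for illustration; the protocol itself does not specify how to handle calls that are far from both animals or animals that are nearly touching.

```python
import numpy as np


def assign_calls(call_times, call_xy, track_times, tracks, max_dist_cm=None):
    """Assign each localized call to the animal with the nearest tracked centroid.

    call_times:  (n_calls,) seconds;  call_xy: (n_calls, 2) in cm.
    track_times: (n_frames,) seconds, sorted;  tracks: (n_animals, n_frames, 2) in cm.
    Returns animal indices, with -1 where the nearest animal is beyond max_dist_cm.
    """
    call_times = np.asarray(call_times, dtype=float)
    track_times = np.asarray(track_times, dtype=float)
    # Pick the video frame closest in time to each call.
    frame = np.clip(np.searchsorted(track_times, call_times), 1, len(track_times) - 1)
    left_closer = (call_times - track_times[frame - 1]) < (track_times[frame] - call_times)
    frame = np.where(left_closer, frame - 1, frame)

    positions = np.asarray(tracks)[:, frame, :]               # (n_animals, n_calls, 2)
    dist = np.linalg.norm(positions - np.asarray(call_xy)[None, :, :], axis=2)
    owner = np.argmin(dist, axis=0)
    if max_dist_cm is not None:
        owner = np.where(dist.min(axis=0) <= max_dist_cm, owner, -1)
    return owner
```

Per-animal call counts then follow from `np.bincount(owner[owner >= 0], minlength=n_animals)`, and dividing by the session length in minutes gives call rate. When the two animals are closer together than the localization error, the assignment is ambiguous and such calls may be better flagged than forced onto one animal.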

Protocol 2: NHP Stress Vocalization Localization During Human Intruder Paradigm

Objective: To localize and quantify stress vocalizations from individual cynomolgus macaques in a social group during a controlled stressor.

Materials & Setup:

- Large primate housing room or enclosure with known geometry.

- Wireless, synchronized microphone array (8+ omnidirectional mics) mounted on walls/ceiling.

- Overhead video recording system.

- Dedicated acoustic localization server running a beamforming or TDOA pipeline.

- Known "intruder" (unfamiliar human).

Procedure:

- Baseline Recording: Over 3 consecutive days, record 30 minutes of baseline audio/video from the stable social group during a quiet morning period.

- Stress Induction: On test day, introduce an unfamiliar human ("intruder") to the front of the enclosure, maintaining a neutral posture and avoiding direct eye contact for a 10-minute period. Record throughout.

- Acoustic Analysis: a. Downsample audio to 48 kHz. Apply noise reduction filters to remove ambient fan/ventilation noise. b. Manually annotate or use a trained classifier to detect stress vocalization types (coos, screams, barks) in the recordings. c. For each detected vocalization, run the localization algorithm to generate 3D coordinates (X, Y, Z) of its source within the enclosure.

- Behavioral Correlation: a. From video, track the location of each animal during the vocalization period. b. Assign each call to the animal whose tracked position best matches the localized acoustic source. c. Calculate for each animal: stress call rate (pre- vs. post-intruder), dominant call type, and average distance from the intruder when calling.

Visualizations

Title: Acoustic Localization Workflow for Vocalizing Animals

Title: Vocalization as a Bioassay for Emotional State

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Acoustic Localization Studies

| Item | Function & Explanation |

|---|---|

| Ultrasonic Microphone Array | A set of ≥4 calibrated microphones with flat frequency response up to 150 kHz. Enables computation of sound source location via TDOA algorithms. |

| Synchronized DAQ System | A multi-channel data acquisition system with hardware-level synchronization across all audio inputs and video triggers. Ensures temporal alignment critical for localization. |

| Acoustic Calibration Source | A precise ultrasonic speaker emitting tones of known frequency and amplitude. Used to map microphone array geometry and validate localization accuracy. |

| Sound-Attenuating Chamber | A chamber lined with acoustic foam to dampen echoes and external noise. Improves signal-to-noise ratio and localization precision. |

| Localization Software Suite | Software (e.g., custom MATLAB/Python with TDOA, or commercial like Avisoft-RECORDER with tracking module) to compute 3D coordinates from multi-mic recordings. |

| Automated Vocalization Classifier | A machine learning tool (e.g., DeepSqueak, VocalMat) to detect and categorize call types from audio segments, integrating with localization data. |

| Synchronized IR Video System | High-frame-rate infrared cameras for continuous behavioral tracking in darkness (rodents are nocturnal). Provides visual data to assign calls to individuals. |

| Wireless Telemetry System (NHP) | For large enclosures, wireless microphone nodes or wearable audio recorders synchronized to a central clock, allowing animal movement without cable constraints. |

Solving Real-World Challenges: Noise, Reflections, and Data Integrity

Within acoustic localization and tracking research of vocalizing animals, such as birds, amphibians, and terrestrial mammals, ambient noise presents a primary challenge. It degrades signal-to-noise ratio (SNR), obscures target vocalizations, and introduces errors in source localization algorithms. Effective noise mitigation employs a two-pronged strategy: physical/spectral acoustic isolation to prevent noise intrusion, and adaptive digital filtering to separate signal from noise post-capture. These protocols are critical for generating reliable data for behavioral studies, population density estimates, and monitoring ecosystem health—research with implications for neuroethology and the development of bio-inspired acoustic models.

Core Strategies: Isolation & Filtering

Acoustic Isolation Strategies

The first line of defense is to minimize noise at the sensor (microphone) level.

Table 1: Acoustic Isolation Methodologies and Performance Metrics

| Method | Primary Function | Typical Noise Attenuation (dB) | Best For | Key Limitation |

|---|---|---|---|---|

| Physical Windscreens (Foam, Fur) | Reduces wind-induced turbulence noise | 10-25 dB (above 200 Hz) | Field recordings in open terrain | Minimal effect on constant background noise |

| Parabolic Reflectors | Focuses acoustic energy from a direction | Increases SNR by 15-20 dB | Isolating calls from distant point sources | Narrow acceptance angle; frequency response bias |

| Isolation Enclosures (Acoustic boxes) | Shields microphone from ambient noise | 20-40 dB broadband | Fixed monitoring stations, lab playback studies | Immobile; can affect low-frequency response |

| Spectral Avoidance | Scheduling recording during low-noise periods | N/A (operational strategy) | Diurnal/seasonal noise patterns (e.g., avoiding insect chorus) | Limited by animal vocalization activity |

| Sensor Placement Optimization | Leveraging terrain & vegetation | Varies significantly (5-15 dB) | Large-scale array deployments | Requires detailed site survey |

Protocol 2.1.1: Field Deployment of Acoustic Sensors with Integrated Isolation

Objective: Deploy an autonomous recording unit (ARU) to maximize capture of target species (e.g., songbirds) while minimizing wind and broadband ambient noise.

Materials: ARU (e.g., Wildlife Acoustics Song Meter), synthetic fur windscreen, 10 m PVC mast, shock cord, GPS unit.

Procedure:

- Site Survey: Use pre-deployment recordings (24 hrs) and spectral analysis to identify noise baselines and target vocalization bands.

- Windscreen Integration: Securely fit the synthetic fur windscreen over the ARU's microphones, ensuring no contact with the membrane.

- Elevated Placement: Mount the ARU on a PVC mast at 3-5m height, stabilized with guylines. Position away from direct foliage contact to reduce vegetation noise.

- Orientation: If using a directional microphone/reflector, orient primary axis toward expected animal locations (e.g., forest edge).

- Verification: Conduct a 1-hour verification recording. Analyze spectrogram for persistent wind noise bands (typically <500 Hz) and adjust windscreen or placement if needed.

Adaptive Filtering Algorithms

When isolation is insufficient, digital signal processing (DSP) techniques are applied to recorded audio.

Table 2: Adaptive Filtering Algorithms for Bioacoustics

| Algorithm | Core Principle | Optimal Use Case | Typical SNR Improvement | Computational Cost |

|---|---|---|---|---|

| Spectral Subtraction | Estimates & subtracts noise spectrum from noisy signal | Stationary or slowly varying noise (e.g., distant traffic) | 5-15 dB | Low |

| Adaptive Noise Cancellation (ANC) | Uses reference noise to adaptively subtract from primary signal | Correlated noise captured by a reference mic (e.g., wind on array) | 10-25 dB | Medium-High |

| Wiener Filtering | Statistical estimation of desired signal in frequency domain | Noises with known spectral characteristics | 8-18 dB | Medium |

| Notch Filtering | Attenuates energy at specific frequencies | Narrowband interference (e.g., 60 Hz electrical hum, insect drones) | N/A (targeted removal) | Low |

| Deep Learning U-Net | Trained model separates signal/noise spectrograms | Complex, non-stationary noise overlapping in time-frequency | 15-30 dB (with good training data) | Very High (GPU needed) |
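As an example of the simplest entry in the table, the sketch below implements basic magnitude spectral subtraction with SciPy's STFT. It assumes a separate noise-only segment is available for estimating the noise spectrum, uses the mean noise magnitude per frequency bin, and keeps a small spectral floor to limit "musical noise" artifacts; the function name and parameter defaults are illustrative.

```python
import numpy as np
from scipy.signal import stft, istft


def spectral_subtraction(noisy, noise_only, fs, nperseg=512, floor=0.05):
    """Subtract an average noise magnitude spectrum from each STFT frame of `noisy`."""
    _, _, N = stft(noise_only, fs=fs, nperseg=nperseg)
    noise_mag = np.abs(N).mean(axis=1, keepdims=True)      # one estimate per frequency bin

    _, _, X = stft(noisy, fs=fs, nperseg=nperseg)
    mag = np.abs(X)
    cleaned = np.maximum(mag - noise_mag, floor * mag)     # never go below the spectral floor
    Y = cleaned * np.exp(1j * np.angle(X))                 # reuse the noisy phase

    _, y = istft(Y, fs=fs, nperseg=nperseg)
    return y[:len(noisy)]
```

Because the noisy phase is reused, this approach alters inter-channel timing very little, but it should still be applied identically to every channel of an array before any TDOA estimation.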

Protocol 2.2.1: Real-Time Adaptive Noise Cancellation for Mobile Recording Arrays

Objective: Implement a real-time ANC to enhance vocalizations from a moving animal (e.g., marine mammal) using a two-element towed hydrophone array.

Materials: Two calibrated hydrophones, preamplifiers, real-time DSP platform (e.g., National Instruments PXIe), software (MATLAB Simulink or Python sounddevice).

Procedure:

- Hardware Setup: Designate Hydrophone 1 as primary (target + noise) and Hydrophone 2 as reference (predominantly noise, acoustically isolated from target). Ensure precise synchronization.

- Filter Initialization: Implement a normalized least-mean-squares (NLMS) adaptive filter on the DSP platform. Set filter length (e.g., 256 taps) and step size parameter (μ) for stability.

- Calibration: In a controlled noisy environment without target signal, adjust the relative gain between channels to maximize noise correlation.

- Real-Time Processing: The adaptive filter continuously models the noise path from the reference to the primary channel and subtracts its output from the primary signal.

- Output Monitoring: Stream the cleaned audio output and log the filter coefficients. Periodically reset the filter if the noise environment changes abruptly.
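The NLMS update at the heart of this protocol can be sketched offline in NumPy as follows. This is a sample-by-sample reference loop for understanding and testing, not a real-time implementation; the function name, the default step size μ = 0.1, and the regularization constant are assumptions.

```python
import numpy as np


def nlms_cancel(primary, reference, n_taps=256, mu=0.1, eps=1e-8):
    """Remove reference-correlated noise from the primary channel with an NLMS filter.

    Returns (cleaned signal, final filter weights). NLMS is stable for 0 < mu < 2.
    """
    w = np.zeros(n_taps)                 # adaptive estimate of the noise path
    buf = np.zeros(n_taps)               # most recent reference samples, newest first
    out = np.zeros(len(primary))
    for n in range(len(primary)):
        buf = np.roll(buf, 1)
        buf[0] = reference[n]
        noise_est = w @ buf              # predicted noise in the primary channel
        e = primary[n] - noise_est       # error = cleaned output sample
        w += mu * e * buf / (buf @ buf + eps)   # normalized LMS weight update
        out[n] = e
    return out, w
```

The error signal doubles as the cleaned output, which is why the reference hydrophone must contain as little of the target vocalization as possible: any target energy in the reference is partially cancelled along with the noise.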

Application in Acoustic Localization Workflows

Noise reduction directly impacts the accuracy of Time-Difference-of-Arrival (TDOA) and beamforming localization methods.

(Title: Bioacoustic Localization Noise Mitigation Workflow)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Digital Tools for Noise Combat

| Item / Solution | Function | Example Product / Software |

|---|---|---|

| Synthetic Fur Windscreens | Attenuates wind noise without heavy rain shielding. | Rycote Super Softie |

| Acoustic Calibrators | Provides reference SPL for quantifying noise floor and signal level. | GRAS 42AA Pistonphone |

| Programmable ARUs | Enables spectral avoidance scheduling and high-SNR recording. | Wildlife Acoustics Song Meter Mini Bat |

| Biologically-Informed Bandpass Filters | Digitally isolates species-specific frequency band, removing out-of-band noise. | Custom script in R (seewave package) or Python (scipy.signal) |

| Adaptive Filter Toolbox | Implements NLMS, RLS algorithms for real-time or post-hoc ANC. | MathWorks DSP System Toolbox for MATLAB |

| Deep Learning Framework for Audio | Trains and deploys models for spectrogram denoising. | Python with PyTorch or TensorFlow, using librosa |

| Reference Microphones | Provides dedicated noise channel for multi-channel adaptive filtering. | Microtech Gefell MMM with low-self-noise preamp |

| Synchronization Hardware | Ensures sample-accurate alignment for array-based noise cancellation. | LabJack T7 with clock synchronization |
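
As an illustration of the biologically-informed bandpass filter listed in the table, the following is a minimal sketch using `scipy.signal`. The 30-90 kHz band, the 250 kHz sampling rate, the filter order, and the helper name `species_bandpass` are assumptions for a hypothetical rodent USV recording, not recommended settings.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def species_bandpass(x, fs, f_lo, f_hi, order=4):
    """Zero-phase Butterworth band-pass between f_lo and f_hi (Hz)."""
    sos = butter(order, [f_lo, f_hi], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, x)

# Synthetic example: an in-band 60 kHz tone plus strong 5 kHz out-of-band noise.
fs = 250_000
t = np.arange(int(0.02 * fs)) / fs
call = np.sin(2 * np.pi * 60_000 * t)
hum = 2.0 * np.sin(2 * np.pi * 5_000 * t)
filtered = species_bandpass(call + hum, fs, 30_000, 90_000)

# Ignore the edges, where filter start-up transients can remain.
core = slice(500, -500)
rms_error = np.sqrt(np.mean((filtered[core] - call[core]) ** 2))
print(f"RMS difference from the clean tone: {rms_error:.4f}")
```

Forward-backward filtering (`sosfiltfilt`) is used here so that the filter adds no phase delay; for array work the same filter would normally be applied identically to every channel so that inter-channel timing is preserved.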

(Title: Adaptive Noise Cancellation (ANC) Signal Pathway)

Mitigating Reverberation and Multipath Effects in Laboratory Environments

Within the broader thesis on acoustic localization and tracking of vocalizing animals for behavioral pharmacology and neuroethology research, controlling the acoustic environment is paramount. Reverberation (persistence of sound after the source stops) and multipath propagation (sound arriving via direct and reflected paths) introduce significant errors in Time-Difference-of-Arrival (TDOA) and Direction-of-Arrival (DOA) calculations. These errors corrupt source localization data, confounding studies on animal communication, drug-induced vocalization changes, and social interaction tracking. This document provides application notes and protocols for mitigating these effects in controlled laboratory settings.

Table 1: Impact of Reverberation on Localization Error in a Model Animal Lab

| Reverberation Time (T60, in ms) | Estimated RMS Localization Error (cm) | Typical Laboratory Condition |

|---|---|---|

| 50 | 1-2 | Anechoic chamber (ideal) |

| 200 | 5-10 | Heavily acoustically treated room |

| 500 | 15-30 | Standard animal housing room with some treatment |

| 1000 | 30-60 | Empty standard lab room (hard surfaces) |

| >1500 | >75 | Highly reflective tiled/wet lab |

Table 2: Comparative Performance of Common Acoustic Treatment Materials

| Material Type | Average Absorption Coefficient (α) at 1 kHz | Optimal Frequency Range | Key Application |

|---|---|---|---|

| Melamine Foam (e.g., Basotect) | 0.95 | Mid-High (500 Hz - 5 kHz) | Broadband wall/ceiling treatment |

| Fiberglass Panel (rigid) | 0.85 | Mid-High (250 Hz - 4 kHz) | Baffles and gobos |

| Polyester Fiber Panel | 0.80 | Mid-High (500 Hz - 4 kHz) | Safe, non-irritant alternative |

| Acoustic Curtain (Heavy) | 0.60 | Mid-High (500 Hz - 2 kHz) | Temporary barriers, cage covers |

| Bass Trap (Membrane) | 0.70 | Low (50 - 300 Hz) | Corner placement for low-frequency control |

| Perforated Wood Panel | Varies with design | Tuned frequency | Diffusion & low-frequency absorption |

Experimental Protocols

Protocol 1: Characterizing Room Impulse Response (RIR) for Animal Enclosures

Objective: To measure the reverberation time (T60) and multipath profile of a laboratory animal enclosure or testing arena. Materials: Full-range reference speaker, calibrated measurement microphone, audio interface, signal generator software (e.g., ARTA, REW), acoustic treatment materials. Procedure:

- Place the animal enclosure (e.g., rodent home cage, avian flight room) in its standard experimental position.

- Position a calibrated full-range speaker at a representative "animal source" location (e.g., center of cage).

- Place the measurement microphone, in turn, at each candidate microphone array location (≥3 positions).

- Generate and play a swept sine signal (20 Hz - 20 kHz) or maximum length sequence (MLS) from the speaker.

- Record the response synchronously with the emitted signal using the measurement microphone.

- Deconvolve the recorded signal to obtain the Room Impulse Response (RIR) for each microphone position.

- Calculate the T60 (time for sound to decay by 60 dB) from the integrated impulse response (Schroeder backward integration).

- Visually identify significant secondary peaks in the RIR, indicating major multipath reflections, and note their time delay and relative amplitude.

- Iteratively apply acoustic treatments (foam, baffles, diffusers) and repeat the measurement and analysis steps (stimulus playback through reflection identification) to quantify improvement.
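
The deconvolution and reflection-identification steps of this protocol can be sketched as follows. This is an illustrative example under stated assumptions: the recording is simulated by convolving a logarithmic sweep with a made-up impulse response (direct path plus one reflection), and the helper name `estimate_rir`, the regularization constant, and the peak-picking thresholds are hypothetical choices rather than values from the protocol.

```python
import numpy as np
from scipy.signal import chirp, fftconvolve, find_peaks

def estimate_rir(recorded, sweep, n_rir, reg=1e-6):
    """Regularized frequency-domain deconvolution of a recording by the sweep."""
    n_fft = len(recorded) + len(sweep) - 1
    R = np.fft.rfft(recorded, n_fft)
    S = np.fft.rfft(sweep, n_fft)
    power = np.abs(S) ** 2
    # The regularization term avoids dividing by near-zero out-of-band energy.
    H = R * np.conj(S) / (power + reg * power.max())
    return np.fft.irfft(H, n_fft)[:n_rir]

fs = 48_000
t = np.arange(fs) / fs                               # 1 s sweep
sweep = chirp(t, f0=20, t1=t[-1], f1=20_000, method="logarithmic")

# Hypothetical room: direct sound at sample 10, one reflection at sample 200.
true_rir = np.zeros(1024)
true_rir[10] = 1.0
true_rir[200] = 0.5
recorded = fftconvolve(sweep, true_rir)              # simulated microphone signal

rir = estimate_rir(recorded, sweep, n_rir=1024)
mag = np.abs(rir)
peaks, _ = find_peaks(mag, height=0.3 * mag.max(), distance=20)

delays_ms = (peaks - peaks[0]) / fs * 1e3            # delay relative to direct path
rel_amp = mag[peaks] / mag[peaks[0]]                 # amplitude relative to direct path
for d, a in zip(delays_ms, rel_amp):
    print(f"arrival at +{d:.2f} ms, relative amplitude {a:.2f}")
```

With real recordings the threshold and minimum peak spacing would need tuning to the noise floor, and measurement tools such as REW or ARTA perform the equivalent deconvolution internally.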

Protocol 2: Validation of Localization Accuracy Post-Treatment

Objective: To quantify the improvement in acoustic localization accuracy for a vocalizing animal model after mitigation steps. Materials: Miniature ultrasonic microphones (e.g., 4-channel array), high-speed data acquisition system, controlled sound source (e.g., calibrated ultrasonic pulser), analysis software (e.g., DeepSqueak or MUPET for call detection, plus a custom TDOA localization algorithm), treated enclosure. Procedure:

- Set up a microphone array (e.g., tetrahedral configuration) around the treated animal enclosure. Precisely measure and record 3D microphone coordinates.

- Using a calibrated, movable sound source that mimics the animal's vocalization (frequency, duration, amplitude), emit pulses from 10-20 known, pre-mapped locations within the enclosure.

- Record the signals synchronously on all array channels at a high sampling rate (≥250 kHz for ultrasonic species).

- For each source location, compute TDOAs between all microphone pairs using generalized cross-correlation with phase transform (GCC-PHAT).

- Input TDOAs into a localization algorithm (e.g., spherical interpolation, maximum likelihood) to estimate source coordinates.

- Calculate the Root Mean Square Error (RMSE) between estimated and true source positions.

- Compare the RMSE from tests run in the treated enclosure to baseline data from the untreated enclosure, and test whether the reduction in error is statistically significant (e.g., with a paired t-test across source locations).
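
The TDOA step of this protocol relies on GCC-PHAT. A minimal sketch of that estimator for one microphone pair is shown below; the function name `gcc_phat`, the broadband noise burst used as a stand-in source, the 12-sample delay, and the noise level are assumptions chosen for illustration.

```python
import numpy as np

def gcc_phat(sig, ref, fs, max_tau=None):
    """Estimate the delay of `sig` relative to `ref` (seconds) with GCC-PHAT.

    A positive result means `sig` arrives later than `ref`.
    """
    n = len(sig) + len(ref)                  # zero-pad to avoid circular wrap-around
    SIG = np.fft.rfft(sig, n)
    REF = np.fft.rfft(ref, n)
    cross = SIG * np.conj(REF)
    cross /= np.abs(cross) + 1e-12           # phase transform: keep phase, drop magnitude
    cc = np.fft.irfft(cross, n)
    max_shift = n // 2
    if max_tau is not None:
        max_shift = min(int(fs * max_tau), max_shift)
    # Reorder so that index 0 corresponds to a lag of -max_shift samples.
    cc = np.concatenate((cc[-max_shift:], cc[:max_shift + 1]))
    shift = int(np.argmax(cc)) - max_shift
    return shift / fs

# Synthetic pair: the second microphone hears the same burst 12 samples later.
rng = np.random.default_rng(0)
fs = 250_000
delay = 12
source = rng.standard_normal(4096)
mic1 = source + 0.05 * rng.standard_normal(4096)
delayed = np.concatenate((np.zeros(delay), source[:-delay]))
mic2 = delayed + 0.05 * rng.standard_normal(4096)

tau = gcc_phat(mic2, mic1, fs, max_tau=1e-3)
print(f"estimated TDOA: {tau * 1e6:.1f} microseconds")
```

Restricting the search with `max_tau` to the largest physically possible delay (array aperture divided by the speed of sound) is a common way to reject spurious correlation peaks, including some caused by late reflections.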

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Acoustic Mitigation in Animal Labs

| Item | Function/Explanation | Example Product/Brand |

|---|---|---|

| Melamine Acoustic Foam | Open-cell foam for broadband absorption of mid/high-frequency reflections and reverberation. Crucial for reducing flutter echoes. | Basotect, Illbruck SFM |

| Movable Acoustic Baffles/Gobos | Freestanding, absorbent panels to place between sound sources and reflective surfaces (e.g., cage walls, equipment). Creates "quiet zones". | ATS Acoustics Baffles, GIK Acoustics Freestanders |

| Ultrasonic Microphone Array | Specialized, phase-matched microphones capable of recording >100 kHz for rodent/bat studies. Enable precise TDOA measurement. | UltraSoundGate (Avisoft), Knowles MEMS mics, Bruel & Kjaer 4939 |

| Acoustic Diffusers | Scatter incident sound waves to break up strong, coherent reflections that cause comb filtering, without removing acoustic energy. | Quadratic Residue Diffusers (QRD), Skyline Diffusers |

| Sound-Absorbing Cage Covers | Covers made from absorbent material placed over standard rodent racks to dampen internal reverberation and isolate between cages. | Tecniplast Cage Top Dampeners, LabGard Noise Reduction Cover |

| High-Speed DAQ System | Data acquisition hardware with simultaneous sampling on all channels (≥250 kS/s per channel) to prevent inter-channel TDOA skew. | National Instruments USB-6366, Avisoft UltraSoundGate 116Hm |

| Calibrated Sound Source | Reference speaker or pistonphone for generating known signals to characterize environment or validate localization. | Avisoft UltraSoundGate Player, Precision Acoustic Pistonphone |

| Room Acoustics Software | Software to design treatment layouts, simulate acoustics, and analyze measured impulse responses. | ODEON, EASE, Room EQ Wizard (REW) |

Visualizations

Diagram Title: Acoustic Mitigation and Validation Workflow

Diagram Title: Multipath Corruption of TDOA Estimation

Optimizing Array Geometry and Microphone Placement for Different Enclosures

Application Notes

This document details the critical considerations for deploying acoustic sensor arrays in diverse enclosure types, a foundational component of a broader thesis on acoustic localization and tracking of vocalizing animals for behavioral pharmacology and neuroethology research. Accurate source localization is paramount for correlating vocalizations with specific behaviors, social interactions, or drug-induced effects in model organisms.

Key Acoustic Parameters and Array Design Principles

The performance of a localization system is governed by the interplay between enclosure acoustics and array geometry. The primary metrics are Time-Difference-of-Arrival (TDOA) accuracy and spatial resolution.

Table 1: Core Localization Performance Metrics

| Metric | Formula/Description | Target for Animal Vocalizations (e.g., Mice, Birds) |

|---|---|---|

| TDOA Estimation Error (σₜ) | Root-mean-square error of time delay estimates. Directly impacts localization accuracy. | < 50 µs (for broadband calls > 10 kHz) |

| Cramér-Rao Lower Bound (CRLB) | Theoretical lower bound on variance of location estimates. Function of array geometry, SNR, and bandwidth. | Minimize via optimal sensor placement. |

| Geometric Dilution of Precision (GDOP) | Describes how geometry magnifies measurement error into localization error. | Aim for GDOP < 2 in region of interest. |

| Central Frequency (f₀) | Dominant frequency of target animal vocalization. | Mice: 50-80 kHz (USV); Birds: 1-8 kHz (song). |

| Wavelength (λ) | λ = c / f₀, where c ≈ 343 m/s. Fundamental scale for array design. | Mouse USV: ~4.3-6.9 mm; Bird song: ~43-343 mm. |

| Array Aperture (D) | Maximum distance between microphones in the array. Governs angular resolution. | Typically 5-20λ for a balance of resolution and ambiguity. |
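
Two of the metrics in the table lend themselves to a quick numerical check: wavelength and GDOP. The sketch below is illustrative only. It uses a simplified TDOA GDOP, the square root of the trace of (HᵀH)⁻¹, where H is the Jacobian of the range differences relative to microphone 0, and it treats the range-difference errors as uncorrelated with unit variance, which is an assumption. The tetrahedral array dimensions and the function names are likewise hypothetical.

```python
import numpy as np

C_AIR = 343.0  # speed of sound in air (m/s), as used in the table

def wavelength_mm(f0_hz, c=C_AIR):
    """Wavelength in millimetres for a centre frequency in Hz."""
    return c / f0_hz * 1e3

def tdoa_gdop(mics, source):
    """Simplified GDOP for TDOA localization with microphone 0 as reference."""
    mics = np.asarray(mics, dtype=float)
    diff = np.asarray(source, dtype=float) - mics
    unit = diff / np.linalg.norm(diff, axis=1, keepdims=True)
    H = unit[1:] - unit[0]          # d(range difference)/d(source position)
    return float(np.sqrt(np.trace(np.linalg.inv(H.T @ H))))

# Hypothetical regular tetrahedral array centred on the origin (metres).
mics = 0.1 * np.array([[1, 1, 1], [1, -1, -1], [-1, 1, -1], [-1, -1, 1]])

gdop_centre = tdoa_gdop(mics, [0.0, 0.0, 0.0])     # source inside the array
gdop_outside = tdoa_gdop(mics, [1.0, 0.4, 0.2])    # source well outside the array

print(f"50 kHz wavelength: {wavelength_mm(50e3):.2f} mm")
print(f"GDOP at centre: {gdop_centre:.2f}, outside array: {gdop_outside:.2f}")
```

Evaluating `tdoa_gdop` over a grid of candidate source positions gives a map of where a given geometry meets the GDOP target for the region of interest, and it shows how quickly precision degrades once the animal moves outside the volume spanned by the microphones.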

Enclosure-Specific Array Optimization Strategies

Enclosure geometry and material properties dictate distinct optimization protocols.

Table 2: Optimization Strategies by Enclosure Type

| Enclosure Type | Primary Acoustic Challenge | Recommended Array Geometry | Key Placement Protocol |

|---|---|---|---|

| Small, Reflective (e.g., standard rodent cage) | Strong, short-delay reflections/multipath. | Compact 3D (e.g., tetrahedral). Maximize angular coverage in minimal volume. | Elevate array center, use absorbent lining on enclosure walls below array. |

| Large, Anechoic (e.g., open-field arena) | Low reverberation but low SNR over distance. | Sparse 2D Planar or Large Aperture 3D. Optimize for GDOP over intended tracking volume. | Perimeter placement at multiple heights; ensure line-of-sight to entire arena floor. |

| Complex Naturalistic (e.g., forest/vegetation mesocosm) | Severe multipath and scattering. | Dense, Distributed Node-based. Multiple sub-arrays deployed throughout space. | Sub-arrays placed in clearings; synchronized wireless operation. |

| Long, Tunnel-like (e.g., burrow system model) | Waveguide effects, axial localization only. | Linear Array along the tunnel axis. | Microphones spaced at non-integer multiples of λ; avoid symmetrical placement. |

| Water-Filled Tanks (e.g., for aquatic mammals) | High sound speed (~1500 m/s), different impedance. | Vertical Line Array or 3D Hull Array. Account for faster speed of sound. | Use hydrophones; consider refraction at air/water interface; rigid mounting. |

Experimental Protocols

Protocol 1: Characterization of Enclosure Impulse Response

Objective: To measure the reverberation time (T₆₀) and reflection profile of the experimental enclosure.

- Setup: Place a calibrated omnidirectional speaker and a reference measurement microphone at the intended animal location(s).

- Stimulus: Broadcast a logarithmic sine sweep (e.g., 1-100 kHz) or a balloon pop impulse.

- Recording: Capture the response with a data acquisition system (≥250 kS/s sampling rate).

- Analysis: Compute the energy decay curve from the recorded impulse response. Calculate T₆₀ (time for sound to decay by 60 dB). Map early reflection delays and amplitudes.

- Use: Determine the critical "acoustic clutter" period post-call during which TDOA estimation is unreliable.
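
The energy decay analysis in this protocol can be sketched with Schroeder backward integration. The example below is a minimal illustration on a synthetic impulse response (exponentially decaying noise with a known T₆₀ of 0.2 s); the function name `t60_schroeder` and the -5 to -25 dB fitting range (a T20-style extrapolation) are assumptions, not requirements of the protocol.

```python
import numpy as np

def t60_schroeder(rir, fs, fit_range_db=(-5.0, -25.0)):
    """Estimate T60 (s) from an impulse response via Schroeder integration."""
    energy = np.asarray(rir, dtype=float) ** 2
    edc = np.cumsum(energy[::-1])[::-1]                  # backward-integrated energy
    edc_db = 10 * np.log10(np.maximum(edc / edc[0], 1e-30))
    upper, lower = fit_range_db
    idx = np.where((edc_db <= upper) & (edc_db >= lower))[0]
    slope, _ = np.polyfit(idx / fs, edc_db[idx], 1)      # decay rate in dB per second
    return -60.0 / slope                                 # extrapolate to a 60 dB decay

# Synthetic impulse response with a known decay (hypothetical test signal).
rng = np.random.default_rng(0)
fs = 48_000
true_t60 = 0.2
t = np.arange(int(0.5 * fs)) / fs
envelope = np.exp(-np.log(1000.0) * t / true_t60)       # amplitude falls 60 dB at T60
rir = rng.standard_normal(len(t)) * envelope

t60_est = t60_schroeder(rir, fs)
print(f"estimated T60: {t60_est * 1e3:.0f} ms")
```

Fitting only the upper part of the decay curve and extrapolating to 60 dB is a common practice when the measurement noise floor prevents observing the full decay directly.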

Protocol 2: Empirical Validation of Localization Accuracy

Objective: To quantify the real-world localization error of a configured array for a given enclosure.

- Setup: Install the microphone array according to the chosen geometry. Use a calibration jig to measure precise 3D microphone coordinates.

- Emitter: Use a mobile, automated sound emitter (e.g., a speaker broadcasting synthetic animal calls) at known coordinates within the enclosure. A robotic positioner is ideal.

- Procedure: Move the emitter to N ≥ 20 known positions covering the tracking volume. At each, emit 10 repetitions of the target call type.

- Localization: For each call, compute the TDOAs (using Generalized Cross-Correlation with Phase Transform, GCC-PHAT) and solve for the source location (using least-squares or spherical intersection algorithms).

- Analysis: Calculate the mean and root-mean-square error (RMSE) between the estimated and true positions for each location. Generate an error vector map.
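
The localization and analysis steps can be sketched with a nonlinear least-squares solver. This is one possible formulation, not the only one: the eight-microphone box array, the 2 µs TDOA noise, the simulated emitter positions, and the function names are assumptions for illustration, and `scipy.optimize.least_squares` stands in for whichever solver a given lab uses.

```python
import numpy as np
from scipy.optimize import least_squares

C_AIR = 343.0  # speed of sound in air (m/s)

def locate_from_tdoas(mics, tdoas, c=C_AIR):
    """Least-squares source position from TDOAs measured relative to mic 0."""
    mics = np.asarray(mics, dtype=float)
    range_diffs = c * np.asarray(tdoas, dtype=float)   # work in metres

    def residuals(x):
        d = np.linalg.norm(mics - x, axis=1)
        return (d[1:] - d[0]) - range_diffs

    # Start the search from the array centroid.
    return least_squares(residuals, x0=mics.mean(axis=0)).x

def rmse(estimates, truths):
    """Root-mean-square Euclidean error across all test positions."""
    err = np.linalg.norm(np.asarray(estimates) - np.asarray(truths), axis=1)
    return float(np.sqrt(np.mean(err ** 2)))

# Hypothetical array: microphones at the corners of a 0.5 x 0.5 x 0.4 m arena.
mics = np.array([[x, y, z] for x in (0.0, 0.5) for y in (0.0, 0.5) for z in (0.0, 0.4)])

# Simulate 20 known emitter positions with small TDOA measurement noise.
rng = np.random.default_rng(1)
truths = rng.uniform([0.1, 0.1, 0.05], [0.4, 0.4, 0.35], size=(20, 3))
estimates = []
for p in truths:
    d = np.linalg.norm(mics - p, axis=1)
    tdoas = (d[1:] - d[0]) / C_AIR + rng.normal(0.0, 2e-6, size=len(mics) - 1)
    estimates.append(locate_from_tdoas(mics, tdoas))

array_rmse = rmse(estimates, truths)
error_vectors = np.asarray(estimates) - truths         # input for an error vector map
print(f"RMSE over {len(truths)} positions: {array_rmse * 1e3:.2f} mm")
```

In a real validation run the simulated TDOAs would be replaced by GCC-PHAT estimates from the recorded calls, and `error_vectors` could be plotted at each true position to produce the error vector map.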

Diagram Title: Acoustic Array Optimization Workflow