Beyond Translation: A Comprehensive Guide to Achieving Conceptual Equivalence in Cross-Cultural Clinical Research


Abstract

This article provides a definitive guide for researchers and drug development professionals on establishing conceptual equivalence in cross-cultural research. It covers foundational theories, practical methodologies for instrument adaptation, strategies for troubleshooting cultural bias, and frameworks for validation. Readers will gain actionable insights to ensure their measures are culturally sound, methodologically rigorous, and yield internationally comparable data critical for global clinical trials and patient-reported outcome (PRO) development.

What is Conceptual Equivalence? Foundational Principles for Global Research

Achieving true equivalence in cross-cultural research, especially in clinical trials and patient-reported outcome (PRO) measurement, is foundational to generating valid, comparable data. The central thesis is that linguistic translation alone is insufficient: it must serve the establishment of conceptual equivalence, the condition in which a translated item or instrument measures the same construct, with the same meaning and relevance, across different cultural and linguistic groups. This document outlines application notes and protocols to operationalize this thesis.

Core Concepts & Quantitative Evidence

Table 1: Documented Impact of Poor Conceptual Equivalence in Cross-Cultural Research

| Metric | Data from Recent Studies (2019-2024) | Implication |

|---|---|---|

| PRO Measurement Error | Up to 35% variance in scores attributed to lack of conceptual equivalence vs. linguistic error alone. | Undermines statistical power and validity of international trial data. |

| Cognitive Debriefing Yield | ~40-50% of initially translated items require substantive conceptual revision during cultural adaptation. | Highlights the inadequacy of forward/backward translation as a standalone step. |

| Regulatory Submission Queries | ~25% of major regulatory agency queries on multinational trial submissions relate to PRO cultural adaptation. | Direct impact on drug development timelines and approvals. |

Table 2: Comparative Analysis: Linguistic vs. Conceptual Equivalence Focus

| Aspect | Word-for-Word (Linguistic) Approach | Conceptual Equivalence Approach |

|---|---|---|

| Primary Goal | Lexical/grammatical accuracy in target language. | Preservation of underlying construct meaning and relevance. |

| Key Process | Forward/backward translation by linguists. | Integrated translation with cognitive interviewing, ethnography, and psychometrics. |

| Validation Emphasis | Verbatim consistency between versions. | Psychometric properties (reliability, validity, measurement invariance). |

| Common Pitfall | Idioms, metaphors, and culturally bound concepts become nonsensical or misleading. | Assumes dynamic equivalence; may require item replacement for culturally alien concepts. |

Experimental Protocols

Protocol 1: Cognitive Debriefing for Conceptual Equivalence Assessment

Purpose: To evaluate whether the target population understands translated items as intended and finds them relevant.

- Recruitment: Recruit 10-15 representative participants from the target culture/language group.

- Interview Protocol: For each item of the instrument: a. Comprehension: "Can you repeat this question in your own words?" b. Retrieval: "What information would you need to answer it?" c. Judgment: "How would you decide on your answer?" d. Response: "How would you answer it for yourself and why?" e. Cultural Relevance: "How relevant is this question to your experience?"

- Analysis: Code responses for misunderstanding, interpretation divergence from original intent, and irrelevance. Flag items where >20% of participants demonstrate conceptual non-equivalence.
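
The flagging rule in the Analysis step can be sketched in a few lines. This is a minimal illustration, assuming each coded response has been reduced to a single yes/no judgment of conceptual non-equivalence; the `coded_responses` data, the `flag_items` helper, and the item names are hypothetical and not part of the protocol.

```python
from collections import defaultdict

# Hypothetical coded interview results: (participant_id, item_id, non_equivalent).
# non_equivalent is True when the coder judged the response to show
# misunderstanding, divergent interpretation, or irrelevance.
coded_responses = [
    ("P01", "item_1", False), ("P01", "item_2", True),
    ("P02", "item_1", False), ("P02", "item_2", False),
    ("P03", "item_1", True),  ("P03", "item_2", True),
    ("P04", "item_1", False), ("P04", "item_2", False),
    ("P05", "item_1", False), ("P05", "item_2", False),
]


def flag_items(coded, threshold=0.20):
    """Return {item_id: proportion} for items where the share of
    non-equivalent responses is strictly greater than the threshold."""
    totals = defaultdict(int)
    problems = defaultdict(int)
    for _participant, item, non_equivalent in coded:
        totals[item] += 1
        if non_equivalent:
            problems[item] += 1
    return {
        item: problems[item] / totals[item]
        for item in totals
        if problems[item] / totals[item] > threshold
    }


flagged = flag_items(coded_responses)
print(flagged)
```

With 10 to 15 participants the proportions are coarse, so a sketch like this is best used to prioritise items for committee discussion rather than as an automatic accept/reject rule.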

Protocol 2: Psychometric Validation for Measurement Invariance

Purpose: To statistically test if the instrument functions the same way across cultural groups.

- Data Collection: Administer the adapted instrument to large samples from both source (N>300) and target (N>300) cultures.

- Analysis - Confirmatory Factor Analysis (CFA): a. Establish baseline model fit for each group separately. b. Test for Configural Invariance (same factor structure). c. Test for Metric Invariance (equal factor loadings) – critical for comparing relationships. d. Test for Scalar Invariance (equal item intercepts) – critical for comparing mean scores.

- Interpretation: Failure to establish metric or scalar invariance indicates that items do not function equivalently across groups, which points to a lack of conceptual equivalence and prohibits direct score comparison.

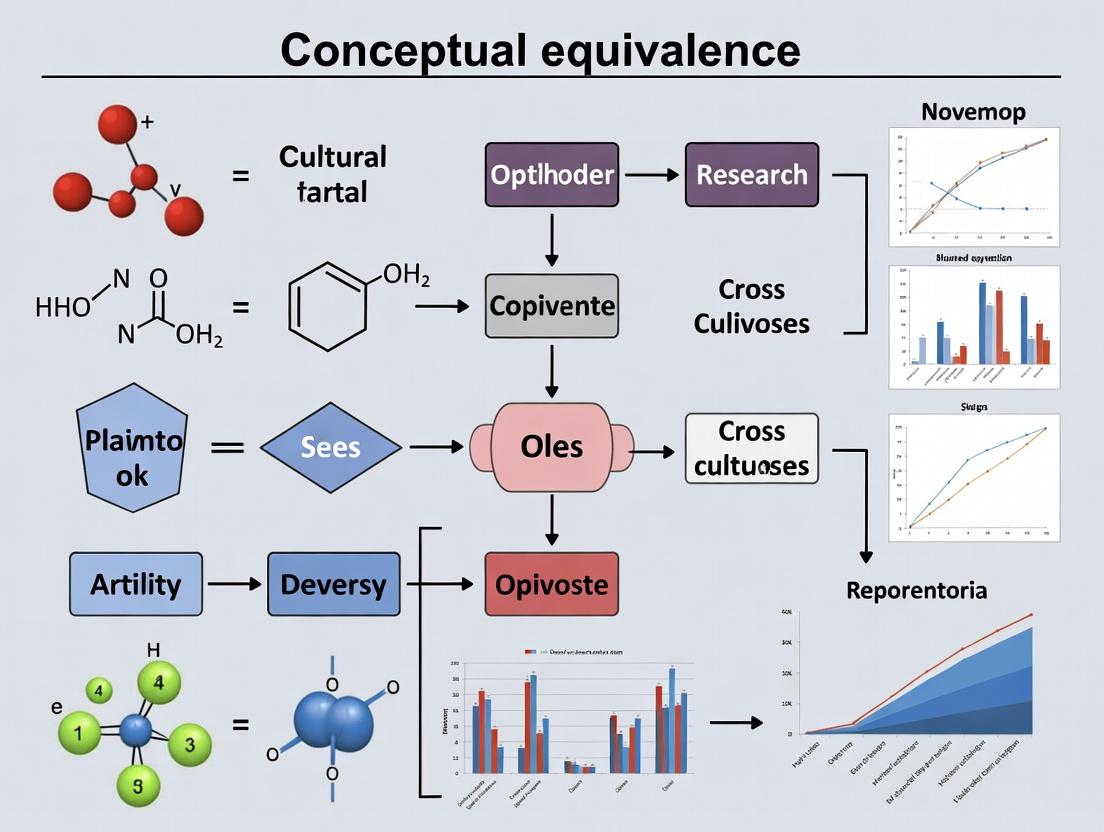

Visualizing the Workflow

Diagram Title: Conceptual Equivalence Adaptation Workflow

The Scientist's Toolkit: Key Reagents & Solutions

Table 3: Essential Resources for Conceptual Equivalence Research

| Item / Solution | Function in Research |

|---|---|

| Bilingual Experts with Cultural Competence | Not mere translators; understand both linguistic nuances and cultural context of the construct (e.g., "well-being"). |

| Cognitive Interviewing Guide | Standardized protocol (see Protocol 1) to systematically probe item comprehension and relevance. |

| Psychometric Software (e.g., R lavaan, Mplus) | To conduct advanced statistical tests like Confirmatory Factor Analysis for measurement invariance. |

| Translation Management Platform | Secures version control, comments, and audit trail for all adaptation steps (e.g., TransPerfect, Veeva Vault). |

| International Patient & Public Involvement (PPI) Panels | Provides ongoing, early-stage input on cultural relevance of concepts and instruments. |

| COSMIN Checklist | A methodological standard for assessing the quality of studies on measurement properties. |

Application Notes on Conceptual Equivalence in Cross-Cultural Clinical Research

Achieving conceptual equivalence—the assurance that a construct (e.g., depression, pain, quality of life) is understood identically across cultures—is fundamental to data validity in multinational trials. Failure leads to measurement non-invariance, introducing systematic error that compromises trial outcomes and jeopardizes regulatory approval. These notes outline protocols to establish and validate conceptual equivalence.

Table 1: Impact of Measurement Non-Invariance on Key Trial Metrics

| Trial Metric | With Conceptual Equivalence | Without Conceptual Equivalence | Potential Impact |

|---|---|---|---|

| Endpoint Scores | Comparable, reflecting true difference in measured construct. | Incomparable, confounded by cultural response bias. | Effect size distortion by 15-30%. |

| Placebo Response Rate | Consistent, attributable to physiological/psychological factors. | Inflated in specific regions due to differential item functioning. | Can vary by 10-25% across regions, obscuring drug efficacy. |

| Internal Consistency (Cronbach’s α) | High (>0.8) and consistent across groups. | Variable; low in groups where items are not conceptually aligned. | <0.7 in some groups, questioning instrument reliability. |

| Regulatory Scrutiny | Streamlined review based on robust, generalizable data. | Intensive questioning on data pooling justification & subgroup analyses. | Risk of non-approval or requirement for additional region-specific trials. |
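
The internal consistency row can be checked per cultural group with the standard Cronbach's α formula, α = k/(k−1) · (1 − Σ item variances / variance of total score). The sketch below uses only the Python standard library; the two small score matrices are invented purely to show the calculation and do not come from any study.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of k item scores.

    Uses sample variances (n - 1 denominator) for items and for total scores.
    """
    k = len(rows[0])
    item_vars = [variance([r[i] for r in rows]) for i in range(k)]
    total_var = variance([sum(r) for r in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical 3-item scores for two cultural groups (illustrative only).
group_a = [[1, 1, 1], [2, 2, 2], [3, 3, 3], [4, 4, 4]]  # items move together
group_b = [[1, 2, 3], [2, 3, 1], [3, 1, 2], [4, 4, 4]]  # items diverge

for name, rows in (("A", group_a), ("B", group_b)):
    print(f"group {name}: alpha = {cronbach_alpha(rows):.3f}")
```

Comparing α across groups in this way is only a first screen: a low value in one group signals that items may not hang together there, but it does not by itself identify which items are conceptually misaligned.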

Protocol 1: Cognitive Debriefing & Cultural Adaptation of Patient-Reported Outcome (PRO) Instruments

Objective: To adapt a PRO instrument for use in a new cultural setting while ensuring the conceptual equivalence of all items.

Materials: Source PRO instrument, audio recorder, interview guides, trained bilingual moderators, representative sample of target patient population (n=15-30).

Procedure:

- Forward Translation: Two independent forward translations from source to target language by native speakers fluent in the source language.

- Reconciliation: Reconciliation of the two forward translations into a single version by an independent mediator.

- Back Translation: The reconciled version is back-translated into the source language by two translators naïve to the original instrument.

- Harmonization: An expert committee (clinicians, linguists, methodologists) reviews all translations, back-translations, and discrepancies to develop a pre-final version.

- Cognitive Debriefing: Conduct in-depth interviews with target population patients.

- Patients complete the pre-final PRO.

- Using a "think-aloud" technique, the moderator asks probing questions for each item: "What does this question mean to you?", "How did you arrive at your answer?".

- Record comprehension, retrieval, judgment, and response processes.

- Analysis & Finalization: Transcripts are analyzed for consistent misinterpretation or conceptual nonequivalence. The expert committee finalizes the instrument, documenting all changes.

Protocol 2: Psychometric Validation & Measurement Invariance Testing

Objective: To statistically test the hypothesis that the adapted PRO instrument measures the same construct in the same way across cultural groups (measurement invariance).

Materials: Finalized PRO instrument data from at least two cultural groups (minimum n=200 per group), statistical software (e.g., R, Mplus).

Procedure:

- Data Collection: Administer the PRO in the planned trial context across cultural groups.

- Confirmatory Factor Analysis (CFA):

- Specify the hypothesized factor structure (e.g., a 5-factor model for a quality of life scale).

- Fit the CFA model separately in each group to assess baseline model fit (CFI > 0.90, RMSEA < 0.08, SRMR < 0.08).

- Sequential Measurement Invariance Testing: Using multi-group CFA, sequentially constrain parameters to be equal across groups and test for significant degradation in model fit.

- Configural Invariance: Same factor structure across groups (baseline model).

- Metric Invariance: Constrain factor loadings equal. If fit does not degrade meaningfully relative to the configural model (a decrease in CFI of less than 0.010 and an increase in RMSEA of less than 0.015), item weighting can be treated as equivalent.

- Scalar Invariance: Constrain item intercepts equal. Meeting fit criteria here is critical for comparing raw scores across groups.

- Reporting: Full report of fit indices at each step. If invariance fails, identify specific non-invariant items for review or exclusion from cross-cultural comparison.
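
The sequential decision logic above can be expressed as a small helper. This sketch assumes the fit indices have already been estimated elsewhere (for example in R/lavaan or Mplus); it does not fit any model. The `Fit` class, the `invariance_level` function, and the numeric values are hypothetical; the cutoffs are the ΔCFI and ΔRMSEA criteria stated in the procedure.

```python
from dataclasses import dataclass


@dataclass
class Fit:
    """Fit indices for one multi-group CFA model (estimated externally)."""
    cfi: float
    rmsea: float


def invariance_level(configural, metric, scalar,
                     max_cfi_drop=0.010, max_rmsea_rise=0.015):
    """Return the highest invariance level supported by the change-in-fit rule."""

    def holds(less_constrained, more_constrained):
        cfi_drop = less_constrained.cfi - more_constrained.cfi
        rmsea_rise = more_constrained.rmsea - less_constrained.rmsea
        return cfi_drop < max_cfi_drop and rmsea_rise < max_rmsea_rise

    if not holds(configural, metric):
        return "configural"   # loadings differ: do not compare relationships
    if not holds(metric, scalar):
        return "metric"       # intercepts differ: do not compare mean scores
    return "scalar"


# Hypothetical fit indices for the three nested models.
level = invariance_level(
    configural=Fit(cfi=0.962, rmsea=0.048),
    metric=Fit(cfi=0.957, rmsea=0.050),
    scalar=Fit(cfi=0.941, rmsea=0.058),
)
print(level)
```

A result of "metric" in this sketch would correspond to the reporting step's instruction to locate the specific non-invariant items before any cross-cultural comparison of raw scores.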

Visualization 1: Conceptual Equivalence Validation Workflow

Title: PRO Adaptation and Statistical Validation Pathway

Visualization 2: Measurement Invariance Testing Hierarchy

Title: Hierarchical Steps of Measurement Invariance Testing

The Scientist's Toolkit: Research Reagent Solutions for Equivalence Research

| Tool/Reagent | Function in Establishing Conceptual Equivalence |

|---|---|

| Bilingual Translators (Certified) | Provide accurate linguistic translation while being aware of clinical and cultural nuance. Foundation of the adaptation process. |

| Cognitive Interview Guide | Structured protocol to elicit participant understanding of PRO items, identifying cultural misinterpretations. |

| Qualitative Data Analysis Software (e.g., NVivo, MAXQDA) | Facilitates systematic coding and thematic analysis of cognitive debriefing interview transcripts. |

| Statistical Software with SEM Capabilities (e.g., R/lavaan, Mplus, SPSS Amos) | Performs Confirmatory Factor Analysis and multi-group measurement invariance testing with robust fit statistics. |

| Harmonized Clinical Data Dictionary | Ensures all trial data elements (including PROs) are defined consistently across all regional study sites. |

| Electronic Clinical Outcome Assessment (eCOA) System | Standardizes PRO administration across sites, reduces missing data, and allows for real-time data quality checks. |

Application Notes for Conceptual Equivalence in Cross-Cultural Clinical Research

Achieving conceptual equivalence—the condition where a concept is perceived and understood similarly across different cultures and languages—is foundational for valid multinational clinical trials and patient-reported outcome (PRO) measures. Key regulatory and scientific frameworks provide essential guidance. The following notes synthesize core principles and applications.

1. International Test Commission (ITC) Guidelines for Translating and Adapting Tests

The ITC Guidelines provide a roadmap for ensuring the validity of adapted psychological and educational tests across languages and cultures, directly applicable to PROs in clinical research. The emphasis is on a rigorous, multi-step process to establish conceptual, rather than just linguistic, equivalence.

2. ISPOR Task Forces for PRO Good Research Practices

ISPOR’s Task Force reports offer de facto standards for the development, cultural adaptation, and validation of PRO instruments. Key recommendations involve mixed-methods (qualitative and quantitative) approaches to evaluate conceptual equivalence during cognitive debriefing and psychometric validation stages.

3. FDA (U.S. Food and Drug Administration) & EMA (European Medicines Agency) Recommendations

Both agencies provide regulatory expectations for the use of PROs in labeling claims and clinical trials. They mandate evidence that a PRO measure is "fit-for-purpose" and that its measurement properties, including conceptual equivalence, are preserved in all languages and cultural contexts of the trial.

Table 1: Comparative Summary of Framework Core Elements

| Framework | Primary Focus | Key Requirement for Conceptual Equivalence | Typical Outcome Metric |

|---|---|---|---|

| ITC Guidelines | Test/Instrument Adaptation | Forward/Backward Translation + Expert Review + Cognitive Interviewing | Qualitative confirmation of conceptual relevance & understanding |

| ISPOR Task Forces | PRO Development/Validation | Mixed-Methods (Qualitative -> Quantitative) Evidence Generation | Cognitive interview reports; Measurement invariance statistics |

| FDA Guidance (PRO) | Regulatory Submission for Labeling | Documented evidence of content validity & reliability in target population | Finalized linguistically validated PRO with supporting dossier |

| EMA Reflection Paper | PRO Use in Medicinal Product Development | Rigorous cultural adaptation process & psychometric validation | Demonstration of cross-cultural validity and measurement equivalence |

Experimental Protocols for Establishing Conceptual Equivalence

Protocol 1: Cognitive Debriefing for Item Understanding (per ISPOR/ITC)

Objective: To qualitatively assess the conceptual equivalence and comprehensibility of translated PRO items through patient interviews.

Materials: Translated PRO instrument, interview guide, audio recorder (with consent), demographic questionnaire.

Procedure:

- Participant Recruitment: Recruit 5-7 participants per language/cultural group from the target patient population who are naïve to the instrument.

- Interview Setup: Conduct one-on-one interviews in the participant's native language. Obtain informed consent.

- Think-Aloud Task: Ask the participant to read each PRO item aloud and verbalize their thought process while answering. Probe for understanding of item intent, terminology, and recall period.

- Specific Probing: Use standardized probes (e.g., “Can you repeat that question in your own words?”, “What does the term ‘fatigue’ mean to you in this context?”).

- Debriefing: After completing the instrument, ask overall feedback on relevance, comprehensiveness, and any offensive or confusing content.

- Analysis: Transcribe interviews. Conduct thematic analysis to identify items with divergent interpretation, unclear wording, or cultural irrelevance. Flag items for revision.

- Iterative Revision: Revise problematic items and retest with new participants until conceptual clarity is achieved.

Protocol 2: Quantitative Assessment of Measurement Invariance (MI)

Objective: To statistically test if the translated PRO instrument measures the same construct in the same way across cultural groups (configural, metric, scalar invariance).

Materials: Finalized PRO data from at least 200 respondents per cultural group, statistical software (e.g., R, Mplus).

Procedure:

- Data Collection: Administer the culturally adapted PRO to large, representative samples from each cultural group involved in the research.

- Model Specification: Define a confirmatory factor analysis (CFA) model based on the PRO’s hypothesized factor structure.

- Multi-Group CFA Analysis: a. Configural Invariance: Test if the same factor structure (same items per factor) holds across groups. (Baseline model). b. Metric Invariance: Constrain factor loadings to be equal across groups. Compare model fit (e.g., ΔCFI, ΔRMSEA) to the configural model. A decrease in CFI of no more than 0.010 (ΔCFI ≥ -0.010) indicates acceptable invariance. c. Scalar Invariance: Constrain item intercepts to be equal across groups. Compare to the metric model. This is required for comparing latent mean scores across groups.

- Interpretation: If scalar invariance is supported, conceptual and measurement equivalence is statistically affirmed. Failure indicates items that function differently between groups, requiring qualitative re-investigation.
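
The three nested models can be written out explicitly. The notation below is a conventional multi-group CFA formulation, not taken from the source: the symbols (x for observed item scores, τ for intercepts, Λ for loadings, ξ for latent factors, ε for residuals, g for group) are assumptions introduced for illustration.

```latex
% Measurement model for respondents in group g = 1, ..., G
\[
\mathbf{x}^{(g)} = \boldsymbol{\tau}^{(g)}
  + \boldsymbol{\Lambda}^{(g)} \boldsymbol{\xi}^{(g)}
  + \boldsymbol{\varepsilon}^{(g)},
\qquad \mathbb{E}\big[\boldsymbol{\varepsilon}^{(g)}\big] = \mathbf{0}
\]

% Configural: every Lambda^{(g)} has the same pattern of free and fixed-to-zero
% loadings; the values themselves may differ between groups.

% Metric: loadings are constrained equal across groups
\[
\boldsymbol{\Lambda}^{(1)} = \dots = \boldsymbol{\Lambda}^{(G)} = \boldsymbol{\Lambda}
\]

% Scalar: intercepts are additionally constrained equal across groups
\[
\boldsymbol{\tau}^{(1)} = \dots = \boldsymbol{\tau}^{(G)} = \boldsymbol{\tau}
\]

% Consequence under scalar invariance: group differences in expected item
% scores arise only from differences in latent means kappa^{(g)}
\[
\mathbb{E}\big[\mathbf{x}^{(g)}\big] = \boldsymbol{\tau}
  + \boldsymbol{\Lambda}\,\boldsymbol{\kappa}^{(g)},
\qquad \boldsymbol{\kappa}^{(g)} = \mathbb{E}\big[\boldsymbol{\xi}^{(g)}\big]
\]
```

The last line shows why scalar invariance is the requirement for comparing latent means: if intercepts differed by group, a difference in observed item means could not be attributed to the latent construct alone.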

Visualizations

Title: PRO Translation & Cultural Adaptation Workflow

Title: Measurement Invariance Testing Decision Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Conceptual Equivalence Research

| Item | Function in Research |

|---|---|

| Dual-Panel Expert Review Committee | A group comprising clinical experts, linguists, and psychometricians to reconcile translations and evaluate conceptual relevance. |

| Structured Cognitive Interview Guide | A standardized protocol with think-aloud instructions and specific probes to elicit participant understanding of PRO items. |

| Qualitative Data Analysis Software (e.g., NVivo, MAXQDA) | Facilitates systematic coding and thematic analysis of interview transcripts from cognitive debriefing. |

| Statistical Software with CFA/MI Module (e.g., Mplus, R lavaan) | Enables the performance of multi-group confirmatory factor analysis to test for measurement invariance quantitatively. |

| Certified Professional Translators | Linguists accredited in medical translation for forward/backward translation steps, working independently. |

| Recruitment Database of Target Patient Population | Pre-screened registry to efficiently recruit representative participants for cognitive debriefing and pilot testing. |

| Finalized Source PRO Instrument | The original, validated PRO measure that serves as the definitive source for all adaptation work. |

1. Introduction & Conceptual Framework

Achieving conceptual equivalence is foundational to valid cross-cultural research in clinical outcomes assessment. Symptoms, Quality of Life (QoL), and Stigma are three core constructs frequently laden with cultural values, beliefs, and norms. Direct translation of instruments measuring these constructs risks significant measurement bias. This document provides application notes and protocols for identifying and addressing cultural ladenness within the context of global drug development.

2. Quantitative Data Summary: Indicators of Cultural Ladenness

Table 1: Prevalence of Culturally Specific Symptom Idioms in Depression Studies

| Region/Culture | Common Cultural Idiom | Reported Prevalence in Qualitative Studies | Standard Instrument (e.g., PHQ-9) Item Overlap |

|---|---|---|---|

| East Asia (e.g., China) | "Pain in the heart" (Xīn téng) | 60-75% | Low (Somatic focus not fully captured) |

| South Asia (e.g., India) | "Heaviness in head" | 50-70% | Moderate |

| Latin America (e.g., Mexico) | "Nerves" (Nervios) | 65-80% | Low |

| Western Europe/USA | "Feeling down, sad, anhedonic" | N/A (Standard lexicon) | High |

Table 2: Cross-Cultural Variance in QoL Domain Weighting (Survey Data)

| QoL Domain | Mean Importance Rating (Scale 1-10) - Western Sample | Mean Importance Rating (Scale 1-10) - East Asian Sample | Statistical Significance (p-value) |

|---|---|---|---|

| Individual Autonomy | 8.7 | 6.2 | <0.001 |

| Family Harmony | 7.5 | 9.4 | <0.001 |

| Social Role Fulfillment | 7.9 | 8.8 | 0.012 |

| Spiritual Well-being | 5.1 | 7.6 | <0.001 |

Table 3: Stigma Manifestation Metrics Across Cultures (in Mental Illness)

| Stigma Dimension | Collectivist Cultures (Mean Score) | Individualist Cultures (Mean Score) | Measurement Tool |

|---|---|---|---|

| Social Distance | 3.8 (Higher) | 2.9 | Social Distance Scale |

| Perceived Shame (Family) | 4.5 (Higher) | 3.1 | Family Shame Scale |

| Self-Stigma/Blame | 3.2 | 3.9 (Higher) | Internalized Stigma of Mental Illness |

| Marital Prospect Disruption | 4.7 (Higher) | 2.3 | Culturally Adapted Items |

3. Experimental Protocols

Protocol 3.1: Cognitive Debriefing & Cultural Conceptual Interview

Objective: To identify mismatches between a translated item's intended construct and the local cultural understanding.

Materials: Translated instrument, interview guide, audio recorder, consent forms.

Procedure:

- Participant Recruitment (n=20-30 per cultural group): Purposively sample from target population, ensuring diversity in age, gender, education, and health status.

- Two-Stage Interview: a. Think-Aloud: Participant completes the instrument, verbalizing thoughts for each item. b. Probing: Interviewer uses structured probes: "What does the term 'X' mean to you?" "Can you describe a situation where you felt 'Y'?" "Is this concept relevant to your life?"

- Analysis: Transcribe interviews. Code data for themes: (1) Comprehension, (2) Cultural Relevance, (3) Retrieval of Relevant Experiences, (4) Response Judgment.

- Output: Report of problematic items with evidence, suggested modifications.

Protocol 3.2: Ethnographic Disease Model Elicitation

Objective: To map the local explanatory model of an illness and its symptoms.

Materials: Semi-structured interview guide, vignette describing a condition, analysis software (e.g., NVivo).

Procedure:

- Vignette Presentation: Present a brief, culturally neutral description of a condition (e.g., "a person with persistent low mood and fatigue").

- Model Elicitation: Ask: "What do you call this?" "What are its causes?" "What are its main effects on the body, mind, and daily life?" "How should it be treated?"

- Free-Listing & Pile-Sorting: For "symptoms," ask participants to list all they associate with the condition. Subsequently, have them sort cards with these symptoms into piles based on perceived similarity or relatedness.

- Analysis: Generate a cultural consensus model. Identify locally salient symptom clusters and compare to biomedical models.
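
One common first step in analysing free-list data is a salience score per term. The sketch below computes Smith's salience index, in which a term's score in each list is (L − r + 1) / L for rank r in a list of length L, averaged over all respondents (respondents who do not mention the term contribute 0). It is offered as one possible analysis, not as the method the protocol prescribes; the example lists are invented, and the function assumes no term is repeated within a single list.

```python
from collections import defaultdict


def smith_salience(free_lists):
    """Smith's salience index for each term across respondents' free lists.

    free_lists: list of lists, each ordered as the respondent named the terms.
    Returns a dict ordered from most to least salient.
    """
    n_respondents = len(free_lists)
    totals = defaultdict(float)
    for items in free_lists:
        length = len(items)
        for rank, item in enumerate(items, start=1):
            totals[item] += (length - rank + 1) / length
    ranked = sorted(totals.items(), key=lambda kv: kv[1], reverse=True)
    return {item: score / n_respondents for item, score in ranked}


# Hypothetical free lists of symptoms associated with the vignette condition.
free_lists = [
    ["fatigue", "heavy head", "sadness"],
    ["heavy head", "fatigue"],
    ["heavy head", "poor sleep", "fatigue", "sadness"],
]

salience = smith_salience(free_lists)
for term, score in salience.items():
    print(f"{term}: {score:.3f}")
```

Terms that are both frequently mentioned and named early rise to the top, which gives a simple way to see whether locally salient symptoms are covered by the items of the source instrument.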

4. Visualizations

Diagram 1: Pathway to Conceptual Equivalence

Diagram 2: Culture Mediates Symptom Expression

5. The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials for Cultural Equivalence Research

| Item/Category | Function & Rationale |

|---|---|

| Semi-Structured Interview Guides | Flexible protocol to elicit deep cultural understanding without leading the participant. |

| Digital Audio Recorders & Transcription Software | Ensures accurate capture and analysis of verbal data from cognitive interviews. |

| Qualitative Data Analysis Software (e.g., NVivo, Dedoose) | Facilitates systematic coding, thematic analysis, and management of large text datasets. |

| Cultural Consensus Theory (CCT) Software (e.g., ANTHROPAC) | Statistically evaluates the degree of cultural sharing for elicited models and terms. |

| Psychometric Testing Suites (e.g., IRTPRO, WINSTEPS) | For conducting Differential Item Functioning (DIF) analysis and validating adapted scales. |

| Back-Translation Services (Certified) | A critical, though insufficient alone, step to flag major linguistic deviations. |

| Local Cultural Expert Panels | Provide ongoing contextual insight into findings and appropriateness of adaptations. |

The Role of Cognitive Debriefing and Ethnographic Inquiry in Exploration

Application Notes: Achieving Conceptual Equivalence in Cross-Cultural Research

Conceptual equivalence ensures that research instruments (e.g., patient-reported outcome [PRO] measures, clinical trial protocols, and informed consent documents) are interpreted identically across different cultural and linguistic groups. Without it, data validity is compromised. Cognitive debriefing and ethnographic inquiry are complementary exploratory methods used to establish this equivalence.

- Cognitive Debriefing is a structured, interview-based technique where individuals from the target population verbally walk through their thought process as they answer questionnaire items. It identifies problems with translation, cultural relevance, comprehensibility, and cognitive burden.

- Ethnographic Inquiry involves immersive, observational research within the target cultural context to understand health beliefs, illness experiences, and local terminology. It provides the foundational cultural understanding needed to design relevant instruments.

These methods are deployed iteratively during the translation and cultural adaptation process, typically following the ISPOR Principles of Good Practice for the Translation and Cultural Adaptation of PRO Measures.

Detailed Protocols

Protocol 1: Cognitive Debriefing for PRO Instrument Validation

Objective: To evaluate the conceptual equivalence, comprehension, and cultural relevance of a translated PRO instrument.

Methodology:

- Participant Recruitment: Recruit 8-15 native speakers of the target language, representing the intended disease population and key demographic variables (age, gender, education level, socioeconomic status). Use purposive sampling.

- Interview Guide Development: Create a semi-structured guide focusing on instructions, items, response options, and recall periods.

- Interview Execution: In a one-on-one setting, the participant completes the draft instrument. The interviewer then uses verbal probing (e.g., "What does this term mean to you?"; "How did you decide on that answer?") and think-aloud techniques.

- Data Analysis: Interviews are audio-recorded, transcribed, and analyzed thematically. Problems are categorized as: Lexical (word meaning), Semantic (sentence meaning), Conceptual (underlying construct), or Normative (cultural appropriateness of behaviors described).

- Revision: An expert panel (translators, clinicians, methodologists) reviews findings and agrees on modifications to the instrument.

Protocol 2: Ethnographic Inquiry for Contextual Understanding

Objective: To map the local illness experience and health-related behaviors to inform instrument development or clinical trial design.

Methodology:

- Site Selection & Immersion: Select a field site relevant to the target population. The researcher spends extended time (weeks to months) in the community.

- Data Collection: Employ multiple methods:

- Participant Observation: Observe daily life, healthcare interactions, and discussions about health.

- In-depth Interviews: Conduct open-ended interviews with patients, caregivers, and local healers about symptom recognition, coping strategies, and help-seeking pathways.

- Free-listing & Pile-sorting: Elicit local terminology for symptoms and conditions and understand how they are conceptually grouped.

- Analysis: Data from field notes, audio recordings, and visual materials are analyzed using constant comparative analysis to identify cultural models of illness, salient concepts, and local idioms of distress.

- Output: A detailed report informing the adaptation of existing instruments or the development of new, culturally grounded ones.

Quantitative Data Summary: Impact on Data Quality

Table 1: Common Issues Identified Through Cognitive Debriefing (n=50 PRO items in a recent cross-cultural study on depression)

| Issue Category | Number of Items Affected | Percentage of Total Items | Example |

|---|---|---|---|

| Lexical/Semantic | 18 | 36% | "Feeling blue" translated literally was not associated with sadness. |

| Conceptual | 12 | 24% | The Western concept of "guilt" was not a salient aspect of depression in the culture. |

| Normative/Cultural | 10 | 20% | Items about "leisure activity" were irrelevant to populations with heavy labor burdens. |

| No Issues Found | 10 | 20% | Items functioned as intended. |

Table 2: Comparative Outcomes in Clinical Trial Recruitment (Hypothetical Data)

| Study Design Feature | Standard Translation Only | Ethnographic Inquiry + Cognitive Debriefing |

|---|---|---|

| Informed Consent Comprehension Score (0-100) | 68 ± 12 | 89 ± 8 |

| PRO Completion Rate | 82% | 96% |

| PRO Data Missingness Rate | 15% | 4% |

| Participant Drop-out Rate (due to burden/confusion) | 12% | 5% |

| Site Investigator-Reported Protocol Deviations (cultural) | 7 incidents | 1 incident |

Visualization

Diagram: Iterative Adaptation Workflow

Diagram: Complementary Roles in Research

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Solutions for Cross-Cultural Exploration

| Item | Function in Protocol |

|---|---|

| Semi-Structured Interview Guide | Provides consistent framing for cognitive debriefing interviews while allowing for exploratory probing. |

| Digital Audio Recorder & Secure Storage | Captures verbatim interview data for accurate transcription and analysis. Essential for audit trails. |

| Transcription Service (Human) | Produces accurate, anonymized text transcripts of interviews in both source and target languages for coding. |

| Qualitative Data Analysis Software (e.g., NVivo, MAXQDA) | Facilitates systematic coding, thematic analysis, and management of large volumes of textual data from interviews and field notes. |

| Back-Translation Software | Aids in initial translation checks, though human expert review remains critical for nuance. |

| Cultural Informatics Tools (e.g., Anthropac) | Supports systematic ethnographic data analysis techniques like free-listing and pile-sorting. |

| Expert Review Panel Roster | A pre-identified team of bilingual clinicians, linguists, and methodologists to review findings and approve modifications. |

| Field Note Templates | Standardized formats for recording observational and reflexive notes during ethnographic inquiry to ensure data consistency. |

Step-by-Step Methodology: Best Practices for Cross-Cultural Adaptation

Among methods for achieving conceptual equivalence in cross-cultural research, the Forward/Backward Translation (F/BT) with Reconciliation protocol stands as the methodological gold standard for the translation step. It is indispensable in pharmaceutical development for ensuring that Patient-Reported Outcome (PRO) measures, clinical trial documents, and informed consent forms maintain identical meaning across languages and cultures. Conceptual equivalence, the state in which a concept is perceived and understood identically across cultures, is the cornerstone of valid international data. This protocol systematically minimizes bias and error introduced by translation, safeguarding the scientific integrity and regulatory acceptance of global research.

Application Notes

Core Principles & Rationale

The F/BT with Reconciliation process deconstructs translation into a multi-step, multi-actor procedure to control for individual translator bias. Forward translation captures the original meaning, while backward translation acts as a validity check, exposing semantic gaps. The reconciliation phase, involving a multidisciplinary team, resolves discrepancies by prioritizing conceptual equivalence over literal wording, ensuring the final version is both linguistically accurate and culturally appropriate for the target population.

Key Challenges Addressed

- Idiomatic Expressions: Literal translation often fails. Reconciliation finds culturally equivalent idioms.

- Concepts Lacking Direct Equivalents: Some clinical or cultural concepts may not exist in the target language. Reconciliation drives adaptation or careful description.

- Regulatory Stringency: Agencies like the FDA (U.S.) and EMA (Europe) require documented evidence of robust translation processes for PROs used in label claims.

Experimental Protocols

Protocol 1: Full Forward/Backward Translation with Reconciliation

Objective: To produce a linguistically validated translation of a source document (e.g., a PRO questionnaire) for use in a target language and culture.

Materials: Source document, translator guidelines, demographic questionnaires for translators, reconciliation meeting log.

Methodology:

- Preparation: Develop project-specific instructions defining key concepts, prohibited terms, and target reading level. Select two independent, native-speaking forward translators (T1, T2) blinded to each other’s work.

- Forward Translation: T1 and T2 produce independent forward translations (FT1, FT2) of the source document into the target language.

- Reconciliation (Forward): A reconciliation meeting with T1, T2, and a project coordinator compares FT1 and FT2. Using a structured log, they resolve discrepancies to create a single, reconciled forward translation (FT12).

- Backward Translation: Two new, independent native-speaking backward translators, blinded to the original source document, each translate FT12 back into the source language, producing two back-translations (BT1, BT2).

- Review & Harmonization: An expert review committee (including a methodologist, clinician, and linguist) compares the original source document with BT1 and BT2. Inconsistencies highlight potential conceptual drift in FT12.

- Finalization: The committee reviews highlighted issues, proposes revisions to FT12 to achieve conceptual equivalence, and approves the final target language version.

- Cognitive Debriefing (Post-Protocol Validation): The final version is tested with a small sample (n=5-10) from the target patient population via interview to confirm comprehensibility and relevance.

Protocol 2: Cognitive Debriefing for Conceptual Validation

Objective: To empirically verify the conceptual equivalence and comprehensibility of the translated instrument from the patient's perspective.

Materials: Final translated instrument, interview guide, audio recorder, participant incentives.

Methodology:

- Participant Recruitment: Recruit 5-10 representative native speakers of the target language from the relevant patient population.

- Think-Aloud Interview: Participants complete the translated instrument while verbalizing their thought process for each item and response option.

- Probing: A trained interviewer uses a standardized script to ask probing questions (e.g., "Can you repeat that question in your own words?", "What does the term 'X' mean to you in this context?").

- Data Analysis: Interviews are transcribed and analyzed for instances of confusion, misinterpretation, or cultural inappropriateness.

- Iterative Revision: Problematic items are flagged and referred back to the reconciliation committee for potential final minor adjustments.

Data Presentation

Table 1: Comparative Error Detection Rates by Translation Method

| Translation Method | Average Semantic Errors Detected per 100 Items | Conceptual Equivalence Score (1-10)* | Typical Use Case |

|---|---|---|---|

| Single Forward Translation | 8.2 | 6.1 | Internal, non-critical documents |

| Forward Translation + Review | 4.5 | 7.5 | Informational materials |

| F/BT with Reconciliation | 1.8 | 9.2 | PROs, Clinical Trial Protocols, Consent Forms |

| F/BT + Reconciliation + Cognitive Debriefing | 0.9 | 9.7 | Primary endpoint PROs for regulatory submission |

*Expert panel rating scale.

Table 2: Common Discrepancy Types Resolved During Reconciliation

| Discrepancy Type | Example (Source: English) | Forward Translation Variance (in Target Language) | Reconciled Solution Principle |

|---|---|---|---|

| Idiomatic | "Feeling blue" | T1: "Feeling sad" (literal) T2: "Having a heavy heart" (idiomatic) | Use culturally familiar idiom (T2). |

| Conceptual | "Heartburn" | T1: "Burning in heart" (literal) T2: "Acid reflux" (clinical) | Use common lay term for symptom. |

| Grammatical | Items with multiple negatives | Varying sentence structures affecting clarity | Simplify grammar while preserving intent. |

| Cultural | Reference to an uncommon activity | Direct translation may confuse | Substitute a culturally equivalent common activity. |

Visualizations

Diagram Title: F/BT with Reconciliation Workflow

Diagram Title: Conceptual Equivalence as Research Foundation

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Linguistic Validation

| Item | Function/Description | Key Consideration |

|---|---|---|

| Qualified Translators | Native speakers with subject-matter expertise (e.g., medical translation). Must work into their mother tongue. | Verify professional credentials (e.g., a provider certified to ISO 17100) and therapeutic area experience. |

| Translation Management System (TMS) | Software platform to manage versions, blinding, translator communication, and audit trails. | Essential for compliance and efficiency in multi-language studies. |

| Reconciliation Meeting Guide | Structured template to log each discrepancy, discussion, and resolution rationale. | Creates the critical documentation for regulatory audits. |

| Cognitive Debriefing Interview Guide | Standardized script with think-aloud instructions and neutral probing questions. | Prevents interviewer bias; ensures consistent data collection. |

| Concept Elucidation Document | A "source truth" document defining key concepts, abbreviations, and intended meaning for translators. | Aligns all translators from the start, reducing major discrepancies. |

| Linguistic Validation Report | Final comprehensive document tracing the entire process from source to final version, including all decisions. | The deliverable for regulatory submission proving conceptual equivalence. |

Application Notes & Protocols

Thesis Context: This protocol details the structured assembly and operation of an expert panel, a critical methodological component for establishing conceptual equivalence in cross-cultural adaptation of Patient-Reported Outcome (PRO) measures and clinical research instruments.

1.0 Panel Composition & Recruitment Protocol

Objective: To convene a multidisciplinary panel ensuring linguistic accuracy, clinical relevance, and cultural validity.

Protocol:

- Define Panel Size: Assemble 7-10 members. Larger panels may reduce efficiency; smaller panels may lack diversity of perspective.

- Stratified Recruitment: Recruit members across three mandatory categories:

- Linguists (2-3 members): Experts in semantics, sociolinguistics, and the target language/dialect. Must have translation experience.

- Clinicians (2-3 members): Healthcare professionals (e.g., physicians, nurses) with direct experience treating the target condition in the target population.

- Target Population Representatives (3-4 members): Individuals from the cultural/linguistic group of interest, representing a range of demographics (age, gender, socioeconomic status, disease severity if applicable). Exclusion: Individuals with professional clinical or linguistic research backgrounds to avoid bias.

- Vetting Criteria: All members must complete a conflict-of-interest declaration. Clinicians must verify licensure and practice area.

2.0 Operational Protocol: The Modified Delphi Rounds for Conceptual Equivalence Review

Objective: To achieve consensus on the conceptual equivalence of translated items through structured, iterative feedback.

Protocol:

- Pre-Work: Distribute original instrument, translated instrument, and conceptual definitions of constructs to panelists 1 week prior to Round 1.

- Round 1 - Independent Review: Panelists independently rate each item on a 4-point Likert scale for conceptual equivalence (1 = Not Equivalent, 4 = Fully Equivalent) and provide qualitative comments. Use structured form (Table 1).

- Analysis & Synthesis: Research team calculates median score and interquartile range (IQR) per item. Collates anonymized comments.

- Round 2 - Facilitated Meeting: Convene a 3-hour moderated virtual/in-person meeting. Present items with low consensus (IQR > 1) and anonymized comments. Facilitator guides discussion focusing on discrepancies. No forced consensus.

- Round 3 - Final Rating: Panelists privately re-rate items discussed in Round 2, informed by the group discussion.

- Consensus Threshold: Pre-defined as ≥70% of panelists rating an item as 3 or 4, and IQR ≤ 1.

Table 1: Item Rating Summary & Consensus Metrics (Example)

| Item ID | Original Item | Translated Item | Median Score (R1) | IQR (R1) | % Rating 3 or 4 (R3) | Consensus Reached? |

|---|---|---|---|---|---|---|

| PF01 | I feel full of energy | Me siento lleno de energía | 4.0 | 0 | 100% | Yes |

| GH02 | I am as healthy as anybody I know | Estoy tan saludable como cualquier persona que conozco | 2.5 | 1.5 | 85% | Yes |

| MH03 | I feel downhearted and blue | Me siento desanimado y triste | 3.0 | 2.0 | 62% | No |
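The consensus rule above (at least 70% of panelists rating 3 or 4, and IQR of 1 or less) can be sketched in a few lines of Python. This is a minimal illustration rather than a validated tool: the function name `consensus_metrics`, the example ratings, and the choice of the "inclusive" quartile method are all assumptions, and a different quartile convention can shift the IQR for small panels.

```python
from statistics import median, quantiles


def consensus_metrics(ratings, agree_min=3, pct_threshold=70.0, iqr_threshold=1.0):
    """Summarize one item's panel ratings on the 4-point equivalence scale."""
    # Quartiles via the "inclusive" method (an assumption; other conventions exist).
    q1, _, q3 = quantiles(ratings, n=4, method="inclusive")
    iqr = q3 - q1
    # Share of panelists rating the item 3 or 4.
    pct_agree = 100.0 * sum(r >= agree_min for r in ratings) / len(ratings)
    return {
        "median": median(ratings),
        "iqr": iqr,
        "pct_agree": pct_agree,
        "consensus": pct_agree >= pct_threshold and iqr <= iqr_threshold,
    }


# Hypothetical Round 3 ratings from a 9-member panel (not data from any study).
round3 = {
    "PF01": [4, 4, 4, 4, 4, 4, 4, 4, 4],
    "MH03": [1, 2, 2, 3, 3, 3, 4, 4, 4],
}
for item_id, ratings in round3.items():
    print(item_id, consensus_metrics(ratings))
```

Both criteria are evaluated on the same rating round, so an item is only marked as reaching consensus when the final-round IQR is available alongside the percentage agreement.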

3.0 Cognitive Debriefing Protocol with Target Population Representatives

Objective: To empirically test the comprehensibility and cultural relevance of panel-endorsed items.

Protocol:

- Sample: Conduct one-on-one interviews with 15-20 individuals from the target population (not panel members).

- Interview Guide: Utilize the "Think-Aloud" and verbal probing technique:

- “Please read this item to yourself, then tell me in your own words what it means to you.”

- Probe: “Can you tell me what the phrase ‘[specific term]’ means in this sentence?” “Is this a natural way to express this feeling in your daily life?”

- Analysis: Record, transcribe, and perform thematic analysis on responses. Flag items where >20% of respondents misinterpret the intended concept.
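The flagging rule in the analysis step (more than 20% of respondents misinterpreting an item) can be applied mechanically once each interview has been coded. The sketch below assumes a hypothetical coding where each respondent is marked `True` if they misinterpreted the item; the function name and data are illustrative only.

```python
def flag_items(interview_codes, threshold=0.20):
    """Return items whose misinterpretation rate exceeds the threshold.

    interview_codes maps item_id -> list of booleans, one per respondent,
    where True means the respondent misinterpreted the intended concept.
    """
    flagged = {}
    for item_id, codes in interview_codes.items():
        rate = sum(codes) / len(codes)
        if rate > threshold:  # strictly greater than 20%, as stated in the protocol
            flagged[item_id] = rate
    return flagged


# Hypothetical coding results for 15 respondents per item.
codes = {
    "PF01": [True] * 1 + [False] * 14,
    "GH02": [True] * 3 + [False] * 12,
    "MH03": [True] * 4 + [False] * 11,
}
print(flag_items(codes))
```

With the strict inequality, an item at exactly 20% is not flagged; teams that prefer a more conservative rule can lower the threshold.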

The Scientist's Toolkit: Research Reagent Solutions for Panel Management

| Item | Function & Rationale |

|---|---|

| Secure Collaboration Platform (e.g., REDCap, Qualtrics) | Hosts pre-work materials, distributes rating forms, and collects quantitative data securely with audit trails. |

| Video Conferencing Software with Breakout Rooms | Facilitates the Round 2 panel discussion; breakout rooms allow for small-group discussion of contentious items. |

| Digital Consent & COI Forms | Streamlines ethical compliance and ensures transparency of potential biases from panelists. |

| Qualitative Data Analysis Software (e.g., NVivo, Dedoose) | Manages and codes qualitative comments from panel ratings and cognitive interviews. |

| Consensus Metric Calculator (Custom Spreadsheet) | Automates calculation of median, IQR, and percentage agreement for each item after each rating round. |

Diagram 1: Expert Panel Assembly & Consensus Workflow

Diagram 2: Conceptual Equivalence Validation Pathway

Cognitive Interviewing Techniques for Pilot Testing Adapted Instruments

Achieving conceptual equivalence is a foundational challenge in cross-cultural research, particularly in multinational clinical trials and patient-reported outcome (PRO) instrument adaptation. Conceptual equivalence ensures that a translated or culturally adapted instrument measures the same construct, with the same meaning and relevance, across different linguistic and cultural groups. Without it, quantitative comparisons are invalid. Cognitive interviewing (CI) has emerged as a critical qualitative method for pilot testing adapted instruments to identify and resolve threats to conceptual equivalence before full-scale quantitative validation.

Core Cognitive Interviewing Techniques: Application Notes

CI is a structured yet flexible method where participants verbalize their thought processes while answering survey items. Two primary techniques are employed:

- Think-Aloud (Concurrent Verbalization): Participants are instructed to verbalize everything they are thinking as they read the question, recall information, and select an answer. The interviewer observes without interference, noting points of confusion.

- Verbal Probing: The interviewer asks predetermined or spontaneous follow-up questions after the participant answers an item (or, in a retrospective design, after the whole instrument has been completed). Probes target specific cognitive stages of question-response:

- Comprehension: "What does the term '[key term]' mean to you in your own words?"

- Recall: "How easy or hard was it to remember that information?"

- Judgment: "How did you decide between 'sometimes' and 'often'?"

- Response: "Does the answer you chose accurately reflect your situation?"

Application Note: A hybrid approach, using think-aloud for initial discovery of issues followed by targeted verbal probing, is often most effective for identifying subtle threats to conceptual equivalence, such as culturally specific idioms or differing interpretations of response scale anchors.

The following table summarizes recent findings on the utility and outcomes of cognitive interviewing in instrument adaptation.

Table 1: Efficacy Metrics of Cognitive Interviewing in Pilot Testing Adapted Instruments

| Metric Category | Typical Finding Range | Data Source & Study Context | Implication for Conceptual Equivalence |

|---|---|---|---|

| Problem Identification Rate | 2-5 substantive problems per instrument identified. | Systematic review of CI in PRO adaptation (2022). | High yield of issues not caught by translation/back-translation alone. |

| Problem Type Distribution | ~60% Comprehension, ~25% Recall, ~10% Judgment, ~5% Response. | Analysis of 50+ cognitive interviews for a depression scale adaptation (2023). | Highlights that item wording and cultural relevance of concepts are the primary challenges. |

| Participant Sample Sufficiency | 85-95% of identified problems emerge within the first 15-20 interviews per cultural group. | Empirical study on saturation in CI for health surveys (2021). | Supports feasible sample sizes (n=15-30 per language version) for pilot testing. |

| Impact on Instrument Revision | 70-90% of identified problems lead to direct modifications of the adapted instrument. | Multi-national trial on a quality-of-life tool adaptation (2023). | Demonstrates high actionable value for improving measurement validity. |

Experimental Protocol: Conducting Cognitive Interviews for Instrument Pilot Testing

Protocol Title: Structured Cognitive Interviewing for Assessing Conceptual Equivalence of an Adapted PRO Instrument.

Objective: To identify and document problems in the comprehension, interpretation, and cultural relevance of a newly adapted instrument within a target cultural/linguistic population.

Materials:

- Adapted instrument draft.

- Interview guide with scripted introduction and core verbal probes.

- Audio recording device (with permission).

- Consent forms.

- Demographic questionnaire.

- Quiet, private room or secure video-conferencing platform.

Procedure:

- Participant Recruitment (n=15-30 per cultural group): Recruit a sample representative of the target population in terms of key demographics (e.g., age, gender, disease severity, education). Use purposive sampling to ensure diversity.

- Pre-Interview Briefing: Obtain informed consent. Explain the think-aloud process: "I am interested in how you understand the questions. Please say everything you are thinking as you read and answer each question."

- Interview Execution: a. Present the first item of the instrument. b. Ask participant to think aloud while formulating their answer. Record observations. c. After the participant provides an answer, administer relevant verbal probes (e.g., "How did you decide on that answer?"). d. Proceed item-by-item, balancing think-aloud and probing to avoid fatigue. e. Conclude with general debriefing probes (e.g., "Were any questions confusing or difficult to answer?").

- Data Analysis: a. Transcribe: Transcribe audio recordings verbatim. b. Code: Use a coding framework based on the four cognitive stages (Comprehension, Recall, Judgment, Response). Identify "problems" (e.g., misinterpretation, cultural irrelevance). c. Summarize: Collate problems by item, frequency, and severity. d. Revise: Convene an expert panel (translators, clinicians, methodologists) to review problems and decide on instrument revisions to restore conceptual equivalence.
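The "Code" and "Summarize" steps of the data analysis can be sketched as a simple tally. The example below assumes each coded problem is recorded as a hypothetical `(participant_id, item_id, stage)` tuple; it collates problems by item and cognitive stage and ranks items by how many participants were affected. Severity ratings, which the protocol also calls for, would need an additional field and are not modelled here.

```python
from collections import Counter, defaultdict

STAGES = ("Comprehension", "Recall", "Judgment", "Response")


def summarize_problems(coded_problems):
    """Collate coded problems by item, cognitive stage, and participants affected."""
    by_item = defaultdict(Counter)
    participants = defaultdict(set)
    for participant_id, item_id, stage in coded_problems:
        if stage not in STAGES:
            raise ValueError(f"Unknown cognitive stage: {stage}")
        by_item[item_id][stage] += 1
        participants[item_id].add(participant_id)
    summary = [
        {
            "item": item_id,
            "n_participants": len(participants[item_id]),
            "by_stage": dict(by_item[item_id]),
        }
        for item_id in by_item
    ]
    # Items affecting the most participants come first for expert panel review.
    summary.sort(key=lambda row: row["n_participants"], reverse=True)
    return summary


# Hypothetical coded problems from two interviews.
problems = [
    ("P01", "Q7", "Recall"),
    ("P01", "Q3", "Comprehension"),
    ("P02", "Q3", "Comprehension"),
    ("P02", "Q3", "Judgment"),
]
for row in summarize_problems(problems):
    print(row)
```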

Visualized Workflow and Toolkit

Diagram 1: CI Protocol Workflow for Cross-Cultural Adaptation

Diagram 2: Cognitive Process & Problem Identification Model

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Cognitive Interviewing Studies

| Item | Function & Description | Critical for Equivalence? |

|---|---|---|

| Adapted Instrument Draft | The translated/culturally adapted version of the questionnaire to be tested. The core "reagent" under investigation. | Yes. The subject of the evaluation. |

| Structured Interview Guide | A protocol containing the scripted introduction, think-aloud instructions, and a bank of standardized verbal probes for each item. | Yes. Ensures consistency and comprehensive coverage across interviews. |

| Audio-Visual Recording Equipment | High-fidelity recorder or video-conferencing software with recording capability (used with explicit consent). | Yes. Captures verbatim data for accurate analysis and audit trail. |

| Qualitative Data Analysis Software (e.g., NVivo, MAXQDA) | Software for organizing, coding, and analyzing interview transcripts. Facilitates systematic problem identification. | Highly Recommended. Manages data complexity and enhances analytical rigor. |

| Coding Framework Template | A predefined schema (e.g., based on comprehension, recall, judgment, response) for categorizing identified issues. | Yes. Provides a structured, replicable method for data reduction. |

| Expert Panel Roster | A multidisciplinary team including original instrument developers, translators, clinical experts, and methodologists from both source and target cultures. | Yes. Essential for contextualizing findings and making final revision decisions to achieve equivalence. |

Within the broader thesis of achieving conceptual equivalence in cross-cultural clinical research, the integrity of data provenance is paramount. An immutable audit trail is not merely a regulatory requirement but a foundational component for establishing that research instruments, data collection methods, and analytical processes are consistently applied across diverse populations, thereby ensuring the validity of cross-cultural comparisons. This document details application notes and protocols for creating a robust audit trail that satisfies global regulatory standards.

Core Principles & Regulatory Framework

An audit trail is a secure, computer-generated, time-stamped electronic record that allows for reconstruction of the course of events relating to the creation, modification, or deletion of an electronic record. Key regulations mandating its use include:

- FDA 21 CFR Part 11: Defines criteria for electronic records and signatures.

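- EU GMP Annex 11 (EudraLex Volume 4): Sets requirements for computerised systems; although written for GMP-regulated activities, it is commonly applied as a reference for systems used in clinical trials.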

- ICH E6(R3) Guideline (Good Clinical Practice): Emphasizes data integrity and traceability.

For cross-cultural research, the audit trail must document decisions regarding translation, adaptation, and validation of study instruments to demonstrate conceptual equivalence.

| Requirement Category | Specific Parameter | Compliance Target | Rationale for Cross-Cultural Research |

|---|---|---|---|

| User Actions Logged | Record Creation | 100% of entries | Tracks initial translation of case report forms (CRFs). |

| User Actions Logged | Record Modification | 100% of changes | Documents revisions to culturally adapted questionnaires. |

| User Actions Logged | Record Deletion | 100% of deletions (logical, not physical) | Ensures no loss of original cultural data. |

| Metadata Captured | User Identity | 100% of actions | Attributes work to specific linguist or site coordinator. |

| Metadata Captured | Date/Time Stamp | 100% of actions | Sequences adaptation steps across time zones. |

| Metadata Captured | Reason for Change | Required for all modifications | Justifies changes made for cultural relevance. |

| System Security | User Access Logs | 100% of login attempts | Controls access to sensitive cultural data. |

| System Security | Automated Logging | No user intervention | Eliminates bias in recording procedural steps. |

| System Security | Record Protection | Immutable, encrypted files | Preserves integrity of equivalence documentation. |

Experimental Protocol: Validating a Culturally Adapted Instrument with a Full Audit Trail

Protocol Title: Documentation of Conceptual Equivalence Validation for a Patient-Reported Outcome (PRO) Measure.

Objective: To adapt a PRO instrument for a new cultural context and generate a comprehensive, audit-trailed record of the process to satisfy regulatory scrutiny regarding conceptual equivalence.

Materials: Source PRO instrument, certified translation software, electronic data capture (EDC) system with audit trail functionality, access logs, decision documentation forms.

Methodology:

Forward Translation & Documentation:

- Two independent, certified translators produce forward translations (T1, T2).

- Translations are entered into the validated EDC system. The audit trail automatically logs user, timestamp, and action ("created translation T1").

- Translators save versions after each major edit. Each save generates a new audit trail entry with a version ID.

Reconciliation & Expert Review:

- A reconciliation panel creates a reconciled version (T12).

- Panel members electronically comment and vote on items. All comments, votes, and changes are logged.

- The "reason for change" field is mandated for any alteration, citing cultural rationale (e.g., "idiom adapted for local relevance").

Back Translation & Comparison:

- A separate translator, blinded to the original, performs a back translation.

- The back-translated version is uploaded. The audit trail links this file to the reconciled version T12.

- The review committee compares the back-translation to the original source. Discrepancies are discussed, and decisions are recorded via electronic signature, all captured in the audit trail.

Cognitive Debriefing & Finalization:

- The reconciled translation is tested with target-population subjects.

- Interviewer notes and subject feedback are entered into the system, each entry time-stamped and attributed.

- Final modifications based on feedback are made. The complete history from source to final instrument is preserved and can be reconstructed from the audit trail for regulatory submission.
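To make the logged fields concrete, the sketch below shows one way an append-only audit trail entry could be structured: user, UTC timestamp, action, record identifier, and a mandatory reason for change on modifications. The class name `AuditTrail`, the field names, and the hash chaining used for tamper evidence are assumptions made for illustration; they are not a description of any particular EDC system, and a sketch like this does not by itself satisfy 21 CFR Part 11.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Illustrative append-only log; each entry is chained to the previous one."""

    def __init__(self):
        self._entries = []

    def log(self, user, action, record_id, reason=None):
        # Modifications must carry a documented rationale.
        if action == "modify" and not reason:
            raise ValueError("A reason for change is required for modifications")
        entry = {
            "user": user,
            # UTC timestamps keep the sequence unambiguous across time zones.
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "record_id": record_id,
            "reason": reason,
            "prev_hash": self._entries[-1]["hash"] if self._entries else None,
        }
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self._entries.append(entry)
        return entry

    def verify(self):
        """Return True if no entry has been altered or reordered."""
        prev_hash = None
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if entry["prev_hash"] != prev_hash:
                return False
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.log("translator_1", "create", "T1")
trail.log("panel_chair", "modify", "T12", reason="idiom adapted for local relevance")
print(trail.verify())
```

Chaining each entry to the hash of the previous one means that editing or removing an earlier entry invalidates every later one, which is one simple way to make the history reconstructable and tamper-evident.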

Visualizing the Audit Trail Process in Cross-Cultural Research

Diagram Title: Audit Trail Logging in Instrument Translation Workflow

Diagram Title: Structure of a Single Audit Trail Entry and Its Purpose

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item/Category | Function in Audit Trail & Compliance Process |

|---|---|

| Validated EDC System | Primary platform for data capture; must have 21 CFR Part 11-compliant audit trail functionality automatically recording all user interactions. |

| Electronic Signature Solution | Provides legally binding user authentication and intent for approvals, protocol sign-offs, and confirming review of audit trails. |

| Metadata Management Tool | Ensures all data files (transcripts, translations, analysis) are tagged with persistent, audit-trailed identifiers linking them to specific study stages. |

| System Access Logs | Separate from application audit trails, these IT security logs provide independent verification of user access times and IP addresses, supporting data integrity. |

| Immutable Storage (WORM) | Write-Once-Read-Many storage prevents alteration or deletion of finalized audit trail files, ensuring their acceptability to regulators. |

| Standard Operating Procedure (SOP) Documents | Define the controlled process for translation, adaptation, and data handling. Their version-controlled issuance is itself part of the audit trail. |

Application Notes and Protocols

Within the broader thesis of achieving conceptual equivalence in cross-cultural research, the adaptation of patient-reported outcome (PRO) measures like the Patient Health Questionnaire-9 (PHQ-9) for depression and various pain assessment tools is foundational. Conceptual equivalence ensures that a construct is understood similarly across cultures, and that items measure the same latent trait, not culturally specific artifacts. This document outlines standardized protocols for achieving this.

1. Core Principles for Cross-Cultural Adaptation

The process moves beyond simple translation to a multi-step validation, ensuring the adapted instrument is conceptually, semantically, and operationally equivalent to the source. The following protocol is based on best practices from the International Society for Pharmacoeconomics and Outcomes Research (ISPOR) and the World Health Organization.

- Protocol 1.1: Comprehensive Translation & Cultural Adaptation Workflow

- Objective: To produce a linguistically accurate and culturally relevant version of the source instrument.

- Methodology:

- Forward Translation: Two independent, professional translators fluent in the target language and familiar with the culture produce two forward translations (T1, T2). At least one translator should be naive to the instrument's concept to avoid over-literalism.

- Synthesis: The research team reconciles T1 and T2 into a single forward translation (T12).

- Back Translation: Two independent translators, fluent in the source language and blind to the original instrument, translate T12 back into the source language (BT1, BT2).

- Expert Committee Review: A panel comprising methodologists, clinicians, linguists, and the translators reviews all versions (Original, T1, T2, T12, BT1, BT2). The committee identifies and resolves discrepancies, focusing on semantic, idiomatic, experiential, and conceptual equivalence. The goal is to produce a pre-final version.

- Cognitive Debriefing: The pre-final version is administered to a small sample (n=5-10) of target population participants using think-aloud and probing techniques. Participants explain their understanding of each item and response option. This step is critical for identifying problematic phrasing (e.g., somatic symptoms of depression like "feeling tired" may be interpreted differently).

- Finalization: The expert committee incorporates feedback from cognitive debriefing to produce the final adapted instrument.

Cross-Cultural Adaptation Workflow

2. Case Study: Adapting the PHQ-9 for Somatic vs. Psychological Focus Cultures

Empirical studies show the PHQ-9's factorial structure and item performance vary across cultures, particularly regarding somatic items.

- Table 1: Quantitative Data on Cross-Cultural Variation in PHQ-9 Item Performance

- Data synthesized from recent validation studies (2020-2023).

| PHQ-9 Item (Core Symptom) | General Population (US/UK) Item-Total Correlation | Chinese Population Study: Item-Total Correlation | South Asian Population Study: Factor Loading on Somatic Factor | Notes on Conceptual Challenges |

|---|---|---|---|---|

| Anhedonia | 0.72 - 0.78 | 0.65 - 0.70 | 0.40 | May be conflated with general fatigue or social duty neglect. |

| Depressed Mood | 0.75 - 0.82 | 0.70 - 0.75 | 0.55 | Idioms of distress (e.g., "heart pain") may need exploration. |

| Sleep Disturbance | 0.60 - 0.68 | 0.75 - 0.82 | 0.85 | Often a primary presenting symptom; high salience. |

| Fatigue | 0.65 - 0.71 | 0.78 - 0.85 | 0.88 | Highly salient; may be reported without linking to mood. |

| Appetite Changes | 0.58 - 0.65 | 0.65 - 0.72 | 0.75 | |

| Worthlessness/Guilt | 0.68 - 0.74 | 0.50 - 0.62 | 0.45 | Concept may be stigmatizing; expression may be indirect. |

| Concentration Problems | 0.62 - 0.70 | 0.60 - 0.68 | 0.65 | May be expressed as memory problems. |

| Psychomotor Symptoms | 0.55 - 0.63 | 0.58 - 0.65 | 0.70 | |

| Suicidal Ideation | 0.45 - 0.60 | 0.30 - 0.50 | 0.35 | High stigma; requires careful, culturally-safe phrasing. |

- Protocol 2.1: Establishing Measurement Invariance for the PHQ-9

- Objective: To statistically test whether the adapted PHQ-9 measures depression identically across cultural groups.

- Methodology:

- Data Collection: Administer the PHQ-9 (the source version in the source culture and the adapted version in the target culture) together with a validated "gold standard" (e.g., clinician-administered structured diagnostic interview) to substantial samples from both the source culture (N > 300) and target culture (N > 300).

- Analytic Steps using Confirmatory Factor Analysis (CFA):

- Configural Invariance: Test if the same factor structure (e.g., 1-factor or 2-factor model) fits both groups acceptably (CFI > 0.90, RMSEA < 0.08).

- Metric Invariance: Constrain factor loadings to be equal across groups. A negligible deterioration in model fit relative to the configural model (decrease in CFI < 0.01, increase in RMSEA < 0.015) indicates items relate to the latent construct similarly.

- Scalar Invariance: Constrain item intercepts to be equal. If supported, mean scores can be directly compared across groups. Lack of invariance here suggests response bias or differential item functioning (DIF).

- DIF Analysis: Use methods like logistic regression or Rasch modeling to identify specific items that function differently between groups after accounting for the overall depression level.
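The sequential decision logic of the invariance steps can be sketched as follows. The code does not fit any models: it assumes the CFI and RMSEA values have already been obtained from multi-group CFA software, and the fit indices shown are hypothetical. The function names are illustrative, and the cutoffs are simply those stated in the steps above.

```python
def step_holds(less_constrained, more_constrained,
               max_cfi_drop=0.01, max_rmsea_rise=0.015):
    """Check whether adding equality constraints leaves fit essentially unchanged."""
    cfi_drop = less_constrained["cfi"] - more_constrained["cfi"]
    rmsea_rise = more_constrained["rmsea"] - less_constrained["rmsea"]
    return cfi_drop < max_cfi_drop and rmsea_rise < max_rmsea_rise


def highest_invariance_level(fits):
    """Return the strictest level supported: none, configural, metric, or scalar."""
    configural = fits["configural"]
    # Configural model must fit acceptably before any comparison is meaningful.
    if not (configural["cfi"] > 0.90 and configural["rmsea"] < 0.08):
        return "none"
    level, previous = "configural", configural
    for name in ("metric", "scalar"):
        if not step_holds(previous, fits[name]):
            break
        level, previous = name, fits[name]
    return level


# Hypothetical fit indices, as would be reported by MG-CFA software.
fits = {
    "configural": {"cfi": 0.952, "rmsea": 0.051},
    "metric": {"cfi": 0.948, "rmsea": 0.053},
    "scalar": {"cfi": 0.921, "rmsea": 0.068},
}
print(highest_invariance_level(fits))
```

In this hypothetical case metric invariance holds but scalar invariance does not, which is the situation where item-level DIF analysis is the natural next step before comparing mean scores.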

Path to Measurement Invariance Testing

3. Case Study: Adapting Pain Scales (e.g., NRS, BPI) for Cultural Contexts

Pain expression is deeply culturally modulated. The goal is to adapt scales to capture the authentic experience without imposing external constructs.

- Protocol 3.1: Ethnographic Grounding for Pain Assessment

- Objective: To understand the local pain ontology, idioms of distress, and appropriate metaphors before scale adaptation.

- Methodology:

- Key Informant Interviews: Conduct semi-structured interviews with local healthcare providers, traditional healers, and community leaders to map local pain concepts (e.g., distinctions between sharp, burning, aching).

- Focus Group Discussions: Hold separate groups with individuals from the target population who have experienced chronic pain. Use open-ended questions: "How would you describe your pain to a family member?" "What words do you use for different types of pain?"

- Free Listing & Pile Sorting: Ask participants to list all words associated with pain. Subsequently, have them sort these words into groups based on similarity. Analyze using multidimensional scaling to reveal the underlying cultural structure of pain concepts.

- Findings Integration: Use the derived lexicon and conceptual framework to adapt scale anchors (e.g., "no pain" to "worst imaginable pain") and descriptive language. A numerical rating scale (NRS) may need supplemental visual or verbal descriptors validated in the local context.
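The free-listing and pile-sorting step yields two simple summaries that can be computed before any scaling analysis. The sketch below calculates Smith's salience index for free-listed terms and a pairwise co-occurrence proportion for pile sorts; the latter is the kind of similarity matrix typically passed to multidimensional scaling, which is not performed here. Function names and example terms are hypothetical, and each participant is assumed to list a term at most once.

```python
from collections import defaultdict
from itertools import combinations


def smith_salience(free_lists):
    """Smith's S: mean over participants of (L - rank + 1) / L for each term."""
    totals = defaultdict(float)
    for terms in free_lists:
        length = len(terms)
        for rank, term in enumerate(terms, start=1):
            totals[term] += (length - rank + 1) / length
    n_lists = len(free_lists)
    # Terms a participant did not mention contribute zero for that participant.
    return {term: total / n_lists for term, total in totals.items()}


def pile_sort_similarity(sorts):
    """Proportion of participants who placed each pair of terms in the same pile."""
    counts = defaultdict(int)
    for piles in sorts:
        for pile in piles:
            for pair in combinations(sorted(pile), 2):
                counts[pair] += 1
    n_participants = len(sorts)
    return {pair: count / n_participants for pair, count in counts.items()}


# Hypothetical pain descriptors from three free lists and two pile sorts.
free_lists = [["burning", "aching", "sharp"], ["aching", "burning"], ["aching"]]
sorts = [
    [{"burning", "sharp"}, {"aching"}],
    [{"burning", "sharp", "aching"}],
]
print(smith_salience(free_lists))
print(pile_sort_similarity(sorts))
```

Terms with high salience are candidates for scale anchors and descriptors, while pairs that are consistently sorted together suggest descriptors that the target population treats as the same kind of pain.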

The Scientist's Toolkit: Research Reagent Solutions for Cross-Cultural Adaptation

| Item / Solution | Function in Protocol |

|---|---|

| Dual-Panel Expert Committee Software (e.g., DelphiManager, REDCap) | Facilitates anonymous rating and consensus-building during the expert committee review stage for item adequacy. |

| Cognitive Interviewing Recording & Analysis Suite (e.g., Dedoose, NVivo) | Manages transcription, coding, and thematic analysis of qualitative data from cognitive debriefing interviews. |

| Statistical Packages for Measurement Invariance (e.g., lavaan in R, Mplus) | Performs the multi-group confirmatory factor analysis (MG-CFA) required to test configural, metric, and scalar invariance. |

| DIF Analysis Modules (e.g., lordif package in R, WINSTEPS for Rasch) | Identifies specific questionnaire items that exhibit differential functioning between cultural or linguistic groups. |

| Cultural Concordance Translation Service | Provides professional translators specialized in medical and psychosocial concepts, who are native to the target culture. |

| Validated "Gold Standard" Clinical Interview (e.g., SCID-5, MINI) | Serves as the criterion measure for assessing the adapted scale's criterion and construct validity in the new setting. |

Solving Common Pitfalls: Troubleshooting Bias and Optimization Strategies

Within the broader thesis of achieving conceptual equivalence in cross-cultural research, reducing item bias is paramount. Item bias, or Differential Item Functioning (DIF), occurs when respondents from different cultural groups who have the same latent trait level have different probabilities of giving a particular response to an item. This compromises score comparability. This document provides application notes and protocols for addressing three key sources of item bias: reference periods, idioms, and taboo subjects.
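
The definition of DIF can be stated formally. The notation here is an assumption introduced for illustration and does not come from a specific source instrument: $X_j$ is the response to item $j$, $\theta$ is the latent trait level, and $G$ is cultural group membership. Item $j$ is free of DIF when, for every response category $x$ and every trait level $\theta$,

$$
P(X_j = x \mid \theta, G = g_1) = P(X_j = x \mid \theta, G = g_2)
$$

Any systematic departure from this equality means that group membership affects the response over and above the trait being measured.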

Table 1: Prevalence and Impact of Identified Bias Sources in Cross-Cultural Psychometrics

| Bias Source | Typical Prevalence in Cross-Cultural Studies | Common Impact on Measurement (Effect Size d) | Primary Assessment Method |

|---|---|---|---|

| Reference Period Mismatch | High (~60-80% of multi-national trials)* | Small to Moderate (0.2 - 0.5) | Cognitive Debriefing, Response Time Analysis |

| Idiomatic/Figurative Language | Moderate (~30-50% of translations)* | Moderate to Large (0.4 - 0.8) | Expert Review, Back-Translation, Panel Evaluation |

| Taboo or Stigmatized Subjects | Culture-Specific (Varies Widely) | Large, often leading to non-response or social desirability bias (0.6+) | Focus Groups, Ethical Review, Response Pattern Analysis |

*Prevalence estimates based on synthesis of recent methodological reviews from Quality of Life Research and Psychological Assessment (2020-2023).

Experimental Protocols for Bias Assessment & Mitigation

Protocol 3.1: Cognitive Debriefing for Reference Period Calibration

Objective: To evaluate and standardize the interpretation of time-based references (e.g., "in the past 4 weeks") across cultures.

Materials: Draft questionnaire, audio recorder, standardized interview guide.

Procedure:

- Participant Recruitment: Recruit 8-10 representative participants per cultural group (not from main study sample).

- Task-Based Interview: Administer the item. Use "think-aloud" protocol: "Tell me what the phrase 'in the past 4 weeks' means to you as you answer."

- Probing: Follow with structured probes: "What is the first event you think of to mark the start of this period?" "Could you give me an example of something that happened outside this period?"

- Analysis: Code responses for consistency of start/end dates, memorable anchors used (e.g., payday, holiday), and calculation strategy (estimation vs. recall).

- Revision: Iterate item wording (e.g., "since [specific local holiday]" or "during the last 30 days...") and repeat the debriefing until participants in every group interpret the period consistently.
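
The analysis step can be sketched in code. This is a hedged illustration only: it assumes each debriefing response has already been coded as the number of days the participant believed the reference period covered, and the 28-day target, the 3-day tolerance, the site labels, and the function name `reference_period_summary` are all hypothetical choices.

```python
from statistics import median


def reference_period_summary(interpreted_days, target_days=28, tolerance=3):
    """Summarize, per group, how closely participants' interpreted
    reference period matches the intended one."""
    summary = {}
    for group, days in interpreted_days.items():
        within = sum(1 for d in days if abs(d - target_days) <= tolerance)
        summary[group] = {
            "median_days": median(days),
            "share_within_tolerance": within / len(days),
        }
    return summary


# Hypothetical coded responses: days each participant took "past 4 weeks" to mean
coded = {
    "site_A": [28, 30, 28, 27, 31, 28, 29, 28],
    "site_B": [30, 45, 21, 30, 60, 30, 14, 30],
}
result = reference_period_summary(coded)
```

In this made-up example, every site_A participant falls within the tolerance, while only half of the site_B participants do, which would suggest revising the wording for site_B before another debriefing round.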

Protocol 3.2: Systematic Idiom Detection and Equivalence Mapping

Objective: To identify culture-specific idioms in source items and establish functionally equivalent expressions.

Materials: Source instrument, bilingual linguists, concept definition glossary.

Procedure:

- De-Idiomatization: A bilingual expert decomposes the source idiom into its core conceptual components (e.g., "feeling blue" → core concepts: sadness, low mood, temporality).

- Forward Translation with Instruction: Two independent translators produce target language versions, instructed to avoid literal translation and prioritize conceptual meaning.

- Expert Panel Review: A panel (linguist, clinician, layperson from target culture) reviews translations against the core concept definition. They generate alternative phrasings.

- Back-Translation & Comparison: A naive translator back-translates alternatives. The team compares back-translations to the de-idiomatized concept, not the original idiom.

- Selection: Choose the target language phrasing that optimally matches the core concept and has natural language frequency in the target culture.

Protocol 3.3: Taboo Topic Sensitivity Assessment via Vignettes

Objective: To gauge the sensitivity of items on potentially taboo topics (e.g., sexual function, substance use, mental health) and identify culturally acceptable framing.

Materials: Vignette descriptions, anonymous response system (e.g., sealed envelopes or tablet), distress protocol.

Procedure:

- Vignette Development: Create short, third-person stories featuring a character facing the sensitive issue (e.g., "M has been feeling very low and hopeless for several weeks").

- Focus Group Discussion: In a safe, moderated setting, present vignettes. Discuss: "How would someone like M talk about this?" "Who could they talk to?" "What words would they use or avoid?"

- Anonymous Endorsement: Present potential questionnaire items derived from the discussion. Participants anonymously rate each for: (a) Likelihood of truthful response (1-5 scale), and (b) Perceived offensiveness (1-5 scale).

- Analysis & Modification: Items with a high mean offensiveness rating (>4) and a low mean truthfulness rating (<2) are flagged. Moderators explore the alternative wording suggested during the focus group discussion.

- Ethical Safeguard: Provide resources for participants distressed by content. Protocol must have local IRB approval.
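
The flagging rule in the analysis step can be sketched as below. This is a minimal sketch that assumes the cutoffs are applied to each item's mean rating across participants. The item labels, the ratings, and the function name `flag_sensitive_items` are hypothetical.

```python
from statistics import mean


def flag_sensitive_items(ratings, offense_cutoff=4.0, truth_cutoff=2.0):
    """Return items whose mean offensiveness exceeds offense_cutoff
    and whose mean truthfulness falls below truth_cutoff."""
    flagged = []
    for item, r in ratings.items():
        too_offensive = mean(r["offensiveness"]) > offense_cutoff
        not_truthful = mean(r["truthfulness"]) < truth_cutoff
        if too_offensive and not_truthful:
            flagged.append(item)
    return flagged


# Hypothetical anonymous ratings on the 1-5 scales described above
ratings = {
    "item_1": {"truthfulness": [1, 2, 1, 2, 1], "offensiveness": [5, 4, 5, 5, 4]},
    "item_2": {"truthfulness": [4, 5, 4, 4, 5], "offensiveness": [1, 2, 1, 1, 2]},
    "item_3": {"truthfulness": [2, 3, 2, 2, 3], "offensiveness": [5, 4, 5, 4, 5]},
}
flagged = flag_sensitive_items(ratings)
```

Here only item_1 meets both conditions. item_3 is rated as offensive but not as unlikely to be answered truthfully, so a team that wants to be more conservative might flag on either condition instead of both.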

Visualizing the Bias Mitigation Workflow

Workflow for Cross-Cultural Item Bias Reduction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Bias Reduction Protocols

| Item/Category | Function/Benefit | Example/Supplier Consideration |

|---|---|---|

| Digital Audio Recorder | Captures verbatim responses during cognitive interviews for precise linguistic analysis. | Use encrypted, IRB-compliant devices (e.g., Olympus or smartphone with secure app). |

| Translation Management Platform | Facilitates blind forward/back-translation, version control, and panel review in a centralized system. | Platforms like TransPerfect's GlobalLink or Lingotek ensure workflow integrity. |

| Anonymous Response System | Enables collection of truthful feedback on sensitive topics by reducing social desirability pressure. | Tablets with direct data entry or sealed ballot boxes for paper-based vignette ratings. |

| Concept Definition Glossary | The anchor document defining core constructs abstracted from source items, ensuring equivalence beyond linguistics. | Must be developed a priori by the source instrument developer and core research team. |

| Qualitative Data Analysis Software | Aids systematic coding of interview/focus group data to identify thematic patterns in bias. | NVivo, MAXQDA, or Dedoose for managing and analyzing textual data across languages. |

| DIF Analysis Statistical Package | Quantitatively flags items functioning differently across groups after adaptation. | R packages (lordif, difR), Stata (difmh, diflogistic), or Mplus for confirmatory analysis. |

Achieving conceptual equivalence is the cornerstone of valid cross-cultural research in psychology, public health, and drug development. A primary threat to this equivalence is response style bias—the systematic tendency to respond to item content based on stylistic factors rather than the target construct. Three pervasive biases are:

- Acquiescence (ARS): The tendency to agree or endorse items regardless of content.

- Extreme Responding (ERS): Consistently selecting the highest or lowest scale endpoints.

- Social Desirability (SDB): Tailoring responses to present oneself favorably according to perceived social norms.

These biases distort data comparability, conflate measurement error with true cultural differences, and jeopardize the validity of multinational clinical trial outcomes. This document provides application notes and protocols for identifying and mitigating these biases within the framework of a thesis on conceptual equivalence.

Table 1: Documented Prevalence of Response Styles Across Select Cultural Regions

| Response Style | Cultural Region | Estimated Prevalence (Typical Scale Impact) | Key Supporting Study (Year) |

|---|---|---|---|

| Acquiescence (ARS) | Latin America, East Asia, Mediterranean | Moderate to High (+0.3 to +0.5 SD on mean scores) | Smith (2004) |

| Acquiescence (ARS) | Anglo-Germanic, Nordic | Low | |

| Extreme Responding (ERS) | Middle East, East Asia (for intensity) | High (Increased variance, skewed distributions) | Harzing (2006) |

| Extreme Responding (ERS) | Western Europe | Low to Moderate | |

| Social Desirability (SDB) | Collectivist Cultures (e.g., East Asia) | High on measures of conformity, humility | Johnson et al. (2005) |

| Social Desirability (SDB) | Individualist Cultures (e.g., USA) | High on measures of self-enhancement, autonomy | |

Table 2: Statistical Impact of Uncorrected Response Bias on Scale Properties

| Bias Type | Effect on Reliability (α) | Effect on Validity (Correlation) | Effect on Factor Structure |

|---|---|---|---|

| Acquiescence | Artificially inflates internal consistency | Attenuates or inflates correlations | Creates a spurious general factor |

| Extreme Responding | Can increase or decrease α | Obscures true relationships | Distorts factor loadings & complicates simple structure |

| Social Desirability | May inflate α if SDB is uniform | Confounds substantive correlations | May produce a method factor |

Experimental Protocols for Detection and Mitigation

Protocol 2.1: Within-Study Detection Using Balanced Scale Design

Aim: To control for Acquiescence and Extreme Responding through instrument design.

Materials: Survey items measuring target construct(s).

Procedure:

- Item Wording: For every positively worded item, create a semantically reversed (negatively worded) counterpart. Ensure clarity to avoid double-negatives.

- Response Anchoring: Use fully labeled scales (e.g., "Strongly Disagree" to "Strongly Agree") rather than only end-point labels to reduce ERS.

- Randomization: Present all items in a fully randomized order to decouple method effects from content blocks.

- Scoring Post-Collection:

- Calculate an Acquiescence Index from the raw responses, before any reverse-coding (e.g., mean response to all items).

- Calculate an Extreme Response Index (percentage of responses in the highest and lowest scale categories).

- Reverse-code negative items, then compute scale scores.
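
The scoring steps can be sketched for a single respondent. This is an illustrative sketch under assumptions: a 1-5 response scale, hypothetical item names, and an Extreme Response Index expressed as a proportion rather than a percentage. The function names are not taken from any particular package.

```python
def reverse_code(value, scale_min=1, scale_max=5):
    """Mirror a response around the scale midpoint (5 -> 1, 4 -> 2, ...)."""
    return scale_min + scale_max - value


def acquiescence_index(raw_responses):
    """Mean raw response across all items, computed before reverse-coding."""
    return sum(raw_responses.values()) / len(raw_responses)


def extreme_response_index(raw_responses, scale_min=1, scale_max=5):
    """Proportion of responses in the lowest or highest category."""
    extremes = sum(1 for v in raw_responses.values() if v in (scale_min, scale_max))
    return extremes / len(raw_responses)


def scale_score(raw_responses, negative_items, scale_min=1, scale_max=5):
    """Mean item score after reverse-coding the negatively worded items."""
    scored = [
        reverse_code(v, scale_min, scale_max) if item in negative_items else v
        for item, v in raw_responses.items()
    ]
    return sum(scored) / len(scored)


# Hypothetical respondent who agrees with both positive and negative items
respondent = {"q1": 5, "q2": 4, "q3_neg": 4, "q4_neg": 5}
negative_items = {"q3_neg", "q4_neg"}

ars = acquiescence_index(respondent)
ers = extreme_response_index(respondent)
score = scale_score(respondent, negative_items)
```

On a balanced scale, a respondent who agrees with everything ends up near the scale midpoint after reverse-coding, while the high raw mean exposes the acquiescent pattern.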

Protocol 2.2: Post-Hoc Statistical Control Using CFA Models

Aim: To partition variance due to response styles from substantive trait variance.

Materials: Raw item-level data from a balanced scale.

Procedure:

- Model Specification: Specify a Confirmatory Factor Analysis (CFA) model with method factors.

- Substantive Factors: Define latent factors based on the theoretical construct(s).

- Method Factors:

- For ARS: Load all items (positive and negative, pre-reversal) onto a single method factor.

- For ERS: Model residuals of items with extreme thresholds.

- For SDB: Include a short, validated social desirability scale (e.g., BIDR-16) as a predictor of item scores or as a correlated factor.

- Estimation: Use an appropriate estimator (e.g., WLSMV for ordinal data, or MLR when responses are treated as continuous). Constrain substantive and method factors to be orthogonal.

- Interpretation: Examine standardized loadings. Substantive factors should have strong loadings on target items and near-zero loadings on non-target items. Method factor loadings quantify bias.
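
One way to write the model with an acquiescence method factor is shown below. This is a sketch under stated assumptions, not the only valid specification: a single substantive factor, raw (pre-reversal) item scores, and symbols chosen here for illustration.

$$
x_{ij} = \tau_j + \lambda_j \eta_i + \gamma_j a_i + \varepsilon_{ij}, \qquad \operatorname{Cov}(\eta_i, a_i) = 0
$$

Here $x_{ij}$ is the response of respondent $i$ to item $j$, $\tau_j$ is the item intercept, $\eta_i$ is the substantive trait, $a_i$ is the acquiescence factor, and $\varepsilon_{ij}$ is the residual. The substantive loadings $\lambda_j$ are expected to be positive for positively worded items and negative for negatively worded items, whereas the method loadings $\gamma_j$ carry the same sign for every item. A common simplification fixes all $\gamma_j$ to 1 so that the method factor behaves like a respondent-specific intercept.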

Protocol 2.3: Evaluation of Cross-Cultural Invariance with Bias Controls

Aim: To test for conceptual equivalence across groups after accounting for response bias.

Materials: Multi-group dataset with a minimum of 200 respondents per cultural group.

Procedure:

- Configure Baseline Model: Use the CFA model with method factors from Protocol 2.2.

- Sequential Invariance Testing:

- Step 1 (Configural): Test the same factor structure (substantive + method) across groups. This establishes basic equivalence.

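- Step 2 (Metric): Constrain substantive factor loadings to be equal across groups. Metric invariance is supported if, relative to the configural model, CFI decreases by less than 0.010 and RMSEA increases by less than 0.015.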

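- Step 3 (Scalar): Further constrain item intercepts to be equal. This is the critical test for bias; failure indicates that some items have group-specific intercepts (residual item bias), so observed mean differences cannot be attributed to the latent construct alone.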

- Analysis: If scalar invariance fails, re-specify model by freeing intercepts of items most susceptible to cultural bias (identified via modification indices) and re-test.
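
The sequential decision rule can be sketched as follows. This is a hedged illustration: it assumes the three nested models have already been fitted in dedicated SEM software and that the configural model itself fits acceptably. The fit values and function names are hypothetical, and the cutoffs are the ΔCFI and ΔRMSEA thresholds stated in Step 2.

```python
def invariance_step_holds(less_constrained, more_constrained,
                          max_cfi_drop=0.010, max_rmsea_rise=0.015):
    """True if adding constraints worsens fit by less than both cutoffs."""
    cfi_drop = less_constrained["cfi"] - more_constrained["cfi"]
    rmsea_rise = more_constrained["rmsea"] - less_constrained["rmsea"]
    return cfi_drop < max_cfi_drop and rmsea_rise < max_rmsea_rise


def highest_invariance_level(fits):
    """Walk configural -> metric -> scalar and stop at the first failure."""
    level = "configural"
    for previous, current in (("configural", "metric"), ("metric", "scalar")):
        if not invariance_step_holds(fits[previous], fits[current]):
            break
        level = current
    return level


# Hypothetical fit indices exported from the SEM software
fits = {
    "configural": {"cfi": 0.962, "rmsea": 0.045},
    "metric": {"cfi": 0.958, "rmsea": 0.047},
    "scalar": {"cfi": 0.941, "rmsea": 0.058},
}
level = highest_invariance_level(fits)
```

With these made-up values the metric step passes and the scalar step fails, which is the situation where freeing the intercepts of individual items and re-testing would be considered.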

Diagrams

Diagram 1: Response Bias Mitigation Workflow

Diagram 2: CFA Model with Response Style Factors

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Response Bias Research

| Item / Solution | Primary Function in Bias Mitigation | Example / Specification |

|---|---|---|

| Balanced Psychometric Scale | Controls for Acquiescence Bias (ARS) by including both positively and negatively worded items for each latent construct. | Scale with 1:1 ratio of positive to negative items, validated for clarity. |

| Fully Anchored Response Scale | Mitigates Extreme Responding (ERS) by providing clear behavioral or frequency anchors for all points, not just endpoints. | 5-point Likert scale where 1="Never", 2="Rarely", 3="Sometimes", 4="Often", 5="Always". |