Bioacoustic Frontiers: How Acoustic Monitoring of Birds and Bats is Revolutionizing Environmental Health and Biomedical Research

Abstract

This article provides a comprehensive analysis of acoustic monitoring as a non-invasive tool for studying avian and chiropteran populations, with a focus on applications for researchers and drug development professionals. It explores the foundational principles of bioacoustics, details current methodological frameworks and technological applications, addresses common challenges and optimization strategies, and validates the approach through comparative analysis with traditional survey methods. The synthesis highlights how acoustic biodiversity data serves as a critical ecological indicator, informing environmental impact assessments for biomedical facilities and offering novel models for understanding vocal communication and auditory systems.

The Sound of Ecosystems: Foundational Principles of Avian and Chiropteran Bioacoustics

Bioacoustic monitoring is the systematic use of audio recording technology to collect, analyze, and interpret sounds produced by wildlife, particularly for ecological research and conservation. Within the scope of a thesis on acoustic monitoring for birds and bats, this guide details the technical pipeline transforming raw environmental recordings into quantifiable ecological data. This process enables non-invasive, large-scale, and continuous biodiversity assessment, critical for tracking population trends, behavioral studies, and evaluating ecosystem health.

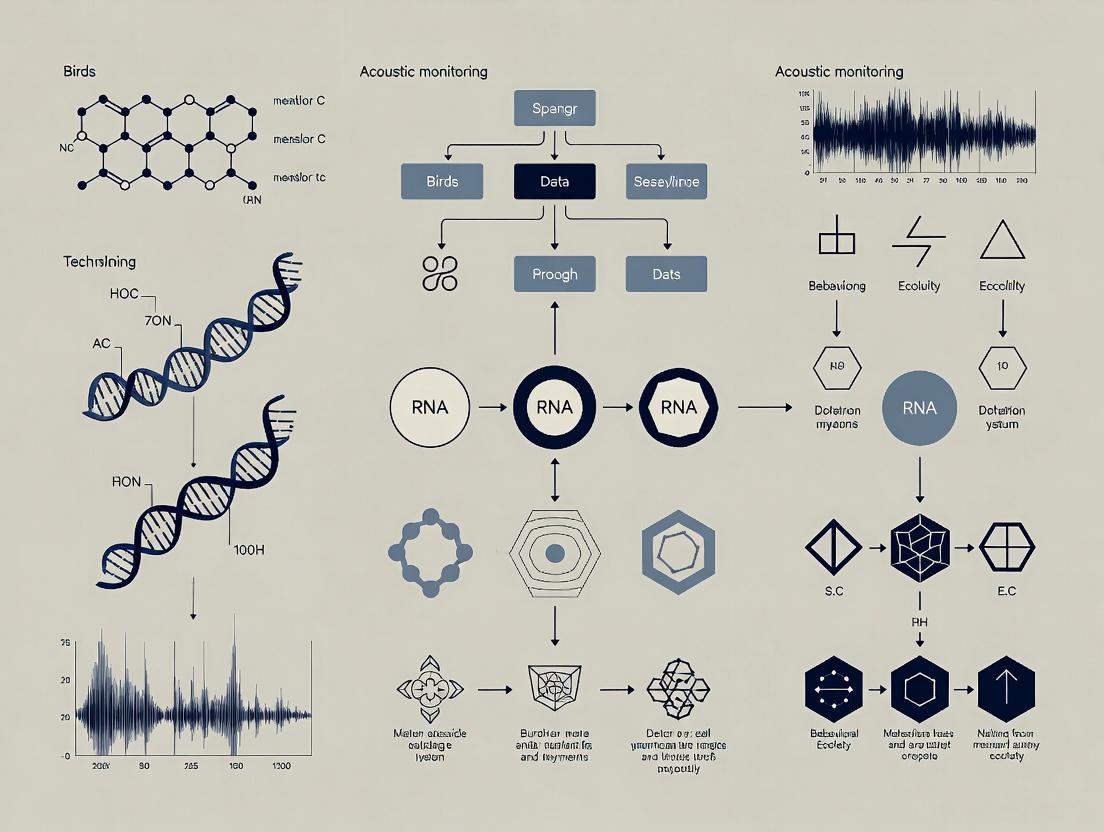

The Bioacoustic Pipeline: From Signal to Insight

The conversion of field recordings into ecological data follows a defined computational and analytical workflow.

Diagram Title: Bioacoustic Data Processing Pipeline

Core Experimental Protocols & Methodologies

Protocol for Passive Acoustic Monitoring (PAM) Deployment

Objective: To systematically collect continuous audio data in a study area for birds and bats.

- Site Selection: Use stratified random sampling or place sensors at predetermined GPS points (e.g., grid vertices, habitat edges).

- Hardware Configuration:

- Mount ultrasonic (bat) or full-spectrum (bird) recorder in weatherproof housing.

- Secure to a tree or post 3-4 m high (birds) or facing a clear flyway (bats).

- Set microphone gain to avoid clipping from background noise.

- Program schedule: Bats – sunset to sunrise; Birds – dawn + diurnal periods.

- Use 384 kHz sample rate (bats), 44.1-48 kHz (birds). Record in WAV format.

- Metadata Logging: Document GPS coordinates, habitat type, deployment date/time, and sensor specs.

- Data Retrieval: Swap SD cards/batteries at regular intervals (e.g., weekly).
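
The retrieval interval above depends on how quickly uncompressed WAV audio fills a card. The following is a minimal sketch of that arithmetic, assuming 16-bit mono recording, a 10-hour night, and a 128 GB card; none of those three values comes from the protocol, and the WAV header is ignored.

```python
def wav_bytes(sample_rate_hz: int, bit_depth: int, seconds: float, channels: int = 1) -> int:
    """Approximate size of uncompressed PCM audio in bytes (header ignored)."""
    return int(sample_rate_hz * (bit_depth // 8) * channels * seconds)


def nights_per_card(card_gb: float, sample_rate_hz: int, bit_depth: int,
                    hours_per_night: float) -> float:
    """How many nights of continuous recording fit on a card of card_gb gigabytes."""
    per_night = wav_bytes(sample_rate_hz, bit_depth, hours_per_night * 3600)
    return card_gb * 1e9 / per_night


# One hour of continuous recording at the protocol's sample rates (16-bit mono assumed).
bat_hour = wav_bytes(384_000, 16, 3600)   # 2_764_800_000 bytes, about 2.76 GB
bird_hour = wav_bytes(48_000, 16, 3600)   # 345_600_000 bytes, about 0.35 GB

# Hypothetical deployment: 128 GB card, 10 h of bat recording per night.
bat_nights = nights_per_card(128, 384_000, 16, 10)
print(f"bat: {bat_hour / 1e9:.2f} GB/h, about {bat_nights:.1f} nights per 128 GB card")
```

Under these assumptions a continuously recording bat unit fills the card in under a week, which is one reason triggered recording or a shorter swap interval may be needed at ultrasonic sample rates.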

Protocol for Automated Species Identification Using Convolutional Neural Networks (CNNs)

Objective: To automatically identify bird/bat species from segmented audio clips.

- Input Preparation: Convert detected call events into spectrograms (e.g., Mel-spectrograms).

- Model Architecture: Use a pre-trained CNN (e.g., ResNet, VGG) adapted for spectrogram input. The final dense layer has one output node per target species.

- Training: Train model on labeled spectrograms from reference libraries (e.g., Xeno-Canto, BatDetect). Use 70/15/15 split for train/validation/test sets. Augment data with time stretching, pitch shifting, noise injection.

- Validation: Evaluate model performance on held-out test set using precision, recall, and F1-score. Confusion matrices identify common misclassifications.

- Inference: Apply trained model to new, unlabeled spectrograms to generate species prediction with associated probability.
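
Two steps of the protocol above reduce to simple arithmetic: the 70/15/15 split and the precision, recall, and F1 metrics. The sketch below illustrates both with hypothetical clip IDs and made-up counts. It splits at the clip level; grouping clips by recording or site before splitting is an additional precaution that is not shown here.

```python
import random


def split_70_15_15(items, seed=0):
    """Shuffle items reproducibly and cut them into train/validation/test lists."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = len(items) * 70 // 100
    n_val = len(items) * 15 // 100
    return items[:n_train], items[n_train:n_train + n_val], items[n_train + n_val:]


def precision_recall_f1(tp, fp, fn):
    """Per-species metrics from true-positive, false-positive, false-negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical library of 1,000 labeled clip IDs.
train, val, test = split_70_15_15(range(1000))

# Made-up test-set counts for one species.
p, r, f1 = precision_recall_f1(tp=94, fp=6, fn=12)
print(len(train), len(val), len(test), round(p, 2), round(r, 2), round(f1, 2))
```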

Quantitative Data: Key Metrics in Bioacoustic Studies

The following metrics are standard outputs from bioacoustic analysis pipelines.

Table 1: Core Acoustic Indices for Ecological Assessment

| Index | Formula/Description | Application for Birds/Bats | Ecological Interpretation |

|---|---|---|---|

| Acoustic Complexity Index (ACI) | ACI = Σᵢ ( \|ΔIᵢ\| / (Iᵢ + ε) ), where ΔIᵢ is the intensity change between adjacent time frames. | Bird community monitoring; measures intensity variance. | Higher ACI often correlates with higher avian biodiversity. |

| Bioacoustic Index | BI = Σᵢ ( SPL(fᵢ) − Background Noise(fᵢ) ), summed over frequency bins. | General biodiversity assessment. | Attenuates geophonic noise; estimates species richness. |

| Activity Index | Proportion of audio frames containing signals above amplitude threshold. | Bat foraging activity; bird dawn chorus intensity. | Proxy for overall acoustic activity of target guild. |

| Call Rate | (Number of detected calls) / (Unit recording time). | Species-specific bat social calling or bird song rate. | Behavioral indicator, linked to reproduction, disturbance. |
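
As an illustration of how three of the indices in Table 1 can be computed, here is a minimal sketch on a toy spectrogram. It follows the ACI expression exactly as written in the table; published formulations differ in how they normalize, so packages such as soundecology or scikit-maad are the safer choice for real analyses. The function names and the tiny input arrays are hypothetical.

```python
def aci_table_form(spec, eps=1e-12):
    """Sum of |I[t+1][f] - I[t][f]| / (I[t][f] + eps) over time frames t and bins f.

    spec is a list of time frames, each a list of per-bin intensities.
    """
    total = 0.0
    for t in range(len(spec) - 1):
        for f in range(len(spec[t])):
            total += abs(spec[t + 1][f] - spec[t][f]) / (spec[t][f] + eps)
    return total


def activity_index(frame_amplitudes, threshold):
    """Proportion of frames whose amplitude exceeds the threshold."""
    active = sum(1 for a in frame_amplitudes if a > threshold)
    return active / len(frame_amplitudes)


def call_rate_per_hour(n_calls, recording_seconds):
    """Detected calls per hour of recording."""
    return n_calls / (recording_seconds / 3600.0)


# Toy spectrogram: 3 time frames x 2 frequency bins; bin 0 is constant, bin 1 fluctuates.
toy_spec = [[1.0, 2.0], [1.0, 4.0], [1.0, 2.0]]
aci = aci_table_form(toy_spec)                         # 2/2 + 2/4 = 1.5
act = activity_index([0.1, 0.5, 0.9, 0.2], threshold=0.4)
rate = call_rate_per_hour(120, 7200)
print(aci, act, rate)
```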

Table 2: Performance Metrics for Automated Identification Models (Hypothetical Data)

| Model Type | Target Taxa | Precision | Recall | F1-Score | Reference Dataset Size |

|---|---|---|---|---|---|

| CNN (ResNet50) | Nocturnal Birds (10 species) | 0.94 | 0.89 | 0.91 | 5,000 labeled clips |

| Random Forest | Anuran Calls | 0.88 | 0.85 | 0.86 | 8,200 labeled clips |

| CNN (Custom) | Bats (25 species) | 0.97 | 0.92 | 0.94 | 15,000 labeled clips |

| Support Vector Machine | Orthopterans | 0.82 | 0.79 | 0.80 | 3,500 labeled clips |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for Bioacoustic Monitoring

| Item Category | Specific Product/Example | Function in Research |

|---|---|---|

| Acoustic Recorders | Wildlife Acoustics Song Meter SM4; Audiomoth (Open Acoustic Devices) | Programmable, weatherproof field recorders for autonomous long-term deployment. |

| Reference Libraries | Xeno-canto (birds); Macaulay Library; BatDetect UK database | Curated, labeled audio datasets essential for training and validating machine learning models. |

| Analysis Software | Kaleidoscope Pro (Wildlife Acoustics); Raven Pro (Cornell Lab); tensorflow/keras (Python). | Software for visualizing, annotating, and automatically detecting/classifying acoustic signals. |

| Calibration Tools | Pistonphone (e.g., GRAS 42AA) | Provides precise reference sound pressure level to calibrate microphones, ensuring data comparability. |

| Bioacoustic Indices | soundecology R package; scikit-maad Python package. | Computational packages for calculating ACI, BI, NDSI, etc., from audio files. |

Advanced Analysis: From Occurrence to Ecology

Identified species data are synthesized into ecological metrics.

Diagram Title: Synthesizing Acoustic Data into Ecological Insights

Bioacoustic monitoring represents a transformative methodological framework, converting passive audio recordings into robust, quantitative ecological data. For bird and bat research, it enables the scaling of observation across temporal and spatial dimensions impractical for human observers. The integration of standardized protocols, machine learning, and ecological modeling, as detailed herein, establishes bioacoustics as a cornerstone of modern biodiversity science and evidence-based conservation.

Why Monitor Birds and Bats? Keystone Species as Environmental Sentinels

This whitepaper positions acoustic monitoring of birds and bats as a critical, non-invasive methodology within a broader research thesis on ecosystem health assessment. As keystone species, their population dynamics, diversity, and behavior serve as high-fidelity proxies for environmental integrity. For researchers and drug development professionals, changes in these sentinel communities can signal ecological disruptions that may affect natural product discovery, zoonotic disease reservoirs, and the stability of biological resources used in preclinical models.

Quantitative Impact: Sentinel Value and Ecosystem Services

The following tables synthesize current data on the ecological and economic value of birds and bats, underscoring the rationale for their monitoring.

Table 1: Quantitative Ecological Impact of Birds and Bats

| Metric | Birds (Representative Data) | Bats (Representative Data) | Monitoring Implication |

|---|---|---|---|

| Pest Control | Save ~$1.5B annually in US forestry by preying on insects (USDA). | Save an estimated $3.7B or more annually in US agriculture via insect suppression (Boyles et al., 2011). | Acoustic activity correlates with pest outbreak risk. |

| Pollination | Essential for ~5% of global plant crops (e.g., tropics). | Critical for >500 plant species (e.g., agave, durian). | Phenology shifts detectable via call presence/absence. |

| Seed Dispersal | Key for forest regeneration; move thousands of seeds/km². | Tropical bats regenerate clear-cut forests; disperse >300 m daily. | Habitat connectivity assessed via species distribution maps from acoustic data. |

| Bio-indication | Community composition shifts indicate habitat fragmentation, pollution. | Insectivorous bat health directly reflects pesticide bioaccumulation. | Acoustic diversity indices serve as a proxy for overall biodiversity. |

Table 2: Recent Population Trends & Threats (Key Examples)

| Taxon/Species Group | Estimated Population Trend | Primary Threat(s) | Data Source (Recent) |

|---|---|---|---|

| North American Birds | Net loss of ~3B breeding birds since 1970 (~29% decline). | Habitat loss, cats, windows, pesticides. | Rosenberg et al., Science (2019). |

| Insectivorous Bats (NA) | White-nose Syndrome has killed millions; some species declined >90%. | Fungal disease, wind energy mortality. | USFWS (2023 Assessments). |

| Aerial Insectivores (e.g., swallows, nighthawks) | Steep, consistent declines across North America. | Agricultural intensification, insect prey decline. | Smith et al., BioScience (2024 review). |

| Pollinating Bats (e.g., Leptonycteris) | Variable; some species recovering due to protection. | Habitat loss, climate change disrupting nectar corridors. | IUCN Red List (2023 updates). |

Core Methodologies: Acoustic Monitoring Protocols

A robust thesis on acoustic monitoring must detail standardized protocols for data collection, processing, and analysis.

Protocol 3.1: Passive Acoustic Survey Design for Birds and Bats

- Objective: To systematically capture species occurrence, activity patterns, and community composition.

- Equipment: Programmable acoustic recorder (e.g., Swift, Audiomoth), weatherproof housing, external battery, SD cards, calibrated GPS.

- Deployment:

- Site Selection: Stratify by habitat type. Place recorders ≥200m apart to minimize double-counting.

- Mounting: Secure 3-5m high on tree trunk or pole, away from reflective surfaces.

- Programming: Record at a 256-384 kHz sample rate for bats (encompassing ultrasonic frequencies); 44.1-48 kHz for birds. Schedule recordings to cover dawn, dusk, and night (full-night for bats). Deploy for 3-7 consecutive nights/site.

- Metadata: Document coordinates, habitat, date/time, weather, and equipment settings.

- Data Management: Regularly retrieve data, back up raw files (.wav), and organize with strict naming conventions.
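
The ≥200 m spacing rule in the deployment step can be checked before fieldwork from planned coordinates. The sketch below uses the haversine great-circle distance on a spherical Earth; the site names and coordinates are invented for illustration.

```python
import math
from itertools import combinations

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius (spherical approximation)


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def too_close(sites, min_spacing_m=200.0):
    """Return pairs of site IDs that sit closer together than min_spacing_m."""
    return [(a, b)
            for (a, pa), (b, pb) in combinations(sites.items(), 2)
            if haversine_m(*pa, *pb) < min_spacing_m]


# Hypothetical recorder positions (latitude, longitude).
planned = {
    "A": (45.0000, -75.0000),
    "B": (45.0010, -75.0000),   # roughly 111 m north of A
    "C": (45.0030, -75.0000),   # roughly 222 m north of B
}
violations = too_close(planned)
print(violations)
```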

Protocol 3.2: Automated Species Identification & Analysis Workflow

- Objective: To process large acoustic datasets for ecological inference.

- Software Tools: Kaleidoscope Pro, SonoBat, or open-source packages (e.g., tuneR and monitoR in R; BatDetect2 in Python).

- Steps:

- Pre-processing: Filter noise, normalize amplitude. For bats, convert ultrasonic calls to time-expanded, audible frequencies.

- Detection: Apply noise-adaptive energy detectors or convolutional neural networks (CNNs) to isolate vocalizations from noise.

- Classification: Use region-specific, validated classifiers or trained machine learning models to assign species labels. Critical Step: Manually verify a significant subset (e.g., 10-20%) to quantify and correct false positives/negatives.

- Analysis: Calculate site-specific and temporal metrics: Species Richness, Acoustic Activity (calls/night), Occupancy Probability, and Acoustic Diversity Indices (e.g., ADI, ACI).
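
The analysis and verification steps above can be sketched on a small detection table. The records, species, and review outcomes below are made up, and the record layout (site, night, species) is an assumption rather than the output format of any particular classifier. Note that nights with zero detections do not appear in such a table, so they would need to be added from the deployment log before averaging.

```python
from collections import defaultdict


def summarize(detections):
    """Per-site species richness and mean calls per night with at least one detection."""
    species = defaultdict(set)
    calls = defaultdict(lambda: defaultdict(int))
    for site, night, sp in detections:
        species[site].add(sp)
        calls[site][night] += 1
    return {
        site: {
            "richness": len(species[site]),
            "calls_per_night": sum(calls[site].values()) / len(calls[site]),
        }
        for site in species
    }


def verified_precision(checks):
    """Share of manually reviewed detections whose automated label was confirmed."""
    return sum(checks) / len(checks)


# Made-up classifier output: (site, night, species label).
detections = [
    ("S1", "2024-06-01", "Myotis lucifugus"),
    ("S1", "2024-06-01", "Eptesicus fuscus"),
    ("S1", "2024-06-02", "Myotis lucifugus"),
    ("S2", "2024-06-01", "Eptesicus fuscus"),
]
summary = summarize(detections)

# Made-up review of a verification subset: True means the label was confirmed.
precision_estimate = verified_precision([True, True, False, True, True])
print(summary, precision_estimate)
```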

Visualizing the Research Framework

Diagram 1: Thesis Framework for Acoustic Sentinel Research

Diagram 2: Acoustic Monitoring Experimental Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Acoustic Monitoring Research

| Item/Category | Example Product/Supplier | Function in Research |

|---|---|---|

| Programmable Acoustic Recorder | Wildlife Acoustics Song Meter Series; Open Acoustic Devices Audiomoth | Core device for passive, scheduled audio capture across sonic and ultrasonic ranges. |

| Ultrasonic Microphone | Knowles MEMS microphones (e.g., SPU0410LR5H-QB) | High-frequency capture essential for bat echolocation recording. |

| Weatherproof Housing | Custom enclosures with rain shields and anti-vamp mounts. | Protects sensitive electronics during extended field deployment in all conditions. |

| Reference Call Library | Cornell Lab of Ornithology's Macaulay Library; Bat Call Library (BCID) | Curated, validated audio files essential for training and testing automated classification algorithms. |

| Acoustic Analysis Software | Kaleidoscope Pro (Wildlife Acoustics); SonoBat; R package bioacoustics. | Software suite for visualizing, detecting, measuring, and classifying acoustic signals. |

| Machine Learning Model | Custom CNN built via TensorFlow/Keras; pre-trained BatDetect2 model. | Automates species identification from spectrograms, drastically reducing manual review time. |

| Calibration Device | Pistonphone (e.g., GRAS 42AA) | Provides a precise reference tone (e.g., 114 dB SPL at 250 Hz) to ensure recorder sensitivity is standardized and data are comparable across studies. |

Within the framework of a broader thesis on acoustic monitoring for ecological and behavioral research, this technical guide provides a comparative analysis of two critical bioacoustic signatures: avian song and bat echolocation. For researchers, scientists, and professionals in related fields, understanding the distinct anatomical, physical, and information-theoretic structures of these calls is paramount for accurate species identification, population monitoring, and the study of behavioral ecology through passive acoustic monitoring (PAM).

Physical & Information-Theoretic Structure

Avian Song

Avian songs are typically longer, complex vocalizations used for mate attraction and territorial defense. They are composed of syllables (discrete acoustic units) arranged into phrases and songs. Spectrographically, they often show harmonic structure, frequency modulation, and broad bandwidth.

Bat Echolocation

Bat echolocation calls are short, high-frequency signals used for navigation and prey capture. They are characterized by constant frequency (CF) components, frequency-modulated (FM) sweeps, or a combination (CF-FM). Their design is governed by the physics of acoustic ranging and Doppler shift compensation.
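
The ranging and Doppler relationships mentioned above can be written out directly. This is a minimal sketch assuming a speed of sound of 343 m/s (air at about 20 °C), a stationary target, and a bat flying straight toward it; the function names are illustrative.

```python
SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at about 20 degrees C


def target_range_m(echo_delay_s, c=SPEED_OF_SOUND):
    """Distance to a target from the two-way echo delay."""
    return c * echo_delay_s / 2.0


def echo_frequency_hz(call_hz, closing_speed_ms, c=SPEED_OF_SOUND):
    """Echo frequency heard by a bat closing on a stationary target (two-way Doppler)."""
    return call_hz * (c + closing_speed_ms) / (c - closing_speed_ms)


def compensated_call_hz(reference_hz, closing_speed_ms, c=SPEED_OF_SOUND):
    """Call frequency a bat would need to emit so the echo returns at reference_hz."""
    return reference_hz * (c - closing_speed_ms) / (c + closing_speed_ms)


range_m = target_range_m(0.010)              # 10 ms delay corresponds to 1.715 m
echo_hz = echo_frequency_hz(80_000, 5.0)     # 80 kHz call, 5 m/s closing speed
lowered = compensated_call_hz(80_000, 5.0)   # emitted frequency that keeps the echo at 80 kHz
print(round(range_m, 3), round(echo_hz), round(lowered))
```

At a 5 m/s closing speed the echo of an 80 kHz call returns a little over 2 kHz higher, which is the shift that Doppler-compensating (CF) bats offset by lowering their emitted frequency.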

Table 1: Quantitative Comparison of Call Signatures

| Parameter | Avian Song (Oscine Passerine) | Bat Echolocation (FM Insectivore) |

|---|---|---|

| Frequency Range | 1 kHz - 8 kHz (common) | 20 kHz - 120 kHz (ultrasonic) |

| Call Duration | 0.5 - 5 seconds | 0.5 - 20 milliseconds |

| Bandwidth | Narrow to Moderate (1-4 kHz) | Very Wide (up to 100 kHz sweep) |

| Primary Function | Sexual selection, territory | Navigation, prey detection |

| Source Level | 70 - 90 dB SPL at 1m | 110 - 140 dB SPL at 10 cm |

| Info. Content | Species, individual ID, motivation | Range, velocity, size, texture of target |

Neural & Physiological Pathways

The production and processing of these calls involve specialized neural pathways.

Avian Song System

The avian song control system is a dedicated neural network. The posterior vocal pathway (motor pathway) is essential for song production, while the anterior forebrain pathway (AFP) is crucial for song learning and plasticity.

Diagram 1: Neural pathways for avian song production and learning.

Bat Echolocation System

Bat call production involves laryngeal muscles controlled by motor neurons, while processing involves a comparative auditory pathway where the inferior colliculus and auditory cortex are specialized for analyzing echo delay and Doppler shift.

Diagram 2: Bat echolocation production and auditory processing loop.

Experimental Protocols for Acoustic Analysis

Protocol: Field Recording for Passive Acoustic Monitoring (PAM)

Objective: To collect high-fidelity acoustic data for species identification and density estimation.

- Site Selection: Choose sites representative of habitat, away from constant anthropogenic noise.

- Equipment Calibration: Use a sound level calibrator (e.g., 114 dB SPL at 1 kHz) to calibrate microphones pre- and post-deployment.

- Deployment: Secure ultrasonic (for bats) and/or audible (for birds) recorders (e.g., Wildlife Acoustics SM4, AudioMoth) on poles 3-5m high. Set microphones away from obstructing surfaces.

- Recording Schedule: Program for dawn/dusk choruses (birds) and first 4 hours after sunset (bats). Use a 500 kHz sampling rate for bats, 48 kHz for birds.

- Metadata Logging: Record GPS coordinates, date, time, habitat type, and weather conditions.

- Data Retrieval: Download data at regular intervals, ensuring battery and storage capacity.

Protocol: Controlled Playback Experiment for Avian Response

Objective: To assess territorial or mating responses to specific song variants.

- Stimulus Preparation: Synthesize or select high-quality recordings of conspecific songs (test) and heterospecific songs (control). Normalize amplitudes.

- Subject Selection: Identify and tag territorial males during pre-trial observations.

- Experimental Setup: Place a speaker 10m from the subject's song post. Observer is hidden 20m away with recording equipment.

- Playback Trial: After a 5-minute silent pre-trial, play 30 seconds of stimulus. Record subject's vocal and behavioral response (approach, song rate) for 10 minutes.

- Analysis: Quantify latency to response, closest approach distance, and song rate compared to baseline. Use non-parametric statistical tests (e.g., Wilcoxon signed-rank).

Protocol: Harmonic-to-Noise Ratio (HNR) Analysis for Call Quality

Objective: To quantitatively measure the purity or harshness of a vocalization, often correlated with fitness.

- Call Isolation: Using software (e.g., Raven Pro, MATLAB), manually select a clean, representative call. Apply a band-pass filter to remove noise.

- Spectral Analysis: Compute the Fast Fourier Transform (FFT) with a Hanning window. Identify the fundamental frequency (F0) and its harmonic peaks.

- Power Calculation: Integrate the power within narrow bands centered on the first 5 harmonics. Integrate the power in the noise bands between harmonics.

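- HNR Calculation: Compute the ratio: HNR (dB) = 10 × log10(P_harmonics / P_noise), where P_harmonics is the summed power in the harmonic bands and P_noise is the summed power in the noise bands between them.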

- Statistical Comparison: Compare HNR values across experimental groups (e.g., treated vs. control, high vs. low fitness) using ANOVA.
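
The spectral and power steps of the HNR protocol can be sketched without external libraries. The example below uses a naive discrete Fourier transform, chosen only to keep the block dependency-free (an FFT routine from NumPy or SciPy would be the usual choice), and a synthetic five-harmonic call with added Gaussian noise. The band half-width of 2 bins, the 300 Hz fundamental, and the noise levels are all illustrative assumptions.

```python
import cmath
import math
import random


def power_spectrum(signal):
    """Hann-windowed power spectrum via a naive DFT (bins 0 .. n/2 - 1)."""
    n = len(signal)
    windowed = [s * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
                for i, s in enumerate(signal)]
    spectrum = []
    for k in range(n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(windowed))
        spectrum.append(abs(acc) ** 2)
    return spectrum


def hnr_db(signal, sample_rate, f0, n_harmonics=5, half_width_bins=2):
    """10*log10 of power near the first n_harmonics over power between them."""
    power = power_spectrum(signal)
    f0_bin = f0 / (sample_rate / len(signal))
    harmonic_bins = {round(h * f0_bin) + d
                     for h in range(1, n_harmonics + 1)
                     for d in range(-half_width_bins, half_width_bins + 1)}
    lo, hi = round(0.5 * f0_bin), round((n_harmonics + 0.5) * f0_bin)
    p_harm = sum(power[k] for k in range(lo, hi) if k in harmonic_bins)
    p_noise = sum(power[k] for k in range(lo, hi) if k not in harmonic_bins)
    return 10 * math.log10(p_harm / p_noise)


def synth_call(f0, sample_rate, n, noise_sd, seed):
    """Synthetic call: five harmonics with 1/h amplitudes plus Gaussian noise."""
    rng = random.Random(seed)
    return [sum(math.sin(2 * math.pi * h * f0 * i / sample_rate) / h for h in range(1, 6))
            + rng.gauss(0, noise_sd)
            for i in range(n)]


clean = hnr_db(synth_call(300, 4000, 400, noise_sd=0.01, seed=1), 4000, 300)
harsh = hnr_db(synth_call(300, 4000, 400, noise_sd=0.5, seed=1), 4000, 300)
print(f"clean call: {clean:.1f} dB, noisy call: {harsh:.1f} dB")
```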

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Acoustic Research on Birds and Bats

| Item | Function & Specification | Example Use Case |

|---|---|---|

| Ultrasonic Microphone | Captures high-frequency sounds (>20 kHz). Requires flat frequency response up to 200+ kHz. | Recording bat echolocation calls. |

| Time Expansion Detector | Slows down ultrasonic calls in real-time for listening and recording on standard devices. | Field monitoring and verification of bat presence. |

| Acoustic Recorder | Programmable, weatherproof device for long-term PAM. High sampling rate and storage. | Unattended monitoring of bird/bat activity over seasons. |

| Sound Analysis Software | For visualizing (spectrograms), measuring, and classifying calls. | Raven Pro, Kaleidoscope, Avisoft-SASLab. |

| Reference Call Library | Curated database of known species vocalizations. | Automated species identification via machine learning classifiers. |

| GPS Logger | Precise geolocation tagging of recording events or tracked individuals. | Correlating acoustic activity with spatial movement. |

| Portable Calibrator | Generates known SPL at specific frequencies for microphone calibration. | Ensuring data validity for sound level measurements. |

| Telemetry System | For tracking individual animals. Can be paired with bio-loggers (audio tags). | Studying individual vocal behavior and movement ecology. |

This technical guide provides an overview of core sensor technologies—microphones, recorders, and passive acoustic sensors—within the context of acoustic monitoring for avian and chiropteran research. Effective biodiversity assessment, behavioral studies, and impact mitigation in drug development (e.g., assessing environmental effects of new compounds) rely on precise, reliable, and automated acoustic data collection.

Core Sensor Technologies

Microphones

The microphone is the primary transducer, converting acoustic pressure waves into electrical signals.

Key Types and Specifications:

- Condenser Microphones: Utilize a charged capacitor (diaphragm and backplate). Offer high sensitivity and wide frequency response. Require external power (phantom or bias).

- Electret Microphones: Employ a permanently charged material. Lower cost and power requirements than traditional condensers.

- MEMS Microphones: Micro-electromechanical systems. Miniature, robust, and easily integrated into arrays. Performance is rapidly advancing.

Critical Performance Parameters:

- Frequency Response: Must cover species-specific ranges (e.g., 1-120 kHz for bats, 0.1-10 kHz for most birds).

- Sensitivity: Output voltage for a given sound pressure level (dB SPL).

- Signal-to-Noise Ratio (SNR): Critical for detecting faint calls.

- Directionality: Omnidirectional vs. directional (e.g., shotgun) for targeted monitoring.

- Self-Noise: The inherent electrical noise of the microphone.
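
Sensitivity figures are easier to compare once converted to common units. The following sketch converts dB SPL to pascals (reference pressure 20 µPa in air) and a sensitivity in mV/Pa to dB re 1 V/Pa; the 30 mV/Pa microphone in the example is hypothetical.

```python
import math

P_REF_PA = 20e-6  # reference pressure for dB SPL in air


def spl_to_pascal(spl_db):
    """RMS sound pressure in pascals for a level in dB SPL."""
    return P_REF_PA * 10 ** (spl_db / 20)


def sensitivity_dbv(mv_per_pa):
    """Microphone sensitivity in dB relative to 1 V/Pa."""
    return 20 * math.log10(mv_per_pa / 1000.0)


def output_mv(mv_per_pa, spl_db):
    """Expected RMS output voltage in millivolts for a tone at spl_db."""
    return mv_per_pa * spl_to_pascal(spl_db)


pa_94 = spl_to_pascal(94)         # about 1 Pa
pa_114 = spl_to_pascal(114)       # about 10 Pa
sens_db = sensitivity_dbv(30.0)   # about -30.5 dB re 1 V/Pa
mv_at_94 = output_mv(30.0, 94)    # about 30 mV
print(round(pa_94, 3), round(pa_114, 2), round(sens_db, 2), round(mv_at_94, 1))
```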

Table 1: Microphone Performance Comparison for Bioacoustics

| Microphone Type | Typical Frequency Range | Typical Sensitivity | Key Advantages | Best Use Case in Field |

|---|---|---|---|---|

| Full-Spectrum Condenser | 5 Hz - 150 kHz | 12 - 50 mV/Pa | Ultra-wideband, flat response | Bat echolocation studies |

| Wildlife Recorder (Integrated) | 20 Hz - 48 kHz | ~30 mV/Pa | Weatherproof, integrated system | Long-term avian point counts |

| Parabolic Reflector (System) | 0.5 - 16 kHz | High (with gain) | Excellent directionality, distance | Isolating individual bird song |

| MEMS Array Element | 100 Hz - 80 kHz | 10 - 30 mV/Pa | Small, scalable for arrays | Sound localization & tracking |

Acoustic Recorders

Modern passive acoustic monitors (PAM) integrate a microphone, preamplifier, analog-to-digital converter (ADC), data storage, and power management.

Core Components & Considerations:

- ADC Resolution & Sample Rate: 16- or 24-bit depth; sample rate must be >2x max frequency (Nyquist theorem). For bats, ≥250 kHz sampling is common.

- Gain Stages: Programmable gain amplifiers (PGA) adjust for varying call amplitudes.

- Scheduling & Triggering: Time-based schedules or amplitude/spectral triggers conserve power and storage.

- Storage & Power: SD cards; power via batteries (often lithium) with solar recharge options.

- Environmental Hardening: Must be weatherproof, tamper-resistant, and operate across temperature extremes.
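
The Nyquist constraint in the first bullet can be turned into a quick configuration check. The sketch below picks the lowest rate from an assumed list of common recorder rates that exceeds twice the highest frequency of interest, and shows where an under-sampled tone would appear after aliasing. The rate list is illustrative and is not taken from any specific device.

```python
# Assumed set of selectable sample rates; real recorders differ.
COMMON_RATES_HZ = [44_100, 48_000, 96_000, 192_000, 250_000, 256_000, 384_000, 500_000]


def pick_sample_rate(max_signal_hz, rates=COMMON_RATES_HZ):
    """Lowest available rate strictly greater than twice the highest signal frequency."""
    for rate in sorted(rates):
        if rate > 2 * max_signal_hz:
            return rate
    raise ValueError("no available rate satisfies the Nyquist criterion")


def alias_hz(signal_hz, sample_rate_hz):
    """Apparent frequency of a pure tone after sampling (folding about Nyquist)."""
    folded = signal_hz % sample_rate_hz
    return min(folded, sample_rate_hz - folded)


bird_rate = pick_sample_rate(10_000)      # songbird energy up to 10 kHz
bat_rate = pick_sample_rate(120_000)      # bat calls up to 120 kHz
aliased = alias_hz(110_000, 192_000)      # 110 kHz call recorded at 192 kHz
print(bird_rate, bat_rate, aliased)
```

In this example a 110 kHz call recorded at 192 kHz would appear at 82 kHz unless an anti-aliasing filter removes it first, which is why the sample rate has to be chosen from the highest-frequency species expected rather than from the typical one.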

Table 2: Recorder Configuration for Target Taxa

| Parameter | Songbirds / Passerines | Nocturnal Migrants (Flight Calls) | Low-Frequency Bats (e.g., Tadarida) | High-Frequency Bats (e.g., Myotis) |

|---|---|---|---|---|

| Min. Sample Rate | 44.1 kHz | 44.1 kHz | 250 kHz | 500 kHz |

| Bit Depth | 24-bit | 24-bit | 24-bit | 16-bit (if dynamic range sufficient) |

| Typical Schedule | Dawn + dusk recording | Full-night recording | 30 min before sunset to 30 min after sunrise | Full-night recording |

| Trigger Settings | Off or amplitude-based | Spectral (3-8 kHz) + amplitude | Amplitude + frequency (25-80 kHz) | High-frequency trigger (80-120 kHz) |

| Audio Format | WAV (compressed FLAC optional) | WAV or compressed FLAC | WAV | WAV or high-speed compressed |

Passive Acoustic Sensors in Array Configurations

Deploying multiple synchronized sensors enables sound source localization, abundance estimation, and tracking of movement.

- Localization: Time-difference-of-arrival (TDOA) methods calculate the source position by comparing when a sound reaches each microphone.

- Beamforming: Process signals from an array to form a directional "beam," enhancing signals from a specific direction.

- Spatial Capture-Recapture: Uses detection histories across an array to model animal density and distribution.
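
The time-difference-of-arrival idea can be illustrated with two microphones. This sketch estimates the delay between channels by brute-force cross-correlation and converts it to a bearing under a far-field assumption; the 48 kHz sample rate, 0.1 m spacing, 343 m/s sound speed, and the synthetic pulse are all assumed values. Full localization needs three or more synchronized sensors and is not shown.

```python
import math


def best_lag(a, b, max_lag):
    """Lag in samples by which channel b trails channel a (peak of cross-correlation)."""
    def score(lag):
        return sum(a[i] * b[i + lag] for i in range(len(a)) if 0 <= i + lag < len(b))
    return max(range(-max_lag, max_lag + 1), key=score)


def bearing_deg(delay_s, mic_spacing_m, c=343.0):
    """Angle from broadside for a far-field source, clamped to a valid arcsine input."""
    x = max(-1.0, min(1.0, c * delay_s / mic_spacing_m))
    return math.degrees(math.asin(x))


def with_pulse(length, start):
    """Silent channel with a short synthetic pulse inserted at index start."""
    channel = [0.0] * length
    for offset, value in enumerate([1.0, 3.0, 1.0]):
        channel[start + offset] = value
    return channel


SAMPLE_RATE = 48_000                      # Hz, assumed
mic_a = with_pulse(64, start=10)
mic_b = with_pulse(64, start=17)          # same pulse arriving 7 samples later

lag = best_lag(mic_a, mic_b, max_lag=20)
angle = bearing_deg(lag / SAMPLE_RATE, mic_spacing_m=0.1)
print(lag, round(angle, 1))
```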

Experimental Protocols for Acoustic Monitoring

Protocol: Standardized Point Count for Avian Community Assessment

Objective: To systematically collect acoustic data for estimating species richness and relative abundance.

- Site Selection: Use a stratified random design within habitats of interest.

- Sensor Deployment: Mount recorder 1.5m above ground on a secure post, with microphone protected by windscreen. GPS log coordinates.

- Configuration: Set to record continuously from 30 mins before local sunrise for 4 hours. 44.1 kHz/24-bit. Use omnidirectional mic.

- Calibration: Deploy a calibrated sound source (e.g., 114 dB SPL at 1 kHz pistonphone) at the microphone position for 30 seconds at start/end of deployment.

- Duration: Repeat for 3-5 consecutive days during peak breeding season.

- Data Management: Download data, rename files with SiteID_YYYYMMDD_HHMMSS.wav. Annotate using automated recognition software (e.g., BirdNET) followed by manual verification of a minimum 20% of files.
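
The SiteID_YYYYMMDD_HHMMSS.wav naming convention in the data management step is straightforward to generate and parse. The sketch below assumes site IDs contain only letters, digits, and hyphens (no underscores), which is a restriction added here so the name splits unambiguously; the site ID and timestamp are hypothetical.

```python
import re
from datetime import datetime

# Assumes site IDs have no underscores, so the three fields split cleanly.
NAME_RE = re.compile(r"^(?P<site>[A-Za-z0-9-]+)_(?P<date>\d{8})_(?P<time>\d{6})\.wav$")


def build_name(site_id, start):
    """File name for a recording that started at the given datetime."""
    return f"{site_id}_{start:%Y%m%d_%H%M%S}.wav"


def parse_name(filename):
    """Recover (site_id, start datetime) from a file name, or raise ValueError."""
    match = NAME_RE.match(filename)
    if match is None:
        raise ValueError(f"unexpected file name: {filename}")
    start = datetime.strptime(match["date"] + match["time"], "%Y%m%d%H%M%S")
    return match["site"], start


name = build_name("FOR-03", datetime(2024, 5, 14, 4, 45, 0))
site, started = parse_name(name)
print(name, site, started.isoformat())
```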

Protocol: High-Frequency Bat Echolocation Monitoring

Objective: To detect and classify bat species by their ultrasonic echolocation calls.

- Site Selection: Focus on potential foraging corridors, water bodies, and woodland edges.

- Sensor Deployment: Place recorder in a waterproof housing. Elevate microphone to reduce clutter interference. Aim microphone horizontally.

- Configuration: Set to record from sunset to sunrise at a 256 kHz sample rate and 16-bit depth. Use a full-spectrum condenser microphone. Set a 25 kHz high-pass trigger to minimize false triggers from lower-frequency noise.

- Calibration: Use an ultrasonic calibrator (e.g., 124 dB SPL at 40 kHz) pre- and post-deployment.

- Duration: Minimum of 7 nights per site to account for nightly variation.

- Analysis: Process files through bat call classifiers (e.g., Kaleidoscope, bcIdentify). Manually vet all auto-identified calls to genus/species level based on regional call parameters (frequency, shape, duration).

Diagrams

Diagram 1: Acoustic Monitoring Workflow

Diagram 2: Recorder Signal Chain

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Field Acoustic Research

| Item Category | Specific Item | Function & Rationale |

|---|---|---|

| Calibration | Pistonphone (e.g., 94 dB / 114 dB at 1 kHz) | Provides a precise, known SPL to calibrate microphone sensitivity for quantitative measurement. |

| Calibration | Ultrasonic Calibrator (e.g., 40 kHz or multi-tone) | Essential for calibrating high-frequency microphones used in bat/insect research. |

| Deployment | Acoustic Windscreen (Foam or Fur) | Critically reduces wind noise, which is a major source of interference and false triggers. |

| Deployment | Waterproof Housing / Rain Shield | Protects sensitive electronics from precipitation, condensation, and dust. |

| Deployment | Desiccant Packs | Placed inside housing to absorb moisture and prevent internal condensation. |

| Reference | GPS Logger | Accurately logs deployment coordinates for spatial analysis and site relocation. |

| Reference | Temperature/Humidity Logger | Logs microclimate data to correlate with acoustic activity or diagnose sensor drift. |

| Software | Acoustic Analysis Suite (e.g., Raven Pro, Kaleidoscope) | For manual inspection, annotation, and detailed analysis of call parameters. |

| Software | Automated Recognition Model (e.g., BirdNET, Bat Detective) | AI tool for initial screening and classification of large datasets. |

The Acoustic Niche Hypothesis and Its Relevance to Ecological Assessment

The Acoustic Niche Hypothesis (ANH) posits that vocal species in a soundscape partition the acoustic environment to minimize interference and maximize communication efficiency, analogous to resource partitioning in ecological niches. This concept provides a foundational framework for using acoustic monitoring to assess ecosystem health, particularly for birds and bats. This whitepaper details the technical application of the ANH within a broader thesis on acoustic monitoring for avian and chiropteran research, providing methodologies, data analysis, and tools for researchers and drug development professionals engaged in ecological impact assessments.

Core Principles of the Acoustic Niche Hypothesis

The ANH suggests that species will evolve to occupy distinct acoustic spaces defined by frequency, temporal patterning, and amplitude. Overlap in these dimensions suggests competition or adaptation in degraded habitats. Acoustic monitoring leverages this principle to infer biodiversity and community structure non-invasively.

Experimental Protocols for Hypothesis Testing

Protocol 1: Baseline Soundscape Recording and Partitioning Analysis

- Objective: To map the acoustic niches occupied by different species in a habitat.

- Method:

- Deploy calibrated omnidirectional microphones (e.g., Wildlife Acoustics SM4) at standard heights (1.5m for birds, canopy-level for bats).

- Record continuously for a minimum of 7 consecutive days at a 192 kHz sampling rate (for bats) and 48 kHz (for birds).

- Apply spectrographic cross-correlation or machine learning classifiers (e.g., Kaleidoscope, Arbimon) to identify species-specific vocalizations.

- For each identified vocalization, extract metrics: peak frequency (kHz), bandwidth (kHz), temporal duration (ms), and diel cycle time.

- Conduct a Null Model analysis to compare observed niche overlap (Pianka's index) against randomly assembled communities.

- Output: Niche occupancy plots and overlap indices.
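
The null-model step can be sketched as follows. Pianka's index for two species is the sum of products of their resource-use proportions divided by the square root of the product of their summed squares. The randomization shown here reshuffles each species' proportions among frequency bands, which is one possible null model among several; the community matrix, the number of iterations, and the one-sided test direction are illustrative assumptions.

```python
import math
import random


def pianka(p, q):
    """Pianka's niche overlap between two resource-use vectors (0 = none, 1 = identical)."""
    num = sum(a * b for a, b in zip(p, q))
    den = math.sqrt(sum(a * a for a in p) * sum(b * b for b in q))
    return num / den if den else 0.0


def mean_overlap(community):
    """Mean pairwise Pianka overlap across all species pairs."""
    pairs = [(i, j) for i in range(len(community)) for j in range(i + 1, len(community))]
    return sum(pianka(community[i], community[j]) for i, j in pairs) / len(pairs)


def null_test(community, n_iter=999, seed=0):
    """Observed mean overlap and share of shuffled communities with overlap <= observed."""
    rng = random.Random(seed)
    observed = mean_overlap(community)
    hits = 0
    for _ in range(n_iter):
        shuffled = [rng.sample(row, len(row)) for row in community]
        if mean_overlap(shuffled) <= observed:
            hits += 1
    return observed, (hits + 1) / (n_iter + 1)


# Made-up community: rows are species, columns are frequency bands,
# values are the proportion of each species' calls falling in that band.
community = [
    [0.8, 0.2, 0.0, 0.0],
    [0.0, 0.1, 0.8, 0.1],
    [0.1, 0.0, 0.1, 0.8],
]
observed, p_low = null_test(community)
print(round(observed, 3), p_low)
```

A small p_low would indicate that the observed community overlaps less than expected under random band use, which is the pattern the ANH predicts for a partitioned soundscape.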

Protocol 2: Perturbation Response Experiment

- Objective: To assess the impact of an anthropogenic or pharmaceutical trial disturbance on acoustic niche structure.

- Method:

- Establish paired treatment (e.g., near a development site) and control recording stations.

- Conduct pre- and post-perturbation recordings using Protocol 1.

- Calculate the Acoustic Complexity Index (ACI), Bioacoustic Index, and Niche Overlap Index for each period.

- Statistically compare shifts in species' peak frequency or temporal activity peaks as evidence of niche displacement.

- Output: Time-series data on acoustic indices and niche metrics.

Key Data and Findings

Table 1: Representative Acoustic Niche Parameters for Taxa (Summarized from Recent Studies)

| Taxonomic Group | Typical Peak Frequency Range | Typical Temporal Activity Peak | Niche Width (Frequency) | Key Partitioning Mechanism |

|---|---|---|---|---|

| Temperate Passerines | 2 - 8 kHz | Dawn Chorus (05:00-07:00) | Medium | Temporal (diel cycle), Frequency |

| Neotropical Birds | 1 - 10 kHz | Morning (06:00-10:00) | Broad | Frequency, Spatial (canopy vs. understory) |

| Insectivorous Bats (Echolocation) | 20 - 120 kHz | Nocturnal (Dusk/Dawn peaks) | Narrow | Extreme frequency partitioning (>10 kHz separation common) |

| Frog Chorus | 0.5 - 5 kHz | Evening (19:00-23:00) | Narrow | Temporal, Fine-scale frequency |

Table 2: Impact of Forest Fragmentation on Niche Metrics (Hypothetical Experimental Data)

| Site Condition | Species Richness (Acoustic) | Mean Niche Overlap Index | Acoustic Complexity Index (Mean) | Observed Frequency Shift in Vireo spp. |

|---|---|---|---|---|

| Old-Growth Forest (Control) | 42 | 0.31 | 2150 | Baseline |

| 50% Fragmented | 28 | 0.49 | 1670 | +0.8 kHz |

| 90% Fragmented / Edge | 15 | 0.62 | 980 | +1.5 kHz |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for ANH-Based Acoustic Monitoring

| Item | Function/Application | Example Product/Note |

|---|---|---|

| High-Dynamic Range Microphone | Captures sound pressure levels without distortion for accurate niche characterization. | Wildlife Acoustics SM4 Ultrasonic Mic; Knowles FG Series. |

| Calibrated Sound Level Meter | Provides absolute amplitude reference, critical for measuring acoustic space occupancy. | Larson Davis 831C Class 1. |

| Acoustic Recorder (Weatherproof) | Long-duration, programmable field deployment. | AudioMoth, Song Meter Mini. |

| Acoustic Analysis Software Suite | For visualization, detection, and metric extraction (spectrograms, FFT, indices). | Raven Pro, PAMGuard, OpenSoundscape. |

| Reference Vocalization Library | Training and validation data for automated classifiers. | Cornell Macaulay Library, xeno-canto. |

| Bioacoustic Index Scripts/Packages | Calculates ACI, NDSI, and custom niche overlap metrics from audio files. | R packages: soundecology, seewave, tuneR. |

| Precision GPS Unit | Geotagging recording locations for spatial niche analysis. | Garmin GPSMAP 66sr. |

Visualization of Workflow and Relationships

Diagram 1: ANH Experimental Workflow

Diagram 2: Acoustic Niche Partitioning Mechanisms

Relevance to Drug Development and Ecological Assessment

For drug development professionals, ANH-guided acoustic monitoring offers a sensitive, non-invasive tool for pre-clinical and post-approval environmental impact assessments. Monitoring bat and bird vocalizations near research or manufacturing facilities can serve as a bioindicator for ecosystem disruption caused by pollution, habitat loss, or other indirect effects of human activity. Shifts in acoustic niche metrics provide quantitative, early-warning signals of ecological change, supporting regulatory compliance and sustainability reporting.

From Field to Lab: Methodologies and Cutting-Edge Applications in Acoustic Surveillance

Abstract: This technical guide provides a framework for implementing acoustic monitoring strategies within avian and chiropteran research, a core methodological component of a broader thesis on biodiversity assessment and anthropogenic impact. We detail the deployment paradigms of transects, static arrays, and large-scale networks, specifying their respective applications, experimental protocols, and data handling requirements for the scientific and drug development communities, where environmental compliance and ecosystem health are paramount.

Acoustic monitoring is a non-invasive, scalable method for assessing species richness, behavior, and population trends of vocalizing birds and echolocating bats. The choice of deployment strategy directly influences the statistical power, ecological inference, and logistical feasibility of a study.

| Deployment Paradigm | Primary Application | Temporal Scale | Spatial Coverage | Key Metric |

|---|---|---|---|---|

| Transect | Species distribution, habitat use, relative abundance | Short-term (surveys) | Linear, extensive | Detections per unit distance/effort |

| Static Array | Occupancy, density estimation, phenology | Seasonal to annual | Fixed, intensive | Detection probability, site occupancy |

| Large-Scale Network | Continental-scale trends, macroecology, climate change impacts | Long-term (multi-year) | Broad, extensive | Acoustic indices, species distribution models |

Experimental Protocols & Methodologies

Transect-Based Surveys

- Objective: To sample across environmental gradients or habitat types for comparative analysis.

- Protocol:

- Route Design: Define a transect line (e.g., 1-2 km) using GIS, ensuring habitat representation and safe, accessible walk paths.

- Equipment: Use a handheld ultrasonic microphone (for bats) or a directional parabola with recorder (for birds), coupled with a GPS unit.

- Sampling: Conduct surveys during peak activity periods (e.g., dawn for birds, post-sunset for bats). Move at a constant, slow pace (e.g., 2 km/h), recording continuously or at pre-defined stop points.

- Metadata: Log precise coordinates, time, weather conditions, and habitat notes at start, end, and all changes.

- Replication: Survey each transect route multiple times (≥3) per season to account for detection variability.

Static Array Deployment

- Objective: To collect data for statistically robust estimates of occupancy, density, or activity patterns at specific sites.

- Protocol:

- Site Selection: Choose sites using a randomized or stratified random sampling design within the target habitat.

- Sensor Configuration: Deploy weatherproof acoustic recorders (e.g., AudioMoth, SM4) on fixed structures (trees, poles). Set microphones at optimal height (e.g., canopy for birds, 3-5m for bats).

- Recording Schedule: Program a duty cycle (e.g., record 5 minutes every 30 minutes) to extend battery life and storage, or trigger on ultrasonic frequencies for bats.

- Calibration & Synchronization: Use a calibrated sound source for sensitivity reference. Synchronize all recorder clocks via GPS to millisecond accuracy for localization studies.

- Data Retrieval: Service arrays at regular intervals (e.g., every two weeks) to download data, replace batteries, and verify sensor integrity.
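
The trade-off behind the duty cycle can be checked with simple arithmetic. The sketch below assumes uncompressed PCM audio and ignores file headers; the function name and the example settings are illustrative, not recorder defaults.

```python
def daily_storage_bytes(sample_rate_hz, bit_depth, record_s, cycle_s, channels=1):
    """Bytes written per 24 h for a recorder that records `record_s` seconds
    out of every `cycle_s` seconds (uncompressed PCM, headers ignored)."""
    bytes_per_second = sample_rate_hz * (bit_depth // 8) * channels
    recorded_seconds = 86_400 * record_s / cycle_s
    return bytes_per_second * recorded_seconds

# 5 minutes every 30 minutes, 16-bit mono.
bird_gb = daily_storage_bytes(48_000, 16, 300, 1_800) / 1e9
bat_gb = daily_storage_bytes(384_000, 16, 300, 1_800) / 1e9
print(f"48 kHz: {bird_gb:.2f} GB/day, 384 kHz: {bat_gb:.2f} GB/day")
```

Dividing card capacity by the daily figure gives an upper bound on the servicing interval, which can then be compared against expected battery life.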

Large-Scale Sensor Network Implementation

- Objective: To create a coordinated, continental-scale dataset for investigating ecological patterns and drivers.

- Protocol:

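- Network Architecture: Design a hub-and-spoke or mesh network. Use low-power wide-area network (LPWAN) technologies (e.g., LoRaWAN) for low-bandwidth payloads such as detection summaries and node health telemetry, and cellular modules (4G/5G) where audio files must be transferred remotely.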

- Standardization: Adopt common data formats (e.g., WAV, FLAC), metadata schemas (Darwin Core), and sensor calibration protocols across all nodes.

- Power Management: Integrate solar panels with charge controllers and lithium battery banks for year-long, autonomous operation.

- Data Pipeline: Implement automated data ingestion, backup, and pre-processing (e.g., noise filtering, species identification via machine learning models like BirdNET or Kaleidoscope Pro) on cloud or high-performance computing platforms.

- Quality Assurance: Establish routine automated health checks (e.g., battery voltage, storage capacity, microphone sensitivity) and anomaly alerts.

Data Management and Analysis

Quantitative data outputs vary by paradigm. The table below summarizes core metrics and analytical tools.

| Data Type | Source Paradigm | Example Metric | Analysis Tool/Model |

|---|---|---|---|

| Raw Detection Lists | Transect, Static Array | Species Count per Survey/Site | R package vegan (diversity indices) |

| Acoustic Indices | All | Acoustic Complexity Index (ACI), Normalized Difference Soundscape Index (NDSI) | R package soundecology |

| Occupancy Data | Static Array | ψ (Occupancy), p (Detection Probability) | R package unmarked |

| Spatial Point Data | Large-Scale Network | Time-stamped Species Presence | MaxEnt, Species Distribution Models in R (dismo) |

| Passive Localization | Dense Static Array | Time Difference of Arrival (TDOA) | Custom algorithms in MATLAB or Python |
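
As an illustration of the TDOA entry in the table above, the following sketch estimates the arrival-time difference between two synchronized microphones by brute-force cross-correlation. It is a toy example under stated assumptions: the signals are synthetic noise bursts, the function names are hypothetical, and a real pipeline would typically use an FFT-based correlation and band-pass filtering first.

```python
import random

def estimate_delay_samples(reference, delayed, max_lag):
    """Lag (in samples) that maximizes the cross-correlation.

    A positive result means the signal arrives later in `delayed`.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, value in enumerate(reference):
            j = i + lag
            if 0 <= j < len(delayed):
                score += value * delayed[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag

def tdoa_seconds(reference, delayed, sample_rate_hz, max_lag):
    """Time difference of arrival in seconds."""
    return estimate_delay_samples(reference, delayed, max_lag) / sample_rate_hz

# Synthetic call reaching microphone B 25 samples after microphone A.
rng = random.Random(1)
call = [rng.uniform(-1.0, 1.0) for _ in range(400)]
true_delay = 25
mic_a = call + [0.0] * true_delay
mic_b = [0.0] * true_delay + call

delay_s = tdoa_seconds(mic_a, mic_b, sample_rate_hz=48_000, max_lag=50)
# Path-length difference, assuming a speed of sound of about 343 m/s in air.
print(f"TDOA = {delay_s * 1e3:.3f} ms, path difference = {delay_s * 343:.3f} m")
```

Because one sample at 48 kHz corresponds to roughly 7 mm of path difference, clock errors of even a millisecond between recorders translate into tens of centimetres, which is why GPS synchronization matters for localization arrays.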

Title: Acoustic Monitoring Study Design & Data Flow

Title: Acoustic Data Processing Workflow

The Scientist's Toolkit: Essential Research Solutions

| Item Category | Specific Product/Example | Primary Function |

|---|---|---|

| Acoustic Recorder | Wildlife Acoustics SM4BAT, Open Acoustic Devices AudioMoth | Captures ultrasonic (bat) or audible (bird) frequencies over extended periods. |

| Calibration Source | Pettersson Elekon Ultrasonic Calibrator | Provides a reference tone of known frequency and amplitude for microphone calibration. |

| Analysis Software | Kaleidoscope Pro, R package bioacoustics | Automated species identification and manual vetting of audio recordings. |

| Machine Learning Model | BirdNET (Cornell & Chemnitz), Bat Detector AI | Leverages AI for high-throughput, scalable species identification from audio. |

| Weatherproof Enclosure | Custom 3D-printed case with acoustic foam | Protects recorder from elements while minimizing wind noise and internal reflections. |

| Power System | 12V LiFePO4 battery + 20W solar panel | Provides autonomous, long-term power for remote sensor nodes. |

| Data Transmission | LoRaWAN module (RN2903) or cellular IoT module (SARA-R4) | Enables wireless, remote data retrieval from field sensors. |

| Metadata Logger | GPS logger (iGot-U) or integrated GPS | Precisely geotags survey start/end points and sensor locations. |

Within the framework of a comprehensive thesis on acoustic monitoring for birds and bats, the selection of field recording hardware is the foundational scientific step. This guide provides an in-depth technical analysis for researchers selecting equipment based on three critical parameters: frequency range, environmental durability, and power management. Optimal selection directly impacts data fidelity, statistical power, and the success of long-term ecological studies or environmental impact assessments.

Core Acoustic Parameters & Hardware Specifications

The vocalizations of target taxa dictate the primary hardware requirements. The following table summarizes key bioacoustic ranges and corresponding hardware specifications.

Table 1: Target Taxa Frequency Ranges & Microphone Specifications

| Taxonomic Group | Key Frequency Range | Required Microphone Response | Sample Rate (Nyquist Criterion) | Common Call Type |

|---|---|---|---|---|

| Passerine Birds | 1 kHz - 8 kHz | ±3 dB from 500 Hz to 10 kHz | 24 kHz (48 kHz recommended) | Whistles, trills |

| Non-passerine Birds (e.g., owls, doves) | 200 Hz - 2 kHz | ±3 dB from 100 Hz to 5 kHz | 8 kHz (16 kHz recommended) | Hoots, coos |

| Bats (Echolocation) | 10 kHz - 200 kHz (ultrasonic) | Flat response (±3 dB) in target range (e.g., 10-150 kHz) | 384 kHz - 500 kHz | Frequency-modulated sweeps, constant frequency |

| Bats (Social Calls) | 5 kHz - 80 kHz | Wide-band, ultrasonic-capable | 192 kHz minimum | Chirps, buzzes |
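
The sample-rate column follows from the Nyquist criterion: the sample rate must exceed twice the highest frequency of interest. The short check below applies that rule to the upper bound of each key frequency range and the lowest sample rate listed in the table; the helper names are illustrative.

```python
def nyquist_frequency_hz(sample_rate_hz):
    """Highest frequency representable at a given sample rate."""
    return sample_rate_hz / 2

def minimum_sample_rate_hz(max_frequency_hz):
    """Lower bound on the sample rate; practical rates should exceed this."""
    return 2 * max_frequency_hz

# (group, upper bound of key range in Hz, lowest sample rate listed in Hz)
rows = [
    ("Passerine birds", 8_000, 24_000),
    ("Non-passerine birds", 2_000, 8_000),
    ("Bats (echolocation)", 200_000, 384_000),
    ("Bats (social calls)", 80_000, 192_000),
]
for name, f_max, rate in rows:
    covered = nyquist_frequency_hz(rate) > f_max
    print(f"{name}: Nyquist {nyquist_frequency_hz(rate) / 1000:.0f} kHz, "
          f"covers {f_max / 1000:.0f} kHz: {covered}")
```

At 384 kHz the Nyquist limit is 192 kHz, so calls in the uppermost part of the 10-200 kHz echolocation range would need the higher rate in the listed span.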

Equipment Selection: Recorders, Microphones, & Accessories

Table 2: Hardware Comparison for Bioacoustic Monitoring

| Equipment Type | Model Examples | Key Specifications | Durability Features | Power Requirement & Typical Life |

|---|---|---|---|---|

| Ultrasonic Recorder | Wildlife Acoustics Song Meter SM4BAT; Titley Anabat Swift | SR: 192-500 kHz, 16-bit; Built-in condenser mic; Weatherproof housing | IP67 rating; Aluminum/ABS casing; Cable lock system | 4x D-cell Alkaline: 10-14 nights; 12V External: Months |

| Full-Spectrum Recorder | Wildlife Acoustics SM4; AudioMoth 2.0 | SR: 16-384 kHz, 16/24-bit; Omnidirectional electret mic; Programmable schedule | IPX5-IP67; Silicone gaskets; Anti-UV plastic | 3x AA Lithiums: ~2 weeks (AudioMoth); 4x D-cells: ~30 days (SM4) |

| Condenser Microphone | Dodotronic Ultramic; Avisoft CM16/CMPA | Freq. Resp: 10-150 kHz; Sensitivity: -30 dB ±3dB; Polar Pattern: Omni | Requires protective windscreen (foam or fur); Not inherently weatherproof | Plug-in power (2-5V) from recorder (P48 for some) |

| Phantom Power Supply | Wildtronics Phantom Power Module; Tascam PS-P520U | Output: 5V or 48V; Regulated, low-noise | Metal enclosure, shielded cables | Typically 9V battery or recorder supply |

Experimental Protocols for Field Validation

Protocol 1: In-situ Frequency Response Calibration

Objective: To verify the system's frequency sensitivity across the operational range in field conditions.

Materials: Acoustic calibrator (e.g., 1 kHz tone at 94 dB SPL), ultrasonic speaker (for bats), reference microphone, laptop with analysis software (e.g., Audacity, Kaleidoscope).

Methodology:

- In a controlled, quiet field environment, place the reference microphone adjacent to the field recorder's microphone.

- For audible range (<20 kHz): Use a standard acoustic calibrator. Record a 30-second tone.

- For ultrasonic range: Generate a logarithmic chirp (10-150 kHz) from an ultrasonic speaker driven by a function generator. Record.

- Analyze recordings: Generate power spectral density (PSD) plots. Compare the amplitude at known frequencies between the reference and field system.

- Calculate and document any deviation (>3 dB requires calibration factor or hardware service).
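
The comparison in the last two steps can be sketched as below. This is a simplified stand-in for a full PSD analysis: it measures the level of a single known tone with a one-bin DFT and reports the field system's deviation from the reference. The synthetic signals, function names, and the choice of a 1 kHz test tone are assumptions for illustration; the 3 dB limit is the one stated in the protocol.

```python
import cmath
import math

def tone_level_db(samples, sample_rate_hz, frequency_hz):
    """Level of one frequency component in dB relative to unit amplitude."""
    n = len(samples)
    acc = sum(x * cmath.exp(-2j * math.pi * frequency_hz * i / sample_rate_hz)
              for i, x in enumerate(samples))
    amplitude = 2 * abs(acc) / n
    return 20 * math.log10(max(amplitude, 1e-12))  # guard against log(0)

def deviation_db(reference, field, sample_rate_hz, frequency_hz):
    """Field-system level minus reference level at the test frequency."""
    return (tone_level_db(field, sample_rate_hz, frequency_hz)
            - tone_level_db(reference, sample_rate_hz, frequency_hz))

# Synthetic 1 kHz tone; the field system is assumed to read half the amplitude.
sample_rate = 48_000
tone = [math.sin(2 * math.pi * 1_000 * i / sample_rate) for i in range(4_800)]
reference_recording = tone
field_recording = [0.5 * x for x in tone]

dev = deviation_db(reference_recording, field_recording, sample_rate, 1_000)
needs_attention = abs(dev) > 3.0
print(f"deviation = {dev:.2f} dB, exceeds 3 dB limit: {needs_attention}")
```

Repeating the measurement at several frequencies across the chirp gives a per-frequency correction table that can be stored with the deployment metadata.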

Protocol 2: Long-Term Power Endurance Testing

Objective: To empirically determine battery life under specific recording schedules.

Materials: Recorder unit, new batteries of specified type (alkaline, lithium, rechargeable), data storage cards, environmental datalogger.

Methodology:

- Configure the recorder with the target duty cycle (e.g., 5 minutes every 30 minutes, sunset to sunrise).

- Install fresh batteries and a formatted SD card. Note start time and ambient temperature.

- Deploy the unit in a lab or sheltered field setting. Log ambient temperature continuously.

- Monitor remotely or check daily until recorder power fails. Note termination time.

- Plot battery life (hours) against mean temperature. Repeat for different battery chemistries.

System Integration & Signal Pathway

Title: Bioacoustic Recorder Signal & Power Flow Diagram

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Field Deployment Materials & Reagents

| Item | Function & Specification | Rationale |

|---|---|---|

| Desiccant Pouches | Silica gel, 5-10 gram units. | Placed inside recorder housing to prevent internal condensation, protecting electronics and reducing acoustic damping. |

| Acoustic Windscreen | Closed-cell foam or synthetic fur (e.g., Rycote Windjammer). | Reduces wind noise (low-frequency masking) and protects microphone diaphragm from precipitation and dust. |

| Antistatic Brush & Wipes | Non-abrasive, lint-free cloths. | For cleaning microphone membranes and recorder ports without damage or charge transfer. |

| Dielectric Grease | Silicone-based compound (e.g., Dow Corning DC4). | Applied to O-rings, battery contacts, and cable connectors to ensure water resistance and prevent corrosion. |

| Calibration Sound Source | Pistonphone (e.g., 250 Hz/124 dB) or ultrasonic pulser. | Provides a known SPL and frequency for in-field validation of system sensitivity and response. |

| GPS Logger | Compact, weatherproof unit. | Synchronized with recorder to tag each recording with precise location coordinates for spatial analysis. |

| Temperature/Humidity Logger | USB datalogger (e.g., HOBO). | Placed inside recorder case to monitor microclimate, correlating with battery performance and potential anomalous noise. |

This whitepaper details the application of advanced machine learning (ML) pipelines to the domain of bioacoustic monitoring, specifically for birds and bats. The broader thesis posits that automating the detection and classification of vocalizations and echolocation calls is critical for scalable biodiversity assessment, population trend analysis, and impact mitigation in environmental and drug development contexts (e.g., siting wind farms or pharmaceutical manufacturing facilities). The revolution lies in moving from manual, expert-led analysis to fully automated, data-driven pipelines that provide reproducible, high-throughput insights.

Core Pipeline Architecture

The standard automated pipeline consists of four interdependent stages, each leveraging specific AI/ML techniques.

Table 1: Core Stages of the Bioacoustic AI Pipeline

| Stage | Primary Input | Core Technology/Algorithm | Key Output |

|---|---|---|---|

| 1. Data Acquisition | Field Environment | Autonomous Recorders (e.g., AudioMoth), Scheduled Recording | Raw Audio (.wav files) |

| 2. Signal Detection | Raw Audio | Energy-based Detection, Spectral Gating, CNN Detectors | Audio Events (segments containing potential signals) |

| 3. Feature Extraction | Audio Events | Spectrograms, MFCCs, Statistical Features (Spectral Centroid, Bandwidth) | Feature Vector/Matrix |

| 4. Classification | Feature Vectors | Random Forest, CNN, ResNet, EfficientNet, Transformer Models | Species ID, Call Type, Confidence Score |

Detailed Methodologies & Experimental Protocols

Protocol for Deploying an Acoustic Monitoring Grid

- Site Selection: Choose sites according to the research question (e.g., a pre-construction bat activity survey). Use GIS data to stratify by habitat.

- Sensor Deployment: Install weatherproof acoustic recorders (sampling rate ≥ 256 kHz for bats, ≥ 44.1 kHz for birds) on standardized poles at 3-5m height.

- Recording Schedule: Program for continuous recording at dusk/dawn (for bats) or a diurnal schedule (for birds), often using duty cycling (e.g., 5 minutes every 30 minutes) to conserve power.

- Data Retrieval: Collect SD cards monthly. Synchronize timestamps and annotate with metadata (GPS, weather, habitat).

Protocol for Training a Hybrid CNN-Random Forest Classifier

- Dataset Curation: Use labeled datasets (e.g., BirdVox, BatDetective). Split: 70% training, 15% validation, 15% testing.

- Pre-processing: Convert detected audio events to standardized log-scaled mel-spectrograms (128 mel bands).

- CNN Training: Train a lightweight CNN (e.g., 4 convolutional layers with batch normalization) on spectrograms. Use Adam optimizer, categorical cross-entropy loss.

- Feature Fusion: Extract features from the CNN's penultimate layer and concatenate with traditional acoustic features (e.g., mean frequency, duration, bandwidth).

- Random Forest Training: Train a Random Forest (100 trees) on the fused feature vector.

- Evaluation: Report precision, recall, F1-score, and confusion matrix on the held-out test set.
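
The evaluation step can be illustrated without any ML framework. The sketch below computes a confusion matrix and per-class precision, recall, and F1 from true and predicted labels; the labels and predictions are hypothetical. In practice a library routine such as scikit-learn's `classification_report` would usually be used instead.

```python
from collections import Counter

def confusion_counts(y_true, y_pred):
    """Counts keyed by (true_label, predicted_label)."""
    return Counter(zip(y_true, y_pred))

def per_class_metrics(y_true, y_pred):
    """Precision, recall, and F1 for every label seen in either list."""
    counts = confusion_counts(y_true, y_pred)
    labels = sorted(set(y_true) | set(y_pred))
    metrics = {}
    for label in labels:
        tp = counts[(label, label)]
        fp = sum(counts[(other, label)] for other in labels if other != label)
        fn = sum(counts[(label, other)] for other in labels if other != label)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        metrics[label] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics

# Hypothetical held-out test labels; "sp_c" stands in for a rare species.
y_true = ["sp_a", "sp_a", "sp_a", "sp_b", "sp_b", "sp_c"]
y_pred = ["sp_a", "sp_a", "sp_b", "sp_b", "sp_b", "sp_a"]

metrics = per_class_metrics(y_true, y_pred)
for label, m in metrics.items():
    print(label, {k: round(v, 2) for k, v in m.items()})
```

Reporting metrics per class rather than only as an average makes poor recall on rare species visible, which an overall accuracy figure would hide.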

Table 2: Performance Comparison of Classifier Models on Bat Echolocation Calls

| Model Architecture | Average Precision (All Species) | Recall (Rare Species) | Computational Cost (GFLOPs per inference) |

|---|---|---|---|

| Random Forest (on hand-crafted features) | 0.82 | 0.45 | < 0.01 |

| 2D CNN (Custom) | 0.91 | 0.68 | 0.5 |

| ResNet-50 (Transfer Learning) | 0.94 | 0.75 | 3.8 |

| EfficientNet-B0 | 0.93 | 0.77 | 0.39 |

| Attention-based Transformer | 0.96 | 0.82 | 2.1 |

Visualization of Workflows

Diagram 1: End-to-End Bioacoustic ML Pipeline

Diagram 2: Signal Detection & Classification Logic

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools & Materials for Automated Bioacoustic Research

| Item/Category | Example Product/Technology | Function in Research |

|---|---|---|

| Acoustic Recorder | Wildlife Acoustics SM4, AudioMoth | High-frequency, weatherproof audio capture in remote fields. |

| Annotation Software | Audacity, Kaleidoscope, Raven Pro | Manual vetting and labeling of calls for creating ground-truth datasets. |

| ML Framework | PyTorch, TensorFlow with Keras | Building, training, and deploying custom detection/classification models. |

| Bioacoustic Analysis Library | scikit-maad in Python, monitoR in R | Performing feature extraction, spectral analysis, and basic detection. |

| High-Performance Computing (HPC) | AWS EC2 (GPU instances), Google Colab Pro | Training deep learning models on large spectrogram datasets. |

| Data Management Platform | OpenSoundscape, Arbimon | Organizing large audio collections, running cloud-based analysis workflows. |

| Reference Call Library | Macaulay Library (Cornell), EchoBank | Curated datasets of known species calls for model training and validation. |

Acoustic monitoring has become a cornerstone methodology in avian and chiropteran research, providing non-invasive, scalable, and objective data collection. Its applications are critical for evidence-based environmental management and regulatory compliance. This technical guide details three pivotal applications—pre-construction surveys, habitat impact assessments, and long-term population trend analysis—that form the operational backbone of ecological impact studies mandated for infrastructure projects, land-use changes, and biodiversity conservation frameworks.

Application 1: Pre-Construction Surveys

Pre-construction acoustic surveys establish a baseline of species presence, diversity, and activity patterns prior to ground disturbance. Such baselines are commonly required for permitting where protected species may be affected, for example under the U.S. Endangered Species Act and the EU Habitats Directive.

Experimental Protocol for Baseline Acoustic Surveys

- Site Stratification: Divide the project footprint and a surrounding buffer zone (typically 500m) into strata based on habitat types (e.g., woodland, wetland, grassland).

- Sensor Deployment: Deploy autonomous recording units (ARUs) at random or systematic grid points within each stratum. A density of 1 ARU per 20-50 hectares is common, positioned at optimal height (e.g., 3m for bats, 1.5m for birds).

- Temporal Sampling: Program ARUs to record at high sensitivity. For bats, record from 30 minutes before sunset to 30 minutes after sunrise. For birds, conduct dawn chorus recording (from 1 hour before to 4 hours after sunrise) and periodic diurnal sampling.

- Survey Duration: Perform surveys across key biological seasons (breeding, migration) over a minimum of one full annual cycle.

- Data Processing: Audio files are processed through automated call recognition software (e.g., Kaleidoscope, Tadarida, BirdNET). A minimum of 10-20% of auto-identified files must be manually validated by an expert to ensure species verification and account for false positives/negatives.

Key Quantitative Metrics (Pre-Construction)

Table 1: Core Metrics Derived from Pre-Construction Acoustic Surveys

| Metric | Description | Calculation Method | Typical Baseline Output |

|---|---|---|---|

| Species Richness | Number of distinct species detected. | Cumulative count from all validated recordings. | e.g., 12 bat species, 45 bird species |

| Relative Activity Index | Proxy for abundance, based on acoustic encounters. | (Number of recording passes with species calls) / (Total recording nights) | e.g., 0.65 passes/night for Pipistrellus pipistrellus |

| Peak Activity Period | Time window of highest vocalization/echolocation activity. | Histogram of call counts per hourly bin. | e.g., Bat peak: 2300-0100 hrs; Bird dawn chorus: 0500-0700 hrs |

| Habitat Association | Strength of species occurrence link to habitat strata. | Chi-square test or occupancy modeling (Ψ) per habitat type. | e.g., Nyctalus noctula occupancy (Ψ) = 0.85 in mature woodland vs. 0.1 in open field |
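
Two of the metrics in Table 1 reduce to short calculations. The sketch below computes the relative activity index and the peak activity hour from validated detections; the function names and input values are hypothetical.

```python
from collections import Counter

def relative_activity_index(n_passes, n_recording_nights):
    """Passes per recording night for one species."""
    return n_passes / n_recording_nights

def peak_activity_hour(detection_hours):
    """Hour (0-23) with the most detections; ties go to the earlier hour."""
    counts = Counter(detection_hours)
    hour, _ = max(counts.items(), key=lambda item: (item[1], -item[0]))
    return hour, counts

# Hypothetical survey: 13 validated passes across 20 recording nights.
index = relative_activity_index(13, 20)
hours = [22, 23, 23, 0, 23, 0, 1]  # local hour of each validated pass
peak, histogram = peak_activity_hour(hours)
print(f"relative activity = {index:.2f} passes/night, peak hour = {peak:02d}:00")
```

Because the index counts passes rather than individuals, it is best read as a measure of activity at a station and compared only between surveys that used the same equipment and settings.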

Application 2: Habitat Impact Assessments

Impact assessments compare pre- and post-construction data to quantify changes and attribute them to project activities, informing mitigation strategies.

Experimental Protocol for BACI (Before-After-Control-Impact) Design

- Design: Establish Impact (construction site) and Control (ecologically similar, undisturbed site) locations.

- Before Phase: Conduct synchronized acoustic monitoring at both Impact and Control sites for ≥1 year pre-construction, following the baseline survey protocol described under Application 1.

- During/After Phase: Continue identical monitoring at both sites during construction and for ≥2-3 years post-construction.

- Analysis: Use statistical models (e.g., Generalized Linear Mixed Models - GLMMs) to test for a significant interaction between period (Before vs. After) and site (Impact vs. Control), which indicates a project effect.
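
The quantity a BACI analysis tests is the period-by-site interaction. As a minimal sketch, the code below computes its point estimate as a difference of differences in mean activity. This is not a substitute for the GLMM named above, which also models count distributions and random effects; the nightly pass counts are hypothetical.

```python
from statistics import mean

def baci_contrast(before_impact, after_impact, before_control, after_control):
    """(After - Before) at the Impact site minus (After - Before) at the Control site."""
    impact_change = mean(after_impact) - mean(before_impact)
    control_change = mean(after_control) - mean(before_control)
    return impact_change - control_change

# Hypothetical nightly bat-pass counts.
before_impact = [20, 22, 18]
after_impact = [10, 12, 8]
before_control = [19, 21, 20]
after_control = [18, 20, 19]

effect = baci_contrast(before_impact, after_impact, before_control, after_control)
print(f"BACI contrast = {effect} passes/night")
```

A contrast near zero means the Impact site changed no more than the Control site, so any decline is more plausibly regional (weather, season) than project-related.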

Key Quantitative Metrics (Impact Assessment)

Table 2: Metrics for Quantifying Habitat Impact

| Metric | Description | Statistical Test | Interpretation of Significant Result |

|---|---|---|---|

| Activity Shift | Change in relative activity index. | GLMM with Poisson distribution. | Significant decline in Impact site post-construction indicates negative effect. |

| Occupancy Change | Change in probability of site use (Ψ). | Before-After, Control-Impact Occupancy model. | A reduction in Ψ at Impact site suggests habitat avoidance or loss. |

| Community Composition Change | Shift in species assemblage. | PERMANOVA on species call count matrix. | Significant change indicates alteration of the ecological community. |

| Sensitivity Index | Species-specific response magnitude. | (ActivityBefore - ActivityAfter) / ActivityBefore at Impact site. | High positive index denotes high sensitivity to disturbance. |

Application 3: Long-Term Population Trend Analysis

Long-term acoustic datasets enable the tracking of population trends, essential for conservation status evaluation and measuring the efficacy of mitigation measures over decadal scales.

Experimental Protocol for Trend Monitoring

- Fixed Station Network: Establish a permanent grid of ARUs across a region of interest. Use standardized, weatherproof equipment with long-term power solutions (solar).

- Consistent Sampling: Adhere to a rigid, phenologically-based calendar for recording (e.g., same weeks each year for breeding bird surveys, same summer months for bats).

- Data Curation: Maintain a version-controlled, relational database for all raw and processed acoustic metadata. Account for annual variation in detectability due to weather.

- Trend Modeling: Analyze data using advanced time-series models (e.g., Bayesian Occupancy Models, N-mixture models for acoustic counts) that account for imperfect detection and auto-correlation.

Key Quantitative Metrics (Trend Analysis)

Table 3: Metrics for Long-Term Population Trend Analysis

| Metric | Description | Analysis Model | Output Example |

|---|---|---|---|

| Annual Population Growth Rate (λ) | Mean rate of change in activity/abundance year-to-year. | State-Space Model or GLM with year as a covariate. | λ = 0.96 suggests a 4% annual decline. |

| Long-Term Trend Slope | Overall direction and magnitude of change over the study period. | Linear or non-linear regression on annual indices. | Slope = -2.3% per year (p<0.05) indicates a significant declining trend. |

| Power Analysis | Ability to detect a trend of a given magnitude. | Simulation based on observed variance and sample size. | "Current design has 80% power to detect a 3% annual decline over 10 years." |
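
To show how λ relates to an annual index series, the sketch below fits a least-squares line to the log of the index and exponentiates the slope. It is a deliberately simple log-linear estimate that ignores imperfect detection and autocorrelation, which the state-space and occupancy models in the table are designed to handle; the index series is synthetic.

```python
import math

def annual_growth_rate(years, indices):
    """Estimate λ as exp(slope) of ln(index) regressed on year."""
    logs = [math.log(value) for value in indices]
    mean_year = sum(years) / len(years)
    mean_log = sum(logs) / len(logs)
    sxx = sum((y - mean_year) ** 2 for y in years)
    sxy = sum((y - mean_year) * (l - mean_log) for y, l in zip(years, logs))
    return math.exp(sxy / sxx)

# Synthetic activity index declining by 4% per year over ten years.
years = list(range(2015, 2025))
indices = [100 * 0.96 ** t for t in range(10)]

lam = annual_growth_rate(years, indices)
print(f"lambda = {lam:.3f}, annual change = {(lam - 1) * 100:.1f}%")
```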

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Acoustic Monitoring of Birds and Bats

| Item Category | Specific Example/Product | Function |

|---|---|---|

| Autonomous Recorder (ARU) | Wildlife Acoustics Song Meter SM4, AudioMoth, Barrie | Programmable, weatherproof device for long-duration audio capture in the field. |

| Calibrated Microphone | Wildlife Acoustics SMM-U2, Dodotronic Ultramic | High-sensitivity, omnidirectional microphone for capturing ultrasonic (bat) and avian frequencies. |

| Acoustic Analysis Software | Kaleidoscope Pro, Tadarida, R package monitoR | Automated species identification via machine learning algorithms and manual spectrogram review. |

| Reference Call Library | Bats of the Americas, Xeno-canto, BTO Bird Call Library | Curated, validated audio database essential for training classifiers and manual verification. |

| Weatherproof Enclosure & Power | Pelican case, 12V external battery, solar panel kit | Protects electronics and provides continuous power for extended deployments (>1 week). |

| Spatial Analysis Software | QGIS, ArcGIS, R package sf | For site stratification, sensor placement mapping, and spatial analysis of acoustic data. |

Visualized Workflows and Relationships

Acoustic Monitoring Core Applications & Data Flow

BACI Design for Habitat Impact Assessment

This whitepaper explores the critical intersection between environmental noise pollution research and foundational auditory system models, contextualized within a broader thesis on acoustic monitoring for avian and chiropteran species. The physiological parallels between vertebrate auditory systems provide a unique translational bridge: understanding noise-induced damage in wildlife models directly informs mechanistic studies in mammalian laboratory models, which in turn drive therapeutic development for hearing loss. Acoustic monitoring data from field studies quantifies the anthropogenic noise threat, while controlled laboratory models deconstruct the underlying pathophysiology.

Section 1: The Translational Bridge: From Field Data to Molecular Insight

Quantifying the Environmental Stressor: Noise Pollution Metrics

Acoustic monitoring of habitats provides the empirical foundation for understanding the noise exposure relevant to wildlife and human health. Key metrics are summarized below.

Table 1: Key Noise Pollution Metrics from Acoustic Monitoring Studies

| Metric | Definition | Typical Range in Impacted Habitats | Relevance to Auditory Research |

|---|---|---|---|

| Equivalent Continuous Sound Level (Leq) | The constant sound level that would deliver the same total acoustic energy as the fluctuating noise over a period. | 65-85 dBA (near road/air traffic) | Sets baseline for chronic exposure models in laboratory studies. |

| Peak Sound Pressure Level (SPL) | The maximum instantaneous sound pressure. | 120-140 dB SPL (from impulsive sources like construction) | Models acute acoustic trauma for mechanistic studies of hair cell rupture. |

| Frequency Spectrum | Distribution of acoustic energy across frequencies. | Dominant energy often in 0.5-2 kHz (anthropogenic), vs. 20-120 kHz (bat calls). | Determines frequency-specific cochlear damage; aligns lab model exposure with real-world stimuli. |

| Temporal Profile | Pattern of noise over time (continuous, intermittent, impulsive). | Variable: continuous (traffic), intermittent (aircraft), impulsive (pile-driving). | Informs exposure protocol design to mimic ecological relevance. |
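
The Leq definition in Table 1 corresponds to averaging on an energy scale rather than averaging decibel values directly. A minimal sketch, assuming equally spaced short-term level readings in dB (the readings are hypothetical):

```python
import math

def leq_db(levels_db):
    """Equivalent continuous level from equal-duration level readings in dB."""
    mean_energy = sum(10 ** (level / 10) for level in levels_db) / len(levels_db)
    return 10 * math.log10(mean_energy)

# One loud and one quiet interval of equal duration.
readings = [70.0, 60.0]
print(f"Leq = {leq_db(readings):.1f} dB, "
      f"arithmetic mean = {sum(readings) / len(readings):.1f} dB")
```

Because the average is taken over energy, loud intervals dominate: the Leq of the two readings is well above their arithmetic mean, which is why brief loud events matter when setting laboratory exposure levels from field data.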

Auditory System Commonalities: Birds, Bats, and Mammalian Models

The avian and mammalian cochleae, while anatomically distinct, share core principles of tonotopic frequency organization, mechanosensory hair cell function, and susceptibility to noise-induced oxidative stress and excitotoxicity. Bats, reliant on ultrasonic hearing for echolocation, offer a model of high-frequency auditory processing and of apparent resistance to noise-induced damage. These parallels support the use of standard laboratory models (mice, rats, guinea pigs) to investigate phenomena observed in field studies.

Section 2: Core Experimental Models and Methodologies

In Vivo Model: Controlled Noise Exposure and Functional Assessment

Protocol Title: Standardized Noise Exposure and Auditory Brainstem Response (ABR) Threshold Assessment in Rodents.

Objective: To induce noise-induced hearing loss (NIHL) and quantify functional auditory threshold shifts.

Materials:

- Adult C57BL/6J or FVB/NJ mice (or Hartley guinea pigs).

- Calibrated noise generation system (e.g., Tucker-Davis Technologies).

- Sound-attenuating chamber.

- Anesthesia system (e.g., ketamine/xylazine cocktail).

- Subdermal needle electrodes.

- ABR recording system (e.g., BioSigRZ).

Procedure:

- Pre-Exposure Baseline ABR: Anesthetize animal. Place electrodes (vertex-active, mastoid-reference, ground-hip). Present acoustic clicks and tone bursts (4, 8, 16, 32 kHz) at decreasing intensities from 90 to 20 dB SPL. Record neural responses; threshold is the lowest intensity eliciting a reproducible Wave II/III pattern.

- Noise Exposure: Place the awake animal in a wire mesh cage within the sound chamber. Expose to octave-band noise (e.g., spanning 8-16 kHz) at 100 dB SPL for 2 hours. Calibrate the sound level at the cage location with a precision sound level meter.

- Post-Exposure ABR: Perform ABR at 24 hours, 1 week, and 2 weeks post-exposure to track temporary (TTS) and permanent (PTS) threshold shifts.

- Cochlear Harvest: Terminally anesthetize and perfuse animal. Extract temporal bones for histological analysis (see Protocol 2.2).
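
The threshold and shift calculations in this procedure can be sketched as follows. The example assumes that responses have already been scored as present or absent at each intensity, and it adopts one common convention: the shift remaining at 2 weeks is treated as permanent (PTS), and the part of the 24-hour shift that recovers is treated as temporary. All threshold values are hypothetical.

```python
def abr_threshold(responses):
    """Lowest intensity (dB SPL) with a reproducible response, or None.

    `responses` maps stimulus intensity to True/False (response present).
    """
    detected = [level for level, present in responses.items() if present]
    return min(detected) if detected else None

def threshold_shifts(baseline, post):
    """Per-frequency shift (dB) of post-exposure thresholds from baseline."""
    return {freq: post[freq] - baseline[freq] for freq in baseline}

# Hypothetical scored responses at one frequency, 90 down to 20 dB SPL.
scored = {90: True, 80: True, 70: True, 60: True, 50: True,
          40: True, 30: True, 20: False}
print("threshold:", abr_threshold(scored), "dB SPL")

# Hypothetical thresholds (dB SPL) keyed by tone-burst frequency in kHz.
baseline = {8: 25, 16: 20, 32: 30}
day_1 = {8: 55, 16: 65, 32: 60}
week_2 = {8: 30, 16: 45, 32: 40}

shift_24h = threshold_shifts(baseline, day_1)
pts = threshold_shifts(baseline, week_2)
temporary = {freq: shift_24h[freq] - pts[freq] for freq in baseline}
print("PTS:", pts, "recovered (temporary) component:", temporary)
```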

Ex Vivo Model: Cochlear Explant for Mechanistic Studies

Protocol Title: Cochlear Explant Culture for Hair Cell Survival and Immunohistochemical Analysis.

Objective: To directly observe noise-mimetic damage and test otoprotective compounds on sensory epithelium.

Materials:

- Postnatal day 3-5 rodent pups.

- Dissection microscope and micro-dissection tools.

- Basal Medium Eagle, Fetal Bovine Serum, Penicillin-Streptomycin.

- Culture inserts (e.g., Millicell-CM).

- Neomycin or gentamicin (for ototoxin-induced damage model).

- Fixative (4% Paraformaldehyde).

| Item | Function |
|---|---|
| 4% Paraformaldehyde (PFA) | Cross-linking fixative for preserving cochlear tissue morphology for immunostaining. |
| Phalloidin (FITC or TRITC conjugate) | Binds to F-actin, selectively staining the cuticular plate and stereocilia bundles of hair cells. |
| Myosin VIIa or VI Primary Antibody | Specific marker for immunohistochemical identification of hair cell cytoplasm. |
| TUNEL Assay Kit | Labels 3'-OH ends of fragmented DNA, enabling detection of apoptotic hair cells. |
| Cisplatin or Neomycin Sulfate | Common ototoxic reagents used in explants to chemically induce hair cell death, modeling noise-induced loss. |
| N-acetylcysteine (NAC) or D-methionine | Antioxidant reagents tested as potential otoprotectants against oxidative stress in hair cells. |
| Fluorescence Mounting Medium | Preserves fluorescence and provides correct refractive index for high-resolution microscopy. |

Procedure:

- Dissection: Sacrifice P3-P5 pup, decapitate, and place skull in ice-cold HBSS. Remove temporal bones, open otic capsule, and carefully extract the cochlear duct. Remove the stria vascularis and spiral ligament.

- Culture: Place the intact organ of Corti explant on a collagen-coated cell culture insert in a dish. Culture in serum-containing medium at 37°C, 5% CO2.

- Insult: After 24-hour acclimation, add ototoxin (e.g., 1 mM neomycin for 24h) to the medium to induce hair cell loss, modeling noise-induced damage.

- Fixation and Staining: Fix explants in 4% PFA for 1 hour. Permeabilize with 0.3% Triton X-100. Stain with Phalloidin (for stereocilia) and anti-Myosin VIIa antibody. Counterstain nuclei with DAPI.

- Imaging & Quantification: Image using a confocal microscope. Count intact hair cells per 100 µm length of the basilar membrane in the middle turn. Compare treated vs. control explants.
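
The treated-versus-control comparison in the final step can be expressed as a percent-survival calculation. The sketch below uses invented counts, and the "protection" metric (share of ototoxin-induced loss prevented by the test compound) is one possible summary chosen for illustration, not a measure prescribed by the protocol.

```python
from statistics import mean

# Hypothetical hair cell counts per 100 µm of middle-turn basilar membrane,
# one value per explant. Illustrative numbers only.
counts = {
    "control": [58, 61, 60, 59],
    "neomycin": [22, 18, 25, 19],
    "neomycin + NAC": [41, 45, 39, 43],
}


def percent_survival(group_counts, control_counts):
    """Mean hair cell count of a group as a percentage of the control mean."""
    return 100.0 * mean(group_counts) / mean(control_counts)


survival = {
    name: percent_survival(values, counts["control"])
    for name, values in counts.items()
}

# Assumption: "protection" is the share of the ototoxin-induced loss that the
# candidate compound prevented.
loss_toxin = 100.0 - survival["neomycin"]
loss_treated = 100.0 - survival["neomycin + NAC"]
protection = 100.0 * (loss_toxin - loss_treated) / loss_toxin

for name, value in survival.items():
    print(f"{name:<15} survival: {value:5.1f}% of control")
print(f"Protection by NAC: {protection:.1f}% of neomycin-induced loss prevented")
```

A real analysis would add a statistical test across explants; the sketch only shows how the counts map to the comparison.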

Section 3: Key Pathophysiological Pathways in Noise-Induced Hearing Loss

The primary mechanisms underlying NIHL involve metabolic exhaustion, oxidative stress, and excitotoxicity, culminating in hair cell apoptosis or necrosis. The following diagram outlines the core signaling cascade.

Title: Core Pathway of Noise-Induced Hair Cell Damage

Section 4: Integrated Research Workflow

Translational research in this field requires a closed-loop workflow from environmental observation to preclinical validation.

Title: Translational Research Workflow from Field to Lab

The biomedical crossroads of noise pollution studies and auditory research models represents a potent synergy. Data derived from acoustic monitoring of wildlife provides ecologically relevant exposure parameters, driving biologically precise laboratory investigations. The dissection of conserved molecular pathways in standardized models enables the development of therapeutic interventions, with potential benefits spanning from conservation biology to human auditory health. This integrated approach underscores the value of interdisciplinary research in addressing the pervasive challenge of noise-induced hearing loss.

Clearing the Static: Troubleshooting Common Pitfalls and Optimizing Data Integrity

Acoustic monitoring has become a cornerstone methodology in avian and chiropteran research, providing critical data on species presence, distribution, behavior, and population trends. However, the fidelity of this bioacoustic data can be severely compromised by environmental and anthropogenic noise. This guide details technical strategies for mitigating three pervasive noise sources: wind, rain, and anthropogenic activity. It is framed within a thesis focused on advancing the accuracy and reliability of acoustic monitoring for ecological study and, by extension, for bio-inspired drug discovery, where natural soundscapes inform biomedical research.

Noise Source Characterization and Impact

| Noise Source | Frequency Range | Primary Impact on Signal | Typical Level at Sensor (dB SPL) |
|---|---|---|---|
| Wind | Broadband (low-frequency dominant, <1 kHz) | Masks low-frequency calls, induces microphone turbulence noise, causes physical vibration. | 40-70 (5-15 mph wind) |
| Rain | Broadband, impulsive (2-15 kHz) | Saturates recordings with splash/impact noise, masks mid-high frequency calls, risks equipment damage. | 50-80+ (moderate to heavy) |
| Anthropogenic (e.g., traffic) | Low to mid-frequency (<3 kHz) | Pervasive, chronic masking of bird/bat calls, introduces cyclic patterns confounding automated detection. | 55-75 (50 m from road) |

Mitigation Strategies: Experimental Protocols & Hardware

Wind Noise Abatement Protocol

Objective: To physically decouple the microphone diaphragm from wind-induced pressure fluctuations.

Detailed Methodology:

- Windbreak Fabrication: Construct a spherical windscreen using two layers: a) an inner layer of 30 PPI (pores per inch) open-cell foam (minimum 2-inch thickness), and b) an outer layer of synthetic fur (pile length ~20mm). The fur disrupts laminar airflow, while the foam dissipates turbulent energy.

- Spatial Buffering: Deploy the acoustic sensor (e.g., AudioMoth, SM4) within a dense shrub layer or on the leeward side of natural windbreaks, never on exposed poles. A minimum distance of 10x the obstacle height is recommended.

- Vibration Isolation: Mount the sensor on a vibration-dampening platform (e.g., Sorbothane hemisphere) within its housing to isolate from pole movement.

- Validation Experiment: Conduct a controlled field test. Record for 24 hours with and without the full wind-abatement apparatus during constant wind speeds (5-15 mph). Use a calibrated anemometer and co-located reference microphone. Quantify the reduction in noise floor (dB) in the 100-1000 Hz band via spectral analysis.
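
The noise-floor comparison in the validation step can be sketched as a band-power calculation. The example below is dependency-free and therefore uses a naive DFT; an FFT-based estimator from a numerical library would be the practical choice for real recordings. The sample rate, the synthetic "unshielded" noise, and the "shielded" signal (the same noise attenuated by a factor of 4) are all stand-ins for illustration, not field data.

```python
import cmath
import math
import random


def band_level_db(samples, sample_rate, f_lo, f_hi):
    """Band power in dB (relative to a full-scale amplitude of 1.0).

    Only DFT bins between f_lo and f_hi are evaluated. The naive DFT keeps the
    sketch dependency-free; it is far too slow for long recordings.
    """
    n = len(samples)
    k_lo = math.ceil(f_lo * n / sample_rate)
    k_hi = math.floor(f_hi * n / sample_rate)
    power = 0.0
    for k in range(k_lo, k_hi + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(samples)
        )
        # Factor 2 accounts for the mirrored negative-frequency bin.
        power += 2.0 * abs(coeff / n) ** 2
    return 10.0 * math.log10(power)


SAMPLE_RATE = 8000  # Hz, hypothetical; chosen only to keep the example small
rng = random.Random(42)

# Stand-in signals: broadband noise for the bare microphone, and the same noise
# attenuated by a factor of 4 to mimic the effect of a windscreen.
unshielded = [rng.gauss(0.0, 0.1) for _ in range(512)]
shielded = [0.25 * x for x in unshielded]

reduction_db = (
    band_level_db(unshielded, SAMPLE_RATE, 100, 1000)
    - band_level_db(shielded, SAMPLE_RATE, 100, 1000)
)
print(f"Noise floor reduction in 100-1000 Hz band: {reduction_db:.2f} dB")
```

With real data, the two inputs would be time-aligned segments from the treated sensor and the co-located reference microphone, averaged over many segments at comparable anemometer readings.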

Rain Noise Mitigation Protocol

Objective: To prevent direct rain impact on the sensor and dissipate droplet energy.

Detailed Methodology:

- Rain Shield Deployment: Install a custom, large-diameter (≥30cm) hydrophobic rain shield (e.g., PVC or aluminum) angled at 45 degrees, positioned 15-20cm directly above the sensor housing. Ensure no part of the shield is within the microphone's field of view to avoid reflecting target sounds.

- Hydrophobic Windscreen Treatment: Treat the outer fur layer of the windscreen with a water-repellent spray (e.g., fluorocarbon-based) to prevent saturation, which reduces acoustic transparency.

- Drainage and Sealing: Ensure all housing seals are intact (IP67 rating minimum). Design mounting brackets with drip edges. For ground units, place on a slight mound to avoid water pooling.

- Validation Experiment: Simulate rainfall using a standardized irrigation system (e.g., 2 inches/hour). Compare recordings from a shielded versus unshielded, but otherwise identical, sensor. Analyze the number of impulsive noise events (spikes >80 dB) and the increase in mean spectral amplitude across 2-15 kHz.
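
Counting impulsive events in the validation step can be sketched as a run-detection pass over per-frame levels. The example assumes the recordings have already been converted to calibrated frame levels in dB SPL, and it treats each unbroken run of frames above the 80 dB threshold as one event; both choices are assumptions for illustration, and the level series are invented.

```python
def count_impulsive_events(frame_levels_db, threshold_db=80.0):
    """Count runs of consecutive frames whose level exceeds the threshold."""
    events = 0
    above = False
    for level in frame_levels_db:
        if level > threshold_db and not above:
            events += 1  # a new run starts on the first frame above threshold
        above = level > threshold_db
    return events


# Hypothetical per-frame levels (dB SPL) from two otherwise identical sensors.
unshielded = [62, 85, 88, 61, 83, 60, 90, 91, 92, 63]
shielded = [58, 66, 81, 57, 60, 59, 70, 82, 61, 58]

print("Unshielded events:", count_impulsive_events(unshielded))
print("Shielded events:  ", count_impulsive_events(shielded))
```

Merging consecutive frames into a single event avoids counting one long droplet impact several times when the frame length is short.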

Anthropogenic Noise Mitigation Protocol

Objective: To minimize the intrusion of human-generated noise through spatial, temporal, and analytical planning.

Detailed Methodology:

- Site Selection via Soundscape Modeling: Prior to deployment, use a propagation model (e.g., ISO 9613-2) with GIS layers for roads, industrial zones, and topography to predict noise contours. Select sites where modeled anthropogenic noise is at least 6 dB below the target species' call amplitude.

- Temporal Sampling Strategy: For chronic noise sources (highways), program sensors to record during acoustically quiescent periods (e.g., 0300-0530 local time). For episodic sources (agriculture), use schedule triggers based on known activity patterns.

- Hardware Filtering: Apply an analog high-pass filter (cutoff ~200 Hz) at the microphone preamplifier stage to attenuate dominant low-frequency traffic rumble before analog-to-digital conversion, preserving dynamic range.

- Validation Experiment: Conduct a gradient study. Deploy transects of sensors at 100m, 300m, and 500m from a defined noise source. Record synchronously for 72 hours. Correlate distance with both the absolute noise level in the anthropogenic band (e.g., 100-1500 Hz) and the subsequent detection probability of target species calls using automated recognition software (e.g., Kaleidoscope, or a custom classifier built in TensorFlow).
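
The 6 dB site-selection criterion can be illustrated with a heavily simplified propagation estimate. The sketch keeps only the geometric divergence term of ISO 9613-2 for a point source and ignores atmospheric absorption, ground effect, and barriers, so it is not a substitute for a full propagation model. The source sound power level and the call level at the sensor are hypothetical values.

```python
import math


def received_level_db(source_power_db, distance_m):
    """Level at a distance from a point source, geometric divergence only.

    Uses the divergence term A_div = 20*log10(d / 1 m) + 11 dB and ignores
    atmospheric absorption, ground effect, and barrier attenuation.
    """
    a_div = 20.0 * math.log10(distance_m) + 11.0
    return source_power_db - a_div


def site_is_acceptable(noise_level_db, call_level_db, margin_db=6.0):
    """True if modeled noise sits at least margin_db below the call level."""
    return call_level_db - noise_level_db >= margin_db


SOURCE_POWER_DB = 100.0  # hypothetical sound power level of the noise source
CALL_LEVEL_DB = 45.0     # hypothetical target call level at the sensor

for distance in (100.0, 300.0, 500.0):
    noise = received_level_db(SOURCE_POWER_DB, distance)
    verdict = "accept" if site_is_acceptable(noise, CALL_LEVEL_DB) else "reject"
    print(f"{distance:5.0f} m: modeled noise {noise:5.1f} dB -> {verdict}")
```

A road behaves more like a line source than a point source, so a real assessment would rely on the full model with GIS layers as described in the site-selection step.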

Diagram 1: Integrated noise mitigation workflow from planning to analysis.

Post-Hoc Digital Signal Processing (DSP) Techniques

| Algorithm | Target Noise | Key Parameter | Performance Metric (Typical Improvement) |
|---|---|---|---|
| Spectral Gating | Wind, transient rain | Threshold: -30 dBFS, Attack/Release: 5 ms/20 ms | +15% true positive rate for bat calls in windy conditions. |
| Adaptive Filtering (LMS) | Chronic anthropogenic (e.g., generator) | Filter length: 256 taps, Step size (μ): 0.01 | Up to 10 dB noise reduction in the 60 Hz harmonic band. |
| Wavelet Denoising | Impulsive rain | Wavelet: Daubechies 4, Level: 6 | Reduces impulsive events by 70%, preserves call structure. |
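
As one illustration of the adaptive filtering row, the sketch below implements a basic LMS noise canceller in pure Python. It assumes a separate reference channel that picks up the hum but not the call, which the table does not specify. The signals are synthetic, and the filter uses 16 taps rather than 256 purely to keep the loop fast; the step size matches the table's 0.01.

```python
import math


def lms_cancel(primary, reference, n_taps=16, mu=0.01):
    """Adaptive noise canceller using the LMS update rule.

    The filter learns to predict the noise in `primary` from `reference`;
    the prediction error is returned as the cleaned signal.
    """
    weights = [0.0] * n_taps
    history = [0.0] * n_taps  # most recent reference samples, newest first
    cleaned = []
    for d, x in zip(primary, reference):
        history = [x] + history[:-1]
        estimate = sum(w * h for w, h in zip(weights, history))
        error = d - estimate
        weights = [w + mu * error * h for w, h in zip(weights, history)]
        cleaned.append(error)
    return cleaned


def mean_square(values):
    return sum(v * v for v in values) / len(values)


FS = 2000  # Hz, hypothetical sample rate kept low for the pure-Python loop
N = 4000
t = [i / FS for i in range(N)]
call = [0.1 * math.sin(2 * math.pi * 500 * ti) for ti in t]       # stand-in call
hum = [0.5 * math.sin(2 * math.pi * 60 * ti + 0.7) for ti in t]   # 60 Hz hum
reference = [math.sin(2 * math.pi * 60 * ti) for ti in t]         # hum reference
primary = [c + h for c, h in zip(call, hum)]

cleaned = lms_cancel(primary, reference)

# Compare hum power before and after, using the second half of the signal so
# the filter has had time to converge.
half = N // 2
hum_before = mean_square(hum[half:])
hum_after = mean_square([e - c for e, c in zip(cleaned[half:], call[half:])])
reduction_db = 10.0 * math.log10(hum_before / hum_after)
print(f"Residual hum reduced by {reduction_db:.1f} dB")
```

The reduction on this clean synthetic tone will be much larger than field values, where the reference is imperfect and the noise is not a pure sinusoid.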

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Category | Primary Function in Experiment |
|---|---|---|
| Open-Cell Polyurethane Foam (30 PPI) | Physical Barrier | Core windscreen material; dissipates turbulent wind energy before it reaches microphone. |
| Synthetic Fur Fabric (20 mm pile) | Physical Barrier | Outer windscreen layer; disrupts laminar airflow, provides hydrophobic surface. |
| Sorbothane Hemispheres (Isolation Feet) | Vibration Dampener | Absorbs mechanical vibrations from wind or mounting structures. |
| Fluorocarbon Water-Repellent Spray | Chemical Treatment | Maintains acoustic transparency of windscreens by preventing water saturation. |
| Programmable Acoustic Sensor (e.g., AudioMoth) | Data Acquisition | Enables flexible, high-resolution recording with precise temporal scheduling. |
| iZotope RX Spectral Denoise Module | Software/DSP | Provides advanced, user-adjustable spectral subtraction for post-hoc noise cleaning. |
| Kaleidoscope Pro Software | Analysis | Automated species detection and classification; includes noise-resistant templates. |

Diagram 2: Simplified pathway of signal corruption.

In acoustic monitoring research on birds and bats, automated classifiers are indispensable for processing vast datasets. However, their deployment is hampered by three persistent challenges: high false positive rates, inherent species bias, and severe data imbalance. These problems directly affect the reliability of population estimates and trend analyses, and the efficacy of conservation interventions. This technical guide deconstructs these issues within the context of ecological informatics, providing methodologies for diagnosis and mitigation.

Quantifying the Problem: Current Landscape

Recent studies and benchmarking reports highlight the prevalence and impact of these classifier issues. The following table summarizes key quantitative findings from current literature.

Table 1: Prevalence and Impact of Classifier Issues in Bioacoustics (2023-2024)

| Issue | Reported Metric | Typical Range in Studies | Primary Impact on Research |
|---|---|---|---|
| False Positive Rate (FPR) | FPR for common species | 5-25% | Inflates species occupancy estimates; increases manual validation workload by 30-50%. |
| Species Bias | Recall disparity (common vs. rare) | 40-75% difference | Under-detection of rare/elusive species; skewed biodiversity metrics. |
| Data Imbalance | Class ratio in training sets | 1000:1 (common:rare) | Classifier indifference to rare classes; high false negative rates for species of conservation concern. |
| Vocalization Variability | Accuracy drop across regions | 15-30% decrease | Poor model generalizability; requires locale-specific retraining. |
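
Two of the metrics in the table, false positive rate and recall disparity, can be computed directly from per-class confusion counts. The sketch below uses invented counts and the standard definitions FPR = FP / (FP + TN) and recall = TP / (TP + FN); individual studies may define these quantities differently, so the definitions here are an assumption.

```python
def recall(tp, fn):
    """Fraction of true calls that the classifier detected."""
    return tp / (tp + fn)


def false_positive_rate(fp, tn):
    """Fraction of non-target clips that the classifier flagged as the target."""
    return fp / (fp + tn)


# Hypothetical per-class confusion counts from a validated test set.
confusion = {
    "common_species": {"tp": 900, "fn": 100, "fp": 150, "tn": 850},
    "rare_species": {"tp": 3, "fn": 7, "fp": 20, "tn": 1970},
}

metrics = {
    name: {
        "recall": recall(c["tp"], c["fn"]),
        "fpr": false_positive_rate(c["fp"], c["tn"]),
    }
    for name, c in confusion.items()
}

# Recall disparity: how much worse the classifier detects the rare class.
recall_disparity = metrics["common_species"]["recall"] - metrics["rare_species"]["recall"]

for name, m in metrics.items():
    print(f"{name:<15} recall {m['recall']:.2f}, FPR {m['fpr']:.3f}")
print(f"Recall disparity (common - rare): {recall_disparity:.2f}")
```

Reporting the metrics per class, rather than as a single overall accuracy, is what exposes the imbalance: the rare class here contributes only ten positive examples.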

Experimental Protocols for Diagnosis

A rigorous diagnosis is a prerequisite to mitigation. Below are detailed protocols for experiments to quantify each conundrum.

Protocol 2.1: Auditing False Positives

Objective: To systematically categorize and quantify sources of false positives in an acoustic classifier.

- Sample Collection: Randomly select 500-1000 positive detections from the classifier output over a defined spatiotemporal window.

- Human Validation: Expert annotators review each detection spectrogram and audio clip, labeling as: True Positive (TP), False Positive from abiotic source (wind, rain), False Positive from non-target biotic source (insect, other species), or False Positive from equipment artifact.