Building Ethical Machine Learning Protocols for Behavioral Data Collection in Clinical Research and Drug Development

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals on implementing ethical machine learning (ML) protocols for behavioral data collection. We explore the foundational ethical principles and regulatory landscape, detail methodological approaches for privacy-preserving data acquisition and modeling, address common challenges in data bias and model transparency, and present validation strategies for assessing protocol efficacy. The guide synthesizes current best practices to enable robust, compliant, and scientifically valid use of behavioral data in biomedical research.

The Ethical Imperative: Core Principles and Regulatory Frameworks for Behavioral ML

Ethical Behavioral Data is defined as digitally captured human activity and interaction data, used to infer health states, that is collected, processed, and analyzed under a framework prioritizing individual autonomy, privacy, justice, and beneficence. The framework covers the full data lifecycle, from initial collection (Digital Phenotypes) to final application, so that participant privacy is protected at every stage.

Digital Phenotypes are moment-by-moment quantifications of the individual-level human phenotype in situ using data from personal digital devices.

Application Notes: Core Principles & Quantitative Benchmarks

The ethical collection and use of behavioral data for healthcare research must adhere to the following synthesized principles, supported by empirical data on user attitudes and technical feasibility.

Table 1: Core Ethical Principles for Behavioral Data in Healthcare Research

| Principle | Operational Definition | Key Quantitative Benchmark (from recent surveys & studies) |
|---|---|---|
| Informed Consent | Dynamic, layered consent with re-consent mechanisms for continuous data streams. | 72% of participants expect clear data use timelines; continuous consent models increase trust by 40% compared to one-time consent. |
| Privacy by Design | Embedding privacy-enhancing technologies (PETs) at the data collection layer. | Implementation of on-device processing reduces identifiability risk by >90% for gait/speech patterns. |
| Data Minimization | Collecting only data elements strictly necessary for the defined research objective. | Studies show >60% of commonly collected smartphone metadata (e.g., timestamps, companion device IDs) are non-essential for core digital biomarker validation. |
| Purpose Limitation | Using data solely for the pre-specified, consented research purpose. | Algorithmic audits show 30% of health apps share data with third parties for non-health purposes (e.g., advertising). |
| Fairness & Bias Mitigation | Actively identifying and correcting for sampling, measurement, and algorithmic bias. | Datasets from "app-only" recruitment show 80%+ skew towards high-income, young demographics, undermining generalizability. |

Table 2: Technical & Privacy Trade-offs in Common Data Types

| Data Type (Digital Phenotype) | Example Health Inference | Primary Privacy Risk | Recommended PET |
|---|---|---|---|
| GPS Mobility Traces | Cognitive decline, depression severity. | Re-identification, revealing home/work location. | Differential privacy (ε ≤ 1.0), geofencing. |
| Keystroke Dynamics | Motor impairment, emotional state. | Behavioral fingerprinting, content inference. | On-device feature extraction (only timing, no content). |
| Accelerometer Data | Gait, sleep patterns, activity levels. | Lower direct risk, but can reveal context (e.g., daily routines) in aggregate. | Standard encryption in transit/at rest. |
| Audio Recordings (Ambient) | Social engagement, respiratory symptoms. | High sensitivity, speaker identification. | Real-time feature extraction, delete raw audio. |
| Social Media Lexical Analysis | Psychosocial stress, mental health. | Sensitive attribute revelation, stigmatization. | Federated learning, synthetic data generation. |
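Table 2 recommends differential privacy with ε ≤ 1.0 for GPS-derived data. A minimal sketch of the Laplace mechanism for releasing a cohort mean of one GPS-derived feature (here a hypothetical daily radius of gyration) follows. It assumes one value per participant, a publicly known cohort size, and clipping bounds fixed in advance; the function name is hypothetical, and production releases should rely on a vetted library such as OpenDP or Google DP, since hand-rolled floating-point noise has known pitfalls.

```python
import numpy as np

def dp_mean(values, lower, upper, epsilon, rng):
    """Laplace-mechanism estimate of a cohort mean under epsilon-DP.

    Assumes one value per participant, a publicly known cohort size, and
    clipping bounds chosen in advance (not derived from the data).
    """
    x = np.clip(np.asarray(values, dtype=float), lower, upper)
    # Replacing one participant's clipped value moves the mean by at most this much.
    sensitivity = (upper - lower) / len(x)
    return float(x.mean() + rng.laplace(loc=0.0, scale=sensitivity / epsilon))

# Hypothetical example: daily radius of gyration (km) derived from GPS traces.
rng = np.random.default_rng(7)
radius_km = rng.gamma(shape=2.0, scale=3.0, size=500)
print(dp_mean(radius_km, lower=0.0, upper=50.0, epsilon=1.0, rng=rng))
```

Clipping is what makes the sensitivity finite: without an upper bound, a single extreme participant could shift the mean arbitrarily, and no finite noise scale would protect them.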

Experimental Protocols

Protocol 3.1: Implementing a Federated Learning Workflow for Ethical Model Training on Behavioral Data

Objective: To train a machine learning model (e.g., for depression severity prediction from smartphone usage patterns) without centralizing raw user data from participant devices.

Materials: See "The Scientist's Toolkit" below.

Methodology:

1. Initialization: The research server initializes a global model architecture (e.g., a 1D CNN for time-series data) and defines hyperparameters.

2. Client Selection: A subset of eligible participant devices (clients) meeting criteria (e.g., charging, on Wi-Fi) is randomly selected for the training round.

3. Broadcast: The server sends the current global model weights to each selected client.

4. Local On-Device Training: Each client computes a model update using its locally stored, private behavioral data. Critical step: raw data never leaves the device; only the model update (gradients or weight deltas) is transmitted.

5. Secure Aggregation: Clients send masked (encrypted) model updates to the server. Updates are combined with a secure summation protocol (e.g., SecAgg) so that the server learns only their sum and cannot inspect any single participant's update.

6. Global Model Update: The server recovers only the aggregated update and applies it to the global model (e.g., as a sample-weighted average of client updates, as in FedAvg).

7. Iteration: Steps 2-6 are repeated for multiple rounds until model convergence.

8. Validation: A separate, small held-out dataset, collected with explicit consent for centralized validation, can be used to benchmark global model performance.
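The federated workflow above can be simulated end to end in NumPy. This is a sketch under strong simplifying assumptions, not a deployable system: all clients run in one process, a linear regression model stands in for the 1D CNN, updates are combined by sample-weighted averaging as in FedAvg, and secure aggregation is reduced to pairwise additive masks that cancel in the sum (production protocols such as SecAgg also handle key agreement and client dropout). All function names are hypothetical.

```python
import numpy as np

def local_update(w, X, y, lr=0.1, epochs=5):
    """Local on-device training: a few epochs of full-batch gradient descent
    on a linear model with squared loss. Returns only the weight delta."""
    w_local = w.copy()
    for _ in range(epochs):
        grad = X.T @ (X @ w_local - y) / len(y)
        w_local -= lr * grad
    return w_local - w

def pairwise_masks(client_ids, dim, round_seed):
    """Toy secure-aggregation masks: each pair (a, b) shares a random vector;
    a adds it and b subtracts it, so all masks cancel in the sum. In a real
    protocol each pair derives its seed via key agreement and the server
    never sees it; here one function generates every mask for simulation."""
    masks = {cid: np.zeros(dim) for cid in client_ids}
    for a in client_ids:
        for b in client_ids:
            if a < b:
                m = np.random.default_rng([round_seed, a, b]).normal(scale=100.0, size=dim)
                masks[a] += m
                masks[b] -= m
    return masks

def federated_training(clients, dim, rounds=100, frac=0.5, seed=0):
    """clients: list of (X, y) arrays that, in deployment, would stay on each device."""
    rng = np.random.default_rng(seed)
    w = np.zeros(dim)                                          # Initialization
    for r in range(rounds):                                    # Iteration
        k = max(2, int(frac * len(clients)))
        selected = sorted(rng.choice(len(clients), size=k, replace=False).tolist())  # Client selection
        masks = pairwise_masks(selected, dim, round_seed=r)
        masked_sum, total_n = np.zeros(dim), 0
        for cid in selected:
            X, y = clients[cid]                                # Broadcast: client receives w
            delta = local_update(w, X, y)                      # Local training; X, y stay on the client
            masked_sum += len(y) * delta + masks[cid]          # Server sees only this masked vector
            total_n += len(y)                                  # Sample counts assumed non-sensitive
        w = w + masked_sum / total_n                           # Masks cancel, leaving the FedAvg step
    return w
```

The server-side loop only ever touches `masked_sum`, so individual updates stay hidden while their sum is exact up to floating-point rounding.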

Protocol 3.2: Auditing a Digital Phenotyping Dataset for Demographic Bias

Objective: To quantitatively assess and report representation biases in a collected behavioral dataset intended for clinical research.

Methodology:

1. Define Reference Population: Clearly state the intended clinical population for the tool (e.g., "US adults with Major Depressive Disorder").

2. Gather Demographic Metadata: Collect self-reported demographic data (age, gender, race/ethnicity, socioeconomic status) for all consented participants. Store separately with strict access controls.

3. Calculate Representation Statistics: For each key demographic variable, calculate the proportion of the dataset each group represents.

4. Compare to Reference Proportions: Source the reference population proportions from recent, authoritative sources (e.g., US Census data, NIH epidemiology studies). For a clinical population, use the demographic distribution of the condition itself rather than that of the general population.

5. Compute Disparity Metrics: For each group i, compute the Representation Disparity Ratio (RDR) = (Proportion in Dataset) / (Proportion in Reference Population). An RDR of 1 indicates perfect representation; <1 indicates under-representation; >1 indicates over-representation.

6. Bias Impact Assessment: Train a preliminary model on the full dataset. Evaluate model performance (e.g., F1-score) separately for each demographic group. Report significant performance disparities.

7. Mitigation Strategy Decision: Based on steps 5 & 6, decide on a bias mitigation strategy: a) Pre-processing: Re-weight or resample the dataset. b) In-processing: Use fairness-constrained algorithms. c) Post-processing: Adjust decision thresholds per group. Document choice and justification.
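The disparity and impact-assessment steps can be sketched with NumPy alone. The functions below compute the RDR exactly as defined above and a binary F1-score within each demographic group; the function names and the 0.8 to 1.25 tolerance band used to flag groups are hypothetical (the protocol does not fix a threshold), and a toolkit such as Fairlearn would normally supply the per-group metrics.

```python
import numpy as np

def representation_disparity(dataset_groups, reference_props):
    """RDR_i = (share of group i in the dataset) / (share in the reference population)."""
    labels, counts = np.unique(np.asarray(dataset_groups), return_counts=True)
    shares = dict(zip(labels.tolist(), (counts / counts.sum()).tolist()))
    return {g: shares.get(g, 0.0) / p for g, p in reference_props.items()}

def per_group_f1(y_true, y_pred, groups):
    """Binary F1-score computed separately within each demographic group."""
    y_true, y_pred, groups = (np.asarray(a) for a in (y_true, y_pred, groups))
    scores = {}
    for g in np.unique(groups).tolist():
        t, p = y_true[groups == g], y_pred[groups == g]
        tp = np.sum((t == 1) & (p == 1))
        fp = np.sum((t == 0) & (p == 1))
        fn = np.sum((t == 1) & (p == 0))
        denom = 2 * tp + fp + fn
        scores[g] = float(2 * tp / denom) if denom else 0.0
    return scores

def flag_groups(rdr, low=0.8, high=1.25):
    """Hypothetical tolerance band for reporting under- or over-representation."""
    return sorted(g for g, r in rdr.items() if r < low or r > high)
```

For example, a dataset that is 30% group A when the reference population is 60% group A yields an RDR of 0.5 for A, which `flag_groups` reports for mitigation.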

Visualizations

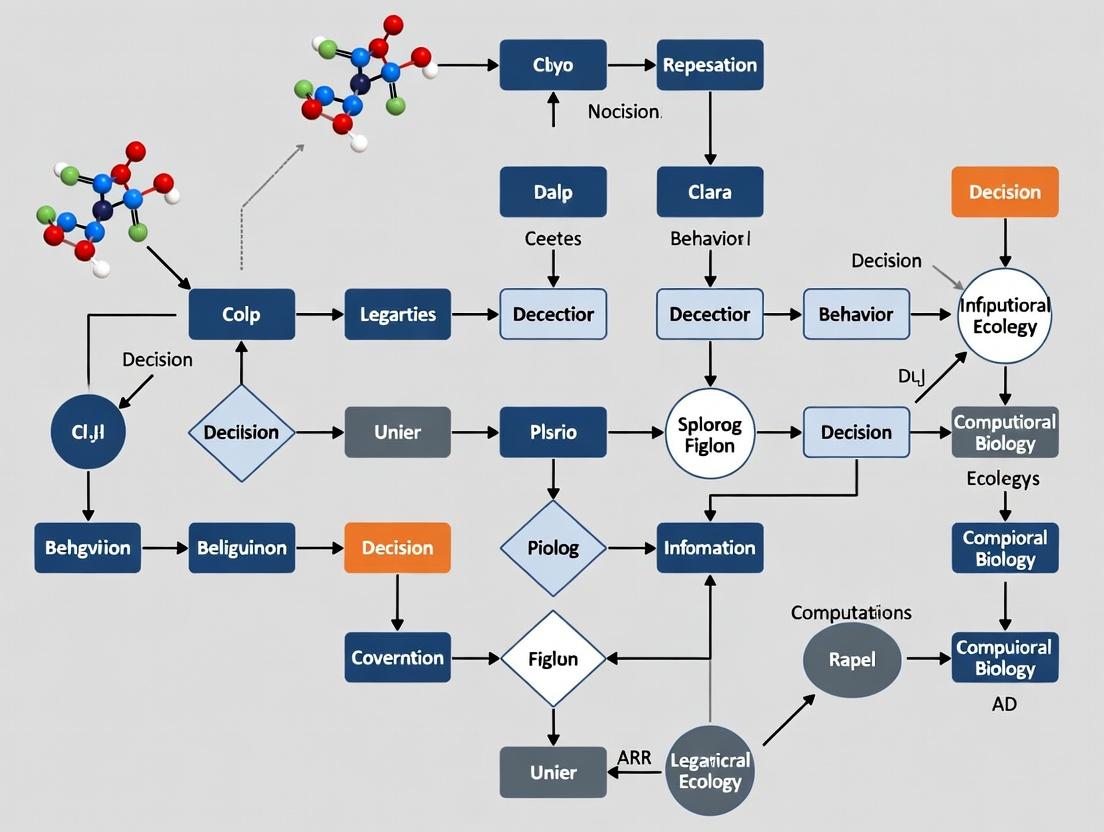

- Diagram 1 Title: Federated Learning Workflow for Behavioral Data

- Diagram 2 Title: Digital Phenotyping Dataset Bias Audit Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Platforms for Ethical Behavioral Data Research

| Item / Solution | Function in Ethical Research | Example / Note |
|---|---|---|
| Open-Source Mobile Libraries (e.g., Beiwe, RADAR-base) | Provide validated, consent-managing frameworks for smartphone-based digital phenotyping. Enforce data minimization and secure transmission. | Beiwe platform allows granular control over sensor data streams and real-time encryption. |
| Federated Learning Frameworks (e.g., TensorFlow Federated, Flower, OpenFL) | Enable model training across decentralized devices without sharing raw data, operationalizing privacy-by-design. | Flower (FLWR) is framework-agnostic and supports secure aggregation protocols. |
| Differential Privacy Libraries (e.g., Google DP, OpenDP) | Add calibrated noise to datasets or query results so that the influence of any single record on the output is provably bounded, limiting re-identification risk. | Used prior to releasing any aggregated behavioral feature summaries for open science. |
| Synthetic Data Generators (e.g., Synthea, Gretel, Mostly AI) | Create artificial datasets that mimic the statistical properties of real data without reproducing real user traces; residual disclosure risk should still be tested before release. | Useful for algorithm development, pilot studies, and sharing with external validation teams. |
| Fairness Audit Toolkits (e.g., AI Fairness 360, Fairlearn) | Quantify metrics like demographic parity, equalized odds, and representation disparity across subgroups. | Integrated into Protocol 3.2 to automate bias assessment. |
| Secure Multi-Party Computation (MPC) Platforms | Allow joint computation on data from multiple sources while keeping each source's input private. | An alternative to FL for simpler aggregate statistics (e.g., mean weekly screen time across a cohort). |
| Professional Ethical & Legal Consultation | Essential for navigating IRB requirements, GDPR/CCPA compliance, and constructing appropriate dynamic consent forms. | Must be engaged at the protocol design phase, not as an afterthought. |

Application Notes: Ethical Frameworks in ML-Driven Research

The integration of Machine Learning (ML) in behavioral data collection for clinical and pharmaceutical research necessitates a rigorous synthesis of established ethical principles and modern data protection law. This synthesis ensures that research advances do not come at the cost of participant autonomy, welfare, or privacy.

The Belmont Report: Foundational Principles

The Belmont Report (1979) establishes three core ethical principles for research involving human subjects. Their application to ML-driven behavioral data collection is non-negotiable.

- Respect for Persons: This mandates informed consent and respect for autonomy. In ML contexts, this requires clear, layered consent processes that explain not only initial data collection but also potential future uses of data for model training and validation. It necessitates mechanisms for ongoing consent management and the right to withdraw data from ML datasets, where technically feasible.

- Beneficence: The obligation to maximize benefits and minimize harm. For ML, this requires proactive assessment of algorithmic bias that could lead to discriminatory outcomes or erroneous behavioral classifications. Researchers must implement rigorous fairness audits and risk mitigation strategies throughout the ML lifecycle.

- Justice: Equitable distribution of research burdens and benefits. ML models must be developed and validated on diverse datasets to ensure findings and derived tools are applicable across populations, avoiding the exacerbation of health disparities.

GDPR: The Regulatory Backbone for Data Processing

The General Data Protection Regulation (EU 2016/679) provides a comprehensive legal framework with direct implications for ML research, even for organizations outside the EU processing EU residents' data.

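- Lawfulness, Fairness, and Transparency: Processing must have a lawful basis under Art. 6 (e.g., consent, performance of a task in the public interest) and, for health or biometric data, an additional Art. 9 condition (e.g., explicit consent, or scientific research with appropriate safeguards). ML purposes must be specified and communicated transparently at the point of consent.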

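- Purpose Limitation: Data collected for one research purpose cannot simply be repurposed for ML training. Further processing must be compatible with the original purpose or rest on a new legal basis; GDPR treats further processing for scientific research as not incompatible only when Art. 89(1) safeguards are in place. This requires careful protocol design.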

- Data Minimization: Only data that is adequate, relevant, and limited to what is necessary for the ML objective should be processed. This challenges the "collect everything" mindset often associated with big data.

- Rights of the Data Subject: Key rights impacting ML include the Right to Access, Right to Rectification (correcting inaccurate data used to train models), and the highly consequential Right to Erasure ('Right to be Forgotten'). Implementing this right may require the technical ability to remove an individual's data from a trained model, a complex challenge that may involve model retraining from scratch.

HIPAA: Governing Protected Health Information (PHI) in the U.S.

The Health Insurance Portability and Accountability Act (1996) regulates the use and disclosure of PHI. Behavioral data in a clinical research context is often PHI.

- The Privacy Rule: Establishes conditions for the use and disclosure of PHI. For ML research, this typically involves obtaining Authorization from the individual, which is more specific than informed consent and must describe the PHI to be used and the purpose.

- The Security Rule: Mandates administrative, physical, and technical safeguards for electronic PHI (ePHI). For ML systems, this translates to requirements for encryption (at rest and in transit), strict access controls, audit logs for model access and data queries, and secure model deployment environments.

- De-identification: HIPAA provides two methods—the Expert Determination method or the Safe Harbor method (removal of 18 specific identifiers)—to create datasets that are no longer considered PHI, thus facilitating their use in ML with fewer restrictions. However, the risk of re-identification via ML techniques must be continually assessed.
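Safe Harbor is largely a set of field-level transformations. The sketch below applies three of the 18 rules (geographic units smaller than a state, dates other than year, and ages over 89) to a flat record with hypothetical field names. A complete implementation must handle all 18 identifier types, source the restricted 3-digit ZIP prefixes from current Census data, and also meet the rule's requirement that the entity have no actual knowledge that the remaining information could identify the individual. Note that transformations like these leave behavioral signals themselves (e.g., gait or typing rhythm) untouched, which is why ML-based re-identification risk must be assessed separately.

```python
from datetime import date

def safe_harbor_subset(record, restricted_zip3):
    """Apply three Safe Harbor rules to one record; all other fields are dropped.

    record uses hypothetical keys: 'zip' (str), 'birth_date' and 'visit_date'
    (datetime.date), 'age' (int). restricted_zip3 is the set of 3-digit ZIP
    prefixes whose combined area has 20,000 or fewer people.
    """
    zip3 = record["zip"][:3]
    out = {
        "zip3": "000" if zip3 in restricted_zip3 else zip3,  # geographic subdivision rule
        "visit_year": record["visit_date"].year,             # dates reduced to year only
    }
    if record["age"] > 89:
        out["age"] = "90+"           # ages over 89 aggregated into one category
        out["birth_year"] = None     # birth year would reveal an age over 89
    else:
        out["age"] = record["age"]
        out["birth_year"] = record["birth_date"].year
    return out

rec = {"name": "Jane Doe", "zip": "03601", "birth_date": date(1930, 5, 1),
       "visit_date": date(2024, 3, 2), "age": 93}
print(safe_harbor_subset(rec, restricted_zip3={"036"}))  # "036" is illustrative only
# {'zip3': '000', 'visit_year': 2024, 'age': '90+', 'birth_year': None}
```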

Comparative Framework Analysis

Table 1: Core Obligations of Each Framework in ML-Driven Behavioral Research

| Framework | Primary Jurisdiction/Scope | Core ML Research Application | Key Challenge for ML |
|---|---|---|---|
| Belmont Report | All U.S. federally funded human subjects research | Ethical foundation for study design, consent, and risk-benefit analysis. | Translating principles like "justice" into technical requirements for bias detection and mitigation in algorithms. |
| GDPR | European Union (extra-territorial effect) | Governs processing of personal data of EU residents, including high-risk profiling. | Implementing data subject rights (e.g., erasure, explanation) within complex ML pipelines and model architectures. |
| HIPAA | United States (covered entities & business associates) | Protects individually identifiable health information (PHI) used in research. | Applying security rule safeguards (access controls, audit logs) to dynamic ML training environments and APIs. |
| Common Ground | N/A | Informed Consent/Authorization: Must be specific about ML use. Data Minimization: Collect only what is needed. Security & Integrity: Protect data from breach or corruption. | Aligning technical ML practices (e.g., data pooling, continuous training) with static regulatory language and ethical norms. |

Table 2: Quantitative Safeguard Requirements

| Safeguard Type | Belmont Report (Implied) | GDPR (Article / Recital) | HIPAA (Rule / Section) |
|---|---|---|---|
| Consent Specificity | Detailed in IRB protocol. | Must be "freely given, specific, informed, unambiguous" (Art. 4(11)). | Authorization must be study-specific (Privacy Rule, 45 CFR §164.508). |
| Data Anonymization | Encouraged to reduce risk. | Creates anonymous data outside GDPR scope (Recital 26). | Safe Harbor (18 identifiers) or Expert Determination (Privacy Rule, 45 CFR §164.514). |
| Breach Notification | Not specified. | Mandatory to the supervisory authority without undue delay and, where feasible, within 72 hrs of awareness (Art. 33). | Individuals: without unreasonable delay, no later than 60 days after discovery; HHS: within 60 days if 500+ individuals are affected, otherwise annually (Breach Notification Rule, 45 CFR §§164.404, 164.408). |
| Right to Withdraw | Must be provided. | Right to withdraw consent at any time (Art. 7(3)). | Right to revoke Authorization in writing (45 CFR §164.508(b)(5)). |
| Risk Assessment | Central to IRB review. | Mandatory Data Protection Impact Assessment for high-risk processing (Art. 35). | Required Risk Analysis under the Security Rule (45 CFR §164.308(a)(1)(ii)(A)). |

Experimental Protocols for Ethical ML Research

Protocol: Pre-Collection Ethical & Legal Impact Assessment

Objective: To systematically identify and mitigate ethical and regulatory risks prior to initiating ML-driven behavioral data collection.

Methodology:

- Dual-Review Scoping: Concurrently draft the scientific protocol and the Data Protection Impact Assessment (DPIA) / HIPAA Risk Analysis.

- Data Element Mapping: Create a table linking each proposed data element (e.g., keystroke dynamics, audio sentiment) to its corresponding regulatory classification (e.g., GDPR special category data, HIPAA identifier), ethical risk (per Belmont), and stated scientific necessity.

- Lawful Basis & Consent Design: Determine the lawful basis for processing under GDPR (e.g., explicit consent, public interest). Draft a layered consent/authorization form that uses plain language to describe: the ML methodology, data flows, storage duration, participant rights, and any data sharing with third parties (e.g., cloud providers).

- Bias & Fairness Audit Plan: Define the protected attributes (e.g., race, age, socio-economic status) against which the future ML model will be tested for disparate performance. Document plans for dataset curation to ensure representativeness.

- Security Protocol Finalization: Specify technical safeguards (encryption standards, anonymization techniques), access controls (role-based access, multi-factor authentication), and data retention/deletion schedules.
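The data element mapping step lends itself to a structured record that can be screened automatically before the DPIA is finalized. The sketch below shows one possible shape for that table; the field names, finding texts, and example classifications are hypothetical, and the check only flags items for human legal and ethical review rather than deciding compliance.

```python
from dataclasses import dataclass

@dataclass
class DataElement:
    """One row of the data element map (field names are illustrative)."""
    name: str
    gdpr_special_category: bool   # e.g. health or biometric data under GDPR Art. 9
    hipaa_identifier: bool        # one of the 18 Safe Harbor identifiers
    ethical_risk: str             # "low" | "medium" | "high" (per Belmont review)
    scientific_necessity: str     # written justification; empty if none documented

def review_data_map(elements):
    """Return (element, finding) pairs that need action before collection starts."""
    findings = []
    for e in elements:
        if not e.scientific_necessity.strip():
            findings.append((e.name, "no documented necessity: remove (data minimization)"))
            continue
        if e.gdpr_special_category:
            findings.append((e.name, "special category: confirm Art. 9 condition and DPIA coverage"))
        if e.hipaa_identifier:
            findings.append((e.name, "HIPAA identifier: specify de-identification or Authorization"))
        if e.ethical_risk == "high":
            findings.append((e.name, "high ethical risk: document mitigation in protocol"))
    return findings
```

An element with no stated scientific necessity is flagged for removal outright, mirroring the data minimization principle; every other finding is a prompt for documentation, not a verdict.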

Protocol: Implementing the "Right to Erasure" in an ML Pipeline

Objective: To establish a technical and administrative procedure for complying with a participant's request to have their data deleted from both the primary research dataset and any derived ML models.

Methodology:

- Data Lineage Tracking: Implement a secure, immutable ledger or metadata tracker that logs the inclusion of each participant's data identifier into specific raw datasets, pre-processed batches, and model training runs.

- Request Validation: Upon receiving an erasure request, verify the individual's identity and the applicability of the request under the relevant law (GDPR, CCPA, etc.).

- Primary Data Deletion: Permanently delete or anonymize the participant's raw and processed data in all primary research databases and backups, following a recognized media sanitization standard (e.g., NIST SP 800-88).

- Model Audit & Retraining Decision:

  - Query the lineage tracker to identify all models trained using the requester's data.

  - For each affected model, assess the technical feasibility and cost of: (a) Model Retraining: Retraining the model from scratch excluding the requester's data; (b) Model Editing: Applying algorithmic techniques to "unlearn" the specific data point (an active area of research); or (c) Risk Assessment: If retraining/editing is prohibitively costly, document a formal assessment of the impact of the retained data on the individual's privacy versus the public benefit of the model.

- Documentation & Notification: Document all actions taken and notify the requester of completion, specifying the scope of erasure (e.g., "data deleted from primary datasets; Model v2.1 was retrained and deployed on [date]").
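The lineage tracking and model audit steps can be sketched as a hash-chained, append-only log. A hash chain makes the ledger tamper-evident rather than strictly immutable. The class and method names are hypothetical, participant identifiers are assumed to be pseudonymous study IDs, and a production system would more likely build on a lineage tool such as MLflow, DVC, or OpenLineage backed by a write-once store.

```python
import hashlib
import json

class LineageLedger:
    """Tamper-evident, append-only lineage log (hash chain).

    Each entry records an artifact (raw dataset, processed batch, or model run),
    the pseudonymous participant IDs it ingested directly, and its parent artifacts.
    """

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, artifact, kind, participants=(), parents=()):
        body = {
            "artifact": artifact,
            "kind": kind,
            "participants": sorted(participants),
            "parents": sorted(parents),
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        self.entries.append({**body, "hash": self._digest(body)})

    def verify(self):
        """Recompute the chain; any edited or reordered entry makes this False."""
        prev = self.GENESIS
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if e["prev"] != prev or self._digest(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True

    def affected_artifacts(self, participant_id, kind=None):
        """Artifacts that ingested the participant directly or via any ancestor.
        Relies on parents always being appended before their children."""
        affected = {}
        for e in self.entries:
            if participant_id in e["participants"] or any(p in affected for p in e["parents"]):
                affected[e["artifact"]] = e["kind"]
        return {a for a, k in affected.items() if kind is None or k == kind}
```

Calling `affected_artifacts(pid, kind="model")` answers the "which models used this participant's data" query; the answer then feeds the retrain, unlearn, or risk-assessment decision.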

Visualizations

Synthesis of Ethical Frameworks for ML Research

Protocol for Implementing the Right to Erasure

The Researcher's Toolkit: Essential Solutions for Ethical ML

Table 3: Research Reagent Solutions for Ethical ML Compliance

| Item / Solution | Category | Function in Ethical ML Research |
|---|---|---|
| Differential Privacy Libraries (e.g., Google DP, OpenDP) | Technical Safeguard | Adds statistical noise to queries or datasets, allowing aggregate analysis while mathematically limiting the risk of re-identifying any individual. Crucial for sharing or publishing derived datasets. |
| Fairness Audit Toolkits (e.g., AIF360, Fairlearn) | Bias Mitigation | Provides metrics and algorithms to detect, report, and mitigate unwanted bias in ML models across protected attributes (age, gender, race), operationalizing the Belmont principle of Justice. |
| Federated Learning Frameworks (e.g., Flower, TensorFlow Federated) | Architecture | Enables model training across decentralized devices or servers holding local data samples. Data does not leave its original location, enhancing privacy and aiding compliance with data minimization and security rules. |
| Data Lineage & Provenance Trackers (e.g., MLflow, DVC, OpenLineage) | Governance | Logs the origin, movement, and transformation of data throughout the ML pipeline. Essential for fulfilling GDPR/HIPAA accountability requirements and implementing erasure requests. |
| Consent Management Platform (CMP) | Governance | A software system that records, tracks, and manages participant consent preferences over time. Allows for versioning, withdrawal, and proof of lawful basis for processing, operationalizing the principle of Respect for Persons. |
| Synthetic Data Generation Tools (e.g., Mostly AI, Synthea) | Data Utility | Creates artificial datasets that mimic the statistical properties of real patient/participant data without reproducing actual participant records. Useful for model prototyping and sharing, significantly reducing (though not eliminating) privacy risk. |
| Homomorphic Encryption Libraries (e.g., Microsoft SEAL) | Technical Safeguard | Allows computations to be performed on encrypted data without decrypting it. Enables secure analysis of sensitive behavioral data by third parties (e.g., cloud analysts) without exposing raw data. |

The integration of digital endpoints and artificial intelligence (AI) in clinical trials represents a paradigm shift in drug development. These tools offer the potential for more frequent, objective, and real-world measurement of patient outcomes. Both the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA) have issued evolving guidelines to ensure the scientific rigor, ethical application, and regulatory acceptance of these novel methodologies. This document provides detailed application notes and protocols, framed within a broader thesis on machine learning (ML) protocols for ethical behavioral data collection, to guide researchers and drug development professionals.

Key Guideline Summaries

The following table summarizes the core quantitative and qualitative elements from recent FDA and EMA publications and guidance documents.

Table 1: Comparative Overview of FDA and EMA Guidelines on Digital Health Technologies (DHTs) & AI

| Aspect | FDA (Core Guidance: Digital Health Technologies for Remote Data Acquisition, Dec 2023) | EMA (Reflection Paper on Digital Health Technologies, Jan 2024 Draft) |
|---|---|---|
| Definition of DHT | System that uses computing platforms, connectivity, software, and/or sensors for healthcare and related uses. | Technologies that compute or communicate digitally for health purposes, including software (SaMD, AI/ML). |
| Validation Focus | Verification, Analytical Validation, Clinical Validation (V3) framework. Emphasis on demonstrating that the DHT reliably measures what it claims in the intended context of use. | Principles of qualification of novel methodologies (CHMP/SAWP). Focus on clinical relevance, reliability, and robustness of the digital biomarker/endpoint. |
| AI/ML-Specific Considerations | Predetermined Change Control Plans (PCCP) for AI/ML-enabled devices, allowing for iterative improvement post-authorization within a pre-specified plan. | Good Machine Learning Practice (GMLP) principles, including robust training, validation datasets, and lifecycle management. Transparency and traceability are critical. |
| Data Integrity & Security | Must comply with 21 CFR Part 11 (electronic records/signatures). Requires a proactive risk-based approach to cybersecurity. | Must comply with EU GDPR for personal data. Data provenance, integrity, and protection against unauthorized access are essential. |
| Patient Privacy & Ethics | Informed consent must address the nature of continuous, passive, or behavioral data collection. | Explicit consent for data processing and secondary use. Emphasis on fairness and minimization of bias in AI algorithms. |
| Key Submission Documents | Benefit-Risk Analysis, Description of the DHT, Details of DHT Function & Operation, Clinical Validation Results. | Detailed justification of the methodology, validation report, data management plan, and algorithm transparency documentation. |

Application Notes: Protocol Design for Digital Endpoints

This section translates regulatory guidelines into actionable application notes for protocol development.

Protocol: Validation of a Novel Digital Endpoint for Cognitive Decline

Objective: To clinically validate a smartphone-based combined keyboard dynamics and speech analysis task as a sensitive digital biomarker for early cognitive decline in a Phase II Alzheimer's disease trial.

Background: Within the thesis context of ethical ML for behavioral data, this protocol prioritizes transparent data provenance, minimization of participant burden, and algorithmic fairness across demographic groups.

Detailed Methodology:

- Study Design: A 12-month, prospective, observational cohort study embedded within a larger interventional trial, with two groups: patients with prodromal Alzheimer's disease (n=150) and age-matched healthy controls (n=75).

- Digital Endpoint Generation:

  - Device & App: Provision of locked-down study smartphones with the pre-installed assessment app.

  - Task: Participants complete a 10-minute interactive story-retelling task daily. The task involves listening to a short narrative, then typing and verbally recording a summary.

  - Data Capture: The app collects keystroke dynamics (latency, inter-key interval, error rate) and acoustic features (speech rate, pitch variation, pause frequency) via embedded smartphone sensors.

  - Feature Extraction: Raw sensor data is processed on-device into feature vectors using deterministic signal processing algorithms (not AI) to preserve interpretability.

- Ground Truth & Clinical Correlates: Monthly in-clinic assessments using the Neuropsychological Test Battery (NTB) and quarterly Amyloid-PET imaging. These form the ground truth for supervised ML model training.

- Model Training & Validation:

  - Data Partitioning: Split at the participant level, assigning 70% of participants to training, 15% to validation (hyperparameter tuning), and 15% to hold-out testing, so that repeated daily sessions from one participant never appear in more than one partition.

  - Algorithm: A multimodal deep learning model (e.g., a recurrent neural network with attention mechanisms) is trained to map the temporal feature vectors to NTB subscores.

  - Validation Metrics: The model's performance is evaluated against the hold-out test set using intraclass correlation coefficient (ICC > 0.8) for reliability, Pearson's r (>0.7) against NTB, and sensitivity/specificity for classifying clinical decline.

- Statistical Analysis Plan: A mixed-effects model for repeated measures will assess the digital endpoint's ability to detect change over time and its correlation with standard endpoints and amyloid burden.
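Two details of the partitioning and validation steps can be made concrete. The sketch below (an illustration, not the study's analysis code) assigns whole participants to the 70/15/15 partitions so that daily sessions from one person cannot leak between training and test data, and computes an intraclass correlation coefficient. The protocol does not specify the ICC form; ICC(2,1) (two-way random effects, absolute agreement, single measurement, per Shrout and Fleiss) is assumed here, and the function names are hypothetical. The formal analysis would normally use the pre-specified R `irr` package.

```python
import numpy as np

def split_by_participant(participant_ids, fractions=(0.70, 0.15), seed=0):
    """Assign every session to 'train', 'val', or 'test' by participant.

    fractions gives the train and validation shares of participants;
    the test set receives the remaining participants.
    """
    unique_ids = sorted(set(participant_ids))
    order = np.random.default_rng(seed).permutation(len(unique_ids))
    n_train = round(fractions[0] * len(unique_ids))
    n_val = round(fractions[1] * len(unique_ids))
    assignment = {}
    for rank, idx in enumerate(order):
        if rank < n_train:
            assignment[unique_ids[idx]] = "train"
        elif rank < n_train + n_val:
            assignment[unique_ids[idx]] = "val"
        else:
            assignment[unique_ids[idx]] = "test"
    return [assignment[pid] for pid in participant_ids]

def icc_2_1(ratings):
    """ICC(2,1) for an (n_subjects, k_measurements) array."""
    x = np.asarray(ratings, dtype=float)
    n, k = x.shape
    grand = x.mean()
    row_means, col_means = x.mean(axis=1), x.mean(axis=0)
    ms_rows = k * np.sum((row_means - grand) ** 2) / (n - 1)    # between-subject mean square
    ms_cols = n * np.sum((col_means - grand) ** 2) / (k - 1)    # between-measurement mean square
    resid = x - row_means[:, None] - col_means[None, :] + grand
    ms_err = np.sum(resid ** 2) / ((n - 1) * (k - 1))           # residual mean square
    return float((ms_rows - ms_err) / (ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n))
```

For test-retest reliability, `ratings` could hold each participant's digital endpoint from two sessions during a clinically stable period (columns = sessions); Pearson's r against NTB subscores is available as `np.corrcoef(pred, ntb)[0, 1]`.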

Visualization: Digital Endpoint Validation Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Digital Endpoint Development & Validation

| Item/Reagent | Function in Protocol | Example/Notes |
|---|---|---|
| Regulatory-grade ePRO/eCOA Platform | Enables secure deployment of digital tasks, real-time data capture, and compliance with 21 CFR Part 11/Annex 11. | e.g., Medidata Rave eCOA, Clinical ink, Signant Health. Must support integration with bespoke sensor apps. |
| Behavioral Data Acquisition SDK | Software library integrated into a custom app to collect raw sensor data (accelerometer, microphone, touchscreen events) in a standardized format. | e.g., ResearchStack, Beiwe platform, or custom Android/iOS libraries. |
| Synthetic Patient Data Generator | Creates realistic synthetic behavioral datasets for initial algorithm prototyping and stress-testing, addressing data scarcity and privacy during early R&D. | e.g., Synthea, MDClone, or custom GAN models. Critical for ethical ML development. |
| Algorithm Fairness & Bias Detection Toolkit | Software to audit trained AI models for performance disparities across age, gender, ethnicity, or socioeconomic subgroups. | e.g., IBM AI Fairness 360, Google's What-If Tool, Fairlearn. Essential for ethical validation. |
| Predetermined Change Control Plan (PCCP) Template | A structured document outlining the planned modifications to an AI/ML model post-deployment, including protocol for re-training and re-validation. | Recommended (not mandatory) by FDA for AI-enabled device software with anticipated post-market modifications; an authorized PCCP lets those modifications proceed without a new marketing submission. Template guides the creation of a controlled model lifecycle plan. |
| Clinical Validation Statistical Package | Pre-specified scripts for analysis of reliability, construct validity, and responsiveness of the digital endpoint. | e.g., SAS, R packages (irr for ICC, lme4 for mixed models). Ensures reproducible analysis aligned with SAP. |

Experimental Protocols for Algorithmic Validation

Protocol: Bias Audit and Mitigation for an AI-Based Digital Endpoint

Objective: To systematically evaluate and mitigate demographic bias in an AI model predicting "mobility score" from wearable sensor data in a multi-national chronic pain study.

Detailed Methodology:

- Dataset Characterization:

  - Compile dataset demographics (age, sex, race, geography). Calculate prevalence and feature distribution statistics per subgroup.

- Performance Disparity Testing:

  - Train a baseline model on the entire dataset. Evaluate performance (MAE for the continuous score; AUC if the score is dichotomized at a clinical threshold) on disjoint test sets stratified by each demographic factor.

  - Statistical Test: Use bootstrapping to calculate 95% confidence intervals for performance metrics in each subgroup and for the between-group differences. Disparity is flagged if the confidence interval for a between-group difference excludes zero (or a pre-specified tolerance).

- Bias Mitigation Strategies (Iterative):

  - Pre-processing: Apply re-sampling (oversampling minority groups) or re-weighting techniques to the training data.

  - In-processing: Utilize fairness-constrained algorithms (e.g., imposing a fairness penalty during model loss calculation).

  - Post-processing: Adjust model decision thresholds independently for different subgroups to equalize predictive performance metrics.

- Validation of Mitigated Model:

  - The final, mitigated model undergoes validation on a completely held-out dataset to confirm reduced disparity while maintaining overall accuracy. A detailed bias audit report is generated for regulatory submission.
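A minimal sketch of the bootstrap step, assuming disparity is judged from a confidence interval on the between-group difference in MAE (a more direct check than asking whether two separate intervals overlap). The function name is hypothetical, and it resamples test-set rows independently; if the test set holds several rows per participant, resampling should instead be done at the participant level (a cluster bootstrap) so that correlated rows are not treated as independent.

```python
import numpy as np

def bootstrap_mae_gap(y_true, y_pred, groups, group_a, group_b, n_boot=2000, alpha=0.05, seed=0):
    """Percentile-bootstrap CI for MAE(group_a) - MAE(group_b).

    Test-set rows are resampled with replacement within each group. Returns the
    point estimate, the (1 - alpha) CI, and whether the CI excludes zero.
    """
    rng = np.random.default_rng(seed)
    y_true, y_pred, groups = (np.asarray(a) for a in (y_true, y_pred, groups))
    abs_err = np.abs(y_true - y_pred)
    err_a, err_b = abs_err[groups == group_a], abs_err[groups == group_b]
    gaps = np.empty(n_boot)
    for i in range(n_boot):
        gaps[i] = (rng.choice(err_a, size=err_a.size).mean()
                   - rng.choice(err_b, size=err_b.size).mean())
    lo, hi = np.quantile(gaps, [alpha / 2, 1 - alpha / 2])
    point = float(err_a.mean() - err_b.mean())
    return point, (float(lo), float(hi)), bool(lo > 0 or hi < 0)
```

Running the same function on the baseline and on the mitigated model gives a like-for-like view of whether mitigation actually narrowed the gap.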

Visualization: AI Bias Audit and Mitigation Workflow

Within the broader thesis on Machine Learning (ML) protocols for ethical behavioral data collection in clinical and drug development research, the identification and special handling of high-risk data types is paramount. Audio, video, geolocation, and keystroke dynamics data offer profound insights into patient behavior, disease progression, and treatment efficacy. However, their sensitive nature poses significant ethical and privacy challenges. These data types are considered high-risk due to their capacity for re-identification, inference of sensitive attributes, and potential for surveillance. This Application Note details the risks, presents quantitative comparisons, and provides experimental protocols for their ethical collection and processing within compliant research frameworks.

Risk Assessment & Quantitative Comparison

Table 1: Comparative Risk Profile of High-Risk Data Types

| Data Type | Primary Risk Vectors | Typical Volume per Session | Re-identification Potential | Inferred Sensitive Attributes (Examples) |
|---|---|---|---|---|
| Audio | Voice biometrics, emotional state, health conditions (e.g., cough, speech tremor), background conversation. | 5-50 MB (1-10 mins, compressed) | Very High (Voice is a unique biometric identifier). | Neurological state (e.g., Parkinson's), psychological stress, respiratory health. |
| Video | Facial/gesture biometrics, activity patterns, environment, gait, micro-expressions. | 20-500 MB (1-10 mins, compressed) | Extremely High (Facial features are highly identifying). | Motor function, fatigue, affective state, social interaction deficits, substance influence. |
| Geolocation | Movement patterns, place of residence/work, religious/political associations via locations visited. | 0.01-0.1 MB/hr (continuous points) | High (Home/work locations are key re-identifiers). | Socioeconomic status, daily routines, adherence to geo-fenced protocols (e.g., clinic visits). |
| Keystroke Dynamics | Behavioral biometrics (typing rhythm), possible content inference via timing patterns. | <0.001 MB per session (metadata only) | Medium-High (Unique typing patterns can identify individuals). | Cognitive load, motor impairment, emotional agitation, fatigue. |

Table 2: Relevant Regulatory Considerations (as of 2024)

| Regulation/Guidance | Classification of Data Types | Key Requirements for Researchers |

|---|---|---|

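| GDPR (EU) | All four data types are personal data. Voice, facial, and keystroke-dynamics data become "biometric" special-category data (Art. 9) when processed to uniquely identify a person. Geolocation is not special-category by default but can reveal special-category information (e.g., religion, health) indirectly. | Explicit consent (or another Art. 9 condition), Data Protection Impact Assessment (DPIA), purpose limitation, data minimization, strong anonymization/pseudonymization. |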

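| HIPAA (US) | No separate data-type categories. However, the 18 Safe Harbor identifiers include biometric identifiers (e.g., voice prints), full-face photographs, and geographic subdivisions smaller than a state. Such data is Protected Health Information (PHI) when individually identifiable and held by a covered entity. | De-identification via Safe Harbor (removal of 18 identifiers) or Expert Determination methods. |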

| FDA 21 CFR Part 11 | Applies if data is used to support regulatory submissions for drug development. | Ensures integrity, reliability, and audit trails for electronic records. |

Experimental Protocols for Ethical Collection

Protocol 3.1: Secure Multi-Modal Data Capture for Remote Patient Monitoring

Objective: To collect synchronized audio, video, and keystroke data for assessing motor and cognitive function in neurodegenerative disease trials, with minimal privacy intrusion.

Materials: See "Research Reagent Solutions" (Section 5.0).

Workflow:

- Participant Onboarding & Consent: Present tiered consent options (e.g., video on/off, audio-only, metadata-only). Obtain explicit, documented consent for each data type.

- On-Device Processing Setup: Install research application configured for local feature extraction (e.g., gait speed from video, speech rate from audio, inter-key latency from keystrokes).

- Data Capture Session: Participant performs standardized tasks (e.g., reading passage, typing test, timed up-and-go) in their home environment.

- Local Anonymization: Software applies real-time filters: video is converted to a skeleton stick figure; audio is downsampled and voice timbre distortion applied; keystroke timing data is computed, and content is discarded.

- Secure Transfer: Only extracted features and anonymized signals are encrypted and transmitted to the research server. Raw biometric data is deleted from the device.

- Server-Side Processing: Data is aggregated and linked only to a pseudonymous participant ID.
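The local-anonymization step for keystroke data can be illustrated with a short on-device sketch. It keeps only key-down/key-up timestamps, derives hold (dwell) times and press-to-press latencies, and never stores which key was pressed. The input-hook tuple format, the class and function names, and the choice of summary features are assumptions for illustration, not a prescribed schema. "Inter-key latency" is measured here press-to-press; release-to-press (flight time) is a common alternative.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass(frozen=True)
class KeyEvent:
    """Timing-only keystroke record; it has no field for the key itself."""
    down_ms: float  # key-press time, milliseconds
    up_ms: float    # key-release time, milliseconds


def keystroke_features(events):
    """Summarize typing rhythm from timing alone."""
    if len(events) < 2:
        raise ValueError("need at least two keystrokes")
    ordered = sorted(events, key=lambda e: e.down_ms)
    hold = [e.up_ms - e.down_ms for e in ordered]              # dwell time per key
    latency = [b.down_ms - a.down_ms                            # press-to-press interval
               for a, b in zip(ordered, ordered[1:])]
    return {
        "n_keys": len(ordered),
        "mean_hold_ms": mean(hold),
        "mean_latency_ms": mean(latency),
        "median_latency_ms": median(latency),
    }


def capture_session(raw_buffer):
    """raw_buffer: list of (key, down_ms, up_ms) tuples from a hypothetical input hook.

    Key identity is dropped when KeyEvent objects are built, and the buffer is
    emptied so this code path retains no typed content. (Python cannot
    guarantee that the underlying memory is wiped.)
    """
    events = [KeyEvent(down, up) for _key, down, up in raw_buffer]
    raw_buffer.clear()
    return keystroke_features(events)
```

Only the returned feature dictionary would be encrypted and transmitted under the Secure Transfer step. It contains counts and timing statistics, never characters.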

Protocol 3.2: Geofencing with Privacy-Preserving Aggregation for Adherence Monitoring

Objective: To verify participant adherence to clinic visit protocols without tracking continuous location.

Materials: Smartphone with GPS/BLE, secure research app, clinic beacon (BLE).

Workflow:

- Geofence Definition: Define a virtual perimeter (geofence) around the clinical trial site using GPS coordinates.

- On-Device Logic: The participant's smartphone runs a local algorithm that detects entry/exit from the geofence.

- Privacy-Preserving Logging: The device does not transmit continuous coordinates. It only logs a timestamped "check-in" event when the geofence is entered and a BLE handshake with a clinic beacon is confirmed.

- Data Export: Only the time/date of the "check-in" event is transmitted to the researcher, proving presence without revealing the journey.
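The on-device logic of Protocol 3.2 can be sketched as below. A haversine distance test against a circular geofence is combined with a BLE-confirmation flag, and the result is a single timestamped check-in event; no coordinates appear in the output. The circular fence, the 150 m default radius, the class name, and the event schema are illustrative assumptions. A production app would rely on the platform's own geofencing and BLE APIs rather than raw distance checks.

```python
import math
from datetime import datetime, timezone

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


class ClinicGeofence:
    """On-device check-in detector; location fixes are never stored or returned."""

    def __init__(self, site_lat, site_lon, radius_m=150.0):
        self._site = (site_lat, site_lon)
        self._radius_m = radius_m
        self._checked_in = False  # at most one check-in per visit

    def update(self, lat, lon, beacon_confirmed, now=None):
        """Process one location fix; return a check-in event dict or None."""
        inside = haversine_m(lat, lon, *self._site) <= self._radius_m
        if not inside:
            self._checked_in = False  # leaving the fence ends the visit
            return None
        if beacon_confirmed and not self._checked_in:
            self._checked_in = True
            ts = now if now is not None else datetime.now(timezone.utc)
            return {"event": "check_in", "timestamp": ts.isoformat()}  # no coordinates
        return None
```

Requiring both the geofence and the beacon handshake reduces false check-ins from passing nearby. Resetting on exit allows one event per clinic visit.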

Visualization of Data Handling Workflows

Diagram 1: On-Device Anonymization Pipeline for High-Risk Data

Diagram 2: Privacy-Preserving Geofencing Protocol Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for High-Risk Data Research

| Item/Category | Example Product/Technology | Function in Research |

|---|---|---|

| Secure Mobile SDK | Apple ResearchKit/CareKit, Google Android Research Stack | Provides foundational, consent-managing frameworks for building secure data collection apps on iOS/Android. |

| On-Device ML Libraries | TensorFlow Lite, Core ML, MediaPipe | Enable local feature extraction (e.g., pose estimation, audio features) without raw data leaving the device. |

| Differential Privacy Tools | Google DP Library, IBM Diffprivlib | Allow aggregation of population insights from sensitive data while mathematically limiting individual re-identification risk. |

| Homomorphic Encryption (R&D) | Microsoft SEAL, OpenFHE | (Emerging) Allows computation on encrypted data, enabling analysis without decryption. Critical for future protocols. |

| Professional Transcription & Redaction | Rev.com, Sonix (with BAA) | For necessary raw audio analysis, use HIPAA-compliant services that contractually ensure data handling and automatic redaction of PHI. |

| Secure Compute Environment | AWS Nitro Enclaves, Azure Confidential Computing | Provides hardened, isolated cloud environments for processing potentially identifiable data during analysis phases. |

Within the thesis framework on ML protocols for ethical behavioral data collection, establishing stakeholder trust is paramount. This involves developing application notes and experimental protocols that transparently balance the utility of research data—essential for advancing ML model training in clinical and behavioral contexts—with inviolable respect for participant autonomy and informed consent. The following sections provide actionable guidance for researchers and drug development professionals.

Table 1: Participant Perception & Protocol Efficacy Metrics

| Metric | Industry Benchmark (2023) | Target for High-Trust Protocols | Measurement Tool |

|---|---|---|---|

| Informed Consent Comprehension Score | 72% | >90% | Validated post-consent quiz (score ≥8/10) |

| Participant Withdrawal Rate | 5-8% | <3% (non-clinical) | Study tracking logs |

| Data Anonymization Efficacy | 95% de-identification confidence | >99.5% de-identification confidence | Differential privacy (ε ≤ 1) or k-anonymity (k ≥ 25) audits |

| Post-Study Trust Perception | 70% positive | >85% positive | Likert-scale survey (1-5, avg. ≥4.2) |

| Granular Consent Adoption | 40% of studies | 100% of studies | Protocol audit - presence of dynamic consent layers |
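The k-anonymity audit named in the Data Anonymization Efficacy row reduces to finding the smallest equivalence class, that is, the smallest set of records sharing identical values on every quasi-identifier. A minimal sketch follows. The column names, generalized values, and function names are hypothetical, and choosing which fields count as quasi-identifiers remains a study-specific judgment.

```python
from collections import Counter


def equivalence_classes(records, quasi_identifiers):
    """Count records per combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def k_anonymity_audit(records, quasi_identifiers, k_target=25):
    """Return (k, passes, undersized_classes) for a release candidate."""
    if not records:
        raise ValueError("no records to audit")
    classes = equivalence_classes(records, quasi_identifiers)
    k = min(classes.values())  # smallest group sharing identical QI values
    undersized = {key: n for key, n in classes.items() if n < k_target}
    return k, k >= k_target, undersized
```

The `undersized` classes show where further generalization (e.g., wider age bands) or suppression is needed before the dataset can meet the k ≥ 25 target.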

Table 2: ML-Specific Data Handling Parameters

| Parameter | Standard Practice | Ethical Protocol Requirement | Rationale |

|---|---|---|---|

| Data Minimization | Collect all available signals | Pre-collection feature necessity review | Reduces privacy risk, aligns with purpose limitation. |

| Inferred Data Labeling | Often unregulated | Explicit consent for sensitive inferences (e.g., mood state) | Protects autonomy over data not directly provided. |

| Continuous Consent Model | Single-point consent | ML-driven "re-consent" triggers for novel data use | Ensures ongoing autonomy as ML analysis evolves. |

| Federated Learning (FL) Adoption | ~15% of mobile health studies | Mandatory for sensitive behavioral data where feasible | Minimizes central data aggregation, enhancing security. |

Experimental Protocols

Protocol A: Dynamic, Multi-Layer Informed Consent Process for Behavioral Sensing Studies

- Objective: To obtain genuine, comprehended, and granular consent for continuous passive data collection via smartphones/wearables in a drug adherence trial.

- Materials: Secure tablet, dynamic consent software platform, audio-visual explanation modules, comprehension assessment tool.

- Procedure:

- Pre-Engagement: Provide a one-page visual summary of the study's data flow and key risks.

- Tiered Explanation:

- Tier 1 (Core): Explain primary data collection (e.g., GPS, app usage) and its direct research purpose.

- Tier 2 (Granular): Present optional modules (e.g., social interaction inference via call logs, voice sampling for mood) with separate toggles.

- Tier 3 (ML-Specific): Clearly explain how data will be used to train predictive models, including the possibility of inferring new, sensitive phenotypes.

- Interactive Comprehension Check: Administer a 5-question, scenario-based quiz. Incorrect answers trigger re-explanation of the specific concept.

- Documentation & Access: Provide a downloadable, plain-language consent document and a participant dashboard to view consented data streams and modify choices in real-time.
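The Interactive Comprehension Check in Protocol A can be sketched as a loop. Each concept answered incorrectly is re-explained and re-asked until every item is passed or a retry limit is reached. The concept tags, the three-round limit, and escalation to study staff on failure are assumptions for illustration. The callbacks stand in for whatever UI the consent platform provides.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuizItem:
    concept: str   # hypothetical concept tag, e.g. "ml_inference"
    question: str
    correct: str


def run_comprehension_check(items, answer_fn, explain_fn, max_rounds=3):
    """Ask every item; re-explain and re-ask only the concepts answered wrongly.

    answer_fn(item) returns the participant's answer; explain_fn(concept)
    presents the re-explanation. Returns (passed, log), where log holds one
    (round, concept, correct) tuple per answer for the consent record.
    """
    pending, log = list(items), []
    for round_no in range(1, max_rounds + 1):
        missed = []
        for item in pending:
            ok = answer_fn(item) == item.correct
            log.append((round_no, item.concept, ok))
            if not ok:
                missed.append(item)
        if not missed:
            return True, log
        if round_no < max_rounds:
            for item in missed:
                explain_fn(item.concept)  # targeted re-explanation of that concept
        pending = missed
    return False, log  # unresolved: escalate to study staff (assumed policy)
```

Keeping the per-answer log makes it possible to document which concepts needed re-explanation. This can feed the comprehension metrics reported in Table 1.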

Protocol B: Implementing Federated Learning with Consent Verification

- Objective: To train an ML model on decentralized behavioral data without centralizing raw data, while auditing consent compliance.

- Materials: Participant mobile devices, FL client software, secure aggregator server, consent state API.

- Procedure:

- On-Device Processing: Deploy the FL client that trains a local model on the device using locally stored sensor data.

- Pre-Aggregation Consent Check: Before each aggregation round, the client pings the Consent State API to verify the participant's status for each data type used in that training round.

- Secure Model Parameter Transmission: Only if consent is valid, encrypted model updates (gradients) are sent to the secure aggregator.

- Global Model Update: The aggregator averages the updates (typically weighted by each client's number of local training samples, as in FedAvg) to improve the global model, which is then redistributed.

- Audit Trail: Log all consent checks and transmission events for compliance review.
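The consent gate and audit trail of Protocol B can be sketched in a few lines. Several simplifying assumptions apply: an in-memory lookup stands in for the Consent State API, encryption is omitted, and clients submit plain NumPy weight deltas. Each client checks consent for every data type used in the round before transmitting, and logs the decision either way. The server then takes a sample-weighted mean of whatever it receives.

```python
from datetime import datetime, timezone

import numpy as np


def make_consent_api(consent_db):
    """Stand-in for the Consent State API (hypothetical): participant -> allowed data types."""
    def check(participant_id, data_types):
        granted = consent_db.get(participant_id, set())
        return set(data_types) <= granted  # every data type must be allowed
    return check


def client_submit(participant_id, delta, n_samples, consent_api, data_types, audit_log):
    """Client side: verify consent before transmitting; log the decision either way."""
    ok = consent_api(participant_id, data_types)
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "participant": participant_id,
        "data_types": sorted(data_types),
        "transmitted": ok,
    })
    return (np.asarray(delta, dtype=float), n_samples) if ok else None


def aggregate(global_w, submissions):
    """Server side: sample-weighted mean of the received updates (FedAvg-style)."""
    received = [s for s in submissions if s is not None]
    if not received:
        return global_w  # no consenting clients this round
    deltas, sizes = zip(*received)
    return global_w + np.average(np.stack(deltas), axis=0, weights=sizes)
```

Because the gate runs on the client, an update for a withdrawn data type never leaves the device. The server-side audit log still records that the round was skipped for that participant.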

Visualization: Ethical ML Research Workflow

Diagram Title: Ethical ML Data Collection & Consent Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Solutions for Ethical Behavioral Data Research

| Item / Solution | Function in Ethical Research | Example / Note |

|---|---|---|

| Dynamic Consent Platform | Enables tiered, ongoing consent management and participant communication. | OpenConsent, REDCap Dynamic Consent module. |

| Federated Learning Framework | Allows model training on decentralized data without raw data transfer. | TensorFlow Federated, Flower, PySyft. |

| Differential Privacy Library | Provides mathematical guarantees of participant anonymity in datasets or queries. | Google DP Library, IBM Diffprivlib. |

| Secure Multi-Party Computation (MPC) | Enables joint analysis on encrypted data split across multiple parties. | Used in conjunction with FL for enhanced security. |

| Consent State API | A programmatic interface to verify and track participant consent status in real-time. | Custom-built microservice linking to consent database. |

| Synthetic Data Generator | Creates artificial datasets that mirror statistical properties of real data with reduced (but not zero) privacy risk; membership inference remains possible. | Mostly AI, Syntegra, Hazy. For preliminary algorithm validation. |

| Participant-Facing Dashboard | Provides transparency, allowing participants to view their data and control sharing preferences. | Key for building trust and maintaining autonomy. |

Implementing Privacy-Preserving ML Pipelines for Behavioral Data Acquisition

Ethics-by-Design (EbD) is a proactive framework that embeds ethical principles directly into the architecture of research protocols and Statistical Analysis Plans (SAPs). Within Machine Learning (ML) protocols for behavioral data collection, this shifts ethics from a review hurdle to a core, operational component. This integration is critical for maintaining participant autonomy, ensuring data integrity, and mitigating risks of algorithmic bias, particularly in sensitive domains like digital phenotyping for drug development.

Core Application Notes:

- Pre-emptive Risk Mitigation: EbD requires the identification and documentation of ethical risks (e.g., privacy erosion, unintended behavioral manipulation, group harm from biased models) in the protocol's risk assessment section, alongside corresponding technical and procedural controls.

- SAP Integration: Ethical considerations must directly influence the SAP. This includes pre-specifying fairness metrics for subgroup analyses, defining how data missing not at random (MNAR) will be handled (such missingness may indicate participant distress), and outlining model transparency requirements for the primary analysis.

- Dynamic Documentation: The protocol and SAP should establish an "Ethics Log" (or a similar appendix) to document deviations, participant feedback, and iterative changes made to the ML pipeline for ethical reasons during the study.

Key Quantitative Frameworks & Data

Table 1: Core Quantitative Metrics for Ethical ML in Behavioral Research

| Metric Category | Specific Metric | Purpose in EbD Protocol | Target Threshold (Example) |

|---|---|---|---|

| Fairness & Bias | Demographic Parity Difference | Assess whether positive-prediction (selection) rates are equal across protected groups. | < 0.05 |

| | Equalized Odds Difference | Evaluate whether true- and false-positive rates are similar across groups. | < 0.10 |

| | Disparate Impact Ratio | Ratio of a group's selection rate to the reference group's; measures adverse impact. | Between 0.8 and 1.25 |

| Privacy | k-Anonymity value (k) | Minimum equivalence-class size in shared data; bounds re-identification risk. | k ≥ 5 |

| | Differential Privacy Epsilon (ε) | Privacy loss parameter for noisy data aggregation. | ε ≤ 1.0 (strict) |

| Transparency | Model Explainability Score (e.g., LIME fidelity) | Quantifies how well post-hoc explanations match model logic. | > 0.8 |

| | Feature Importance Stability | Consistency of identified important features across samples. | > 0.7 |

| Participant Agency | Consent Comprehension Score (post-quiz) | Validates understanding of complex ML data use. | > 80% correct |

| | Withdrawal Rate (Overall & by Stage) | Proxy for burden and trust; triggers protocol review. | Monitor for spikes |
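The three fairness metrics in Table 1 can be computed directly from binary predictions for a single protected attribute, as in the sketch below. It follows common definitions:

- Demographic parity difference: the largest gap in selection rate between groups.
- Equalized odds difference: the larger of the largest true-positive-rate gap and the largest false-positive-rate gap.
- Disparate impact ratio: each group's selection rate divided by that of a chosen reference group.

fairlearn's `demographic_parity_difference` and `equalized_odds_difference` provide maintained equivalents for the first two. The function names and toy arrays here are invented for illustration.

```python
import numpy as np


def _conditional_rate(numerator_mask, denominator_mask):
    """Share of denominator rows that also satisfy the numerator; NaN if empty."""
    den = denominator_mask.sum()
    return float("nan") if den == 0 else numerator_mask.sum() / den


def fairness_report(y_true, y_pred, groups, reference_group):
    """Binary-classification fairness metrics for one protected attribute."""
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_pred).astype(bool)
    groups = np.asarray(groups)
    selection, tpr, fpr = {}, {}, {}
    for g in np.unique(groups):
        in_g = groups == g
        selection[g] = y_pred[in_g].mean()                                    # P(pred=1 | g)
        tpr[g] = _conditional_rate(y_pred & y_true & in_g, y_true & in_g)    # P(pred=1 | y=1, g)
        fpr[g] = _conditional_rate(y_pred & ~y_true & in_g, ~y_true & in_g)  # P(pred=1 | y=0, g)

    def spread(rates):
        values = np.array(list(rates.values()), dtype=float)
        return float(np.nanmax(values) - np.nanmin(values))

    ref_rate = selection[reference_group]
    disparate_impact = {
        g: (float("nan") if ref_rate == 0 else float(selection[g] / ref_rate))
        for g in selection if g != reference_group
    }
    return {
        "demographic_parity_difference": spread(selection),
        "equalized_odds_difference": max(spread(tpr), spread(fpr)),
        "disparate_impact_ratio": disparate_impact,
    }
```

Each value can then be compared with its target threshold (e.g., < 0.05, < 0.10, and 0.8 to 1.25) to flag subgroups for the disparity analysis.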

Experimental Protocol: Bias Audit for a Predictive Behavioral Model

Title: Pre-Deployment Bias Audit of an ML Model for Digital Phenotyping.

Objective: To empirically assess a trained behavioral prediction model for unfair discrimination across pre-defined demographic subgroups before its inclusion in the study's SAP for primary analysis.

Materials:

- Trained ML Model: The candidate model for predicting the behavioral endpoint.

- Audit Dataset: A held-out test set representative of the recruitment population, with necessary protected attribute labels (e.g., age, gender, race/ethnicity, socioeconomic proxy). Data must be de-identified.

- Computing Environment: Secure, access-controlled environment with the necessary libraries (e.g., fairlearn, aif360, sklearn).

Procedure:

- Model Prediction: Generate predictions (and probabilities if applicable) for all samples in the Audit Dataset using the trained model.

- Metric Calculation: For each protected subgroup, calculate the performance metrics (accuracy, F1, recall, precision) and the fairness metrics listed in Table 1.

- Disparity Analysis: Compare metrics across groups. Perform statistical testing (e.g., bootstrapped confidence intervals for differences) to identify significant disparities.

- Root Cause Investigation: If significant bias is detected (> target thresholds), analyze feature distributions, learning curves, and sample sizes per group to identify potential sources.

- Mitigation Decision Point: Based on the audit, the protocol must pre-specify actions: a) Adopt model if within thresholds, b) Apply a pre-specified bias mitigation algorithm (e.g., reweighting, adversarial debiasing), or c) Reject model and trigger a return to model development phase. This decision tree must be in the SAP.
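The Disparity Analysis and Mitigation Decision Point steps can be sketched together. A percentile bootstrap gives a confidence interval for the between-group difference in recall, and a three-way rule maps that interval to adopt, mitigate, or reject. Several choices here are illustrative assumptions: recall as the audited metric, resampling within each group, the default of 2,000 resamples, and adopting only when the whole interval lies inside the tolerance band. The actual metrics, thresholds, and actions must be those pre-specified in the SAP.

```python
import numpy as np


def recall(y_true, y_pred):
    positives = y_true == 1
    return float("nan") if positives.sum() == 0 else float((y_pred[positives] == 1).mean())


def bootstrap_gap_ci(y_true, y_pred, groups, g1, g2, n_boot=2000, alpha=0.05, seed=0):
    """Point estimate and percentile CI for recall(g1) - recall(g2)."""
    rng = np.random.default_rng(seed)
    y_true, y_pred, groups = map(np.asarray, (y_true, y_pred, groups))
    idx1, idx2 = np.flatnonzero(groups == g1), np.flatnonzero(groups == g2)
    gaps = []
    for _ in range(n_boot):
        b1 = rng.choice(idx1, size=idx1.size, replace=True)  # resample within g1
        b2 = rng.choice(idx2, size=idx2.size, replace=True)  # resample within g2
        gaps.append(recall(y_true[b1], y_pred[b1]) - recall(y_true[b2], y_pred[b2]))
    gaps = np.array(gaps)
    gaps = gaps[~np.isnan(gaps)]  # drop resamples with no positives in a group
    lo, hi = np.quantile(gaps, [alpha / 2, 1 - alpha / 2])
    point = recall(y_true[idx1], y_pred[idx1]) - recall(y_true[idx2], y_pred[idx2])
    return point, (float(lo), float(hi))


def audit_decision(ci, threshold, mitigation_available=True):
    """Illustrative pre-specified rule mapping the CI to a protocol action."""
    lo, hi = ci
    if max(abs(lo), abs(hi)) < threshold:
        return "adopt"      # entire CI within the tolerance band
    if mitigation_available:
        return "mitigate"   # apply the pre-specified mitigation, then re-audit
    return "reject"         # return to the model development phase
```

Fixing the random seed makes the audit reproducible for the bias audit report.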

Visualization of Ethics-by-Design Integration Workflow

Diagram Title: Ethics-by-Design Integration in Study Lifecycle

The Scientist's Toolkit: Essential Reagents & Solutions

Table 2: Research Reagent Solutions for Ethical ML Protocols

| Item / Solution | Function in Ethical Protocol | Example / Note |

|---|---|---|

| Synthetic Data Generators (e.g., SDV, Gretel) | Create privacy-safe, representative data for protocol development, testing, and external sharing without exposing real participant data. | Used in pilot phases to simulate rare subgroups. |

| Differential Privacy Libraries (e.g., OpenDP, TensorFlow Privacy) | Provide algorithms to add calibrated noise to queries or model training, mathematically bounding privacy loss (ε). | Integral for protocols sharing aggregated statistics. |

| Bias Auditing & Mitigation Toolkits (e.g., Fairlearn, IBM AIF360) | Standardized libraries to calculate fairness metrics and apply mitigation techniques pre- or post-modeling. | Mandatory for the pre-deployment audit protocol. |

| Explainable AI (XAI) Methods (e.g., SHAP, LIME, InterpretML) | Generate post-hoc explanations for model predictions to ensure scrutability and challengeability as per ethical principles. | Required for protocols involving high-stakes behavioral predictions. |

| Secure Multi-Party Computation (MPC) Platforms | Enable collaborative model training on decentralized data without sharing raw data, preserving privacy and data sovereignty. | For multi-site studies where data cannot be centralized. |

| Consent Management Platforms (Digital, Dynamic) | Facilitate granular, tiered consent and re-consent for new data uses, operationalizing the principle of ongoing informed consent. | Must interface with study data capture systems. |

| Ethics Log Software (e.g., ELANIT, custom REDCap module) | Provides a structured, version-controlled repository to document ethical decisions, incidents, and protocol adaptations in real-time. | Essential for audit trails and study transparency. |

Within the broader thesis on machine learning (ML) protocols for ethical behavioral data collection in clinical and research settings, traditional informed consent models are increasingly inadequate. The integration of AI/ML in healthcare research, particularly in drug development and digital phenotyping, necessitates a paradigm shift towards Dynamic Consent and Explainable Data Usage. This protocol provides application notes for implementing these frameworks to ensure ethical integrity, regulatory compliance, and sustained participant engagement in longitudinal studies.

Core Conceptual Data & Comparative Analysis

Table 1: Quantitative Comparison of Consent Models in AI-Driven Health Research

| Feature | Traditional One-Time Consent | Broad Consent | Dynamic Consent |

|---|---|---|---|

| Frequency of Interaction | Single point at study onset. | Single point, often for unspecified future use. | Continuous, iterative interactions. |

| Granularity of Choice | Binary (yes/no) for entire protocol. | Broad categories of future research. | Granular, data-type and use-case specific. |

| Participant Engagement | Low; static. | Very Low. | High; interactive dashboard common. |

| Adaptability to New AI Uses | None; requires re-consent. | Limited, depends on original scope. | High; new uses can be presented for permission. |

| Explainability Integration | Minimal; paper forms. | Low. | Core function; explanations provided per decision point. |

| Reported Participant Trust (%)* | 45-55% | 50-60% | 80-90% |

| Data Withdrawal Complexity | High, often impractical. | Very High. | Simplified, often via user portal. |

| Regulatory Alignment | FDA 21 CFR Part 50, ICH GCP. | GDPR, with challenges. | Aligns with GDPR, CCPA, AI Act principles. |

*Data synthesized from recent studies on participant attitudes (2023-2024). Trust percentages represent relative satisfaction with understanding and control.

Table 2: Key Metrics for Evaluating Explainable Data Usage Systems

| Metric | Target Value | Measurement Method |

|---|---|---|

| Explanation Fidelity | >95% | Accuracy of explanation vs. actual model operation (e.g., via saliency maps or feature importance). |

| Participant Comprehension Score | >80% | Post-explanation quiz scores on data usage purpose, risks, and rights. |

| Time-to-Consent Decision | < 5 minutes | Mean time for participant to review explanation and make granular choice. |

| Re-consent Engagement Rate | >75% | Percentage of participants engaging with new consent requests for secondary AI analysis. |

| System Usability Scale (SUS) | >68 | Standard SUS questionnaire for the consent platform interface. |

Experimental Protocols

Protocol 3.1: Implementing a Dynamic Consent Framework for Longitudinal Behavioral Data Collection

Objective: To establish a technically and ethically robust dynamic consent system for a multi-year observational study collecting smartphone-derived behavioral data for neurological drug development.

Materials:

- Secure, HIPAA/GDPR-compliant participant portal (web/mobile app).

- Backend database with immutable audit log for all consent transactions.

- RESTful API suite for integrating with Electronic Data Capture (EDC) and ML training platforms.

- Microservices architecture for managing granular consent preferences.

Procedure:

- Initialization & Profiling:

- Participant is onboarded via a secure link.

- System presents a Core Consent module for primary data collection (e.g., passive GPS, app usage metrics).

- Each data stream is accompanied by an Explainable Data Usage (EDU) card, using layperson terms and visualizations (see Diagram 1) to detail:

- Purpose: "How this data will train an AI to detect patterns related to disease progression."

- Process: "The AI will look at changes in your movement patterns over time."

- Protections: "Data is pseudonymized and stored on encrypted servers."

- Participant selects preferences per data stream (Allow/Deny).

- Dynamic Interaction Loop:

- When a new research question arises requiring additional data analysis (e.g., applying a novel NLP model to message metadata for mood inference), the system triggers a Re-consent Request.

- The request is pushed to the participant's portal, featuring a new EDU card explaining the novel AI methodology, its goal, and any revised risks.

- Participant action (Allow/Deny for this specific use) is recorded in the audit log with a timestamp. The underlying raw data is tagged with these permissions.

- Continuous Control & Audit:

- Participant can access their "Consent Dashboard" anytime to view current permissions, withdraw consent for specific streams, or download a report of all their transactions.

- All ML training pipelines query the consent management API before data access. Data is filtered in real-time based on current permissions.
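The requirement that ML pipelines query consent state and filter data in real time can be sketched as a small middleware layer. The in-memory store, the (participant, stream, use) permission key, the deny-by-default rule, and all names are assumptions. A production system would call the study's actual consent service, for example one built on the FHIR Consent resource.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentStore:
    """Hypothetical consent state: latest decision per (participant, stream, use)."""
    decisions: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def record(self, participant, stream, use, allow):
        # The newest decision overrides earlier ones; every change is logged.
        self.decisions[(participant, stream, use)] = allow
        self.audit_log.append((datetime.now(timezone.utc).isoformat(),
                               participant, stream, use, allow))

    def allowed(self, participant, stream, use):
        # Deny by default: the absence of a decision is not consent.
        return self.decisions.get((participant, stream, use), False)


def filter_for_training(records, store, use):
    """Keep only records whose participant currently allows this stream for this use."""
    return [r for r in records
            if store.allowed(r["participant"], r["stream"], use)]
```

Filtering at read time means a withdrawal takes effect for the next training job without rewriting stored data. The audit log preserves the full history of decisions.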

Protocol 3.2: Experimental Validation of Explanation Modalities for AI Consent

Objective: To empirically determine which explanation modality for AI data usage maximizes participant comprehension and informed decision-making.

Design: Randomized Controlled Trial (RCT) with four arms.

Participants: n=400 recruited from a pool of research-naive and experienced volunteers.

Interventions:

- Arm A (Control): Text-only description of an AI model's data usage (standard paragraph).

- Arm B (Visual-Saliency): Text + Saliency map overlay showing which input features (e.g., specific sensor data points) most influenced a sample AI output.

- Arm C (Counterfactual): Text + Counterfactual examples (e.g., "If your 'time between phone unlocks' were 20% higher, the model's prediction of anxiety likelihood would decrease by 15%").

- Arm D (Interactive-Feature): Text + Interactive tool allowing participants to adjust sliders for hypothetical data values and see the impact on a simulated model output.

Procedure:

- Participants are randomized to one of four arms.

- They review the assigned explanation material for a defined AI task (e.g., "Predicting depressive episodes from accelerometer and call log data").

- They complete a Comprehension Assessment (10-item multiple-choice quiz).

- They complete the Subjective Understanding & Trust Scale (SUTS), a 7-point Likert scale questionnaire.

- They make a simulated consent decision.

- Data Analysis: ANOVA is used to compare mean comprehension scores and trust ratings across arms. Post-hoc tests identify superior modalities.
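For the Data Analysis step, the one-way ANOVA F statistic across the four arms can be computed as below. This from-scratch sketch only shows the arithmetic. In practice, `scipy.stats.f_oneway` returns both the F statistic and its p-value, and a post-hoc procedure such as Tukey's HSD would follow a significant result. The arm scores are invented for illustration.

```python
def one_way_anova_f(*groups):
    """Return (F, df_between, df_within) for k independent groups of scores."""
    if len(groups) < 2 or any(len(g) == 0 for g in groups):
        raise ValueError("need at least two non-empty groups")
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]
    # Between-arm variability: how far each arm mean sits from the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Within-arm variability: spread of individual scores around their arm mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = len(groups) - 1, n_total - len(groups)
    if df_within == 0 or ss_within == 0:
        raise ValueError("F is undefined without within-group variance")
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Invented comprehension scores (out of 10) for arms A-D, for illustration only.
arms = {
    "A_text": [5, 6, 7],
    "B_saliency": [6, 7, 8],
    "C_counterfactual": [7, 8, 9],
    "D_interactive": [8, 9, 10],
}
f_stat, df_b, df_w = one_way_anova_f(*arms.values())
```

With these toy scores, F = 5.0 on (3, 8) degrees of freedom. The p-value would come from the F distribution with those degrees of freedom.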

Diagrams: Workflows and Relationships

(Diagram 1: Dynamic Consent-AI Workflow Integration)

(Diagram 2: Components of an Explainable Data Usage Card)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Dynamic Consent & Explainability Platform

| Component / Reagent | Function / Purpose | Example Solutions / Standards |

|---|---|---|

| Consent Management API | Core engine to store, retrieve, and enforce granular consent preferences. Must integrate with EDC and ML ops. | TransCelerate's Digital Consent Solution, Bespoke microservice using FHIR Consent resource. |

| Immutable Audit Log | Provides a verifiable, tamper-proof record of all consent interactions for regulatory compliance. | Blockchain-based ledger (e.g., Hyperledger Fabric), or secured database with cryptographic hashing. |

| Explanation Interface Library | Pre-built UI components (widgets) for generating EDU cards with visual, interactive, or textual explanations. | IBM AI Explainability 360 (AIX360) UI widgets, LIME or SHAP for visual saliency integration. |

| Participant Portal Framework | Secure, user-friendly front-end for participants to manage consent, receive requests, and view explanations. | Custom-built React/Angular app, or modules within patient engagement platforms (e.g., MyDataHelps). |

| Consent-State-Aware Data Filter | Middleware that queries the Consent API and dynamically filters datasets for ML pipelines based on active permissions. | Custom Python/Java service deployed within the data lake or training environment. |

| Compliance Validation Suite | Automated checks to ensure data usage aligns with logged consent states (GDPR/CCPA/AI Act). | Automated policy engines using Rego (Open Policy Agent) or XBRL for reporting. |
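The "secured database with cryptographic hashing" option for the Immutable Audit Log can be illustrated with a hash chain. Each entry stores a SHA-256 digest of its content together with the previous entry's digest, so editing any earlier entry breaks verification. This sketch only detects tampering; it does not prevent it. A deployment would still need write-once storage, access control, and periodic external anchoring of the latest hash. The class and field names are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, payload):
    # Canonical JSON so the same payload always hashes identically.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class ConsentAuditLog:
    """Append-only, hash-chained record of consent transactions."""

    def __init__(self):
        self.entries = []

    def append(self, payload):
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"payload": dict(payload), "prev": prev, "hash": _digest(prev, payload)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self):
        """Recompute the chain; False if any entry or link was altered."""
        prev = GENESIS
        for e in self.entries:
            if e["prev"] != prev or e["hash"] != _digest(prev, e["payload"]):
                return False
            prev = e["hash"]
        return True
```

A blockchain ledger such as Hyperledger Fabric provides the same chaining property plus distributed replication, at considerably higher operational cost.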

Within ethical behavioral data collection research for human-centric studies (e.g., digital phenotyping, patient-reported outcomes in clinical trials), anonymization techniques are critical to preserve participant privacy while enabling robust machine learning (ML) analysis. The following table summarizes the core technical and quantitative characteristics of three principal methods.

Table 1: Comparative Analysis of Primary Anonymization Techniques for Behavioral Data Research

| Feature | Federated Learning (FL) | Differential Privacy (DP) | Synthetic Data Generation |

|---|---|---|---|

| Core Privacy Principle | Data Localization; Model Sharing | Mathematical Noise Injection | Pattern Replication; No Direct Linkage |

| Primary Output | A globally trained ML model | Noisy query results or a trained model with noise | A wholly new synthetic dataset |

| Privacy Guarantee | Architectural (reduces exposure risk) | Quantifiable (ε, δ)-budget | Statistical; risk of membership inference |

| Key Metric | Number of federation rounds, Client participation rate | Privacy budget (ε), typically 0.1-10 | Fidelity scores (e.g., KS statistic <0.1), Utility scores |

| Data Utility | High; model learns from raw data directly | Utility/Privacy trade-off; higher noise lowers utility | High if generative model is well-trained |

| Best Suited For | Collaborative training across silos (hospitals, pharma) | Releasing aggregate statistics or public models | Creating shareable, exploratory datasets for development |

| Computational Overhead | High (distributed training) | Low to Moderate | High (generative model training) |

| Regulatory Alignment | Supports GDPR/CCPA data minimization | Enables GDPR-compliant anonymization | Output must be truly non-identifiable per HIPAA Safe Harbor |

Application Notes and Detailed Protocols

Protocol 2.1: Cross-Institutional Behavioral Phenotyping via Federated Learning

Objective: To train a predictive model for depression severity from smartphone usage patterns (screen time, app usage entropy, circadian rhythm disruption) without centralizing data from multiple clinical research sites.

Materials & Workflow:

- Initialization: Coordinator server initializes a global model architecture (e.g., a 1D CNN-RNN hybrid).

- Client Selection: Each participating research site (client) is screened for minimum local dataset size (e.g., n ≥ 50 participants with validated PHQ-9 labels).

- Federated Training Round:

a. Broadcast: Server sends the current global model weights $W_t$ to all selected clients.

b. Local Computation: Each client $k$ trains the model on its local data for $E$ epochs (e.g., $E = 3$), computing updated weights $W_{t+1}^k$.

c. Secure Aggregation: Clients send encrypted model updates $W_{t+1}^k - W_t$ to the server. Through a Secure Aggregation protocol, the server recovers only the aggregated (average) update, never an individual client's update.

d. Update: Server computes the new global weights $W_{t+1} = W_t + \eta \cdot \Delta_t$, where $\Delta_t$ is the aggregated update and $\eta$ is a server learning rate.

- Iteration: Steps a-d repeat for $T$ rounds (e.g., $T = 100$) until model convergence is reached on a held-out validation set maintained by the coordinator.
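The training round in Protocol 2.1 can be simulated end to end with NumPy to show how the server update (global weights plus η times the aggregated client update) is assembled from local work. Several simplifying assumptions apply. A linear model trained by full-batch gradient descent replaces the CNN-RNN, encryption and secure aggregation are omitted, and each client's update is weighted by its sample count as in FedAvg. The three "sites" are synthetic.

```python
import numpy as np


def local_train(w, X, y, epochs=3, lr=0.1):
    """Client k: E epochs of full-batch gradient descent on mean squared error."""
    w = w.copy()
    for _ in range(epochs):
        grad = 2 * X.T @ (X @ w - y) / len(y)
        w -= lr * grad
    return w


def federated_round(w_global, clients, server_lr=1.0, epochs=3):
    """One round: broadcast, local training, weighted aggregation, server update."""
    deltas, sizes = [], []
    for X, y in clients:
        w_k = local_train(w_global, X, y, epochs=epochs)  # client's updated weights
        deltas.append(w_k - w_global)                       # update sent to the server
        sizes.append(len(y))
    aggregated = np.average(np.stack(deltas), axis=0, weights=sizes)
    return w_global + server_lr * aggregated               # new global weights


# Three hypothetical sites whose data share one underlying relationship.
rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])
clients = []
for n in (50, 80, 120):
    X = rng.normal(size=(n, 2))
    clients.append((X, X @ true_w + 0.01 * rng.normal(size=n)))

w = np.zeros(2)
for _ in range(100):  # T rounds
    w = federated_round(w, clients)
```

Raw `(X, y)` pairs never leave `local_train`; only weight differences reach the aggregation step. In a real deployment, that step is where secure aggregation would apply.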

Diagram: Federated Learning Workflow for Behavioral Data

Protocol 2.2: Differentially Private Release of Clinical Trial Engagement Statistics

Objective: To publicly release aggregate statistics (mean, standard deviation) on daily app engagement minutes from a sensitive behavioral intervention trial while providing a mathematical privacy guarantee.

Materials & Workflow:

- Query Formulation: Define the query function $f(D) = [\mathrm{mean}(D), \mathrm{std}(D)]$ on the raw dataset $D$ of engagement times.

- Sensitivity Calculation: Determine the L2-sensitivity $S$ of the vector-valued query. For bounded data (e.g., 0-1440 minutes), $S$ is calculable.

- Privacy Budget Allocation: Allocate a total privacy budget of $\varepsilon = 1.0$ ($\delta = 10^{-5}$) for this release. Alternatively, the budget may be split equally between the two outputs, each calibrated to its own sensitivity.

- Noise Injection: Generate calibrated noise from the Gaussian mechanism.

a. Compute the noise scale $\sigma = S \sqrt{2 \ln(1.25/\delta)} / \varepsilon$. (The classical analysis of this calibration assumes $0 < \varepsilon < 1$, so $\varepsilon = 1.0$ lies at its boundary.)

b. Draw noise $n_{\mathrm{mean}}, n_{\mathrm{std}} \sim \mathcal{N}(0, \sigma^2)$.

c. Release $[\mathrm{mean}(D) + n_{\mathrm{mean}}, \mathrm{std}(D) + n_{\mathrm{std}}]$.

- Budget Tracking: Deduct $\varepsilon = 1.0$ from the total privacy budget for dataset $D$. No further queries are allowed once the budget is exhausted.
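The Noise Injection and Budget Tracking steps can be sketched for the simplest case: releasing only the mean of clipped engagement minutes. The sketch makes these assumptions:

- The record count n is public.
- Neighboring datasets differ by replacing one participant's value, so the L2 sensitivity of the mean is 1440/n.
- Each release spends ε = 0.5 and δ = 5·10⁻⁶, so two releases exhaust a total budget of ε = 1.0, δ = 10⁻⁵ under basic composition while respecting the ε < 1 condition of the classical calibration.
- All names are hypothetical.

Vetted libraries such as OpenDP or Diffprivlib should be preferred for real releases, because naive floating-point noise sampling has known vulnerabilities.

```python
import math

import numpy as np


class PrivacyAccountant:
    """Tracks remaining (epsilon, delta) under basic sequential composition."""

    def __init__(self, epsilon_total, delta_total):
        self.eps_left = epsilon_total
        self.delta_left = delta_total

    def spend(self, eps, delta):
        # Small tolerances absorb floating-point rounding in the subtraction.
        if eps > self.eps_left + 1e-12 or delta > self.delta_left + 1e-18:
            raise RuntimeError("privacy budget exhausted; query refused")
        self.eps_left -= eps
        self.delta_left -= delta


def gaussian_sigma(l2_sensitivity, eps, delta):
    """Noise scale of the classical Gaussian mechanism (analysed for 0 < eps < 1)."""
    if not 0 < eps < 1:
        raise ValueError("classical calibration assumes 0 < eps < 1")
    return l2_sensitivity * math.sqrt(2 * math.log(1.25 / delta)) / eps


def dp_mean_minutes(minutes, accountant, eps=0.5, delta=5e-6, upper=1440.0, rng=None):
    """Release a noisy mean of daily engagement minutes clipped to [0, upper]."""
    rng = rng if rng is not None else np.random.default_rng()
    x = np.clip(np.asarray(minutes, dtype=float), 0.0, upper)  # enforce the assumed bounds
    sensitivity = upper / x.size        # one replaced record moves the mean by <= upper/n
    sigma = gaussian_sigma(sensitivity, eps, delta)
    accountant.spend(eps, delta)        # refuse before any noisy value is produced
    return float(x.mean() + rng.normal(0.0, sigma))
```

Clipping is what makes the sensitivity bound hold: an out-of-range value such as 2000 minutes is treated as 1440 before the mean is taken.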

Diagram: Differential Privacy Mechanism for Query Release

Protocol 2.3: Generating Synthetic Behavioral Actigraphy Data using GANs

Objective: To create a synthetic dataset of actigraphy time-series (rest-activity cycles) and associated mild cognitive impairment (MCI) labels for open-source algorithm development.

Materials & Workflow:

- Real Data Preparation: Curate a real, de-identified source dataset $X_{\mathrm{real}}$ of actigraphy sequences and labels. Normalize all features.

- Model Selection: Implement a Wasserstein GAN with Gradient Penalty (WGAN-GP) or a conditional GAN (cGAN) to preserve label-data relationships.

- Training:

a. Generator (G): Maps random noise $z$ and condition label $y$ to synthetic data $X_{\mathrm{synth}}$.

b. Critic/Discriminator (D): Distinguishes real $(X_{\mathrm{real}}, y)$ from synthetic $(X_{\mathrm{synth}}, y)$ pairs.

c. Train in an adversarial min-max game for a fixed number of iterations, monitoring loss equilibrium.

- Evaluation & Sampling:

a. Fidelity: Compare distributions of real vs. synthetic features at the population level (e.g., using the Kolmogorov-Smirnov test).

b. Utility: Train a downstream classifier (e.g., for MCI prediction) on synthetic data and test it on a held-out real validation set. Report the performance degradation.

c. Privacy: Perform membership inference attacks on the synthetic data to audit potential leakage.

- Release: Package the trained generator and/or a large sampled synthetic dataset $X_{\mathrm{synth}}$ for public use.
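The fidelity check in the evaluation step can be illustrated with the two-sample Kolmogorov-Smirnov statistic. It is the largest vertical gap between the empirical CDFs of a real and a synthetic feature, and it is the quantity behind the "KS statistic <0.1" fidelity example given for synthetic data earlier in this section. The sketch uses NumPy only (`scipy.stats.ks_2samp` also returns a p-value). The per-feature report, the 0.1 default threshold, and the function names are illustrative.

```python
import numpy as np


def ks_statistic(real, synth):
    """Two-sample KS statistic: max |F_real(x) - F_synth(x)| over all observed x."""
    real = np.sort(np.asarray(real, dtype=float))
    synth = np.sort(np.asarray(synth, dtype=float))
    grid = np.concatenate([real, synth])  # the supremum is attained at a data point
    cdf_real = np.searchsorted(real, grid, side="right") / real.size
    cdf_synth = np.searchsorted(synth, grid, side="right") / synth.size
    return float(np.max(np.abs(cdf_real - cdf_synth)))


def fidelity_report(real_cols, synth_cols, threshold=0.1):
    """Per-feature (KS statistic, passes) against the fidelity threshold."""
    report = {}
    for name in real_cols:
        d = ks_statistic(real_cols[name], synth_cols[name])
        report[name] = (d, d < threshold)
    return report
```

Per-feature KS checks only compare marginal distributions. Temporal structure and label-data relationships still need the utility test (train on synthetic, test on real) described above.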

Diagram: Synthetic Data Generation via GAN

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Frameworks for Implementing Anonymization Protocols

| Tool/Reagent | Primary Function | Relevance to Protocol |
|---|---|---|
| PySyft / PyGrid | A library for secure, private deep learning in PyTorch. | Implements Federated Learning with Secure Aggregation (Protocol 2.1). |
| TensorFlow Privacy | A library to train ML models with DP. | Provides ready-made optimizers (e.g., DP-SGD) for Protocol 2.2. |
| OpenDP / IBM Diffprivlib | Frameworks for applying DP to statistical queries and data analysis. | Used for accurate sensitivity analysis and noise mechanisms (Protocol 2.2). |
| CTGAN / TVAE | Generative models for tabular data (from the SDV library). | Base models for creating synthetic structured behavioral data. |
| DoppelGANger | A GAN designed for time-series synthetic data generation. | Critical for generating realistic actigraphy sequences (Protocol 2.3). |
| SmartNoise Core | Tools for executing DP queries safely. | Helps manage end-to-end DP workflows and budget accounting. |
| Flower Framework | A user-friendly Federated Learning framework. | Simplifies the orchestration of FL experiments across clients. |
| Synthetic Data Vault (SDV) | An ecosystem for creating and evaluating synthetic data. | Provides unified metrics for fidelity and utility (Protocol 2.3). |

This application note details practical protocols for selecting and implementing edge or cloud computing architectures within ethical behavioral data collection research, such as in digital phenotyping for clinical trials. The primary goal is to minimize the data footprint—the volume of raw data transmitted and stored—thereby enhancing privacy, reducing latency, and managing costs.

Table 1: Quantitative Comparison of Edge vs. Cloud Processing for Behavioral Data

| Parameter | Edge Computing | Cloud Processing | Implications for Data Footprint |
|---|---|---|---|
| Data Transmission Volume | Transmits only processed features/alerts (e.g., ~1-10 KB/s). | Transmits raw, continuous data streams (e.g., ~100-500 KB/s). | Edge reduces upstream bandwidth by 90-99%. |
| End-to-End Latency | 10-50 ms. | 150-2000+ ms (varies with network). | Edge enables real-time, closed-loop interventions. |
| Data Centralization | Data processed and often discarded locally; only results stored centrally. | All raw data centralized for processing and storage. | Edge drastically limits centralized data liability. |
| Privacy/Security Risk | Lower; sensitive data retained on device. | Higher; data leaves the device, increasing the exposure surface. | Edge aligns with data minimization principles (e.g., GDPR). |
| Compute Cost Model | Higher upfront device cost; lower ongoing bandwidth/cloud costs. | Low upfront cost; variable, scalable ongoing OPEX. | Edge is cost-effective for large N or continuous streaming. |
| Scalability | Scales with the number of deployed devices; requires device management. | Highly elastic; scales seamlessly with user load. | Cloud is favored for sporadic, intensive batch analysis. |
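The bandwidth figures in the table can be checked with simple arithmetic. The sketch below assumes decimal units (1 GB = 10^6 KB) and uses only the table's example rates; the 90-99% range corresponds to the worst case (10 vs. 100 KB/s) and roughly the best case (1 vs. 500 KB/s).

```python
SECONDS_PER_DAY = 24 * 60 * 60


def daily_volume_gb(rate_kb_per_s: float) -> float:
    """Data volume per participant per day in GB, assuming decimal units (1 GB = 1e6 KB)."""
    return rate_kb_per_s * SECONDS_PER_DAY / 1e6


def bandwidth_reduction(edge_kb_per_s: float, cloud_kb_per_s: float) -> float:
    """Fraction of upstream bandwidth saved by sending features instead of raw streams."""
    return 1.0 - edge_kb_per_s / cloud_kb_per_s


if __name__ == "__main__":
    print(f"raw stream at 100 KB/s: {daily_volume_gb(100):.2f} GB/day")
    print(f"features at 1 KB/s:     {daily_volume_gb(1):.4f} GB/day")
    print(f"worst case reduction:   {bandwidth_reduction(10, 100):.1%}")
    print(f"best case reduction:    {bandwidth_reduction(1, 500):.1%}")
```

At 100 KB/s, a single participant's raw stream amounts to about 8.64 GB per day, which is why the reduction compounds quickly across a multi-site cohort.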

Experimental Protocols for Architecture Validation

Protocol 2.1: Comparative Latency & Data Reduction Experiment

Aim: To quantitatively measure the data footprint reduction and latency improvement of an edge-based feature extraction pipeline versus raw cloud streaming.

Materials:

- Wearable sensor (e.g., Empatica E4) collecting PPG/ACC/EDA data.

- Edge device (e.g., NVIDIA Jetson Nano, Raspberry Pi 4+).

- Cloud VM instance (e.g., AWS EC2 t2.large).

- Custom Python data pipeline.

Methodology:

- Edge Pipeline:

  - Deploy a lightweight ML model (e.g., converted to TensorFlow Lite) on the edge device to process raw sensor data in real time.

  - Extract specific biomarkers (e.g., heart rate variability, step count, electrodermal/galvanic skin response peaks) on-device.

  - Transmit only these timestamped features to a cloud database every 60 seconds.

  - Log the timestamps of sensor data acquisition, feature transmission, and result storage.

- Cloud Pipeline:

  - Stream all raw, timestamped sensor data continuously from the wearable to a cloud buffer (e.g., AWS Kinesis).

  - Process the data on the cloud VM with an identical model to extract the same biomarkers.

  - Store results in the same cloud database.

  - Log the timestamps of sensor data acquisition and processed result storage.

- Analysis:

  - Data Volume: Compare the total bytes transmitted from the device in each condition over a 24-hour period.

  - Latency: For a sample of events in each pipeline, calculate the difference between data acquisition time and result storage time. Because the edge pipeline batches features every 60 seconds, report on-device processing time separately from batching delay so the two sources of latency are not conflated.

  - Statistical Comparison: Use a paired t-test only when both pipelines process the same sensor events (e.g., running concurrently on one stream); otherwise compare the two independent samples with Welch's t-test.
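The latency analysis can be sketched as follows, assuming each sampled event has acquisition and storage timestamps (in seconds) logged by both pipelines, so that the events are matched. The function names are hypothetical; scipy.stats.ttest_rel(cloud_ms, edge_ms) returns the same statistic plus a p-value, and scipy.stats.ttest_ind(..., equal_var=False) gives Welch's test for unmatched samples.

```python
import math
import statistics


def latencies_ms(acquired_s, stored_s):
    """Per-event latency in milliseconds from acquisition and storage timestamps in seconds."""
    return [(stored - acquired) * 1000.0 for acquired, stored in zip(acquired_s, stored_s)]


def paired_t(edge_ms, cloud_ms):
    """Paired t statistic (cloud minus edge) and degrees of freedom for matched events."""
    if len(edge_ms) != len(cloud_ms) or len(edge_ms) < 2:
        raise ValueError("need at least two matched events")
    diffs = [c - e for e, c in zip(edge_ms, cloud_ms)]
    n = len(diffs)
    se = statistics.stdev(diffs) / math.sqrt(n)  # undefined if every difference is identical
    return statistics.mean(diffs) / se, n - 1


if __name__ == "__main__":
    edge = latencies_ms([0.0, 1.0, 2.0], [0.030, 1.025, 2.040])
    cloud = latencies_ms([0.0, 1.0, 2.0], [0.400, 1.650, 2.900])
    print(paired_t(edge, cloud))
```

A positive statistic indicates that the cloud pipeline is slower on matched events; with the 60-second batching interval included, the edge latencies would need to be split into processing and batching components before this comparison is meaningful.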

Protocol 2.2: Ethical Data Minimization in Digital Phenotyping

Aim: To implement and validate an edge-based "filter-and-forward" protocol that pre-screens data for relevant behavioral episodes before transmission.

Materials:

- Smartphone with sensing capabilities (audio, accelerometer).

- On-device application with embedded ML model for audio event detection.

- Secure cloud backend for episode storage.

Methodology:

- Model Deployment: Integrate a pre-trained, privacy-preserving acoustic event detection model (e.g., for detecting coughs or specific keywords) into a mobile research application. The model must run entirely on-device.

- Continuous Local Monitoring: The app continuously analyzes ambient audio using the local model. Apart from the triggered clips described below, raw audio is never written to storage or transmitted; it exists only in volatile memory during analysis.

- Triggered Upload: Only when a target event (e.g., a cough) is detected with confidence above 85% does the app execute the following steps:

  - A 5-second audio clip centered on the detected event (drawn from a short rolling in-memory buffer) is temporarily saved.

  - The clip is immediately encrypted and uploaded to the study backend.

  - The local clip is permanently deleted after upload.

- Validation: Manually label a ground-truth dataset of recorded sessions. Calculate the percentage of true events captured and the reduction in total data uploaded compared to a continuous streaming approach.
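The trigger rule and the validation metrics can be sketched on timestamps alone, which keeps the example self-contained. Assumptions: the on-device detector emits (time_s, confidence) pairs, overlapping 5-second clips are merged into one upload window (a design choice not stated in the protocol), and ground-truth event times come from the manual labels. All function names are hypothetical.

```python
def upload_windows(detections, threshold=0.85, clip_s=5.0):
    """Clip windows centred on detections whose confidence exceeds the threshold."""
    half = clip_s / 2.0
    windows = []
    for t, conf in sorted(detections):
        if conf <= threshold:
            continue  # below threshold: the audio never leaves volatile memory
        start, end = max(0.0, t - half), t + half
        if windows and start <= windows[-1][1]:
            # Overlapping clips are merged so no audio segment is uploaded twice.
            windows[-1] = (windows[-1][0], max(windows[-1][1], end))
        else:
            windows.append((start, end))
    return windows


def capture_rate(event_times, windows):
    """Share of ground-truth events that fall inside an uploaded window."""
    hits = sum(any(s <= t <= e for s, e in windows) for t in event_times)
    return hits / len(event_times)


def upload_fraction(windows, session_s):
    """Uploaded audio duration as a fraction of a continuously streamed session."""
    return sum(e - s for s, e in windows) / session_s


if __name__ == "__main__":
    dets = [(10.0, 0.90), (11.0, 0.95), (30.0, 0.50), (50.0, 0.86)]
    w = upload_windows(dets)
    print(w, capture_rate([10.0, 30.0, 50.0], w), 1.0 - upload_fraction(w, 100.0))
```

The data reduction relative to continuous streaming is 1 minus the upload fraction; in the usage example, 11 seconds of a 100-second session are uploaded (an 89% reduction) while two of the three labeled events are captured.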

Visualized Architectures & Workflows

Diagram 1: Data Flow Comparison: Edge vs. Cloud Pipelines

Diagram 2: Ethical On-Device Filter-and-Forward Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Edge/Cloud Behavioral Research

| Item | Function in Research | Example Product/Solution |
|---|---|---|
| Edge Compute Device | Provides localized processing power for running ML models on sensor data without cloud transmission. | NVIDIA Jetson series, Google Coral Dev Board, Raspberry Pi. |
| Research-Grade Wearable | Collects high-fidelity, multimodal physiological and movement data in real-world settings. | Empatica E4, Biostrap, ActiGraph GT9X. |
| Mobile SDK for Sensing | Enables controlled, ethical data collection from smartphone sensors (audio, accelerometer, etc.). | Beiwe platform, Apple ResearchKit, AWARE framework. |
| ML Model Optimization Tool | Converts trained models to formats suitable for efficient edge deployment (e.g., quantized, pruned). | TensorFlow Lite, PyTorch Mobile, ONNX Runtime. |
| Secure Data Ingest Service | Provides a scalable, HIPAA/GDPR-compliant endpoint for receiving data from edge devices or apps. | AWS IoT Core, Azure IoT Hub (Google Cloud IoT Core was retired in August 2023). |
| Federated Learning Framework | Enables model training across decentralized edge devices without centralizing raw data. | Flower, TensorFlow Federated, PySyft. |
| Behavioral Feature Library | Provides validated algorithms for extracting clinical biomarkers from raw sensor data. | NeuroKit2, HeartPy, TSFEL. |

Within the broader thesis on developing ethical machine learning (ML) protocols for behavioral data collection, neurodegenerative disease trials present a critical use case. The quantitative assessment of motor function—gait, balance, tremor, bradykinesia—is essential for evaluating therapeutic efficacy in conditions like Parkinson’s disease (PD), Amyotrophic Lateral Sclerosis (ALS), and Huntington’s disease (HD). Traditional clinic-based assessments (e.g., Unified Parkinson's Disease Rating Scale, UPDRS) are subjective, sparse, and prone to "white coat" effects. Ethical ML-enabled continuous remote monitoring offers a paradigm shift, but introduces significant challenges: ensuring informed consent from potentially cognitively impaired populations, protecting highly sensitive biometric data, mitigating algorithmic bias, and maintaining patient dignity through minimal intrusion.

Recent studies use wearable sensors (inertial measurement units, IMUs), smartphone cameras, and keyboard/typing dynamics to capture digital motor biomarkers. The following table summarizes key quantitative findings from current research:

Table 1: Performance Metrics of ML Models for Digital Motor Biomarkers

| Disease Focus | Data Modality | Primary Sensor | Sample Size (Recent Study) | Key ML Model(s) | Reported Accuracy/Sensitivity | Primary Ethical Concern Addressed |
|---|---|---|---|---|---|---|
| Parkinson's Disease | Gait & Tremor Analysis | Wrist-worn IMU | n=432 | Random Forest, CNN | 94% (Tremor Severity Classification) | Data Anonymization; Continuous vs. Episodic Consent |
| ALS | Speech & Hand Function | Smartphone Microphone & Touchscreen | n=178 | Recurrent Neural Networks (RNNs) | 89% (ALSFRS-R Slope Prediction) | Participant Burden in Progressive Disability |
| Huntington's Disease | Chorea & Postural Stability | Chest-worn IMU + Depth Camera | n=95 | LSTM Networks | 91% (Chorea Detection) | Privacy in Home-Based Video Recording |
| Multiple System Atrophy | Gait Variability | In-shoe Pressure Sensors | n=121 | Gradient Boosting Machines | 87% (Differentiation from PD) | Data Security for Identifiable Movement Patterns |

Ethical ML Protocol: Application Notes

This protocol outlines a principled framework for embedding ethics into the ML pipeline for remote motor function data collection.

3.1 Participant-Centric Consent Framework: Implement a dynamic, layered consent process using a digital platform. This includes initial simplified explanations with visual aids, ongoing "touchpoint" reconfirmations via the app, and clear opt-out mechanisms for specific data types (e.g., audio, video). For participants with declared cognitive impairments, a verified caregiver co-consent mechanism is integrated.
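The dynamic, layered consent described above can be sketched as a small record that fails closed: collection of a data type is allowed only if it was granted at the last touchpoint, has not since been opted out, the reconfirmation is still current, and, where required, caregiver co-consent has been verified. The 30-day reconfirmation interval, the data-type strings, and the class and field names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional, Set

RECONFIRM_INTERVAL = timedelta(days=30)  # hypothetical touchpoint interval


@dataclass
class ConsentRecord:
    participant_id: str
    granted: Set[str] = field(default_factory=set)  # data types consented at the last touchpoint
    last_confirmed: Optional[datetime] = None
    requires_caregiver: bool = False  # set for participants with declared cognitive impairment
    caregiver_verified: bool = False  # set by study staff after verifying co-consent

    def reconfirm(self, now: datetime, data_types: Set[str]) -> None:
        """Record an in-app touchpoint at which the participant confirms the listed data types."""
        self.granted = set(data_types)
        self.last_confirmed = now

    def opt_out(self, data_type: str) -> None:
        """Withdraw consent for a single data type (e.g., audio) without leaving the study."""
        self.granted.discard(data_type)

    def may_collect(self, data_type: str, now: datetime) -> bool:
        """Fail closed: any missing or stale consent element blocks collection."""
        if self.last_confirmed is None or now - self.last_confirmed > RECONFIRM_INTERVAL:
            return False
        if self.requires_caregiver and not self.caregiver_verified:
            return False
        return data_type in self.granted


if __name__ == "__main__":
    record = ConsentRecord("P001")
    record.reconfirm(datetime(2024, 1, 1), {"accelerometer", "audio"})
    record.opt_out("audio")
    print(record.may_collect("audio", datetime(2024, 1, 2)))
```

The app would call may_collect before starting each sensor, so a lapsed touchpoint pauses collection until the participant reconfirms.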

3.2 Privacy-by-Design Data Pipeline: All raw data (e.g., video, GPS-tagged gait recordings) is encrypted on-device. Feature extraction (e.g., step velocity, tremor frequency) occurs locally on the participant's smartphone or a dedicated edge device, and only the resulting de-identified features are transmitted to secure servers. This minimizes exposure of raw biometrics.
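On-device feature extraction can be sketched as a function whose output is the only payload allowed to leave the device. Assumptions: the input is a window of accelerometer magnitude samples; tremor frequency is taken as the strongest spectral peak in a 3-7 Hz band (a hypothetical choice to adjust for the target population); a plain DFT is used so the sketch has no dependencies, whereas deployed code would use an optimized FFT; and the participant is identified by a pseudonym. Encryption and transport are out of scope, and the function names are hypothetical.

```python
import math


def dominant_frequency(signal, fs, fmin=3.0, fmax=7.0):
    """Frequency (Hz) of the largest DFT power peak within [fmin, fmax]."""
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]  # remove the gravity/DC offset
    best_f, best_p = 0.0, -1.0
    for k in range(1, n // 2 + 1):
        f = k * fs / n
        if not fmin <= f <= fmax:
            continue
        re = sum(v * math.cos(2 * math.pi * k * t / n) for t, v in enumerate(x))
        im = sum(v * math.sin(2 * math.pi * k * t / n) for t, v in enumerate(x))
        power = re * re + im * im
        if power > best_p:
            best_f, best_p = f, power
    return best_f


def extract_features(acc_magnitude, fs, pseudonym):
    """Summary features for transmission; the raw samples are not included."""
    n = len(acc_magnitude)
    mean = sum(acc_magnitude) / n
    rms = math.sqrt(sum((v - mean) ** 2 for v in acc_magnitude) / n)
    return {
        "participant": pseudonym,
        "tremor_freq_hz": dominant_frequency(acc_magnitude, fs),
        "movement_rms": rms,
        "window_s": n / fs,
    }


if __name__ == "__main__":
    fs = 50  # samples per second
    window = [9.81 + 0.2 * math.sin(2 * math.pi * 5 * t / fs) for t in range(100)]
    print(extract_features(window, fs, "P-7f3a"))
```

Because the payload is built from an explicit whitelist of summary values, adding a new transmitted field requires a deliberate code change rather than happening by default.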

3.3 Bias Mitigation & Algorithmic Fairness: Actively recruit diverse cohorts across age, gender, ethnicity, and disease severity during model development. Use techniques like adversarial de-biasing to ensure motor assessment algorithms perform equitably across subgroups. Regularly audit model performance for disparate error rates.
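The recurring audit for disparate error rates can be sketched as a per-subgroup misclassification rate plus the largest gap between subgroups. This is a minimal stdlib sketch; the 0.05 tolerance and the function names are hypothetical, and it does not use the aif360 API. Mitigation such as adversarial de-biasing would be applied during training; this check only measures the result.

```python
from collections import defaultdict


def subgroup_error_rates(y_true, y_pred, groups):
    """Misclassification rate for each subgroup label."""
    errors, counts = defaultdict(int), defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        counts[g] += 1
        errors[g] += int(t != p)
    return {g: errors[g] / counts[g] for g in counts}


def audit(y_true, y_pred, groups, max_gap=0.05):
    """Return per-group error rates, the max-min gap, and whether the gap exceeds max_gap."""
    rates = subgroup_error_rates(y_true, y_pred, groups)
    gap = max(rates.values()) - min(rates.values())
    return rates, gap, gap > max_gap


if __name__ == "__main__":
    y_true = [2, 1, 0, 0, 1, 0]
    y_pred = [2, 0, 0, 0, 1, 0]
    sex = ["F", "F", "F", "M", "M", "M"]
    print(audit(y_true, y_pred, sex))
```

Small subgroups make these rates noisy, so in practice each rate should be reported with its sample size or a confidence interval before a gap is treated as evidence of bias.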

3.4 Transparency & Explainability: Provide participants and clinicians with intuitive dashboards. Use SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to generate simple explanations for automated scores (e.g., "Your gait speed score decreased due to shorter stride length").
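SHAP explanations are built on Shapley values, which for a handful of features can be computed exactly. The sketch below is not the shap library: it enumerates every feature coalition, lets absent features take a reference (baseline) value, such as the participant's previous assessment, and uses a hypothetical linear gait score and feature names. Exact enumeration is exponential in the number of features; the shap library provides approximations for real models.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values; features outside a coalition take their baseline value."""
    names = list(x)
    n = len(names)

    def value(coalition):
        z = {k: (x[k] if k in coalition else baseline[k]) for k in names}
        return model(z)

    phi = {}
    for i in names:
        others = [k for k in names if k != i]
        total = 0.0
        for r in range(n):
            weight = factorial(r) * factorial(n - r - 1) / factorial(n)
            for subset in combinations(others, r):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi[i] = total
    return phi


def explain(phi, labels):
    """Plain-language summary naming the feature with the largest contribution."""
    total = sum(phi.values())
    top = max(phi, key=lambda k: abs(phi[k]))
    if abs(total) < 1e-12:
        return "Your score did not change."
    direction = "decreased" if total < 0 else "increased"
    return f"Your score {direction}, driven mainly by {labels[top]} (contribution {phi[top]:+.2f})."


def gait_score(z):
    # Hypothetical linear score; the weights are illustrative only.
    return 1.2 * z["stride_length_m"] + 0.01 * z["cadence_spm"]


if __name__ == "__main__":
    current = {"stride_length_m": 0.55, "cadence_spm": 100}
    previous = {"stride_length_m": 0.70, "cadence_spm": 100}
    phi = shapley_values(gait_score, current, previous)
    print(explain(phi, {"stride_length_m": "stride length", "cadence_spm": "cadence"}))
```

The Shapley values sum to the change in score between the baseline and the current assessment, which is what lets the dashboard attribute the whole change to individual features.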

Detailed Experimental Protocol: IMU-Based Gait Analysis in PD

Title: A 12-Week Remote Monitoring Study of Gait in Early-Stage Parkinson's Disease Using Ethical ML Protocols.

4.1 Objective: To train and validate an ML model for classifying PD severity (based on MDS-UPDRS Part III gait scores) from weekly 10-minute walking tasks, while adhering to ethical data collection principles.

4.2 Materials & Reagent Solutions

Table 2: Research Reagent Solutions & Essential Materials

| Item Name | Function/Description |
|---|---|
| Inertial Measurement Unit (IMU) | A small, wearable sensor containing accelerometers and gyroscopes to capture linear and angular motion (e.g., Axivity AX6; the AX3 records acceleration only). |
| Participant Smartphone App | Custom application for task reminders, secure local data processing, dynamic consent management, and encrypted feature transmission. |
| Secure Cloud Database | HIPAA/GDPR-compliant backend (e.g., AWS with a de-identified feature store) for aggregated model training and analysis. |
| Reference Clinical Scores | MDS-UPDRS Part III assessments performed via telemedicine at baseline, 6 weeks, and 12 weeks for ground-truth labeling. |
| Adversarial De-biasing Library | Software toolkit (e.g., IBM's AI Fairness 360, aif360) for reducing model bias against demographic subgroups. |
| Edge Computing Framework | Enables on-device feature extraction from raw IMU signals, preserving privacy (e.g., TensorFlow Lite). |

4.3 Participant Enrollment & Ethical Onboarding:

- Recruit participants (n=300 targeted) with early-stage PD (Hoehn & Yahr 1-2).

- Obtain digital informed consent via the study app, employing the layered framework (Section 3.1).

- Pair each participant with a clinician who confirms eligibility and provides clinical ground truth.

4.4 Data Collection Workflow:

- Weekly Task: Participants are prompted by the app to perform a 10-minute walking task at home, wearing IMUs on both wrists and ankles.