Demystifying PCA for Biomedical Research: A Practical Guide to Unsupervised Feature Extraction and Dimensionality Reduction

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on applying Principal Component Analysis (PCA) for unsupervised feature extraction. We cover foundational concepts, step-by-step methodology for high-dimensional omics and clinical data, common pitfalls and optimization strategies, and methods for validating and comparing PCA results against other techniques. The focus is on practical application in biomedical contexts, from exploratory data analysis to preparing data for downstream machine learning models.

What is PCA? Core Concepts for Unsupervised Feature Discovery in Biomedical Data

Within the thesis on unsupervised feature extraction for research data, Principal Component Analysis (PCA) is defined not merely as a tool for correlation analysis, but as a foundational eigenvector-based technique that projects high-dimensional data onto a new orthonormal basis of principal components (PCs). These PCs are linear combinations of the original variables, ordered by the amount of variance they capture from the data, thereby maximizing information retention while reducing dimensionality. Its unsupervised nature is critical—it identifies structure without reference to labels or outcomes, making it indispensable for exploratory data analysis, noise reduction, and visualization in domains from genomics to cheminformatics. In drug development, it is routinely applied to analyze high-throughput screening results, 'omics data (transcriptomics, proteomics), and chemical compound libraries to identify latent patterns, batch effects, or outlier samples.

Table 1: Variance Explained by Principal Components in a Representative Gene Expression Dataset (GSE12345)

| Principal Component | Eigenvalue | % of Total Variance Explained | Cumulative % Variance Explained |

|---|---|---|---|

| PC1 | 45.2 | 32.8% | 32.8% |

| PC2 | 28.7 | 20.9% | 53.7% |

| PC3 | 15.4 | 11.2% | 64.9% |

| PC4 | 9.1 | 6.6% | 71.5% |

| PC5 | 6.8 | 4.9% | 76.4% |

Table 2: PCA Application Comparison in Drug Development Research

| Application Area | Typical Input Data Dimensionality | Typical # of PCs Retained | Primary Goal |

|---|---|---|---|

| High-Throughput Screening | 10,000 - 100,000 compounds | 3-5 for visualization | Identify clusters of compounds with similar activity profiles; flag outliers. |

| Transcriptomic Analysis | 20,000+ genes x 100s of samples | 10-50 for downstream analysis | Remove batch effects, visualize sample clustering, reduce noise for models. |

| ADMET Property Modeling | 500-2000 molecular descriptors | 20-100 capturing >95% variance | Eliminate multicollinearity among descriptors for predictive QSAR models. |

Experimental Protocols

Protocol 1: PCA for Batch Effect Detection in Microarray or RNA-Seq Data

Objective: To identify and visualize non-biological technical variation (batch effects) in gene expression studies.

- Data Preprocessing: Start with a normalized gene expression matrix (genes as rows, samples as columns). Log-transform if necessary (e.g., log2(FPKM+1) for RNA-seq). Center the data by subtracting the mean expression of each gene.

- PCA Execution: Perform singular value decomposition (SVD) on the centered matrix. With genes as rows and samples as columns, this yields the left-singular vectors (gene loadings for each PC), the singular values (whose squares are proportional to the eigenvalues), and the right-singular vectors (which, multiplied by the singular values, give the sample scores on each PC).

- Variance Assessment: Calculate the percentage of variance explained by each PC using the squared singular values.

- Visualization: Generate a 2D scatter plot of samples using PC1 and PC2 scores. Color-code samples by known batch variables (e.g., sequencing run, processing date) and biological variables (e.g., treatment group, disease state).

- Interpretation: If samples cluster strongly by batch in PC1/PC2 space, a significant batch effect is present. Further PCs (PC3, PC4) should also be examined.
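The steps of this protocol can be sketched in a few lines of NumPy. This is a minimal illustration on simulated data, not a reference implementation: the matrix size, the two-batch layout, and the size of the simulated batch shift are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy data: 200 genes (rows) x 12 samples (columns), two batches.
n_genes, n_samples = 200, 12
batch = np.array([0] * 6 + [1] * 6)
expr = rng.normal(size=(n_genes, n_samples))
# Simulated batch effect (assumption): 50 genes are shifted in batch 1.
expr[:50, batch == 1] += 3.0

# Center each gene (row) by subtracting its mean across samples.
centered = expr - expr.mean(axis=1, keepdims=True)

# SVD of the genes x samples matrix: centered = U @ diag(s) @ Vt
U, s, Vt = np.linalg.svd(centered, full_matrices=False)

# Percentage of variance explained, from the squared singular values.
var_explained = 100 * s**2 / np.sum(s**2)

# Columns of U are gene loadings; sample scores are the right-singular
# vectors scaled by the singular values (samples x PCs).
gene_loadings = U
sample_scores = Vt.T * s

pc1 = sample_scores[:, 0]
print("Variance explained by PC1-PC3 (%):", var_explained[:3].round(1))
print("Mean PC1 score, batch 0 vs batch 1:",
      pc1[batch == 0].mean().round(2), pc1[batch == 1].mean().round(2))
```

In a real analysis, `sample_scores[:, 0]` and `sample_scores[:, 1]` would be plotted and colored by the batch and biological annotations described in the visualization step.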

Protocol 2: PCA for Dimensionality Reduction Prior to Clustering Analysis in Phenotypic Screening

Objective: To reduce the dimensionality of multi-parametric cellular feature data to enable robust clustering of compound mechanisms of action.

- Feature Standardization: Begin with a matrix of cellular features (e.g., morphology, intensity measurements) across many compounds and replicates. Scale each feature to have zero mean and unit variance (Z-score normalization).

- PCA Implementation: Apply PCA on the scaled matrix using covariance matrix diagonalization.

- Component Selection: Use the scree plot (eigenvalues vs. PC number) and the cumulative variance rule (e.g., retain PCs explaining >90% total variance) to select the number of components, k.

- Data Projection: Project the original scaled data onto the selected k PCs to create a new, reduced-dimension dataset (the PC scores matrix).

- Downstream Clustering: Use the PC scores matrix as input for unsupervised clustering algorithms (e.g., k-means, hierarchical clustering).
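The protocol above maps fairly directly onto scikit-learn. The sketch below uses simulated data with three hypothetical mechanism-of-action groups; the group count, feature count, and the 90% variance threshold are illustrative assumptions.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)

# Hypothetical screen: 3 mechanism groups x 40 compounds, 30 cellular features.
centers = rng.normal(scale=4.0, size=(3, 30))
X = np.vstack([c + rng.normal(size=(40, 30)) for c in centers])
# Put features on very different measurement scales (assumption).
X = X * rng.uniform(0.1, 100.0, size=30)

# Feature standardization: zero mean, unit variance per feature.
Z = StandardScaler().fit_transform(X)

# A float n_components keeps the smallest number of PCs whose cumulative
# explained variance reaches that fraction (requires the "full" solver).
pca = PCA(n_components=0.90, svd_solver="full")
scores = pca.fit_transform(Z)  # PC scores matrix (compounds x k)
k = pca.n_components_

# Downstream clustering on the reduced representation.
labels = KMeans(n_clusters=3, n_init=10, random_state=0).fit_predict(scores)

print("Components retained:", k)
print("Cumulative variance:", pca.explained_variance_ratio_.sum().round(3))
```

The number of clusters is fixed at three here only because the simulated data were built that way; in practice it would be chosen with a criterion such as silhouette score.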

Visualizations

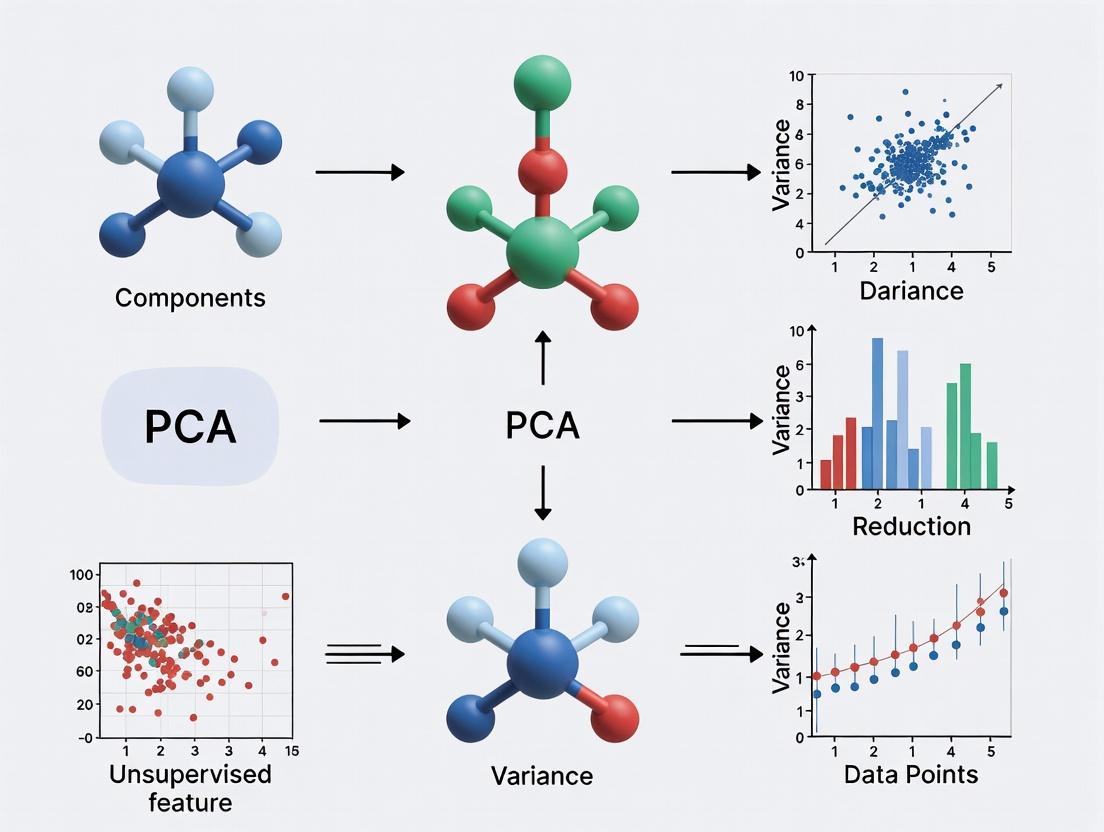

Title: PCA Data Analysis Workflow

Title: PCA Decomposes Data Variance Sources

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in PCA-Centric Analysis |

|---|---|

| R (with prcomp/factoextra) or Python (scikit-learn, sklearn.decomposition.PCA) | Core computational environment and libraries for performing PCA, calculating variance explained, and generating scores/loadings. |

| Gene Expression Normalization Suite (e.g., DESeq2, edgeR, limma) | For RNA-seq/microarray data: Essential preprocessing to normalize counts and model variance, creating the stable input matrix for PCA. |

| Metadata Management Database (e.g., LabGuru, ELN) | Critical for accurate sample annotation (batch, treatment, etc.) to color-code and interpret PCA score plots correctly. |

| High-Performance Computing (HPC) Cluster or Cloud Instance | Necessary for SVD computation on very large datasets (e.g., single-cell RNA-seq with >100k cells). |

| Interactive Visualization Tool (e.g., Plotly, ggplot2, Matplotlib) | Creates publishable and explorable PCA score plots, scree plots, and biplots for hypothesis generation. |

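| RobustScaler / StandardScaler (scikit-learn) | For non-omics data (e.g., ADMET properties): Preprocessing modules that put features on a comparable scale so PCA is not dominated by measurement units. StandardScaler standardizes to mean=0, variance=1; RobustScaler centers on the median and scales by the interquartile range, which is less sensitive to outliers. |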

Application Notes

Foundational Concepts for PCA in Biomedical Research

Principal Component Analysis (PCA) is a cornerstone technique for unsupervised feature extraction, particularly in high-dimensional research data common in genomics, proteomics, and cheminformatics. Its mathematical foundation lies in understanding and computing variance, the covariance matrix, and its eigenvectors/eigenvalues. These components enable the transformation of correlated variables into a set of linearly uncorrelated principal components, maximizing variance capture and facilitating dimensionality reduction.

Table 1: Key Mathematical Quantities in PCA

| Quantity | Formula | Role in PCA | Typical Data Scale (Biomedical) |

|---|---|---|---|

| Variance (σ²) | σ² = Σ(xᵢ - μ)²/(n-1) | Measures spread of a single feature. | Gene expression: 0.1 - 100 (log scale) |

| Covariance | Cov(X,Y) = Σ(xᵢ - μₓ)(yᵢ - μᵧ)/(n-1) | Measures linear relationship between two features. | -1 to +1 for standardized features (where it equals the correlation); unbounded for raw data |

| Eigenvalue (λ) | det(C - λI) = 0 | Indicates variance captured by each principal component. | λ₁ ≥ λ₂ ≥ ... ≥ λₚ; sum = total variance |

| Eigenvector (v) | (C - λI)v = 0 | Defines the direction of each principal component axis. | Unit vectors (‖v‖ = 1) |

| Covariance Matrix (C) | Cᵢⱼ = Cov(Featureᵢ, Featureⱼ) | Symmetric matrix summarizing all feature relationships. | p x p matrix for p features |

Table 2: Impact of Dimensionality Reduction via PCA (Example: Gene Expression Dataset)

| Metric | Original Data (20,000 genes) | After PCA (Top 50 PCs) | Reduction/Change |

|---|---|---|---|

| Number of Features | 20,000 | 50 | 99.75% reduction |

| Total Variance Retained | 100% | ~85-90% (Typical) | 10-15% loss |

| Computational Complexity | O(p²n) for p features, n samples | O(k²n) for k components | Drastically reduced |

| Noise Estimation | High (includes technical variation) | Reduced (assumes noise in low λ PCs) | Improved signal-to-noise |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for PCA-Based Analysis

| Item/Category | Function in PCA Workflow | Example Solution/Software |

|---|---|---|

| High-Dimensional Data Handler | Manages large-scale datasets (e.g., RNA-seq, LC-MS). | Python Pandas/R data.table; HDF5 format libraries. |

| Covariance Matrix Computator | Efficiently computes the covariance matrix for large p. | NumPy (.cov), SciPy, or specialized linear algebra libraries (BLAS/LAPACK). |

| Eigen Decomposition Solver | Calculates eigenvalues/vectors of the covariance matrix. | numpy.linalg.eigh (for symmetric matrices), ARPACK for very large matrices. |

| Standardization Scaler | Centers and scales features to mean=0, variance=1 (critical for PCA on mixed units). | Scikit-learn StandardScaler. |

| Visualization Suite | Projects and plots samples in reduced PCA space. | Matplotlib, Seaborn, Plotly for 2D/3D score plots; biplots require custom plotting code built on the scikit-learn components_ attribute. |

| Variance Explained Analyzer | Computes and plots cumulative explained variance ratio. | Custom script using pca.explained_variance_ratio_ (scikit-learn). |

Experimental Protocols

Protocol 1: Computing the Covariance Matrix and Performing PCA from First Principles

Objective: To extract principal components from a high-dimensional biomedical dataset (e.g., metabolomics concentrations across patient samples) by manually constructing the covariance matrix and performing eigen decomposition.

Materials:

- Dataset matrix X (n samples x p features), with features in columns.

- Computing environment with linear algebra capabilities (Python/NumPy, R, MATLAB).

- Standardization preprocessing script.

Procedure:

- Data Preprocessing: a. Center the data: For each feature column `j` in X, compute the mean μⱼ. Create a centered matrix B where Bᵢⱼ = Xᵢⱼ - μⱼ. b. (Optional, but recommended) Scale the centered features to unit variance: Divide each column `j` of B by its standard deviation σⱼ to create matrix Z. This is crucial when features are on different scales.

- Covariance Matrix Calculation: a. Let A represent the preprocessed matrix (B for mean-centering only, or Z for standardization). b. Compute the sample covariance matrix C: C = (1/(n-1)) * AᵀA. C is a symmetric p x p matrix. c. Verify symmetry: Check that `C[i, j] == C[j, i]` within machine precision.

- Eigen Decomposition: a. Solve the characteristic equation for C: Find λ and v such that Cv = λv. b. Use a dedicated solver for symmetric matrices (e.g., `numpy.linalg.eigh`). The output will be: a vector `eigenvalues` (λ₁, λ₂, ..., λₚ) and a matrix `eigenvectors` whose columns are the corresponding unit eigenvectors (v₁, v₂, ..., vₚ). Note that `numpy.linalg.eigh` returns eigenvalues in ascending order, so reorder both outputs into descending order before selecting components.

- Principal Component Projection: a. To reduce dimensionality to `k` components, select the first `k` eigenvectors from the `eigenvectors` matrix (columns corresponding to the top `k` eigenvalues). b. Form the projection matrix W (p x k). c. Compute the transformed data (PC scores): T = A W. T is an n x k matrix containing the coordinates of samples in the new PCA space.

- Validation: a. Calculate explained variance per PC: Variance explained by PCᵢ = λᵢ / Σ(λ). b. Plot the scree plot (eigenvalues vs. component number) and cumulative explained variance plot to inform choice of `k`.
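The procedure above can be written out as a short NumPy function. This is a sketch under stated assumptions: the function name `pca_from_covariance`, the simulated input matrix, and the choice of `k=3` are illustrative, and the variable names A, C, W, and T follow the notation of the protocol.

```python
import numpy as np

def pca_from_covariance(X, k, scale=True):
    """PCA via explicit covariance matrix and eigen decomposition.

    X is an (n samples x p features) array; returns scores T (n x k),
    projection matrix W (p x k), all eigenvalues (descending), and the
    explained variance ratio of every component.
    """
    X = np.asarray(X, dtype=float)
    n = X.shape[0]

    # Step 1: center (matrix B), optionally scale to unit variance (matrix Z).
    A = X - X.mean(axis=0)
    if scale:
        A = A / A.std(axis=0, ddof=1)

    # Step 2: sample covariance matrix C = A^T A / (n - 1); check symmetry.
    C = A.T @ A / (n - 1)
    assert np.allclose(C, C.T)

    # Step 3: eigh is for symmetric matrices and returns ASCENDING
    # eigenvalues, so reorder to descending.
    eigenvalues, eigenvectors = np.linalg.eigh(C)
    order = np.argsort(eigenvalues)[::-1]
    eigenvalues = eigenvalues[order]
    eigenvectors = eigenvectors[:, order]

    # Step 4: projection matrix W and PC scores T = A W.
    W = eigenvectors[:, :k]
    T = A @ W

    # Step 5: explained variance per PC.
    explained = eigenvalues / eigenvalues.sum()
    return T, W, eigenvalues, explained

# Hypothetical data: 60 samples, 8 correlated features.
rng = np.random.default_rng(42)
X = rng.normal(size=(60, 8)) @ rng.normal(size=(8, 8))
T, W, eigenvalues, explained = pca_from_covariance(X, k=3)
print("Explained variance ratio (top 3):", explained[:3].round(3))
```

For large p, forming the p x p covariance matrix becomes expensive, which is why SVD-based routines are usually preferred in practice; the explicit route is shown here because it mirrors the protocol step by step.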

Protocol 2: PCA for Unsupervised Batch Effect Detection in Multi-Cohort Studies

Objective: To apply PCA as a diagnostic tool to identify unwanted technical variation (batch effects) in integrated genomic datasets prior to downstream analysis.

Materials:

- Integrated gene expression matrix from multiple study batches/centers.

- Metadata table annotating sample batch, date, and other technical factors.

- Scikit-learn or equivalent PCA implementation.

Procedure:

- Data Integration & Standardization: a. Merge normalized count/FPKM/TPM matrices from all batches, using common gene identifiers. b. Apply log2 transformation if needed (e.g., for RNA-seq counts). c. Standardize the data: Scale each gene (feature) across all samples to have zero mean and unit variance using `StandardScaler`. This gives equal weight to all genes in covariance calculation.

- PCA Execution: a. Fit the PCA model to the standardized data using `PCA().fit(X_standardized)`. b. Retain a sufficient number of components to explain >80% of total variance, or for visualization, retain at least the top 3-5 PCs.

- Batch Effect Visualization: a. Project the data into the PCA space using `transform()` to get PC scores. b. Generate a 2D scatter plot of PC1 vs. PC2. Color data points by `batch_id` from metadata. c. Generate additional plots for PC1 vs. PC3, PC2 vs. PC3.

- Interpretation & Analysis: a. Positive Result for Batch Effect: If samples cluster strongly by batch in the PCA plot (especially along PC1 or PC2), a significant batch effect is present. b. Quantify Effect: Calculate the percentage of variance in key PCs that can be attributed to batch using simple ANOVA (PC score ~ batch). c. Feature Contribution: Examine the eigenvector (loading) weights for genes that contribute most to the PCs separating batches. These may be technical artifacts.

- Decision Point: a. If a major batch effect is detected, apply batch correction algorithms (ComBat, limma's `removeBatchEffect`) before re-running PCA for true biological discovery. b. If no strong batch effect is seen, PCA can proceed directly for biological feature extraction (e.g., identifying subtypes).
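The "Quantify Effect" step can be illustrated with a one-way ANOVA of each PC's scores against batch. The sketch below runs on simulated data; the three batches, the simulated offsets, and the helper name `batch_r2` are assumptions for the example, and the R² shown is simply the between-batch share of the sum of squares.

```python
import numpy as np
from scipy.stats import f_oneway
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(7)

# Hypothetical merged matrix: 60 samples (rows) x 300 genes, 3 batches of 20.
n_per_batch, n_genes = 20, 300
batch_id = np.repeat(["A", "B", "C"], n_per_batch)
X = rng.normal(size=(3 * n_per_batch, n_genes))
# Simulated batch effect (assumption): 100 genes shifted per batch.
for name, offset in {"A": 0.0, "B": 1.5, "C": -1.5}.items():
    X[batch_id == name, :100] += offset

# Standardize each gene, fit PCA, and obtain PC scores.
X_standardized = StandardScaler().fit_transform(X)
pca = PCA(n_components=5).fit(X_standardized)
scores = pca.transform(X_standardized)

def batch_r2(pc_scores, groups):
    """Fraction of a PC's variance attributable to batch (ANOVA R^2)."""
    grand_mean = pc_scores.mean()
    ss_total = np.sum((pc_scores - grand_mean) ** 2)
    ss_between = sum(
        np.sum(groups == g) * (pc_scores[groups == g].mean() - grand_mean) ** 2
        for g in np.unique(groups)
    )
    return ss_between / ss_total

results = []
for i in range(3):
    per_batch = [scores[batch_id == b, i] for b in np.unique(batch_id)]
    f_stat, p_value = f_oneway(*per_batch)
    results.append((batch_r2(scores[:, i], batch_id), p_value))
    print(f"PC{i + 1}: R^2 = {results[-1][0]:.2f}, ANOVA p = {p_value:.2e}")
```

A PC whose scores are largely explained by batch is a candidate for correction before biological interpretation; what counts as "largely" is a judgment call that depends on the study design.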

Mandatory Visualizations

Title: PCA Workflow from Raw Data to Dimensionality Reduction

Title: Relationship Between Covariance Matrix, Eigenvalues & Eigenvectors

Table 1: Definitions and Characteristics of PCA Core Terms

| Term | Mathematical Definition / Role | Interpretation in Research Context |

|---|---|---|

| Principal Components (PCs) | Eigenvectors of the data covariance matrix, representing orthogonal directions of maximum variance. PC1 captures the most variance, PC2 the second most, and so on. | New, uncorrelated features constructed from linear combinations of original variables. Used for dimensionality reduction and noise filtering. |

| Loadings | Weights (coefficients) of the original variables in the linear combination that forms each PC. Represented by the eigenvectors themselves. | Indicate the contribution and direction of influence of each original variable on a given PC. High absolute loading = variable is important for that PC's direction. |

| Scores | Projections of the original data points onto the new principal component axes. Calculated as the dot product of the centered data and the loadings. | Coordinates of each sample in the new PC space. Used for visualization (e.g., scatter plots), clustering, and outlier detection. |

| Explained Variance | The proportion of the total variance in the original dataset accounted for by each PC. Derived from the eigenvalues of the covariance matrix. | Quantifies the importance/information content of each PC. Guides the decision on how many PCs to retain for subsequent analysis. |

| Cumulative Explained Variance | Running sum of the explained variance for successive PCs. | Determines the total fraction of information preserved when using a reduced set of k components. Aids in setting dimensionality reduction thresholds. |

Table 2: Typical PCA Workflow Output Metrics (Example from Transcriptomics Data)

| Component | Eigenvalue | Explained Variance (%) | Cumulative Explained Variance (%) | Key Variables with High Loadings (absolute loading > 0.7) |

|---|---|---|---|---|

| PC1 | 8.92 | 44.6% | 44.6% | GeneA, GeneD, GeneF, GeneX |

| PC2 | 4.15 | 20.8% | 65.4% | GeneB, GeneH, Gene_T |

| PC3 | 2.01 | 10.1% | 75.5% | GeneC, GeneK, Gene_M |

| PC4 | 1.12 | 5.6% | 81.1% | GeneE, GeneQ |

Application Protocols for Unsupervised Feature Extraction

Protocol 2.1: Standard PCA for Exploratory Data Analysis (EDA)

Objective: To reduce dimensionality, visualize sample clustering, and identify dominant patterns and outliers in high-dimensional research data (e.g., metabolomics profiles, clinical biomarkers).

Materials & Reagents:

- Research Data Matrix: Samples (rows) x Variables/Features (columns). Must be numeric.

- Statistical Software: R (with `stats`, `factoextra`, `ggplot2` packages) or Python (with `scikit-learn`, `pandas`, `numpy`, `matplotlib`).

- Compute Environment: Standard workstation or HPC cluster for large datasets.

Procedure:

- Data Preprocessing: Center the data by subtracting the mean of each variable. Scale variables to unit variance if they are on different measurement scales (using Z-score normalization).

- Covariance/Correlation Matrix: Calculate the covariance matrix (if the data are only centered) or the correlation matrix (equivalent to the covariance matrix of data that are both centered and scaled).

- Eigendecomposition: Perform eigendecomposition on the matrix to obtain eigenvalues and eigenvectors. The eigenvectors are the loadings.

- Component Selection: Examine the scree plot (eigenvalues vs. component number) and cumulative explained variance table. Retain components that capture a predetermined threshold (e.g., >70-80% cumulative variance) or those before the "elbow" in the scree plot.

- Calculate Scores: Project the preprocessed data onto the selected loading vectors to compute the scores (`data_scaled %*% loadings`).

- Visualization & Interpretation: Create a scores plot (PC1 vs. PC2) to assess sample clustering. Create a loadings plot or biplot to interpret which original variables drive the separation seen in the scores plot.
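The choice between the covariance and correlation matrix in this protocol can be checked numerically: the correlation matrix of the raw data equals the covariance matrix of the Z-scored data. The short sketch below uses simulated features on deliberately mismatched scales (an assumption for illustration) to show how the leading loading vector changes with that choice.

```python
import numpy as np

rng = np.random.default_rng(3)

# Hypothetical data: 50 samples, 4 features whose scales differ by 1000x.
X = rng.normal(size=(50, 4)) * np.array([1.0, 10.0, 100.0, 1000.0])
X[:, 1] += 5 * X[:, 0]  # induce correlation between features 0 and 1

centered = X - X.mean(axis=0)
scaled = centered / X.std(axis=0, ddof=1)  # Z-scores

cov_centered = np.cov(centered, rowvar=False)  # covariance-based PCA
cov_scaled = np.cov(scaled, rowvar=False)      # covariance of Z-scores
corr_raw = np.corrcoef(X, rowvar=False)        # correlation-based PCA

# Covariance of Z-scored data is the correlation matrix.
same_matrix = np.allclose(cov_scaled, corr_raw)

# Leading loading vector under each choice (eigh sorts ascending,
# so the last column belongs to the largest eigenvalue).
w_cov = np.linalg.eigh(cov_centered)[1][:, -1]
w_corr = np.linalg.eigh(corr_raw)[1][:, -1]

print("cov(Z) equals corr(X):", same_matrix)
print("PC1 loadings, covariance PCA: ", np.abs(w_cov).round(3))
print("PC1 loadings, correlation PCA:", np.abs(w_corr).round(3))
```

With unscaled data, the first component is essentially the highest-variance feature, which is why scaling is recommended whenever variables are measured in different units.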

Protocol 2.2: PCA for Feature Selection in Drug Response Profiling

Objective: To isolate a subset of original features (e.g., gene expressions) most influential on the major sources of variance, for downstream modeling of drug sensitivity.

Procedure:

- Execute Protocol 2.1, Steps 1-4.

- Identify Significant Loadings: For the first k retained PCs, identify original variables whose absolute loading value exceeds a threshold (e.g., >0.5 or the top 10% per PC).

- Feature Aggregation: Aggregate the union of all variables identified across the k PCs. This forms a reduced, PCA-informed feature set.

- Validation: Use the reduced feature set in a separate predictive model (e.g., regression for IC50 values). Compare model performance (using cross-validation) against models using full feature sets or other selection methods.
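A minimal sketch of the loading-based selection in steps 2 and 3 follows. The function name `select_features_by_loading`, the top-10% rule, and the simulated data (four hypothetical genes driven by one latent factor) are assumptions for illustration; the validation step with a predictive model is not shown.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

def select_features_by_loading(X, feature_names, k=2, top_fraction=0.10):
    """Union of the features with the largest absolute loadings on each
    of the first k PCs (top_fraction of all features per PC)."""
    Z = StandardScaler().fit_transform(X)
    pca = PCA(n_components=k).fit(Z)
    n_top = max(1, int(np.ceil(top_fraction * Z.shape[1])))
    selected = set()
    for component in pca.components_:  # one row of loadings per PC
        top_idx = np.argsort(np.abs(component))[::-1][:n_top]
        selected.update(feature_names[i] for i in top_idx)
    return sorted(selected)

# Hypothetical data: 200 samples x 40 genes; genes 0-3 share a latent factor.
rng = np.random.default_rng(11)
latent = rng.normal(size=(200, 1))
X = rng.normal(size=(200, 40))
X[:, :4] += 3.0 * latent
names = [f"gene_{i}" for i in range(40)]

selected = select_features_by_loading(X, names, k=1, top_fraction=0.10)
print(selected)
```

Because PCA is unsupervised, features selected this way explain variance in the predictors, not necessarily in drug response, which is why the cross-validated comparison in the final step matters.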

Visualization of PCA Workflow and Relationships

Diagram Title: PCA Analysis Workflow from Data to Results

Diagram Title: Logical Relationship Between PCA Core Terms

The Scientist's Toolkit: Essential PCA Research Reagents & Solutions

Table 3: Key Reagents and Computational Tools for PCA-Based Feature Extraction

| Item / Solution | Function / Role in PCA Workflow | Example / Implementation Note |

|---|---|---|

| Data Normalization Suite | Prepares data for PCA by handling scale differences and stabilizing variance. | Z-score scaler, Min-Max scaler, or Pareto scaling (common in metabolomics). Use scale() in R or StandardScaler in Python. |

| Eigendecomposition Solver | Computes eigenvalues and eigenvectors (loadings) from the covariance/correlation matrix. | Singular Value Decomposition (SVD) is the preferred numerical method (prcomp in R, PCA in scikit-learn). |

| Scree Plot Visualization | Aids in deciding the number of components (k) to retain by plotting explained variance against component number. | Use fviz_eig() from R factoextra or matplotlib.pyplot.plot in Python. |

| Biplot Generation Tool | Overlays scores and loadings on the same plot to visualize sample patterns and variable contributions simultaneously. | Use fviz_pca_biplot() in R or custom plotting using loading arrows in Python. |

| Parallel Analysis Script | A statistical method to determine significant components by comparing data eigenvalues to those from random datasets. | Use fa.parallel() from the R psych package, or a custom permutation script in Python. |

| High-Performance Computing (HPC) Environment | Enables PCA on extremely large datasets (e.g., single-cell RNA-seq) where matrix operations exceed local memory. | Cloud platforms (AWS, GCP) or local clusters with distributed linear algebra libraries. |
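The parallel analysis entry in the table above can be sketched in Python as follows. This is a permutation-based variant written for illustration (it is not a port of `fa.parallel()`): each column is shuffled independently to build a null distribution of eigenvalues, and the function name, iteration count, and 95th-percentile threshold are assumptions.

```python
import numpy as np

def parallel_analysis(X, n_iter=100, percentile=95, seed=0):
    """Count leading components whose correlation-matrix eigenvalues
    exceed the chosen percentile of eigenvalues from permuted data."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    observed = np.sort(np.linalg.eigvalsh(np.corrcoef(X, rowvar=False)))[::-1]

    null = np.empty((n_iter, p))
    for i in range(n_iter):
        # Shuffling each column independently destroys correlations between
        # features while preserving each feature's marginal distribution.
        permuted = np.column_stack([rng.permutation(X[:, j]) for j in range(p)])
        null[i] = np.sort(np.linalg.eigvalsh(np.corrcoef(permuted, rowvar=False)))[::-1]

    threshold = np.percentile(null, percentile, axis=0)
    above = observed > threshold
    # Keep components up to (not including) the first one below threshold.
    n_keep = p if above.all() else int(np.argmin(above))
    return n_keep, observed, threshold

# Hypothetical data: 150 samples, 12 features driven by 2 latent factors.
rng = np.random.default_rng(2)
factors = rng.normal(size=(150, 2))
loadings = np.zeros((2, 12))
loadings[0, :6] = 1.0
loadings[1, 6:] = 1.0
X = factors @ loadings + rng.normal(size=(150, 12))

n_keep, observed, threshold = parallel_analysis(X)
print("Components retained:", n_keep)
```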

Why Go Unsupervised? The Role of PCA in Exploratory Data Analysis (EDA).

Within the broader thesis on Principal Component Analysis (PCA) for unsupervised feature extraction in research data, this document establishes its foundational role in Exploratory Data Analysis (EDA). Unsupervised methods like PCA are critical first steps in high-dimensional research datasets—common in genomics, proteomics, and chemoinformatics—where no prior labeling or outcome data is available or should be assumed. PCA facilitates dimensionality reduction, noise filtering, and the revelation of intrinsic data structure without bias from supervised targets, guiding subsequent hypothesis generation and experimental design.

Application Notes: Core Functions of PCA in EDA

2.1. Dimensionality Reduction & Visualization: Transforms high-dimensional data into 2 or 3 principal components (PCs) for scatter plot visualization, allowing identification of clusters, outliers, and trends.

2.2. Noise Reduction: By retaining PCs that capture significant variance and discarding low-variance components, PCA can improve the signal-to-noise ratio.

2.3. Detecting Hidden Patterns & Correlations: Reveals relationships between variables (loadings) and samples (scores) that are not apparent in raw data.

2.4. Addressing Multicollinearity: Creates new, orthogonal (uncorrelated) features (PCs) from original, often correlated, variables.

2.5. Pre-processing for Downstream Analysis: The reduced, de-noised PCA output is a compact input for subsequent clustering (e.g., k-means) or supervised learning algorithms.
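The noise-reduction function (2.2) can be illustrated by projecting data onto the top PCs and reconstructing it. The sketch below uses simulated low-rank data with added Gaussian noise; the rank, noise level, and matrix size are assumptions, and in real data the true signal is of course unknown.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(5)

# Hypothetical data: a rank-3 signal observed with additive noise.
n_samples, n_features, rank = 100, 50, 3
signal = rng.normal(size=(n_samples, rank)) @ rng.normal(size=(rank, n_features))
noisy = signal + rng.normal(scale=0.5, size=signal.shape)

# Keep only the top PCs, then map the scores back to the original space.
pca = PCA(n_components=rank).fit(noisy)
denoised = pca.inverse_transform(pca.transform(noisy))

# Mean squared error against the known signal, before and after.
err_noisy = np.mean((noisy - signal) ** 2)
err_denoised = np.mean((denoised - signal) ** 2)
print(f"MSE noisy: {err_noisy:.3f}, MSE after PCA reconstruction: {err_denoised:.3f}")
```

The improvement relies on the assumption that signal concentrates in the high-variance components; low-variance biological effects would be discarded along with the noise.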

Protocols for PCA in EDA

Protocol 3.1: Standard PCA Workflow for Omics Data

Objective: To perform unsupervised exploration of a gene expression microarray dataset (samples x genes) to identify potential sample groupings and driver genes.

Materials & Input Data:

- Normalized and scaled gene expression matrix (e.g., log2-transformed, Z-scored per gene).

- Sample metadata (e.g., treatment, batch, phenotype).

Procedure:

- Data Centering: Center the data matrix so each gene has a mean of zero.

- Covariance Matrix Computation: Calculate the covariance matrix of the centered data.

- Eigendecomposition: Perform eigendecomposition on the covariance matrix to obtain eigenvectors (principal component loadings) and eigenvalues (variance explained by each PC).

- Projection: Project the centered data onto the selected eigenvectors to obtain principal component scores for each sample.

- Variance Analysis: Calculate the percentage of total variance explained by each PC.

- Visualization: Generate a scree plot (PC vs. variance explained) and a 2D/3D scores plot (e.g., PC1 vs. PC2). Color points by metadata.

- Loading Interpretation: Examine the genes with the highest absolute loadings (contributions) on PCs defining sample clusters.

Protocol 3.2: PCA for Batch Effect Detection

Objective: To assess and visualize the presence of technical batch effects in high-throughput screening data.

Procedure:

- Perform PCA as in Protocol 3.1 on the entire normalized dataset.

- Generate a PC scores plot (PC1 vs. PC2).

- Color-code data points by the known batch variable (e.g., plating date, instrument ID).

- Interpretation: If samples cluster strongly by batch rather than biological condition along a major PC, a significant batch effect is indicated. This must be addressed (e.g., via ComBat, surrogate variable analysis) before biological analysis.

Data Presentation

Table 1: Variance Explained by Principal Components in an Example Transcriptomic Study (n=100 samples, 20,000 genes)

| Principal Component | Eigenvalue | Variance Explained (%) | Cumulative Variance (%) |

|---|---|---|---|

| PC1 | 45.2 | 22.6% | 22.6% |

| PC2 | 18.7 | 9.4% | 32.0% |

| PC3 | 9.8 | 4.9% | 36.9% |

| PC4 | 5.1 | 2.6% | 39.5% |

| PC5 | 4.2 | 2.1% | 41.6% |

| ... | ... | ... | ... |

| PC20 | 0.7 | 0.35% | 55.1% |

Table 2: Top Gene Loadings for PC1 in the Example Study

| Gene Symbol | Loading Value (PC1) | Known Biological Function |

|---|---|---|

| GENEX | 0.145 | Involved in inflammatory response pathway. |

| GENEY | 0.142 | Cell cycle regulator. |

| GENEZ | -0.138 | Metabolic enzyme. |

| GENEW | 0.134 | Transcriptional activator. |

| GENEV | -0.130 | Apoptosis-related protein. |

Visualizations

PCA Workflow for EDA

Geometric View of PCA

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for PCA-based EDA in Computational Research

| Item/Category | Example/Specific Tool | Function in PCA/EDA |

|---|---|---|

| Programming Environment | Python (scikit-learn, NumPy, pandas), R (stats, factoextra) | Provides libraries for efficient numerical computation and implementation of PCA. |

| Data Normalization Lib | scikit-learn StandardScaler, RobustScaler | Pre-processes data by centering and scaling, a critical step before PCA. |

| PCA Algorithm | scikit-learn PCA(), TruncatedSVD() | Performs the core dimensionality reduction calculation. |

| Visualization Library | Matplotlib, Seaborn, ggplot2, plotly | Creates scree plots, biplots, and 2D/3D scores plots for interpreting PCA results. |

| Interactive EDA Platform | Jupyter Notebook, RMarkdown | Allows integrated analysis, visualization, and documentation in a reproducible format. |

| High-Performance Compute | Cloud services (AWS, GCP) or local clusters | Handles eigendecomposition for extremely large matrices (e.g., single-cell genomics). |

Application Notes

Principal Component Analysis (PCA) serves as a foundational tool for unsupervised feature extraction across multi-omics and clinical data, enabling dimensionality reduction, noise reduction, and exploratory data analysis. The following notes detail its application within key research domains, framed within the thesis that PCA is a critical first step for revealing latent biological structures and informing downstream supervised analyses.

Genomics: Population Stratification and Batch Effect Detection

PCA is routinely applied to single nucleotide polymorphism (SNP) array or whole-genome sequencing data to address population stratification—a confounder in genome-wide association studies (GWAS). By extracting principal components (PCs) that capture genetic ancestry, researchers can adjust models to prevent spurious associations. Furthermore, PCA effectively visualizes technical batch effects, allowing for their correction before analysis.

Proteomics: Quality Control and Biomarker Discovery

In high-throughput mass spectrometry-based proteomics, PCA is used to assess technical reproducibility across sample runs and to identify outlier samples. By reducing thousands of protein abundance features to 2-3 PCs, it facilitates the detection of sample clusters based on biological condition (e.g., disease vs. control), guiding initial biomarker discovery efforts.

Metabolomics: Sample Classification and Pathway Analysis

Metabolomic profiles are highly susceptible to experimental variation. PCA provides a rapid, unsupervised method to view global metabolic patterns, distinguishing sample groups based on phenotype. The loadings of the first few PCs highlight metabolites contributing most to variance, which can be mapped onto biochemical pathways for functional interpretation.

Clinical Trial Data: Patient Cohort Identification and Multimodal Integration

In clinical trials, PCA can integrate diverse continuous variables (e.g., vital signs, lab values) to identify distinct patient subgroups or disease severity clusters. When bridging omics and clinical data, PCA on combined feature sets can reveal axes of variation that correlate with clinical outcomes, generating hypotheses for mechanistic drivers.

Table 1: Summary of PCA Applications Across Data Types

| Data Type | Primary Purpose of PCA | Typical Input Features | Key Output | Common Variance Explained by Top 2-3 PCs |

|---|---|---|---|---|

| Genomics | Population stratification, batch correction | 50k-1M SNPs | Ancestry-informative PCs, outlier samples | 1-10% (due to high dimensionality) |

| Proteomics | Quality control, sample clustering | 1k-10k protein abundances | Sample run QC plots, condition separation | 20-40% (subject to high technical noise) |

| Metabolomics | Pattern discovery, metabolite ranking | 100-1k metabolite intensities | Phenotype-driven clustering, key metabolites | 30-60% (higher for targeted assays) |

| Clinical Trial | Patient stratification, data fusion | 10-50 continuous clinical variables | Patient subgroups, integrated disease axes | 40-70% (lower dimensionality) |

Detailed Experimental Protocols

Protocol 1: PCA for Population Stratification in GWAS

Objective: To identify and correct for population substructure using genomic SNP data.

Materials: Genotype data (PLINK .bed/.bim/.fam files), computational resources.

Software: PLINK; R with SNPRelate, or flashpca.

Procedure:

- Data Pruning: Use PLINK to perform linkage disequilibrium (LD) pruning: `plink --bfile data --indep-pairwise 50 5 0.2`. This identifies a subset of approximately independent SNPs, written by default to `plink.prune.in`. Extract them into a pruned dataset: `plink --bfile data --extract plink.prune.in --make-bed --out data_pruned`.

- PCA Calculation: On the pruned SNP set, run a scalable PCA algorithm: `flashpca --bfile data_pruned --ndim 10 --out pc_output`.

- Visual Inspection: Plot PC1 vs. PC2, coloring samples by presumed population. Identify clear genetic outliers.

- Covariate Inclusion: Include the top 5-10 PCs as covariates in the GWAS association model to control for stratification.

Protocol 2: PCA for Quality Control in LC-MS Proteomics

Objective: To assess technical reproducibility and identify sample outliers.

Materials: Normalized protein abundance matrix (samples x proteins), missing values imputed.

Software: R with stats package or Python with scikit-learn.

Procedure:

- Data Scaling: Apply unit variance scaling (z-scoring) to each protein abundance feature across samples.

- PCA Execution: Perform PCA on the scaled matrix using the prcomp() function in R.

- Outlier Detection: Generate a scores plot (PC1 vs. PC2). Samples falling beyond the 95% confidence ellipse (Hotelling's T²) are flagged as potential outliers for review.

- Batch Visualization: Color samples by processing batch on the scores plot. A strong batch-driven cluster indicates the need for ComBat or similar batch correction.
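The outlier-detection step can be sketched in Python as an alternative to the R route named in the protocol. The example computes Hotelling's T² from the first two PC scores of simulated data. The critical value uses one common F-distribution form, k(n-1)/(n-k) · F(0.95; k, n-k); other forms exist, so treat the threshold as an assumption, along with the simulated "failed run" sample.

```python
import numpy as np
from scipy.stats import f
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(21)

# Hypothetical abundance matrix: 40 samples x 200 proteins.
n, p, k = 40, 200, 2
X = rng.normal(size=(n, p))
X[0] += 4.0  # simulated failed run: sample 0 shifted across all proteins

# Unit variance scaling, then PCA on the scaled matrix.
Z = StandardScaler().fit_transform(X)
pca = PCA(n_components=k).fit(Z)
scores = pca.transform(Z)

# Hotelling's T^2 per sample: squared scores weighted by each PC's variance.
t2 = np.sum(scores**2 / pca.explained_variance_, axis=1)

# 95% critical value (one common form; an assumption, see lead-in).
t2_crit = k * (n - 1) / (n - k) * f.ppf(0.95, k, n - k)

outliers = np.where(t2 > t2_crit)[0]
print("T^2 critical value:", round(t2_crit, 2))
print("Flagged sample indices:", outliers)
```

Samples flagged this way are candidates for review rather than automatic exclusion, since roughly 5% of well-behaved samples are expected to fall outside a 95% region.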

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Omics/Clinical Research |

|---|---|

| Illumina SNP Genotyping Array | Provides high-throughput, cost-effective genome-wide SNP data for PCA-based stratification. |

| TMT/Isobaric Label Reagents (Thermo Fisher) | Enables multiplexed quantitative proteomics, generating high-dimensional data suitable for PCA-driven QC and discovery. |

| Mass Spectrometry-Grade Solvents | Essential for reproducible LC-MS metabolomics/proteomics, minimizing technical variance that PCA can detect. |

| EDTA or Heparin Plasma Collection Tubes | Standardized blood collection for metabolomics/proteomics, ensuring pre-analytical consistency. |

| Clinical Data Standardization Toolkit (CDISC) | Provides standardized formats (SDTM, ADaM) for clinical trial data, facilitating cleaner integration and PCA. |

Visualizations

Title: PCA for GWAS Population Stratification Workflow

Title: PCA for Multi-Omics and Clinical Data Integration

Hands-On PCA: A Step-by-Step Pipeline for Feature Extraction in R/Python

Principal Component Analysis (PCA) is a cornerstone technique for unsupervised feature extraction in research data, particularly in fields like omics sciences and quantitative structure-activity relationship (QSAR) modeling in drug development. Its efficacy is wholly dependent on the quality and preparation of the input data. Inappropriate preprocessing can lead to components dominated by technical artifacts (e.g., measurement scale) rather than biological or chemical variance, yielding misleading conclusions. This document outlines standardized protocols for the three foundational preprocessing steps—scaling, centering, and handling missing data—within the PCA workflow.

Quantitative Comparison of Preprocessing Methods

Table 1: Impact of Scaling & Centering on Simulated Spectral Data (n=100 samples, p=500 features)

| Preprocessing Method | Dominant PC1 Variance Explained | Biological Cluster Separation (Silhouette Score) | Interpretation | Primary Use Case |

|---|---|---|---|---|

| Raw Data | 99.2% | 0.12 | PC1 reflects largest absolute values, not biological signal. | None recommended. |

| Centering Only | 45.7% | 0.58 | Removes mean bias, variance reflects spread from origin. | Features on same scale (e.g., gene expression from same platform). |

| Unit Variance Scaling (Auto) | 22.3% | 0.85 | All features contribute equally, may amplify noise. | Features with different units (e.g., concentration, intensity, temperature). |

| Pareto Scaling | 38.5% | 0.79 | Compromise: scales by sqrt(SD), reduces noise impact. | Metabolomics/NMR data where high-intensity peaks dominate. |

| Range Scaling | 25.1% | 0.82 | Scales to [0,1] or [-1,1], sensitive to outliers. | Bounded measurements or when outlier removal is performed first. |
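The scaling variants compared in Table 1 differ only in the factor each centered column is divided by. The sketch below illustrates that difference on made-up data; the helper name `scale_features` and the three toy features are assumptions, and the code does not reproduce the figures in the table.

```python
import numpy as np


def scale_features(X, method="auto"):
    """Center each column, then divide by the scaling factor for `method`."""
    X = np.asarray(X, dtype=float)
    centered = X - X.mean(axis=0)
    sd = X.std(axis=0, ddof=1)
    if method == "center":
        return centered
    if method == "auto":      # unit variance scaling
        return centered / sd
    if method == "pareto":    # divide by the square root of the standard deviation
        return centered / np.sqrt(sd)
    if method == "range":     # divide by max - min
        return centered / (X.max(axis=0) - X.min(axis=0))
    raise ValueError(f"unknown scaling method: {method}")


rng = np.random.default_rng(1)
# Three hypothetical features on very different scales
X = rng.normal(loc=[10.0, 500.0, 0.1], scale=[1.0, 100.0, 0.01], size=(50, 3))
for method in ("center", "auto", "pareto", "range"):
    scaled = scale_features(X, method)
    print(method, np.round(scaled.std(axis=0, ddof=1), 3))
```

After Pareto scaling the high-variance feature still has the largest spread, but by a much smaller margin than in the centered data, which is the compromise the table describes.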

Table 2: Performance of Missing Data Imputation Methods (Benchmark on LC-MS Dataset, 15% Missing Not at Random)

| Imputation Method | PCA Model Stability (Procrustes Similarity to Complete) | Preservation of Covariance Structure | Computation Time (s) | Recommended For |

|---|---|---|---|---|

| Complete Case Analysis | 0.51 | Very Poor | <1 | Not recommended except for trivial missingness. |

| Mean/Median Imputation | 0.72 | Poor (Biases variance) | <1 | Last resort for very low missingness (<5%). |

| k-Nearest Neighbors (k=10) | 0.94 | Good | ~15 | General purpose, data with local structure. |

| Iterative SVD (missMDA) | 0.96 | Excellent | ~25 | Low-rank data (e.g., gene expression). |

| Random Forest (missForest) | 0.98 | Excellent | ~120 | Complex, non-linear relationships. |

Detailed Experimental Protocols

Protocol 3.1: Systematic Preprocessing for PCA on Omics Data

Aim: To prepare a high-dimensional dataset (e.g., proteomics, metabolomics) for robust PCA. Materials: Raw feature matrix (samples x variables), statistical software (R/Python). Procedure:

- Data Audit: Log-transform if data is right-skewed (common in mass spectrometry). Confirm with density plots.

- Missing Data Imputation: a. Identify missingness mechanism (e.g., Missing Completely at Random (MCAR) via Little's test). b. For missingness <10%, use Iterative SVD Imputation (Algorithm 1). c. For higher missingness or complex patterns, use MissForest. d. Algorithm 1 (Iterative SVD): i. Initialize missing values with column means. ii. Perform SVD on the completed matrix. iii. Reconstruct matrix using d principal components (where d is selected by cross-validation). iv. Replace initial missing values with reconstructed values. v. Repeat steps ii-iv until convergence (change < 1e-5).

- Centering: Subtract the column mean from each value: \( X_{\text{centered}} = X - \bar{X} \).

- Scaling: Divide each column by its chosen scaling factor. a. Unit Variance: Scaling factor = standard deviation. b. Pareto: Scaling factor = \( \sqrt{\text{standard deviation}} \). c. Range: Scaling factor = \( \max(\text{value}) - \min(\text{value}) \).

- Validation: Run PCA on a stable subset. The variance explained by successive components should decrease smoothly without large drops after the first few PCs.
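Algorithm 1 (Iterative SVD) from the imputation step can be sketched with NumPy as below. This is an illustrative version under stated assumptions: the rank is fixed by hand instead of being chosen by cross-validation, the matrix is column-centered before each SVD (a choice not spelled out in the protocol), and the function name and simulated low-rank data are hypothetical. For real analyses a maintained package such as missMDA is the safer route.

```python
import numpy as np


def iterative_svd_impute(X, rank=2, tol=1e-5, max_iter=500):
    """Fill NaNs by repeatedly reconstructing the matrix from a rank-`rank` SVD."""
    X = np.asarray(X, dtype=float)
    missing = np.isnan(X)
    if not missing.any():
        return X.copy()
    # Step i: initialize missing entries with column means
    filled = np.where(missing, np.nanmean(X, axis=0), X)
    for _ in range(max_iter):
        # Step ii: SVD of the completed matrix (column-centered here by assumption)
        mu = filled.mean(axis=0)
        U, S, Vt = np.linalg.svd(filled - mu, full_matrices=False)
        # Step iii: reconstruct with the first `rank` components
        approx = (U[:, :rank] * S[:rank]) @ Vt[:rank] + mu
        # Step iv: overwrite only the originally missing entries
        change = np.sqrt(np.mean((approx[missing] - filled[missing]) ** 2))
        filled[missing] = approx[missing]
        # Step v: stop once the imputed values no longer move
        if change < tol:
            break
    return filled


rng = np.random.default_rng(2)
truth = rng.normal(size=(60, 3)) @ rng.normal(size=(3, 20)) + 0.05 * rng.normal(size=(60, 20))
mask = rng.random(truth.shape) < 0.10            # 10% of entries removed at random
observed = np.where(mask, np.nan, truth)

svd_filled = iterative_svd_impute(observed, rank=3)
mean_filled = np.where(mask, np.nanmean(observed, axis=0), observed)
rmse = lambda est: float(np.sqrt(np.mean((est[mask] - truth[mask]) ** 2)))
print("RMSE, iterative SVD:", rmse(svd_filled))
print("RMSE, column means: ", rmse(mean_filled))
```

On this simulated low-rank matrix the iterative reconstruction recovers the held-out entries far better than column means, which is the behaviour the protocol relies on; data missing not at random may behave differently.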

Protocol 3.2: Protocol for Evaluating Preprocessing Impact

Aim: To empirically determine the optimal preprocessing pipeline for a given dataset. Materials: Dataset, computational environment. Procedure:

- Design a factorial experiment combining 2-3 imputation methods and 3-4 scaling methods.

- For each combination, perform PCA and calculate: a. Total Variance Explained by the first 5 PCs. b. Cluster Cohesion/Separation using a known sample class (e.g., disease vs. control) via Silhouette score. c. Technical Noise Assessment: Correlation of top PC loadings with known technical batches (e.g., processing date). Lower correlation is better.

- Rank pipelines based on a composite score (e.g., maximizing Silhouette score while minimizing batch correlation).

- Select the top-performing pipeline for all downstream analysis.
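A reduced version of this evaluation loop might look like the following sketch, which varies only the scaling method and scores each pipeline by cumulative variance and Silhouette score. The helper `evaluate_scaling`, the simulated dataset, and the choice to compute the Silhouette score on the first two PCs are assumptions for illustration; imputation methods and the batch-correlation metric from step c are left out for brevity.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.metrics import silhouette_score


def evaluate_scaling(X, labels, method, n_pcs=5):
    """Return (cumulative variance of the first n_pcs, silhouette on PC1-PC2)."""
    centered = X - X.mean(axis=0)
    sd = X.std(axis=0, ddof=1)
    factor = {"center": 1.0, "auto": sd, "pareto": np.sqrt(sd)}[method]
    pca = PCA(n_components=n_pcs)
    scores = pca.fit_transform(centered / factor)
    cum_var = float(pca.explained_variance_ratio_.sum())
    sil = float(silhouette_score(scores[:, :2], labels))
    return cum_var, sil


rng = np.random.default_rng(3)
labels = np.repeat([0, 1], 30)              # known sample class, e.g. control vs. disease
X = rng.normal(size=(60, 50))
X[:, :10] += 3.0 * labels[:, None]          # class signal in ten low-variance features
X[:, 40:] *= 50.0                           # ten high-variance, uninformative features

results = {m: evaluate_scaling(X, labels, m) for m in ("center", "auto", "pareto")}
for method, (cum_var, sil) in results.items():
    print(f"{method:>7}: cumulative variance (5 PCs) = {cum_var:.2f}, silhouette = {sil:.2f}")
best = max(results, key=lambda m: results[m][1])
print("highest silhouette:", best)
```

In this toy setup, centering alone lets the noisy high-variance features dominate the leading PCs, so a high cumulative variance can coexist with poor class separation. That is why the protocol ranks pipelines on a composite of metrics.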

Visual Workflows

PCA Preprocessing Workflow Decision Tree

Missing Data Imputation Decision Pathway

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software/Packages for Preprocessing in PCA

| Item | Function in Preprocessing | Typical Use Case | Example (R/Python) |

|---|---|---|---|

| Iterative SVD Imputer | Handles missing data through iterative low-rank approximation. | Gene expression, metabolomics data with MCAR/MAR patterns. | R: missMDA; Python: fancyimpute.IterativeSVD |

| Random Forest Imputer | Non-parametric missing value imputation using ensemble trees. | Complex, non-linear data with mixed data types. | R: missForest; Python: sklearn.impute.IterativeImputer (with a RandomForestRegressor estimator) |

| Robust Scaler | Centers and scales using median and IQR, resistant to outliers. | Datasets with significant outlier presence not meant for removal. | Python: sklearn.preprocessing.RobustScaler; R: custom function using median() and IQR() |

| Pareto Scaler | Hybrid scaling: divides by sqrt(standard deviation). | NMR-based metabolomics to balance variance and large intensity ranges. | R: paretoscale() (in-house); Python: custom function |

| Procrustes Analysis Tool | Quantifies similarity between PCA results from different preprocessing. | Validating stability and reliability of the chosen pipeline. | R: vegan::procrustes; Python: scipy.spatial.procrustes |

| Batch Effect Correction | Removes unwanted technical variance prior to PCA. | Multi-batch experimental data (e.g., from different sequencing runs). | R: sva::ComBat; Python: pycombat |

Application Notes

Principal Component Analysis (PCA) is a cornerstone technique for unsupervised feature extraction within research data, particularly in domains like omics analysis, high-content screening, and biomarker discovery. Its primary function is to reduce dimensionality while preserving maximal variance, enabling researchers to visualize complex datasets, identify latent structures, and mitigate multicollinearity prior to downstream modeling. In drug development, PCA is routinely applied to transcriptomic, proteomic, and metabolomic datasets to stratify patient samples, identify batch effects, and highlight key drivers of phenotypic variance.

Key Quantitative Outcomes from PCA Execution

Table 1: Comparative Output of PCA Implementations

| Metric | scikit-learn (fit_transform) | factoextra (get_pca) | Interpretation in Research Context |

|---|---|---|---|

| Principal Components | Synthetic variables (PC1, PC2...PCn) | Identical synthetic variables | Represent orthogonal axes of maximum variance. |

| Eigenvalues | pca.explained_variance_ | eig.val from get_eigenvalue() | Quantify variance captured by each PC; informs how many PCs to retain. |

| % Variance Explained | pca.explained_variance_ratio_ | eig.val$variance.percent | Critical for reporting; e.g., "PC1 and PC2 explain 72% of total variance." |

| Cumulative % Variance | Calculated via np.cumsum() | eig.val$cumulative.variance.percent | Determines sufficiency of reduced dimensions for analysis. |

| Loadings (Rotation) | pca.components_ (Rows = PCs, Cols = features) | var$coord (Coordinates of variables) | Identifies original features contributing most to each PC; key for biomarker hypothesis. |

| Individual Coordinates | pca.transform(X) (Scores) | ind$coord (Coordinates of individuals) | Projected data for clustering or outlier detection (e.g., aberrant drug response). |

Experimental Protocols

Protocol 1: Dimensionality Reduction for Transcriptomic Data using scikit-learn

Objective: To reduce dimensionality of a gene expression matrix (samples x genes) for visualization and exploratory cluster analysis.

Materials: Normalized gene expression matrix (e.g., TPM or log2(CPM+1) values), Python environment with scikit-learn≥1.3, pandas, numpy, and matplotlib.

Methodology:

- Standardization: Center and scale each gene (feature) to have zero mean and unit variance using StandardScaler. This is critical for PCA as it is variance-sensitive.

- PCA Initialization & Fitting: Instantiate PCA, optionally specifying the number of components (n_components). Fit to the scaled data.

- Variance Assessment: Extract and plot the explained variance ratio to determine the effective dimensionality.

- Biomarker Identification: Analyze loadings (pca.components_) for PCs of interest. Genes with extreme absolute loading values are primary drivers of that component's variance.
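The four steps of this protocol map onto a few scikit-learn calls. The sketch below runs them on a simulated expression matrix; the matrix, the planted "expression program" in genes 0-9, and the choice of 10 components are illustrative assumptions, and plotting is left out.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(4)
n_samples, n_genes = 60, 100
expr = rng.normal(size=(n_samples, n_genes))     # stand-in for log2(CPM+1) values
program = rng.normal(size=n_samples)             # hypothetical latent expression program
expr[:, :10] += 3.0 * program[:, None]           # genes 0-9 follow the program

# Standardization: zero mean, unit variance per gene
scaled = StandardScaler().fit_transform(expr)

# PCA initialization and fitting; scores are the samples in PC space
pca = PCA(n_components=10)
scores = pca.fit_transform(scaled)

# Variance assessment (fractions, not percentages)
var_ratio = pca.explained_variance_ratio_
cum_var = np.cumsum(var_ratio)

# Biomarker identification: genes with the largest absolute loadings on PC1
top_genes = np.argsort(np.abs(pca.components_[0]))[::-1][:5]

print("variance ratio of first 3 PCs:", np.round(var_ratio[:3], 3))
print("top PC1 gene indices:", top_genes)
```

Note that `explained_variance_ratio_` returns fractions between 0 and 1, so multiply by 100 when reporting percentages. In a real dataset the column indices would be mapped back to gene identifiers before interpretation.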

Protocol 2: Integrated Sample & Variable Analysis using factoextra in R

Objective: To perform a unified exploratory analysis, visualizing both sample projections and variable contributions in a pharmacogenomic dataset.

Materials: Processed and scaled pharmacogenomic response matrix (cell lines x compound descriptors), R environment with FactoMineR, factoextra, and ggplot2.

Methodology:

- Data Preparation & PCA Execution: Ensure data is scaled. Perform PCA using FactoMineR::PCA.

- Sample Stratification Visualization: Generate a PCA score plot colored by a known covariate (e.g., cell lineage) using fviz_pca_ind. Assess for natural clustering or outliers.

- Variable Contribution Analysis: Create a correlation circle plot to identify which compound features contribute most to the principal dimensions using fviz_pca_var.

- Integrated Biplot: Simultaneously visualize the positions of samples and the directions/variable loadings to form hypotheses about which features drive sample separation.

Visualization of PCA Workflow in Research Data Analysis

PCA Analysis Workflow for Research Data

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for PCA in Research

| Item | Function in PCA Analysis | Example/Note |

|---|---|---|

| scikit-learn (Python) | Provides the PCA class for efficient computation, fitting, and transformation of data. | sklearn.decomposition.PCA; essential for integration into machine learning pipelines. |

| FactoMineR & factoextra (R) | FactoMineR performs multivariate analysis; factoextra provides publication-ready visualization. | Streamlines creation of scree plots, variable contribution plots, and biplots. |

| StandardScaler / scale() | Preprocessing reagent to standardize features (mean=0, variance=1) before PCA. | Critical when features are on different scales (e.g., gene expression vs. IC50 values). |

| Jupyter Notebook / RMarkdown | Environment for reproducible execution, documentation, and presentation of the PCA analysis. | Ensures the analytical protocol is transparent and reusable. |

| Matplotlib / ggplot2 | Base plotting libraries for customizing visual outputs beyond default functions. | Needed for fine-tuning plots to meet specific journal formatting guidelines. |

| Pandas (Python) / data.table (R) | Data manipulation libraries for structuring the input matrix and annotating samples/variables. | Enables efficient merging of PCA results with sample metadata for annotation. |

Within a thesis on Principal Component Analysis (PCA) for unsupervised feature extraction in research data, interpreting scree plots and biplots is the critical step that transforms mathematical outputs into biological or chemical insights. These visualizations guide the decision on the number of principal components (PCs) to retain and reveal the relationships between variables and observations, driving hypothesis generation in drug discovery and development.

Table 1: Key Metrics for Interpreting PCA Outputs

| Metric | Source Plot | Interpretation | Typical Threshold/Goal in Research |

|---|---|---|---|

| Eigenvalue | Scree Plot | Variance explained by each PC. | Retain PCs with eigenvalue > 1 (Kaiser criterion) or until cumulative variance >70-80%. |

| Percentage of Variance | Scree Plot (Cumulative) | Proportion of total dataset information captured. | Aim for a "knee" or elbow point; sufficient explanatory power for downstream analysis. |

| PC Loadings | Biplot (Arrows) | Correlation between original variables and PCs. | Absolute loading > 0.3-0.5 indicates a meaningful contribution. |

| Cos2 (Quality of Representation) | Supplementary Biplot Data | How well a variable/observation is represented by PCs. | Cos2 > 0.5 indicates good representation on the factor map. |

| Contribution (%) | Supplementary Data | Variable's contribution to a PC's construction. | Above average contribution (100/n_variables %) is significant. |

Experimental Protocol: Generating and Interpreting PCA Visuals

Protocol 1: Generating and Analyzing a Scree Plot

Objective: To determine the optimal number of principal components to retain from a high-dimensional dataset (e.g., gene expression, compound screens). Materials: Normalized and scaled research dataset, statistical software (R, Python, SIMCA).

Procedure:

- Perform PCA: Execute PCA on the centered (and often scaled) data matrix.

- Extract Eigenvalues: Obtain the eigenvalues for each principal component from the covariance/correlation matrix.

- Plot Scree Plot: Create a line plot with PC number on the x-axis and corresponding eigenvalue (or % variance) on the y-axis.

- Identify the "Elbow": Visually locate the point where the slope of the line markedly decreases (the "knee"). Components beyond this point each explain a diminishing share of the variance.

- Apply Parallel Analysis (Optional but Recommended): Generate a scree plot from a randomized version of your data. Retain PCs whose eigenvalues exceed those from the random data.

- Decision: Retain all PCs before the elbow, ensuring they meet cumulative variance goals for your thesis analysis.
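The parallel analysis step can be sketched as a permutation procedure: shuffle each feature independently to break correlations, recompute the eigenvalues, and keep the leading PCs whose observed eigenvalue beats the shuffled ones. The version below is a minimal sketch under assumptions not fixed by the protocol: it works on the correlation matrix, uses the 95th percentile of 200 permutations as the baseline, and is run on simulated data with two planted factors. The function name is hypothetical.

```python
import numpy as np


def parallel_analysis(X, n_perm=200, percentile=95, seed=0):
    """Count leading PCs whose correlation-matrix eigenvalue beats permuted data."""
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    eig = np.linalg.eigvalsh(np.corrcoef(X, rowvar=False))[::-1]
    null = np.empty((n_perm, X.shape[1]))
    for b in range(n_perm):
        # Shuffle each column independently to destroy between-feature correlation
        shuffled = np.column_stack([rng.permutation(col) for col in X.T])
        null[b] = np.linalg.eigvalsh(np.corrcoef(shuffled, rowvar=False))[::-1]
    threshold = np.percentile(null, percentile, axis=0)
    exceeds = eig > threshold
    # Retain PCs up to (not including) the first one that fails the comparison
    n_retain = int(np.argmin(exceeds)) if not exceeds.all() else exceeds.size
    return n_retain, eig, threshold


rng = np.random.default_rng(5)
factors = rng.normal(size=(200, 2))          # two hypothetical latent factors
loadings = np.zeros((2, 12))
loadings[0, :6] = 1.0                        # factor 1 drives features 0-5
loadings[1, 6:] = 1.0                        # factor 2 drives features 6-11
X = factors @ loadings + rng.normal(size=(200, 12))

n_retain, eig, threshold = parallel_analysis(X)
print("observed eigenvalues:", np.round(eig[:4], 2))
print("permutation baseline:", np.round(threshold[:4], 2))
print("components to retain:", n_retain)
```

Plotting `eig` and `threshold` on the same scree plot gives the visual comparison described in the protocol.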

Protocol 2: Generating and Interpreting a Biplot

Objective: To visualize both observations (samples) and variables (features) in the reduced PC space to identify patterns, clusters, and correlations. Materials: PCA results (scores and loadings), visualization software (ggplot2, matplotlib).

Procedure:

- Select PCs: Choose the two (or sometimes three) PCs for plotting, typically PC1 vs. PC2.

- Plot Scores (Observations): Scatter plot the PC scores for each sample/observation. Color/shape by experimental groups (e.g., control vs. treated).

- Overlay Loadings (Variables): Plot the loading vectors for each original variable as arrows from the origin (0,0). The coordinates of each arrowhead are its loadings on the two PCs.

- Interpret Proximity:

- Observations close together are similar in their variable profiles.

- Variable arrows pointing in the same direction are positively correlated.

- Arrows in opposite directions are negatively correlated.

- The projection of an observation onto a variable arrow approximates the value of that observation for that variable.

- Analyze: Identify which variables drive the separation of observed sample clusters. Formulate biological hypotheses based on these driving variables.
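The interpretation rules above can be checked numerically before any plotting. The sketch below builds the two ingredients of a biplot (sample scores and variable arrows) for four simulated variables and measures the angle between arrows. The variables A-D and their relationships are made up for illustration, and the arrows are scaled as variable-PC correlations, which is one common biplot convention among several.

```python
import numpy as np

rng = np.random.default_rng(6)
f, g = rng.normal(size=(2, 100))             # two hypothetical latent drivers
X = np.column_stack([
    f + 0.3 * rng.normal(size=100),          # A
    f + 0.3 * rng.normal(size=100),          # B: rises with A
    -f + 0.3 * rng.normal(size=100),         # C: falls as A rises
    g + 0.3 * rng.normal(size=100),          # D: unrelated to A
])

Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
U, S, Vt = np.linalg.svd(Z, full_matrices=False)
scores = U[:, :2] * S[:2]                            # points in the biplot
arrows = Vt[:2].T * S[:2] / np.sqrt(len(Z) - 1)      # arrowheads (variable-PC correlations)


def cos_angle(i, j):
    """Cosine of the angle between the arrows of variables i and j."""
    return float(arrows[i] @ arrows[j] / (np.linalg.norm(arrows[i]) * np.linalg.norm(arrows[j])))


print("A vs B:", round(cos_angle(0, 1), 2))
print("A vs C:", round(cos_angle(0, 2), 2))
print("A vs D:", round(cos_angle(0, 3), 2))

# Projecting scores back through the loadings approximates the standardized values
approx = scores @ Vt[:2]
recon_corr = float(np.corrcoef(approx[:, 0], Z[:, 0])[0, 1])
print("correlation between reconstructed and actual A:", round(recon_corr, 2))
```

These angle rules are only reliable for variables that are well represented on the plotted PCs; short arrows should not be over-interpreted.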

Visualizations: PCA Workflow and Biplot Interpretation

Table 2: Research Reagent Solutions for PCA-Based Analysis

| Item/Resource | Function in PCA Workflow | Example/Note |

|---|---|---|

| Data Normalization Suite (e.g., ComBat, R limma) | Removes technical batch effects before PCA, ensuring biological variation is the primary signal. | Critical for multi-batch genomic or proteomic data. |

| Feature Scaling Module (Auto-scaling, Pareto) | Standardizes variables to mean=0, variance=1 (or other scales), preventing high-variance features from dominating PCs. | Pareto scaling (mean-center/√SD) is a common choice in metabolomics. |

| Statistical Software with PCA Suite (R FactoMineR, Python scikit-learn) | Provides robust algorithms for PCA computation, validation, and generation of scree plots, biplots, and contribution tables. | FactoMineR offers extensive supplementary metrics for interpretation. |

| Parallel Analysis Script | Generates random data eigenvalues to provide a statistical baseline for the scree plot "elbow" decision. | Available in R (psych package) or as custom code; superior to Kaiser criterion for complex data. |

| High-Contrast Color Palette (Colorblind-Friendly) | Ensures clear differentiation of sample groups and variable vectors in biplots for publication and presentation. | Use palettes from viridis or RColorBrewer packages. |

| Bootstrapping/Stability Testing Module | Assesses the robustness of PCA loadings by resampling data; confirms that identified drivers are not artifacts. | Implemented via permutation tests in software like SIMCA. |

Within the broader thesis on unsupervised feature extraction, Principal Component Analysis (PCA) serves as a foundational technique for dimensional reduction and noise filtering. It transforms a set of correlated original variables into a new set of uncorrelated variables, the Principal Components (PCs), which are linear combinations of the original data. This transformation is critical in research data science for visualizing high-dimensional data, mitigating multicollinearity, and enhancing the performance of downstream analytical models.

Core Protocol: Standardized PCA for Omics Data Analysis

This protocol details the application of PCA to a gene expression matrix, a common scenario in drug discovery for identifying latent patterns of co-expression.

Materials:

- Input Data: An m x n matrix, where m is the number of samples (e.g., cell lines, patients) and n is the number of features (e.g., gene expression values).

- Software: Python (scikit-learn, pandas, numpy) or R (stats, factoextra).

Procedure:

- Data Preprocessing: Log-transform and normalize the raw expression matrix (e.g., TPM, FPKM) to stabilize variance.

- Standardization: Center each feature to have a mean of zero and scale to have a standard deviation of one using StandardScaler. This is crucial when features are on different scales.

- Covariance Matrix Computation: Calculate the n x n covariance matrix of the standardized data, which captures the relationships between all pairs of features.

- Eigendecomposition: Perform eigendecomposition on the covariance matrix to obtain eigenvalues and corresponding eigenvectors.

- Component Selection: Sort eigenvalues in descending order. The eigenvectors (loadings) define the direction of the PCs, and the eigenvalues define their magnitude (variance explained).

- Projection: Transform the original standardized data onto the selected eigenvectors to obtain the new coordinates, the principal component scores.

Key Calculations:

- Variance Explained by PC_i: (λ_i / Σ(λ)) * 100%

- Cumulative Variance up to PC_i: (Σ(λ_1 to λ_i) / Σ(λ)) * 100%

- Score of sample k on PC_i: PC_i_k = Σ_j (Loading_ij * Standardized_Feature_jk), summed over all features j.
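The procedure and key calculations above can be traced step by step with NumPy. The sketch below is for illustration only: the toy matrix and the choice of k = 2 retained components are assumptions, and in practice a library routine such as scikit-learn's PCA (which uses SVD) is usually preferable for numerical stability.

```python
import numpy as np

rng = np.random.default_rng(7)
m, n = 50, 6                                             # m samples, n features
X = rng.normal(size=(m, n)) @ rng.normal(size=(n, n))    # correlated toy features

# Standardization: mean 0, standard deviation 1 per feature
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# n x n covariance matrix of the standardized data
C = np.cov(Z, rowvar=False)

# Eigendecomposition (eigh, because C is symmetric)
eigvals, eigvecs = np.linalg.eigh(C)

# Component selection: sort eigenvalues (and their eigenvectors) in descending order
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Key calculations: individual and cumulative variance explained (%)
var_pct = eigvals / eigvals.sum() * 100
cum_pct = np.cumsum(var_pct)

# Projection: score of each sample on the first k PCs
k = 2
scores = Z @ eigvecs[:, :k]

print("variance explained (%):", np.round(var_pct, 1))
print("cumulative (%):        ", np.round(cum_pct, 1))
```

Because the features are standardized, the eigenvalues sum to the number of features, and the variance of each score column equals its eigenvalue.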

Data & Results: Variance Explained in a Cytokine Profiling Study

A recent study (2023) applied PCA to a panel of 25 cytokines measured in plasma samples from 120 patients across three disease subtypes. The goal was to reduce dimensionality for patient stratification.

Table 1: Variance Explained by Top 5 Principal Components

| Principal Component | Eigenvalue | Individual Variance Explained (%) | Cumulative Variance Explained (%) |

|---|---|---|---|

| PC1 | 9.85 | 39.4% | 39.4% |

| PC2 | 4.20 | 16.8% | 56.2% |

| PC3 | 2.10 | 8.4% | 64.6% |

| PC4 | 1.55 | 6.2% | 70.8% |

| PC5 | 1.30 | 5.2% | 76.0% |

Interpretation: The first two PCs capture 56.2% of the total variance in the original 25-dimensional data, enabling a meaningful 2D visualization. PC1 is strongly weighted by pro-inflammatory cytokines (e.g., IL-6, TNF-α), while PC2 loads on chemokines (e.g., MCP-1, IL-8).
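The percentages in Table 1 follow directly from the eigenvalues once the total variance is known. The short check below assumes the 25 cytokines were standardized, so that the total variance equals 25. This is an inference from the table's own numbers, not something the study description states.

```python
import numpy as np

eigenvalues = np.array([9.85, 4.20, 2.10, 1.55, 1.30])   # Table 1, PC1-PC5
total_variance = 25    # assumption: 25 standardized cytokines, variance 1 each

individual_pct = eigenvalues / total_variance * 100
cumulative_pct = np.cumsum(individual_pct)

for i, (ind, cum) in enumerate(zip(individual_pct, cumulative_pct), start=1):
    print(f"PC{i}: {ind:.1f}% individual, {cum:.1f}% cumulative")
```

Under the same assumption, all five listed components have eigenvalues above 1 and would be retained by the Kaiser criterion.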

Visualization of the PCA Workflow

Diagram Title: PCA Dimensional Reduction Workflow (6 Steps)

Diagram Title: Four Key PCA Plots for Interpretation

The Scientist's Toolkit: Essential Reagents & Solutions

Table 2: Research Reagent Solutions for PCA-Preparatory Assays

| Item | Function in Context |

|---|---|

| Luminex Multiplex Assay Panels | Enables simultaneous quantification of dozens of proteins (e.g., cytokines, phosphoproteins) from a single small-volume sample, generating the high-dimensional data ideal for PCA. |

| Nextera XT DNA Library Prep Kit | Prepares sequencing-ready libraries from fragmented DNA/RNA for next-generation sequencing (NGS), producing the gene expression or variant count matrices used as PCA input. |

| CellTiter-Glo Luminescent Viability Assay | Measures cell viability based on ATP content. Results from dose-response screens can be analyzed via PCA to separate compound efficacy from general cytotoxicity. |

| Seahorse XF Cell Mito Stress Test Kit | Profiles cellular metabolic function (OCR, ECAR). PCA can reduce these multiparametric kinetic measurements to key metabolic phenotypes for drug profiling. |

| CETSA (Cellular Thermal Shift Assay) Reagents | Detects drug-target engagement in cells by monitoring protein thermal stability shifts. PCA can analyze differential scanning fluorimetry curves across multiple targets. |

| Compound Management/Library | Curated collections of small molecules or biologics used in HTS. PCA of screening results identifies compounds with similar mechanisms of action based on response patterns. |

Within the broader thesis on Principal Component Analysis (PCA) for unsupervised feature extraction in research data, this case study demonstrates its pivotal role in analyzing high-dimensional transcriptomic datasets. The primary challenge in such data is the "curse of dimensionality," where tens of thousands of gene expression measurements (features) per sample obscure underlying biological signals. PCA addresses this by identifying orthogonal axes of maximum variance, enabling the projection of data into a lower-dimensional space where phenotypic separation—critical for identifying disease subtypes, biomarkers, and therapeutic targets—becomes visually and computationally tractable. This protocol details the application of PCA to separate distinct phenotypes from RNA-seq data.

Application Notes: Key Principles and Outcomes

PCA transforms correlated gene expression variables into a smaller set of uncorrelated principal components (PCs). The first few PCs often capture the majority of biological variance, including systematic differences between phenotypes. Successful separation in a 2D or 3D PCA score plot indicates that global gene expression patterns are sufficiently distinct between sample groups, justifying further targeted analysis.

Table 1: Typical Variance Explained by PCs in Transcriptomic Studies

| Principal Component | % Variance Explained (Range) | Typical Cumulative % |

|---|---|---|

| PC1 | 20-50% | 20-50% |

| PC2 | 10-25% | 30-75% |

| PC3 | 5-15% | 35-90% |

| PC4+ | <5% each | Up to 100% |

Table 2: Impact of Data Pre-processing on Phenotype Separation

| Pre-processing Step | Primary Function | Effect on PCA Separation |

|---|---|---|

| Log2 Transformation | Stabilize variance across expression levels | Reduces skew, improves separation |

| Z-score Standardization (per gene) | Center and scale each gene to mean=0, variance=1 | Prevents high-expression genes from dominating PCs |

| Batch Effect Correction (e.g., ComBat) | Remove non-biological technical variation | Enhances separation by biological phenotype |

| Low-expression Filtering | Remove genes with near-zero counts | Reduces noise, focuses on informative features |

Experimental Protocols

Protocol 1: RNA-seq Data Processing Prior to PCA

- Quality Control & Alignment: Process raw FASTQ files using a pipeline like nf-core/rnaseq. Assess quality with FastQC. Align reads to a reference genome (e.g., GRCh38) using STAR.

- Quantification: Generate gene-level read counts using featureCounts or the STAR built-in option.

- Filtering: Remove genes with fewer than 10 reads in at least 90% of samples.

- Normalization: Perform counts per million (CPM) or library size normalization (e.g., using DESeq2's vst or rlog functions, which include variance stabilization).

- Batch Correction (if needed): Apply a method like sva::ComBat to known batch variables (e.g., sequencing run).
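The filtering and normalization steps can be sketched on a count matrix as below. This is a simplified stand-in: it applies the stated low-expression rule and a plain log2(CPM+1) transform, not DESeq2's vst or rlog (which are R functions with their own variance-stabilizing models). The function name and the simulated counts are hypothetical.

```python
import numpy as np


def filter_and_log_cpm(counts, min_reads=10, max_low_fraction=0.9):
    """Drop low-expression genes, then return log2(CPM + 1) and the keep mask.

    `counts` has genes as rows and samples as columns.
    """
    counts = np.asarray(counts, dtype=float)
    # Fraction of samples in which each gene has fewer than `min_reads` reads
    low_fraction = (counts < min_reads).mean(axis=1)
    # Remove genes that are low in at least `max_low_fraction` of samples
    keep = low_fraction < max_low_fraction
    kept = counts[keep]
    # Counts per million, using library sizes of the retained genes
    cpm = kept / kept.sum(axis=0) * 1e6
    return np.log2(cpm + 1), keep


rng = np.random.default_rng(8)
counts = rng.poisson(lam=200, size=(1000, 12))            # 1000 genes x 12 samples
counts[:100] = rng.poisson(lam=0.5, size=(100, 12))       # hypothetical silent genes
log_cpm, keep = filter_and_log_cpm(counts)
print("genes kept:", int(keep.sum()), "of", counts.shape[0])
print("log-CPM matrix shape:", log_cpm.shape)
```

The returned matrix keeps genes as rows, so it still needs to be transposed to samples x genes before PCA.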

Protocol 2: PCA Execution and Visualization for Phenotype Assessment

Input: Normalized, filtered gene expression matrix (genes as rows, samples as columns). Software: R (stats, ggplot2) or Python (scikit-learn, matplotlib).

- Data Scaling: Transpose the matrix so samples are rows and genes are columns. Center the data by subtracting the mean expression of each gene. Optionally, scale each gene to unit variance.

- PCA Calculation: Perform singular value decomposition (SVD) on the scaled matrix using prcomp() in R or sklearn.decomposition.PCA in Python.

- Variance Assessment: Extract the proportion of variance explained by each PC from the PCA result object. Create a scree plot.

- Score Plot Generation: Plot PC1 vs. PC2 (and PC3 if needed). Color samples by their known phenotype (e.g., Disease vs. Control).

- Interpretation: Assess the degree of visual separation between phenotypic groups. Overlap may indicate subtle signatures requiring supervised methods post-PCA.

PCA Workflow for Transcriptomic Data

PCA Logic for Phenotype Separation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Transcriptomic PCA Analysis

| Item | Function/Description | Example Product/Software |

|---|---|---|

| RNA Extraction Kit | High-quality, intact RNA is foundational for accurate expression quantification. | Qiagen RNeasy Kit, TRIzol Reagent |

| RNA-seq Library Prep Kit | Prepares RNA samples for sequencing by adding adapters. | Illumina TruSeq Stranded mRNA Kit |

| Sequencing Platform | Generates raw read data (FASTQ files). | Illumina NovaSeq 6000 |

| Alignment & Quantification Software | Maps reads to genome and generates count matrix. | STAR aligner, featureCounts |

| Statistical Programming Environment | Provides libraries for PCA and data visualization. | R (stats, ggplot2) or Python (scikit-learn, pandas) |

| Normalization & Batch Correction Package | Critical pre-processing to remove technical artifacts. | R: DESeq2, sva. Python: scanpy |

| High-Performance Computing (HPC) Resources | Essential for processing large RNA-seq datasets. | Local cluster or cloud (AWS, Google Cloud) |

Solving Common PCA Problems: From Overfitting to Interpretability Challenges

Within the broader thesis on Principal Component Analysis (PCA) for unsupervised feature extraction in research data, the choice between a correlation and a covariance matrix is a fundamental scaling dilemma. This decision critically influences the direction of the principal components, the variance explained, and the interpretation of results, especially in domains like biomarker discovery and high-throughput 'omics' data analysis in drug development.

Core Theoretical Framework

PCA operates by eigen-decomposition of a matrix summarizing variable relationships. The covariance matrix is sensitive to the scales of the variables, while the correlation matrix is scale-invariant, as it standardizes each variable to unit variance.

Key Quantitative Comparison

| Aspect | Covariance Matrix | Correlation Matrix |

|---|---|---|

| Data Scaling | Uses original units. | Standardizes variables (mean=0, std dev=1). |

| Sensitivity to Scale | High. Variables with larger magnitudes dominate. | None. All variables contribute equally. |

| Diagonal Elements | Variances of each variable. | Always 1. |

| Off-Diagonal Elements | Covariance between pairs. | Pearson correlation coefficients (-1 to +1). |

| Use Case | Variables are on comparable scales (e.g., gene expression from same platform). | Variables are on different scales (e.g., combining gene expression, potency (nM), molecular weight). |

| Resulting PCs | Maximize variance in original data space. | Maximize variance in standardized (unitless) space; each PC is a weighted mix of the standardized variables. |

Application Notes for Research Data

Note 1: When to Use Covariance Matrix

- Homogeneous Data: When all features are measured in the same units and scale is scientifically meaningful (e.g., pixel intensity from a uniform image array, concentration series in nM).

- Preserving Magnitude: When the absolute variance of a variable is relevant to the research question. A high-variance feature will directly influence the first PC.

- Protocol Example: PCA on a matrix of IC50 values (nM) for 100 compounds across 10 related kinase targets. The covariance matrix is appropriate as scale (potency) is directly comparable and chemically meaningful.

Note 2: When to Use Correlation Matrix

- Heterogeneous Data: When features are on different measurement scales (e.g., combining gene counts, patient age, blood pressure in mmHg, and assay readout in RFU). This is common in integrative biomarker studies.

- Avoiding Arbitrary Scale Influence: To prevent variables with numerically high values (e.g., expression of a highly abundant protein) from dominating purely due to unit size.

- Focus on Patterns, Not Magnitude: When the research question concerns the correlation structure between variables, not their absolute variances.

- Protocol Example: PCA for feature extraction from a multi-omics dataset merging RNA-Seq counts, metabolite abundances (peak areas), and clinical lab values (various units).
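The practical difference between the two matrices shows up quickly on a small example. The sketch below uses three simulated variables (two correlated, unit-scale measurements and one unrelated variable with a much larger numeric range); the data and the helper `pca_eig` are assumptions for illustration. It also confirms that correlation-matrix PCA is the same as covariance-matrix PCA on standardized data.

```python
import numpy as np

rng = np.random.default_rng(9)
n = 200
shared = rng.normal(size=n)
X = np.column_stack([
    shared + 0.5 * rng.normal(size=n),     # e.g. a log-expression value
    shared + 0.5 * rng.normal(size=n),     # a second, correlated log-expression value
    1000.0 * rng.normal(size=n),           # e.g. a potency in nM: unrelated but large-scale
])


def pca_eig(matrix):
    """Eigenvalues and eigenvectors of a symmetric matrix, sorted descending."""
    vals, vecs = np.linalg.eigh(matrix)
    order = np.argsort(vals)[::-1]
    return vals[order], vecs[:, order]


cov_vals, cov_vecs = pca_eig(np.cov(X, rowvar=False))
cor_vals, cor_vecs = pca_eig(np.corrcoef(X, rowvar=False))

# Covariance PCA on z-scored data reproduces the correlation-matrix eigenvalues
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
z_vals, _ = pca_eig(np.cov(Z, rowvar=False))

print("covariance PC1 loadings: ", np.round(np.abs(cov_vecs[:, 0]), 3))
print("correlation PC1 loadings:", np.round(np.abs(cor_vecs[:, 0]), 3))
```

With the covariance matrix, PC1 is essentially the large-scale variable alone; with the correlation matrix, PC1 instead captures the shared signal in the two correlated variables.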

Experimental Protocols

Protocol 1: PCA Dimensionality Reduction for High-Throughput Screening (HTS) Data

Objective: Reduce dimensionality of a compound profiling matrix (e.g., 5000 compounds x 150 cell-based assay features) to identify latent response patterns.

Materials: HTS data matrix (cleaned, with missing values imputed), computational environment (e.g., Python/R).

Procedure:

- Data Preparation: Log-transform skewed assay readouts. Inspect feature scales.

- Matrix Choice Decision: Since all features are likely from similar assay technologies (e.g., fluorescence intensity), use the covariance matrix if intensity ranges are comparable. If features include derived ratios or normalized values with different ranges, use the correlation matrix.

- Center Data: Subtract the column mean from each value (essential for both covariance and correlation PCA).

- Compute Matrix & Decompose: Calculate the chosen matrix and perform eigen-decomposition.

- Component Selection: Plot scree plot (eigenvalues vs. component number). Retain components explaining >80-90% cumulative variance or using the elbow method.

- Interpretation: Analyze loadings (eigenvectors) of top PCs to identify which original assay features contribute most to each latent pattern.

Protocol 2: Integrative Biomarker Discovery from Multi-Source Data

Objective: Extract composite features from combined genomic, proteomic, and clinical data to stratify patient response.

Materials: Normalized genomic data, normalized proteomic data, clinical variables table.

Procedure:

- Data Integration & Cleaning: Merge datasets by patient ID. Apply appropriate normalization per platform (e.g., VST for RNA-Seq, batch correction for proteomics). Address missing data.

- Mandatory Scaling: Due to heterogeneous units (e.g., FPKM, ppm, mg/dL, years), always use the correlation matrix for PCA.

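- Center & Standardize: Center data (mean=0) and scale (standard deviation=1) for each variable. PCA on the correlation matrix is equivalent to PCA on the covariance matrix of the standardized data, so this step is implicit whenever the correlation matrix is computed directly.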

- Dimensionality Reduction: Perform PCA on the correlation matrix.

- Validation: Use k-fold cross-validation to assess stability of principal component loadings.

- Downstream Analysis: Use top PCs as inputs for survival analysis, clustering, or regression models to predict treatment outcome.
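A minimal sketch of the scaling, decomposition, and validation steps of Protocol 2, using scikit-learn. The data are synthetic, and the helpers `fit_correlation_pca` and `loading_stability` are hypothetical names for this example. The stability check refits PCA on each training fold and compares the loadings to the full-data loadings by absolute cosine similarity, which is one simple way to read "stability of loadings"; other definitions are possible.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.model_selection import KFold
from sklearn.preprocessing import StandardScaler

def fit_correlation_pca(X, n_components):
    """Standardize each variable, then fit PCA (equivalent to correlation PCA)."""
    scaler = StandardScaler().fit(X)
    pca = PCA(n_components=n_components).fit(scaler.transform(X))
    return scaler, pca

def loading_stability(X, n_components=2, n_splits=5, seed=0):
    """Absolute cosine similarity between full-data and per-fold loadings."""
    _, ref = fit_correlation_pca(X, n_components)
    folds = KFold(n_splits=n_splits, shuffle=True, random_state=seed)
    sims = []
    for train_idx, _ in folds.split(X):
        _, pca = fit_correlation_pca(X[train_idx], n_components)
        # Rows of components_ have unit length; abs() removes the arbitrary sign.
        sims.append(np.abs(np.sum(ref.components_ * pca.components_, axis=1)))
    return np.array(sims)                      # shape (n_splits, n_components)

# Synthetic patients x variables table with very different units (hypothetical)
rng = np.random.default_rng(1)
factor = rng.normal(size=(200, 1))
X = factor @ np.ones((1, 6)) + 0.5 * rng.normal(size=(200, 6))
X = X * np.array([1e3, 1.0, 1e-2, 50.0, 5.0, 0.1])

scaler, pca = fit_correlation_pca(X, n_components=1)
scores = pca.transform(scaler.transform(X))    # composite feature for downstream models
sims = loading_stability(X, n_components=1)
print("explained variance ratio:", pca.explained_variance_ratio_)
print("per-fold loading similarity:", sims.ravel())
```

Similarities close to 1 in every fold suggest the component is not an artifact of a particular subsample. When several components have nearly equal eigenvalues, their order can swap between folds, so a low similarity there should be interpreted with care.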

Visualization of Decision Logic and Workflow

Title: PCA Matrix Selection Decision Flowchart

Title: Correlation PCA Workflow for Heterogeneous Data

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Analysis |

|---|---|

| R stats package / Python scikit-learn | Core libraries providing prcomp(), PCA(), and StandardScaler functions for matrix computation, scaling, and decomposition. |

| Feature Scaling Algorithm (e.g., Z-score) | Standardizes each feature by removing the mean and scaling to unit variance, prerequisite for correlation matrix PCA. |

| Robust Scaler (e.g., based on median/IQR) | Alternative scaling method for datasets with outliers, reducing their influence compared to Z-score. |

| Eigenvalue Stability Assessment Script | Custom code or package (e.g., bootPCA) for cross-validation to ensure extracted components are not artifacts of sampling. |

| Visualization Suite (e.g., ggplot2, matplotlib) | For generating scree plots, biplots, and loading plots to interpret and communicate PCA results. |

| High-Performance Computing (HPC) Cluster Access | For eigen-decomposition of very large matrices (e.g., >10,000 x 10,000) common in genomics and proteomics. |

How Many Components to Keep? Criteria Beyond the Elbow Method

Within the broader thesis on Principal Component Analysis (PCA) for unsupervised feature extraction in research data, determining the optimal number of components is a critical step. While the scree plot elbow method is widely known, this protocol details advanced, robust criteria for researchers, scientists, and drug development professionals.

Quantitative Criteria for Component Retention

| Criterion | Description | Typical Threshold | Primary Use Case |

|---|---|---|---|

| Kaiser-Guttman | Retain PCs with eigenvalues > mean eigenvalue. | Eigenvalue > 1.0 (for standardized data) | Initial, rapid screening. |

| Variance Explained | Retain PCs to achieve a target cumulative variance. | Cumulative Variance ≥ 80-95% | Goal-oriented, application-dependent. |

| Parallel Analysis | Retain PCs with eigenvalues > those from random data. | Observed eigenvalue > 95th percentile (or mean) of random-data eigenvalues | Robust against sampling bias; often regarded as the most reliable criterion. |

| Broken Stick Model | Retain PCs whose share of variance exceeds the share expected when total variance is split at random (broken-stick distribution). | Observed variance > Broken Stick variance | Ecological & bioinformatic data. |

| Mean Absolute Error (MAE) of Reconstruction | Minimize error between original & reconstructed data. | Knee (point of diminishing returns) of the error vs. component-number curve | Data compression & denoising. |

| Log-Eigenvalue Diagram (LEV) | Find break point in plot of log(eigenvalue) vs. component number. | Visual inflection point | Identifying distinct signal vs. noise separation. |
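Two of the criteria in the table reduce to short calculations on the eigenvalue spectrum. The sketch below shows the Kaiser-Guttman rule and the broken-stick model, where the expected share of the k-th of p components is b_k = (1/p) Σ_{i=k}^{p} 1/i. The eigenvalues are made-up illustrative numbers, the function names are hypothetical, and counting only the leading run of components that pass is a convention assumed here.

```python
import numpy as np

def broken_stick(p):
    """Expected variance shares b_k = (1/p) * sum_{i=k}^{p} 1/i, for k = 1..p."""
    inv = 1.0 / np.arange(1, p + 1)
    return np.cumsum(inv[::-1])[::-1] / p

def leading_run(mask):
    """Number of leading True values (counting stops at the first False)."""
    mask = np.asarray(mask, dtype=bool)
    return int(mask.size if mask.all() else np.argmin(mask))

def kaiser_guttman(eigvals):
    """Count leading components whose eigenvalue exceeds the mean eigenvalue."""
    return leading_run(eigvals > eigvals.mean())

def broken_stick_count(eigvals):
    """Count leading components whose variance share exceeds the broken stick."""
    share = eigvals / eigvals.sum()
    return leading_run(share > broken_stick(len(eigvals)))

# Illustrative eigenvalues of a correlation matrix with p = 8 (they sum to 8)
eigvals = np.array([3.2, 1.9, 1.1, 0.7, 0.5, 0.3, 0.2, 0.1])
print("Kaiser-Guttman:", kaiser_guttman(eigvals))
print("Broken stick:  ", broken_stick_count(eigvals))
```

On this spectrum the two rules disagree (three components versus two), which is a common reason to consult more than one criterion before fixing the number of components.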

Detailed Experimental Protocols

Protocol 1: Parallel Analysis for PCA Component Selection

Objective: To determine the number of principal components to retain by comparing observed eigenvalues to those derived from uncorrelated random data.

Materials: Dataset matrix (n observations × p variables), statistical software (R, Python).

Procedure:

- Standardize Data: Center and scale the original data matrix to have column-wise mean=0 and variance=1.

- Perform PCA: Decompose the standardized matrix to obtain observed eigenvalues (λ_obs).

- Generate Random Datasets: Create k (e.g., 1000) random data matrices with the same dimensions (n × p) but no inherent correlation (e.g., sample from normal distribution).

- Perform PCA on Random Data: For each random matrix, perform PCA and store the eigenvalues.

- Calculate Thresholds: For each component rank (1 to p), compute the 95th percentile (or mean) of the eigenvalues from the random distributions (λ_rand).

- Decision Rule: Working in rank order, retain each component for which λ_obs > λ_rand, and stop at the first component that fails this comparison.
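The procedure above can be sketched with NumPy as follows. This is an illustrative implementation under stated assumptions: the random reference data are drawn from a standard normal distribution, the threshold is the 95th percentile, components are counted only up to the first one that falls below its threshold, and the function names and synthetic two-factor dataset are hypothetical.

```python
import numpy as np

def correlation_eigenvalues(X):
    """Eigenvalues of the correlation matrix of X, in descending order."""
    R = np.corrcoef(X, rowvar=False)
    return np.sort(np.linalg.eigvalsh(R))[::-1]

def parallel_analysis(X, n_iter=200, percentile=95, seed=0):
    """Return (number of PCs to keep, observed eigenvalues, random thresholds)."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    lam_obs = correlation_eigenvalues(X)
    lam_sim = np.empty((n_iter, p))
    for i in range(n_iter):
        # Uncorrelated reference data with the same dimensions as X
        lam_sim[i] = correlation_eigenvalues(rng.standard_normal((n, p)))
    lam_rand = np.percentile(lam_sim, percentile, axis=0)
    above = lam_obs > lam_rand
    n_keep = int(p if above.all() else np.argmin(above))  # leading run only
    return n_keep, lam_obs, lam_rand

# Synthetic data: two latent factors, each driving six of twelve variables
rng = np.random.default_rng(42)
F = rng.normal(size=(300, 2))
W = np.zeros((2, 12))
W[0, :6] = 1.0
W[1, 6:] = 1.0
X = F @ W + 0.7 * rng.normal(size=(300, 12))

n_keep, lam_obs, lam_rand = parallel_analysis(X)
print("components retained:", n_keep)
```

A larger `n_iter` (for example 1000, as in the protocol) gives smoother thresholds at a proportionally higher cost.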

Protocol 2: Reconstruction Error Minimization for Dimensionality Assessment

Objective: To select the number of components that optimally balance data fidelity and compression by minimizing reconstruction error.

Materials: Centered data matrix, computational environment.

Procedure:

- Iterative PCA: For k = 1 to p components, compute the PCA projection.

- Reconstruct Data: For each k, reconstruct the data matrix using the k retained components.

- Calculate Error: Compute the Mean Absolute Error (MAE) between the original centered data and the reconstructed data.

- Plot & Analyze: Plot MAE (Y-axis) against the number of components k (X-axis), analogous to a scree plot.

- Identify Knee Point: The optimal k is often at the "knee" or point of sharply diminishing returns in error reduction.
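A minimal sketch of this protocol, computing the reconstruction MAE for every k from a single SVD. The knee is located with a simple geometric heuristic (the point farthest below the chord joining the first and last points of the normalized curve); that heuristic, the function names, and the synthetic rank-3 data are assumptions for illustration, and visual inspection of the curve remains advisable.

```python
import numpy as np

def reconstruction_mae_curve(X):
    """MAE between centered data and its rank-k PCA reconstruction, k = 1..rank."""
    Xc = X - X.mean(axis=0)
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    maes = []
    for k in range(1, len(s) + 1):
        X_hat = (U[:, :k] * s[:k]) @ Vt[:k]     # reconstruction from k components
        maes.append(np.mean(np.abs(Xc - X_hat)))
    return np.array(maes)

def knee_by_max_distance(y):
    """1-based k at which the normalized curve lies farthest below its chord."""
    x = np.linspace(0.0, 1.0, len(y))
    yn = (y - y[-1]) / (y[0] - y[-1])           # rescale so curve runs from 1 to 0
    gap = (1.0 - x) - yn                        # chord minus curve
    return int(np.argmax(gap)) + 1

# Synthetic data: three latent factors, each driving three of ten variables
rng = np.random.default_rng(7)
F = rng.normal(size=(200, 3))
W = np.zeros((3, 10))
W[0, 0:3] = 1.0
W[1, 3:6] = 1.0
W[2, 6:9] = 1.0
X = F @ W + 0.1 * rng.normal(size=(200, 10))

maes = reconstruction_mae_curve(X)
k_knee = knee_by_max_distance(maes)
print("MAE per k:", np.round(maes, 3))
print("knee at k =", k_knee)
```

Because PCA minimizes squared error rather than absolute error, the MAE curve is a diagnostic rather than the quantity being optimized.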

Visual Guide to Decision Pathways

Title: PCA Component Decision-Making Workflow

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Research Reagent Solutions for PCA-Based Data Analysis

| Item / Solution | Function / Purpose |

|---|---|

| R Statistical Environment | Open-source platform for comprehensive PCA, parallel analysis, and advanced statistical computing. |

| Python (SciPy, scikit-learn) | Flexible programming language with extensive libraries for PCA, simulation, and machine learning integration. |

| FactoMineR & factoextra (R packages) | Specialized packages for comprehensive PCA, visualization (scree plots), and result interpretation. |

| MATLAB Statistics Toolbox | Proprietary environment with robust, optimized linear algebra routines for PCA on large datasets. |

| Cross-Validation Framework | Methodological "reagent" to validate stability of chosen components by assessing reconstruction on held-out data. |

| High-Performance Computing (HPC) Cluster | Essential for parallel analysis with large k (e.g., 10,000 iterations) on high-dimensional datasets (e.g., genomics). |

Principal Component Analysis (PCA) is a cornerstone of unsupervised feature extraction in research data, particularly within life sciences and drug development, where it is widely used for dimensionality reduction, noise filtration, and exploratory data analysis. However, standard PCA minimizes the squared (L2) reconstruction error, which makes it highly sensitive to outliers and to departures from approximately Gaussian data. Real-world research data, from high-throughput genomics to pharmacokinetic studies, are often contaminated with anomalous observations or exhibit heavy-tailed distributions. These deviations can severely distort the principal components, leading to misleading interpretations and flawed downstream analyses. This document, framed within a broader thesis on robust data exploration, details robust PCA alternatives, providing application notes and experimental protocols to ensure reliable feature extraction in the presence of data irregularities.

Quantitative Comparison of Standard PCA vs. Robust Alternatives

The following table summarizes the core characteristics, advantages, and limitations of standard PCA and three prominent robust alternatives, based on current literature and implementations.

Table 1: Comparative Analysis of PCA Methodologies

| Method | Core Objective | Robustness Mechanism | Key Advantage | Primary Limitation | Typical Use Case in Research |

|---|---|---|---|---|---|

| Standard PCA | Maximize variance of orthogonal projections. | None (L2 norm minimization). | Computationally efficient; unique global solution. | Highly sensitive to outliers. | Initial exploration of "clean," normally-distributed data. |

| Robust PCA (RPCA via Decomposition) | Decompose data matrix (M) into low-rank (L) and sparse (S) components. | Convex optimization (nuclear & L1 norms). | Can handle large, sporadic outliers; strong theoretical guarantees. | Assumes outliers are sparse; tuning of λ parameter required. | Anomaly detection in high-content screening; background correction in imaging. |

| Sparse PCA | Find sparse component loadings. | Regularization (L1 norm) on loadings. | Improves interpretability of components; some robustness via constraint. | Primarily for interpretability, not outright outlier robustness. | Identifying key biomarkers from high-dimensional genomic data. |

| Minimum Covariance Determinant (MCD) PCA | Use a robust estimate of the covariance matrix. | Find subset of data with minimum covariance determinant. | High breakdown point; retains PCA framework. | Computationally intensive for very high dimensions. | Multivariate analysis of pharmacokinetic data with potential contamination. |

Experimental Protocols for Robust PCA Implementation

Protocol 3.1: Robust PCA (RPCA) via Principal Component Pursuit

Objective: To decompose a research data matrix into a low-rank matrix (true signal) and a sparse matrix (outliers/noise).

Materials & Reagents: See The Scientist's Toolkit (Section 5).

Procedure:

- Data Preparation: Prepare the n × p data matrix M (e.g., gene expression across samples), at minimum centering each feature (column) to have zero mean.

- Parameter Selection: Set the regularization parameter λ. A common heuristic is λ = 1 / √max(n, p). Prepare to tune this based on domain knowledge.

- Optimization: Solve the convex optimization problem: minimize ‖L‖* + λ‖S‖₁ subject to M = L + S, where ‖L‖* is the nuclear norm (sum of singular values of L) and ‖S‖₁ is the L1 norm (sum of absolute values of the entries of S). Use an Augmented Lagrange Multiplier (ALM) algorithm or an efficient ADMM implementation.

- Decomposition: The algorithm outputs L (low-rank) and S (sparse).

- Low-Rank Analysis: Perform standard SVD on the recovered low-rank matrix L to obtain robust principal components.

- Outlier Inspection: Analyze the sparse matrix S to identify samples or features contributing to outlier scores.

Validation: Compare the variance explained by the first k components of L versus those from standard PCA on M. Manually inspect samples with large norms in S for potential experimental artifacts.
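Protocol 3.1 can be sketched in plain NumPy with an ADMM-style augmented Lagrangian iteration that alternates singular value thresholding (for L) and soft-thresholding (for S). This is an illustrative implementation, not a tuned solver: the fixed penalty μ = np / (4‖M‖₁) is a commonly used default assumed here, the function names are hypothetical, and the test matrix is synthetic.

```python
import numpy as np

def shrink(X, tau):
    """Soft-thresholding, the proximal operator of the L1 norm."""
    return np.sign(X) * np.maximum(np.abs(X) - tau, 0.0)

def svd_threshold(X, tau):
    """Singular value thresholding, the proximal operator of the nuclear norm."""
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    return (U * shrink(s, tau)) @ Vt

def robust_pca_pcp(M, lam=None, mu=None, tol=1e-7, max_iter=2000):
    """Principal Component Pursuit: split M into low-rank L and sparse S."""
    n, p = M.shape
    lam = 1.0 / np.sqrt(max(n, p)) if lam is None else lam     # protocol heuristic
    mu = n * p / (4.0 * np.abs(M).sum()) if mu is None else mu  # assumed default
    S = np.zeros_like(M)
    Y = np.zeros_like(M)                        # Lagrange multipliers
    norm_M = np.linalg.norm(M)
    for _ in range(max_iter):
        L = svd_threshold(M - S + Y / mu, 1.0 / mu)
        S = shrink(M - L + Y / mu, lam / mu)
        residual = M - L - S
        Y = Y + mu * residual
        if np.linalg.norm(residual) <= tol * norm_M:
            break
    return L, S

# Synthetic example: rank-2 signal plus 5% large sparse outliers
rng = np.random.default_rng(0)
n, p, rank = 80, 50, 2
L0 = rng.normal(size=(n, rank)) @ rng.normal(size=(rank, p))
S0 = np.zeros((n, p))
mask = rng.random((n, p)) < 0.05
S0[mask] = rng.choice([-5.0, 5.0], size=mask.sum())

col_means = (L0 + S0).mean(axis=0)
M = L0 + S0 - col_means                         # center each feature
L, S = robust_pca_pcp(M)

# Robust principal components from the recovered low-rank part
U, s, Vt = np.linalg.svd(L, full_matrices=False)
rel_err = np.linalg.norm(L - (L0 - col_means)) / np.linalg.norm(L0 - col_means)
print("relative error of recovered L:", rel_err)
print("leading singular values of L:", np.round(s[:4], 3))
```

Rows of `S` with large norms point to samples worth inspecting manually, as described in the validation step.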

Protocol 3.2: PCA based on Minimum Covariance Determinant (MCD) Estimator

Objective: To compute principal components derived from a robust estimate of the covariance matrix, resistant to multivariate outliers.

Procedure:

- Subset Selection: From the n data points in p dimensions, draw many random subsets of size h, where h = ⌊(n + p + 1)/2⌋ provides the highest breakdown point.

- Covariance Calculation: For each subset, compute the mean and covariance matrix.

- Determinant Minimization: Select the subset whose covariance matrix has the smallest determinant.

- Robust Estimation: Compute the robust mean and covariance matrix (using a consistency factor and reweighting step) from this optimal subset.

- Eigen Decomposition: Perform eigen decomposition on the robust MCD covariance matrix.

- Component Projection: Project the original data onto the eigenvectors to obtain robust component scores.

Validation: Calculate the robust Mahalanobis distance for each observation using the MCD estimates. Flag observations whose squared distance exceeds the 0.975 quantile of the χ² distribution with p degrees of freedom, χ²(p, 0.975), as potential outliers. Compare the eigenvalue spectrum and leading eigenvectors with those from standard PCA.
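A minimal sketch of Protocol 3.2 using scikit-learn's `MinCovDet`, which implements the Fast-MCD algorithm including the reweighting step, followed by an eigen-decomposition of the robust covariance. The wrapper `mcd_pca` is a hypothetical helper and the contaminated dataset is synthetic. Note that `MinCovDet.mahalanobis` returns squared distances, so they are compared directly with the χ² quantile.

```python
import numpy as np
from scipy.stats import chi2
from sklearn.covariance import MinCovDet

def mcd_pca(X, seed=0):
    """PCA on the MCD covariance estimate, plus outlier flags."""
    # support_fraction=None lets scikit-learn use its default subset size,
    # (n + p + 1) / (2n) of the observations.
    mcd = MinCovDet(support_fraction=None, random_state=seed).fit(X)
    eigvals, eigvecs = np.linalg.eigh(mcd.covariance_)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = (X - mcd.location_) @ eigvecs      # project onto robust components
    d2 = mcd.mahalanobis(X)                     # squared robust distances
    flagged = d2 > chi2.ppf(0.975, df=X.shape[1])
    return eigvals, eigvecs, scores, flagged

# Synthetic data: 200 clean points with one dominant direction, 20 outliers
rng = np.random.default_rng(3)
d1 = np.array([1.0, 1.0, 0.0]) / np.sqrt(2.0)
clean = 3.0 * rng.normal(size=(200, 1)) * d1 + 0.5 * rng.normal(size=(200, 3))
outliers = np.array([0.0, 0.0, 15.0]) + 0.5 * rng.normal(size=(20, 3))
X = np.vstack([clean, outliers])

eigvals, eigvecs, scores, flagged = mcd_pca(X)

# Standard PCA on the same data, for comparison
std_vals, std_vecs = np.linalg.eigh(np.cov(X, rowvar=False))
std_pc1 = std_vecs[:, -1]                       # eigh sorts ascending

print("robust PC1 alignment with true direction:  ", abs(eigvecs[:, 0] @ d1))
print("standard PC1 alignment with true direction:", abs(std_pc1 @ d1))
print("observations flagged:", int(flagged.sum()))
```

In this constructed example the outliers pull the first standard component toward them, while the MCD-based component stays aligned with the direction of the clean data.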

Visualization of Workflows and Logical Relationships

Decision Workflow for PCA Method Selection

RPCA Matrix Decomposition Process

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational Tools & Packages for Robust PCA

| Item / Software Package | Function / Purpose | Implementation Language | Key Feature for Research |

|---|---|---|---|

| 'robustbase' & 'rrcov' R packages | Provide Fast-MCD and other robust covariance estimators for MCD-based PCA. | R | Essential for statistically robust multivariate analysis; integrates with Bioconductor. |

| 'pcaMethods' Bioconductor package | Provides a suite of PCA-related methods, including robust variants, for bioinformatics data. | R | Designed for omics data (microarray, RNA-seq) with built-in visualization. |

| 'scikit-learn' Python library | Offers SparsePCA and randomized PCA; foundational for custom robust algorithm implementation. | Python | Interoperability with pandas DataFrames and scikit-learn pipelines. |

| 'PyMCD' Python library | Direct implementation of Fast-MCD and related algorithms. | Python | Python-native alternative to R's rrcov for integration into machine learning workflows. |

| 'cvxpy' Optimization Library | Modeling framework for convex optimization problems, including RPCA via Principal Component Pursuit. | Python | Enables customization of the RPCA loss function and constraints for specific data. |