From Motion to Meaning: A Comprehensive Guide to Accelerometer Data Processing and Behavioral Feature Extraction for Biomedical Research


Abstract

This article provides a detailed roadmap for researchers and drug development professionals seeking to translate raw accelerometer data into quantifiable behavioral biomarkers. We explore the fundamental principles of inertial measurement, delve into methodological pipelines for feature extraction across time, frequency, and heuristic domains, address common challenges in data quality and model optimization, and establish frameworks for validating extracted features against established clinical endpoints. The guide synthesizes current best practices to enable robust, reproducible analysis of digital behavior in preclinical and clinical studies.

Understanding the Signal: Core Principles of Accelerometer Data for Behavioral Phenotyping

This application note details the principles, protocols, and processing workflows for inertial motion capture via accelerometers. It is framed within a broader thesis on accelerometer data processing and behavioral feature extraction, with a focus on applications relevant to biomedical research, clinical studies, and drug development, specifically quantifying patient movement, gait, tremor, and activity levels in clinical trials.

Fundamental Physics and Operating Principles

Accelerometers are inertial sensors that measure proper acceleration (acceleration relative to free-fall) via Newton's second law of motion (F = m·a). Modern micro-electromechanical systems (MEMS) accelerometers, the most common type in research, typically use a proof mass attached to springs. Displacement of the mass under acceleration is measured capacitively, piezoresistively, or optically.

Key Quantitative Specifications of Modern MEMS Accelerometers: The following table summarizes performance parameters for commonly used research-grade accelerometers, sourced from current manufacturer datasheets (2024-2025).

Table 1: Performance Comparison of Research-Grade MEMS Accelerometers

| Model / Series (Manufacturer) | Measurement Range (± g) | Noise Density (µg/√Hz) | Bandwidth (Hz) | Output Interface | Primary Research Application |

|---|---|---|---|---|---|

| ADXL357 (Analog Devices) | ±40, ±20, ±10, ±2.5 | 25 | 1500 | SPI, I2C | High-resolution motion, vibration |

| BMI323 (Bosch Sensortec) | ±2, ±4, ±8, ±16 | 90 | 1600 | SPI, I2C | Wearable activity & movement tracking |

| LSM6DSO32X (STMicroelectronics) | ±2, ±4, ±8, ±16 | 45 | 6700 | SPI, I2C | High-performance motion analysis |

| KX134-1211 (Kionix) | ±8, ±16, ±32, ±64 | 50 (typical) | 1600 | SPI, I2C | High-g impact, kinetic studies |

| ICM-42688-P (TDK InvenSense) | ±2, ±4, ±8, ±16 | 80 | 3200 | SPI, I2C | 6-Axis IMU for precise trajectory |

Core Experimental Protocols

Protocol 3.1: Calibration and Validation of an Accelerometer for Clinical Gait Analysis

Objective: To establish a precise, validated setup for capturing human gait parameters in a controlled laboratory environment.

Materials: See "The Scientist's Toolkit" (Section 6).

Procedure:

- Sensor Mounting: Securely attach the accelerometer to the subject's body (e.g., dorsal side of the foot, sternum, or lower back) using a semi-rigid mount and medical-grade adhesive tape. Ensure the sensor's axes align with the anatomical planes (sagittal, coronal, transverse).

- Static Calibration: Place the sensor in six known static orientations (±1g on each primary axis). Record the mean ADC output for each. Calculate a 3x3 calibration matrix (scale and misalignment) and offset vector using least-squares.

- Dynamic Validation (Tilt): Use a precision servo to rotate the sensor through a series of known angles. Compare the calculated tilt from the calibrated accelerometer (θ = arctan(Ax / √(Ay² + Az²))) to the known servo angle. Error should be < 0.5°.

- Gait Data Capture: Have the subject walk at a self-selected pace along a 10-meter walkway. Synchronize accelerometer data collection with a gold-standard system (e.g., optical motion capture, force plates). Record data at a minimum sampling rate of 200 Hz for a minimum of 10 gait cycles.

- Data Processing: Apply the calibration matrix to raw data. Use a high-pass filter (cut-off 0.1 Hz) to remove static tilt components if only dynamic motion is of interest. Segment data into individual gait cycles using heel-strike detection from synchronized force plate data or the accelerometer's own vertical acceleration signal.
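
The static calibration and tilt check above can be sketched as follows. This is a minimal illustration, not the protocol's reference implementation: the function names, the affine model `a_cal = raw @ W + c`, and the synthetic gain and offset values are assumptions introduced for the example.

```python
import numpy as np

# Reference gravity vectors (in g) for the six static orientations,
# in the order +X, -X, +Y, -Y, +Z, -Z.
REFERENCE_G = np.array([
    [1, 0, 0], [-1, 0, 0],
    [0, 1, 0], [0, -1, 0],
    [0, 0, 1], [0, 0, -1],
], dtype=float)


def fit_calibration(raw_means):
    """Fit a_cal = raw @ W + c by least squares.

    raw_means: (6, 3) array of mean raw outputs, one row per orientation,
    in the same order as REFERENCE_G.
    Returns (W, c): a 3x3 scale/misalignment matrix and a length-3 offset.
    """
    raw_means = np.asarray(raw_means, dtype=float)
    # Augment with a column of ones so the offset is fitted jointly.
    design = np.hstack([raw_means, np.ones((raw_means.shape[0], 1))])
    solution, *_ = np.linalg.lstsq(design, REFERENCE_G, rcond=None)
    return solution[:3, :], solution[3, :]


def apply_calibration(raw, W, c):
    """Convert raw samples (n, 3) to calibrated acceleration in g."""
    return np.asarray(raw, dtype=float) @ W + c


def tilt_deg(a):
    """Tilt from theta = arctan(Ax / sqrt(Ay^2 + Az^2)), in degrees.

    arctan2 gives the same result as arctan here (the denominator is
    non-negative) and avoids a division by zero at 90 degrees.
    """
    a = np.asarray(a, dtype=float)
    return np.degrees(np.arctan2(a[..., 0], np.hypot(a[..., 1], a[..., 2])))


# Synthetic sensor with made-up gain and offset errors (counts).
true_gain = np.diag([1000.0, 1020.0, 980.0])
true_offset = np.array([15.0, -8.0, 30.0])
raw_means = REFERENCE_G @ true_gain + true_offset

W, c = fit_calibration(raw_means)
calibrated = apply_calibration(raw_means, W, c)
print(np.round(calibrated, 6))
```

For the dynamic validation step, `tilt_deg` would be applied to calibrated samples recorded at each servo angle and compared against the commanded angle.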

Protocol 3.2: Feature Extraction from Tremor Data for Pharmacodynamic Studies

Objective: To extract quantitative features from hand tremor data before and after administration of a neuroactive drug candidate.

Materials: High-resolution, low-noise accelerometer (e.g., ADXL357), data logger, standardized hand mount.

Procedure:

- Baseline Recording: With the subject seated and arm supported, attach the sensor to the dorsum of the dominant hand. Record tri-axial acceleration for 2 minutes under three conditions: arm at rest, arm extended (postural tremor), and during a finger-to-nose task (kinetic tremor). Sample at 500 Hz.

- Post-Dose Recording: Repeat step 1 at predetermined intervals (e.g., 30, 60, 120 minutes) after drug administration.

- Signal Preprocessing: Detrend the signal. Apply a 5th-order Butterworth bandpass filter (3-25 Hz) to isolate typical pathological tremor frequencies.

- Feature Extraction: For each axis and condition, calculate the following features over 30-second epochs:

- Spectral Peak Frequency: Frequency of the maximum magnitude in the power spectral density (PSD).

- Spectral Peak Magnitude: PSD magnitude at the peak frequency (in g²/Hz).

- Total Power: Integral of the PSD in the 3-25 Hz band.

- RMS Acceleration: Root-mean-square of the filtered time-domain signal.

- Statistical Analysis: Compare features between pre- and post-dose epochs using paired t-tests (or non-parametric equivalent). Normalize post-dose values as a percentage change from baseline.
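
The preprocessing and feature extraction steps of this protocol could be implemented along these lines with SciPy. This is a sketch under stated assumptions: the function names, the 4-second Welch segment length, and the synthetic 6 Hz test signal are illustrative choices, not part of the protocol.

```python
import numpy as np
from scipy.signal import butter, detrend, sosfiltfilt, welch

FS = 500.0          # Hz, sampling rate from the protocol
BAND = (3.0, 25.0)  # Hz, tremor band from the protocol


def tremor_features(signal_g, fs=FS, band=BAND):
    """Peak frequency, peak PSD, band power and RMS for one axis and epoch."""
    x = detrend(np.asarray(signal_g, dtype=float))
    # N=5 is passed to butter(); note that SciPy's band-pass design has
    # order 2N, and zero-phase sosfiltfilt applies the filter twice.
    sos = butter(5, band, btype="bandpass", fs=fs, output="sos")
    x = sosfiltfilt(sos, x)

    # Welch PSD with 4 s segments (assumed), i.e. 0.25 Hz resolution.
    freqs, psd = welch(x, fs=fs, nperseg=int(4 * fs))
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    f_band, p_band = freqs[in_band], psd[in_band]
    peak = int(np.argmax(p_band))
    df = freqs[1] - freqs[0]
    return {
        "peak_freq_hz": float(f_band[peak]),
        "peak_psd_g2_per_hz": float(p_band[peak]),
        "total_power_g2": float(np.sum(p_band) * df),  # rectangle-rule integral
        "rms_g": float(np.sqrt(np.mean(x ** 2))),
    }


def percent_change(baseline, post):
    """Post-dose value expressed as a percentage change from baseline."""
    return 100.0 * (post - baseline) / baseline


# Synthetic 30 s epoch: 6 Hz tremor of 0.05 g amplitude plus sensor noise.
rng = np.random.default_rng(0)
t = np.arange(int(30 * FS)) / FS
epoch = 0.05 * np.sin(2 * np.pi * 6.0 * t) + rng.normal(0.0, 0.005, t.size)
features = tremor_features(epoch)
print(features)
```

Running `tremor_features` on each axis, condition, and time point yields the values that feed the paired comparison; `percent_change` normalizes a post-dose feature to its baseline.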

Data Processing and Feature Extraction Workflow

The logical flow from raw inertial data to extracted behavioural features is defined by the following pipeline.

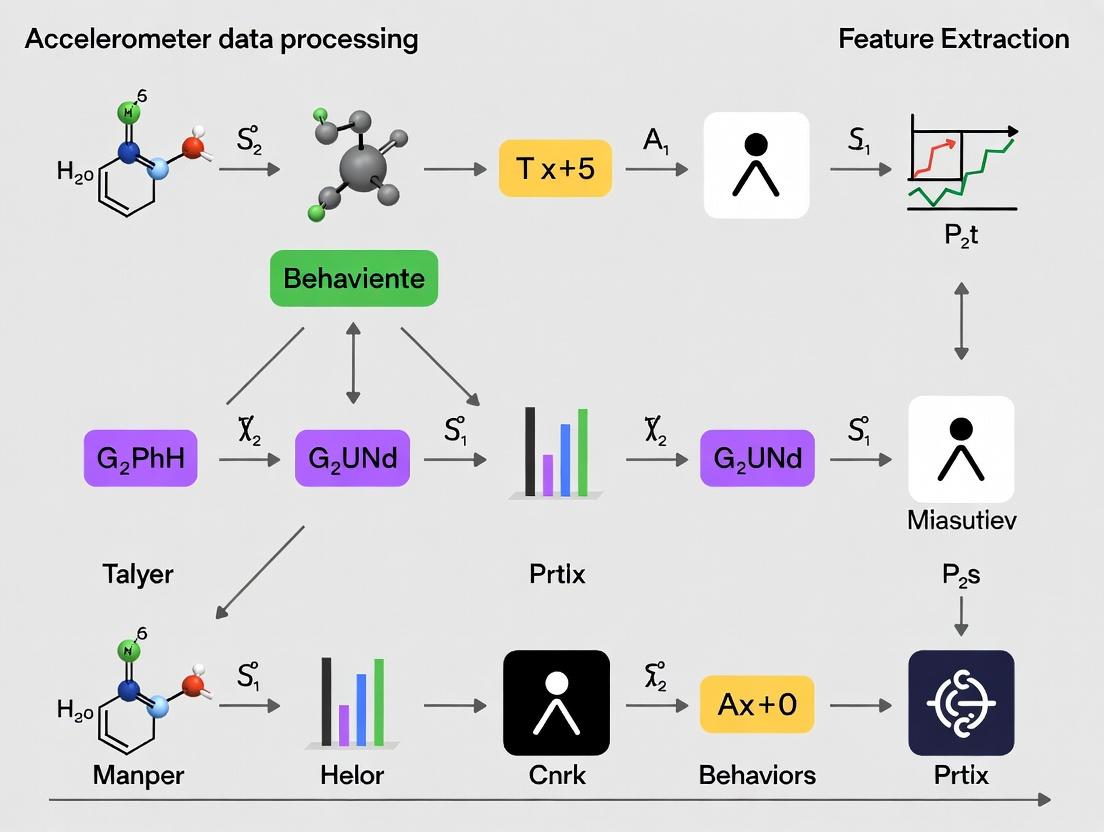

Diagram 1: Accelerometer Data Processing Pipeline

Pathway from Motion to Research Insight

The application of processed accelerometer data to drug development research follows a defined logical relationship.

Diagram 2: From Physics to Pharmacological Insight

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Accelerometer-Based Motion Research

| Item / Reagent | Manufacturer / Example | Function in Research |

|---|---|---|

| High-Performance MEMS Accelerometer | Analog Devices ADXL357, Bosch BMI323 | Core sensor for capturing tri-axial acceleration with low noise and high stability. |

| Programmable Data Logger / Microcontroller | Teensy 4.1, Adafruit Feather M4, custom PCB with BLE | Powers the sensor, collects digital data, and enables real-time streaming or storage. |

| Medical-Grade Adhesive Mounts & Straps | 3M Tegaderm, Hook-and-Loop Straps | Secures sensor to human or animal subjects with minimal movement artifact and skin irritation. |

| Calibration Jig (Multi-axis) | Custom CNC machined, or precision servo stage | Provides known orientations and motions for sensor calibration and dynamic validation. |

| Synchronization Trigger Box | Custom built or lab equipment (e.g., Biopac) | Generates TTL pulses to synchronize accelerometer data with other lab systems (video, EMG, force plates). |

| Signal Processing Software Library | MATLAB Signal Processing Toolbox, Python (SciPy, NumPy) | Provides algorithms for filtering, feature extraction, and spectral analysis. |

| Reference Motion Capture System | Vicon, OptiTrack, Qualisys | Gold-standard system for validating accelerometer-derived kinematics and measuring error. |

This application note is a foundational component of a broader thesis on accelerometer data processing and feature extraction for behavioral research. For scientists in pharmacology and drug development, quantifying subject movement (e.g., in preclinical models) via accelerometers is crucial for assessing drug efficacy, toxicity, and central nervous system activity. The raw voltage output from a micro-electromechanical systems (MEMS) accelerometer must be accurately transformed into meaningful physical vectors to enable robust feature extraction.

Core Principles:

- g-force: The primary unit of measurement, where 1 g = 9.80665 m/s² (standard gravity, the nominal value of Earth's gravitational acceleration). An accelerometer at rest measures 1 g along the axis aligned against gravity.

- Sampling Rate (Hz): The frequency at which acceleration is measured. Must be at least twice the highest frequency component of movement (Nyquist theorem) to avoid aliasing. Common rates for behavioral studies range from 10 Hz (gross locomotion) to 1000+ Hz (fine tremor or startle response).

- Axes: Typically three orthogonal axes (X, Y, Z) in a right-handed coordinate system. Raw output is a time-series vector a(t) = [a_x(t), a_y(t), a_z(t)].

The following tables summarize standard specifications for accelerometers used in biomedical and research applications.

Table 1: Typical Accelerometer Output Parameters & Ranges

| Parameter | Common Range in Behavioral Research | Description & Relevance to Drug Studies |

|---|---|---|

| Dynamic Range (±g) | ±2g, ±4g, ±8g, ±16g | Must be selected to avoid clipping during high-activity bouts (e.g., stimulant-induced hyperactivity). |

| Resolution | 12-bit to 16-bit | Determines smallest detectable change in acceleration. Critical for measuring subtle tremors or sedation. |

| Sampling Rate | 25 Hz - 400 Hz | Lower rates for general locomotion; higher rates for kinematic detail (gait, tremor frequency). |

| Noise Density | 100 - 400 µg/√Hz | Lower noise enables cleaner signal for feature extraction of low-amplitude behaviors. |

| Zero-g Offset | ±50 mg (typical) | Factory-calibrated offset voltage; drift can affect long-term studies. |

| Sensitivity | 100 - 800 mV/g (analog) or 256 - 4096 LSB/g (digital) | Scale factor converting voltage or digital counts to g. Calibration is essential for accuracy. |

Table 2: Impact of Sampling Rate on Capturable Behaviors

| Target Behavior | Approx. Frequency Content | Minimum Recommended Sampling Rate (Nyquist) | Typical Research Sampling Rate |

|---|---|---|---|

| Gross Locomotion (rodent) | 0-15 Hz | 30 Hz | 50-100 Hz |

| Gait & Stride Analysis | 0-30 Hz | 60 Hz | 100-200 Hz |

| Tremor (physiological) | 4-12 Hz | 24 Hz | 100-250 Hz |

| Tremor (pathological) | 3-18 Hz | 36 Hz | 200-500 Hz |

| Startle Response | 0-80 Hz | 160 Hz | 400-1000 Hz |

| Vocalization (via vibration) | 100-1000 Hz | 2000 Hz | >2000 Hz |

Experimental Protocols

Protocol 1: Calibration of a 3-Axis Accelerometer for g-Force Accuracy

Objective: To establish an accurate conversion from raw digital counts or voltage to calibrated g-force for each axis.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Mounting: Securely attach the accelerometer to a calibration fixture. Ensure the positive sensing axis of interest is precisely aligned with the direction of gravity.

- Data Collection: a. For the +1g point: Orient the target axis vertically so that it reads approximately +1g (for most MEMS devices this means the positive axis points upward, opposing gravity). Record raw output for 10 seconds at the intended operational sampling rate. b. For the -1g point: Rotate the device 180° so the same axis points in the opposite direction. Record raw output for 10 seconds. c. For the 0g point (Optional, 6-point method): Orient the target axis horizontally (perpendicular to gravity). Record output. Repeat for the other two axes.

- Calculation: For each axis, calculate:

- Sensitivity (Scale Factor): \( S = \frac{Raw_{+1g} - Raw_{-1g}}{2} \) [Counts/g or V/g]

- Offset (Bias): \( B = \frac{Raw_{+1g} + Raw_{-1g}}{2} \) [Counts or V]

- Calibrated g-force: \( g_{calibrated} = \frac{Raw_{measured} - B}{S} \)

- Validation: Repeat the procedure at different temperatures if the device is temperature-sensitive.
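
As a worked example of the calculation step, the following sketch computes the sensitivity and offset for one axis from the two static recordings. The function names and the raw count values are hypothetical; the arithmetic follows the S, B, and calibrated g-force definitions given above.

```python
import numpy as np


def two_point_calibration(raw_plus_1g, raw_minus_1g):
    """Return (sensitivity, offset) for one axis from +1g and -1g recordings."""
    mean_plus = float(np.mean(raw_plus_1g))
    mean_minus = float(np.mean(raw_minus_1g))
    sensitivity = (mean_plus - mean_minus) / 2.0   # S, counts per g
    offset = (mean_plus + mean_minus) / 2.0        # B, counts
    return sensitivity, offset


def to_g(raw, sensitivity, offset):
    """Apply g_calibrated = (Raw_measured - B) / S."""
    return (np.asarray(raw, dtype=float) - offset) / sensitivity


# Hypothetical 10 s recordings at 100 Hz (values in ADC counts).
raw_plus = np.full(1000, 4130.0)
raw_minus = np.full(1000, 34.0)

S, B = two_point_calibration(raw_plus, raw_minus)
print(S, B)                      # 2048.0 2082.0
print(to_g([2082.0, 4130.0], S, B))
```

Averaging over the full 10-second recording reduces the influence of sensor noise on both estimates.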

Protocol 2: Establishing an Appropriate Sampling Rate for a Novel Behavioral Assay

Objective: To determine the minimum sampling rate required to digitally capture a specific behavior without aliasing.

Materials: High-speed camera (reference), accelerometer, data acquisition system synchronized with camera.

Procedure:

- Pilot Recording: Simultaneously record the target behavior (e.g., mouse head tremor) using a high-speed camera (e.g., 500 fps) and an accelerometer at its maximum sampling rate.

- Spectral Analysis: a. Extract a clean, representative burst of the behavior from the accelerometer data. b. Perform a Fast Fourier Transform (FFT) on this signal to create a frequency spectrum. c. Identify F_max, the frequency below which 95% of the signal's power is contained.

- Determine Sampling Rate: Apply the Nyquist criterion: \( f_s > 2 \times F_{max} \). To provide a safety margin and better waveform definition, select \( f_s \geq 4 \times F_{max} \).

- Verification: Digitally downsample the original high-rate accelerometer data to the proposed new rate, applying an anti-aliasing low-pass filter before decimation. Visually and spectrally compare the downsampled signal to the original to ensure no critical information is lost.
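
The spectral analysis and sampling-rate steps can be sketched with NumPy alone. The function names and the two-tone test signal are assumptions made for illustration; the 95% power criterion and the 4× margin come from the procedure above.

```python
import numpy as np


def fmax_by_cumulative_power(x, fs, fraction=0.95):
    """Lowest frequency below which `fraction` of the signal power lies."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()                              # remove DC / static gravity
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    cumulative = np.cumsum(power) / np.sum(power)
    return float(freqs[np.searchsorted(cumulative, fraction)])


def recommended_rate(f_max, margin=4.0):
    """Sampling rate with a safety margin (fs >= margin * F_max)."""
    return margin * f_max


# Synthetic burst recorded at 1000 Hz: an 8 Hz component plus a weaker
# 20 Hz component carrying 20% of the total power.
fs_pilot = 1000.0
t = np.arange(2000) / fs_pilot
burst = np.sin(2 * np.pi * 8.0 * t) + 0.5 * np.sin(2 * np.pi * 20.0 * t)

f_max = fmax_by_cumulative_power(burst, fs_pilot)
print(f_max, recommended_rate(f_max))
```

For the verification step, a routine such as `scipy.signal.decimate`, which low-pass filters before reducing the rate, is one option for producing the downsampled comparison signal.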

Visualizations

Diagram 1: Raw Accelerometer Data Processing Workflow

Diagram 2: Key Feature Extraction Pathways from Accelerometer Vector

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item | Function in Accelerometer Research | Example / Specification |

|---|---|---|

| 3-Axis MEMS Accelerometer | Core sensor converting acceleration to analog voltage or digital signal. | Analog Devices ADXL357 (low-noise), STMicroelectronics LIS3DH (low-power). |

| Data Acquisition (DAQ) System | Digitizes analog accelerometer output at high fidelity and precise sampling intervals. | National Instruments USB-6003, or microcontroller with ADC (e.g., Teensy 4.0). |

| Calibration Jig | Provides precise ±1g and 0g orientation references for sensor calibration. | Precision-machined block with leveling feet and 90° mounting faces. |

| High-Speed Camera | Gold-standard reference for validating temporal dynamics of movement. | >250 fps capable, used for sampling rate determination. |

| Signal Processing Software | For calibration, filtering, FFT, and feature extraction algorithm development. | Python (SciPy, NumPy), MATLAB, or LabVIEW. |

| Controlled Environment Chamber | Minimizes confounding vibrations and allows for temperature control during calibration/studies. | Acoustic foam, optical table, temperature controller. |

| Reference Vibrator/Shaker | Provides a known acceleration (e.g., 1.0 g RMS) for dynamic calibration validation. | Calibrated piezoelectric or electromagnetic shaker. |

Within the broader thesis on accelerometer data processing and feature extraction for behavior research, defining a "behavior" from raw inertial signals is a foundational challenge. Accelerometers, prevalent in wearables and biologgers, produce high-frequency, multi-axis time-series data reflecting movement dynamics. In pharmacological and neuroscience research, the goal is to map these complex signals onto discrete, biologically meaningful units of action (e.g., grooming, rearing, gait cycles) or behavioral states (e.g., sleep, exploration). This document outlines the conceptual framework, application notes, and experimental protocols for constructing a behavioral corpus from accelerometry data.

Conceptual Framework: Hierarchical Definitions of Behavior

Behavior can be operationalized at different resolutions from accelerometry data. The table below summarizes the standard taxonomy.

Table 1: Hierarchical Definitions of Behavior in Accelerometry Data

| Level | Temporal Scale | Definition | Example in Rodent Models | Typical Accelerometry Feature |

|---|---|---|---|---|

| Movement | Milliseconds to Seconds | A primitive, indivisible unit of motion. | Limb acceleration, startle. | Raw XYZ values, vector magnitude. |

| Action/Motor Gesture | Seconds | A goal-directed sequence of movements. | Single rearing event, head dip. | Defined by waveform shape, peak frequency. |

| Behavioral Episode | Seconds to Minutes | A sustained period of a specific activity. | Grooming bout, running on a wheel. | Sequences of classified actions, duration. |

| Behavioral State | Minutes to Hours | A prolonged, dominant physiological/behavioral condition. | Sleep, active exploration, immobility. | Proportion of activities over a rolling window. |

Application Notes & Key Protocols

Protocol: Tri-Axial Accelerometer Data Acquisition for Rodent Behavior

Objective: To collect high-fidelity, timestamped raw accelerometry data suitable for granular behavioral classification.

Materials & Reagent Solutions:

Table 2: Research Reagent Solutions & Essential Materials

| Item | Function/Description |

|---|---|

| Implantable or Backpack Telemetry System (e.g., DSI, Starr Life Sciences) | Miniaturized device with tri-axial accelerometer for in vivo rodent data collection. |

| Data Acquisition Software (e.g., Ponemah, LabChart, EthoVision) | Software to record, synchronize, and visually inspect sensor data with video. |

| Calibration Jig | Device to physically orient the sensor in known positions (e.g., +/-1g) for signal calibration. |

| Behavioral Testing Arena (Open Field, Home Cage) | Controlled environment where behaviors of interest are elicited. |

| Synchronized High-Speed Video Camera | Gold-standard for ground-truth behavioral labeling. |

| Time-Code Generator | Hardware to synchronize video and accelerometer data streams with microsecond precision. |

Procedure:

- Sensor Calibration: Prior to implantation/attachment, secure the telemetry sensor in the calibration jig. Record static readings for all six cardinal orientations (+X, -X, +Y, -Y, +Z, -Z). Calculate scaling factors and offsets to ensure each axis reads precisely +1g or -1g.

- Surgical Implantation/Attachment: Following IACUC-approved protocols, implant the telemetry device intraperitoneally or subcutaneously, or securely affix a backpack logger. Ensure the sensor's orientation relative to the animal's body axes is documented and consistent.

- Experimental Setup: Place the animal in the testing arena. Start the accelerometer data acquisition software (sampling rate ≥ 100 Hz). Simultaneously, start the synchronized high-speed video recording (≥ 30 fps).

- Data Synchronization: Emit a discrete, time-locked physical tap (detectable in both accelerometer and audio/video) at the start and end of recording. Use these events to align data streams.

- Data Collection: Record continuous accelerometry data (X, Y, Z axes) and video for the duration of the experimental session (e.g., 60-minute open field test).

- Data Export: Export raw accelerometer data as timestamped CSV files. Video should be saved in a format suitable for annotation software.

Protocol: Ground-Truth Behavioral Annotation

Objective: To create a labeled dataset linking accelerometer data segments to discrete behaviors.

Procedure:

- Video Annotation: Using specialized software (e.g., BORIS, DeepLabCut, EthoVision), review synchronized video and annotate the onset and offset of target behaviors (e.g., "rearing," "grooming," "walking").

- Label Mapping: Using the synchronized timestamps, map each annotated behavior episode onto the corresponding segment of the raw accelerometer time-series data.

- Data Segmentation: Segment the continuous accelerometer data into labeled epochs based on the annotations. These epoch files (e.g., all "grooming" bouts from all subjects) constitute the initial behavioral corpus.
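
A minimal sketch of the label mapping and segmentation steps is shown below. It assumes annotations have been exported as (onset, offset, label) tuples in seconds on the same clock as the accelerometer timestamps; that export format and the function names are assumptions, not features of any specific annotation tool.

```python
import numpy as np


def label_samples(timestamps, annotations, default="unlabeled"):
    """Assign a behavior label to every accelerometer sample."""
    timestamps = np.asarray(timestamps, dtype=float)
    labels = np.full(timestamps.shape, default, dtype=object)
    for onset, offset, name in annotations:
        labels[(timestamps >= onset) & (timestamps < offset)] = name
    return labels


def extract_bouts(data, timestamps, annotations):
    """Cut the continuous recording into (label, segment) pairs."""
    data = np.asarray(data, dtype=float)
    timestamps = np.asarray(timestamps, dtype=float)
    bouts = []
    for onset, offset, name in annotations:
        mask = (timestamps >= onset) & (timestamps < offset)
        bouts.append((name, data[mask]))
    return bouts


# Hypothetical 10 s recording at 100 Hz with two annotated bouts.
timestamps = np.arange(1000) / 100.0
xyz = np.zeros((1000, 3))
annotations = [(1.0, 2.5, "rearing"), (4.0, 7.0, "grooming")]

labels = label_samples(timestamps, annotations)
bouts = extract_bouts(xyz, timestamps, annotations)
print([(name, segment.shape) for name, segment in bouts])
```

Grouping the returned segments by label across subjects gives the per-behavior epoch collections described in the segmentation step.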

Protocol: Feature Extraction for Behavior Classification

Objective: To transform raw accelerometer segments into a set of discriminative features for machine learning.

Procedure:

- Segment Preprocessing: For each labeled data segment, calculate the Vector Magnitude (VM): VM = sqrt(X² + Y² + Z²). Optionally, apply a high-pass filter (passing frequencies above 0.5 Hz) to remove the static gravity component.

- Feature Calculation: Compute features in time, frequency, and statistical domains for each axis and the VM. Common features include:

- Time Domain: Mean, variance, skewness, kurtosis, zero-crossing rate, signal magnitude area (SMA), peak count.

- Frequency Domain: Spectral entropy, dominant frequency, power in frequency bands (e.g., 0-5 Hz, 5-20 Hz).

- Other: Correlation between axes, tilt angles (pitch, roll).

- Feature Table Compilation: Compile all calculated features for each behavioral segment into a structured table (rows = bouts, columns = features), with an additional column for the ground-truth behavior label.
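
One way to compute a subset of these features for a single labeled segment is sketched below. The function name, the choice to normalize spectral entropy to the range 0 to 1, and the synthetic segment are illustrative assumptions; a full pipeline would repeat this per bout and stack the results into the feature table.

```python
import numpy as np


def segment_features(xyz, fs):
    """Small feature set for one labeled segment.

    xyz: (n_samples, 3) array in g; fs: sampling rate in Hz.
    """
    xyz = np.asarray(xyz, dtype=float)
    vm = np.linalg.norm(xyz, axis=1)              # VM = sqrt(X^2 + Y^2 + Z^2)
    centered = vm - vm.mean()
    std = centered.std()

    # Time domain
    signs = np.signbit(centered)
    zero_cross_rate = np.count_nonzero(signs[1:] != signs[:-1]) / (vm.size - 1)
    sma = float(np.mean(np.sum(np.abs(xyz), axis=1)))
    skew = float(np.mean(centered ** 3) / std ** 3) if std > 0 else 0.0
    kurt = float(np.mean(centered ** 4) / std ** 4 - 3.0) if std > 0 else 0.0

    # Frequency domain (DC bin dropped)
    power = np.abs(np.fft.rfft(centered))[1:] ** 2
    freqs = np.fft.rfftfreq(vm.size, d=1.0 / fs)[1:]
    total = power.sum()
    if total > 0:
        p = power / total
        nz = p[p > 0]
        # Shannon entropy normalized by its maximum (assumed convention).
        entropy = float(-np.sum(nz * np.log2(nz)) / np.log2(p.size))
        dominant = float(freqs[np.argmax(power)])
    else:
        entropy, dominant = 0.0, 0.0

    return {
        "mean_vm": float(vm.mean()),
        "var_vm": float(std ** 2),
        "skewness": skew,
        "kurtosis": kurt,
        "zero_cross_rate": float(zero_cross_rate),
        "sma": sma,
        "spectral_entropy": entropy,
        "dominant_freq_hz": dominant,
        "xy_correlation": float(np.corrcoef(xyz[:, 0], xyz[:, 1])[0, 1]),
    }


# Synthetic 5 s segment at 100 Hz with a 4 Hz rhythmic movement.
fs = 100.0
t = np.arange(500) / fs
s = np.sin(2 * np.pi * 4.0 * t)
segment = np.column_stack([0.3 * s, 0.15 * s, 1.0 + 0.2 * s])
row = segment_features(segment, fs)
print(row)
```

Each returned dictionary corresponds to one row of the feature table; the ground-truth label is added as a further column when the rows are compiled.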

Data Presentation & Analysis

Table 3: Example Feature Summary for Murine Behaviors (Hypothetical Data)

| Behavior | Mean VM | Spectral Entropy | Peak Freq (Hz) | X-Y Correlation | Bout Duration (s) |

|---|---|---|---|---|---|

| Immobility | 1.02 ± 0.01 | 0.15 ± 0.05 | 0.0 ± 0.0 | 0.05 ± 0.10 | 10.5 ± 8.2 |

| Locomotion | 1.45 ± 0.20 | 0.82 ± 0.08 | 4.5 ± 1.2 | 0.65 ± 0.15 | 3.2 ± 2.1 |

| Rearing | 1.28 ± 0.15 | 0.60 ± 0.12 | 2.8 ± 0.9 | -0.30 ± 0.20 | 1.5 ± 0.5 |

| Grooming | 1.18 ± 0.10 | 0.45 ± 0.10 | 6.8 ± 2.5 | 0.10 ± 0.25 | 5.8 ± 3.4 |

Visualizing the Workflow

Title: Accelerometry Behavioral Classification Workflow

Title: Iterative Definition of a Behavior

The translational pipeline from preclinical discovery to clinical validation relies on robust, quantitative behavioral phenotyping. Accelerometer data, processed for feature extraction, provides a continuous, objective measure of activity and complex behaviors in both rodent models and human subjects. This serves as a critical biomarker for efficacy and safety in therapeutic development for neurological, psychiatric, and metabolic disorders.

Preclinical Rodent Studies: Protocols and Feature Extraction

Protocol: Continuous Home-Cage Monitoring in Rodents

Objective: To assess longitudinal, spontaneous activity and behavioral patterns in mice/rats within a familiar environment, minimizing stress.

Materials:

- Standard rodent home cage equipped with a tri-axial accelerometer (e.g., mounted in the cage lid or floor).

- Data acquisition system (e.g., DASYLab, LabChart, or custom DAQ software).

- Standard rodent chow and water ad libitum.

- Dedicated, climate-controlled housing room with a 12:12 light-dark cycle.

Procedure:

- Habituation: House animals singly or in groups (with individual ID transponders if grouped) in the instrumented cage for a minimum of 48 hours prior to data recording.

- Baseline Recording: Record continuous accelerometer data (sampling frequency ≥ 100 Hz) for a minimum of 72 hours to establish baseline diurnal patterns.

- Intervention: Administer the test compound or vehicle control.

- Post-Intervention Recording: Record continuous data for the desired period (e.g., 24h, 7 days).

- Data Export: Export raw acceleration (X, Y, Z axes) time-series data for offline processing.

Accelerometer Data Processing & Feature Extraction Pipeline

1. Preprocessing:

- Calibration: Adjust for sensor offset and gain using known reference positions.

- Filtering: Apply a 4th-order Butterworth bandpass filter (0.1-20 Hz) to remove non-physiological drift and high-frequency noise.

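- Vector Magnitude Calculation: Compute the vector magnitude (VM) of the filtered signal for each timepoint t. Because the band-pass filter has already removed the static gravity component, this VM is a measure of dynamic body acceleration (DBA):

VM(t) = √(X(t)² + Y(t)² + Z(t)²).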
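
The filtering and vector magnitude steps might look like this in SciPy. The function name and the synthetic recording are assumptions for illustration; the filter order and pass band are those stated above, and the data are assumed to be calibrated to g already.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt


def preprocess(xyz, fs, band=(0.1, 20.0), order=4):
    """Band-pass filter each axis, then compute the vector magnitude.

    xyz: (n_samples, 3) calibrated acceleration in g.
    Returns (filtered, vm).
    """
    # `order` is passed to butter(); SciPy's band-pass design has order
    # 2 * order, and sosfiltfilt runs it forward and backward (zero phase).
    sos = butter(order, band, btype="bandpass", fs=fs, output="sos")
    filtered = sosfiltfilt(sos, np.asarray(xyz, dtype=float), axis=0)
    vm = np.sqrt(np.sum(filtered ** 2, axis=1))
    return filtered, vm


# Synthetic 60 s recording at 100 Hz: gravity on Z plus a 3 Hz movement.
fs = 100.0
t = np.arange(6000) / fs
xyz = np.zeros((t.size, 3))
xyz[:, 2] = 1.0 + 0.2 * np.sin(2 * np.pi * 3.0 * t)

filtered, vm = preprocess(xyz, fs)
middle = slice(2000, 4000)   # ignore the ends, where edge effects can remain
print(filtered[middle, 2].mean(), vm[middle].max())
```

After filtering, the 1 g gravity offset on the Z axis is gone and the vector magnitude reflects only the 3 Hz movement.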

2. Feature Extraction: The following features are computed for non-overlapping epochs (e.g., 5-second or 1-minute windows).

Table 1: Key Extracted Features from Rodent Accelerometer Data

| Feature Category | Specific Feature | Calculation/Description | Behavioral Correlation |

|---|---|---|---|

| Time-Domain | Activity Count | Sum of absolute deviations from epoch mean VM. | Gross locomotor activity. |

| Time-Domain | Immobility Time | % of epoch where VM variance < threshold. | Resting/sleep periods. |

| Time-Domain | Signal Magnitude Area (SMA) | (∑\|X\| + ∑\|Y\| + ∑\|Z\|) / N_samples. | Overall activity energy expenditure. |

| Frequency-Domain | Dominant Frequency | Peak frequency in VM FFT spectrum (1-10 Hz). | Gait cycle, stereotypy rate. |

| Frequency-Domain | Spectral Entropy | Regularity of power spectrum distribution. | Behavioral repertoire complexity. |

| Statistical | Variance/Std. Dev. | Variability of VM signal within epoch. | Movement intensity fluctuations. |

| Statistical | Skewness/Kurtosis | Asymmetry and "tailedness" of VM distribution. | Distinguishes gait from tremor. |
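
A sketch of how some of the epoch features in this table could be computed from a VM series follows. Several choices here are assumptions: the activity count uses absolute deviations (a signed sum of deviations from the mean would be zero), immobility is scored over 1-second sub-windows, and the variance threshold is an arbitrary placeholder that would need tuning per setup.

```python
import numpy as np


def epoch_features(vm, fs, epoch_s=5.0, window_s=1.0, immobility_var=1e-4):
    """Per-epoch activity count, immobility percentage and dominant frequency."""
    vm = np.asarray(vm, dtype=float)
    n = int(round(epoch_s * fs))      # samples per epoch
    w = int(round(window_s * fs))     # samples per immobility sub-window
    rows = []
    for start in range(0, vm.size - n + 1, n):   # non-overlapping epochs
        epoch = vm[start:start + n]
        centered = epoch - epoch.mean()

        activity = float(np.sum(np.abs(centered)))

        # Share of sub-windows whose variance falls below the threshold.
        windows = epoch[: (n // w) * w].reshape(-1, w)
        immobile = float(100.0 * np.mean(windows.var(axis=1) < immobility_var))

        # Dominant frequency restricted to the 1-10 Hz band.
        power = np.abs(np.fft.rfft(centered)) ** 2
        freqs = np.fft.rfftfreq(n, d=1.0 / fs)
        band = (freqs >= 1.0) & (freqs <= 10.0)
        if power[band].sum() > 0:
            dominant = float(freqs[band][np.argmax(power[band])])
        else:
            dominant = 0.0

        rows.append({
            "start_s": start / fs,
            "activity_count": activity,
            "immobility_pct": immobile,
            "dominant_freq_hz": dominant,
        })
    return rows


# Synthetic VM at 100 Hz: 5 s of stillness, then 5 s of 3 Hz movement.
fs = 100.0
t = np.arange(1000) / fs
vm = np.where(t < 5.0, 0.0, 0.2 + 0.1 * np.sin(2 * np.pi * 3.0 * t))
rows = epoch_features(vm, fs)
for r in rows:
    print(r)
```

The first epoch is scored as fully immobile with zero activity, while the second shows a 3 Hz dominant frequency and no immobile sub-windows.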

Diagram Title: Rodent Accelerometer Data Processing Workflow

The Scientist's Toolkit: Preclinical Research

Table 2: Essential Research Reagents & Materials

| Item | Function & Application |

|---|---|

| Tri-axial Accelerometer Loggers (e.g., ADXL345, MMA8452Q) | Miniature sensors for capturing high-resolution acceleration data in three dimensions. |

| Telemetry Implants (e.g., DSI PhysioTel) | Surgically implanted devices for chronic, untethered recording of activity and physiology. |

| Automated Home-Cage Systems (e.g., Tecniplast DVC, New Behavior NOLDUS PhenoTyper) | Integrated platforms providing environment control, video, and accelerometry. |

| Data Acquisition Software (e.g., LabChart, NeuroLogger) | Software for configuring sensors, recording, and visualizing raw data streams. |

| Computational Environment (e.g., Python with Pandas/NumPy, MATLAB) | Essential for implementing custom filtering, feature extraction, and machine learning pipelines. |

Translation to Human Clinical Trials

Protocol: Wearable Accelerometer Use in Phase II/III Clinical Trials

Objective: To quantify real-world motor activity, sleep/wake patterns, and drug response in patient populations.

Materials:

- Wrist-worn or waist-worn research-grade accelerometer (e.g., ActiGraph GT9X, Axivity AX6).

- Regulatory-compliant electronic Clinical Outcome Assessment (eCOA) platform.

- Protocol-specific Patient Diary (electronic).

- Charging station and instruction kits for participants.

Procedure:

- Device Initialization & Distribution: Configure devices with unique IDs and set sampling frequency (e.g., 100 Hz). Distribute to participants at the clinic visit with standardized instructions.

- Wearing Schedule: Participants wear the device continuously for 7-14 days, only removing for water-based activities. Compliance is monitored via light sensors and patient diary.

- Data Collection: Acceleration data is stored onboard or transmitted via Bluetooth to a paired smartphone/tablet.

- Clinic Return & Upload: Participants return devices at the next visit; data is uploaded to a secure, HIPAA/GCP-compliant server.

- Data Processing: Centralized, automated processing using a validated algorithm to extract endpoints.

Feature Extraction for Clinical Endpoints

Human data processing builds upon preclinical methods but focuses on clinically validated digital endpoints.

Table 3: Common Digital Endpoints from Human Accelerometer Data

| Endpoint Category | Digital Endpoint | Epoch | Clinical Relevance |

|---|---|---|---|

| Activity | Total Daily Activity Counts | 24 hours | Overall disease burden, treatment efficacy. |

| Activity | Moderate-to-Vigorous Physical Activity (MVPA) | 1 minute | Cardiorespiratory fitness, functional capacity. |

| Sleep | Total Sleep Time (TST) | Primary sleep period | Sleep quality, a common drug side effect. |

| Sleep | Wake After Sleep Onset (WASO) | Primary sleep period | Sleep fragmentation (e.g., in Parkinson's). |

| Circadian Rhythm | Intradaily Stability (IS) | 7+ days | Rhythm strength; disrupted in neuro disorders. |

| Circadian Rhythm | Most Active 10-hour Period (M10) Onset | 7+ days | Rhythm phase; measures diurnal shifts. |

| Movement Quality | Gait Cadence | Walking bouts | Parkinsonian bradykinesia, MS fatigue. |

| Movement Quality | Arm Swing Symmetry | Walking bouts | Lateralization of motor symptoms. |
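
To make the circadian endpoints concrete, the sketch below computes intradaily stability (IS) and M10 onset from hourly activity totals using the commonly cited non-parametric definitions. It is an illustration only, not the output of any validated algorithm named in this guide; the function names and the synthetic week of data are assumptions.

```python
import numpy as np


def intradaily_stability(hourly):
    """IS = n * sum_h (mean_h - mean)^2 / (p * sum_i (x_i - mean)^2).

    hourly: 1-D array of hourly activity totals covering whole days
    (length must be a multiple of 24); p = 24 hours per day.
    """
    x = np.asarray(hourly, dtype=float)
    profile = x.reshape(-1, 24).mean(axis=0)   # average 24 h profile
    grand_mean = x.mean()
    n, p = x.size, 24
    return float(n * np.sum((profile - grand_mean) ** 2)
                 / (p * np.sum((x - grand_mean) ** 2)))


def m10_onset(hourly, window=10):
    """Start hour and mean level of the most active `window`-hour period."""
    profile = np.asarray(hourly, dtype=float).reshape(-1, 24).mean(axis=0)
    # Wrap the profile so windows that cross midnight are considered.
    wrapped = np.concatenate([profile, profile[:window - 1]])
    sums = np.convolve(wrapped, np.ones(window), mode="valid")  # 24 windows
    start = int(np.argmax(sums))
    return start, float(sums[start] / window)


# Synthetic 7-day recording: active from 08:00 to 18:00, quiet otherwise.
day = np.full(24, 10.0)
day[8:18] = 100.0
week = np.tile(day, 7)

print(intradaily_stability(week))   # identical days give IS = 1
print(m10_onset(week))
```

IS approaches 1 when every day follows the same profile and falls toward 0 as day-to-day patterns diverge, which is why at least a week of data is listed for these endpoints.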

Diagram Title: Translational Path of Accelerometer Biomarkers

The Scientist's Toolkit: Clinical Research

Table 4: Essential Tools for Clinical Accelerometer Research

| Item | Function & Application |

|---|---|

| Research-Grade Wearables (e.g., ActiGraph, Axivity, MotionWatch) | Validated, regulatory-accepted devices for capturing raw acceleration data in clinical studies. |

| Regulatory & Compliance Platform (e.g., Clario, Medidata Rave eCOA) | Ensures data integrity, chain of custody, and compliance with FDA 21 CFR Part 11, GDPR, GCP. |

| Validated Processing Algorithms (e.g., GGIR, ActiLife, Choi Sleep Algorithm) | Open-source or commercial software for generating standardized, reproducible digital endpoints. |

| Clinical Trial Management System (CTMS) | Integrates digital biomarker data with traditional clinical, genomic, and patient-reported data. |

Integrated Data Analysis Protocol

Objective: To correlate preclinical and clinical accelerometer-derived features for translational validation.

Procedure:

- Feature Alignment: Map analogous features across species (e.g., rodent "immobility time" to human "total sleep time"; rodent "signal variance" to human "movement vigor").

- Dose-Response Modeling: In preclinical data, model the effect of drug dose on key activity features. Use this to inform human starting doses and expected effect sizes.

- Longitudinal Trajectory Analysis: Apply mixed-effects models to both datasets to compare the trajectory of change in activity biomarkers before and after intervention.

- Machine Learning for Subphenotyping: Use unsupervised clustering (e.g., k-means, hierarchical) on combined feature sets to identify distinct behavioral response subtypes in rodents and patients.

Diagram Title: Integrated Preclinical-Clinical Data Analysis

Within the broader thesis on accelerometer data processing and behavioural feature extraction for pharmacological research, careful selection of sensor data specifications is paramount. These parameters dictate the fidelity and utility of motion data for quantifying behavioural phenotypes, assessing drug efficacy, and understanding neuro-motor side effects. Incorrect specification selection leads to aliasing, clipping, quantization errors, or signal obfuscation by noise, fundamentally compromising downstream analysis and scientific conclusions.

Table 1: Core Data Specification Parameters and Impact

| Parameter | Definition | Primary Impact on Feature Extraction | Typical Values (Research-Grade Accelerometers) |

|---|---|---|---|

| Sample Rate (fs) | Number of data points captured per second (Hz). | Determines the maximum observable frequency (Nyquist frequency = fs/2). Insufficient rate causes aliasing of high-frequency movements (e.g., tremor, startle). | 100 Hz (gait), 500-1000 Hz (tremor, high-frequency kinetics). |

| Dynamic Range | The span of accelerations the sensor can measure, expressed in ± g (where g = 9.8 m/s²). | Defines the maximum and minimum recordable acceleration. Too small a range causes clipping during high-force events; too large reduces effective bit resolution. | ±2g (subtle behaviours), ±8g (ambulatory, gross motor), ±16g (high-impact events). |

| Bit Depth (Resolution) | The number of bits used to digitize the analog signal across the dynamic range. | Sets the smallest detectable change in acceleration (LSB = full-scale span / 2^bits, where the span is 2 × Range for a ±Range sensor). Low bit depth increases quantization noise, masking subtle signal variations. | 16-bit (standard), 24-bit (high-resolution for low-noise systems). |

| Noise Floor (Noise Density) | The inherent electrical noise of the sensor, expressed as µg/√Hz (micro-g per root Hertz). | Defines the lower limit of detectable signal. A high noise floor obscures low-amplitude, biologically critical movements (e.g., breathing, micro-movements in sedation). | 20-100 µg/√Hz (standard MEMS), < 10 µg/√Hz (high-performance lab grade). |

Table 2: Parameter Interdependencies and Trade-offs

| Parameter Pair | Interaction | Research Implication |

|---|---|---|

| Range & Bit Depth | Effective Resolution = (2 × Range) / (2^Bit Depth). A wider range with the same bit depth yields a larger Least Significant Bit (LSB), reducing amplitude precision. | Selecting an unnecessarily wide range diminishes the ability to resolve subtle drug-induced changes in movement magnitude. |

| Sample Rate & Noise | Total Integrated Noise = Noise Density × √(fs × 0.5). Higher sample rates integrate noise over a wider bandwidth, increasing total noise in the time-domain signal. | Oversampling without appropriate filtering increases noise, potentially burying low-SNR behavioural features. |

| Bit Depth & Noise Floor | The ideal system has an LSB smaller than the noise floor. A higher bit depth is wasted if the electrical noise is greater than 1 LSB. | Using a 24-bit ADC with a high-noise sensor provides no real benefit; resources should first be allocated to lowering noise. |
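As a worked illustration of the trade-offs in Table 2, the short sketch below computes the LSB size and the total integrated noise for a few configurations. The function names are illustrative, and the noise bandwidth is assumed to equal the Nyquist frequency (fs/2) with a flat noise spectrum, as in the table.

```python
import math

def lsb_size_g(range_g: float, bits: int) -> float:
    """Smallest acceleration step (in g) for a +/-range_g sensor digitized to `bits` bits."""
    return (2.0 * range_g) / (2 ** bits)

def integrated_noise_g(noise_density_ug_rthz: float, fs_hz: float) -> float:
    """Approximate RMS noise (in g), assuming bandwidth = fs/2 and white noise."""
    return noise_density_ug_rthz * 1e-6 * math.sqrt(fs_hz * 0.5)

# Compare the step size across ranges at a fixed bit depth
for range_g in (2, 8, 16):
    print(f"+/-{range_g} g, 16-bit: LSB = {lsb_size_g(range_g, 16) * 1e6:.1f} ug")

# Compare against the RMS noise of a 100 ug/sqrt(Hz) sensor sampled at 100 Hz
print(f"Integrated noise: {integrated_noise_g(100, 100) * 1e6:.0f} ug RMS")
```

For a ±2 g, 16-bit configuration the LSB is about 61 µg, while a 100 µg/√Hz sensor sampled at 100 Hz integrates to roughly 707 µg RMS. In that case the noise, not the bit depth, limits resolution.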

Experimental Protocols for Specification Validation

Protocol 1: Empirical Determination of Usable Bandwidth and Sample Rate

Objective: To establish the minimum required sample rate for a specific behavioural assay without aliasing. Materials: High-sample-rate reference accelerometer (≥2kHz), animal subject or behavioural rig, data acquisition system, spectral analysis software (e.g., MATLAB, Python). Method:

- Mount the reference accelerometer securely to the subject or relevant part of the experimental apparatus.

- Record data during a representative experiment (e.g., rodent open-field exploration, primate reaching) at the reference sensor's maximum rate.

- Compute the Power Spectral Density (PSD) for the recorded data across all axes.

- Identify the frequency (f_max) at which the signal power falls below the sensor's noise floor or a pre-defined threshold (e.g., -40 dB from peak power).

- The minimum non-aliasing sample rate is fs_min = 2.5 × f_max (providing a safety margin beyond Nyquist).

- Validate by downsampling the original data to the proposed fs_min and comparing time-domain features (e.g., peak amplitudes, zero-crossings) with the anti-alias filtered original.
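The PSD and threshold steps of Protocol 1 can be sketched as follows, using Welch's method from SciPy. The function name, the default segment length, and the choice to take f_max as the highest frequency bin still above the threshold are assumptions made for illustration.

```python
import numpy as np
from scipy.signal import welch

def estimate_fs_min(x, fs, drop_db=40.0, margin=2.5, nperseg=4096):
    """Return (f_max, fs_min) for one axis of a high-rate reference recording.

    f_max is the highest frequency whose PSD is within `drop_db` of the peak;
    fs_min applies the safety margin from the protocol (2.5 x f_max).
    """
    f, pxx = welch(x, fs=fs, nperseg=min(nperseg, len(x)))
    threshold = pxx.max() * 10 ** (-drop_db / 10)
    f_max = f[np.flatnonzero(pxx >= threshold)[-1]]
    return float(f_max), float(margin * f_max)

# Synthetic check: 5 Hz and 30 Hz components recorded at 2 kHz
fs = 2000
t = np.arange(0, 20, 1 / fs)
rng = np.random.default_rng(0)
x = np.sin(2 * np.pi * 5 * t) + 0.5 * np.sin(2 * np.pi * 30 * t)
x += 1e-4 * rng.standard_normal(t.size)
f_max, fs_min = estimate_fs_min(x, fs)
print(f"f_max = {f_max:.1f} Hz, fs_min = {fs_min:.1f} Hz")
```

In practice the estimate should be repeated per axis and the largest f_max used, since the protocol asks for the PSD across all axes.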

Protocol 2: Dynamic Range Calibration and Clipping Detection

Objective: To select an optimal dynamic range that captures all relevant accelerations without clipping or sacrificing excessive resolution. Materials: Programmable-range accelerometer, calibration shaker table or known-angle tilt jig, data acquisition software. Method:

- Secure the accelerometer to the calibration apparatus.

- Set the accelerometer to its widest available range (e.g., ±16g).

- Execute the full range of motions expected in the experiment using the shaker/tilt jig. Record data.

- Plot a histogram of the recorded acceleration values. Identify the absolute maximum acceleration (|a_max|).

- Calculate the optimal range as: Selected Range ≥ |a_max| × 1.2 (20% headroom).

- Reconfigure the sensor to this new range and repeat a subset of motions to confirm no clipping.

- Clipping Detection Algorithm: In subsequent experiments, flag sequences where the absolute acceleration equals the maximum digital value for consecutive samples (e.g., >5 ms), indicating sustained saturation.
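A minimal sketch of the clipping detection step is shown below. It assumes the signal has already been converted to g and that saturation shows up as samples at (or within a small tolerance of) the configured full-scale value; the function name and the tolerance are illustrative.

```python
import numpy as np

def find_clipped_runs(acc_g, full_scale_g, fs, min_duration_s=0.005, tol=1e-3):
    """Return (start, stop) sample indices of runs saturated for >= min_duration_s.

    `stop` is exclusive. A sample counts as saturated when its absolute value
    reaches the full-scale value (within a relative tolerance `tol`).
    """
    saturated = np.abs(np.asarray(acc_g, float)) >= full_scale_g * (1.0 - tol)
    # Pad with False so that runs touching either end are still closed
    padded = np.concatenate(([False], saturated, [False]))
    edges = np.diff(padded.astype(int))
    starts = np.flatnonzero(edges == 1)
    stops = np.flatnonzero(edges == -1)
    min_len = max(1, int(round(min_duration_s * fs)))
    return [(int(s), int(e)) for s, e in zip(starts, stops) if e - s >= min_len]

# Example: a 10 ms saturation is flagged, a 2 ms one is ignored (fs = 1 kHz)
signal = np.zeros(100)
signal[10:20] = 16.0
signal[50:52] = -16.0
print(find_clipped_runs(signal, full_scale_g=16.0, fs=1000))
```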

Protocol 3: Quantifying System Noise Floor and Effective Bits

Objective: To measure the real-world noise characteristics of the data acquisition system and derive the Effective Number of Bits (ENOB). Materials: Accelerometer, shielded connection cable, Faraday cage or very stable platform, high-resolution DAQ system. Method:

- Place the accelerometer in a Faraday cage or on a mechanically isolated, stable surface to minimize environmental vibration and EMI.

- Record data for a minimum of 60 seconds at the intended sample rate and range for the experiment.

- Compute the time-domain standard deviation (σ) of the signal from all axes. This represents the total integrated noise.

- Calculate the Noise Density approximation: Noise Density ≈ σ / √(fs × 0.5), reported in g/√Hz.

- Calculate the Effective Number of Bits (ENOB): ENOB = log2( (2 × Range) / (σ × √12) ). This value, always less than the ADC's nominal bit depth, represents the true resolution after accounting for noise.
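The calculations in Protocol 3 can be expressed compactly as below. This is a sketch for a single axis of a static recording already expressed in g; the function name is hypothetical, and the noise bandwidth is assumed to be fs/2 as in the protocol.

```python
import numpy as np

def noise_metrics(static_g, fs, range_g):
    """Return (sigma, noise_density, enob) for one axis of a static recording.

    sigma         : time-domain standard deviation (g RMS)
    noise_density : sigma / sqrt(fs / 2), in g/sqrt(Hz)
    enob          : log2((2 * range) / (sigma * sqrt(12)))
    """
    sigma = float(np.std(static_g))
    density = sigma / np.sqrt(fs * 0.5)
    enob = float(np.log2((2.0 * range_g) / (sigma * np.sqrt(12.0))))
    return sigma, float(density), enob

# Example: simulated 60 s static recording at 100 Hz on a +/-2 g range
rng = np.random.default_rng(1)
static = 1.0 + 500e-6 * rng.standard_normal(6000)  # 1 g offset plus noise
sigma, density, enob = noise_metrics(static, fs=100, range_g=2.0)
print(f"sigma = {sigma * 1e6:.0f} ug, density = {density * 1e6:.1f} ug/rtHz, ENOB = {enob:.1f}")
```

Because the standard deviation ignores the mean, the 1 g gravity offset of a static axis does not affect the result.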

Visualization of Data Specification Logic and Workflow

Title: Decision Flowchart for Accelerometer Data Specification Selection

Title: How Data Specifications Constrain the Acquisition Pipeline

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials for Accelerometer-Based Behavioural Research

| Item / Solution | Function in Research | Example Product / Specification |

|---|---|---|

| High-Performance MEMS Accelerometer | Core sensor for capturing tri-axial acceleration. Must offer programmable sample rate, range, and low noise. | Analog Devices ADXL357 (low-noise: 20 µg/√Hz), STMicroelectronics IIS3DWB (high-rate: >3 kHz). |

| Programmable Data Acquisition (DAQ) System | Provides precise timing, analog front-end, ADC, and digital communication for sensor data. | National Instruments USB-6000 Series, Texas Instruments ADS131M04 (24-bit ADC). |

| Calibration & Validation Apparatus | Provides known accelerations for sensor calibration and protocol validation. | Precision tilt stage (±0.1°), calibrated vibration shaker table (with known frequency/amplitude). |

| Signal Processing Software Suite | Enables spectral analysis, filtering, feature extraction, and algorithm development. | MATLAB with Signal Processing Toolbox, Python (SciPy, NumPy, Pandas). |

| Shielded Enclosures & Cabling | Minimizes electromagnetic interference (EMI) that can corrupt low-amplitude signals, critical for noise floor measurements. | Faraday cage, coaxial cables with shielding, ferrite beads. |

| Behavioural Rig Mounting Solutions | Secure, stable, and minimally intrusive mounting of sensors to subjects (animal or human) or apparatus. | Miniature enclosures, medical-grade adhesives, lightweight harnesses, screw mounts. |

| Reference Sensor (Gold Standard) | A higher-specification sensor used to validate the performance of the primary experimental system. | Piezoelectric accelerometers (e.g., PCB Piezotronics), optical motion capture systems (e.g., Vicon). |

The Extraction Pipeline: Methodologies for Deriving Behavioral Features from Accelerometer Data

Within a broader thesis on accelerometer data processing and feature extraction for behavioral research, robust preprocessing is foundational. For applications in human movement analysis, pharmacodynamics studies, or digital biomarker discovery in drug development, the raw signal from inertial measurement units (IMUs) is contaminated by sensor errors and the constant gravitational component. This article details the essential preprocessing steps—calibration, filtering, and gravity removal—required to transform raw acceleration into clean, biomechanically meaningful data for subsequent feature extraction.

Sensor Calibration

Raw accelerometer data suffers from systematic errors: bias (offset) and scale factor (sensitivity) inaccuracies. Calibration is the process of estimating and correcting these errors.

Key Parameters & Data

Systematic errors are characterized as:

- Bias (Offset): Non-zero output when measuring zero acceleration.

- Scale Factor: Deviation from the ideal sensitivity (e.g., the output per g differs from the nominal LSB/g value in the datasheet).

- Non-Orthogonality: Misalignment of sensor axes.

Table 1: Typical Accelerometer Error Characteristics Pre-Calibration

| Error Type | Typical Range (Low-cost IMU) | Impact on Raw Signal |

|---|---|---|

| Bias | ±50 mg | Constant offset on each axis |

| Scale Factor Error | ±3% of full scale | Incorrect amplitude of measured acceleration |

| Cross-Axis Sensitivity | ±2% | Acceleration on one axis leaks to another |

Experimental Protocol: Six-Position Static Calibration

This is the standard method for tri-axial accelerometers.

Materials:

- IMU/device with accelerometer.

- Precision leveling jig or certified flat surface.

- Data acquisition system (e.g., laptop with Bluetooth/UART).

Procedure:

- Fixture Preparation: Mount the IMU securely to the calibration jig, ensuring known alignment between IMU axes and jig planes.

- Data Collection: Orient the IMU so each primary axis (+X, -X, +Y, -Y, +Z, -Z) points directly downwards, parallel to the gravity vector.

- Recording: For each of the 6 static positions, record at least 5 seconds of stable data at a sufficient sampling rate (e.g., 100 Hz).

- Model Fitting: The raw sensor output \( S \) is modeled as \( S = T \cdot A_{true} + B \), where \( A_{true} \) is the known reference acceleration in each position (±1 g on the axis aligned with gravity, 0 on the other two), \( B \) is the bias vector, and \( T \) is a 3 × 3 transformation matrix containing scale and cross-axis terms. Use least-squares estimation to solve for \( T \) and \( B \).

- Correction: Apply the inverse transformation to all future data: \( A_{corrected} = T^{-1} \cdot (S - B) \)
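The model-fitting and correction steps can be sketched with an ordinary least-squares solve. The example assumes the affine model S = T·A_true + B implied by the correction formula, takes the mean reading of each static position as input, and uses illustrative function names and an assumed position order (+X, -X, +Y, -Y, +Z, -Z).

```python
import numpy as np

# Reference gravity vectors (in g) for the six positions, one row per position
REF = np.array([[1, 0, 0], [-1, 0, 0],
                [0, 1, 0], [0, -1, 0],
                [0, 0, 1], [0, 0, -1]], dtype=float)

def fit_calibration(mean_readings, ref=REF):
    """Estimate T (3x3) and B (3,) from the mean reading of each static position."""
    # Row form of the model: S_row = A_row @ T.T + B, so append a column of ones
    design = np.hstack([ref, np.ones((ref.shape[0], 1))])
    coef, *_ = np.linalg.lstsq(design, mean_readings, rcond=None)
    return coef[:3].T, coef[3]

def apply_calibration(raw, T, B):
    """Apply A = T^-1 (S - B) to an (n_samples, 3) array of raw readings."""
    return np.linalg.solve(T, (np.asarray(raw, float) - B).T).T

# Synthetic check with known scale, cross-axis, and bias errors
T_true = np.array([[1.02, 0.01, 0.0], [0.0, 0.98, -0.015], [0.005, 0.0, 1.01]])
B_true = np.array([0.03, -0.02, 0.05])
readings = REF @ T_true.T + B_true
T_est, B_est = fit_calibration(readings)
corrected = apply_calibration(readings, T_est, B_est)
```

Six positions give 18 equations for 12 unknowns, so the fit is overdetermined and tolerates some measurement noise.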

Filtering: High-pass & Low-pass

Filtering isolates the frequency components of interest: low-pass filtering removes high-frequency noise, while high-pass filtering removes low-frequency drift.

Quantitative Filter Design Criteria

The choice of cutoff frequency is critical and depends on the biomechanical activity.

Table 2: Recommended Filter Cutoff Frequencies for Human Movement

| Activity Type | Frequency Band of Interest | Recommended Low-pass Cutoff (Hz) | Recommended High-pass Cutoff (Hz) |

|---|---|---|---|

| Gross Motor (walking, running) | 0.1-20 Hz | 10-20 | 0.1-0.5 |

| Fine Motor (tremor, posture) | 0.5-25 Hz | 15-25 | 0.5-1.0 |

| Impact/Vibration Detection | 5-50+ Hz | 50-100 | 5.0 |

| Drift Removal (for gravity) | N/A | N/A | <0.1 |

Experimental Protocol: Implementing a 4th Order Butterworth Filter

A Butterworth filter provides a maximally flat passband, preferred for preserving signal shape.

Materials:

- Calibrated acceleration time-series data.

- Scientific computing environment (e.g., Python SciPy, MATLAB).

Procedure (Dual-Pass for Zero Phase Distortion):

- Sampling Rate: Determine the sampling frequency \( f_s \) of your data.

- Cutoff Specification: Select the cutoff frequency \( f_c \) based on Table 2. Normalize it by the Nyquist frequency: \( W_n = f_c / (0.5 \cdot f_s) \).

- Filter Design: Design a 4th-order Butterworth filter. For a low-pass: b, a = butter(N=4, Wn=Wn_low, btype='low', analog=False). For a high-pass, use btype='high'.

- Application: Apply the filter using the filtfilt(b, a, data) function. This forward-backward filtering ensures zero phase lag, which is crucial for temporal analysis. Because the filter is applied twice, its magnitude response is squared (about -6 dB at the cutoff instead of -3 dB).

- Validation: Plot the frequency spectrum of the signal before and after filtering to confirm attenuation in the stopband.
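The procedure above maps directly onto SciPy. The wrapper below is a minimal sketch: the function name and default arguments are illustrative, while butter and filtfilt are the SciPy functions named in the protocol.

```python
import numpy as np
from scipy.signal import butter, filtfilt

def butter_zero_phase(data, fs, cutoff_hz, btype="low", order=4):
    """Zero-phase Butterworth filter applied along the time axis (axis 0)."""
    wn = cutoff_hz / (0.5 * fs)  # normalize by the Nyquist frequency
    b, a = butter(N=order, Wn=wn, btype=btype, analog=False)
    return filtfilt(b, a, data, axis=0)

# Example: keep a 2 Hz movement component, reject 40 Hz interference
fs = 100
t = np.arange(0, 10, 1 / fs)
clean = np.sin(2 * np.pi * 2 * t)
noisy = clean + 0.5 * np.sin(2 * np.pi * 40 * t)
smoothed = butter_zero_phase(noisy, fs, cutoff_hz=10)
```

For very low normalized cutoffs (for example a 0.1 Hz high-pass at a high sample rate), the transfer-function form (b, a) can become numerically fragile. SciPy's second-order-section variants (output='sos' with sosfiltfilt) are a common alternative in that case.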

Gravity Removal

In static or low-dynamic movements, the gravitational component (≈1 g) can dominate the signal, obscuring the smaller dynamic inertial acceleration. Removal is essential for analyzing limb or body segment motion.

Methodology Comparison

Table 3: Gravity Removal Methods Comparison

| Method | Principle | Advantages | Limitations |

|---|---|---|---|

| High-Pass Filtering | Gravity is near-DC (~0 Hz). | Simple, no additional sensors needed. | Can attenuate low-frequency dynamic motion; introduces transient artifacts. |

| Tilt Estimation (Static) | Assume static posture: \( a_{dynamic} = a_{measured} - g \cdot \sin(\theta) \). | Physically intuitive, accurate for static/very slow motion. | Fails under moderate to high dynamics. |

| Sensor Fusion (e.g., Madgwick, Kalman Filter) | Fuse accelerometer with gyroscope data to estimate orientation and subtract gravity vector. | Accurate under moderate dynamics, standard in modern IMU processing. | Requires gyroscope, more computationally complex. |

Experimental Protocol: Gravity Removal via High-Pass Filtering

A simple, effective protocol for studies where low-frequency dynamic content is not of primary interest.

Materials:

- Calibrated and low-pass filtered accelerometer data.

- Scientific computing environment.

Procedure:

- Assess Signal: Plot the raw calibrated signal. The mean value over a stationary period represents the gravitational component.

- Filter Design: Design a high-pass Butterworth filter with a very low cutoff frequency (e.g., 0.1 Hz). The exact value depends on the lowest frequency of biological interest (see Table 2).

- Application: Apply the filter to the data from each axis using the filtfilt method (as in the Butterworth filter protocol above). This removes the constant and very low-frequency components (gravity and drift).

- Output: The resulting signal approximates the dynamic acceleration. Orientation changes faster than the cutoff still leave a gravity residual that appears as motion.
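A minimal sketch of this protocol is given below. It swaps in SciPy's second-order-section form (sosfiltfilt) instead of the (b, a) form, which is an assumption made here for numerical robustness at a 0.1 Hz cutoff; the function name is illustrative.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def remove_gravity_highpass(acc_g, fs, cutoff_hz=0.1, order=4):
    """Zero-phase high-pass filter along the time axis to suppress gravity and drift."""
    sos = butter(order, cutoff_hz, btype="high", fs=fs, output="sos")
    return sosfiltfilt(sos, acc_g, axis=0)

# Example: 1 g static offset plus a 2 Hz, 0.2 g movement component
fs = 50
t = np.arange(0, 60, 1 / fs)
dynamic_true = 0.2 * np.sin(2 * np.pi * 2 * t)
measured = 1.0 + dynamic_true
dynamic_est = remove_gravity_highpass(measured, fs)
```

Start-up transients are largest near the ends of the recording, so it is prudent to discard or down-weight the first and last few seconds when the cutoff is this low.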

Visualization of Workflows

Diagram Title: Accelerometer Data Preprocessing Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials & Computational Tools for Accelerometer Preprocessing

| Item / Solution | Function & Purpose in Preprocessing |

|---|---|

| Precision Leveling Jig | Provides known, stable orientations for the 6-position static calibration protocol. |

| Calibration Software (e.g., IMU_Calibration, OpenIMU) | Implements least-squares algorithms to compute bias, scale, and cross-axis matrices from static data. |

| Digital Signal Processing Library (SciPy Signal, MATLAB DSP Toolbox) | Provides functions for designing and applying Butterworth and other digital filters (butter, filtfilt). |

| Sensor Fusion Algorithm (Madgwick, Mahony, Kalman Filter) | Open-source or commercial libraries that fuse accelerometer, gyroscope, and optionally magnetometer data for accurate orientation and gravity vector estimation. |

| Reference Motion Capture System (Optical, e.g., Vicon) | Serves as gold standard for validating the accuracy of preprocessed accelerometer data in controlled experiments. |

| Controlled Motion Simulator/Shaker Table | Provides ground truth sinusoidal or known trajectory inputs for dynamic validation of filtering and gravity removal. |

This document serves as a detailed protocol for time-domain feature extraction from accelerometer data, a critical component of a broader thesis investigating feature extraction behaviors for quantifying human movement. The reliable computation of Activity Counts, Variance, Signal Magnitude Area (SMA), and Movement Onset Detection is foundational for research applications in human activity recognition, assessing drug efficacy on motor symptoms, and monitoring disease progression in neurological disorders.

Core Feature Definitions & Quantitative Summaries

Feature Definitions and Formulas

- Activity Counts: A composite measure representing the magnitude of movement over a discrete epoch, typically obtained by integrating the rectified and filtered accelerometer signal.

- Variance: Measures the dispersion of the accelerometer signal around its mean, indicating movement intensity and variability.

- Signal Magnitude Area (SMA): The cumulative area under the curve of the absolute accelerometer signal over time, representing the total magnitude of movement.

- Movement Onset Detection: The identification of the precise time point at which a movement initiation occurs, often based on threshold crossings of derived kinematic variables.

Table 1: Standard Parameters for Feature Calculation from Tri-Axial Accelerometer Data

| Feature | Typical Epoch Length | Common Sampling Rate (Hz) | Key Formula/Note | Typical Units |

|---|---|---|---|---|

| Activity Counts | 1-60 seconds | 20-100 | Σ \|band-pass filtered signal\| per epoch | Arbitrary Counts |

| Variance (σ²) | Per epoch or rolling window | ≥20 | (1/N) Σ (x_i - μ)² for each axis (x, y, z) | g² (g: gravity) |

| Signal Magnitude Area (SMA) | Per epoch (e.g., 1s) | ≥20 | ∫(\|a_x\| + \|a_y\| + \|a_z\|) dt over epoch | g·s |

| Movement Onset Latency | Event-based | ≥100 | Time from cue to when signal exceeds threshold (e.g., 5% of max) | Milliseconds (ms) |

Experimental Protocols

Protocol 1: Standardized Feature Extraction Pipeline for Wearable Accelerometer Data

Objective: To reproducibly extract time-domain features from raw tri-axial accelerometer data for behavioral phenotyping. Materials: See Scientist's Toolkit. Procedure:

- Data Acquisition & Preprocessing:

- Mount inertial measurement unit (IMU) securely on body segment of interest (e.g., wrist, lumbar).

- Record raw acceleration at ≥50 Hz. Synchronize with task timestamps.

- Apply the sensor calibration (bias and scale correction), then remove the static gravity component from each axis.

- Apply a band-pass filter (e.g., 0.1-10 Hz Butterworth, 4th order) to remove noise and drift.

- Epoch Segmentation:

- For non-event-driven analysis, segment continuous data into non-overlapping epochs (e.g., 1-second windows).

- Feature Calculation per Epoch/Axis:

- Activity Counts: For each axis, apply a proprietary or standard (e.g., ActiLife) counting algorithm to the filtered signal. Sum counts per epoch.

- Variance: Compute the variance of the raw or filtered signal for each axis within the epoch.

- SMA: For the epoch, numerically integrate the sum of absolute values: SMA = Σ( |a_x| + |a_y| + |a_z| ) * Δt.

- Aggregation: Store computed features (Counts, Var_X/Y/Z, SMA) for each epoch in a structured table.
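The epoch segmentation and feature calculation steps can be sketched as below for preprocessed tri-axial data. The function name is illustrative, and the "count proxy" is simply the per-epoch sum of the rectified signal from Table 1. It is an assumption for illustration and is not the ActiLife algorithm.

```python
import numpy as np

def epoch_features(acc_g, fs, epoch_s=1.0):
    """Per-epoch variance, SMA, and a rectified-sum count proxy.

    acc_g : (n_samples, 3) array of calibrated, filtered acceleration in g.
    Returns a dict of arrays with one row (or value) per non-overlapping epoch.
    """
    acc_g = np.asarray(acc_g, dtype=float)
    n = int(round(epoch_s * fs))                  # samples per epoch
    n_epochs = acc_g.shape[0] // n                # trailing partial epoch is dropped
    epochs = acc_g[: n_epochs * n].reshape(n_epochs, n, 3)
    dt = 1.0 / fs
    return {
        "var": epochs.var(axis=1),                          # (n_epochs, 3), g^2
        "sma": np.abs(epochs).sum(axis=(1, 2)) * dt,        # (n_epochs,), g*s
        "count_proxy": np.abs(epochs).sum(axis=1),          # (n_epochs, 3), arbitrary
    }

# Example: 3 s of constant acceleration at 50 Hz
acc = np.tile([0.1, -0.2, 0.3], (150, 1))
feats = epoch_features(acc, fs=50)
```

The returned arrays can be stacked column-wise into a table with one row per epoch, matching the aggregation step.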

Protocol 2: Movement Onset Detection from Acceleration Data

Objective: To precisely identify the start time of a directed, voluntary movement. Procedure:

- Signal Selection & Conditioning:

- Align data to an external "go cue" (e.g., visual stimulus timestamp).

- Select the axis of primary movement (e.g., anteroposterior for reaching). Alternatively, use the vector magnitude: VM = √(a_x² + a_y² + a_z²).

- Apply a low-pass filter (e.g., 10 Hz cut-off) to reduce high-frequency noise.

- Onset Detection Algorithm (Threshold-Based):

- Define a baseline period (e.g., 500ms before cue). Calculate mean (μ) and standard deviation (σ) of the signal during this period.

- Set a detection threshold: Threshold = μ + n*σ (where n is empirically determined, e.g., 3-5). Alternatively, use a percentage of the peak amplitude (e.g., 5%).

- The movement onset is the first sample after the cue where the signal stays above the threshold for a minimum duration (e.g., 50ms) to avoid false positives from noise.

- Validation: Manually inspect a subset of trials by plotting aligned signals and detected onsets to adjust threshold parameters.
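The threshold-based algorithm above can be sketched as follows for a single conditioned trial. The function name and defaults are illustrative; the baseline window, n·σ threshold, and minimum-duration rule follow the protocol.

```python
import numpy as np

def detect_onset(signal, fs, cue_idx, baseline_s=0.5, n_sigma=3.0, min_duration_s=0.05):
    """Return the sample index of movement onset, or None if no onset is found.

    Onset is the first post-cue sample that begins a run of at least
    `min_duration_s` above mean + n_sigma * std of the pre-cue baseline.
    """
    signal = np.asarray(signal, dtype=float)
    n_base = int(round(baseline_s * fs))
    baseline = signal[max(0, cue_idx - n_base):cue_idx]
    threshold = baseline.mean() + n_sigma * baseline.std()
    min_len = max(1, int(round(min_duration_s * fs)))
    run = 0
    for i, above in enumerate(signal[cue_idx:] > threshold):
        run = run + 1 if above else 0
        if run >= min_len:
            return cue_idx + i - min_len + 1   # first sample of the sustained run
    return None

# Example at 1 kHz: cue at 500 ms, 10 ms noise spike at 600 ms, movement at 700 ms
fs = 1000
trial = 0.01 * np.where(np.arange(1500) % 2 == 0, 1.0, -1.0)
trial[600:610] = 1.0
trial[700:] = 1.0
onset = detect_onset(trial, fs, cue_idx=500)
latency_ms = None if onset is None else 1000.0 * (onset - 500) / fs
```

The brief spike is rejected because it does not last 50 ms, so the detected latency corresponds to the sustained movement.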

Visualized Workflows

Workflow Diagram: Time-Domain Feature Extraction Pipeline

Title: Accelerometer Feature Extraction Pipeline

Workflow Diagram: Movement Onset Detection Logic

Title: Threshold-Based Movement Onset Detection

The Scientist's Toolkit

Table 2: Essential Research Reagents & Solutions for Accelerometer Feature Extraction

| Item Name/Type | Function/Role in Protocol | Example Specifications/Notes |

|---|---|---|

| Inertial Measurement Unit (IMU) | Primary data acquisition device. Measures linear acceleration (and often gyroscope data). | 3-axis accelerometer, ±2g/±4g/±8g dynamic range, sampling rate ≥50Hz. (e.g., Axivity AX3, ActiGraph GT9X). |

| Sensor Calibration Jig | Provides a known orientation for static calibration to remove sensor bias and scale errors. | Precision-machined block to hold IMU at 0°, 90° relative to gravity. |

| Digital Filtering Software Library | Implements signal preprocessing steps (band-pass, low-pass filtering). | SciPy (Python), MATLAB Signal Processing Toolbox, or custom C++/Python implementations of Butterworth filters. |

| Epoch Segmentation Algorithm | Divides continuous time-series data into fixed or variable-length windows for analysis. | Custom script with adjustable window length (e.g., 1s) and overlap (typically 0%). |

| Activity Count Algorithm | Converts raw acceleration to proprietary "counts" for comparison with established literature. | Open-source implementations (e.g., GGIR) or manufacturer SDKs (ActiLife for ActiGraph). |

| Onset Detection Validation Tool | Allows manual verification and adjustment of automated onset detection. | Interactive plotting script (Python matplotlib, MATLAB GUI) to mark true onsets and compare with algorithm output. |

| Time-Series Feature Extraction Library | Provides optimized functions for batch calculation of variance, SMA, etc. | Python: tsfresh. MATLAB: hctsa, Signal Processing Toolbox, custom functions. |

This document presents application notes and protocols for frequency-domain analysis techniques, a core component of a broader thesis on feature extraction from accelerometer data for behavioral phenotyping. The accurate identification of periodic motor behaviors—such as tremors and gait cycles—is critical in preclinical and clinical research for quantifying disease progression (e.g., in Parkinson's disease) and evaluating therapeutic efficacy in drug development.

Foundational Theory & Comparative Analysis

Core Transform Methods

Fast Fourier Transform (FFT): A computationally efficient algorithm for the Discrete Fourier Transform (DFT). It decomposes a time-domain signal into its constituent sinusoidal frequencies, providing a global frequency representation. It is optimal for stationary signals where frequency components are constant over time.

Wavelet Transform (WT): Provides a time-frequency representation by convolving the signal with wavelet functions (mother wavelets) scaled and translated in time. It offers superior temporal localization for non-stationary signals, where frequency components evolve over time (e.g., initiation and termination of a tremor bout).

Table 1: Comparative Analysis of FFT vs. Wavelet Transform for Behavioral Analysis

| Feature | Fast Fourier Transform (FFT) | Continuous Wavelet Transform (CWT) | Discrete Wavelet Transform (DWT) |

|---|---|---|---|

| Time-Frequency Localization | Poor (Global frequency only) | Excellent (Multi-resolution) | Good (Fixed dyadic scales) |

| Stationarity Requirement | Requires stationarity | Handles non-stationary signals | Handles non-stationary signals |

| Primary Output | Power Spectral Density (PSD) | Scalogram (Time-scale map) | Coefficients (Approximation & Details) |

| Computational Complexity | O(N log N) | O(N^2) for naive implementation | O(N) |

| Best Suited For | Identifying dominant, persistent frequencies (e.g., steady-state tremor) | Analyzing transient or evolving oscillations (e.g., gait initiation, changing tremor) | Signal denoising, compression, feature reduction |

| Key Parameter to Choose | Sampling rate, Window size/type | Mother wavelet (e.g., Morlet, Mexican Hat) | Mother wavelet, Decomposition level |

Quantitative Metrics for Behavioral Phenotyping

Table 2: Key Frequency-Domain Features Extracted from Accelerometer Data

| Behavior | Typical Frequency Range | Extracted Feature | Clinical/Research Relevance |

|---|---|---|---|

| Resting Tremor (Parkinsonian) | 4 - 6 Hz | Peak Power Frequency, Band Power (4-6 Hz) | Diagnostic marker, severity quantification |

| Physiological Tremor | 6 - 12 Hz | Band Power Ratio (8-12 Hz / 4-6 Hz) | Differentiate pathological vs. normal |

| Gait Cycle | 0.5 - 3 Hz (Stride Frequency) | Dominant Frequency, Harmonic Ratios | Assess bradykinesia, asymmetry, stability |

| Myoclonus | 1 - 15 Hz (often <5 Hz) | Burst Duration in Time-Freq. domain | Characterize sudden muscle jerks |

Experimental Protocols

Protocol A: FFT-Based Tremor Quantification in Rodent Models

Objective: To quantify tremor power and frequency in a pre-clinical rodent model using tri-axial accelerometer data.

Materials & Setup:

- Implantable or externally secured tri-axial accelerometer (e.g., ±5g range, 100 Hz sampling rate).

- Data acquisition system with time-synchronization.

- Animal restraint or open-field apparatus.

- Signal processing software (Python w/ NumPy/SciPy, MATLAB).

Procedure:

- Data Acquisition: Record accelerometer data (X, Y, Z axes) from the subject at rest for a minimum of 120 seconds. Ensure minimal external manipulation. Sampling rate (fs) ≥ 100 Hz.

- Pre-processing:

a. Detrend: Remove linear trend from each axis signal.

b. Filter: Apply a 4th-order Butterworth band-pass filter (1-15 Hz) to isolate tremor-relevant frequencies.

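c. Vector Magnitude: Calculate the vector magnitude VM = sqrt(x^2 + y^2 + z^2) to obtain a tremor-intensity signal independent of sensor orientation. Because the magnitude rectifies the band-passed signal, an oscillation confined to one axis appears at twice its frequency in VM; where exact peak frequencies matter, compute the PSD per axis and sum the spectra instead.

- Segmentation: Divide the VM signal into 4-second epochs with 50% overlap (Hamming window).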

- FFT Computation: For each epoch: a. Apply the Hamming window. b. Compute the FFT to obtain the complex spectrum. c. Calculate the one-sided Power Spectral Density (PSD) in units of g²/Hz.

- Averaging: Average the PSDs across all epochs to obtain a robust ensemble average PSD.

- Feature Extraction: a. Peak Frequency: Identify frequency bin with maximum power within 4-12 Hz. b. Tremor Band Power: Compute the integral of the PSD within the 4-6 Hz band (for parkinsonian tremor). c. Total Power: Compute integral of PSD from 1-15 Hz. d. Ratio Metric: Calculate (Tremor Band Power) / (Total Power).
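The segmentation, windowed FFT, and averaging steps together amount to Welch's method, so the protocol can be sketched with scipy.signal.welch. The function below is illustrative: its name, argument names, and the use of a simple rectangle-rule sum for band power are assumptions. It operates on whichever one-dimensional signal is chosen (a single axis in the example).

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, detrend, welch

def tremor_features(x, fs, band=(4.0, 6.0), search=(4.0, 12.0), total=(1.0, 15.0)):
    """Peak frequency, tremor band power, total power, and their ratio."""
    x = detrend(np.asarray(x, dtype=float))                  # remove linear trend
    sos = butter(4, total, btype="bandpass", fs=fs, output="sos")
    x = sosfiltfilt(sos, x)                                  # isolate 1-15 Hz
    nper = int(4 * fs)                                       # 4 s epochs
    f, pxx = welch(x, fs=fs, window="hamming", nperseg=nper, noverlap=nper // 2)
    df = f[1] - f[0]

    def power(lo, hi):
        sel = (f >= lo) & (f <= hi)
        return float(pxx[sel].sum() * df)                    # approximate integral

    sel = (f >= search[0]) & (f <= search[1])
    peak_hz = float(f[sel][np.argmax(pxx[sel])])
    band_p, total_p = power(*band), power(*total)
    return {"peak_hz": peak_hz, "band_power": band_p,
            "total_power": total_p, "ratio": band_p / total_p}

# Example: simulated 5 Hz tremor (0.1 g) with measurement noise, 120 s at 100 Hz
fs = 100
t = np.arange(0, 120, 1 / fs)
rng = np.random.default_rng(2)
x = 0.1 * np.sin(2 * np.pi * 5 * t) + 0.01 * rng.standard_normal(t.size)
result = tremor_features(x, fs)
```

With 4-second segments the frequency resolution is 0.25 Hz, which bounds how precisely the peak frequency can be reported.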

Protocol B: Wavelet-Based Gait Cycle Analysis from Wearable Sensors

Objective: To decompose stride patterns and identify gait events (heel-strike, toe-off) from a shank- or waist-mounted accelerometer.

Materials & Setup:

- Wearable inertial measurement unit (IMU) with accelerometer and gyroscope.

- Secure mounting on the lower back (L5) or shank.

- Calibrated motion capture system (for validation; optional).

- Processing software with wavelet toolbox.

Procedure:

- Data Acquisition: Record data during a 2-minute walking task at self-selected speed. fs ≥ 128 Hz.

- Axis Selection: Use the vertical (V) axis acceleration from the lower back or the anterior-posterior (AP) axis from the shank.

- Pre-processing: Apply a low-pass filter (cut-off 20 Hz). Subtract the mean (DC component).

- Continuous Wavelet Transform (CWT): a. Select the Morlet wavelet (complex, good time-frequency balance). b. Set scale parameters to correspond to frequencies of 0.5 Hz to 5 Hz. c. Compute the CWT to obtain a scalogram (time-scale map of coefficient magnitudes).

- Ridge Detection: Identify the dominant frequency ridge in the scalogram corresponding to the fundamental stride frequency.

- Gait Event Detection (from shank AP signal): a. Extract the CWT coefficients at the scale corresponding to ~1-3 Hz (stride band). b. The phase of the complex coefficients can be used to identify maxima/minima corresponding to heel-strike and toe-off.

- Feature Extraction: a. Stride Time: Average time between consecutive heel-strikes. b. Coefficient of Variation (CV): (Std. Dev. of Stride Time / Mean Stride Time) * 100%. c. Symmetry Index: Compare features from left and right limbs.
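To make the CWT and feature steps concrete, the sketch below implements a small complex Morlet transform directly with NumPy and SciPy rather than a wavelet toolbox. The 1/scale normalization, the centre frequency parameter w0 = 6, and all function names are assumptions chosen for illustration; gait event detection from coefficient phase is not shown.

```python
import numpy as np
from scipy.signal import fftconvolve

def morlet_cwt(x, fs, freqs, w0=6.0):
    """Complex Morlet CWT; returns an array of shape (len(freqs), len(x))."""
    x = np.asarray(x, dtype=float)
    out = np.empty((len(freqs), x.size), dtype=complex)
    for k, f in enumerate(freqs):
        s = w0 / (2 * np.pi * f)                    # scale (s) matching frequency f
        half = int(np.ceil(4 * s * fs))             # support of +/- 4 scales
        t = np.arange(-half, half + 1) / fs
        # 1/s normalization keeps the response amplitude comparable across scales
        psi = np.pi ** -0.25 * np.exp(1j * w0 * t / s) * np.exp(-t**2 / (2 * s**2)) / s
        out[k] = fftconvolve(x, psi, mode="same") / fs
    return out

def dominant_frequency(x, fs, freqs):
    """Frequency whose time-averaged scalogram power is largest."""
    power = (np.abs(morlet_cwt(x, fs, freqs)) ** 2).mean(axis=1)
    return float(freqs[np.argmax(power)])

def stride_stats(heel_strike_times_s):
    """Mean stride time (s) and coefficient of variation (%) from heel-strike times."""
    strides = np.diff(np.asarray(heel_strike_times_s, dtype=float))
    mean = strides.mean()
    return float(mean), float(100.0 * strides.std(ddof=1) / mean)

# Example: 30 s of a 1.8 Hz oscillation sampled at 128 Hz
fs = 128
t = np.arange(0, 30, 1 / fs)
freqs = np.arange(0.5, 5.01, 0.1)
f_dom = dominant_frequency(np.sin(2 * np.pi * 1.8 * t), fs, freqs)
```

Averaging power over time gives a single dominant frequency; tracking the per-sample maximum along the frequency axis instead yields the ridge described in the protocol.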

Visualization of Methodologies

Title: FFT-Based Tremor Analysis Workflow

Title: Wavelet-Based Gait Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for Accelerometer-Based Frequency Analysis

| Item Name / Category | Example Product/Specification | Primary Function in Research |

|---|---|---|

| High-Resolution Accelerometer | Tri-axial, ±2g to ±16g range, 100+ Hz sampling | Captures raw kinematic acceleration data with sufficient sensitivity and rate for tremor/gait. |

| Data Logging/Telemetry System | Implantable telemetry (DSI, Kaha Sciences) or wearable logger (Axivity, ActiGraph) | Enables continuous, unrestrained data collection in preclinical or clinical free-moving subjects. |

| Signal Processing Software | Python (SciPy, PyWavelets), MATLAB (Signal Proc. Toolbox, Wavelet Toolbox) | Provides algorithms for FFT, wavelet transforms, filtering, and feature extraction. |

| Validated Animal Model | Genetic or neurotoxin-induced (e.g., 6-OHDA) rodent models of Parkinsonism | Provides a biological system with expressed periodic behaviors (tremor, gait deficits) for study. |

| Motion Capture System (Validation) | High-speed camera (e.g., Noldus EthoVision), force plates | Serves as gold-standard for validating accelerometer-derived gait events and kinematics. |

| Reference Mother Wavelets | Morlet, Daubechies (db4), Mexican Hat | Pre-defined wavelet functions optimized for different signal characteristics (oscillatory, transient). |

| Statistical Analysis Package | R, GraphPad Prism, SPSS | For analyzing extracted feature sets, comparing groups, and assessing drug treatment effects. |

Within the broader thesis on accelerometer data processing and feature extraction for behavioral research, this document details application notes and protocols for identifying discrete, ethologically relevant behaviors. The move from generic activity counts to behavior-specific identification is crucial for preclinical neuroscience and psychopharmacology. Heuristic (rule-based) and pattern-based methods, such as template matching and peak detection, provide a computationally efficient, interpretable bridge between raw triaxial accelerometer data and behavioral phenotypes, enabling higher-content analysis in studies of CNS drug efficacy and safety.

Core Methodological Principles

Heuristic Feature Engineering

Heuristic features are derived from domain knowledge (e.g., the kinematics of a rodent rear). Common features computed from accelerometer streams (X, Y, Z axes) include:

- Signal Vector Magnitude (SVM): SVM = sqrt(X² + Y² + Z²)

- Tilt/Orientation Angles: Calculated relative to gravity using arctan functions.

- Dynamic Acceleration: High-pass filtered SVM to isolate movement from posture.

- Spectral Power: In specific frequency bands (e.g., 0-5 Hz for gross movement, 10-15 Hz for tremors/seizures).

Pattern Recognition Approaches

- Template Matching: A pre-defined acceleration pattern (template) for a target behavior is slid across the data stream, and similarity (e.g., cross-correlation coefficient) is computed at each point. Peaks above a threshold indicate detection.

- Peak Detection: Applied to derived signals (e.g., dynamic acceleration for rearing, periodicity for grooming). Identifies events based on amplitude, prominence, and width thresholds.
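Template matching as described above can be sketched with a sliding normalized cross-correlation (Pearson correlation between the template and each window). The function name is illustrative, and windows with zero variance are assigned a score of 0 by assumption.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def normalized_xcorr(signal, template):
    """Correlation coefficient between `template` and every same-length window.

    Returns an array of length len(signal) - len(template) + 1; index i is the
    score for the window starting at sample i.
    """
    signal = np.asarray(signal, dtype=float)
    template = np.asarray(template, dtype=float)
    windows = sliding_window_view(signal, template.size)
    centered = windows - windows.mean(axis=1, keepdims=True)
    tpl = template - template.mean()
    denom = np.linalg.norm(centered, axis=1) * np.linalg.norm(tpl)
    scores = np.zeros(windows.shape[0])
    np.divide(centered @ tpl, denom, out=scores, where=denom > 0)
    return scores

# Example: a 1.5 s bump (75 samples at 50 Hz) embedded at sample 100
template = np.hanning(75)
stream = np.zeros(500)
stream[100:175] += template
scores = normalized_xcorr(stream, template)
best = int(np.argmax(scores))
```

Detections are then the local maxima of the score that exceed a chosen threshold; scipy.signal.find_peaks with its height and distance arguments is one way to extract them.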

Application Notes: Behavior-Specific Detection Protocols

Rearing (Wall-Rearing in Rodents)

Principle: Characterized by a distinct postural shift from quadrupedal stance to upright position, resulting in a reorientation of the gravity vector on the Y (anteroposterior) and Z (vertical) axes. Protocol:

- Data Acquisition: Triaxial accelerometer sampled at ≥ 50 Hz, mounted on the rodent's dorsal surface (neck/upper back).

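- Preprocessing: Low-pass filter (cutoff 10 Hz) to remove noise. Calculate pitch (angle in the sagittal plane) from Y and Z axes: Pitch = arctan(Y / Z). In code, the two-argument form atan2(Y, Z) avoids division by zero and resolves the quadrant when Z ≤ 0.

- Feature Extraction & Detection: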

- Heuristic: A rear event is declared when the pitch angle exceeds a species-specific threshold (e.g., > 45 degrees) for a minimum duration (e.g., > 0.5s).

- Template Matching: A canonical rear template (a smooth ~1-2s increase and decrease in pitch) is cross-correlated with the pitch signal.

Table 1: Typical Parameters for Rearing Detection from Accelerometer Data

| Parameter | Typical Value | Description |

|---|---|---|

| Sampling Rate | 50-100 Hz | Adequate for capturing posture change. |

| Pitch Threshold | 45-60 degrees | Minimum angle to define upright posture. |

| Minimum Duration | 0.5 seconds | To distinguish from transient head movements. |

| Template Length | 1.5 seconds | Duration of canonical rear template. |

| Cross-Correlation Threshold | 0.7-0.8 | Similarity score for template match. |
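The heuristic rule for rearing, using the parameters in Table 1, can be sketched as follows. The function name is illustrative, and the axis convention (Z reads about +1 g in quadrupedal stance, Y is anteroposterior) is an assumption that must be matched to the actual sensor mounting.

```python
import numpy as np

def detect_rears(y_g, z_g, fs, pitch_thresh_deg=45.0, min_duration_s=0.5):
    """Return (start_s, end_s) for intervals where pitch stays above the threshold.

    y_g, z_g : low-pass filtered anteroposterior and vertical acceleration (g).
    """
    pitch = np.degrees(np.arctan2(y_g, z_g))
    upright = pitch > pitch_thresh_deg
    padded = np.concatenate(([False], upright, [False]))
    edges = np.diff(padded.astype(int))
    starts = np.flatnonzero(edges == 1)
    stops = np.flatnonzero(edges == -1)
    min_len = max(1, int(round(min_duration_s * fs)))
    return [(float(s / fs), float(e / fs))
            for s, e in zip(starts, stops) if e - s >= min_len]

# Example at 50 Hz: a 1.5 s rear at 60 degrees and a 0.2 s head movement
fs = 50
y = np.zeros(500)
z = np.ones(500)
for a, b in ((100, 175), (300, 310)):
    y[a:b] = np.sin(np.radians(60.0))
    z[a:b] = np.cos(np.radians(60.0))
rears = detect_rears(y, z, fs)
```

Only the longer event is reported, because the 0.2 s excursion falls below the minimum duration.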

Self-Grooming (Rodents)

Principle: A structured, rhythmic behavior with cephalocaudal progression. Produces a characteristic periodic signal in the dynamic acceleration. Protocol:

- Data Acquisition: Accelerometer at ≥ 100 Hz (to capture finer movements).

- Preprocessing: Calculate dynamic acceleration (SVM high-pass filtered at 1 Hz). Compute the short-time Fourier transform (STFT) or wavelet transform.

- Feature Extraction & Detection:

- Heuristic/Peak Detection: Identify bouts with high power in the 8-12 Hz band (forepaw strokes). A grooming bout is defined by sustained periodicity (>3s) in this band.

- Pattern Recognition: Use a matched filter designed for the ~10 Hz oscillatory pattern to detect grooming epochs.

Table 2: Parameters for Grooming Detection

| Parameter | Typical Value | Description |

|---|---|---|

| Sampling Rate | ≥100 Hz | Needed to resolve ~10 Hz oscillations. |

| Target Frequency Band | 8-12 Hz | Characteristic of vigorous forepaw strokes. |

| Minimum Bout Duration | 3 seconds | To distinguish from other repetitive movements. |

| Spectral Power Threshold | Subject-specific (Z-score > 2) | To define significant bouts. |
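
One way to realize the band-power heuristic is sketched below. It is an illustration under stated assumptions rather than a reference implementation: the function name `detect_grooming`, the 2nd-order high-pass filter, the 1 s Hann windows with 50% overlap, and the synthetic signal are all introduced here. The z-score is taken over the whole recording, which assumes grooming occupies only a small part of it.

```python
import numpy as np
from scipy.signal import butter, filtfilt, spectrogram


def detect_grooming(acc_xyz, fs, band=(8.0, 12.0), z_thresh=2.0, min_bout_s=3.0):
    """Return (onset_s, offset_s) of bouts with sustained power in `band`."""
    # Signal vector magnitude, then a 1 Hz high-pass to keep the dynamic part
    svm = np.linalg.norm(acc_xyz, axis=1)
    b, a = butter(2, 1.0, btype="high", fs=fs)
    dynamic = filtfilt(b, a, svm)

    # STFT power per frame (window settings are assumptions)
    nperseg = int(fs)
    freqs, times, power = spectrogram(dynamic, fs=fs, window="hann",
                                      nperseg=nperseg, noverlap=nperseg // 2)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    band_power = power[in_band].sum(axis=0)

    # Subject-specific threshold: z-score against this recording
    z = (band_power - band_power.mean()) / band_power.std()
    active = z > z_thresh

    # Keep runs of active frames that span the minimum bout duration
    hop_s = times[1] - times[0]
    edges = np.diff(np.concatenate(([False], active, [False])).astype(int))
    starts, ends = np.flatnonzero(edges == 1), np.flatnonzero(edges == -1)
    return [(float(times[s]), float(times[e - 1])) for s, e in zip(starts, ends)
            if (e - s) * hop_s >= min_bout_s]


# Synthetic recording: gravity on Z, a 5 s bout of 10 Hz strokes, and a 1 s burst
fs = 100
t = np.arange(0, 60, 1 / fs)
rng = np.random.default_rng(0)
acc = rng.normal(0, 0.01, size=(t.size, 3))
acc[:, 2] += 1.0
groom = (t >= 20) & (t < 25)
acc[groom, 2] += 0.3 * np.sin(2 * np.pi * 10 * t[groom])
burst = (t >= 40) & (t < 41)
acc[burst, 2] += 0.3 * np.sin(2 * np.pi * 10 * t[burst])
bouts = detect_grooming(acc, fs)
```

Reported times are the centers of the first and last active STFT frames, so bout edges are resolved only to about one hop (0.5 s here).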

Generalized Tonic-Clonic Seizures

Principle: Characterized by high-amplitude, high-frequency, rhythmic convulsions affecting the whole body.

Protocol:

- Data Acquisition: High-sampling rate (≥ 128 Hz) is critical.

- Preprocessing: Calculate overall movement intensity from the signal vector magnitude (SVM), or focus on the axes of greatest variance.

- Feature Extraction & Detection:

- Heuristic/Peak Detection: Detect epochs where signal amplitude exceeds 5-10x baseline RMS for sustained periods (>2s). High spectral edge frequency (>20 Hz) is also indicative.

- Template Matching: Less common due to seizure variability, but can be used for stereotyped seizures in specific models.

Table 3: Parameters for Seizure Detection

| Parameter | Typical Value | Description |

|---|---|---|

| Sampling Rate | ≥128 Hz | Essential for capturing high-frequency components. |

| Amplitude Multiplier | 5-10 x Baseline RMS | Threshold for high-amplitude convulsive activity. |

| Minimum Duration | 2 seconds | To exclude myoclonic jerks. |

| High-Frequency Power | Significant power > 20 Hz | Indicates clonic phase. |
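
The amplitude and spectral-edge criteria can be sketched as below. This is a hedged illustration: the function names, the 0.5 s sliding RMS window, the 95% spectral edge definition, and the synthetic 25 Hz burst are assumptions made for the example, not values taken from the protocol.

```python
import numpy as np


def detect_convulsions(signal, fs, baseline_rms, multiplier=5.0, min_dur_s=2.0, win_s=0.5):
    """Return (onset_s, offset_s) where sliding RMS exceeds multiplier x baseline."""
    win = max(1, int(round(win_s * fs)))
    # Sliding RMS: moving average of the squared signal, then square root
    rms = np.sqrt(np.convolve(signal ** 2, np.ones(win) / win, mode="same"))
    above = rms > multiplier * baseline_rms
    edges = np.diff(np.concatenate(([False], above, [False])).astype(int))
    starts, ends = np.flatnonzero(edges == 1), np.flatnonzero(edges == -1)
    min_len = int(round(min_dur_s * fs))
    return [(s / fs, e / fs) for s, e in zip(starts, ends) if e - s >= min_len]


def spectral_edge_frequency(segment, fs, edge=0.95):
    """Frequency below which the fraction `edge` of spectral power lies."""
    power = np.abs(np.fft.rfft(segment - np.mean(segment))) ** 2
    freqs = np.fft.rfftfreq(len(segment), d=1 / fs)
    cumulative = np.cumsum(power) / np.sum(power)
    return freqs[np.searchsorted(cumulative, edge)]


# Synthetic dynamic-acceleration trace: quiet baseline, a 5 s burst, a 0.3 s jerk
fs = 128
t = np.arange(0, 30, 1 / fs)
rng = np.random.default_rng(1)
x = rng.normal(0, 0.02, t.size)
clonic = (t >= 10) & (t < 15)
x[clonic] += np.sin(2 * np.pi * 25 * t[clonic])
jerk = (t >= 20) & (t < 20.3)
x[jerk] += np.sin(2 * np.pi * 25 * t[jerk])

baseline_rms = np.sqrt(np.mean(x[: 5 * fs] ** 2))  # first 5 s taken as baseline
events = detect_convulsions(x, fs, baseline_rms)
onset, offset = events[0]
sef = spectral_edge_frequency(x[int(onset * fs): int(offset * fs)], fs)
```

Because the RMS window is centered, detected onsets and offsets extend roughly half a window beyond the true burst. The brief jerk is excluded by the minimum-duration rule.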

Experimental Protocol: Validating Detected Behaviors

Title: Protocol for Video-Verification of Accelerometer-Detected Behaviors.

Objective: To establish ground truth and calculate the precision, recall, and F1-score for the accelerometer-based detection algorithm.

Materials: Instrumented rodent (accelerometer + transmitter), video recording system, behavioral scoring software (e.g., BORIS, EthoVision), data synchronization unit.

Procedure:

- Synchronization: Generate a shared synchronization marker at trial start and end (e.g., an LED flash visible in the video feed, paired with a simultaneous event registered in the accelerometer data stream).

- Recording: Record a 30-60 minute baseline and drug-testing session with concurrent video and accelerometry.

- Blinded Scoring: A trained human observer, blinded to algorithm output, reviews video and annotates onset/offset times for all target behaviors (rearing, grooming, seizures) using scoring software.

- Algorithm Output: Run the template matching/peak detection pipeline on the synchronized accelerometer data to generate a list of detected event timestamps.

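- Validation Analysis: Define a temporal tolerance window (e.g., ±0.5s). An algorithm detection is a True Positive (TP) if it falls within the tolerance window of a human-scored event. A detection with no matching scored event is a False Positive (FP), and a scored event with no matching detection is a False Negative (FN). Calculate: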

- Precision = TP / (TP + FP)

- Recall = TP / (TP + FN)

- F1-Score = 2 * (Precision * Recall) / (Precision + Recall)
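
The validation analysis can be sketched as follows. The greedy nearest-event matching, in which each human-scored event can be matched at most once, is one reasonable reading of the protocol rather than the only one. The function name `score_detections` and the example timestamps are hypothetical.

```python
def score_detections(detected, scored, tol_s=0.5):
    """Match detections to scored events one-to-one and compute the metrics."""
    detected, scored = sorted(detected), sorted(scored)
    used = set()
    tp = 0
    for d in detected:
        # Nearest still-unmatched scored event inside the tolerance window
        candidates = [(abs(d - s), i) for i, s in enumerate(scored)
                      if i not in used and abs(d - s) <= tol_s]
        if candidates:
            used.add(min(candidates)[1])
            tp += 1
    fp = len(detected) - tp
    fn = len(scored) - tp
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"tp": tp, "fp": fp, "fn": fn,
            "precision": precision, "recall": recall, "f1": f1}


# Hypothetical onset times in seconds
algorithm_onsets = [10.2, 30.0, 55.4, 80.0]
human_onsets = [10.0, 55.0, 70.0]
result = score_detections(algorithm_onsets, human_onsets)
# Two matches, two unmatched detections, one missed event
```

One-to-one matching keeps several detections near a single scored event from all being counted as true positives.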

Diagrams

Workflow for Behavior Identification

Validation Protocol for Detected Behaviors

The Scientist's Toolkit

Table 4: Essential Research Reagents & Solutions for Accelerometer-Based Behavior Analysis

| Item | Function/Application |

|---|---|

| Triaxial Accelerometer/IMU | Core sensor measuring acceleration in 3 axes (X,Y,Z). Often combined with gyroscope (for rotation) in an Inertial Measurement Unit (IMU). |

| Miniature Telemetry Transmitter | Implantable or backpack device for wireless transmission of accelerometer data, allowing unrestricted movement in home cage. |

| Data Acquisition System | Hardware/software (e.g., from Data Sciences Int., Starr Life Sciences) to receive, timestamp, and store telemetry signals. |

| Video Tracking Software | Software (e.g., Noldus EthoVision, BORIS) for synchronized video recording and manual/automated behavioral scoring to create ground truth data. |

| Signal Processing Library | Python (SciPy, NumPy) or MATLAB toolboxes for implementing filters, FFT, cross-correlation, and peak detection algorithms. |

| Statistical Analysis Software | Software (e.g., R, GraphPad Prism) for performing validation statistics (precision/recall) and group-level behavioral analysis. |

| Time Synchronization Tool | Physical (LED) or software-based tool to align video frames and accelerometer samples with millisecond precision. |

Within the broader thesis on accelerometer data processing for feature extraction in behavioral research, this document provides Application Notes and Protocols for implementing deep learning (DL) approaches. The move from manual scoring and traditional machine learning (ML) to DL marks a substantial change in practice, enabling automated, high-throughput, and nuanced classification of animal and human behaviors from raw sensor data. This is particularly important in preclinical drug development, where objective, quantitative behavioral phenotyping is essential for evaluating therapeutic efficacy and safety.

Core Concepts: From Feature Engineering to Learned Representations

Table 1: Comparison of Traditional ML vs. Deep Learning for Behavioral Classification

| Aspect | Traditional Machine Learning (e.g., SVM, Random Forest) | Deep Learning (e.g., CNN, LSTM) |

|---|---|---|

| Input Data | Hand-crafted features (e.g., mean, variance, FFT coefficients). | Raw or minimally pre-processed accelerometer time-series. |

| Feature Extraction | Manual, domain-expert driven. Computed per data segment. | Automatic, learned hierarchically by the model. |

| Development Workflow | Feature computation -> Feature selection -> Model training. | End-to-end training on labeled raw data. |

| Data Efficiency | Often effective with smaller datasets. | Typically requires larger, labeled datasets. |

| Model Transparency | High; features are human-interpretable. | Lower ("black box"); requires interpretation tools. |

| Typical Performance | Good for distinct, predefined behaviors. | Superior for complex, subtle, or novel behavior patterns. |

Application Notes

Data Acquisition & Preprocessing Protocol

Objective: To standardize the collection and initial processing of tri-axial accelerometer data for DL model input.

Protocol:

- Device Calibration: Calibrate accelerometers (e.g., from Biobserve, TSE Systems, or custom collars) against gravity and known orientations prior to each study.

- Sampling Rate: Sample at a minimum of 50 Hz (mice/rats) or 30 Hz (larger animals/humans). For very dynamic behaviors (e.g., head flicks), ≥ 100 Hz is recommended.

- Data Segmentation (Windowing):

- Use a sliding window approach to create samples for model input.

- Window Length: 2-5 seconds is common for many behaviors (e.g., grooming, rearing).

- Overlap: 50-75% overlap ensures that behaviors spanning a window boundary are captured whole in at least one window, and it also increases the number of training samples.

- Preprocessing:

- Filtering: Apply a low-pass filter (e.g., 20 Hz cutoff, which must lie below the Nyquist frequency of half the sampling rate) to remove high-frequency noise not related to behavior.

- Normalization: Normalize each axis per recording session:

x_norm = (x - μ_session) / σ_session.

- Labeling: Synchronize video with accelerometer data. Have expert observers apply an established ethogram, using annotation software such as BORIS (Behavioral Observation Research Interactive Software), to assign a ground-truth label to each window. Video-based pose-estimation tools such as DeepLabCut can support this annotation.
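
The filtering, normalization, and windowing steps above can be sketched together. This is a minimal example under assumptions: the function names, the 4th-order Butterworth filter, and the random stand-in data are introduced here for illustration.

```python
import numpy as np
from scipy.signal import butter, filtfilt


def preprocess_session(acc, fs, cutoff_hz=20.0):
    """Low-pass filter and z-score one session. acc has shape (samples, 3)."""
    # cutoff_hz must be below fs / 2, otherwise butter() raises an error
    b, a = butter(4, cutoff_hz, btype="low", fs=fs)
    filtered = filtfilt(b, a, acc, axis=0)
    # x_norm = (x - mu_session) / sigma_session, computed per axis
    return (filtered - filtered.mean(axis=0)) / filtered.std(axis=0)


def sliding_windows(x, fs, win_s=2.0, overlap=0.5):
    """Cut a session into overlapping windows of shape (n, timesteps, axes)."""
    win = int(round(win_s * fs))
    step = max(1, int(round(win * (1 - overlap))))
    if len(x) < win:
        return np.empty((0, win, x.shape[1]))
    n = 1 + (len(x) - win) // step
    return np.stack([x[i * step: i * step + win] for i in range(n)])


# Stand-in data: 60 s of random tri-axial samples at 50 Hz
fs = 50
rng = np.random.default_rng(0)
acc = rng.normal(size=(60 * fs, 3)) + np.array([0.0, 0.0, 1.0])
normalized = preprocess_session(acc, fs)
windows = sliding_windows(normalized, fs, win_s=2.0, overlap=0.5)
print(windows.shape)  # (59, 100, 3)
```

Overlapping windows from the same recording share samples, which is why the train/validation/test split should be made by animal or subject rather than by window.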

Deep Learning Architecture Selection & Training Protocol

Objective: To train a DL model that maps raw accelerometer windows to behavioral class probabilities.

Protocol:

- Architecture Choice:

- Convolutional Neural Networks (CNNs): Ideal for capturing local patterns and translational invariance in the signal. Use 1D convolutions along the time axis.

- Recurrent Neural Networks (RNNs/LSTMs): Ideal for modeling temporal sequences and long-range dependencies (e.g., behavior bouts).

- Hybrid (CNN-LSTM): CNNs extract local features, which are then fed to an LSTM to model temporal dynamics; this combination is often state-of-the-art.

- Model Definition (Example using a 1D CNN):

- Input Layer: Shape = (window_length * sampling_rate, 3) for (timesteps, axes), with window_length in seconds.

- Convolutional Blocks: 2-3 blocks of: 1D Convolution (filters=64, kernel=5) -> Batch Normalization -> ReLU Activation -> MaxPooling (pool size=2).

- Classifier Head: Global Average Pooling -> Dense Layer (128 units, ReLU) -> Dropout (0.5) -> Output Dense Layer (units = number of behaviors, softmax).

- Training:

- Loss Function: Categorical Cross-Entropy for mutually exclusive behaviors.

- Optimizer: Adam with default parameters.

- Validation: Use a strictly isolated animal/subject-wise split (e.g., 70% train, 15% validation, 15% test) to prevent data leakage and ensure generalizability.

- Regularization: Employ Dropout, L2 weight decay, and extensive data augmentation (jitter, scaling, time-warping) to prevent overfitting.

- Evaluation: Report accuracy, precision, recall, and F1-score per behavior class on the held-out test set. Confusion matrices are essential.
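
To make the tensor shapes of the example 1D CNN concrete, the sketch below runs a single forward pass in plain NumPy with random, untrained weights. It is an illustration only. In practice the model would be defined and trained in a deep learning framework. The helper names, the weight initialization, and the use of per-window statistics in place of learned batch-normalization statistics are assumptions made here.

```python
import numpy as np

rng = np.random.default_rng(0)


def conv1d(x, w, b):
    """'Same'-padded 1D convolution. x: (T, C_in), w: (K, C_in, C_out)."""
    k = w.shape[0]
    xp = np.pad(x, ((k // 2, k // 2), (0, 0)))
    taps = np.stack([xp[i:i + x.shape[0]] for i in range(k)], axis=1)  # (T, K, C_in)
    return np.einsum("tkc,kco->to", taps, w) + b


def batch_norm(x, mean, var, eps=1e-5):
    return (x - mean) / np.sqrt(var + eps)


def max_pool(x, size=2):
    t = (x.shape[0] // size) * size
    return x[:t].reshape(-1, size, x.shape[1]).max(axis=1)


def init_params(n_classes, n_blocks=3, filters=64, kernel=5, in_ch=3):
    params, c_in = {"conv": []}, in_ch
    for _ in range(n_blocks):
        w = rng.normal(0, np.sqrt(2 / (kernel * c_in)), (kernel, c_in, filters))
        params["conv"].append((w, np.zeros(filters)))
        c_in = filters
    params["dense"] = (rng.normal(0, np.sqrt(2 / filters), (filters, 128)), np.zeros(128))
    params["out"] = (rng.normal(0, np.sqrt(1 / 128), (128, n_classes)), np.zeros(n_classes))
    return params


def forward(window, params):
    """Map one (timesteps, 3) window to class probabilities."""
    h = window
    for w, b in params["conv"]:
        h = conv1d(h, w, b)
        # Stand-in for learned running statistics: normalize with this window's own
        h = batch_norm(h, h.mean(axis=0), h.var(axis=0))
        h = max_pool(np.maximum(h, 0), 2)            # ReLU, then pooling
    h = h.mean(axis=0)                               # global average pooling
    wd, bd = params["dense"]
    h = np.maximum(h @ wd + bd, 0)
    # Dropout(0.5) applies only during training, so it is skipped at inference
    wo, bo = params["out"]
    logits = h @ wo + bo
    e = np.exp(logits - logits.max())
    return e / e.sum()                               # softmax


params = init_params(n_classes=4)
window = rng.normal(size=(2 * 50, 3))                # 2 s at 50 Hz
probs = forward(window, params)
```

With three pooling stages, the 100 timesteps shrink to 50, 25, and then 12 before global average pooling collapses the time axis.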

Table 2: Example Performance Metrics from a Recent Study (Rodent Open Field)

| Behavior Class | Precision | Recall | F1-Score | Support (n) |

|---|---|---|---|---|

| Immobility | 0.98 | 0.96 | 0.97 | 1250 |

| Locomotion | 0.95 | 0.97 | 0.96 | 1800 |

| Rearing | 0.87 | 0.85 | 0.86 | 800 |

| Grooming | 0.92 | 0.88 | 0.90 | 450 |

| Macro Avg | 0.93 | 0.92 | 0.92 | 4300 |
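
Per-class metrics of this kind are derived from the confusion matrix, and the macro average is the unweighted mean across classes. The sketch below shows the calculation. The three-class matrix is invented for illustration and is unrelated to the values in the table.

```python
import numpy as np


def per_class_metrics(cm):
    """cm[i, j] = number of windows of true class i predicted as class j."""
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)
    predicted = cm.sum(axis=0)   # column sums: how often each class was predicted
    support = cm.sum(axis=1)     # row sums: true windows per class
    precision = np.divide(tp, predicted, out=np.zeros_like(tp), where=predicted > 0)
    recall = np.divide(tp, support, out=np.zeros_like(tp), where=support > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)
    return precision, recall, f1, support


# Made-up confusion matrix for three classes
cm = [[8, 2, 0],
      [1, 9, 0],
      [0, 1, 9]]
precision, recall, f1, support = per_class_metrics(cm)
macro_f1 = f1.mean()
```

Because the macro average weights every class equally, a rare behavior with weak recall lowers it as much as a common one would.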

Experimental Protocols

Protocol: Validating DL Classifier Against Standard Assays

Purpose: To correlate DL-predicted behavioral metrics with outcomes from established pharmacological or genetic interventions.

Methodology:

- Animal Model: Use two cohorts: 1) Vehicle control, 2) Treated (e.g., with an anxiolytic like diazepam at 1 mg/kg i.p.).

- Apparatus: Standard open field arena (40 cm x 40 cm for mice) equipped with a tri-axial accelerometer attached to a lightweight animal harness.

- Procedure:

- Administer vehicle/drug.

- Place animal in arena and record simultaneously: a) raw accelerometer data, b) overhead video for 20 minutes.

- Data Analysis:

- Process accelerometer data through the trained DL pipeline to obtain second-by-second behavior predictions.

- Compute total duration and bout frequency for each behavior class per animal.

- From video, have a blinded human rater score the same metrics for a subset of time as a benchmark.

- Statistically compare (t-test or ANOVA) DL-derived metrics between treatment groups. Correlate DL-derived "center zone locomotion" with traditional video-tracking-based "distance in center."

- Expected Outcome: The DL classifier should detect a significant increase in center zone locomotion and decreased immobility in the diazepam group, validating its sensitivity to pharmacologically induced behavioral changes.
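
The per-animal summary step (total duration and bout frequency per class) can be sketched from a sequence of second-by-second predictions. The function name `bout_summary`, the class coding, and the example sequence are hypothetical, and the sequence is assumed to be non-empty.

```python
import numpy as np


def bout_summary(labels, n_classes, epoch_s=1.0):
    """Total duration (s) and number of bouts for each behavior class."""
    labels = np.asarray(labels)
    # A new bout starts at index 0 and wherever the predicted label changes
    starts = np.concatenate(([0], np.flatnonzero(np.diff(labels) != 0) + 1))
    duration_s = np.bincount(labels, minlength=n_classes) * epoch_s
    n_bouts = np.bincount(labels[starts], minlength=n_classes)
    return duration_s, n_bouts


# Hypothetical coding: 0 = immobility, 1 = locomotion, 2 = rearing
predictions = [0, 0, 1, 1, 1, 0, 2, 2, 0, 0]
duration_s, n_bouts = bout_summary(predictions, n_classes=3)
# duration_s -> [5., 3., 2.], n_bouts -> [3, 1, 1]
```

Collecting these summaries per animal gives the values that enter the group comparison, for example with `scipy.stats.ttest_ind` for two groups.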

Protocol: Feature Extraction & Dimensionality Reduction for Novel Biomarker Discovery

Purpose: To use the DL model as a feature extractor to identify latent behavioral phenotypes not defined a priori.

Methodology:

- Model Leveraging: Use a trained CNN (excluding its final classification layer) as a feature extractor.

- Inference: Pass all accelerometer windows from a cohort (e.g., disease model vs. wild-type) through the model, extracting the activation vector from the penultimate layer (e.g., the 128-unit dense layer).

- Dimensionality Reduction: Apply Uniform Manifold Approximation and Projection (UMAP) or t-Distributed Stochastic Neighbor Embedding (t-SNE) to these high-dimensional feature vectors, projecting them into 2D/3D.

- Clustering Analysis: Apply clustering algorithms (e.g., HDBSCAN) to the reduced embeddings to identify natural groupings of behavioral "micro-states."

- Validation: Correlate cluster membership with experimental variables (genotype, drug dose) or physiological measures (neural activity from simultaneous telemetry). Manually inspect video corresponding to novel clusters to ethologically describe the behavior.

Visualizations

Title: DL Workflow: From Raw Data to Classification & Discovery

Title: Critical Data Splitting Strategy for Rigorous Validation
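
A subject-wise split of the kind named in this title can be sketched as follows: whole animals, not individual windows, are assigned to the training, validation, or test set. The function name `subject_wise_split` and the cohort sizes are assumptions for illustration.

```python
import numpy as np


def subject_wise_split(subject_ids, fractions=(0.7, 0.15, 0.15), seed=0):
    """Return window indices for train/val/test with no subject shared between sets."""
    subject_ids = np.asarray(subject_ids)
    subjects = np.unique(subject_ids)
    np.random.default_rng(seed).shuffle(subjects)
    n_train = int(round(fractions[0] * len(subjects)))
    n_val = int(round(fractions[1] * len(subjects)))
    groups = (subjects[:n_train],
              subjects[n_train:n_train + n_val],
              subjects[n_train + n_val:])
    return tuple(np.flatnonzero(np.isin(subject_ids, g)) for g in groups)


# Hypothetical cohort: 20 animals with 50 windows each
subject_ids = np.repeat(np.arange(20), 50)
train_idx, val_idx, test_idx = subject_wise_split(subject_ids)
```

Splitting by window instead would place overlapping windows from the same animal on both sides of the split and inflate test performance.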

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for DL-Based Behavior Classification

| Item | Function & Rationale |

|---|---|

| Tri-axial Accelerometer Loggers (e.g., from Data Sciences International, Millenium) | Primary sensor. Captures high-resolution (3-axis) acceleration, the raw substrate for all subsequent analysis. Lightweight, implantable, or wearable form factors are essential. |