From Movement to Meaning: A Comprehensive Guide to Accelerometer Data Analysis for Behavioral Phenotyping in Biomedical Research


Abstract

This article provides a structured framework for researchers and drug development professionals to leverage accelerometer data for objective behavioral classification. Covering foundational principles to advanced validation, it details how raw tri-axial signals are transformed into quantifiable biomarkers for activity, sleep, gait, and specific behaviors. We explore methodological pipelines from sensor selection and signal processing to machine learning application, address common challenges in real-world data, and compare validation approaches. The guide emphasizes translating technical analysis into reliable, interpretable endpoints for preclinical and clinical studies, enhancing the reproducibility and translational power of behavioral research.

Understanding the Signal: Core Principles of Accelerometry for Behavioral Phenotyping

What Behavioral Data Does an Accelerometer Actually Capture?

Within the broader thesis on accelerometer data analysis for behavioral classification, it is critical to define the fundamental raw signals before algorithmic interpretation. An accelerometer is an inertial sensor that measures proper acceleration—the rate of change of velocity relative to a free-falling, or inertial, reference frame. It directly captures tri-axial (X, Y, Z) gravitational and movement-induced inertial forces in units of g (9.81 m/s²). For behavioral research, this raw data is a proxy for movement dynamics, posture, and activity-related energy expenditure, but does not capture behavior per se. Behavioral classification is a subsequent inferential step applied to these derived signatures.

Core Captured Parameters & Derived Metrics

Table 1: Primary Data Streams from a Tri-Axial Accelerometer

| Data Type | Description | Typical Sampling Rate (Research) | Direct Behavioral Proxy |

|---|---|---|---|

| Static Acceleration | Low-frequency component (<0.5 Hz) primarily reflecting orientation relative to gravity. | 10-100 Hz | Posture (e.g., lying, sitting, standing tilt). |

| Dynamic Acceleration | Higher-frequency component (>0.5 Hz) resulting from movement. | 10-100 Hz | Activity intensity, limb/body movement. |

| Vector Magnitude | \(\sqrt{X^2 + Y^2 + Z^2}\), often with gravity subtracted (ENMO). | Derived | Overall activity magnitude, metabolic cost estimate. |

| Signal Variance | Variability in acceleration over a time window (e.g., 1-5 sec). | Derived | Movement complexity, rest vs. activity state. |

| Spectral Power | Distribution of signal power across frequency bands. | Derived | Differentiation of movement types (e.g., ambulation vs. tremor). |
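The split between static and dynamic acceleration in Table 1 can be illustrated with a short sketch. It assumes calibrated data in g and estimates the static component with a centered running mean rather than a designed low-pass filter; the 2-second window and the function names are illustrative choices, not part of any specific device pipeline.

```python
import numpy as np


def split_static_dynamic(acc, fs, window_s=2.0):
    """Split tri-axial acceleration (n_samples x 3, in g) into static and dynamic parts.

    The static (gravity/orientation) part is estimated with a centered running
    mean per axis; the dynamic part is the residual. The window length is an
    illustrative assumption standing in for a ~0.5 Hz low-pass filter.
    """
    acc = np.asarray(acc, dtype=float)
    win = max(1, int(round(window_s * fs)))
    kernel = np.ones(win) / win
    # Pad with edge values so the running mean keeps the original length.
    pad_left = win // 2
    pad_right = win - 1 - pad_left
    padded = np.pad(acc, ((pad_left, pad_right), (0, 0)), mode="edge")
    static = np.column_stack(
        [np.convolve(padded[:, k], kernel, mode="valid") for k in range(3)]
    )
    return static, acc - static


def vector_magnitude(acc):
    """Euclidean norm of the three axes for every sample."""
    return np.sqrt(np.sum(np.asarray(acc, dtype=float) ** 2, axis=1))


def enmo(acc):
    """Euclidean Norm Minus One: vector magnitude minus 1 g, floored at zero."""
    return np.maximum(vector_magnitude(acc) - 1.0, 0.0)


# Synthetic example: sensor lying flat (1 g on Z) with a 3 Hz, 0.2 g wobble on X.
fs = 50
t = np.arange(500) / fs
acc = np.column_stack(
    [0.2 * np.sin(2 * np.pi * 3 * t), np.zeros_like(t), np.ones_like(t)]
)
static, dynamic = split_static_dynamic(acc, fs)
```

In this synthetic case the static Z component stays at 1 g (orientation) while the 3 Hz wobble ends up in the dynamic X component. A real pipeline would typically replace the running mean with a properly designed filter.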

Table 2: Common Behavioral Constructs Inferred from Accelerometer Metrics

| Inferred Behavioral Class | Key Accelerometer Signatures | Typical Analysis Epoch |

|---|---|---|

| Sedentary Behavior | Low vector magnitude (e.g., ENMO < 50 mg), stable orientation. | 5-60 seconds |

| Ambulatory Activity | Rhythmic, periodic signals in the 1-5 Hz range; high variance. | 2-10 seconds |

| Postural Changes | Shifts in static acceleration angle (e.g., inclination). | 1-5 seconds |

| Sleep/Wake States | Prolonged periods of very low magnitude, circadian rhythm of activity. | 30-60 minutes |

| Stereotypy/Tremor | High-frequency, repetitive oscillations in a specific axis (e.g., 3-12 Hz). | 1-5 seconds |

| Grooming/Feeding | Characteristic bouts of moderate, asymmetric, and irregular movement. | 1-10 seconds |

Experimental Protocols for Behavioral Phenotyping

Protocol 1: Baseline Ambulatory and Exploratory Behavior in Rodents

- Objective: To quantify general locomotion and exploration in a novel home-cage or open field.

- Equipment: Telemetric implant or collar-mounted accelerometer; data acquisition system.

- Procedure:

- Acclimate animals to housing for >7 days.

- Calibrate accelerometer: Record static orientation (e.g., dorsal, lateral, ventral) for 30 seconds each.

- Place subject in a standard, novel testing arena (e.g., open field).

- Record tri-axial acceleration at 100 Hz for a 60-minute session.

- Compute vector magnitude dynamic body acceleration (VM-DBA or ENMO) per 1-second epoch.

- Classify behavior: Apply a threshold to the gravity-corrected magnitude (e.g., VM-DBA < 0.1 g = resting) or a machine learning classifier trained on annotated video.

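- Key Metrics: Total distance (estimated from double integration of acceleration; integration drift accumulates quickly, so treat this as approximate and cross-check against video tracking where available), time spent active/inactive, number of ambulatory bouts.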
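The epoching and threshold steps of Protocol 1 can be sketched as follows. This is a minimal illustration that assumes a gravity-corrected magnitude (ENMO or VM-DBA, in g) is already available; the 0.1 g threshold is the example value from the protocol, and the function names are hypothetical.

```python
import numpy as np


def epoch_mean(signal, fs, epoch_s=1.0):
    """Mean of a 1-D signal over consecutive non-overlapping epochs."""
    signal = np.asarray(signal, dtype=float)
    n = int(round(fs * epoch_s))
    n_epochs = len(signal) // n  # an incomplete trailing epoch is dropped
    return signal[: n_epochs * n].reshape(n_epochs, n).mean(axis=1)


def count_bouts(active):
    """Number of runs of consecutive True values in a boolean sequence."""
    active = np.asarray(active, dtype=bool)
    if active.size == 0:
        return 0
    previous = np.concatenate(([False], active[:-1]))
    return int((active & ~previous).sum())


def summarize_activity(dynamic_g, fs, threshold_g=0.1, epoch_s=1.0):
    """Time active/inactive and number of active bouts from a dynamic magnitude."""
    epochs = epoch_mean(dynamic_g, fs, epoch_s)
    active = epochs >= threshold_g
    return {
        "time_active_s": float(active.sum() * epoch_s),
        "time_inactive_s": float((~active).sum() * epoch_s),
        "n_active_bouts": count_bouts(active),
    }


# Synthetic 10 s recording at 100 Hz: rest, activity, rest, activity.
fs = 100
dynamic_g = np.concatenate(
    [np.full(300, 0.02), np.full(200, 0.3), np.full(400, 0.02), np.full(100, 0.3)]
)
summary = summarize_activity(dynamic_g, fs)
```

A trained classifier would replace the single threshold in `summarize_activity`; the epoching and bout counting stay the same.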

Protocol 2: Pharmacological Response - Locomotor Activity Modulation

- Objective: To assess stimulant or sedative drug effects on movement signatures.

- Procedure:

- Pre-dose baseline: Record acceleration for 60 minutes pre-administration.

- Administer test compound or vehicle (IP, PO, SC).

- Immediately place animal in a clean home-cage and record acceleration at 100 Hz for 2-6 hours.

- Synchronize with video recording for ground-truth validation.

- Process data in 5-minute bins: Calculate mean VM, signal entropy, and power in 1-5 Hz band.

- Compare treatment time-course to vehicle group using AUC analysis.

- Key Metrics: Latency to effect, peak locomotor response, duration of effect, change in movement pattern complexity.
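The binning and AUC steps of Protocol 2 can be sketched as below. The example assumes per-minute mean activity values as input and uses made-up numbers purely for illustration; the same calculation would be run on the vehicle group for comparison.

```python
import numpy as np


def bin_means(values, bin_size):
    """Average consecutive samples into non-overlapping bins of bin_size samples."""
    values = np.asarray(values, dtype=float)
    n_bins = len(values) // bin_size
    return values[: n_bins * bin_size].reshape(n_bins, bin_size).mean(axis=1)


def auc_trapezoid(y, dt):
    """Area under a uniformly sampled curve using the trapezoid rule."""
    y = np.asarray(y, dtype=float)
    return float(np.sum((y[1:] + y[:-1]) / 2.0) * dt)


# Hypothetical per-minute mean activity (arbitrary units), not real data.
baseline_per_min = np.full(60, 10.0)  # 60 min pre-dose
post_per_min = np.concatenate(
    [np.full(10, 10.0), np.full(20, 30.0), np.full(30, 10.0)]
)

post_bins = bin_means(post_per_min, bin_size=5)  # 5-minute bins
change = post_bins - baseline_per_min.mean()  # baseline-corrected time course
auc = auc_trapezoid(change, dt=5.0)  # units: activity x minutes
peak_bin = int(np.argmax(post_bins))  # index of the first peak bin
```

Latency to peak follows as `peak_bin * 5` minutes (start of the peak bin). Duration of effect can be read from the run of bins where `change` exceeds a chosen margin.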

Protocol 3: High-Frequency Movement Detection (e.g., Tremor, Seizure)

- Objective: To detect and quantify pathological or fine motor movements.

- Procedure:

- Secure accelerometer firmly to relevant body part (head, trunk, limb via implant or adhesive).

- Record at high sampling rate (≥ 200 Hz) to avoid aliasing.

- Apply high-pass filter (>2 Hz) to remove postural components.

- Perform Fourier Transform on 2-second sliding windows.

- Quantify power in the target frequency band (e.g., 6-12 Hz for tremor).

- Set power threshold for event detection based on control population baseline.

- Key Metrics: Event frequency, duration, peak power, and total daily power in target band.
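The windowed spectral analysis in Protocol 3 can be sketched as follows. Instead of an explicit high-pass filter, this simplified version removes the mean of each window and sums power only inside the target band; the 3-SD threshold rule, the Hann taper, and all names are assumptions made for illustration.

```python
import numpy as np


def band_power(window, fs, f_lo, f_hi):
    """Power of a 1-D window within [f_lo, f_hi] Hz via the FFT (Hann-tapered)."""
    window = np.asarray(window, dtype=float)
    window = window - window.mean()  # drop the DC / postural offset
    tapered = window * np.hanning(len(window))
    spectrum = np.abs(np.fft.rfft(tapered)) ** 2
    freqs = np.fft.rfftfreq(len(window), d=1.0 / fs)
    mask = (freqs >= f_lo) & (freqs <= f_hi)
    return float(spectrum[mask].sum())


def sliding_band_power(signal, fs, f_lo, f_hi, window_s=2.0, step_s=1.0):
    """Band power for each sliding window of window_s seconds."""
    signal = np.asarray(signal, dtype=float)
    n_win = int(round(window_s * fs))
    n_step = int(round(step_s * fs))
    starts = range(0, len(signal) - n_win + 1, n_step)
    return np.array([band_power(signal[s:s + n_win], fs, f_lo, f_hi) for s in starts])


def detect_events(power, control_power, n_sd=3.0):
    """Flag windows whose band power exceeds mean + n_sd * SD of control power."""
    threshold = np.mean(control_power) + n_sd * np.std(control_power)
    return power > threshold, float(threshold)


# Synthetic data: low-level noise, plus an 8 Hz tremor between 4 s and 6 s.
rng = np.random.default_rng(0)
fs = 200
t = np.arange(10 * fs) / fs
control = 0.01 * rng.standard_normal(t.size)
signal = 0.01 * rng.standard_normal(t.size)
tremor_on = (t >= 4) & (t < 6)
signal[tremor_on] += 0.2 * np.sin(2 * np.pi * 8 * t[tremor_on])

control_power = sliding_band_power(control, fs, 6, 12)
power = sliding_band_power(signal, fs, 6, 12)
events, threshold = detect_events(power, control_power)
```

Here the control recording stands in for the control population baseline. In a study, the threshold would be derived from pooled control animals rather than a single trace.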

Visualizations

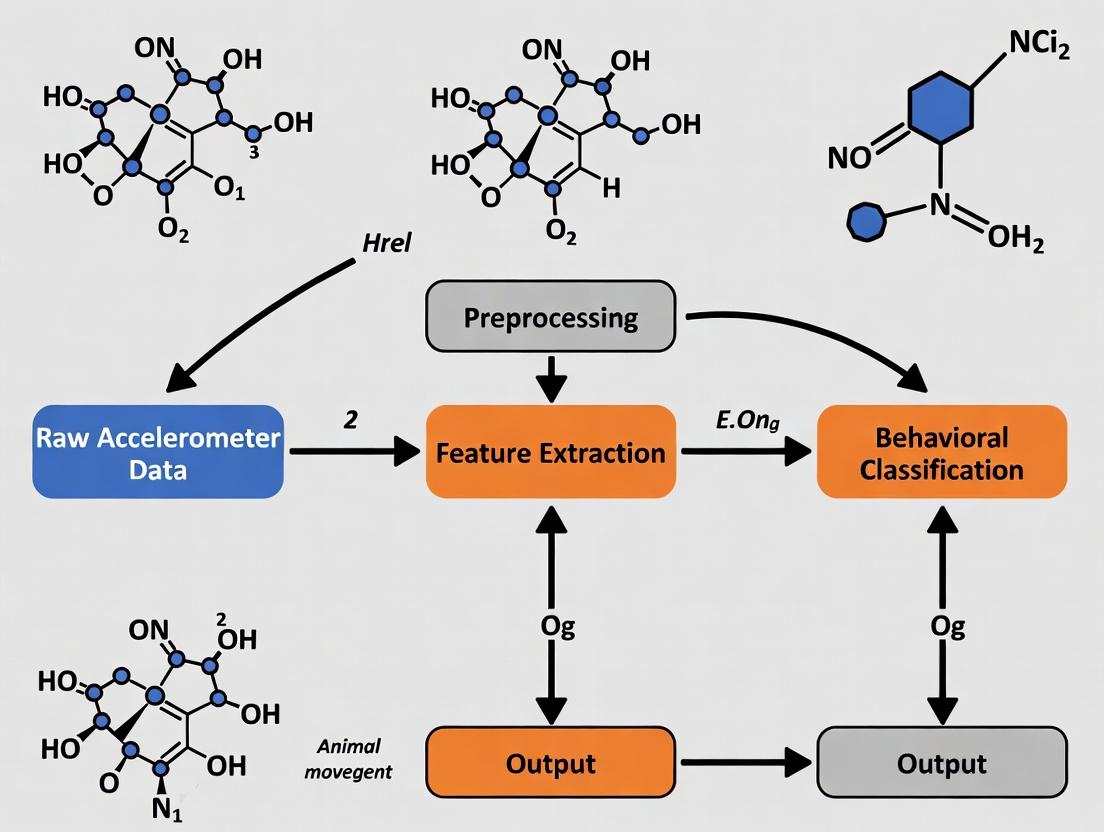

Behavioral Classification Data Pipeline from Raw Acceleration

Workflow for Supervised Behavioral Classification

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Accelerometer-Based Behavioral Studies

| Item / Solution | Function & Application |

|---|---|

| Implantable Telemetry Transmitter | Miniaturized device surgically implanted for high-fidelity, long-term core-body acceleration data with minimal movement artifact. Essential for chronic studies. |

| External Biologger / Collar Tag | Non-invasive attachment for short-term or large-animal studies. Requires careful fitting to minimize rotation artifact. |

| Data Acquisition Software (e.g., Ponemah, LabChart) | Configures sampling parameters, receives & stores raw waveform data, performs initial device calibration. |

| Signal Processing Library (e.g., MATLAB Toolboxes, Python SciPy) | For implementing filters, calculating vector magnitude, and performing Fourier transforms on raw data. |

| Annotation & Synchronization Software (e.g., Behavioral Observation Research Interactive Software - BORIS) | To create ground-truth behavioral labels from synchronized video, used for training and validating classifiers. |

| Machine Learning Environment (e.g., R, Python with scikit-learn) | To develop and train supervised classifiers (Random Forest, SVM, CNN) using extracted acceleration features. |

| Calibration Jig | Physical apparatus to hold the accelerometer sensor at precise, known orientations for static calibration against gravity. |

| Standardized Behavioral Arenas | Open fields, home-cages, or mazes that provide consistent environmental context for interpreting movement data across subjects. |

Application Notes

In behavioral classification research using accelerometers, raw tri-axial acceleration signals are transformed into interpretable metrics that quantify movement volume and intensity. These metrics serve as the primary input for machine learning models and statistical analyses aimed at classifying activities (e.g., sedentary, walking, running) and estimating energy expenditure. The selection and calculation of these metrics directly impact the validity and comparability of research findings across studies and populations.

Core Derived Metrics

The table below summarizes the definition, calculation, and primary use of key interpretable features derived from raw accelerometer data.

Table 1: Key Interpretable Accelerometer-Derived Metrics

| Metric Name | Definition & Calculation Formula | Typical Sampling/Epoch | Primary Research Application |

|---|---|---|---|

| Signal Vector Magnitude (VM) | The Euclidean norm of the three orthogonal axes. `VM_i = sqrt(x_i² + y_i² + z_i²)` | High-frequency (e.g., 10-100 Hz) | Raw measure of total acceleration (gravity plus body movement). Basis for many other metrics. |

| Euclidean Norm Minus One (ENMO) | The amount by which the VM exceeds 1g (gravity), with negative values set to zero. `ENMO_i = max(VM_i - 1g, 0)` | High-frequency or summarized (e.g., 1s) | Removes static gravitational component, isolating movement-related acceleration. Widely used in open-source methods (GGIR). |

| Activity Counts | Proprietary or open-source summarized measure of movement intensity over an epoch. Derived by band-pass filtering, rectifying, and integrating the raw signal. | Epoch-based (e.g., 5, 15, 30, 60 seconds) | The standard metric for many legacy devices (ActiGraph). Enables comparison with established cut-points for activity intensity. |

| Mean Amplitude Deviation (MAD) | The mean absolute deviation of the accelerometer norms from their mean value over an epoch. `MAD_epoch = mean(abs(VM_i - mean(VM)))` | Epoch-based (e.g., 5s) | Robust metric highly correlated with energy expenditure. Used as a primary feature in modern research. |

| Sedentary Sphere | A threshold-based classification. If the movement-related acceleration on all axes (x, y, z) stays within a boundary (e.g., ±50 mg) for a period and the sensor orientation is stable, the epoch is classified as sedentary. | Epoch-based (e.g., 5s) | Directly identifies postural sedentariness without relying on count cut-points. |

Considerations for Drug Development

In clinical trials, these metrics act as digital endpoints. Key considerations include:

- Standardization: Consistent processing pipelines (e.g., using open-source software like GGIR) are critical for multi-site trials.

- Validation: Metrics must be validated against relevant clinical outcomes (e.g., 6-minute walk test, patient-reported fatigue) within the target population.

- Sensitivity: The metric must be sensitive enough to detect subtle, clinically meaningful changes in activity behavior induced by a therapeutic intervention.

Experimental Protocols

Protocol 2.1: Derivation of ENMO and MAD from Raw Acceleration Data

Objective: To process raw tri-axial accelerometer data (.csv, .gt3x, .cwa formats) into the ENMO and MAD metrics for downstream behavioral classification.

Materials: See "The Scientist's Toolkit" below.

Software: R statistical software (v4.3.0+) with GGIR package or Python with scipy and numpy.

Procedure:

- Data Import & Calibration:

- Load raw acceleration files (x, y, z axes in g-units, 30-100 Hz).

- Perform autocalibration using the `GGIR::g.calibrate()` function or similar to correct for sensor error relative to local gravity (1g).

- Metric Calculation (Epoch: 5-second):

- For each sample i, calculate the Vector Magnitude: `VM_i = sqrt(x_i² + y_i² + z_i²)`.

- Calculate ENMO:

- Derive: `ENMO_raw_i = VM_i - 1`.

- Set negative values to zero: `ENMO_i = max(ENMO_raw_i, 0)`.

- Aggregate by calculating the mean ENMO over each 5-second epoch.

- Calculate MAD:

- For each 5-second block of VM samples, compute: `MAD = mean(|VM_i - mean(VM)|)` for all i in the epoch.

- Output:

- Generate a time-series dataset with columns: Timestamp, ENMO_mean_5s, MAD_5s.

- This dataset is ready for feature extraction or direct use with activity classification algorithms.
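The metric calculation in Protocol 2.1 can be sketched in a few lines of NumPy. This is a minimal illustration that assumes the data are already calibrated and expressed in g; it does not reproduce the autocalibration or non-wear handling that a package such as GGIR performs, and the function name is hypothetical.

```python
import numpy as np


def enmo_mad_epochs(acc_g, fs, epoch_s=5.0):
    """Return epoch start times (s), mean ENMO, and MAD per epoch.

    acc_g: array of shape (n_samples, 3) with calibrated acceleration in g.
    """
    acc_g = np.asarray(acc_g, dtype=float)
    vm = np.sqrt((acc_g ** 2).sum(axis=1))  # VM_i
    enmo = np.maximum(vm - 1.0, 0.0)  # ENMO_i = max(VM_i - 1, 0)

    n = int(round(fs * epoch_s))
    n_epochs = len(vm) // n  # an incomplete trailing epoch is dropped
    vm_ep = vm[: n_epochs * n].reshape(n_epochs, n)
    enmo_ep = enmo[: n_epochs * n].reshape(n_epochs, n)

    enmo_mean = enmo_ep.mean(axis=1)
    # MAD = mean(|VM_i - mean(VM)|) within each epoch
    mad = np.abs(vm_ep - vm_ep.mean(axis=1, keepdims=True)).mean(axis=1)
    timestamps = np.arange(n_epochs) * epoch_s
    return timestamps, enmo_mean, mad


# Synthetic 10 s at 30 Hz: 5 s at rest, then 5 s alternating between 1.2 g and 0.8 g.
fs = 30
z = np.concatenate([np.ones(150), np.tile([1.2, 0.8], 75)])
acc_g = np.column_stack([np.zeros_like(z), np.zeros_like(z), z])
timestamps, enmo_mean_5s, mad_5s = enmo_mad_epochs(acc_g, fs)
```

In the second epoch ENMO averages 0.1 g because the negative excursions are clipped to zero, while MAD is 0.2 g because it measures deviation in both directions around the epoch mean.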

Protocol 2.2: Validation of Derived Metrics Against Indirect Calorimetry

Objective: To establish the criterion validity of ENMO and Activity Counts against measured energy expenditure (METs) during a structured activity protocol.

Materials: Research-grade accelerometer, portable metabolic cart (e.g., Cosmed K5), standardized activity lab.

Participants: N ≥ 20 adults covering a range of ages and BMI.

Procedure:

- Instrumentation: Securely attach the accelerometer to the participant's lower back (L5) or non-dominant wrist. Fit the metabolic cart mask.

- Protocol: Participant performs 5-minute stages of:

- Lying supine

- Sitting quietly

- Standing quietly

- Walking at 2.0, 3.0, 4.0 mph on treadmill

- Running at 5.0 mph on treadmill

- Data Synchronization: Synchronize accelerometer and metabolic cart timestamps via a synchronization event (e.g., 3 jumps) at the start.

- Data Processing:

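- Process accelerometer data into 5-second epoch values: ENMO per Protocol 2.1, and Activity Counts via the manufacturer's software or an equivalent open-source implementation.

- Calculate METs from the metabolic cart data (VO2 in mL·kg⁻¹·min⁻¹ divided by 3.5) for the corresponding 5-second epochs.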

- Statistical Analysis:

- Use mixed-effects linear regression to model METs as a function of the derived metric (ENMO or Counts).
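As a simplified stand-in for the regression step, the sketch below converts VO2 to METs and fits an ordinary least-squares line. It deliberately ignores the repeated-measures structure that the mixed-effects model accounts for (a library such as statsmodels would be needed for that), and the numbers are synthetic values chosen only to make the arithmetic easy to follow.

```python
import numpy as np


def vo2_to_mets(vo2_ml_kg_min):
    """Convert VO2 (mL/kg/min) to METs using the conventional 3.5 divisor."""
    return np.asarray(vo2_ml_kg_min, dtype=float) / 3.5


def fit_line(x, y):
    """Ordinary least-squares fit y = intercept + slope * x, with R squared."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    slope, intercept = np.polyfit(x, y, 1)  # highest power first
    predicted = intercept + slope * x
    ss_res = np.sum((y - predicted) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return float(intercept), float(slope), float(1.0 - ss_res / ss_tot)


# Synthetic stage averages (not measured data): ENMO in mg and matching VO2.
enmo_mg = np.array([0.0, 50.0, 100.0, 200.0, 400.0])
vo2 = 3.5 * (1.0 + 0.01 * enmo_mg)  # constructed to be exactly linear
mets = vo2_to_mets(vo2)
intercept, slope, r_squared = fit_line(enmo_mg, mets)
```

Because the synthetic data are exactly linear, the fit recovers the intercept and slope used to build them. Real validation data would show scatter, and per-participant random effects would then matter.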

Table 2: Example Validation Results (Hypothetical Data)

| Metric | Regression Equation (METs ~ Metric) | R² | P-value |

|---|---|---|---|

| ENMO (mg) | METs = 1.2 + 0.0031 * ENMO | 0.85 | <0.001 |

| Activity Counts | METs = 1.1 + 0.0008 * Counts | 0.79 | <0.001 |

Visualizations

Diagram 1: From Raw Data to ENMO and MAD Metrics

Diagram 2: Accelerometer Data Analysis Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Accelerometer Research

| Item / Solution | Function & Rationale |

|---|---|

| Open-Source Software (GGIR) | A comprehensive R package for raw accelerometer data processing. It standardizes the pipeline from raw files to validated metrics (ENMO, MAD) and non-wear detection, ensuring reproducibility. |

| ActiLife / OEM Software | Manufacturer-specific software (e.g., ActiGraph's ActiLife) required for device initialization, data downloading, and generating Activity Counts for legacy analytical methods. |

| High-Precision Calibration Shaker | A motorized device that rotates the accelerometer at known frequencies and angles. Used for pre-deployment calibration to verify sensor accuracy and inter-device reliability. |

| Standardized Placement Harness | A secure, adjustable harness (e.g., for waist, wrist, thigh) to ensure consistent sensor placement and orientation across all participants, minimizing measurement artifact. |

| Synchronization Event Logger | A tool (hardware button or app) to record a timestamped event (e.g., 3 jumps) visible in both accelerometer and validation equipment (e.g., video, metabolic cart) data streams for precise time alignment. |

| Validated Cut-Point Libraries | Published reference values (e.g., ENMO < 45 mg for sedentary behavior in adults) that translate derived metrics into behavioral intensities, allowing comparison across studies. |

Application Notes

Behavioral phenotyping using accelerometer data is a cornerstone of preclinical research in neuroscience, psychopharmacology, and drug development. The accurate classification of discrete behavioral classes—locomotion, rearing, grooming, and sleep-wake states—provides quantitative, high-throughput, and objective measures of animal behavior. This analysis is critical for modeling neurological and psychiatric disorders, assessing drug efficacy, and understanding mechanisms of action.

- Locomotion: Quantified via total distance traveled, velocity, and acceleration magnitude. It is a primary indicator of general activity levels, exploratory drive, and motor function. Sedation, motor impairment, or psychostimulant effects are readily detected.

- Rearing: A vertical movement where the animal stands on its hind legs. It is a key measure of exploratory behavior, inquisitiveness, and response to novel environments. Reductions can indicate anxiety, motor deficits, or sedative effects.

- Grooming: A sequential, stereotyped self-cleaning behavior. Its duration, frequency, and syntactic structure are sensitive to stress, genetic alterations, and dopaminergic modulation. Excessive grooming may model compulsive disorders.

- Sleep-Wake Cycles: Derived from extended periods of immobility (sleep) versus activity (wake). Analysis of bout length, fragmentation, and circadian rhythmicity is essential for studying sleep disorders, sedation, and the effects of therapeutics on arousal.

Integrating tri-axial accelerometer data with machine learning (e.g., random forest, convolutional neural networks) allows for the precise, automated discrimination of these classes from raw movement time-series data, moving beyond simple activity counts.

Table 1: Key Behavioral Metrics and Their Experimental Correlates

| Behavioral Class | Primary Accelerometer-Derived Metric | Typical Baseline (Mouse, 10-min Open Field) | Common Experimental Perturbation & Observed Change |

|---|---|---|---|

| Locomotion | Total Distance Traveled | 1500 - 4000 cm | Amphetamine (5 mg/kg): ↑ 200-300%; Diazepam (1 mg/kg): ↓ 40-60% |

| Rearing | Vertical Beam Breaks / Z-axis Variance | 20 - 40 events | NMDA receptor antagonist (MK-801): ↓ 50-70%; Novel object introduction: ↑ 100-150% |

| Grooming | Duration of Stereotyped Movement Bouts | 5 - 15% of session time | Acute stress (e.g., splash test): ↑ 300%; SSRI (fluoxetine, chronic): ↓ 30-50% |

| Sleep-Wake | % Time Immobile (Bout > 40s) | Immobile (sleep proxy): ~60% (Light Phase) | Caffeine (10 mg/kg): ↓ Sleep % by 40%; Pentobarbital (30 mg/kg): ↑ NREM Sleep % by 80% |

Experimental Protocols

Protocol 1: Simultaneous Accelerometry and Video Recording for Classifier Training

Objective: To collect labeled, ground-truth data for training supervised machine learning models to classify behavior from accelerometer data.

- Animal Preparation: Fit a lightweight (e.g., <10% body weight) tri-axial accelerometer logger (e.g., 100 Hz sampling rate) to the rodent's dorsal surface via a surgical adhesive or harness.

- Experimental Arena: Place the animal in a standard open field (e.g., 40 cm x 40 cm x 40 cm) or home cage. Ensure the environment is uniformly lit and free of external vibrations.

- Synchronized Recording:

- Start the accelerometer logger.

- Simultaneously, begin high-definition video recording (≥ 30 fps) from a top-down and/or side-view camera.

- Generate a synchronization event that is captured in both data streams, e.g., an LED flash or audible click on the video paired with a sharp tap on the logger or a logged event marker in the accelerometer record.

- Data Collection: Record a 60-minute session. For robust classification, repeat with n ≥ 12 animals per experimental group (e.g., strain, treatment).

- Behavioral Labeling: Using video annotation software (e.g., BORIS), a human observer manually labels the video with the exact start and end times of each behavioral class: Locomotion, Rearing, Grooming, and Immobility (proxy for sleep). Pose-estimation tools such as DeepLabCut can support this step, but the labels themselves come from the observer.

- Data Alignment: Use the synchronization event to align the video timestamps with the accelerometer data stream (X, Y, Z, and vector magnitude).
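The alignment step can be sketched as a constant time offset derived from the synchronization event. The example assumes both clocks run at the same rate (no drift) and that annotations are exported as (start, end, label) tuples in video time; the function name and the "Unlabeled" default are hypothetical.

```python
import numpy as np


def label_samples(n_samples, fs, annotations, video_sync_s, acc_sync_s,
                  default="Unlabeled"):
    """Assign a behavior label to every accelerometer sample.

    annotations: list of (start_s, end_s, label) in video time.
    video_sync_s / acc_sync_s: time of the shared sync event in each stream.
    """
    offset = acc_sync_s - video_sync_s  # video time + offset = accelerometer time
    t = np.arange(n_samples) / fs
    labels = np.full(n_samples, default, dtype=object)
    for start_s, end_s, label in annotations:
        mask = (t >= start_s + offset) & (t < end_s + offset)
        labels[mask] = label
    return labels


# Example: sync event seen at 2.0 s in the video and 1.5 s in the accelerometer trace.
fs = 100
annotations = [(3.0, 5.0, "Locomotion"), (6.0, 7.0, "Rearing")]
labels = label_samples(1000, fs, annotations, video_sync_s=2.0, acc_sync_s=1.5)
```

For long sessions, a second synchronization event at the end of the recording allows clock drift to be checked and corrected with a linear time mapping.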

Protocol 2: Pharmacological Validation of Classifier Output

Objective: To validate the trained behavioral classifier by administering compounds with known behavioral effects.

- Subject: Naive adult C57BL/6J mice (n=8-12 per group).

- Baseline Recording: Perform Protocol 1 (without video, using the trained classifier) for a 30-minute session to establish individual baseline activity.

- Drug Administration: Administer vehicle (control) or test compound (e.g., psychostimulant, sedative) via appropriate route (i.p., s.c., p.o.).

- Post-Treatment Recording: Place the animal back in the arena at the compound's time of peak effect (e.g., 10 min post-i.p. for stimulants) and record accelerometer data for 30-60 minutes.

- Analysis: Process the accelerometer data through the trained classifier. Compare the output (e.g., seconds spent locomoting, number of rears) between treatment and vehicle groups using appropriate statistical tests (e.g., t-test, ANOVA). Successful validation is achieved when classifier outputs match expected pharmacological profiles (see Table 1).

Protocol 3: Circadian Sleep-Wake Cycle Analysis

Objective: To characterize 24-hour sleep-wake patterns from accelerometer-derived immobility.

- Habituation: House mice individually in cages equipped with a running wheel (optional) for at least 48 hours before recording under standard 12:12 light-dark (LD) cycle conditions.

- Accelerometer Attachment: Securely attach a long-duration (>24h battery) accelerometer logger.

- Continuous Recording: Begin recording at the start of the light phase. Record continuously for a minimum of 48 hours so that at least two full baseline LD cycles are captured.

- Data Processing:

- Calculate the vector magnitude (VM = √(X² + Y² + Z²)) for each time sample and remove the gravitational offset (e.g., as ENMO or by high-pass filtering).

- Apply a low-pass filter to remove high-frequency noise.

- Define "sleep" epochs as periods where the gravity-corrected VM remains below a validated amplitude threshold for a minimum duration (e.g., >40 seconds for mice).

- Output Metrics: Generate hypnograms and calculate for each 12-hour phase: Total Sleep Time, Sleep Bout Number, Mean Sleep Bout Duration, and Sleep Fragmentation Index.
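The immobility rule and the bout metrics can be sketched as follows. The example assumes a per-epoch, gravity-corrected activity value and an already validated threshold; the 40-second minimum is the example value from the protocol (applied here as "at least 40 s"), and the function names are illustrative.

```python
import numpy as np


def immobility_bouts(activity, epoch_s, threshold, min_duration_s=40.0):
    """Return (start_s, duration_s) for runs below threshold lasting >= min_duration_s."""
    below = np.asarray(activity, dtype=float) < threshold
    # Pad with False so every run has a detectable start and end.
    padded = np.concatenate(([False], below, [False])).astype(int)
    edges = np.diff(padded)
    starts = np.flatnonzero(edges == 1)  # first epoch of each run
    ends = np.flatnonzero(edges == -1)  # first epoch after each run
    bouts = []
    for s, e in zip(starts, ends):
        duration = (e - s) * epoch_s
        if duration >= min_duration_s:
            bouts.append((float(s * epoch_s), float(duration)))
    return bouts


def sleep_summary(bouts):
    """Total sleep time, bout count, and mean bout duration from a bout list."""
    total = sum(duration for _, duration in bouts)
    return {
        "total_sleep_s": total,
        "n_bouts": len(bouts),
        "mean_bout_s": total / len(bouts) if bouts else 0.0,
    }


# Synthetic 1 s epochs: 60 s still, 10 s active, 30 s still, 5 s active, 45 s still.
activity = np.concatenate(
    [np.full(60, 0.01), np.full(10, 0.5), np.full(30, 0.01),
     np.full(5, 0.5), np.full(45, 0.01)]
)
bouts = immobility_bouts(activity, epoch_s=1.0, threshold=0.05)
summary = sleep_summary(bouts)
```

The 30-second still period is excluded because it is shorter than the minimum duration. Running the same functions separately on light-phase and dark-phase epochs gives the per-phase metrics.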

The Scientist's Toolkit

Table 2: Essential Research Reagents and Materials

| Item | Function in Behavioral Classification Research |

|---|---|

| Tri-axial Accelerometer Loggers (e.g., from Starr Life Sciences, Data Sciences Int.) | Captures high-resolution (≥50Hz), multi-dimensional movement data (X, Y, Z axes) required for discerning specific behavioral patterns. |

| Wireless Telemetry Systems | Enables continuous, unrestrained data collection in the home cage over days/weeks, ideal for sleep-wake and circadian studies. |

| Machine Learning Software Suites (e.g., Python with Scikit-learn, TensorFlow; DeepLabCut) | Provides tools for feature extraction, model training (e.g., Random Forest, CNN), and automated classification of accelerometer data into behavioral classes. |

| Behavioral Annotation Software (e.g., BORIS, EthoVision XT) | Creates ground-truth labels from synchronized video for supervised machine learning model training and validation. |

| Standardized Behavioral Arenas (Open Field, Home Cage) | Provides controlled, consistent environments for reproducible data collection across experiments and laboratories. |

| Pharmacological Reference Standards (e.g., Amphetamine, Caffeine, Diazepam) | Used as positive/negative controls to pharmacologically validate the output of the behavioral classification algorithm. |

| Data Synchronization Hardware (e.g., LED strobe, audio click generator) | Critical for perfectly aligning video and accelerometer data streams during classifier training phases. |

Visualizations

Title: Accelerometer Data Analysis Workflow for Behavior

Title: Key Neurosystems Modulating Behavioral Classes

The Role of Sampling Frequency, Range, and Placement (Collar, Back, Limb)

This application note is framed within a broader thesis on accelerometer data analysis for behavioral classification in preclinical and clinical research. The accurate quantification of behavior—from general activity to specific, ethologically relevant actions—is crucial for phenotyping, assessing drug efficacy, and understanding disease progression. The fidelity of this quantification is fundamentally governed by three interdependent hardware and configuration parameters: sampling frequency, dynamic range, and sensor placement. Optimizing these parameters is essential to capture the relevant biomechanical signatures without introducing aliasing, saturation, or spatial bias.

Parameter Definitions & Quantitative Considerations

Sampling Frequency (Fs)

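Sampling frequency determines the temporal resolution of acceleration capture. The Nyquist-Shannon theorem states that Fs must be at least twice the highest frequency component of the signal of interest; in practice, sampling at several times that frequency leaves margin for anti-aliasing filters and for non-sinusoidal movement waveforms.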

Table 1: Recommended Sampling Frequencies for Behavioral Components

| Behavioral Component | Approximate Frequency Band | Minimum Nyquist Fs | Recommended Fs (Research) | Rationale |

|---|---|---|---|---|

| Posture, Gait Cycle | 0-5 Hz | 10 Hz | 25-50 Hz | Captures low-frequency shifts in center of mass and stride timing. |

| Tremor, Fine Motor | 5-15 Hz | 30 Hz | 50-100 Hz | Required to resolve pathological or drug-induced tremors. |

| Running, Jumping | 10-25 Hz | 50 Hz | 100-200 Hz | Captures rapid limb impacts and high-velocity movements. |

| Vocalization (via vibration) | 50-1000+ Hz | 2000 Hz | ≥2000 Hz | Needed if using accelerometer as a contact microphone; rates below the Nyquist minimum alias the upper part of the band. |
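The effect of undersampling can be made concrete with a small calculation. The sketch below gives the minimum Nyquist rate for a band and the apparent (aliased) frequency of a pure sinusoid sampled too slowly; the function names are illustrative.

```python
def minimum_nyquist_fs(f_max_hz):
    """Minimum sampling frequency (Hz) for a signal band-limited to f_max_hz."""
    return 2 * f_max_hz


def aliased_frequency(f_signal_hz, fs_hz):
    """Apparent frequency (Hz) of a sinusoid at f_signal_hz when sampled at fs_hz."""
    folded = f_signal_hz % fs_hz
    return min(folded, fs_hz - folded)


# A 12 Hz tremor sampled at 20 Hz appears at 8 Hz; at 50 Hz it is preserved.
tremor_at_20 = aliased_frequency(12, 20)
tremor_at_50 = aliased_frequency(12, 50)
```

An aliased 12 Hz tremor showing up at 8 Hz would still fall inside a typical tremor band, which is why aliasing can go unnoticed unless the sampling rate is checked against the table above.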

Dynamic Range (g)

Dynamic range specifies the maximum and minimum acceleration values the sensor can measure before saturation (clipping). Range selection balances sensitivity for subtle movements with the need to capture high-force events.

Table 2: Typical Acceleration Ranges for Different Species & Activities

| Species / Activity | Typical Acceleration Magnitudes | Recommended Range | Risk of Improper Setting |

|---|---|---|---|

| Mouse (cage ambulation) | ± 1.5g | ±2g to ±4g | Too high: reduced resolution of subtle moves. Too low: saturation during bursts. |

| Rat (rearing, jumping) | ± 5g | ±8g to ±16g | Saturation during aggressive behaviors or falls if set too low. |

| Non-human Primate (foraging) | ± 3g | ±4g to ±8g | |

| Human (walking, sitting) | ± 0.5g | ±2g | |

| Human (sports, falls) | > ± 16g | ±16g to ±200g | |

Sensor Placement

Placement dictates which biomechanical forces are measured, directly influencing the classification of behavior.

Table 3: Impact of Placement on Signal Interpretation

| Placement | Primary Signals Captured | Behavioral Classification Strengths | Common Research Use |

|---|---|---|---|

| Collar | Neck movement, head posture, feeding/drinking dips. | General activity, ingestive behaviors, resting posture, head tremors. | Long-term welfare monitoring in NHPs and canines; feeding studies. |

| Upper Back / Scapulae | Trunk movement, posture shifts, respiration rate, gross body movement. | Ambulatory vs. sedentary bouts, rearing (in rodents), escape responses, gait symmetry. | Standard for rodent home-cage monitoring; core body activity. |

| Limb (Forelimb/Hindlimb) | Distinct stride phases, impact peaks, tremors, fine paw movements. | Gait analysis (stance/swing), dyskinesia, paw flicking, reaching gestures. | Detailed motor function assessment in neurological disease models (e.g., Parkinson's, ALS). |

| Tail (Rodents) | Tail lift, suspension, flicking. | Tail-specific phenotypes, affective state (tail hang test), balance. | Supplementary sensor for comprehensive profiling. |

Experimental Protocols for Parameter Validation

Protocol 3.1: Determining Optimal Sampling Frequency

Objective: To empirically establish the minimum sampling frequency required to accurately classify a set of target behaviors without aliasing.

Materials: Accelerometer capable of high-Fs recording (e.g., ≥500Hz), data acquisition system, video recording system (synchronized).

Procedure:

- Fit the subject (e.g., rodent) with an accelerometer at the target placement (e.g., back).

- Record a synchronized session of video and raw acceleration data at the sensor's maximum frequency (e.g., 500 Hz).

- Annotate the video to label occurrences of key behaviors (e.g., walking, rearing, grooming, tremor).

- Extract acceleration epochs for each behavior.

- Perform a Fast Fourier Transform (FFT) on the raw acceleration magnitude stream for each behavioral epoch to identify its highest significant frequency component.

- Downsample the raw data in software to progressively lower frequencies (e.g., 200Hz, 100Hz, 50Hz, 25Hz), applying an anti-aliasing low-pass filter before each decimation step.

- Extract standard features (e.g., variance, spectral edge) from both the original and downsampled data for each behavior.

- Use statistical comparison (e.g., ANOVA, correlation) to identify the Fs at which feature degradation becomes significant for classification accuracy.
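The spectral step of this protocol can be sketched with NumPy's FFT. Here the "highest significant frequency" is operationalized as the 95% spectral edge frequency, which is one possible choice rather than a prescribed definition; the candidate rates and function names are illustrative.

```python
import numpy as np


def spectral_edge_frequency(signal, fs, power_fraction=0.95):
    """Frequency (Hz) below which power_fraction of the signal power lies."""
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()  # ignore the DC offset
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    cumulative = np.cumsum(power) / power.sum()
    idx = int(np.searchsorted(cumulative, power_fraction))
    return float(freqs[min(idx, len(freqs) - 1)])


def acceptable_rates(edge_hz, candidate_rates):
    """Candidate sampling rates that exceed twice the spectral edge frequency."""
    return [fs for fs in candidate_rates if fs > 2 * edge_hz]


# Synthetic behavior epoch at 400 Hz: 3 Hz stride rhythm plus a weaker 20 Hz component.
fs = 400
t = np.arange(4 * fs) / fs
epoch = np.sin(2 * np.pi * 3 * t) + 0.5 * np.sin(2 * np.pi * 20 * t)

edge = spectral_edge_frequency(epoch, fs)
usable = acceptable_rates(edge, [200, 100, 50, 25])
```

This spectral check only narrows the candidates. The feature-level and classifier-level comparisons in the final protocol steps remain the deciding evidence.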

Protocol 3.2: Calibrating Dynamic Range for a Specific Model

Objective: To prevent signal saturation while maximizing resolution for a given animal model and experimental setup.

Materials: Accelerometer with programmable range, calibration shaker, or known displacement rig.

Procedure:

- Program the accelerometer to its lowest range setting (e.g., ±2g).

- Place the sensor on the subject and record during a "challenge session" that elicits the most vigorous expected behaviors (e.g., open field exploration with novel objects).

- Plot the raw acceleration time-series. Visually and programmatically identify any periods of clipping (values at the maximum/minimum limit for extended periods).

- Quantify the percentage of time or number of events where clipping occurs.

- Incrementally increase the range (e.g., to ±4g, ±8g) and repeat the challenge session until clipping is eliminated or reduced to an acceptable threshold (<0.1% of samples).

- Note: After establishing the safe range, verify that the resolution is sufficient to detect subtle behaviors by analyzing the signal-to-noise ratio during low-activity periods like quiet rest.
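The clipping check and the range-versus-resolution trade-off can be sketched as below. The 1% tolerance near the range limit and the 12-bit converter are assumptions for illustration; actual values depend on the sensor's datasheet.

```python
import numpy as np


def clipping_fraction(acc_g, range_g, tolerance=0.01):
    """Fraction of samples where any axis sits at (or within tolerance of) the range limit."""
    acc = np.asarray(acc_g, dtype=float)
    limit = range_g * (1.0 - tolerance)
    clipped = np.any(np.abs(acc) >= limit, axis=1)
    return float(clipped.mean())


def lsb_resolution_g(range_g, n_bits):
    """Smallest acceleration step (g) for a +/-range_g sensor with an n_bits converter."""
    return 2.0 * range_g / (2 ** n_bits)


# Synthetic recording at the +/-2 g setting: 1000 samples, 5 of them saturated on X.
acc = np.zeros((1000, 3))
acc[:, 2] = 1.0
acc[100:105, 0] = 2.0

fraction = clipping_fraction(acc, range_g=2.0)
exceeds_limit = fraction > 0.001  # protocol's example threshold of 0.1% of samples
step_2g = lsb_resolution_g(2.0, 12)
step_16g = lsb_resolution_g(16.0, 12)
```

With these assumed settings, moving from ±2 g to ±16 g makes each quantization step eight times coarser, which is the resolution cost the final note in the protocol asks you to verify.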

Protocol 3.3: Comparative Placement for Multi-Behavior Classification

Objective: To quantify the contribution of data from different anatomical placements to the accuracy of a multi-behavior classifier.

Materials: Multiple synchronized accelerometers (or a single multi-node system), harnesses/attachments for collar, back, and limb.

Procedure:

- Simultaneously attach accelerometers to the collar, upper back, and one forelimb of the subject.

- Record synchronized data during a structured behavioral battery (e.g., open field, rotarod, feeding session).

- Generate ground-truth behavior labels via synchronized video scoring by trained observers.

- For each sensor location individually, extract a standard feature set (time-domain: mean, variance, integrals; frequency-domain: spectral power bands, entropy).

- Train and test separate machine learning models (e.g., Random Forest) using features from each single placement.

- Train a final model using feature sets fused from all three placements.

- Compare the precision, recall, and F1-score of each model per behavior to create a "contribution matrix" identifying the optimal placement(s) for each target behavior.
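The final comparison step can be sketched in plain Python. The example computes precision, recall, and F1 per behavior and then picks the placement with the highest F1 for each behavior; the tiny label lists are invented for illustration and stand in for the test-set predictions of the per-placement models.

```python
def per_class_scores(y_true, y_pred):
    """Precision, recall, and F1 for every label present in y_true or y_pred."""
    scores = {}
    for label in sorted(set(y_true) | set(y_pred)):
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        scores[label] = {"precision": precision, "recall": recall, "f1": f1}
    return scores


def best_placement_per_behavior(contribution):
    """For each behavior, the placement whose model reached the highest F1."""
    behaviors = next(iter(contribution.values())).keys()
    return {
        b: max(contribution, key=lambda placement: contribution[placement][b]["f1"])
        for b in behaviors
    }


# Invented ground truth and predictions from two single-placement models.
y_true = ["walk", "walk", "rear", "rear", "groom", "groom"]
predictions = {
    "back": ["walk", "walk", "rear", "walk", "groom", "rear"],
    "limb": ["walk", "rear", "rear", "rear", "groom", "groom"],
}

contribution = {name: per_class_scores(y_true, pred) for name, pred in predictions.items()}
best = best_placement_per_behavior(contribution)
```

The nested `contribution` dictionary is the "contribution matrix" in a simple form: placements as rows, behaviors as columns, and scores as entries. The fused model can be added as one more row.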

Visualization of Decision Pathways and Workflows

Title: Parameter Configuration Decision Pathway

Title: Multi-Sensor Data Fusion Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials for Accelerometer-Based Behavioral Research

| Item / Solution | Function & Rationale |

|---|---|

| Programmable Biotelemetry Implant/Logger (e.g., from Data Sciences Int., Starr Life Sciences) | Enables long-term, high-fidelity data collection from freely moving subjects with minimal stress. Crucial for chronic studies and home-cage monitoring. |

| Multi-Sensor Node System (e.g., Axivity, Opal by APDM) | Provides synchronized sensors for collar, back, and limb placement, essential for comparative placement studies and whole-body kinematic analysis. |

| Bio-Compatible Adhesive & Harness Kits | Secure, subject-safe attachment for external sensors. Minimizes stress artifacts and ensures consistent sensor orientation throughout the experiment. |

| Synchronization Trigger Box | Generates simultaneous timestamp pulses to video and accelerometer data streams, mandatory for creating ground-truth labeled datasets. |

| Open-Source Analysis Software (e.g., DeepLabCut, ezTrack, MARS) | Provides tools for video-based ground truth labeling and/or open-source accelerometer analysis pipelines, promoting reproducibility. |

| Calibration Shaker Table | Device with precisely controlled frequency and displacement to validate sensor gain, range, and frequency response before in vivo use. |

| Data Acquisition Software with Real-Time Preview (e.g., LabChart, Neurologger) | Allows researchers to visually confirm signal quality (no clipping, adequate SNR) during setup and pilot studies, preventing failed experiments. |

Integrating Accelerometry with Other Modalities (Video, EMG, Circadian Tracking)

Within the broader thesis on accelerometer data analysis for behavioral classification, a unimodal approach proves limiting. Accelerometry provides robust, continuous quantification of gross movement and posture but lacks specificity regarding movement type, underlying muscle activation, or the circadian context of behavior. This application note details protocols for the multimodal integration of accelerometry with video recording, electromyography (EMG), and circadian tracking to generate a high-resolution, biologically contextualized profile of behavior for research and drug development applications.

Application Notes & Synergistic Value

Table 1: Synergistic Value of Multimodal Integration with Accelerometry

| Modality | Primary Data | Limitations Alone | Value When Integrated with Accelerometry |

|---|---|---|---|

| Tri-Axial Accelerometry | Body acceleration (g), posture/inactivity. | Cannot classify specific behaviors (e.g., grooming vs. scratching); blind to muscle activation. | Core temporal stream for movement detection and volume. Serves as the alignment timestamp for all other signals. |

| Video Recording | Visual ethogram, kinematic detail, environmental context. | Labor-intensive manual scoring; prone to observer bias; poor in darkness. | Enables supervised machine learning: accelerometer patterns are labeled via video to train automated classifiers for specific behaviors (e.g., seizures, gait anomalies). |

| Electromyography (EMG) | Electrical activity of specific muscles (mV). | Invasive; limited to targeted muscles; does not describe whole-body movement. | Provides mechanistic causation for accelerometer-derived movements. Distinguishes between passive (e.g., being moved) and active (muscle-driven) movement. |

| Circadian Tracking | Light exposure, core body temperature, melatonin/salivary cortisol rhythms. | Describes timing but not the physical manifestation of behavior. | Contextualizes accelerometer-measured activity bouts within the subject's endogenous rhythm. Critical for assessing drug effects on circadian behavior (e.g., sedation vs. true rhythm disruption). |

Experimental Protocols

Protocol: Synchronized Accelerometry, Video, and EMG for Rodent Behavior Classification

Objective: To train an automated classifier for specific, drug-relevant behaviors (e.g., tremor, compulsive grooming).

Materials & Synchronization:

- Implantable or surface EMG system (e.g., Delsys).

- Tri-axial accelerometer (e.g., ADXL337) secured to the subject or implanted.

- High-definition video camera with IR capability for dark cycle recording.

- Central Synchronization Unit: A data acquisition system (e.g., Spike2, LabChart) or microcontroller (e.g., Arduino) that records all analog/digital signals on a single timeline. A shared TTL pulse sent to all systems at experiment start is mandatory.

Procedure:

- Instrumentation: Implant EMG electrodes into the target muscle (e.g., biceps femoris for hindlimb movement). Securely attach the accelerometer to the subject's torso or base of skull.

- Synchronization: Connect EMG and accelerometer outputs to the central DAQ. Program the DAQ to send a 5V TTL pulse to an LED visible in the video frame at recording start/stop.

- Data Collection: Record baseline activity in the home cage for 60 minutes. Administer test compound or vehicle. Continue recording for a defined period (e.g., 4 hours).

- Video Labeling: Using software (e.g., BORIS, DeepLabCut), an expert ethologist manually labels the onset and offset of target behaviors in the video.

- Data Alignment & Model Training: Using the shared TTL timestamps, align the video labels with the corresponding accelerometer and EMG signal windows. Extract features (e.g., spectral power, signal magnitude area) from the multimodal data stream during labeled events. Use these features to train a machine learning model (e.g., random forest, convolutional neural network) to recognize the behavior from accelerometry/EMG data alone.
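The alignment step can be illustrated with a short sketch. It assumes the shared TTL pulse has been located in both streams (as a video time in seconds and as an accelerometer time in seconds) and that clock drift over the session is negligible; the function names and the synthetic data are hypothetical.

```python
import numpy as np

def video_time_to_sample(t_video, ttl_video_s, ttl_acc_s, fs_acc):
    """Map video timestamps (s) to accelerometer sample indices.

    Both clocks are referenced to the same TTL pulse: ttl_video_s is when
    the LED flash appears in the video, ttl_acc_s is when the pulse appears
    in the accelerometer/DAQ record.
    """
    t_since_ttl = np.asarray(t_video, dtype=float) - ttl_video_s
    return np.round((t_since_ttl + ttl_acc_s) * fs_acc).astype(int)

def extract_labeled_windows(acc, events, ttl_video_s, ttl_acc_s, fs_acc):
    """Return a list of (label, segment) pairs, one per labeled video event."""
    out = []
    for onset, offset, label in events:
        i0, i1 = video_time_to_sample([onset, offset],
                                      ttl_video_s, ttl_acc_s, fs_acc)
        i0, i1 = max(i0, 0), min(i1, len(acc))  # clip to the recording
        if i1 > i0:
            out.append((label, acc[i0:i1]))
    return out

# Synthetic 60 s tri-axial recording at 100 Hz (placeholder data).
fs_acc = 100.0
acc = np.random.default_rng(0).normal(size=(6000, 3))
# Video annotations: (onset_s, offset_s, label) on the video clock.
events = [(12.5, 15.0, "grooming"), (30.0, 31.0, "tremor")]
windows = extract_labeled_windows(acc, events,
                                  ttl_video_s=2.5, ttl_acc_s=0.0,
                                  fs_acc=fs_acc)
```

For long recordings a second pulse at recording stop (as in the synchronization step) allows a linear drift correction between the two clocks, which this sketch omits.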

Protocol: Integrating Accelerometry with Circadian Tracking in Human Studies

Objective: To dissociate acute motor side effects from true circadian rhythm disruption in a clinical drug trial.

Materials:

- Wrist-worn research-grade actigraph (e.g., ActiGraph GT9X Link) with ambient light sensor.

- Salivary cortisol collection kits.

- Sleep/activity diary (digital or paper).

- Synchronization: Time-stamp all data streams to a common clock (e.g., UTC). Actigraph data is typically epoch-aligned (e.g., 60-second epochs).

Procedure:

- Baseline Period (7 days): Subject wears actigraph continuously. Collects salivary cortisol at wake-up, 30 minutes post-wake, and before bed. Completes sleep diary.

- Intervention: Subject begins drug regimen. Continues actigraphy, diary, and cortisol sampling (days 1, 3, and 7 of treatment).

- Analysis:

- Accelerometry: Calculate circadian activity rhythms: Interdaily Stability (IS), Intradaily Variability (IV), and Relative Amplitude (RA) using non-parametric methods.

- Circadian Markers: Plot diurnal cortisol profiles. Calculate dim-light melatonin onset (DLMO) if data collected.

- Integration: Correlate changes in accelerometer-derived RA with shifts in cortisol peak or DLMO. Use the sleep diary to validate accelerometer-derived sleep periods and contextualize daytime activity drops (sedation vs. rhythm shift).
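A minimal sketch of the non-parametric rhythm metrics named in the analysis step is given below. It assumes activity has already been aggregated to hourly values over whole days and uses the commonly cited definitions (IS and IV as variance ratios, RA from the most active 10 h and least active 5 h of the average daily profile); the function name and the synthetic week of data are illustrative only.

```python
import numpy as np

def nonparametric_circadian(hourly, hours_per_day=24):
    """Compute IS, IV and RA from an hourly activity series."""
    x = np.asarray(hourly, dtype=float)
    n_days = len(x) // hours_per_day
    x = x[: n_days * hours_per_day]          # keep whole days only
    n = x.size
    grand_mean = x.mean()
    total_ss = np.sum((x - grand_mean) ** 2)

    # Average 24 h profile across days.
    profile = x.reshape(n_days, hours_per_day).mean(axis=0)

    # Interdaily stability: variance of the average profile vs. total variance.
    IS = (n * np.sum((profile - grand_mean) ** 2)) / (hours_per_day * total_ss)
    # Intradaily variability: hour-to-hour changes vs. total variance.
    IV = (n * np.sum(np.diff(x) ** 2)) / ((n - 1) * total_ss)

    # Circular rolling means over the average profile for M10 and L5.
    wrapped = np.concatenate([profile, profile])
    csum = np.cumsum(np.insert(wrapped, 0, 0.0))

    def rolling_mean(width):
        return (csum[width:width + hours_per_day] - csum[:hours_per_day]) / width

    m10 = rolling_mean(10).max()   # most active 10 consecutive hours
    l5 = rolling_mean(5).min()     # least active 5 consecutive hours
    RA = (m10 - l5) / (m10 + l5)
    return {"IS": IS, "IV": IV, "RA": RA}

# Synthetic week: 8 h of rest followed by 16 h of activity, repeated daily.
day = np.array([0.0] * 8 + [100.0] * 16)
hourly_counts = np.tile(day, 7)
metrics = nonparametric_circadian(hourly_counts)
```

A perfectly repeating day gives IS and RA at their upper limit; fragmentation of the rest-activity pattern raises IV, and a flattened day-night difference lowers RA.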

Diagrams

Diagram 1: Multimodal Integration & Analysis Workflow

Diagram 2: Circadian Signaling & Accelerometry Relationship

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Multimodal Studies

| Item | Example Product/Supplier | Function in Multimodal Research |

|---|---|---|

| Research Actigraph | ActiGraph GT9X Link, CamNtech MotionWatch | Provides calibrated, raw tri-axial accelerometry synchronized with light and other sensors. Essential for circadian analysis. |

| Miniature Implantable Telemetry | Data Sciences International (DSI) HD-X02, Kaha Sciences | Enables simultaneous collection of ACC, EMG, EEG, and temperature from freely moving rodents, with built-in synchronization. |

| Central DAQ & Sync System | Spike2 (CED), LabChart (ADInstruments), Ni-DAQ (National Instruments) | Hardware/software platform to acquire, synchronize, and visualize multiple analog/digital data streams in real time. |

| Video Annotation Software | BORIS, DeepLabCut, EthoVision XT | Creates ground-truth labels from video for supervised machine learning. Critical for training behavioral classifiers. |

| Salivary Cortisol/Melatonin Kit | Salimetrics, DRG International | Non-invasive collection of circadian phase markers for integration with actigraphy-derived activity rhythms. |

| Time-Sync Pulse Generator | Custom Arduino setup, Black Box Toolkit | Generates precise TTL pulses sent to all recording devices to establish a common, millisecond-accurate timebase. |

| Multimodal Analysis Software | MATLAB with Toolboxes, Python (Pandas, SciPy, scikit-learn) | Custom scripting environment for aligning data streams, extracting multimodal features, and training classification models. |

Building the Pipeline: A Step-by-Step Guide to Processing and Classifying Behavioral Data

This application note details the critical initial phase of accelerometer data processing within a broader thesis on behavioral classification for preclinical research. Accurate classification of animal behaviors (e.g., rearing, grooming, locomotion) from raw accelerometer signals is fundamental to assessing the efficacy and safety of novel pharmacological compounds in drug development. The reliability of downstream classification models is entirely dependent on rigorous pre-processing, which includes filtering noise, calibrating signals, and segmenting data into analyzable epochs.

Accelerometer data is typically acquired from wearable devices (e.g., collars, harnesses) or implanted telemetry sensors in rodent models. Data logging can be continuous or event-triggered. Key parameters from recent literature are summarized below.

Table 1: Common Accelerometer Acquisition Parameters in Preclinical Research

| Parameter | Typical Range/Value | Rationale |

|---|---|---|

| Sampling Rate | 50-100 Hz | Balances temporal resolution with data storage and processing load for rodent behaviors. |

| Bit Resolution | 12-16 bit | Determines dynamic range for capturing subtle and vigorous movements. |

| Axes | 3 (X, Y, Z) | Essential for capturing movement in three-dimensional space. |

| Range | ±2g to ±16g | Selected based on expected acceleration magnitude of the species and behavior. |

| Data Format | .csv, .mat, .edf | Standard formats for analysis in platforms like Python (Pandas/NumPy) or MATLAB. |

Protocols for Pre-processing

Protocol 1: Noise Filtering

Objective: Remove high-frequency electronic noise and low-frequency drift not associated with behavior.

Materials & Reagents:

- Raw Accelerometer Time-Series Data: Tri-axial (X, Y, Z) signals.

- Digital Signal Processing Software: Python (SciPy, NumPy) or MATLAB.

- Filter Design Tool: SciPy `signal` module or MATLAB Signal Processing Toolbox.

Methodology:

- Visual Inspection: Plot raw signals to identify obvious artifacts or clipping.

- Band-Pass Filter Application:

- Design a 4th-order zero-lag Butterworth band-pass filter.

- Set cut-off frequencies: High-pass: 0.1 Hz (removes baseline wander); Low-pass: 20 Hz (removes high-frequency noise). The exact low-pass value should be less than half the sampling rate (Nyquist criterion).

- Apply the filter forward and backward (`filtfilt` function) to eliminate phase distortion.

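- Notch Filter (Optional): Apply a 50/60 Hz notch filter if powerline interference is present in the data. This is only relevant when the sampling rate is more than twice the powerline frequency and the low-pass cut-off lies above it; with a 20 Hz low-pass, powerline components are already attenuated.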
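The band-pass step can be sketched with SciPy as below. Two choices here are assumptions rather than part of the protocol: the filter is built in second-order sections and applied with `sosfiltfilt` (the SOS counterpart of `filtfilt`, generally better conditioned for very low cut-offs such as 0.1 Hz), and the test signal is synthetic. Note that forward-backward filtering squares the magnitude response, so the effective roll-off is steeper than the nominal order suggests.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def bandpass_zero_lag(acc, fs, low_hz=0.1, high_hz=20.0, order=4):
    """Zero-phase Butterworth band-pass applied along the time axis.

    acc : array of shape (n_samples, n_axes)
    fs  : sampling rate in Hz
    """
    nyquist = fs / 2.0
    if not 0 < low_hz < high_hz < nyquist:
        raise ValueError("cut-offs must satisfy 0 < low < high < fs/2")
    sos = butter(order, [low_hz, high_hz], btype="bandpass",
                 fs=fs, output="sos")
    # Forward and backward pass cancels the phase shift.
    return sosfiltfilt(sos, acc, axis=0)

# Synthetic signal: 1 g static offset + 2 Hz movement + 40 Hz noise.
fs = 100.0
t = np.arange(0, 30, 1 / fs)
one_axis = (1.0
            + 0.5 * np.sin(2 * np.pi * 2 * t)
            + 0.2 * np.sin(2 * np.pi * 40 * t))
acc = np.column_stack([one_axis, one_axis, one_axis])
filtered = bandpass_zero_lag(acc, fs)
```

After filtering, the static offset and the 40 Hz component are suppressed while the 2 Hz movement component passes essentially unchanged.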

Protocol 2: Calibration & Normalization

Objective: Standardize signal amplitude to gravitational units (g) and correct for sensor orientation bias.

Materials & Reagents:

- Filtered Accelerometer Data.

- Reference Calibration Values: Known static positions (e.g., sensor placed with each axis sequentially aligned with gravity).

- Rotation Matrices (if needed for orientation correction).

Methodology:

- Static Calibration:

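- Isolate data segments where the subject is known to be stationary. Use signals that have not been high-pass filtered for this step, because the 0.1 Hz high-pass stage in Protocol 1 removes the static gravity component that calibration relies on.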

- For each axis, calculate the mean value during stationary periods. The known gravitational vector (e.g., +1g or -1g) is used to derive scaling (gain) and offset (bias) coefficients.

- Apply the linear transformation: `Signal_calibrated = (Signal_raw - Offset) * Gain`.

- Vector Magnitude Calculation:

- Compute the Signal Vector Magnitude (SVM) = √(X² + Y² + Z²). This metric is less sensitive to sensor orientation and is useful for overall activity analysis.

- Tilt Correction (Optional): Use accelerometer data during stationary periods to estimate the orientation of the animal's body relative to the global vertical and apply rotational correction.
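As a sketch of the static calibration and vector magnitude steps, the example below derives per-axis offset and gain from two reference readings per axis (axis aligned with +1 g and with -1 g) and then applies the linear transformation. The raw count values are hypothetical, and the two-point scheme is one simple way to obtain the coefficients, not necessarily the one used in a given study.

```python
import numpy as np

def gain_offset_from_static(mean_pos_1g, mean_neg_1g):
    """Per-axis offset and gain from mean readings at +1 g and -1 g."""
    offset = (mean_pos_1g + mean_neg_1g) / 2.0   # reading at 0 g
    gain = 2.0 / (mean_pos_1g - mean_neg_1g)     # g per raw unit
    return offset, gain

def calibrate(raw, offset, gain):
    """Signal_calibrated = (Signal_raw - Offset) * Gain, per axis."""
    return (raw - offset) * gain

def signal_vector_magnitude(acc_g):
    """SVM = sqrt(X^2 + Y^2 + Z^2) for each sample."""
    return np.sqrt(np.sum(acc_g ** 2, axis=1))

# Hypothetical mean raw counts measured on a calibration jig.
mean_pos = np.array([2560.0, 2570.0, 2550.0])   # each axis at +1 g
mean_neg = np.array([1536.0, 1546.0, 1526.0])   # each axis at -1 g
offset, gain = gain_offset_from_static(mean_pos, mean_neg)

# One raw sample with the sensor lying flat (Z axis aligned with gravity).
raw = np.array([[2048.0, 2058.0, 2550.0]])
acc_g = calibrate(raw, offset, gain)
svm = signal_vector_magnitude(acc_g)
```

A stationary, well-calibrated sensor should report an SVM close to 1 g regardless of orientation, which makes this a convenient sanity check.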

Protocol 3: Segmentation

Objective: Divide the continuous time-series into meaningful windows for feature extraction.

Materials & Reagents:

- Calibrated Accelerometer Data.

- Time-Series Segmentation Algorithm.

Methodology:

- Window Type Selection:

- Fixed Windows: Simple, non-overlapping windows (e.g., 1-5 seconds). Suitable for general activity profiling.

- Event-Driven Windows: Windows triggered by detected events (e.g., SVM exceeding a threshold). More computationally complex but behaviorally relevant.

- Protocol for Fixed Window Segmentation:

- Define window length (e.g., 2 seconds) and step size.

- For a 100 Hz signal, a 2-second window contains 200 samples per axis.

- If using overlapping windows, a step size of half the window length (e.g., 1 second for a 2-second window, giving 50% overlap) is common to increase training data for machine learning models.

- Ensure each window is labeled with the corresponding behavioral state (e.g., "grooming," "rearing") from synchronized video observation for supervised learning.
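The fixed-window procedure can be sketched as follows. The per-sample label array and the majority-vote rule for assigning one label per window are assumptions made for illustration; windows that straddle a behavior transition may instead be discarded, depending on the study design.

```python
import numpy as np
from collections import Counter

def sliding_windows(acc, labels, fs, window_s=2.0, step_s=1.0):
    """Cut a (n_samples, n_axes) signal into fixed windows.

    Returns an array of shape (n_windows, window_len, n_axes) and a list
    with the majority label of each window.
    """
    win = int(round(window_s * fs))
    step = int(round(step_s * fs))
    segments, seg_labels = [], []
    for start in range(0, len(acc) - win + 1, step):
        segments.append(acc[start:start + win])
        window_labels = labels[start:start + win]
        seg_labels.append(Counter(window_labels).most_common(1)[0][0])
    return np.stack(segments), seg_labels

# Synthetic 10 s recording at 100 Hz with per-sample video labels.
fs = 100
acc = np.random.default_rng(0).normal(size=(1000, 3))
labels = ["rest"] * 450 + ["groom"] * 550
X, y = sliding_windows(acc, labels, fs, window_s=2.0, step_s=1.0)
```

With a 2-second window and a 1-second step, each window holds 200 samples per axis and shares half of them with its neighbor.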

The Scientist's Toolkit

Table 2: Essential Research Reagents & Solutions for Accelerometer Pre-processing

| Item | Function/Description |

|---|---|

| Tri-axial Accelerometer Sensor | Core hardware for capturing linear acceleration in three orthogonal dimensions. Implantable or wearable form factors. |

| Telemetry Receiver/Data Logger | Receives and stores transmitted sensor data from freely moving animals. |

| Calibration Jig | A physical apparatus to hold the sensor in precise orientations (±1g, 0g) for determining gain and offset. |

| Digital Filter Design Software (SciPy, MATLAB) | Provides algorithms for designing and applying noise-filtering digital filters (e.g., Butterworth). |

| Time-Synchronized Video Recording System | The gold standard for ground-truth behavioral labeling of accelerometer data segments. |

| Data Analysis Environment (Python/R/MATLAB) | Platform for scripting the entire pre-processing pipeline, ensuring reproducibility. |

Visualizations

Title: Accelerometer Data Pre-processing Workflow

Title: Role of Pre-processing in Behavioral Classification Thesis

Within the thesis on accelerometer data analysis for behavioral classification in preclinical research, feature engineering is a critical preprocessing step. It transforms raw tri-axial (X, Y, Z) acceleration signals into a quantitative feature set that machine learning models can use to classify distinct behavioral states (e.g., locomotion, rearing, grooming, resting). This process is foundational for phenotyping in neuropharmacological studies and assessing drug efficacy or side effects in rodent models.

Core Feature Domains: Protocols & Application Notes

Features are extracted from fixed-length, non-overlapping epochs of raw accelerometer data (e.g., 1-5 second windows). The following domains are systematically explored.

Time-Domain Features

These features capture the amplitude, variability, and shape of the signal distribution over time.

Experimental Protocol:

- Data Segmentation: Segment the continuous raw acceleration vector \(\mathbf{a}(t) = [a_x(t), a_y(t), a_z(t)]\) into epochs \(E_k\) of duration \(T\) (e.g., 2 seconds).

- Signal Magnitude Calculation: For each epoch, compute the Signal Magnitude Vector (SMV) or L2-norm: \(SMV(t) = \sqrt{a_x(t)^2 + a_y(t)^2 + a_z(t)^2}\).

- Feature Computation: Calculate the following for each axis (\(a_x, a_y, a_z\)) and the SMV within each epoch \(E_k\).

Key Time-Domain Metrics Table:

| Feature Name | Mathematical Formula | Physiological/Behavioral Interpretation |

|---|---|---|

| Mean | \(\mu = \frac{1}{N}\sum_{i=1}^{N} s_i\) | Average acceleration level; indicates posture or sustained movement. |

| Standard Deviation | \(\sigma = \sqrt{\frac{1}{N}\sum_{i=1}^{N} (s_i - \mu)^2}\) | Magnitude of movement variability. |

| Root Mean Square | \(RMS = \sqrt{\frac{1}{N}\sum_{i=1}^{N} s_i^2}\) | Overall signal energy. |

| Peak Amplitude | \(\max(\lvert s_i \rvert)\) | Intensity of the most vigorous movement in the epoch. |

| Minimum Amplitude | \(\min(s_i)\) | Baseline or opposing force measurement. |

| Signal Magnitude Area | \(SMA = \frac{1}{N}\sum_{i=1}^{N} (\lvert a_{x,i} \rvert + \lvert a_{y,i} \rvert + \lvert a_{z,i} \rvert)\) | Gross motor activity index. |

| Correlation between Axes | \(\rho_{xy} = \frac{\mathrm{cov}(a_x, a_y)}{\sigma_{a_x}\sigma_{a_y}}\) | Coordination of movement across planes. |

| Zero-Crossing Rate | \(ZCR = \frac{1}{N}\sum_{i=1}^{N-1} \mathbb{1}\{s_i \, s_{i+1} < 0\}\) | Frequency of directional changes in acceleration. |

| Interquartile Range | \(IQR = Q_3 - Q_1\) | Spread of the central portion of data, robust to outliers. |
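The time-domain metrics in the table can be computed per epoch roughly as sketched below. The function and key names are illustrative. One deliberate assumption: the zero-crossing rate is computed on the mean-removed signal, since an axis carrying a gravity offset may otherwise never cross zero.

```python
import numpy as np

def time_domain_features(epoch):
    """Compute time-domain features for one epoch of shape (N, 3)."""
    ax, ay, az = epoch.T
    smv = np.sqrt(ax ** 2 + ay ** 2 + az ** 2)
    feats = {}
    for name, s in {"x": ax, "y": ay, "z": az, "smv": smv}.items():
        q1, q3 = np.percentile(s, [25, 75])
        centered = s - s.mean()
        feats[f"{name}_mean"] = s.mean()
        feats[f"{name}_std"] = s.std()                 # 1/N normalisation
        feats[f"{name}_rms"] = np.sqrt(np.mean(s ** 2))
        feats[f"{name}_peak"] = np.max(np.abs(s))
        feats[f"{name}_min"] = s.min()
        feats[f"{name}_iqr"] = q3 - q1
        # Sign changes between consecutive samples (mean removed first).
        feats[f"{name}_zcr"] = np.sum(centered[:-1] * centered[1:] < 0) / s.size
    feats["sma"] = np.mean(np.abs(ax) + np.abs(ay) + np.abs(az))
    feats["corr_xy"] = np.corrcoef(ax, ay)[0, 1]
    return feats

# Synthetic 2 s epoch at 100 Hz: 2 Hz oscillation on X and Y, gravity on Z.
t = np.arange(200) / 100.0
osc = np.sin(2 * np.pi * 2 * t + 0.3)
epoch = np.column_stack([osc, osc, np.ones_like(t)])
features = time_domain_features(epoch)
```

Stacking the resulting dictionaries across epochs (for example into a pandas DataFrame) yields the feature table used for model training.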

Frequency-Domain Features

These features describe the periodicity and spectral power distribution of the signal, useful for identifying rhythmic behaviors (e.g., tremors, gait cycles).

Experimental Protocol:

- Preprocessing: Apply a Hanning window to each epoch \(E_k\) to reduce spectral leakage.

- Transform: Compute the Fast Fourier Transform (FFT) for each axis and the SMV: \(F(f) = \mathcal{F}\{s(t)\}\).

- Spectral Calculation: Compute the Power Spectral Density (PSD) using the periodogram: \(PSD(f) = \frac{1}{f_s N} \lvert F(f) \rvert^2\), where \(f_s\) is the sampling frequency.

- Band Definition: Define physiologically relevant frequency bands (e.g., 0-1 Hz for slow movement, 1-10 Hz for ambulation, 10-20 Hz for tremor).

Key Frequency-Domain Metrics Table:

| Feature Name | Calculation Method | Behavioral Interpretation |

|---|---|---|

| Dominant Frequency | \(f_{dom} = \arg\max_f PSD(f)\) | The most prominent rhythmic component in the movement. |

| Spectral Entropy | \(H = -\sum_{f} PSD_n(f) \log_2 PSD_n(f)\); \(PSD_n\) normalized | Regularity of the activity; constant motion has low entropy. |

| Band Energy | \(E_{band} = \sum_{f \in band} PSD(f)\) | Total power in a behaviorally relevant band. |

| Band Energy Ratio | \(E_{ratio} = E_{band} / E_{total}\) | Relative importance of a specific band. |

| Spectral Centroid | \(\bar{f} = \sum_{f} f \cdot PSD_n(f)\) | "Center of mass" of the spectrum; indicates movement pace. |

| Spectral Flatness | \(\frac{\exp(\frac{1}{N}\sum \ln(PSD(f)))}{\frac{1}{N}\sum PSD(f)}\) | Distinguishes tonal from noisy signals (e.g., tremor vs. fidgeting). |
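A compact sketch of the frequency-domain protocol and metrics follows. The epoch mean is removed before windowing so the static gravity offset does not dominate the spectrum; that step, the small constant added inside the logarithm, and the function name are assumptions made for this illustration.

```python
import numpy as np

def frequency_domain_features(s, fs, bands=((0, 1), (1, 10), (10, 20))):
    """Spectral features of one single-channel epoch sampled at fs Hz."""
    s = np.asarray(s, dtype=float)
    n = s.size
    windowed = (s - s.mean()) * np.hanning(n)     # Hanning window
    spectrum = np.fft.rfft(windowed)
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    psd = (np.abs(spectrum) ** 2) / (fs * n)      # periodogram
    total = psd.sum()
    psd_n = psd / total                           # normalised to sum to 1
    nonzero = psd_n[psd_n > 0]                    # avoid log2(0)
    feats = {
        "dominant_freq": freqs[np.argmax(psd)],
        "spectral_entropy": -np.sum(nonzero * np.log2(nonzero)),
        "spectral_centroid": np.sum(freqs * psd_n),
        # Geometric mean over arithmetic mean of the PSD.
        "spectral_flatness": np.exp(np.mean(np.log(psd + 1e-20))) / np.mean(psd),
    }
    for lo, hi in bands:
        mask = (freqs >= lo) & (freqs < hi)
        feats[f"band_{lo}_{hi}_ratio"] = psd[mask].sum() / total
    return feats

# Synthetic 2 s epoch at 100 Hz containing a 5 Hz rhythmic movement.
fs = 100.0
t = np.arange(200) / fs
epoch_signal = np.sin(2 * np.pi * 5 * t)
spec = frequency_domain_features(epoch_signal, fs)
```

With a 2-second epoch the frequency resolution is 0.5 Hz, which bounds how finely the band edges can be placed; longer epochs trade temporal resolution for spectral resolution.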

Statistical & Nonlinear Metrics

These features capture the dynamic complexity and distribution characteristics of the signal.

Experimental Protocol:

- Data Preparation: Use the demeaned signal from a single epoch.

- Feature Computation: Apply statistical and information-theoretic algorithms.

Key Statistical Metrics Table:

| Feature Name | Mathematical Formula/Description | Application Note |

|---|---|---|

| Skewness | \(\frac{\frac{1}{N}\sum (s_i-\mu)^3}{\sigma^3}\) | Asymmetry of the distribution. Sensitive to sudden jerks. |

| Kurtosis | \(\frac{\frac{1}{N}\sum (s_i-\mu)^4}{\sigma^4}\) | "Tailedness." High kurtosis may indicate rare, intense movements. |

| Sample Entropy | \(SampEn(m, r, N) = -\ln\frac{A}{B}\), where A = number of template matches for m+1 points and B = number for m points. | Regularity and complexity. Lower values indicate more self-similarity. |

| Hurst Exponent (H) | Estimated via Rescaled Range (R/S) analysis. H = 0.5 (random), 0.5 < H < 1 (persistent), 0 < H < 0.5 (anti-persistent). | Long-range correlations in the activity time series. |

| Mean Absolute Deviation | \(MAD = \frac{1}{N}\sum \lvert s_i - \mu \rvert\) | Robust measure of dispersion. |

| Higuchi Fractal Dimension | Approximates the fractal dimension of the time series directly in the time domain. | Quantifies the complexity of the movement trajectory. |
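Some of these metrics can be sketched in plain NumPy as below. The sample entropy routine follows the usual construction (Chebyshev distance, self-matches excluded, the same number of templates for both lengths) with the commonly used defaults m = 2 and r = 0.2 times the standard deviation; those defaults and the function names are assumptions, and the pairwise-distance approach is only practical for short epochs.

```python
import numpy as np

def distribution_features(s):
    """Skewness, kurtosis and mean absolute deviation of one epoch."""
    s = np.asarray(s, dtype=float)
    mu, sigma = s.mean(), s.std()
    z = (s - mu) / sigma
    return {
        "skewness": np.mean(z ** 3),
        "kurtosis": np.mean(z ** 4),
        "mad": np.mean(np.abs(s - mu)),
    }

def sample_entropy(s, m=2, r_factor=0.2):
    """SampEn(m, r, N) = -ln(A / B) with r = r_factor * std(s)."""
    s = np.asarray(s, dtype=float)
    n = s.size
    r = r_factor * s.std()

    def count_matches(length):
        # n - m templates for both lengths, so A and B are comparable.
        templates = np.array([s[i:i + length] for i in range(n - m)])
        dist = np.max(np.abs(templates[:, None, :] - templates[None, :, :]),
                      axis=2)
        # Remove self-matches on the diagonal and count each pair once.
        return (np.sum(dist <= r) - len(templates)) / 2

    b = count_matches(m)
    a = count_matches(m + 1)
    if a == 0 or b == 0:
        return np.inf
    return -np.log(a / b)

# Compare a regular 5 Hz oscillation with white noise (2 s at 100 Hz).
rng = np.random.default_rng(0)
t = np.arange(200) / 100.0
sine = np.sin(2 * np.pi * 5 * t)
noise = rng.normal(size=200)
stats_sine = distribution_features(sine)
sampen_sine = sample_entropy(sine)
sampen_noise = sample_entropy(noise)
```

The regular oscillation yields a lower sample entropy than the noise, which is the property that makes the metric useful for separating rhythmic behaviors from irregular ones.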

Visualizing the Feature Engineering Workflow

Diagram Title: Feature Engineering Workflow for Behavioral Classification

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Accelerometer-Based Behavioral Research |

|---|---|

| Implantable/Telemetered Accelerometer (e.g., HD-X02, Data Sciences Int.) | Miniaturized device surgically implanted in rodents to capture high-fidelity, tri-axial acceleration data in a home-cage, minimizing stress artifacts. |

| High-Sampling Rate DAQ System (>100 Hz) | Ensures Nyquist criterion is met for capturing rapid movements (e.g., tremor, startle) without aliasing. |

| Behavioral Observation Software (e.g., Noldus EthoVision, ANY-maze) | Provides ground-truth video scoring for supervised machine learning, enabling labeled datasets for model training. |

| Digital Signal Processing Library (SciPy, MATLAB Signal Proc. Toolbox) | Essential for implementing FFT, filtering, and complex feature extraction algorithms reliably and reproducibly. |

| Feature Selection Toolbox (e.g., scikit-learn SelectKBest, RFECV) | Addresses the "curse of dimensionality" by identifying the most discriminative features from the large engineered set. |

| Standardized Behavioral Arena | A controlled environment (e.g., open field, home cage) to elicit and record species-typical behaviors under consistent conditions. |

| Pharmacological Reference Compounds (e.g., Amphetamine, Clozapine) | Established psychoactive agents used as positive/negative controls to validate the sensitivity of the feature set to drug-induced behavioral changes. |

| Computational Environment (Jupyter Notebook, R Markdown) | Facilitates reproducible analysis pipelines, integrating data loading, feature engineering, and model training in a single document. |

In behavioral classification research using accelerometer data, the choice of machine learning paradigm dictates the hypothesis-testing framework. Supervised learning is employed when distinct behavioral states (e.g., "grooming," "tremor," "rearing") are pre-defined and labeled, enabling the model to learn mappings from raw or processed accelerometry signals to these known classes. This is critical for quantifying specific behaviors in pharmacological studies. Unsupervised learning is used for discovery-driven research, where latent patterns, novel behavioral phenotypes, or unanticipated drug effects are identified without a priori labels, such as segmenting continuous activity into discrete, meaningful motifs.

Table 1: Core Characteristics and Applications

| Feature | Supervised Learning | Unsupervised Learning |

|---|---|---|

| Primary Goal | Learn a function to map inputs (features) to known, labeled outputs. | Discover intrinsic patterns, structures, or groupings within input data. |

| Data Requirement | Requires a labeled dataset (X, y). | Requires only unlabeled data (X). |

| Common Algorithms | Random Forest, Support Vector Machines (SVM), Gradient Boosting, Logistic Regression. | k-Means Clustering, Hierarchical Clustering, DBSCAN, Principal Component Analysis (PCA), Autoencoders. |

| Typical Output | Predictive model for behavioral class. | Clusters, latent dimensions, or a lower-dimensional representation. |

| Validation Metric | Accuracy, Precision, Recall, F1-Score, ROC-AUC. | Silhouette Score, Calinski-Harabasz Index, Davies-Bouldin Index, reconstruction error. |

| Role in Behavioral Research | Classification: Assigning pre-defined labels (e.g., "sleep" vs. "active"). Regression: Predicting continuous scores (e.g., activity intensity). | Behavioral Phenotyping: Identifying novel, ethologically relevant behavior clusters. Dimensionality Reduction: Visualizing high-dimensional feature space for outlier detection. |

| Advantages | High predictive accuracy for target variables; results are directly interpretable in the context of known behaviors. | No need for costly/manual labeling; can reveal unexpected patterns or subtypes of behavior. |

| Disadvantages | Dependent on quality and scope of human labeling; cannot identify novel classes outside the training labels. | Results can be ambiguous and harder to validate; often requires post-hoc interpretation by domain experts. |

Experimental Protocols

Protocol 3.1: Supervised Classification of Drug-Induced Behaviors

Objective: To train and validate a classifier that distinguishes between saline-treated and drug-treated (e.g., psychostimulant) animal states based on tri-axial accelerometer data.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Acquisition & Labeling:

- Record tri-axial accelerometer data (e.g., at 100 Hz) from subjects in controlled trials (saline vs. drug).

- Synchronize video recordings with accelerometer streams.

- Annotate video data to create ground-truth labels for distinct behavioral epochs (e.g., "stationary," "ambulation," "stereotypy"). Use an annotation software (e.g., BORIS).

- Feature Engineering:

- Segment the continuous accelerometer signal into fixed-length windows (e.g., 1-5 seconds with 50% overlap).

- For each axis (X, Y, Z) and the vector magnitude `VM = sqrt(x² + y² + z²)`, calculate features per window:

- Time-domain: Mean, variance, skewness, kurtosis, zero-crossing rate.

- Frequency-domain: Spectral centroid, bandwidth, energy in 0-5Hz band (via FFT).

- Time-frequency: Wavelet coefficients (e.g., Daubechies 4).

- Compile features into a tabular dataset of shape `(n_samples, n_features)`, aligned with the window's majority label.

- Model Training & Validation:

- Split data into training (70%), validation (15%), and hold-out test (15%) sets. Maintain class balance via stratification.

- Standardize features using `StandardScaler` (fit on training, transform all sets).

- Train a Random Forest Classifier on the training set. Optimize hyperparameters (e.g., `n_estimators`, `max_depth`) using cross-validated grid search on the validation set.

- Evaluate the final model on the held-out test set, reporting Accuracy, Precision, Recall, F1-Score per class, and the confusion matrix.
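The training and validation steps can be sketched with scikit-learn as below. The feature table is synthetic, and one simplification is made on purpose: instead of a fixed 15% validation set, 5-fold cross-validation on the non-test portion is used for the grid search. When windows overlap or several animals contribute data, splitting by animal or session (for example with a grouped splitter) is generally advisable to avoid leakage; that is not shown here.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import classification_report, confusion_matrix
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the (n_samples, n_features) feature table.
rng = np.random.default_rng(0)
classes = ["stationary", "ambulation", "stereotypy"]
n_per_class, n_features = 100, 6
X = np.vstack([rng.normal(loc=3.0 * k, scale=1.0,
                          size=(n_per_class, n_features))
               for k in range(len(classes))])
y = np.repeat(classes, n_per_class)

# Hold out 15% as the final test set, stratified by class.
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.15, stratify=y, random_state=0)

# Scaling lives inside the pipeline, so it is fitted on training folds only.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("rf", RandomForestClassifier(random_state=0)),
])
param_grid = {"rf__n_estimators": [50, 100], "rf__max_depth": [None, 5]}
grid = GridSearchCV(pipe, param_grid, cv=5, scoring="f1_macro")
grid.fit(X_dev, y_dev)

# Final evaluation on the untouched test set.
y_pred = grid.predict(X_test)
report = classification_report(y_test, y_pred)
cm = confusion_matrix(y_test, y_pred, labels=classes)
```

Keeping the scaler inside the pipeline is the design choice that enforces the "fit on training, transform all sets" rule automatically during cross-validation.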

Protocol 3.2: Unsupervised Discovery of Behavioral Motifs

Objective: To identify recurrent, intrinsic behavioral motifs from continuous, unlabeled accelerometer data.

Procedure:

- Data Preprocessing & Dimensionality Reduction:

- Acquire and segment accelerometer data as in Protocol 3.1, Step 2, but without labels.

- Compute a comprehensive feature set for each window.

- Apply Principal Component Analysis (PCA) to the standardized feature matrix to reduce dimensionality, retaining components explaining >95% variance. This denoises the data.

- Clustering for Motif Discovery:

- Apply k-Means clustering to the PCA-reduced data.

- Determine the optimal number of clusters (`k`) using the Elbow Method (plotting within-cluster-sum-of-squares vs. `k`) and the Silhouette Score.

- Assign each data window (and thus its corresponding time segment) a cluster label.

- Post-Hoc Labeling & Validation:

- Review video recordings corresponding to high-purity cluster members (samples closest to cluster centroids).

- Ethologically interpret each cluster to assign a putative behavioral label (e.g., "Cluster 3 = Grooming").

- Validate by checking the temporal consistency of cluster assignments (e.g., do "grooming" clusters form coherent bouts?) and their sensitivity to pharmacological manipulation.
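A minimal sketch of the dimensionality reduction and clustering steps is shown below, using scikit-learn on a synthetic feature matrix with three well-separated groups. The candidate range for `k` and the use of the silhouette score alone to pick it are assumptions; in practice the elbow plot of the stored inertia values would be inspected alongside it.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.metrics import silhouette_score
from sklearn.preprocessing import StandardScaler

# Synthetic unlabeled feature matrix: three hidden "motifs", four features.
rng = np.random.default_rng(1)
centers = np.array([[0, 0, 0, 0],
                    [6, 6, 0, 0],
                    [0, 6, 6, 6]], dtype=float)
X = np.vstack([rng.normal(c, 0.5, size=(80, 4)) for c in centers])

# Standardize, then keep the components explaining at least 95% of variance.
X_std = StandardScaler().fit_transform(X)
X_pca = PCA(n_components=0.95, svd_solver="full").fit_transform(X_std)

# Scan candidate cluster counts; store inertia (elbow) and silhouette.
inertias, silhouettes = {}, {}
for k in range(2, 7):
    km = KMeans(n_clusters=k, n_init=10, random_state=0).fit(X_pca)
    inertias[k] = km.inertia_
    silhouettes[k] = silhouette_score(X_pca, km.labels_)

best_k = max(silhouettes, key=silhouettes.get)
final = KMeans(n_clusters=best_k, n_init=10, random_state=0).fit(X_pca)
window_labels = final.labels_          # one cluster label per window

# Windows closest to each centroid are candidates for video review.
dist_to_centroid = np.linalg.norm(
    X_pca - final.cluster_centers_[window_labels], axis=1)
```

Sorting windows by `dist_to_centroid` within each cluster gives a practical shortlist for the post-hoc video review described above.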

Visualization

Title: ML Workflow for Accelerometer-Based Behavior Analysis

Title: Algorithm Selection Guide for Behavior Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Accelerometer-Based ML Research

| Item/Reagent | Function/Role in Research | Example/Note |

|---|---|---|

| Implantable/Attachable Telemetry Accelerometers | Core data acquisition device. Captures high-frequency (≥50 Hz), tri-axial acceleration data in freely moving subjects. | Examples: Data Sciences International (DSI) HD-X02, Starr Life Sciences, open-source platforms like OpenBCI. |

| Behavioral Annotation Software | Creates ground-truth labels for supervised learning by synchronizing and annotating video recordings. | Examples: BORIS, Noldus Observer, DeepLabCut (for pose estimation as a label source). |

| Signal Processing & Feature Extraction Library | Processes raw accelerometer streams into feature vectors for machine learning. | Primary Tool: Python libraries (SciPy, NumPy, tsfeature for domain-specific features). |

| Machine Learning Framework | Provides algorithms for supervised and unsupervised learning, model evaluation, and hyperparameter tuning. | Primary Tool: Scikit-learn, XGBoost. For deep learning: TensorFlow/PyTorch. |

| Computational Environment | Handles the storage and computational demands of large-scale accelerometry datasets and model training. | Note: Cloud platforms (Google Colab Pro, AWS) or local workstations with significant RAM (>16 GB) and GPU acceleration are often necessary. |

| Validation & Metrics Suite | Quantifies model performance (supervised) or clustering quality (unsupervised) to ensure scientific rigor. | Tools: Scikit-learn's metrics module. Custom scripts for ethological validation of clusters (e.g., bout analysis). |

Within the broader thesis on accelerometer data analysis for behavioral classification, this application note details protocols for quantifying drug effects on key behavioral domains. The integration of continuous, high-resolution accelerometry with traditional observational scoring enables robust, objective, and sensitive measurement of motor function, sedation, and neuropsychiatric behaviors in preclinical models, significantly enhancing the drug development pipeline.

Core Behavioral Domains & Quantitative Metrics

The following table summarizes the primary behavioral domains, their clinical relevance in drug development, and the key quantitative metrics derived from triaxial accelerometer data.

Table 1: Behavioral Domains and Accelerometer-Derived Metrics

| Behavioral Domain | Drug Development Relevance | Primary Accelerometer Metrics | Typical Model/Assay |

|---|---|---|---|

| Motor Function | Efficacy in neurodegenerative (PD, ALS) & movement disorders; motor side-effect profiling. | Total Activity Counts; Ambulatory Bouts & Duration; Movement Velocity (cm/s); Gait Symmetry Index; Power Spectral Density in 1-10 Hz band | Open Field, Rotarod, Gait Analysis (DigiGait) |

| Sedation | Therapeutic sedation (anxiolytics, anesthetics) & unwanted sedative side effects. | Immobility Time (%); Mean Bout Duration of Immobility; Spectral Edge Frequency (shift to lower frequencies); Low-frequency (0.5-4 Hz) Power Increase | Open Field, Loss of Righting Reflex (LORR) |

| Neuropsychiatric (Anxiety/Depression) | Efficacy of antidepressants, anxiolytics; psychotomimetic side effects. | Time in Center vs. Periphery (%); Thigmotaxis Index; Volitional Movement Initiation Latency; Entropy/Regularity of Movement Patterns | Elevated Plus Maze, Forced Swim Test, Social Interaction |

| Stereotypy & Seizure | Antipsychotic efficacy; pro-convulsant risk assessment. | Repetitive Motion Counts; Pattern Autocorrelation; High-Frequency (10-50 Hz) Burst Power & Duration | Apomorphine-induced stereotypy, Pentylenetetrazol (PTZ) challenge |

Detailed Experimental Protocols

Protocol 3.1: Integrated Open Field Test with Continuous Accelerometry

Objective: To simultaneously assess general locomotor activity, exploration (anxiety-like behavior), and sedation in rodents following acute drug administration.

Materials: Open field arena (40cm x 40cm x 40cm), triaxial accelerometer implant (e.g., DSI HD-X02, 10Hz sampling) or collar-mounted tag, video tracking system, data acquisition software.

Procedure:

- Baseline Recording: Place animal (with activated accelerometer) in home cage for 60 min to record baseline activity and acclimatize.

- Drug Administration: Administer vehicle or test compound via predefined route (i.p., p.o., s.c.).

- Open Field Placement: At T0 (time of peak plasma concentration), gently place animal in center of open field. Record simultaneously for 30 minutes using video and accelerometer telemetry.

- Data Acquisition: Acquire accelerometer data (X, Y, Z vectors) at ≥10Hz. Synchronize clock with video tracking software.

- Analysis: Segregate data into 5-minute bins. Calculate:

- Total Activity: Vector magnitude `VM = √(x²+y²+z²)`. Sum deviations from baseline per bin.

- Immobility/Sedation: Percentage of epochs where the VM deviation from baseline is below a threshold (e.g., 0.1g).

- Thigmotaxis: Using video tracking, derive time spent in the peripheral zone (<10cm from walls). Correlate with low-velocity movement bouts from accelerometry.
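The binned activity and immobility calculations can be sketched as follows. Several details are assumptions for illustration: the baseline is taken as the resting vector magnitude (about 1 g for a calibrated sensor), epochs are 1 second long, and an epoch counts as immobile when its mean absolute deviation from baseline falls below the threshold.

```python
import numpy as np

def open_field_metrics(acc_g, fs, baseline_vm, bin_s=300,
                       epoch_s=1.0, threshold_g=0.1):
    """Per-bin total activity and immobility percentage.

    acc_g : (n_samples, 3) calibrated acceleration in g
    Returns (total_activity, immobility_pct), one value per bin.
    """
    vm = np.sqrt(np.sum(acc_g ** 2, axis=1))
    deviation = np.abs(vm - baseline_vm)

    # Average the deviation within each epoch.
    epoch_n = int(round(epoch_s * fs))
    n_epochs = deviation.size // epoch_n
    epoch_dev = (deviation[: n_epochs * epoch_n]
                 .reshape(n_epochs, epoch_n).mean(axis=1))

    # Group epochs into bins (5 minutes by default).
    epochs_per_bin = int(round(bin_s / epoch_s))
    n_bins = n_epochs // epochs_per_bin
    binned = (epoch_dev[: n_bins * epochs_per_bin]
              .reshape(n_bins, epochs_per_bin))

    total_activity = binned.sum(axis=1)
    immobility_pct = 100.0 * (binned < threshold_g).mean(axis=1)
    return total_activity, immobility_pct

# Synthetic 30 min session at 10 Hz: 15 min active, then 15 min motionless.
rng = np.random.default_rng(0)
fs = 10.0
n_half = int(15 * 60 * fs)
gravity = np.array([0.0, 0.0, 1.0])
active = gravity + rng.normal(0, 0.5, size=(n_half, 3))
still = np.tile(gravity, (n_half, 1))
acc_g = np.vstack([active, still])
total_activity, immobility_pct = open_field_metrics(acc_g, fs, baseline_vm=1.0)
```

The threshold and epoch length should be tuned against video-scored immobility in a pilot cohort, since both depend on sensor placement and species.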

Protocol 3.2: Rotarod Performance with Kinetic Acceleration Analysis

Objective: To quantitatively evaluate motor coordination, balance, and fatigue.

Materials: Accelerating rotarod, implantable telemetric accelerometer, high-speed data logger.

Procedure:

- Training: Train animals on rotarod (4-40 rpm over 5 min) for 3 consecutive days until a stable baseline latency to fall is achieved.

- Test Day: The accelerometer should be implanted 7 days before the test day. On the test day, administer drug/vehicle.

- Kinetic Recording: Mount rotarod with wireless receiver. As animal runs, collect high-frequency (100Hz) accelerometer data, focusing on the Z-axis (vertical plane) and Y-axis (anterior-posterior).

- Endpoint: Record latency to fall. Continue recording accelerometer data for 60s post-fall to assess recovery of righting and motor activity.

- Analysis: Calculate:

- Pre-fall Stability Metric: Standard deviation of rhythmic oscillation frequency in the Y-axis.

- Corrective Movement Bursts: Count of high-amplitude, short-duration spikes in Z-axis preceding a fall.

- Fatigue Index: Decline in stride regularity (via autocorrelation) over the trial duration.
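One possible reading of the Fatigue Index is the slope of an autocorrelation-based regularity score across consecutive windows of the trial. The sketch below follows that reading; the function names, the 5 s window, and the 0.1 to 1.0 s stride-lag search range are assumptions for illustration only.

```python
import numpy as np

def stride_regularity(sig, fs, min_lag_s=0.1, max_lag_s=1.0):
    """Peak of the normalized autocorrelation within an assumed stride-lag range."""
    x = np.asarray(sig, dtype=float)
    x = x - x.mean()
    ac = np.correlate(x, x, mode="full")[len(x) - 1:]   # lags 0 .. n-1
    if ac[0] == 0:
        return 0.0                                      # flat signal, no rhythm
    ac = ac / ac[0]                                     # lag-0 value becomes 1
    lo, hi = int(min_lag_s * fs), int(max_lag_s * fs)
    return float(ac[lo:hi + 1].max())

def fatigue_index(sig, fs, win_s=5.0):
    """Linear slope of stride regularity over time (units: regularity per second)."""
    sig = np.asarray(sig, dtype=float)
    n = int(win_s * fs)
    starts = range(0, len(sig) - n + 1, n)              # non-overlapping windows
    reg = np.array([stride_regularity(sig[s:s + n], fs) for s in starts])
    t_mid = (np.arange(len(reg)) + 0.5) * win_s         # window centers in seconds
    slope = np.polyfit(t_mid, reg, 1)[0]
    return slope, reg

# Synthetic check: a 4 Hz stride rhythm that becomes progressively noisier.
rng = np.random.default_rng(1)
fs = 100.0
t = np.arange(int(60 * fs)) / fs
noise_sd = np.linspace(0.1, 1.5, t.size)
y_axis = np.sin(2 * np.pi * 4.0 * t) + rng.normal(0.0, 1.0, t.size) * noise_sd
slope, regularity = fatigue_index(y_axis, fs)
print(round(slope, 4), regularity.round(2))
```

A negative slope indicates declining regularity over the trial. Window length trades temporal resolution against the stability of each autocorrelation estimate.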

Protocol 3.3: Accelerometry-Enhanced Forced Swim Test (FST)

Objective: To objectively differentiate active climbing/swimming from passive floating in antidepressant screening. Materials: Glass cylinder (height 40cm, diameter 20cm), water (25°C), triaxial accelerometer collar, overhead video. Procedure:

- Preparation: Fit animal with waterproofed collar accelerometer. Place in swim tank for 6 min.

- High-Rate Recording: Record accelerometer data at 50Hz to capture fine limb movements.

- Synchronized Observation: Simultaneously record video for traditional manual scoring (immobility time).

- Analysis: Apply a machine learning classifier, trained on labeled accelerometer epochs, to distinguish three states:

- Active Struggle: Characterized by high-frequency, high-amplitude movements in all axes.

- Climbing: Distinct rhythmic, vertical (Z-axis) periodicity.

- Passive Floating: Low variance in VM, with only small corrections from tail/head.
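To make the classification step concrete, the sketch below extracts two per-epoch features that mirror the descriptions above (VM variance and Z-axis rhythmicity) and feeds them to a small nearest-centroid classifier. The nearest-centroid model is a deliberately simple stand-in for whatever classifier a study actually uses; the feature choice, the 2 s epoch length, the class `CentroidClassifier`, and the synthetic signals are all assumptions for illustration.

```python
import numpy as np

def epoch_features(epoch):
    """epoch: (n_samples, 3) in g. Returns [log10 VM variance, Z-axis rhythmicity]."""
    vm = np.sqrt((epoch ** 2).sum(axis=1))
    log_var = np.log10(vm.var() + 1e-12)
    z = epoch[:, 2] - epoch[:, 2].mean()
    power = np.abs(np.fft.rfft(z)) ** 2
    power = power[1:]                                   # drop the DC bin
    rhythmicity = power.max() / (power.sum() + 1e-12)   # share of power in top bin
    return np.array([log_var, rhythmicity])

class CentroidClassifier:
    """Nearest-centroid classifier on z-scored features (illustrative stand-in)."""
    def fit(self, X, y):
        X, y = np.asarray(X, dtype=float), np.asarray(y)
        self.mu_, self.sd_ = X.mean(axis=0), X.std(axis=0) + 1e-12
        Z = (X - self.mu_) / self.sd_
        self.classes_ = np.unique(y)
        self.centroids_ = np.array([Z[y == c].mean(axis=0) for c in self.classes_])
        return self

    def predict(self, X):
        Z = (np.asarray(X, dtype=float) - self.mu_) / self.sd_
        d = ((Z[:, None, :] - self.centroids_[None, :, :]) ** 2).sum(axis=-1)
        return self.classes_[d.argmin(axis=1)]

def synth_epoch(label, rng, fs=50, dur_s=2.0):
    """Synthetic epoch for one behavior label (hypothetical signal shapes)."""
    n = int(fs * dur_s)
    t = np.arange(n) / fs
    e = np.tile([0.0, 0.0, 1.0], (n, 1))
    if label == "floating":
        e += rng.normal(0.0, 0.02, (n, 3))              # small corrections only
    elif label == "climbing":
        e[:, 2] += 0.5 * np.sin(2 * np.pi * 3.0 * t)    # rhythmic vertical motion
        e += rng.normal(0.0, 0.02, (n, 3))
    else:                                               # "struggle"
        e += rng.normal(0.0, 0.6, (n, 3))               # broadband, all axes
    return e

rng = np.random.default_rng(2)
labels = ["floating", "climbing", "struggle"]
def make_set(n_per_class):
    y = np.repeat(labels, n_per_class)
    X = np.array([epoch_features(synth_epoch(lab, rng)) for lab in y])
    return X, y

X_train, y_train = make_set(30)
X_test, y_test = make_set(30)
clf = CentroidClassifier().fit(X_train, y_train)
accuracy = (clf.predict(X_test) == y_test).mean()
print(accuracy)
```

With real data, the training labels would come from the synchronized manual video scoring, and performance should be assessed on animals not used for training.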

Diagrams

Title: Workflow for Accelerometer-Based Behavioral Classification

Title: Drug Effect to Accelerometer Signal Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Accelerometer-Based Behavioral Pharmacology

| Item / Reagent Solution | Function / Application |

|---|---|

| Implantable Telemetric Accelerometers (e.g., DSI HD-X02, Starr Life Sciences) | Provides continuous, high-fidelity 3-axis acceleration data from freely moving rodents with minimal behavioral impact. Essential for long-term or home-cage studies. |

| High-Sampling Rate Data Loggers (≥100Hz capability) | Captures rapid, fine-scale movements crucial for gait analysis, tremor, or seizure activity classification. |

| Calibrated Open Field Arena with Controlled Lighting | Standardized environment for assessing locomotor activity, exploration, and anxiety-like behaviors. Requires shielding for RF telemetry. |

| Integrated Software Suite (e.g., NeuroScore, ANY-maze, EthoVision with accelerometry module) | Synchronizes video tracking with accelerometer data, enables automated scoring, feature extraction, and machine learning-based behavioral classification. |

| Reference Pharmacological Agents (e.g., Amphetamine, Diazepam, Haloperidol, Clozapine) | Positive/Negative controls for validating assay sensitivity. Amphetamine (increases locomotion), Diazepam (sedation/anxiolysis), Haloperidol (motor suppression). |

| Machine Learning Libraries (e.g., scikit-learn, TensorFlow/PyTorch for Python) | Used to develop custom classifiers for distinguishing complex behavioral states from raw or feature-engineered accelerometer data. |

| Data Processing Pipeline (Custom scripts in Python/R for filtering, feature calculation) | Critical for transforming raw acceleration data into analyzable metrics (e.g., activity counts, spectral power, movement regularity). |

Within the broader thesis on accelerometer data analysis for behavioral classification, the objective quantification of locomotor activity—hyperactivity or bradykinesia—is a critical endpoint in preclinical CNS research. This case study details protocols for data acquisition, processing, and interpretation using accelerometer-based systems to model disorders like ADHD, schizophrenia, Parkinson's disease, and depression.

Key Behavioral Paradigms & Quantitative Data

The following table summarizes core locomotor tests and typical accelerometer-derived metrics.

Table 1: Core Rodent Locomotor Tests & Accelerometer Output Metrics

| Test Paradigm | Primary Measured Behavior | Key Accelerometer Metrics | Typical Baseline Value (Mean ± SD, Adult Mouse) | Associated CNS Disorder Model |

|---|---|---|---|---|

| Open Field Test (OFT) | Horizontal locomotion, exploration | Total distance (cm), Velocity (cm/s), Movement duration (s) | Distance: 2000-4000 cm/10 min | Hyperactivity: ADHD, Schizophrenia |

| Cylinder Test (Forelimb Akinesia) | Wall-supported rearing, forelimb use | Number of rears, Time spent rearing (s) | Rears: 15-25 counts/5 min | Bradykinesia: Parkinson's Disease |

| Rotarod Test | Motor coordination, fatigue | Latency to fall (s), Constant speed vs. accelerating | Latency: 180-300 s (32 rpm) | Bradykinesia/Failure: PD, MS |

| Home Cage Monitoring | Circadian spontaneous activity | Beam breaks/active bouts per hour, Power spectral density | Nocturnal act.: 500-800 bouts/12h | Circadian disruption: Depression |

Table 2: Expected Direction of Change in Key Metrics for Disorder Models

| CNS Disorder Model | Inducing Agent/Genetic Manipulation | Total Distance | Velocity | Movement Duration | Rearing Frequency |

|---|---|---|---|---|---|

| ADHD/Hyperactivity | MK-801 (0.1-0.3 mg/kg, i.p.) | ↑↑ (150-200% of control) | ↑ (120-150%) | ↑↑ | ↑ or ↔ |

| Parkinson's Bradykinesia | MPTP (20-30 mg/kg, s.c., over 24h) | ↓↓ (40-60% of control) | ↓ (50-70%) | ↓↓ | ↓↓ (70-80% reduction) |

| Depression (Psychomotor Retardation) | Chronic Mild Stress (4 weeks) | ↓ (60-80% of control) | ↔ or ↓ | ↓ | ↓↓ |

| Mania/Hyperactivity | d-amphetamine (2-5 mg/kg, i.p.) | ↑↑ (200-300% of control) | ↑↑ | ↑↑ | ↑ |

Detailed Experimental Protocols

Protocol 1: Open Field Test with Tri-axial Accelerometry for Hyperactivity Assessment

Objective: To quantify hyperactivity in a novel arena. Materials:

- Rodent (mouse/rat) with implanted or externally attached tri-axial accelerometer (e.g., HD-X02, DSI).

- Open field arena (40cm x 40cm x 40cm for mice).

- Data acquisition system (Ponemah, LabChart, EthoVision X).

- Calibration platform. Procedure:

- Calibration: Place the accelerometer on a calibration platform. Record static positions (0g, +1g, -1g on each axis) for 10 seconds each.

- Habituation: Acclimate animal to the testing room for 60 minutes.

- Baseline Recording: Place animal in its home cage on the acquisition receiver. Record 30 minutes of baseline activity.

- Open Field Recording: Gently place the animal in the center of the open field arena. Record locomotor activity for 30 minutes.

- Data Export: Export raw accelerometry data (X, Y, Z in g) and timestamp at a minimum sampling rate of 100 Hz. Analysis:

- Calculate vector magnitude: VM = sqrt(X^2 + Y^2 + Z^2).

- Apply a low-pass filter (cut-off 20 Hz) to remove noise.

- Derive velocity and position via integration (ensure drift correction using high-pass filter >0.1 Hz).

- Compute total distance, average velocity, and time spent in motion (velocity > 2 cm/s).
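The analysis steps above can be sketched as follows. Several choices here are assumptions rather than part of the protocol: the filters are simple FFT brick-wall filters (a stand-in for a Butterworth or similar design), integration is applied per axis to the band-limited dynamic acceleration rather than to VM, and the names `fft_bandpass` and `locomotion_metrics` are hypothetical. Removing gravity with a high-pass filter is only approximate when the sensor changes orientation, so accelerometer-derived distance should be cross-checked against video tracking.

```python
import numpy as np

G_CM_S2 = 980.665  # 1 g expressed in cm/s^2

def fft_bandpass(x, fs, lo=None, hi=None):
    """Zero all FFT bins outside [lo, hi] Hz along axis 0 (brick-wall filter)."""
    X = np.fft.rfft(x, axis=0)
    f = np.fft.rfftfreq(x.shape[0], d=1.0 / fs)
    keep = np.ones(f.shape, dtype=bool)
    if lo is not None:
        keep &= (f >= lo)
    if hi is not None:
        keep &= (f <= hi)
    X[~keep] = 0
    return np.fft.irfft(X, n=x.shape[0], axis=0)

def locomotion_metrics(xyz_g, fs=100.0, move_thr_cm_s=2.0):
    xyz = np.asarray(xyz_g, dtype=float)
    vm = np.sqrt((xyz ** 2).sum(axis=1))                      # raw VM in g
    # Keep 0.1-20 Hz: removes gravity/DC and high-frequency noise.
    dyn = fft_bandpass(xyz, fs, lo=0.1, hi=20.0) * G_CM_S2    # cm/s^2
    vel = np.cumsum(dyn, axis=0) / fs                         # rectangle-rule integration
    vel = fft_bandpass(vel, fs, lo=0.1)                       # drift correction
    speed = np.linalg.norm(vel, axis=1)                       # cm/s
    return {
        "mean_vm_g": float(vm.mean()),
        "total_distance_cm": float(speed.sum() / fs),
        "mean_velocity_cm_s": float(speed.mean()),
        "time_in_motion_s": float((speed > move_thr_cm_s).sum() / fs),
    }

# Synthetic check: 1 Hz oscillation of 0.05 g on X, gravity on Z, 60 s at 100 Hz.
fs = 100.0
t = np.arange(int(60 * fs)) / fs
xyz = np.column_stack([0.05 * np.sin(2 * np.pi * 1.0 * t),
                       np.zeros_like(t),
                       np.ones_like(t)])
metrics = locomotion_metrics(xyz, fs=fs)
still = locomotion_metrics(np.tile([0.0, 0.0, 1.0], (t.size, 1)), fs=fs)
print(metrics)
```

For the synthetic oscillation, the analytic velocity amplitude is 0.05 × 980.665 / (2π) ≈ 7.8 cm/s, which gives a useful sanity check on the integration and unit conversion.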

Protocol 2: Assessment of Bradykinesia using Cylinder Test & Accelerometer-derived Rearing

Objective: To quantify forelimb akinesia and hypokinesia. Materials:

- Rodent with head-mounted or backpack-style accelerometer.

- Transparent glass or Plexiglas cylinder (20 cm diameter for rats).

- Video camera (side-view). Procedure:

- Setup: Position cylinder on a stable surface. Ensure camera and accelerometer receiver are aligned.

- Testing: Gently place the animal in the center of the cylinder. Record for 5-10 minutes.

- Synchronization: Generate a sync pulse (LED flash + TTL pulse to acquisition software) at trial start. Analysis:

- Accelerometry Method: Isolate the Z-axis (vertical) signal. A rearing event is identified when the Z-axis signal exceeds a threshold (e.g., +1.5g for >200ms) with simultaneous low X/Y variance.

- Video Validation: Manually score rearing from video (forepaws off the wall/floor >1s). Correlate with accelerometer events to validate threshold.

- Metrics: Calculate total number of rears, total time rearing, and latency to first rear.
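The threshold rule for rearing can be sketched as a run-length detector. The Z threshold (+1.5g) and minimum duration (200 ms) come from the protocol; the X/Y variance limit, the rolling-window length, and the function name `detect_rears` are illustrative assumptions that would need tuning against the video scores.

```python
import numpy as np

def detect_rears(xyz, fs, z_thr=1.5, min_dur_s=0.2, xy_var_max=0.05, var_win_s=0.2):
    """Return (start_s, end_s) for runs where Z > z_thr with low X/Y variance."""
    xyz = np.asarray(xyz, dtype=float)
    w = max(int(var_win_s * fs), 2)
    kernel = np.ones(w) / w

    def rolling_var(a):
        m = np.convolve(a, kernel, mode="same")          # rolling mean
        m2 = np.convolve(a * a, kernel, mode="same")     # rolling mean of squares
        return np.maximum(m2 - m * m, 0.0)

    quiet = (rolling_var(xyz[:, 0]) < xy_var_max) & (rolling_var(xyz[:, 1]) < xy_var_max)
    cand = (xyz[:, 2] > z_thr) & quiet
    # Run-length encode the candidate mask.
    edges = np.diff(np.concatenate(([False], cand, [False])).astype(int))
    starts = np.flatnonzero(edges == 1)
    ends = np.flatnonzero(edges == -1)                   # exclusive end index
    keep = (ends - starts) > int(round(min_dur_s * fs))
    return [(s / fs, e / fs) for s, e in zip(starts[keep], ends[keep])]

# Synthetic check at 100 Hz: two valid rears, one too-short spike, one noisy event.
rng = np.random.default_rng(3)
fs = 100.0
n = int(30 * fs)
xyz = rng.normal(0.0, 0.02, (n, 3))
xyz[:, 2] += 1.0
xyz[500:600, 2] += 0.8         # rear, 1.0 s
xyz[1200:1250, 2] += 0.8       # rear, 0.5 s
xyz[2000:2010, 2] += 0.8       # 100 ms spike, below minimum duration
xyz[2500:2600, 2] += 0.8       # Z high but X/Y are noisy, so rejected
xyz[2500:2600, :2] += rng.normal(0.0, 1.0, (100, 2))
rears = detect_rears(xyz, fs)
n_rears = len(rears)
total_rear_time = sum(e - s for s, e in rears)
latency_first = rears[0][0] if rears else float("nan")
print(n_rears, total_rear_time, latency_first)
```

The three printed values correspond to the protocol's metrics: number of rears, total time rearing, and latency to first rear.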

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Locomotor Phenotyping

| Item | Function & Application | Example Product/Model |

|---|---|---|

| Implantable Telemetry System | Chronic, stress-free recording of locomotion/activity in home cage or during tests. | Data Sciences International (DSI) HD-X02, Millar TR181. |

| Backpack-style Miniature Loggers | Acute or sub-chronic recording for high-throughput testing, lower cost. | Starr Life Sciences ACH-4K, Cambridge Neurotech. |

| Calibration Tilt Stage | Precisely calibrate accelerometers to convert voltage to g-force, critical for accurate integration. | 3-Axis Manual Precision Tilt Stage. |

| Software for Biomechanics | Process raw accelerometer data (filter, integrate, classify behavior bouts). | Noldus EthoVision X, Axelero Scope, custom MATLAB/Python scripts. |

| Pharmacological Agents (Agonist/Antagonist) | To induce or rescue locomotor phenotypes (validate model pharmacology). | MK-801 (NMDA antagonist), d-amphetamine (dopamine releaser), MPTP (neurotoxin). |

| Synchronization Pulse Generator | To temporally align video recordings with accelerometer data streams. | Med Associates DIG-716 TTL Pulse Generator. |

| Standardized Bedding | Control for environmental variability in home cage monitoring. | Corn cob bedding, Shepherd Specialty Papers. |

Visualizations

Workflow for Accelerometer Data Analysis Leading to Phenotype Classification

Simplified Basal Ganglia Pathway Leading to Bradykinesia

Experimental Protocol Workflow with Quality Checkpoints

Solving Real-World Challenges: Noise, Artifacts, and Model Optimization

Within accelerometer-based behavioral classification research, data fidelity is paramount. Artifacts such as cage bumping, signal saturation, and battery discharge effects introduce significant noise, confounding the extraction of meaningful ethological endpoints. These artifacts can obscure drug-response phenotypes and reduce the statistical power of preclinical studies. This document provides application notes and standardized protocols for identifying, mitigating, and correcting these prevalent issues, framed within the broader thesis of ensuring robust, reproducible accelerometry data for behavioral analysis in drug development.

Artifact Characterization and Impact

Table 1: Characterization and Impact of Common Accelerometer Data Artifacts

| Artifact Type | Typical Frequency Range | Amplitude Distortion | Primary Impact on Classification | Common Source |

|---|---|---|---|---|

| Cage Bumping | 1-10 Hz (low-frequency transients) | Can exceed ±8g | Masks voluntary locomotion; mimics rearing or jumping. | Cage cleaning, adjacent animal activity, human intervention. |

| Signal Saturation | DC to Nyquist frequency | Clipped at sensor max range (e.g., ±16g). | Loss of true peak acceleration; distorts gait dynamics and high-intensity behavior metrics. | Animal falls, intense seizures, sensor impacting cage wall. |

| Battery Effect | Very low frequency (<0.1 Hz) | Gradual baseline drift or sudden voltage drop. | Causes false negative activity counts; alters long-term circadian rhythm analysis. | Discharge curve of lithium cell, low-temperature operation. |
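Two of the artifacts in Table 1 lend themselves to simple screening checks, sketched below: flagging samples clipped at the sensor's range limit, and tracking a windowed resting baseline to reveal slow drift. The function names, the 1% tolerance, and the 60 s window are assumptions for illustration; the ±16g range is the example value from the table.

```python
import numpy as np

def flag_saturation(xyz, full_scale_g=16.0, tol=0.01):
    """Boolean mask of samples where any axis sits at (or within tol of) full scale."""
    xyz = np.asarray(xyz, dtype=float)
    return (np.abs(xyz) >= full_scale_g * (1.0 - tol)).any(axis=1)

def windowed_baseline(vm, fs, win_s=60.0):
    """Median VM per non-overlapping window; a slow trend here suggests drift."""
    vm = np.asarray(vm, dtype=float)
    n = int(win_s * fs)
    k = len(vm) // n                                   # trailing partial window dropped
    return np.median(vm[: k * n].reshape(k, n), axis=1)

# Synthetic check: a 20 g oscillation clipped at +/-16 g, and a slowly rising baseline.
fs = 100.0
t = np.arange(int(600 * fs)) / fs
raw_x = 20.0 * np.sin(2 * np.pi * 0.5 * t)
xyz = np.column_stack([np.clip(raw_x, -16.0, 16.0), np.zeros_like(t), np.ones_like(t)])
sat = flag_saturation(xyz)
drift = windowed_baseline(1.0 + 0.0002 * t, fs)
print(sat.mean(), drift)
```

Flagged samples can then be excluded or handled explicitly before computing peak-based metrics, rather than being treated as true accelerations.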

Experimental Protocols for Artifact Detection and Mitigation

Protocol: Controlled Cage Bump Induction and Signature Identification

Objective: To empirically define the accelerometric signature of cage bumps for algorithmic filtering. Materials: Telemetric accelerometer implant, rodent cage, calibrated impact device (or standardized drop weight), high-speed data acquisition system (≥500 Hz sampling). Procedure:

- Implant accelerometer in an anesthetized or euthanized model animal (to isolate external forces).

- Secure the animal/cage in a typical housing configuration.

- Using a solenoid actuator or drop mechanism, deliver a standardized lateral impact to the cage frame at known intensities (e.g., 0.5J, 1.0J).

- Record tri-axial accelerometry data at 500 Hz for 10 seconds pre- and post-impact.

- Repeat (n=20) at different cage locations.

- Analysis: Identify the characteristic waveform: a high-amplitude, low-frequency transient in the axis of impact, followed by damped oscillations, synchronized across all axes with near-zero vector magnitude change.
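A rough way to summarize each recorded impact is to extract its onset time per axis, the peak amplitude, and how quickly the oscillation decays. The sketch below does this under stated assumptions: the first second of the record is impact-free, a 0.3 g onset threshold, a 10 ms synchrony tolerance, and 200 ms windows for the decay estimate. None of these values, nor the function name `bump_signature`, come from the protocol.

```python
import numpy as np

def bump_signature(xyz, fs, pre_s=1.0, onset_thr_g=0.3, sync_tol_s=0.01, win_s=0.2):
    """Summarize an impact transient; returns None if any axis never crosses threshold."""
    xyz = np.asarray(xyz, dtype=float)
    base = np.median(xyz[: int(pre_s * fs)], axis=0)   # resting level per axis
    dyn = xyz - base
    above = np.abs(dyn) > onset_thr_g
    if not above.any(axis=0).all():
        return None
    onsets = above.argmax(axis=0)                      # first crossing on each axis
    start = int(onsets.min())
    w = int(win_s * fs)
    early = dyn[start:start + w]
    late = dyn[start + 2 * w:start + 3 * w]

    def rms(a):
        return float(np.sqrt((a ** 2).mean())) if a.size else float("nan")

    peaks = np.abs(dyn).max(axis=0)
    return {
        "onset_s": start / fs,
        "onset_spread_s": float(onsets.max() - onsets.min()) / fs,
        "synchronized": bool((onsets.max() - onsets.min()) / fs <= sync_tol_s),
        "impact_axis": int(peaks.argmax()),
        "peak_g": float(peaks.max()),
        "decay_ratio": rms(late) / rms(early),         # < 1 means damped oscillation
    }

# Synthetic check: 8 Hz damped oscillation starting at t = 10 s, sampled at 500 Hz.
rng = np.random.default_rng(4)
fs = 500.0
t = np.arange(int(20 * fs)) / fs
tau = np.clip(t - 10.0, 0.0, None)
env = np.exp(-tau / 0.2) * (t >= 10.0)
xyz = np.column_stack([
    5.0 * env * np.sin(2 * np.pi * 8.0 * tau),
    2.0 * env * np.sin(2 * np.pi * 8.0 * tau + 0.5),
    1.0 + env * np.sin(2 * np.pi * 8.0 * tau + 1.0),
]) + rng.normal(0.0, 0.01, (t.size, 3))
sig = bump_signature(xyz, fs)
print(sig)
```

Averaging these summaries over the repeated impacts (n=20) would give an empirical template against which candidate events in live recordings can be compared.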

Protocol: Signal Saturation Calibration and Recovery

Objective: To establish post-hoc correction boundaries for saturated signals. Materials: Programmable centrifuge, accelerometer logger, calibration jig. Procedure: